What Is Post-Quantum Cryptography (PQC)?

This is part of the Quantum Security Reference Deep Dive series. For the full landscape overview, see the capstone article on quantum security.

Introduction

Post-quantum cryptography (PQC) is a family of cryptographic algorithms designed to be secure against attacks from both classical computers and quantum computers. Unlike quantum cryptography, which uses quantum physics to protect information, PQC is implemented entirely in software and runs on existing classical hardware. It is the primary replacement for the public-key algorithms (RSA, ECC, and Diffie-Hellman) that Shor’s algorithm, run on a sufficiently powerful quantum computer, will eventually break.

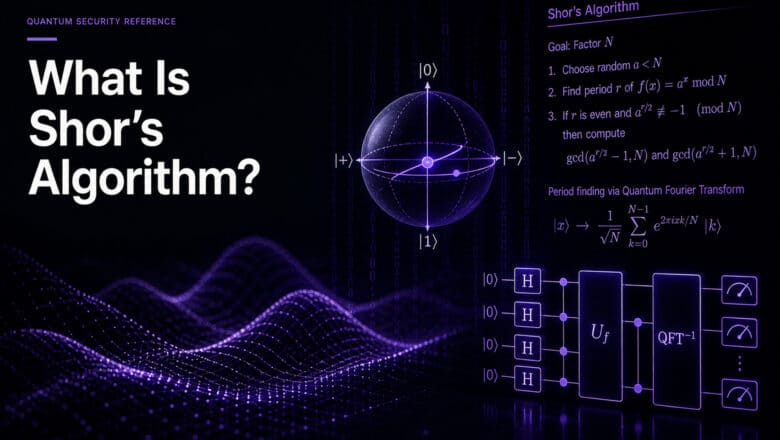

Why Current Cryptography Will Break

The public-key cryptography securing virtually all digital communications relies on mathematical problems that are extremely hard for classical computers to solve. RSA depends on the difficulty of factoring large numbers. Elliptic Curve Cryptography (ECC) depends on the discrete logarithm problem over elliptic curves. Diffie-Hellman key exchange relies on the closely related discrete logarithm problem in finite fields.

Shor’s algorithm, discovered by mathematician Peter Shor in 1994, solves all of these problems efficiently on a quantum computer. Once a sufficiently powerful quantum machine exists (a Cryptographically Relevant Quantum Computer, or CRQC), it could factor RSA-2048 moduli, solve the elliptic-curve discrete logarithms protecting TLS connections, and compromise the Diffie-Hellman exchanges underpinning VPNs. As I detail in my analysis of how ECC became the easiest quantum target, the quantum resource estimates for breaking ECC have been dropping even faster than those for RSA. Recent research from EUROCRYPT 2026 and Google’s quantum team confirms the gap between RSA and ECC vulnerability is widening.
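To make the dependence on factoring concrete, here is a minimal sketch with a textbook-sized RSA key. The primes, the message, and the `factor` helper are illustrative assumptions of mine. Trial division stands in for the factoring step that Shor's algorithm would perform on a real 2048-bit modulus, so this shows only why factoring the modulus is fatal, not how the quantum algorithm works.

```python
# Toy RSA with textbook-sized primes; real keys use primes of roughly 1024 bits each.
p, q = 61, 53
n = p * q                      # public modulus
e = 17                         # public exponent
phi = (p - 1) * (q - 1)
d = pow(e, -1, phi)            # private exponent, known only to the key owner

message = 65
ciphertext = pow(message, e, n)


def factor(modulus):
    """Trial division: a classical stand-in for the factoring step Shor's algorithm performs."""
    candidate = 2
    while candidate * candidate <= modulus:
        if modulus % candidate == 0:
            return candidate, modulus // candidate
        candidate += 1
    raise ValueError("modulus has no non-trivial factor")


# The attacker sees only the public key (n, e) and the ciphertext.
p_found, q_found = factor(n)
d_recovered = pow(e, -1, (p_found - 1) * (q_found - 1))
recovered = pow(ciphertext, d_recovered, n)

print(recovered == message)
```

Once the two primes are known, rebuilding the private exponent is a single modular inverse. The entire security of the key rests on the factoring step being infeasible.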

No CRQC exists today. The largest quantum computers operate with a few thousand noisy physical qubits, while breaking RSA-2048 is estimated to require hundreds of thousands to millions of physical qubits, which are needed to sustain the few thousand error-corrected logical qubits the attack uses. But the trajectory of resource estimates has been consistently downward, and the timeline is uncertain enough that the global cryptographic community, led by NIST and the NSA, has decided to migrate now rather than wait.

How PQC Algorithms Work Differently

PQC algorithms achieve quantum resistance by relying on mathematical problems believed to be hard for both classical and quantum computers. The approaches differ significantly in their underlying mathematics, performance characteristics, and maturity.

Lattice-based cryptography, the foundation for NIST’s primary standards, uses the difficulty of finding short vectors in high-dimensional mathematical lattices. These problems have been studied for decades with no known efficient quantum attack. Hash-based cryptography builds signatures from the well-understood security of hash functions like SHA-2 and SHA-3, offering arguably the strongest theoretical guarantees because the security depends only on the one-wayness of hash functions. The tradeoff is larger signatures. Code-based cryptography relies on the difficulty of decoding random linear codes, a problem that has resisted both classical and quantum attack since the 1970s; NIST selected HQC, a code-based algorithm, as a backup to ML-KEM specifically to provide algorithmic diversity in case lattice-based approaches encounter unexpected vulnerabilities.
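As a rough illustration of the lattice idea, the sketch below encrypts a single bit in the style of Regev's learning-with-errors (LWE) scheme. It is not ML-KEM. The parameters `Q`, `N`, and `M` are toy values I chose so that decryption always succeeds, they offer no security, and Python's `random` module is not a cryptographic generator. The point is only to show where the hardness lives: the public key is a set of linear equations in the secret, each blurred by a small error.

```python
import random

Q = 257   # modulus (toy size)
N = 8     # dimension of the secret vector
M = 16    # number of noisy equations in the public key


def keygen(rng):
    secret = [rng.randrange(Q) for _ in range(N)]
    rows = [[rng.randrange(Q) for _ in range(N)] for _ in range(M)]
    # Each public value is <row, secret> plus a small error in {-1, 0, 1}, mod Q.
    noisy = [
        (sum(a * s for a, s in zip(row, secret)) + rng.choice((-1, 0, 1))) % Q
        for row in rows
    ]
    return secret, (rows, noisy)


def encrypt(public_key, bit, rng):
    rows, noisy = public_key
    # Sum a random subset of the public equations and hide the bit at Q/2.
    chosen = [i for i in range(M) if rng.random() < 0.5]
    u = [sum(rows[i][j] for i in chosen) % Q for j in range(N)]
    v = (sum(noisy[i] for i in chosen) + bit * (Q // 2)) % Q
    return u, v


def decrypt(secret, ciphertext):
    u, v = ciphertext
    # What remains is the accumulated error (at most M in magnitude) plus bit * Q/2.
    diff = (v - sum(a * s for a, s in zip(u, secret))) % Q
    return 1 if Q // 4 < diff < 3 * Q // 4 else 0


rng = random.Random(0)
secret, public_key = keygen(rng)
for bit in (0, 1):
    print(bit, decrypt(secret, encrypt(public_key, bit, rng)))
```

Without the error terms, the secret would fall out of the public key by Gaussian elimination. With them, recovering it becomes a lattice problem of the kind described above.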

Other families were explored during the NIST process. Isogeny-based approaches looked promising until SIKE, the primary candidate, was broken by a classical attack in 2022. That episode remains a useful reminder that new cryptographic assumptions require sustained scrutiny, and it is part of the rationale for maintaining multiple algorithmic families.

The NIST Standards

NIST’s post-quantum standardization process began in 2016 and attracted 82 submissions. In August 2024, it reached a landmark milestone with the publication of three finalized standards. I maintain a comprehensive analysis of the NIST PQC standardization covering the full history and technical details; here is the summary.

ML-KEM (Module-Lattice-Based Key-Encapsulation Mechanism, FIPS 203, formerly CRYSTALS-Kyber) is the primary standard for key exchange and encryption. It replaces RSA and ECDH in TLS handshakes and similar key agreement protocols, and is the algorithm most organizations will deploy first.

ML-DSA (Module-Lattice-Based Digital Signature Algorithm, FIPS 204, formerly CRYSTALS-Dilithium) is the primary standard for digital signatures, replacing RSA and ECDSA in code signing, certificate issuance, and authentication. Its signatures are substantially larger than classical equivalents (roughly 2,420 bytes for the smallest parameter set, ML-DSA-44, versus 64 bytes for P-256 ECDSA), which creates practical challenges for bandwidth-constrained systems.

SLH-DSA (Stateless Hash-Based Digital Signature Algorithm, FIPS 205, formerly SPHINCS+) is a conservative backup. Its security rests entirely on hash functions, making it the safest bet against future cryptanalytic surprises, at the cost of signatures up to roughly 50 KB depending on the parameter set.
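To show how a signature can rest on nothing but a hash function, here is a minimal sketch of a Lamport one-time signature, the classic ancestor of hash-based schemes. It is not SLH-DSA, which layers far more machinery on top so that one key can sign many messages without tracking state. The function names are my own, and a Lamport key pair must never sign more than one message.

```python
import hashlib
import secrets


def H(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def keygen():
    # Two random 32-byte secrets for each of the 256 bits of a message digest.
    private = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    public = [(H(zero), H(one)) for zero, one in private]
    return private, public


def digest_bits(message: bytes):
    digest = H(message)
    return [(digest[i // 8] >> (7 - i % 8)) & 1 for i in range(256)]


def sign(private, message: bytes):
    # Reveal one secret per digest bit, selected by the value of that bit.
    return [private[i][bit] for i, bit in enumerate(digest_bits(message))]


def verify(public, message: bytes, signature) -> bool:
    bits = digest_bits(message)
    return len(signature) == 256 and all(
        H(part) == public[i][bits[i]] for i, part in enumerate(signature)
    )


private, public = keygen()
signature = sign(private, b"firmware v1.2")
print(verify(public, b"firmware v1.2", signature))
print(sum(len(part) for part in signature), "bytes")
```

Forging a signature requires inverting the hash function, which is the whole security argument. The cost is visible in the size: 256 revealed secrets of 32 bytes each come to 8,192 bytes for a single signature, the same tradeoff that shows up at larger scale in SLH-DSA.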

Two additional standards are in progress. FN-DSA (FIPS 206, based on the FALCON algorithm) is in Initial Public Draft as of early 2026, with finalization expected in late 2026 or early 2027. It produces signatures of roughly 666 bytes, against roughly 2,420 bytes for ML-DSA at a comparable security level, which makes it attractive for TLS certificate chains, IoT, and other bandwidth-sensitive applications. HQC, a code-based key encapsulation mechanism selected by NIST in March 2025 as a backup to ML-KEM, has a draft standard expected in 2026 and finalization in 2027.
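A back-of-the-envelope calculation shows why these size differences matter. The sketch below uses only the signature sizes quoted above. The figure of three signatures per handshake is a hypothetical round number of mine, since real certificate chains vary, and public keys, which also grow, are left out.

```python
# Signature sizes in bytes, taken from the figures quoted in this section.
SIGNATURE_BYTES = {
    "ECDSA P-256": 64,
    "FN-DSA": 666,
    "ML-DSA": 2420,
}

# Assumption: a handshake that carries three signatures (hypothetical count).
SIGNATURES_PER_HANDSHAKE = 3


def handshake_signature_bytes(algorithm, count=SIGNATURES_PER_HANDSHAKE):
    """Total bytes spent on signatures alone for one handshake."""
    return SIGNATURE_BYTES[algorithm] * count


baseline = handshake_signature_bytes("ECDSA P-256")
for name in SIGNATURE_BYTES:
    total = handshake_signature_bytes(name)
    print(f"{name:12s} {total:6d} bytes  ({total / baseline:.1f}x)")
```

Under these assumptions, signatures alone grow from 192 bytes with ECDSA to 1,998 bytes with FN-DSA and 7,260 bytes with ML-DSA. That is enough to push a handshake across additional network packets.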

PQC Runs on Existing Hardware

PQC does not require quantum computers or any specialized quantum hardware. These are classical algorithms running on the processors, servers, and devices organizations already own. The migration is a software, protocol, and standards challenge.

The larger key sizes and signature sizes do ripple through network protocols, certificate hierarchies, embedded systems, and any architecture designed around the compact payloads of classical cryptography. Many organizations will use hybrid cryptography during the transition, combining classical and PQC algorithms to provide defense-in-depth while the new standards accumulate real-world deployment experience.
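The hybrid idea can be sketched in a few lines. One common pattern, and an assumption here since this article does not prescribe a construction, is to run a classical key exchange and a PQC key encapsulation side by side, concatenate the two shared secrets, and pass the result through a key derivation function. In the sketch below, random bytes stand in for the two shared secrets, the `combine` helper and the `hybrid-demo` label are hypothetical, and HKDF is written out from the standard library following RFC 5869.

```python
import hashlib
import hmac
import secrets


def hkdf_sha256(key_material: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF (RFC 5869) with SHA-256 and the default all-zero salt."""
    prk = hmac.new(b"\x00" * 32, key_material, hashlib.sha256).digest()  # extract
    output, block, counter = b"", b"", 1
    while len(output) < length:                                           # expand
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        output += block
        counter += 1
    return output[:length]


def combine(classical_secret: bytes, pqc_secret: bytes) -> bytes:
    # Both inputs are fixed-length here, so plain concatenation is unambiguous.
    return hkdf_sha256(classical_secret + pqc_secret, info=b"hybrid-demo")


# Stand-ins for the outputs of, say, an ECDH exchange and an ML-KEM decapsulation.
classical_secret = secrets.token_bytes(32)
pqc_secret = secrets.token_bytes(32)

session_key = combine(classical_secret, pqc_secret)
print(len(session_key))
```

Because the session key depends on both inputs, an attacker has to recover both shared secrets to learn it. That is the defense-in-depth property hybrid deployments are after.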

What Organizations Need to Do

PQC migration is not a patch deployment. As I have detailed in my analysis of why this is the largest cryptographic overhaul in IT history, a large enterprise migration involves tens of thousands of discrete tasks spanning network infrastructure, application code, key management, PKI, vendor contracts, embedded systems, and operational technology.

The regulatory clock is already running. NIST’s draft IR 8547 deprecates RSA, ECDSA, EdDSA, and Diffie-Hellman for federal systems by 2030 and disallows them entirely by 2035. NSA’s CNSA 2.0 requires quantum-resistant algorithms for all new National Security System acquisitions starting January 2027. These requirements cascade through defense contractors, regulated industries, and any organization connected to government systems. In Europe, the NIS2 and DORA frameworks create parallel obligations.

As I argue throughout PostQuantum.com, the deadlines are already set. They are driven by regulators, insurers, investors, and clients, not by predictions about when a CRQC will arrive. Whether a quantum computer capable of breaking RSA exists in 2032 or 2042, the migration deadlines are locked in now.

For organizations beginning this journey, the Applied Quantum PQC Migration Framework provides a structured, open-source methodology. My practical steps guide maps the first concrete actions, and my forthcoming book Quantum Ready covers organizational readiness strategy comprehensively.

Go Deeper

This reference article covers the essentials. For deeper analysis, these PostQuantum.com articles provide the next level of detail:

- PQC Standardization — 2025 Update — full technical analysis of all NIST algorithms, the standardization process, and what comes next

- Inside NIST’s PQC: Kyber, Dilithium, and SPHINCS+ — technical deep dive into algorithm internals

- How ECC Became the Easiest Quantum Target — why elliptic curve cryptography may break before RSA

- Hybrid Cryptography for the Post-Quantum Era — combining classical and PQC algorithms during migration

- Infrastructure Challenges of PQC — what larger keys and signatures mean for real systems

- The Complete US PQC Regulatory Framework in 2026 — every federal mandate and deadline in one place

- Practical Steps to Quantum Readiness — where to start your migration program
