
15 May 2026 – A team led by Chao-Yang Lu and Jian-Wei Pan at the University of Science and Technology of China (USTC) published results from Jiuzhang 4.0 in Nature, reporting a programmable photonic quantum processor that incorporates 1,024 high-efficiency squeezed-state inputs into an 8,176-mode hybrid spatial–temporal circuit. The result is the largest Gaussian boson sampling (GBS) experiment reported to date, with detection events reaching up to 3,050 photon clicks, roughly twelve times the 255-photon maximum achieved by Jiuzhang 3.0 in 2023.

The processor achieves 92% source efficiency and 51% overall system efficiency (the latter figure includes 93%-efficient superconducting nanowire single-photon detectors), a substantial improvement over previous GBS experiments. The USTC team validated their samples against all classical simulation methods considered in the paper, including the matrix product state (MPS) approach specifically designed to exploit photon loss. For the main benchmark configuration (the L1024 dataset at 6 nJ), they estimate that El Capitan, currently the world’s most powerful supercomputer, would need more than 10⁴² years to generate a single equivalent sample. The quantum processor produces one such sample in 25.6 microseconds, yielding a claimed speedup ratio exceeding 10⁵⁴.
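As a quick sanity check on that ratio, here is a minimal Python sketch that converts the classical estimate to seconds and divides by the quantum sampling time. The two inputs are the figures quoted above; the 365.25-day year and the function name `speedup_ratio` are my own choices for illustration, not anything taken from the paper.

```python
# Rough consistency check of the quoted speedup ratio.
# Assumption: a year is taken as 365.25 days (the exact convention barely matters here).
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def speedup_ratio(classical_years: float, quantum_seconds: float) -> float:
    """Ratio of classical time-per-sample to quantum time-per-sample."""
    return classical_years * SECONDS_PER_YEAR / quantum_seconds


# Quoted lower bound on the classical runtime, and the quoted quantum sampling time.
ratio = speedup_ratio(classical_years=1e42, quantum_seconds=25.6e-6)
print(f"speedup ratio >= {ratio:.2e}")  # about 1.23e+54
```

Because 10⁴² years is itself a lower bound, the result is a lower bound too, which is consistent with a claimed ratio "exceeding 10⁵⁴".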

A precision note: the headline numbers come from different operating configurations. The 3,050 photon-click maximum appears in a supplementary dataset (dataset B) used to demonstrate a second programmed unitary. The main quantum-advantage runtime estimate is based on dataset A, where the maximum observed photon-click number is 2,598 and the effective squeezed photon number N_eff is approximately 114. Nature’s abstract compresses these into a single result; they should be understood as complementary benchmarks rather than a single measurement.

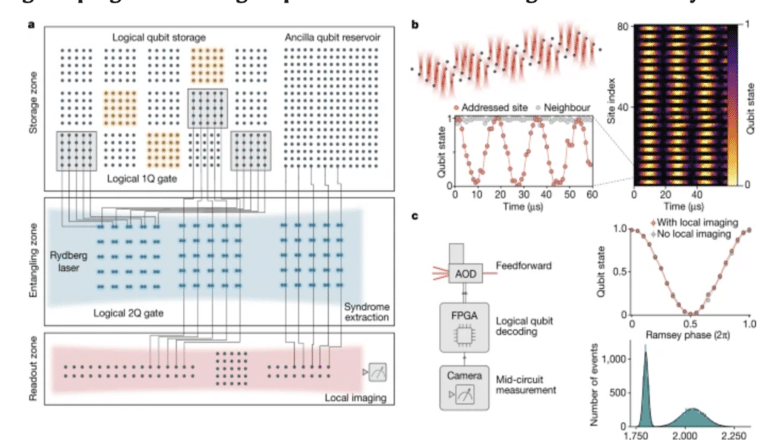

The system uses a cascaded architecture of three 16-mode interferometers connected by fiber delay loops, achieving cubic connectivity scaling (16³ = 4,096 modes) while requiring only linearly growing physical resources. The reported N_eff of approximately 114 far exceeds the value of roughly 10 reached in previous GBS experiments.
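The scaling argument can be illustrated with a toy calculation. The sketch below assumes a simplified picture in which each cascaded stage multiplies the number of connected modes by the interferometer size, while hardware is counted crudely as interferometer ports per stage. Both the port-count proxy and the function name `cascaded_scaling` are my own simplifications; the sketch does not attempt to reproduce the paper's 8,176-mode figure.

```python
def cascaded_scaling(modes_per_stage: int, stages: int) -> tuple[int, int]:
    """Toy model of a cascaded interferometer architecture.

    Returns (connected_modes, physical_ports), where
    - connected_modes grows as modes_per_stage ** stages, and
    - physical_ports grows as modes_per_stage * stages (a crude hardware proxy).
    """
    connected_modes = modes_per_stage ** stages
    physical_ports = modes_per_stage * stages
    return connected_modes, physical_ports


# Compare one, two, and three cascaded 16-mode stages.
for stages in (1, 2, 3):
    connected, ports = cascaded_scaling(16, stages)
    print(f"{stages} stage(s): {connected:>5} connected modes, {ports:>3} ports")
```

With three 16-mode stages, the toy model gives 16³ = 4,096 connected modes from only 48 ports, which is the sense in which connectivity grows cubically while hardware grows linearly.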

The paper has been peer-reviewed and published in Nature. The raw experimental data have been made publicly available by the team.

My Analysis

What the Paper Actually Shows

Let me start with what Jiuzhang 4.0 genuinely achieves, because the engineering here is real and it matters.

The central technical advance is not that Jiuzhang 4.0 eliminates photon loss from photonic quantum computing. Rather, it engineers around the loss vulnerability that undermined previous GBS claims. Photon loss directly reduces the effective squeezing that makes GBS classically hard. In 2024, Oh et al. published an MPS-based algorithm in Nature Physics that quantified exactly how this works, effectively raising the bar for any future GBS experiment. The USTC team built Jiuzhang 4.0 to clear that bar.
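To make the loss argument concrete, here is a minimal sketch using the standard textbook model of a single squeezed mode passing through a lossy channel: with the vacuum variance normalized to 1, the squeezed-quadrature variance becomes η·e^(−2r) + (1 − η) at transmission η. This is not the N_eff definition used by Oh et al. or by the USTC paper. The squeezing parameter r = 1.5 is an arbitrary illustrative value, and treating the article's 92% and 51% efficiency figures as single-mode transmissions is a deliberate simplification.

```python
import math


def squeezed_variance_after_loss(r: float, eta: float) -> float:
    """Squeezed-quadrature variance (vacuum = 1) after a channel of transmission eta."""
    return eta * math.exp(-2.0 * r) + (1.0 - eta)


def squeezing_db(variance: float) -> float:
    """Squeezing below the vacuum level, in decibels."""
    return -10.0 * math.log10(variance)


R_ILLUSTRATIVE = 1.5  # assumed squeezing parameter, not a value from the paper

for eta in (1.0, 0.92, 0.51):
    variance = squeezed_variance_after_loss(R_ILLUSTRATIVE, eta)
    print(f"eta = {eta:.2f}: {squeezing_db(variance):5.2f} dB of squeezing survives")

# However strong the initial squeezing, the variance can never drop below 1 - eta,
# so transmission alone caps the surviving squeezing.
print(f"cap at eta = 0.51: {squeezing_db(1.0 - 0.51):.2f} dB")
```

The point of the toy model is the floor: at 51% transmission no amount of initial squeezing can deliver more than about 3 dB at the output, which is why every percentage point of efficiency matters for keeping a GBS experiment classically hard.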

By most measures, they succeeded. The 92% source efficiency (up from 70% in previous time-bin experiments) comes from an elegant spectral filtering design using cascaded Mach-Zehnder interferometers with near-unity transmission. The 51% overall system efficiency pushes N_eff to approximately 114, a regime where even the MPS algorithm would take more than 2 × 10⁶ years on a 100,000-GPU cluster to produce a single sample at a bond dimension of 10⁴. At that bond dimension, the truncation error reaches 0.9999, rendering the output meaningless for validation purposes. The team tested their results from multiple angles: photon-number-distribution checks, Bayesian tests on tractable subsystems, second- through fourth-order correlation-function benchmarking with mode binning for the largest systems, and a deliberately weakened “tractable proxy MPS” sampler. Cross-entropy benchmarking was also applied where computationally feasible.

One caveat makes the result more credible, not less. The experimental data do not win every auxiliary metric in the supplement. In the largest L1024 configuration, a few unbinned low-order correlation tests are marginal or within calibration uncertainty. But those tests inspect only a small fraction of the full 8,176-mode system. When the analysis moves to higher-order correlations, binned measurements, and photon-number distributions (checks that capture more of the system’s global structure), the classical mock-ups fall behind consistently.

This is careful, thorough work. The validation methodology goes further than what accompanied earlier GBS claims, and publication in Nature adds a layer of peer-reviewed scrutiny. The data are public, which means independent researchers can test these claims. As someone who has examined Chinese quantum hardware claims in depth, I find the scientific content of this paper far more credible than the announcement-driven coverage surrounding Hanyuan-2’s “dual-core” quantum computer, which arrived with headlines but no published benchmarks.

What the Headlines Get Wrong

Now for the part where I earn my keep.

Chinese state media such as Xinhua, alongside outlets such as the South China Morning Post, are framing Jiuzhang 4.0 as evidence that China is entering “the age of quantum supremacy.” The SCMP headline asks whether this result “heralds” that era. Xinhua describes a “new world record.” The implied narrative is straightforward: China is winning the quantum race, and this proves it.

That narrative conflates two very different things. Gaussian boson sampling is a specific, carefully constructed computational task designed to be hard for classical computers and natural for photonic quantum devices. It was chosen precisely because photons are good at it. Producing samples from this distribution faster than a supercomputer is a genuine scientific achievement, but it is not general-purpose quantum computing. More to the point for my readers, it is not on the path to a cryptographically relevant quantum computer (CRQC). GBS, as the Jiuzhang paper itself states, is “an intermediate model for quantum computing.” The processor does not implement universal gate operations, does not perform error correction, and cannot run Shor’s algorithm.

The paper’s authors are measured in their own claims. They acknowledge that the architecture “prioritizes scalability and connectivity over universal gate sets.” They position GBS not as a substitute for fault-tolerant computing but as a potential building block, citing its capacity for generating bosonic error-correcting codes (specifically Gottesman-Kitaev-Preskill states) that could eventually feed into fault-tolerant photonic architectures. That pathway exists in principle, but the distance between producing GKP states from a boson sampler and running a fault-tolerant computation on millions of logical qubits spans decades of additional engineering, not years.

The Real Significance: What It Tells Us About China’s Photonic Program

The honest assessment sits between the hype and the dismissal.

The engineering execution demonstrates USTC’s ability to push the photonic platform further than anyone else has. The cascaded spatial–temporal architecture achieves cubic connectivity growth with only linear hardware scaling. Phase stabilization, reported at approximately λ/200 at 1,550 nm across the GBS setup, is a serious engineering accomplishment. This is the same USTC-Hefei ecosystem I profiled in my Deep Dive on China’s Hefei National Laboratory, and this result validates the assessment that Hefei’s concentration of talent, infrastructure, and institutional focus produces real output, not just announcements.
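For a sense of scale, the λ/200 figure can be converted into more tangible units. The sketch below assumes the figure is read as an optical path-length stability expressed as a fraction of the wavelength; that reading, and the helper name `phase_stability`, are my assumptions rather than definitions from the paper.

```python
import math


def phase_stability(wavelength_nm: float, fraction: float) -> tuple[float, float, float]:
    """Convert a lambda/fraction stability figure into path length and phase.

    Returns (path_length_nm, phase_rad, phase_deg).
    """
    path_length_nm = wavelength_nm / fraction
    phase_rad = 2.0 * math.pi / fraction
    return path_length_nm, phase_rad, math.degrees(phase_rad)


path_nm, phase_rad, phase_deg = phase_stability(wavelength_nm=1550.0, fraction=200.0)
print(f"path length: {path_nm:.2f} nm")
print(f"phase: {phase_rad:.4f} rad ({phase_deg:.2f} degrees)")
```

Under that reading, λ/200 at 1,550 nm corresponds to holding optical paths steady to within about 7.75 nm, or roughly 1.8 degrees of phase, across a setup that includes fiber delay loops.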

Equally significant is how the team responded to the classical spoofing challenge. The quantum-classical competition is the engine that drives both sides forward: classical simulators improve, quantum experiments scale up to stay ahead. The Oh et al. MPS algorithm was a legitimate threat to previous GBS claims. The USTC team responded not by dismissing the classical challenge but by engineering around it, boosting efficiency until N_eff reached a regime where MPS becomes computationally futile. This is how healthy science works. Making the raw data publicly available invites further classical challenges, a welcome contrast to quantum announcements that rely on press releases alone.

Perhaps most important for the photonic quantum computing field broadly, the paper opens a plausible path toward large-scale cluster state generation. The authors explicitly point toward “trillion-qumode three-dimensional cluster states” as a next step. The hybrid spatial–temporal encoding and ultra-stable phase locking demonstrated here are precisely the ingredients that measurement-based photonic approaches (the kind PsiQuantum and Xanadu are pursuing) rely on for fault tolerance. If that pathway matures, it would position USTC as a competitive player in the race toward fault-tolerant photonic hardware. A recent Nature paper from Larsen et al. demonstrated an integrated photonic source of GKP qubits, showing that this line of research is advancing on multiple fronts simultaneously.

So What Does This Mean for CRQC?

Very little, directly. The Jiuzhang lineage is a sampling platform, not a fault-tolerant gate-model processor. It does not advance the specific CRQC capabilities I track: logical error suppression under a scalable code, logical gates, magic-state production, real-time decoding, or continuous operation over attack-relevant workloads. China’s superconducting program, particularly the Zuchongzhi lineage and its 105-qubit random circuit sampling work, is more directly legible for cryptographic-threat tracking because its benchmarks sit closer to the gate-operation and error-correction roadmap. (Random circuit sampling itself is also not a cryptographic workload, but the underlying architecture is the one that will eventually run Shor’s algorithm at scale.)

“Very little, directly” should not be read as “nothing at all,” however. Photonics has structural advantages: telecom-wavelength compatibility, low-loss optical propagation, and a natural fit with measurement-based architectures. Jiuzhang 4.0 still uses cryogenic superconducting nanowire detectors for the detection layer, so calling the system “room-temperature” would be an oversimplification; much of the optical hardware operates at room temperature, but the full stack does not. The more accurate point is that the platform’s strengths could matter considerably if fault-tolerant photonic architectures mature, and the GKP codes referenced in the paper are actively being pursued by multiple groups worldwide.

The right frame: Jiuzhang 4.0 is a significant photonic engineering achievement that strengthens China’s position in one branch of the quantum technology tree. For readers focused on cryptographic security timelines, it changes nothing.
