Editor’s Note (May 13, 2026): One week after Q-CTRL’s announcement, Algorithmiq posted results showing their Majorana Propagation method reproducing the 120-qubit quantum simulation data for a single site in 2 minutes 30 seconds on a standard MacBook Air (10 threads), 16 seconds faster than Q-CTRL’s reported QPU time. Majorana Propagation is not a new method: it was published in March 2025, has provable performance guarantees, and was already used as a classical benchmark in Google’s own Fermi-Hubbard experiment. It computes one observable at a time (whereas the quantum processor computes all 120 spin-orbitals simultaneously), so the full comparison is more nuanced, but the result narrows Q-CTRL’s advantage claim: the 3,000x speedup holds against TDVP, the most widely used classical tool, but appears not to hold against this newer method. This article’s analysis noted that the advantage was time-bound against available tools. That assessment proved correct sooner than I expected. I will update as the exchange develops.

Editor’s Note Update (May 13, 2026): Within hours of Algorithmiq’s post, Q-CTRL’s senior lead scientist responded in detail. The response argues that the single-site comparison dramatically understates what the quantum processor actually computed, a point that even I underweighted. In 2.76 minutes, the QPU generated measurement data from which all 120 single-site occupations, over 7,000 two-point spin-spin correlators, and arbitrarily higher-order correlation functions can be extracted simultaneously. Majorana Propagation must compute each of these separately. At 2.5 minutes per observable on the same laptop, reproducing the two-point correlators alone would take at least roughly 290 hours, well beyond TDVP’s 160 hours. Q-CTRL also raised an accuracy challenge: visually matching quantum data for one site is not the same as achieving the ~1% RMSE across all sites that their paper benchmarked against, and Algorithmiq has not published quantitative error analysis. Both teams are committing data to the Quantum Advantage Tracker. My initial editor’s note above overstated how much Algorithmiq’s result narrows Q-CTRL’s claim. The full picture is more favorable to Q-CTRL than the single-site headline suggests. Some questions remain open, so I’ll be watching how this develops.
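For readers who want to check the per-observable arithmetic in the note above, here is a back-of-envelope sketch. It assumes the observables are computed strictly one after another at the same 2.5-minute cost, with no batching or parallelism, which is a simplification on my part. The guess that "over 7,000" refers to the number of distinct pairs among 120 spin-orbitals is also my assumption, not something either team has stated.

```python
import math

# Figures quoted in the editor's note.
MP_MINUTES_PER_OBSERVABLE = 2.5   # Majorana Propagation, one observable, on a laptop
TWO_POINT_CORRELATORS = 7_000     # "over 7,000", so treated here as a lower bound
TDVP_HOURS = 160                  # longest TDVP run reported

# Assumption (mine): the correlator count is the number of distinct
# pairs that can be formed from 120 spin-orbitals.
pairs = math.comb(120, 2)


def sequential_hours(n_observables: int, minutes_each: float) -> float:
    """Total hours if every observable is computed one after another."""
    return n_observables * minutes_each / 60


lower_bound_hours = sequential_hours(TWO_POINT_CORRELATORS, MP_MINUTES_PER_OBSERVABLE)

print(f"distinct pairs among 120 spin-orbitals: {pairs}")
print(f"sequential time for 7,000 correlators: {lower_bound_hours:.1f} hours")
print(f"longer than TDVP's {TDVP_HOURS} hours: {lower_bound_hours > TDVP_HOURS}")
```

Any real comparison would also need to account for how the per-observable cost varies between observables, which this sketch ignores.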

May 6, 2026 – Two minutes and forty-six seconds. That is the QPU execution time Q-CTRL reports for IBM’s 156-qubit Heron processor to simulate the dynamics of 60 interacting electrons across a one-dimensional chain, using 120 qubits and over 9,000 two-qubit gates. The best available classical alternative, an industry-standard tensor network package called ITensor running the TDVP solver, needed over 100 hours to reach the divergence point and exceeded 160 hours for the longest evolution time, yielding up to a 3,000-fold wall-clock speedup.

Q-CTRL, the Australian quantum infrastructure software company, announced today that this result constitutes “practical quantum advantage”: the first time a quantum computer has outperformed the best available classical tool on a problem of known commercial relevance. The technical manuscript details digital quantum simulation of the one-dimensional Fermi-Hubbard model at scales beyond any prior demonstration.

Bottom line: The technical achievement here is genuine and substantial. This appears to be the largest and most accurate gate-based digital simulation of the one-dimensional Fermi-Hubbard model reported to date, measured by chain length, Trotter depth, gate count, and quantitative agreement with classical benchmarks. (Analog cold-atom simulators occupy a different category and have operated at larger lattice sizes.) Whether it constitutes “quantum advantage” depends on how precisely you draw the line between outperforming today’s best tools and outperforming the best tools that could theoretically exist.

What They Actually Did

The Fermi-Hubbard model is the workhorse of condensed matter physics, and one of the strongest near-term candidates for scientifically meaningful quantum computation at the fault-tolerant scale. It captures how electrons move through a material and interact with each other, using the simplest possible set of rules that still produces the complex behavior seen in real materials (including exotic phenomena like high-temperature superconductivity). In one dimension, the model’s static properties can be solved exactly with pen-and-paper mathematics, but simulating how the system evolves over time after a sudden change requires numerical methods that become exponentially more expensive as the simulation grows.

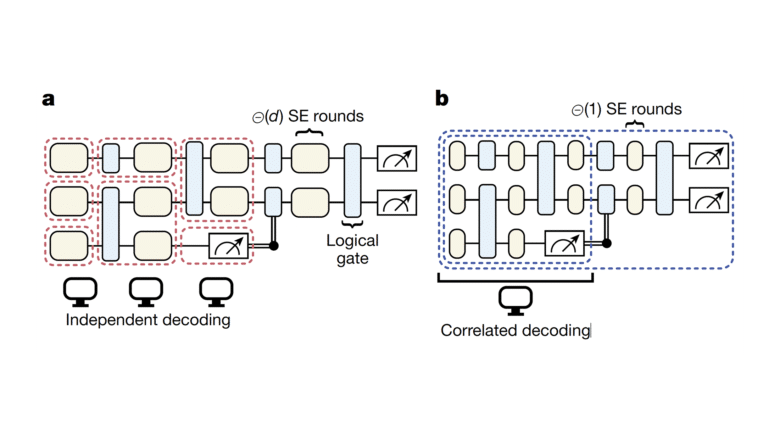

Q-CTRL’s approach used Trotterized time evolution to simulate quench dynamics from a Néel initial state (alternating spin-up and spin-down electrons). Their compilation pipeline is the key innovation. Quantum circuits must be translated from the abstract algorithm into operations the hardware can physically execute, and how you do that translation determines how deep (and therefore how error-prone) the circuit becomes. Q-CTRL developed a mapping scheme that reduces circuit depth by over 80% and gate count by over 60% compared to standard compilation, and layered on error suppression techniques that reduce noise without requiring additional circuit executions.
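To make "Trotterized time evolution" concrete, here is a minimal toy sketch of the idea. It is not Q-CTRL's pipeline and not the Fermi-Hubbard model. It uses a single qubit with a hypothetical Hamiltonian H = aX + bZ (the coefficients are arbitrary values I picked), splits the evolution into small alternating steps of the two non-commuting terms, and compares the result with the exact evolution. A symmetric second-order variant is included for comparison.

```python
import cmath
import math

# Toy single-qubit Hamiltonian H = a*X + b*Z (hypothetical values, not from the paper).
A_COEFF, B_COEFF = 1.0, 0.7


def matmul(p, q):
    """Multiply two 2x2 complex matrices stored as nested tuples."""
    return tuple(
        tuple(sum(p[i][k] * q[k][j] for k in range(2)) for j in range(2))
        for i in range(2)
    )


def exp_x(theta):
    """exp(-i*theta*X) = cos(theta)*I - i*sin(theta)*X."""
    c, s = math.cos(theta), -1j * math.sin(theta)
    return ((c, s), (s, c))


def exp_z(theta):
    """exp(-i*theta*Z) is diagonal with entries e^{-i*theta} and e^{+i*theta}."""
    return ((cmath.exp(-1j * theta), 0), (0, cmath.exp(1j * theta)))


def exact(t):
    """Closed form of exp(-i*t*H), using H^2 = (a^2 + b^2) * I."""
    w = math.hypot(A_COEFF, B_COEFF)
    c, s = math.cos(w * t), -1j * math.sin(w * t) / w
    return ((c + s * B_COEFF, s * A_COEFF), (s * A_COEFF, c - s * B_COEFF))


def trotter(t, steps, second_order=False):
    """Product-formula approximation to exp(-i*t*H) using `steps` time slices."""
    dt = t / steps
    if second_order:
        # Symmetric splitting: half step of X, full step of Z, half step of X.
        half = exp_x(A_COEFF * dt / 2)
        step = matmul(matmul(half, exp_z(B_COEFF * dt)), half)
    else:
        # First-order splitting: full step of X followed by full step of Z.
        step = matmul(exp_x(A_COEFF * dt), exp_z(B_COEFF * dt))
    u = ((1, 0), (0, 1))
    for _ in range(steps):
        u = matmul(u, step)
    return u


def error(u, v):
    """Largest entrywise deviation between two 2x2 matrices."""
    return max(abs(u[i][j] - v[i][j]) for i in range(2) for j in range(2))


target = exact(2.0)
for n in (10, 20, 40):
    e1 = error(trotter(2.0, n), target)
    e2 = error(trotter(2.0, n, second_order=True), target)
    print(f"steps={n:3d}  first-order error={e1:.2e}  second-order error={e2:.2e}")
```

More steps mean a smaller approximation error but a deeper circuit, and on noisy hardware depth is exactly what is in short supply. That trade-off is why the compilation savings matter so much.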

The numbers are worth digging into. Their deepest circuits used 62 qubits, 90 time-evolution steps, 13,829 two-qubit gates, and 452 layers of circuit depth. Their widest circuits used 120 qubits with 9,057 two-qubit gates across 152 layers. These are among the largest circuits successfully executed on any quantum processor for a physics simulation.

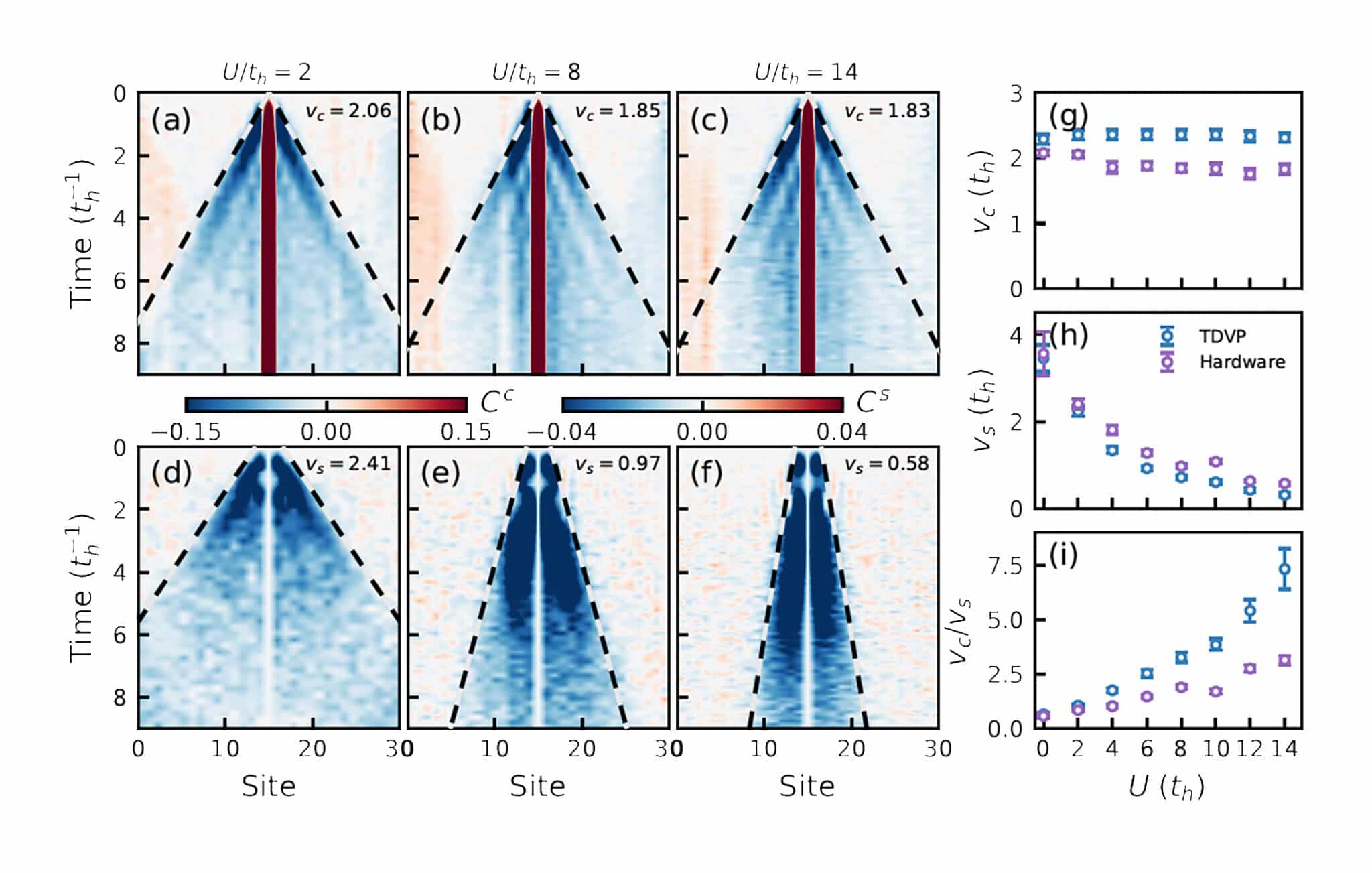

At the 31-site scale (62 qubits), they directly observed spin-charge separation: when you remove one electron from a chain of interacting electrons, the “hole” in the charge distribution and the disturbance in the magnetic ordering travel at different speeds. This phenomenon is a well-known prediction of one-dimensional physics, and the quantum processor reproduced it accurately across a wide range of interaction strengths, matching classical simulations.

At the 60-site scale (120 qubits), they pushed into a regime where the only viable classical comparison is the TDVP tensor network method. Agreement between quantum and classical remained within ~1% RMSE up to evolution time t ≈ 5.2 (in units of the inverse hopping amplitude), improving systematically as the classical simulation’s resolution parameter (bond dimension, χ) increased to 4096, its highest tested setting. Beyond this point, the two methods diverge.
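For readers unfamiliar with the metric, RMSE (root-mean-square error) can be sketched as follows. The occupation values are made-up illustrative numbers, not data from the paper, and I am assuming a plain absolute RMSE over site occupations; the manuscript may normalize differently.

```python
import math


def rmse(quantum, classical):
    """Root-mean-square error between two equal-length sequences of site occupations."""
    if len(quantum) != len(classical):
        raise ValueError("sequences must have the same length")
    squared = [(q - c) ** 2 for q, c in zip(quantum, classical)]
    return math.sqrt(sum(squared) / len(squared))


# Hypothetical occupations for four sites (illustrative only, not from the paper).
qpu_occupations = [0.52, 0.47, 0.51, 0.49]
tdvp_occupations = [0.51, 0.48, 0.50, 0.50]

print(f"RMSE = {rmse(qpu_occupations, tdvp_occupations):.3f}")
```

Because the errors are squared before averaging, a single badly wrong site weighs more heavily than many slightly wrong ones. That is why matching one site by eye says little about the figure across all sites.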

The 3,000x Claim — and Its Caveats

Here is where the story gets more nuanced than the headlines suggest.

The 3,000x speedup is a wall-clock comparison across the full evolution, including the regime where quantum and classical methods no longer agree. The quantum processor completed all Trotter steps in about 2 minutes 46 seconds of QPU time. The TDVP simulation at its highest resolution setting (bond dimension χ = 4096) required over 100 hours to reach the divergence point (t≈5.2) and exceeded 160 hours for the final time step (t=6.0) on an optimized 32-vCPU AWS instance.
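The headline ratio can be sanity-checked from the figures above. This is only a back-of-envelope sketch. Both classical times are reported as lower bounds ("over" and "exceeded"), and I am not assuming this is how the paper itself derives its headline number.

```python
QPU_SECONDS = 2 * 60 + 46  # 2 min 46 s of reported QPU time


def speedup(classical_hours: float, quantum_seconds: float = QPU_SECONDS) -> float:
    """Ratio of classical wall-clock time to QPU execution time."""
    return classical_hours * 3600 / quantum_seconds


# TDVP at bond dimension 4096: time to reach the divergence point (t ≈ 5.2)
print(f"at divergence point: ~{speedup(100):,.0f}x")
# TDVP at bond dimension 4096: time to reach the final step (t = 6.0)
print(f"at final time step:  ~{speedup(160):,.0f}x")
```

On these numbers, the ratio only clears 3,000x at the final time step. Up to the divergence point, where the two methods can still be cross-checked, it is closer to 2,000x.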

The paper is unusually candid about what happens at the boundary: “For evolution times beyond t ≈ 5.2, where agreement between quantum and classical simulations diverges at the largest values of χ, it is not possible to know which simulation methodology most accurately reflects the true system dynamics.” The authors point to the visual continuity of their quantum data as evidence that the quantum simulation may still be correct, but they explicitly acknowledge the uncertainty.

This is the critical point. The 3,000x advantage is measured precisely where neither method can be independently verified. In the regime where both methods can be cross-checked (t < 5.2), the quantum computer produces results that agree with classical simulation to ~1%, but it does not produce results that classical simulation cannot. The advantage is purely in wall-clock time, not in computational reach.

Q-CTRL defines “practical quantum advantage” as outperforming “the best available conventional alternative” on a problem of “known commercial or scientific relevance.” That aligns with how most of the industry uses the term. And the claim holds: TDVP is the workhorse tool in condensed matter physics, ITensor has enabled over 1,250 publications, and the quantum processor delivered equivalent accuracy thousands of times faster. The nuance is in the word “available.” Q-CTRL explicitly benchmarks against today’s best classical tooling, not against the best classical algorithm that could theoretically exist. The paper acknowledges that future GPU acceleration or algorithmic improvements could narrow the gap. That honesty is appropriate, but it also means the advantage claim is time-bound: it holds today, against today’s tools, and must be re-evaluated as classical methods improve.

The paper puts it plainly: “We cannot exclude the possibility that the classical computational runtime could be improved via future GPU acceleration, modifications to the underlying algorithm, or complete replacement with a novel computational method.” A GPU implementation could close some of the runtime gap, and there is active research in that direction, but the paper notes that no readily available GPU acceleration yet incorporates the particle-number and spin conservation symmetries required to reproduce these runtimes. The authors also tested TDVP on 64 cores (double the baseline) and observed negligible improvement, consistent with fundamental bottlenecks in the algorithm’s core mathematical operations, which are inherently sequential and resist the kind of parallelization that makes GPUs effective for other problems.

What Does This Mean for the CRQC Timeline?

This result is a quantum computing milestone, not a CRQC milestone. That distinction matters for readers of PostQuantum.com.

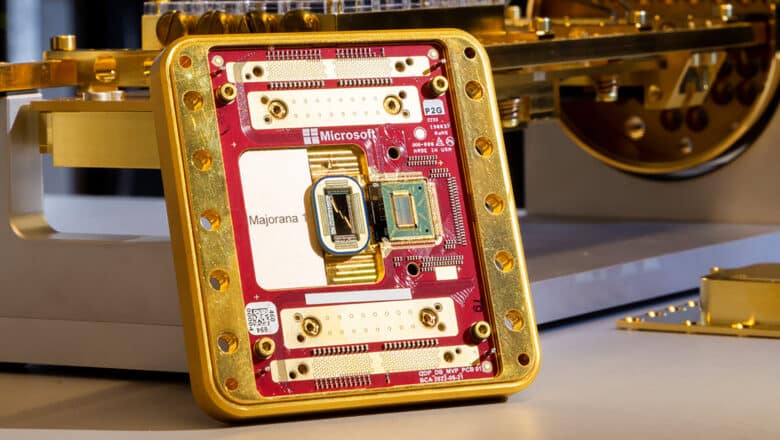

The Fermi-Hubbard simulation is a physics application operating far from the cryptographic domain. The 120 qubits involved are noisy physical qubits running Trotterized circuits with error suppression (not error correction). Breaking RSA-2048 via Shor’s algorithm requires hundreds of thousands to millions of physical qubits operating under fault-tolerant quantum error correction — a challenge mapped in detail through my CRQC Quantum Capability Framework.

The result does matter for the CRQC trajectory, though, in indirect but important ways:

Circuit depth and error suppression are advancing faster than expected. Two years ago, running 9,000+ two-qubit gates on 120 qubits with ~1% RMSE accuracy against classical benchmarks would have seemed implausible, as would the separate experiment that pushed to 13,800+ two-qubit gates across 452 layers. Both results avoided exponential sampling overhead from error mitigation. The Q-CTRL compilation pipeline reduced circuit depth by over 80% and gate count by over 60% compared to standard methods. In CRQC-framework terms, this is best understood as progress in pre-fault-tolerant circuit execution: compilation efficiency, layout selection, and runtime error suppression. It does not demonstrate below-threshold logical operation and should not be read as a direct CRQC milestone. Its relevance is indirect: the engineering stack needed to extract reliable circuit volume from noisy hardware is improving faster than many expected.

The wall-clock comparison reveals a structural advantage. The quantum processor’s runtime scales linearly with Trotter steps and is independent of system size. TDVP runtime grows steeply with both system size and the amount of quantum entanglement in the simulation, because the classical method must track exponentially more information as entanglement increases. This is not an artifact of a bad classical implementation; it reflects a fundamental asymmetry between quantum and classical approaches to entangled dynamics. Against today’s symmetry-adapted TDVP workflow, the scaling asymmetry favors the quantum approach as system size and evolution time increase. But the paper is right to leave room for future classical improvements, including GPU support or altogether different algorithms.

The error suppression approach preserves the quantum speed advantage. Other error-handling approaches require running the same circuit thousands or millions of additional times to statistically cancel out noise, which destroys any wall-clock advantage. Q-CTRL’s approach avoids that trap. Their pipeline does run lightweight characterization and echo circuits for post-processing (bringing total QPU time to about four and a half minutes), but it completely bypasses the multiplicative or exponential overhead of heavier mitigation techniques. This is a design principle that matters for scaling toward larger, more complex simulations.
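To put the scaling asymmetry described above in concrete terms, here is a small sketch of what the bond dimension χ buys a tensor network method. It relies on a standard property of matrix product states: a bond dimension χ can carry at most log2(χ) bits of entanglement entropy across a cut, while representing an arbitrary n-qubit state exactly can require a bond dimension of 2^(n/2) at the middle of the chain. The function names are mine, chosen for illustration.

```python
import math


def max_entropy_bits(bond_dimension: int) -> float:
    """Upper bound on entanglement entropy (in bits) across one cut of an MPS."""
    return math.log2(bond_dimension)


def exact_bond_dimension(n_qubits: int) -> int:
    """Bond dimension needed at the middle cut to hold an arbitrary state exactly."""
    return 2 ** (n_qubits // 2)


print(max_entropy_bits(4096))  # 12.0
print(f"{exact_bond_dimension(120):.3e}")
```

Each additional bit of entanglement that the dynamics generate across a cut doubles the bond dimension needed to follow it faithfully. The cost per step in matrix product state algorithms is commonly quoted as growing roughly with the cube of χ, which is the classical side of the asymmetry. Symmetry-adapted implementations change the constants but, as far as I know, not the basic picture.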

My Analysis

Credit where it is due: the technical execution is excellent. The compilation innovations (pair-interleaved ordering, fSWAP networks, mirrored Trotterization for second-order accuracy) represent real engineering advances that will benefit the broader community. The paper’s comparison against multiple classical baselines (TDVP, Pauli path propagation, exact diagonalization) is thorough and honest.

I also want to credit the manuscript’s intellectual honesty. It explicitly states what it can and cannot verify, acknowledges the possibility of classical catch-up, and avoids overclaiming about the correctness of quantum results in the unverified regime. The press release naturally emphasizes the headline numbers, but the paper gives readers everything they need to form their own assessment. That transparency is rare in quantum computing announcements and worth recognizing.

And this is where the commercial significance comes in. End users of computational tools do not care about asymptotic complexity classes. They care about whether a tool gives them useful answers faster than the alternative. By that measure, Q-CTRL has delivered something real.

Physicists may view this as incremental. For investors, enterprise strategists, and government program managers, it is something different: the first concrete case where someone can point to a quantum computer and say “this solved a real problem faster than the classical alternative, on hardware you can buy access to today.” That changes procurement conversations, board-level risk assessments, and national technology strategies in ways that a complexity-theoretic argument about asymptotic advantage never could. The quantum computing industry has spent years selling a future. IBM’s CEO predicted exactly this moment during the Q1 2026 earnings call. Q-CTRL is now delivering on that prediction, even if the present comes with caveats.

That is why the business signal here may be bigger than the physics. A 1D Fermi-Hubbard simulation, however well-executed, is an incremental scientific contribution. A demonstrated wall-clock advantage on commercially available hardware, validated against an industry-standard classical tool, is a threshold event for procurement offices, investor due diligence, and national quantum strategies. The physics community will debate whether this is “real” advantage. The business community will start writing purchase orders.

Where I push back is on the extrapolation to commercial impact. The press release describes this as relevant to “energy transmission, storage, and generation” and cites the statistic that approximately one-third of global supercomputer time goes to chemistry and materials simulation. The 1D Fermi-Hubbard model is a canonical physics benchmark, but it is not a materials engineering problem. The path from this demonstration to simulations that predict the properties of real materials involves moving to two dimensions (where the fermionic sign problem makes classical methods far worse, but also where quantum circuits become much deeper), handling inhomogeneous systems, and computing observables beyond simple site occupancies. These are hard problems that the paper’s final paragraphs acknowledge.

The trajectory is promising. But as I document in my Quantum Utility Map analysis, the gap between “we can simulate a textbook model faster than classical software” and “we can predict the behavior of a real material that a chemist or engineer needs” remains wide.

Still, this is the kind of result that reinforces the view that the quantum computing trajectory is real and accelerating.
