IBM’s Quantum Advantage Claim: What the Heron-Fugaku Experiment Actually Shows

May 2, 2026 — IBM Chairman and CEO Arvind Krishna told investors Wednesday that partners using IBM quantum hardware will demonstrate “the first examples of quantum advantage this year,” marking IBM’s most specific timeline yet for when quantum computers will outperform classical systems on practical tasks.

Speaking during IBM’s first quarter 2026 earnings call, Krishna cited recent scientific demonstrations as evidence that quantum systems are transitioning from laboratory experiments to reliable computational tools. The company reported better-than-expected first-quarter results: $15.92 billion in revenue and earnings of $1.91 per share.

“We strongly believe that our partners will achieve the first examples of quantum advantage this year, leveraging IBM hardware,” Krishna said during the call. “We continue to make progress in quantum and remain on track to deliver the first large-scale fault-tolerant quantum computer by 2029.”

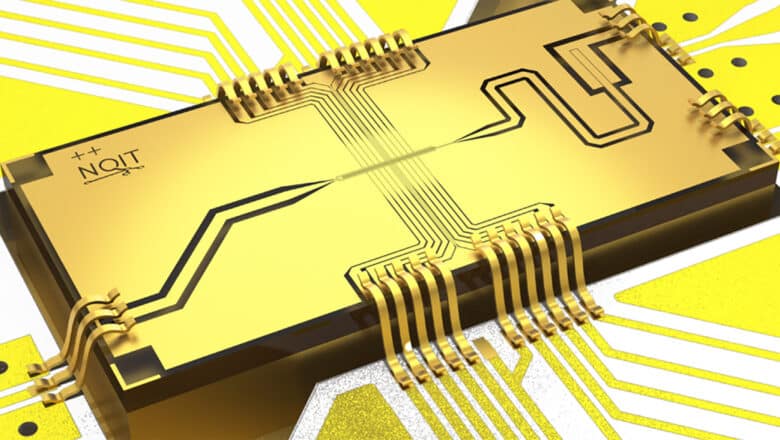

The claim appears to rest primarily on recent work by IBM researchers and Japan’s RIKEN Center for Computational Science, who published results showing a 77-qubit IBM Heron processor working in concert with all 152,064 nodes of Japan’s Fugaku supercomputer to calculate the electronic structure of iron-sulfur clusters. The paper, posted to arXiv on October 31, 2025, demonstrates what the authors call “the largest computation of electronic structure involving quantum and classical high-performance computing” to date.

The research team used 72 of Heron’s qubits to model 36 molecular orbitals in iron-sulfur compounds (2Fe-2S and 4Fe-4S clusters) under Jordan-Wigner encoding, which assigns one qubit to each spin orbital, so 36 spatial orbitals require 72 qubits. Problems of this size are beyond the reach of exact diagonalization. The results improved upon 2024’s quantum state-of-the-art by 278 millihartrees (mEh) and surpassed the accuracy of the coupled cluster singles and doubles (CCSD) method commonly used in computational chemistry.
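
The qubit count and the exact-diagonalization limit can be checked with a few lines of arithmetic. The sketch below is illustrative only: the 36 orbitals come from the paragraph above, while the electron counts (half filling) are an assumption chosen to show the largest possible sector, not figures from the paper.

```python
from math import comb


def jordan_wigner_qubits(n_spatial_orbitals: int) -> int:
    """Jordan-Wigner uses one qubit per spin orbital (two per spatial orbital)."""
    return 2 * n_spatial_orbitals


def determinant_count(n_spatial_orbitals: int, n_alpha: int, n_beta: int) -> int:
    """Number of Slater determinants with fixed spin-up and spin-down electron counts."""
    return comb(n_spatial_orbitals, n_alpha) * comb(n_spatial_orbitals, n_beta)


N_ORBITALS = 36  # from the article
# ASSUMPTION: half filling, used only to illustrate the worst-case sector size.
N_ALPHA = N_BETA = 18

qubits = jordan_wigner_qubits(N_ORBITALS)
fock_dim = 2 ** qubits  # full qubit Hilbert space
sector_dim = determinant_count(N_ORBITALS, N_ALPHA, N_BETA)
bytes_needed = sector_dim * 16  # one complex128 amplitude per determinant

print(f"qubits: {qubits}")
print(f"full Hilbert space dimension: {fock_dim:.3e}")
print(f"half-filled sector dimension: {sector_dim:.3e}")
print(f"memory for one state vector: {bytes_needed:.3e} bytes")
```

Even restricted to a single particle-number sector, storing one exact state vector under this assumption would need far more memory than any existing machine provides, which is why approximate methods are the only option at this size.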

The experiment employed a closed-loop workflow where a differential evolution optimizer synchronized quantum calculations on Heron with classical computations distributed across Fugaku. The quantum processor handled specific quantum mechanical calculations while Fugaku managed data preparation, error mitigation, and result aggregation.
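
The shape of such a closed loop can be sketched in plain Python. This is a toy under stated assumptions, not the authors' implementation: `sample_quantum_processor` and `classical_postprocess` are hypothetical stand-ins for the Heron and Fugaku stages, the objective is a made-up noisy function, and the optimizer is a textbook rand/1/bin differential evolution with arbitrary settings.

```python
import random


def sample_quantum_processor(params, rng):
    """HYPOTHETICAL stand-in for running a parameterized circuit on hardware.

    Returns a noisy energy-like number. A real workflow would return
    measurement samples, not a scalar.
    """
    ideal = sum((p - 0.5) ** 2 for p in params)
    return ideal + rng.gauss(0.0, 0.01)


def classical_postprocess(raw_value):
    """HYPOTHETICAL stand-in for the classical side (mitigation, aggregation)."""
    return raw_value  # identity here; the real step is the expensive part


def energy(params, rng):
    """One pass through the loop: quantum sampling, then classical processing."""
    return classical_postprocess(sample_quantum_processor(params, rng))


def differential_evolution(dim=4, pop_size=12, generations=60, f=0.7, cr=0.9, seed=0):
    """Minimal rand/1/bin differential evolution that minimizes `energy`."""
    rng = random.Random(seed)
    pop = [[rng.uniform(-1.0, 1.0) for _ in range(dim)] for _ in range(pop_size)]
    scores = [energy(ind, rng) for ind in pop]
    for _ in range(generations):
        new_pop, new_scores = list(pop), list(scores)
        for i in range(pop_size):
            # Pick three distinct population members other than the target.
            a, b, c = rng.sample([j for j in range(pop_size) if j != i], 3)
            forced = rng.randrange(dim)  # at least one coordinate is mutated
            trial = [
                pop[a][k] + f * (pop[b][k] - pop[c][k])
                if (rng.random() < cr or k == forced)
                else pop[i][k]
                for k in range(dim)
            ]
            trial_score = energy(trial, rng)
            # Greedy selection: keep the trial only if it is no worse.
            if trial_score <= scores[i]:
                new_pop[i], new_scores[i] = trial, trial_score
        pop, scores = new_pop, new_scores
    best = min(range(pop_size), key=scores.__getitem__)
    return pop[best], scores[best]


best_params, best_score = differential_evolution()
print(best_params, best_score)
```

The point of the sketch is the division of labor: the optimizer only sees a scalar score, so the quantum and classical stages behind `energy` can be swapped or scaled independently.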

During the earnings call, Krishna also highlighted a separate collaboration with Cleveland Clinic where researchers used IBM quantum hardware to simulate a 300-atom molecular system for pharmaceutical research. He described both experiments as “significant demonstrations to date that quantum computers can serve as reliable tools for scientific discovery.”

The timing of Krishna’s announcement coincides with IBM’s appointment of Jay Gambetta as the new Director of IBM Research. Gambetta, who previously led IBM’s quantum computing division, has been instrumental in developing the company’s quantum roadmap. In recent interviews, Gambetta has characterized quantum advantage as “a scientific debate” rather than a binary milestone, suggesting the transition will be gradual rather than sudden.

IBM has also launched a Quantum Advantage Tracker initiative, referenced in a Nature article published April 30, 2026, aimed at standardizing how quantum computing performance is measured and compared across different systems. The tracker comes as the field faces criticism for inconsistent benchmarking practices that make it difficult to evaluate competing quantum advantage claims.

The push for standardized metrics aligns with broader industry efforts including the UK’s National Physical Laboratory’s QCMet benchmarking suite, which provides standardized tests for quantum computing systems. These frameworks aim to bring clarity to a field where each research group typically uses different hardware configurations, algorithms, and evaluation criteria.

Krishna said IBM has released a blueprint for “quantum-centric supercomputing” that outlines how quantum processors will integrate with classical computing infrastructure. Rather than replacing classical computers, the approach positions quantum systems as specialized accelerators for specific computational tasks within larger hybrid workflows.

The company maintains its commitment to delivering a fault-tolerant quantum computer by 2029, which would use quantum error correction to enable sustained calculations on complex problems. Current quantum processors, including IBM’s Heron, operate in what’s known as the noisy intermediate-scale quantum (NISQ) regime, where calculations are limited by accumulating errors.

My Analysis

Let’s cut through the earnings call optimism and examine what IBM is actually claiming here. When Krishna says partners will achieve “quantum advantage” this year, he’s making a carefully calibrated statement that deserves scrutiny.

First, I need to address the elephant in the room: quantum advantage means different things to different people. IBM defines it as achieving results that are cheaper, faster, or more accurate than classical computation alone. That’s a much broader definition than the academic gold standard of quantum computational supremacy, which means solving a problem that’s intractable for any classical computer.

The iron-sulfur cluster experiment that underpins this announcement is genuinely impressive engineering. Orchestrating a 77-qubit quantum processor with every single node of the world’s fourth-most-powerful supercomputer represents a technical achievement that shouldn’t be understated. This is the first time quantum and classical resources have been integrated at this scale in a closed-loop workflow.

But here’s where we need to pump the brakes on the hype train.

The quantum-classical hybrid calculation achieved an accuracy improvement of 278 millihartrees over previous quantum methods. That sounds impressive until you realize the extrapolated zero-variance energy remains approximately 0.1 Hartree away from what the density matrix renormalization group (DMRG) method achieves on classical computers. In chemistry terms, 0.1 Hartree is about 63 kcal/mol. That is a massive error when you’re trying to predict molecular behavior: the conventional target for “chemical accuracy” is roughly 1 kcal/mol.
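
For readers who want to check the unit conversion, here is a minimal sketch. The conversion factor is the standard rounded value of one Hartree in kcal/mol; the 1 kcal/mol "chemical accuracy" threshold is the usual convention in the field, not a figure from the paper.

```python
HARTREE_TO_KCAL_PER_MOL = 627.509  # 1 Hartree in kcal/mol (rounded)
CHEMICAL_ACCURACY_KCAL_PER_MOL = 1.0  # conventional threshold, an assumption here


def hartree_to_kcal_per_mol(energy_hartree: float) -> float:
    """Convert an energy from Hartree to kcal/mol."""
    return energy_hartree * HARTREE_TO_KCAL_PER_MOL


gap_kcal = hartree_to_kcal_per_mol(0.1)            # remaining gap to DMRG
improvement_kcal = hartree_to_kcal_per_mol(0.278)  # gain over earlier quantum results
gap_in_accuracy_units = gap_kcal / CHEMICAL_ACCURACY_KCAL_PER_MOL

print(f"0.1 Hartree gap: {gap_kcal:.1f} kcal/mol")
print(f"278 mEh improvement: {improvement_kcal:.1f} kcal/mol")
print(f"gap is about {gap_in_accuracy_units:.0f}x chemical accuracy")
```

In other words, the improvement over earlier quantum results is large in absolute terms, but the remaining gap is still tens of times larger than what chemists usually need.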

So while the quantum calculation surpassed CCSD accuracy (a common but not state-of-the-art classical method), it still falls significantly short of the best classical techniques. This is progress, absolutely. But calling it quantum advantage requires some definitional gymnastics.

What I find more significant than the accuracy claims is the workflow itself. The closed-loop integration between Heron and Fugaku demonstrates something I’ve been tracking in my CRQC Quantum Capability Framework: we’re entering the era where quantum processors function as specialized accelerators within larger computational systems rather than standalone miracle machines.

This hybrid approach aligns with how I see the path to cryptographically relevant quantum computers (CRQC) unfolding. We won’t wake up one morning to find classical encryption suddenly broken. Instead, quantum systems will gradually take on larger portions of specific computational tasks while classical systems handle everything else.

Jay Gambetta’s characterization of quantum advantage as “a scientific debate” rather than a clear milestone is refreshingly honest. He’s acknowledging what many of us have been saying: the transition from classical to quantum superiority will be messy, contested, and domain-specific.

The proliferation of quantum advantage claims, from HSBC and IBM’s optimization work to Quantinuum’s Helios experiments, highlights why standardized benchmarking efforts like IBM’s Quantum Advantage Tracker and the UK NPL’s QCMet suite are crucial. Without consistent metrics, we’re comparing apples to quantum oranges.

Here’s my take on what this announcement really means:

IBM is demonstrating that quantum-classical hybrid systems can tackle problems at scales where pure classical approaches become computationally expensive. The iron-sulfur calculation required resources beyond exact diagonalization capabilities. That’s meaningful progress.

But we’re still in the realm of scientific demonstrations, not practical applications. The 0.1 Hartree gap between the quantum results and the best classical methods suggests that years of improvement are still needed before quantum chemistry calculations become truly competitive.

More importantly for my readers concerned about cryptographic security: this announcement doesn’t change the timeline to Q-Day. IBM’s 2029 target for fault-tolerant quantum computing remains aspirational but plausible. The company’s roadmap through Nighthawk and Loon processors shows steady progress toward error-corrected systems.

The real story here isn’t that quantum computers are about to revolutionize chemistry or break encryption. It’s that we’re watching the careful construction of hybrid computational infrastructure that will eventually enable those breakthroughs.

IBM’s claim of imminent quantum advantage is both overstated and understated. Overstated because the chemistry results still lag behind classical methods. Understated because the successful orchestration of quantum and classical resources at this scale represents a fundamental shift in how we’ll compute in the future.
