Theorists Prove Passive Quantum Memory Works in Three Dimensions, Settling a 25-Year-Old Question

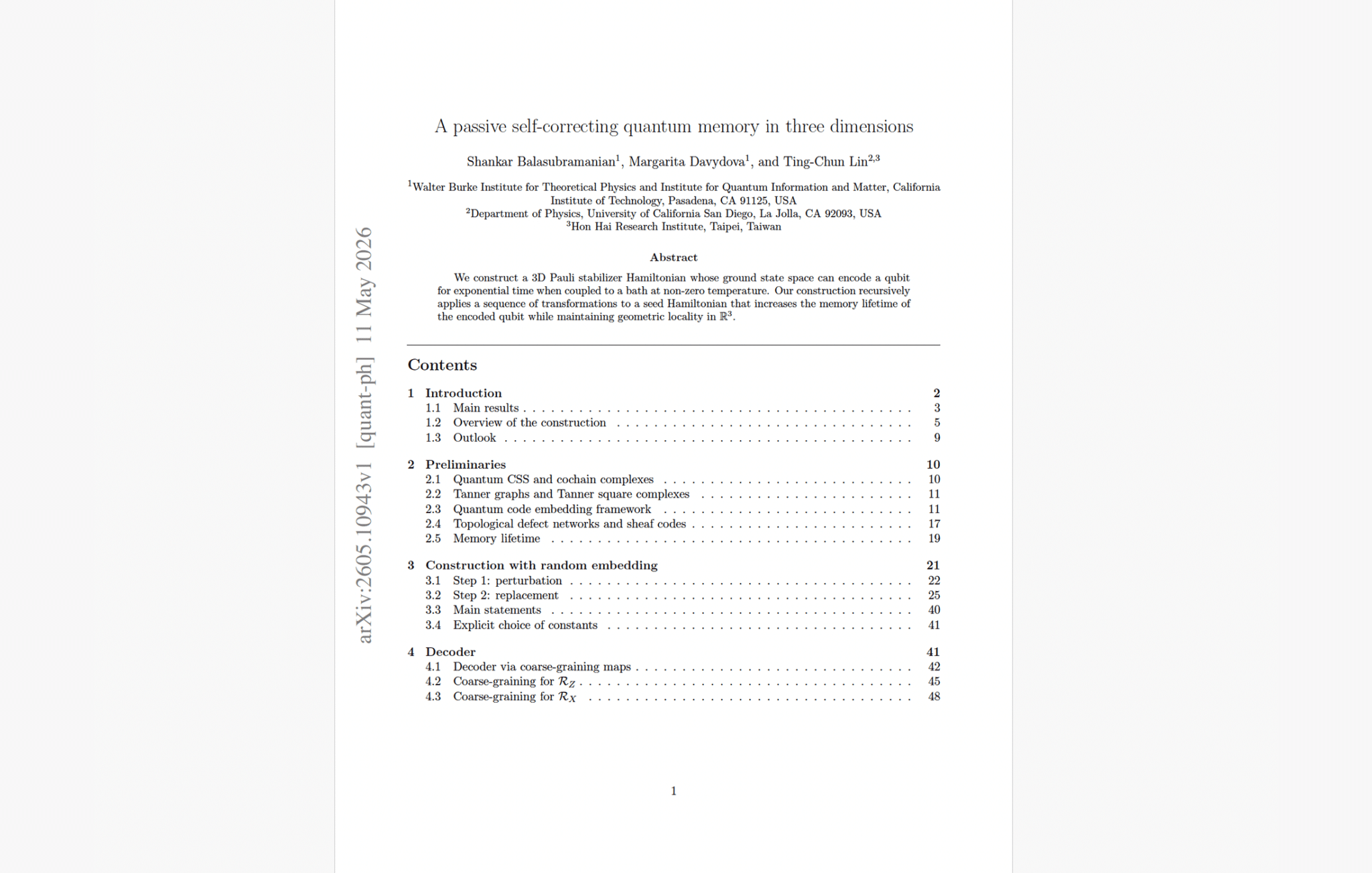

May 12, 2026 – A team of researchers from Caltech and UC San Diego has constructed the first provably self-correcting quantum memory that works in three spatial dimensions. The preprint, posted to arXiv on May 11, presents a family of 3D stabilizer Hamiltonians whose ground states can encode a qubit for exponentially long times at sufficiently low temperatures, without any active error correction.

The paper, authored by Shankar Balasubramanian and Margarita Davydova of Caltech’s Walter Burke Institute for Theoretical Physics and Institute for Quantum Information and Matter (IQIM), together with Ting-Chun Lin of UC San Diego and the Hon Hai Research Institute, resolves what has been one of the most persistent open questions in quantum information theory: whether a passive quantum memory is possible in the three-dimensional space we actually inhabit.

The result satisfies the strongest standard definition of self-correction: for sufficiently low temperature, memory lifetime grows as exp(Θ(n^η)) for some constant η > 0, meaning exponentially in a fractional power of the system size. Optimizing the exponent η remains open, but the scaling is what matters: even moderate system sizes produce memory lifetimes that dwarf any practical timescale, provided the temperature stays below a critical threshold. The authors prove both that such a critical temperature exists and that the construction is geometrically local, with each interaction term involving only nearby qubits at bounded density per unit volume.
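To get a feel for that scaling, here is a minimal Python sketch. The constants `c` and `eta` are placeholders chosen purely for illustration: the source says only that some η > 0 exists, and the Θ hides constants that are not given here. The function returns log10 of the lifetime so that large system sizes do not overflow.

```python
import math

def log10_lifetime(n: int, eta: float = 0.25, c: float = 1.0) -> float:
    """log10 of a toy lifetime exp(c * n**eta), in arbitrary time units.

    eta and c are illustrative assumptions, not values from the paper.
    """
    return c * n**eta / math.log(10)

for n in (10**3, 10**6, 10**9, 10**12):
    print(f"n = {n:.0e}: lifetime ~ 10^{log10_lifetime(n):.1f} time units")
```

Even with a small assumed exponent, the lifetime in this toy model grows faster than any polynomial in n, which is the qualitative point of the exp(Θ(n^η)) bound.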

Why This Problem Was So Hard

The idea of a self-correcting quantum memory dates to the early 2000s, when researchers recognized that the 4D toric code (a lattice model defined on a four-dimensional grid) could protect quantum information at finite temperature through the same mechanism that a ferromagnet uses to store classical bits. Below a critical temperature, the energy cost of growing error regions provides a natural barrier that thermal fluctuations cannot easily overcome. The system corrects itself passively, without any measurement or intervention.

In two dimensions, the situation is categorically different. The 2D toric code fails as a self-correcting memory because point-like excitations can be created by string-like operators at finite energy cost, and those excitations proliferate thermally regardless of system size. A series of no-go results established that geometrically local 2D stabilizer codes have only a constant energy barrier and so cannot self-correct.

That left three dimensions as the frontier — and for 25 years, the field made progress that was tantalizing but never definitive.

Jeongwan Haah’s cubic code, introduced in 2011, was the first real departure. Its excitations sit on fractal subsets of the lattice rather than at the ends of strings, producing an energy barrier that grows logarithmically with system size. But subsequent numerical and analytical work showed the memory lifetime remains constant. The logarithmic barrier does not produce true self-correction. A 2014 result by Michnicki achieved a polynomial energy barrier through “welded” toric codes, but the memory lifetime was again shown to be constant because the construction inherits the thermal instability of its 3D toric code building blocks.

The pattern repeated with each new attempt. Codes with optimal parameters in 3D (energy barriers scaling as n^(1/3), optimal distance scaling) were constructed in a series of papers from 2023–2024, culminating in the Layer Codes by Williamson and Baspin. These codes achieve the best theoretically possible scaling in three dimensions, but as Gu et al. and Williamson independently showed in October 2025, they exhibit only “partial” self-correction: memory time grows exponentially with system size only up to a length scale that itself depends on temperature. Beyond that scale, the protection saturates.

In November 2024, Lin (one of the authors of the present paper) co-authored a paper that proposed two new candidate constructions and reviewed why all existing 3D codes fail to self-correct. That paper was explicit about its provisional status: the constructions were candidates, not proofs. Eighteen months later, the new paper delivers the proof.

How the Construction Works

The authors build their code iteratively, starting from a small “seed” surface code and applying alternating transformations they call R_X and R_Z. Each R_X step doubles the syndrome cost of X-type Pauli errors; each R_Z step does the same for Z-type errors. After k iterations, the system has n_k = exp(Θ(k)) qubits and a single encoded logical qubit.
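The bookkeeping behind that recursion can be sketched in a few lines of Python. This is a toy model, not the paper's construction: it assumes one recursive level consists of one R_X step followed by one R_Z step, and that each level multiplies the qubit count by a fixed factor `growth`. Both `growth` and `seed_qubits` are hypothetical values picked for illustration.

```python
import math

def toy_recursion(levels: int, growth: float = 16.0, seed_qubits: int = 9):
    """Return (level, qubits, X cost, Z cost) after each recursive level.

    Assumptions (not from the paper): one level = one R_X step followed by
    one R_Z step, and each level multiplies the qubit count by `growth`.
    """
    n, cost_x, cost_z = float(seed_qubits), 1, 1
    rows = []
    for k in range(1, levels + 1):
        cost_x *= 2   # R_X doubles the syndrome cost of X-type errors
        cost_z *= 2   # R_Z doubles the syndrome cost of Z-type errors
        n *= growth   # qubit count grows geometrically: n_k = exp(Theta(k))
        rows.append((k, n, cost_x, cost_z))
    return rows

def implied_exponent(growth: float) -> float:
    """Power p such that a cost of 2**k scales as n_k**p when n_k ~ growth**k."""
    return math.log(2.0) / math.log(growth)

for k, n, cx, cz in toy_recursion(5):
    print(f"level {k}: n ~ {n:.3g}, X cost = {cx}, Z cost = {cz}")
print("implied exponent:", implied_exponent(16.0))
```

In this toy picture the syndrome cost 2^k is a fixed power of the qubit count, which is consistent with a lifetime that is exponential in a fractional power of n. The actual exponent depends on details of the construction that this sketch does not model.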

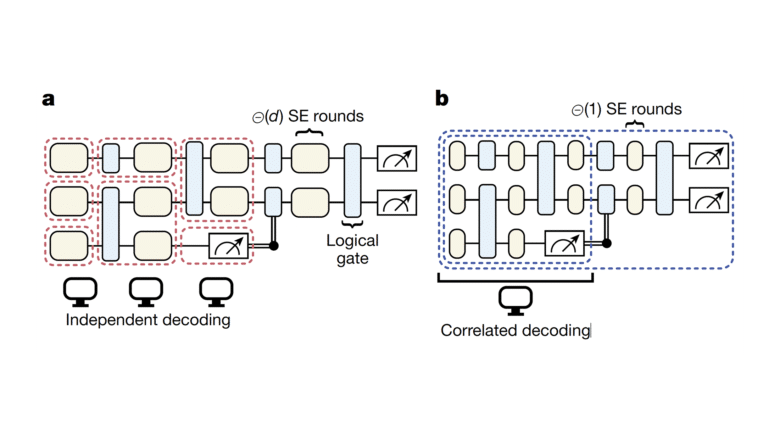

What makes this work when previous constructions failed is that the doubling happens at every scale and for both error types. Previous constructions that increased the energy barrier for one error type often inherited vulnerabilities to the other, or suffered from thermal instabilities at intermediate scales. By alternating between X-type and Z-type hardening at each recursive level, the new construction ensures that any error large enough to threaten the logical qubit must pay an energy cost that grows with the system size. Crucially, the entropic cost of producing such errors is overwhelmed by that energy cost at low enough temperatures.

To maintain geometric locality in three dimensions, the authors use a random embedding technique inspired by recent work on 3D code constructions. At each iteration, the code’s abstract structure (described by a “Tanner square complex”) is embedded into ℝ³ by first scaling the previous embedding and then applying random perturbations whose existence is guaranteed by the Lovász local lemma. This ensures two properties: bounded density (no unit ball in space contains more than a constant number of qubits) and constant interaction range (each Hamiltonian term involves only qubits within a ball of constant radius).
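The two locality properties are easy to state as checks on a candidate embedding. The Python sketch below does not perform the random embedding or use the Lovász local lemma. It only measures, for a given list of qubit coordinates and Hamiltonian terms, the quantities that must stay bounded. The function names and the data layout (coordinate tuples, terms as tuples of qubit indices) are assumptions made for this example.

```python
import math
from itertools import combinations

Point = tuple[float, float, float]

def max_neighbors_within(points: list[Point], radius: float = 1.0) -> int:
    """Largest number of qubits within `radius` of any single qubit (itself included)."""
    return max(
        sum(1 for q in points if math.dist(p, q) <= radius)
        for p in points
    )

def max_term_diameter(points: list[Point], terms: list[tuple[int, ...]]) -> float:
    """Largest distance between two qubits that appear in the same Hamiltonian term."""
    return max(
        (math.dist(points[i], points[j])
         for term in terms
         for i, j in combinations(term, 2)),
        default=0.0,
    )

# Usage: four qubits near the origin and two 2-body terms.
points = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
terms = [(0, 1), (1, 2)]
print(max_neighbors_within(points, radius=2.0), max_term_diameter(points, terms))
```

Counting neighbors within radius 2 of each qubit gives an upper bound on the number of qubits in any ball of radius 1, since any two points inside such a ball are at most distance 2 apart. A family of embeddings is geometrically local in the sense described above if both numbers stay bounded as the code grows.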

Proving that this construction actually self-corrects requires a Peierls-type argument, adapted for the multi-scale structure. The authors define a renormalization group decoder that processes errors from smallest to largest scale. Any error configuration that causes the decoder to fail must contain a connected error cluster with syndrome weight proportional to 2^k, where k is the number of recursive levels. The number of such clusters grows at most exponentially in their syndrome weight, while the Boltzmann factor suppressing each one decays exponentially in that same weight, at a rate set by the inverse temperature. Below a critical temperature the suppression wins, and a standard Peierls counting argument shows that the probability of a decoding failure is exponentially small in that weight, and hence in a power of the system size.
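The energy-versus-entropy competition in a Peierls argument can be illustrated with a geometric series. The sketch below is a textbook-style toy, not the paper's proof: it assumes at most `mu**w` connected clusters of syndrome weight `w`, each with Boltzmann weight `exp(-beta * w)`, and it drops prefactors such as the number of places a cluster can be anchored. The counting constant `mu` is hypothetical.

```python
import math

def peierls_bound(beta: float, w_min: int, mu: float = 10.0) -> float:
    """Geometric-series bound on the total weight of clusters with syndrome weight >= w_min.

    Assumes at most mu**w connected clusters of syndrome weight w, each
    suppressed by exp(-beta * w). mu is a hypothetical counting constant.
    """
    x = mu * math.exp(-beta)
    if x >= 1.0:
        return float("inf")  # entropy wins: the series diverges and the bound is vacuous
    return x**w_min / (1.0 - x)

beta_c = math.log(10.0)  # toy threshold: the bound is useful only for beta > ln(mu)
for k in range(1, 7):
    print(k, peierls_bound(beta=beta_c + 1.0, w_min=2**k))
```

Above the toy threshold in inverse temperature, the bound shrinks doubly exponentially in the number of levels k, because the minimum cluster weight itself doubles with each level. Below it, the number of clusters outgrows the Boltzmann suppression and the argument gives nothing.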

The authors also present a second construction with explicit (deterministic) embedding, which avoids the probabilistic step entirely and gives concrete qubit layouts with optimized packing. The memory lifetime proof for this version is deferred to future work, but the structural similarity to the random construction makes the authors confident the result extends.

What This Does Not Mean

The paper is careful about its boundaries.

First, perturbative stability (the property that the self-correcting behavior survives when the Hamiltonian is perturbed by small local terms) is not yet proven. The authors note that proving this requires establishing the TQO-2 condition, a local-to-global consistency requirement for ground spaces used in perturbative-stability proofs, which they plan to address in an updated version. Without perturbative stability, the construction exists in a somewhat idealized setting where the Hamiltonian is exact.

Second, initialization is an open problem. The proof assumes the qubit is encoded via a thermal encoding: roughly, the logical state tensored with a Gibbs state on the remaining degrees of freedom. Preparing this state efficiently and passively (without active intervention) is not demonstrated. The authors note that energetic bottlenecks in their construction might obstruct the rapid thermalization needed for passive initialization.

Third, this is a memory, not a computer. The paper’s own outlook section asks whether a thermally stable quantum computer exists in three dimensions and identifies the main bottleneck: implementing a fault-tolerant non-Clifford gate via code-switching while the system is coupled to a thermal bath. That remains wide open.

Finally, this is a preprint posted yesterday. A result claiming to resolve a 25-year-old open problem will attract intense scrutiny. The proof runs over 100 pages of rigorous mathematics spanning chain complexes, homological perturbation theory, Peierls arguments, sheaf codes, and coarse-graining decoders. Independent verification will take time. That said, Davydova presented a talk titled “A passive self-correcting quantum memory in three dimensions” at the Simons Institute’s Quantum Interactive Dynamics workshop on April 6, 2026, suggesting the result has been circulating in the community for at least a month.

My Analysis

This is the kind of result that most quantum information theorists expected might never arrive – or at least, not for another decade. The problem of 3D self-correcting quantum memory has been a graveyard of promising ideas since Haah’s cubic code appeared in 2011. Each new construction achieved some impressive property (optimal energy barriers, optimal code parameters, polynomial distance scaling) and then turned out to fall short of true self-correction for reasons that seemed almost structural.

The field had begun to develop a soft consensus that 3D self-correction might simply be impossible for stabilizer codes, perhaps requiring an entirely different approach. The no-go results for translation-invariant codes (showing at most logarithmic energy barriers) and the repeated failures of codes built from glued surface codes or toric codes reinforced this pessimism.

What Balasubramanian, Davydova, and Lin appear to have done is find the right combination of ideas from multiple research threads: Haah-style fractal structure, homological algebra, random embedding techniques from recent optimal-code constructions, and the alternating X/Z hardening that ensures both Pauli error types are suppressed at every scale. The construction is not simple, but it is conceptually clean: start small, grow recursively, alternate between protecting against X and Z errors, and embed carefully to maintain 3D locality.

What It Means for the CRQC Trajectory

Let me be direct: this does not change Q-Day predictions or the urgency of post-quantum cryptography (PQC) migration. A self-correcting quantum memory is a storage device, not a computation engine. Building a cryptographically relevant quantum computer (CRQC) requires not just storing logical qubits but performing fault-tolerant operations on them: logical gates, measurements, magic state distillation, and algorithm integration. The paper’s own outlook section is clear that extending self-correction from memory to computation remains an open problem.

That said, the result matters for the long-term trajectory in two ways.

First, it eliminates a potential fundamental obstacle. Before this paper, one reasonable position was that the physics of three-dimensional space might simply prohibit passive quantum information storage, that the only path to scalable quantum error correction was active, with all the overhead that entails. That argument is now off the table. The physics allows it. Engineering it is a separate question, but knowing the physics is on your side is not a small thing.

Second, it has implications for the overhead picture. Active quantum error correction is the dominant overhead driver in every serious CRQC resource estimate, from Gidney’s RSA-2048 analysis to the Google/Oratomic ECC papers. A world where some portion of the quantum memory can self-correct passively while the computation engine handles active error correction on a smaller working set is a world where CRQC resource estimates might eventually decrease further. That world is still far away in engineering terms, but the theoretical foundation now exists.

Within my CRQC Quantum Capability Framework, this result is most relevant to B.1: Quantum Error Correction and D.3: Continuous Operation. It demonstrates a qualitatively different approach to protecting quantum information, one where the Hamiltonian itself does the work rather than external classical processing. And passive self-correction is, in a sense, the ultimate form of long-duration stability: a system that maintains coherence because its own thermal dynamics drive errors away, not because an external controller constantly intervenes. There are also downstream implications for E.1: Engineering Scale and Manufacturability, since a passive memory that does not require continuous high-bandwidth classical decoding infrastructure is, in principle, a simpler engineering target.

The Honest Assessment

I want to avoid both traps here. The hype trap would be framing this as “the quantum hard drive has been invented.” It hasn’t. This is a mathematical proof of existence, not a device, and there are real gaps between the proof and anything you could build. The critical temperature below which self-correction works is not explicitly computed in the paper (the authors bound it as T_c ≳ 1/log ℓ, where ℓ is the geometric scale parameter), and it may be impractically low. The random embedding construction requires an astronomically large scale factor (ℓ ≈ 5.4 × 10^24), which pushes the critical temperature impractically close to absolute zero. The explicit construction reduces this dramatically to ℓ = 8, giving a far more compact and concrete geometry, but its full self-correction proof is not yet complete.

The denial trap would be dismissing this because it is “merely theoretical” or “too far from practice.” That misses the point of what just happened. For a quarter century, the field’s best mathematicians and physicists could not prove that passive quantum memory works in 3D, despite considerable effort and motivation. The no-go results had accumulated to the point where the problem looked structurally intractable. A proof that it is possible, even a highly abstract one, resets the boundaries of what can be attempted. The history of quantum error correction is one of theoretical results preceding engineering breakthroughs by years or decades. Shor’s algorithm was theoretical in 1994; as of 2026, nobody has run it on a cryptographically relevant problem. But the theory is why we are all here, reading about post-quantum cryptography and preparing for a future that the mathematics says is coming.

This paper belongs in that category: a result that changes what theorists believe is possible without immediately changing the engineering timeline. It deserves attention proportionate to what it accomplishes, and sobriety proportionate to what remains undone. Both are considerable.

One more thing worth noting: the acknowledgments credit “ChatGPT 5.5” for improving the manuscript’s clarity. There is a certain recursive elegance in an AI-polished manuscript about self-correcting quantum memories appearing in an era when the broader question of whether quantum computing will reshape our information infrastructure remains one of the defining technological uncertainties of this decade.
