China’s Quantum Computing Hardware: The Core Capability the West Keeps Misjudging


Author’s Note: This is Article 6 of 10 in China’s Quantum Ambition, my Deep Dive series investigating whether China is on track to become the world’s first quantum superpower. This is the article that matters most for cryptographic security. It maps China’s gate-based quantum computing progress — superconducting, photonic, and trapped-ion — against the capabilities needed to build a cryptographically relevant quantum computer. The full thesis is laid out in Underestimating China: Why Beijing Could Win the Quantum Race.

Introduction

The published record suggests China trails the US by about a year. The actual gap may be narrower — or it may already be closed.

In December 2025, a team at the University of Science and Technology of China quietly published a paper in Physical Review Letters demonstrating something only one other laboratory on Earth had achieved: quantum error correction operating below the surface code threshold. The paper was selected as a PRL Editors’ Suggestion and cover article. It described how their 107-qubit Zuchongzhi 3.2 processor could suppress logical errors in a way that actually improves as the error-correcting code gets larger, the defining prerequisite for building a fault-tolerant quantum computer. I covered the result in a separate analysis.

Google had demonstrated the same milestone twelve months earlier with its Willow processor. Twelve months is a meaningful gap. But here is the question that should keep Western strategists awake: does that twelve-month gap represent the actual distance between China and the United States — or merely the distance between their publication dates?

This is not a rhetorical question. Chinese quantum scientists operate under structural constraints that have no parallel in the Western research ecosystem. The MERICS China Tech Observatory documented that passports of “strategic scientists” are held by their institutions and released only under strict conditions — Pan Jianwei, despite having earned his PhD in Vienna and collaborated extensively with European institutions, reportedly can no longer travel overseas. Quantum encryption technology was added to China’s export restriction list in 2020. The U.S.-China Economic and Security Review Commission’s November 2025 report states bluntly: “The Commission assumes China is aggressively pursuing cryptographically relevant quantum computing and deliberately obscuring where its most sophisticated programs are located.”

In this environment, Chinese researchers do not have the same incentives, or permissions, to rush results onto arXiv the moment an experiment succeeds. Several analysts have suggested that Chinese publications may lag actual capability by 18 to 24 months, reflecting internal review, classification assessment, and strategic timing. I cannot verify that specific timeframe, but the structural conditions that would produce such a delay are well-documented and undeniable.

So when I say the published record shows China roughly 12 months behind the United States on the most critical error correction benchmarks, I am being precise about what the evidence supports. I am also being honest about what it does not tell us.

The Zuchongzhi Lineage: Four Years from Lab Demo to Error Correction Milestone

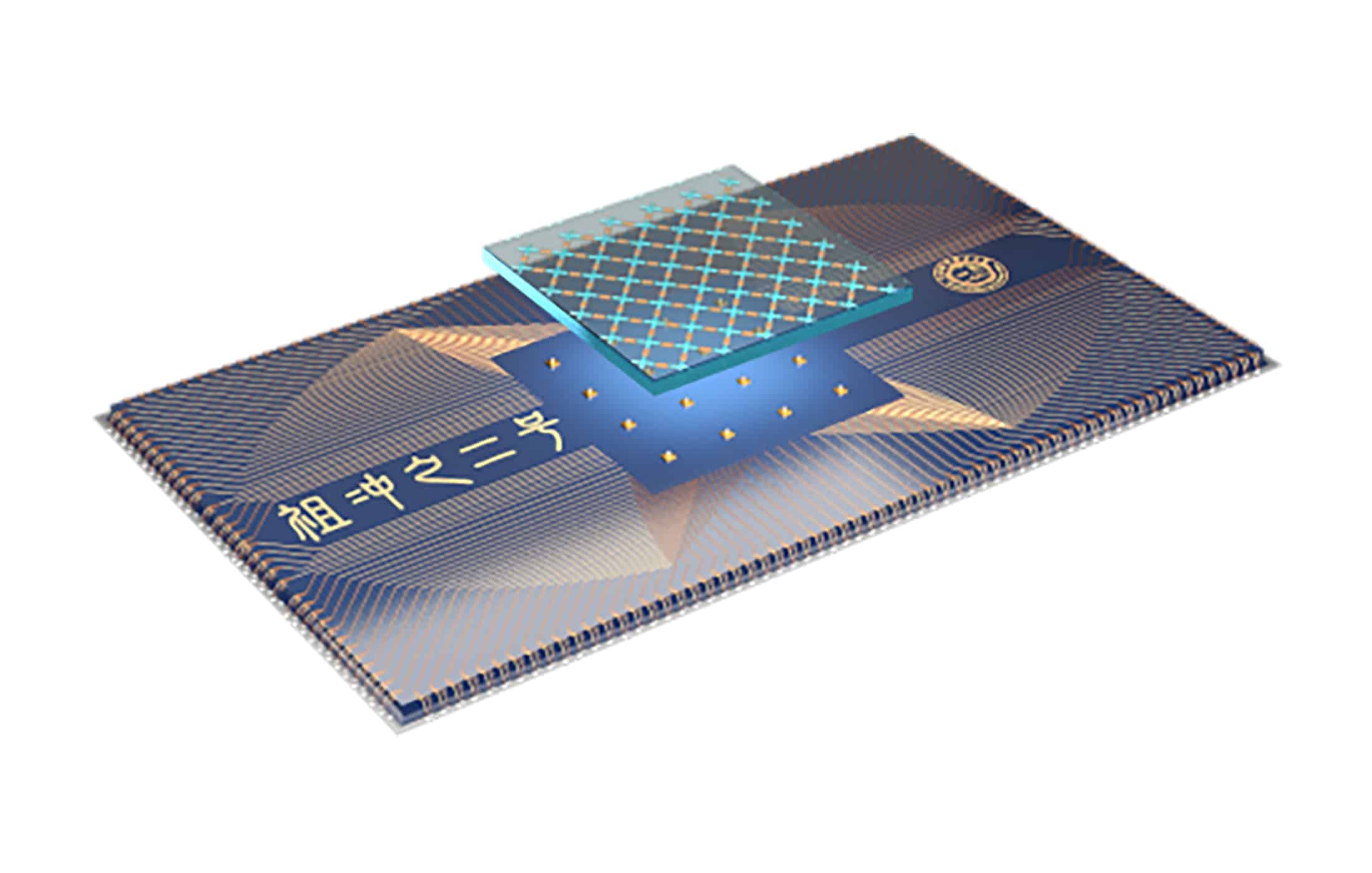

The Zuchongzhi processor series, developed by Pan Jianwei and Zhu Xiaobo’s team at USTC, is the single most important barometer of Chinese quantum computing capability. Named after the 5th-century Chinese mathematician who calculated π to seven decimal places, the series has progressed from a 62-qubit demonstration platform to a below-threshold error correction system in just four years — a pace that directly parallels Google’s trajectory from Sycamore to Willow.

Zuchongzhi 1.0 arrived in May 2021 with 62 functional transmon qubits in a two-dimensional square lattice. It was a foundation-layer device — programmable quantum walks, no quantum advantage claim. Think of it as China’s throat-clearing before the aria.

Zuchongzhi 2.0/2.1 followed within months, scaling to 66 qubits in an 11×6 rectangular layout with 110 tunable couplers on a flip-chip sapphire-on-sapphire architecture. The team performed random circuit sampling with 56 qubits at 20-cycle depth, completing in approximately 1.2 hours a computation that would take the TaihuLight supercomputer over eight years. This was two to three orders of magnitude harder than Google’s 2019 Sycamore demonstration. Both results were peer-reviewed in Physical Review Letters (arXiv:2106.14734) and Science Bulletin — not preprints, not press conferences. The team also ran the first surface code experiments on the platform, demonstrating distance-3 error detection.

Zuchongzhi 3.0 (arXiv:2412.11924, published as a Physical Review Letters cover article in March 2025) was where the international community sat up. With 105 qubits and 182 tunable couplers on a 15×7 lattice, the processor achieved single-qubit gate fidelities of 99.90% and two-qubit gate fidelities of 99.62%, with average coherence times of 72 microseconds. The quantum advantage claim was dramatic: 83-qubit, 32-cycle random circuit sampling generating one million samples in hundreds of seconds, versus an estimated 6.4 billion years on the Frontier exascale supercomputer — a 10¹⁵-fold speedup.
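The quoted speedup can be sanity-checked with simple unit conversion. This is a minimal sketch that assumes the sampling time lies somewhere between 100 and 1,000 seconds, because the article gives only "hundreds of seconds"; the function name `speedup` is illustrative.

```python
# Order-of-magnitude check on the Zuchongzhi 3.0 RCS speedup claim.
# Assumption: "hundreds of seconds" is bracketed as 100-1,000 s; the exact
# sampling time is not quoted in this article.
import math

SECONDS_PER_YEAR = 365.25 * 24 * 3600  # Julian year


def speedup(classical_years: float, quantum_seconds: float) -> float:
    """Ratio of estimated classical runtime to quantum sampling time."""
    return classical_years * SECONDS_PER_YEAR / quantum_seconds


frontier_years = 6.4e9  # Frontier estimate quoted above

for t in (100, 200, 1000):
    s = speedup(frontier_years, t)
    print(f"{t:>5} s -> speedup ~ 10^{math.log10(s):.1f}")
```

A sampling time of about 200 s reproduces the 10¹⁵ figure, and the full 100 to 1,000 s range spans roughly 2×10¹⁴ to 2×10¹⁵. This checks only internal consistency, not the classical runtime estimate itself.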

The numbers rivaled Google’s Willow, which had been announced just days earlier. Live Science and Phys.org ran headlines about China achieving “1 quadrillion times faster” performance. As I analyzed at the time, the headline obscured important nuances — random circuit sampling is a benchmark designed to be hard for classical computers, not a useful computation — but the engineering achievement was genuine.

Then came the result that actually matters.

Zuchongzhi 3.2 (December 2025, Physical Review Letters 135, 260601; arXiv:2505.01978 — Editors’ Suggestion and cover article) demonstrated below-threshold quantum error correction on a distance-7 surface code using 107 qubits. The error suppression factor — the metric that tells you whether adding more error-correcting qubits actually helps — came in at Λ = 1.40 ± 0.06. Anything above 1.0 means the system is operating below threshold: logical error rates decrease as code distance increases. This is the single most important prerequisite for fault-tolerant quantum computing.
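To make the meaning of Λ concrete: it is the factor by which the logical error rate falls each time the code distance grows by two. The sketch below estimates it as the geometric mean of successive ratios ε_d / ε_(d+2), a simplified stand-in for the fit of ε_d ∝ Λ^(−(d+1)/2) used in published analyses. The error rates are hypothetical round numbers, not data from Zuchongzhi 3.2 or Willow, and `estimate_lambda` is an illustrative name.

```python
# Minimal sketch: estimate the error suppression factor Lambda from logical
# error rates at successive odd code distances (3, 5, 7, ...).
# The rates below are HYPOTHETICAL, chosen only for illustration.
import math


def estimate_lambda(eps_by_distance: dict[int, float]) -> float:
    """Geometric mean of eps_d / eps_{d+2} over consecutive distances.

    Assumes the distances are spaced by 2. Lambda > 1 means each step of +2
    in distance divides the logical error rate by Lambda (below threshold).
    """
    distances = sorted(eps_by_distance)
    ratios = [eps_by_distance[a] / eps_by_distance[b]
              for a, b in zip(distances, distances[1:])]
    return math.prod(ratios) ** (1 / len(ratios))


# Hypothetical logical error rates per QEC cycle at d = 3, 5, 7
example = {3: 0.0196, 5: 0.014, 7: 0.010}
lam = estimate_lambda(example)
print(f"Lambda ~ {lam:.2f}", "(below threshold)" if lam > 1 else "(above threshold)")
```

If the error rate instead grew with distance, the same function would return a value below 1, which is what operating above threshold looks like.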

The breakthrough was enabled by a novel all-microwave leakage suppression architecture that reduced qubit leakage population by a factor of 72 without requiring the hardware-intensive DC pulse wiring that Google employs. Independent expert Joseph Emerson of the University of Waterloo validated the result’s significance. No Zuchongzhi 4.0 has been announced as of April 2026; the team has stated it is working toward distance-9 and distance-11 surface codes, which would demonstrate the exponential scaling behavior needed for practical fault tolerance.

Here is a useful summary of the lineage:

| Processor | Date | Qubits | Two-Qubit Gate Fidelity | Key Achievement |
|---|---|---|---|---|
| Zuchongzhi 1.0 | May 2021 | 62 | Not reported | Programmable quantum walks |
| Zuchongzhi 2.0/2.1 | Oct 2021 | 66 | ~97–98% | RCS quantum advantage; first surface code on chip |
| Zuchongzhi 3.0 | Mar 2025 | 105 | 99.62% | 10¹⁵× classical speedup in RCS |
| Zuchongzhi 3.2 | Dec 2025 | 107 | ~99.6%+ | Below-threshold QEC (Λ=1.40) |

The trajectory is unmistakable: from zero demonstrated quantum advantage to below-threshold error correction in four years, closely tracking — and in some architectural choices potentially exceeding — Google’s own progression from Sycamore (2019) to Willow (2024).

The Error Correction Race: What Actually Matters for CRQC

Random circuit sampling makes headlines. Below-threshold error correction makes CRQCs. These are fundamentally different milestones, and conflating them is one of the persistent errors in quantum reporting. As I have detailed in my CRQC Quantum Capability Framework, below-threshold operation is capability B.3 — and without it, nothing else matters.

Google’s Willow achieved it first in December 2024 (Nature 638, 920–926; arXiv:2408.13687) with a substantially higher error suppression factor of Λ = 2.14 ± 0.02 at distance-7, and demonstrated break-even — meaning the logical qubit’s lifetime exceeded that of the best physical qubit by 2.4×. Zuchongzhi 3.2 followed twelve months later with Λ = 1.40. That is measurably weaker suppression, and no explicit break-even claim was made.
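Why the difference between Λ = 1.40 and Λ = 2.14 matters becomes clearer when it is compounded over distance. This is an illustrative projection only, under assumptions neither paper makes: Λ stays constant as the code grows, both processors start from the same logical error rate at d = 7, and each logical qubit uses a rotated surface code with 2d² − 1 physical qubits. The function names are hypothetical.

```python
# Illustrative projection: smallest odd distance at which a constant Lambda
# cuts the logical error rate by a target factor, starting from d = 7.
# Assumptions: constant Lambda, identical starting error at d = 7, and a
# rotated surface code with d*d data qubits plus d*d - 1 measure qubits.
import math


def distance_for_suppression(lam: float, factor: float, d0: int = 7) -> int:
    """Smallest d = d0 + 2k with lam**k >= factor."""
    steps = math.ceil(math.log(factor) / math.log(lam))
    return d0 + 2 * steps


def rotated_surface_code_qubits(d: int) -> int:
    """Physical qubits for one rotated surface-code patch of distance d."""
    return 2 * d * d - 1


for name, lam in (("Zuchongzhi 3.2", 1.40), ("Willow", 2.14)):
    d = distance_for_suppression(lam, factor=1_000)
    print(f"{name}: Lambda={lam} -> d={d}, "
          f"~{rotated_surface_code_qubits(d)} physical qubits per logical qubit")
```

Under these assumptions, a further 1,000× suppression needs d = 49 (4,801 physical qubits per logical qubit) at Λ = 1.40 versus d = 27 (1,457) at Λ = 2.14. The per-qubit hardware cost of reaching a given distance is the other side of that ledger.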

But the architectural choice behind Zuchongzhi 3.2 deserves careful attention, because it may matter more than the headline numbers suggest. Google’s leakage suppression requires additional DC pulse hardware and dedicated wiring inside the dilution refrigerator for every qubit — complexity that compounds as systems scale to thousands, then millions, of qubits. USTC’s all-microwave approach is entirely software-controlled, requiring no additional physical infrastructure. The Quantum Computing Report assessed that this could prove “a more efficient route” to million-qubit fault-tolerant systems. The South China Morning Post reported that multiple analysts viewed the Chinese approach as potentially superior for scalability.

This matters because the path to CRQC is not just about demonstrating error correction — it is about scaling it. A technique that requires per-qubit hardware modifications in a cryogenic environment faces fundamentally different engineering constraints than one that achieves the same result through microwave pulse sequences. We will not know which approach wins until someone builds a system with tens of thousands of qubits. But dismissing the Chinese result because its Λ value is lower is like judging a rocket engine solely on thrust while ignoring fuel efficiency for a Mars mission.

The broader error correction landscape puts both achievements in context. Quantinuum’s Helios trapped-ion processor demonstrated 94 error-detected logical qubits and 48 error-corrected logical qubits with logical gate error rates approaching 10⁻⁴ — the best demonstrated anywhere. QuEra and Harvard achieved 96 logical qubits with below-threshold error rates on a neutral-atom platform. IBM demonstrated real-time qLDPC code decoding in under 480 nanoseconds, a year ahead of schedule.

China has not yet demonstrated logical qubit operations at meaningful scale. This is the most significant gap in the Chinese program — not qubit count, not gate fidelity, but the ability to perform useful logical operations on error-corrected qubits. It is one thing to show that errors decrease as codes grow larger. It is quite another to compose those logical qubits into circuits that perform computation. Quantinuum and Google are leading here, and China is watching.

For context on what CRQC actually requires: Google’s March 2026 paper (summarized on the Google Research blog) estimated that breaking ECDLP-256 would need approximately 1,200 logical qubits and 90 million Toffoli gates, requiring fewer than 500,000 physical qubits on a superconducting architecture. The best current systems have on the order of 100 high-quality physical qubits (107 on Zuchongzhi 3.2). That gap, roughly 650,000× in logical operations budget, is enormous for everyone. The honest assessment mapped against my CRQC Quantum Capability Framework is that China has now demonstrated capabilities B.1 through B.3 (basic quantum error correction, syndrome extraction, and below-threshold operation) but has not yet publicly demonstrated C.1 (high-fidelity logical Clifford gates) or C.2 (magic state production) at scale. Neither has anyone else at CRQC-relevant scale, but the Western leaders are further along the progression.
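The qubit-count side of that gap can be checked from the figures quoted above. This back-of-envelope sketch takes "fewer than 500,000" at its upper bound and assumes the demonstrated logical count is one, since a distance-7 surface-code memory encodes a single logical qubit. The 650,000× logical-operations figure depends on inputs not reproduced here, so it is not recomputed.

```python
# Back-of-envelope qubit gap, using only figures quoted in this article.
# Assumptions: 500,000 is an upper bound taken at face value; a distance-7
# surface-code memory experiment encodes exactly one logical qubit.
crqc_physical = 500_000   # physical qubits quoted for ECDLP-256 (superconducting)
crqc_logical = 1_200      # logical qubits quoted in the same estimate
current_physical = 107    # Zuchongzhi 3.2
current_logical = 1       # one d=7 surface-code logical qubit

print(f"Physical-qubit gap: ~{crqc_physical / current_physical:,.0f}x")
print(f"Logical-qubit gap: {crqc_logical // current_logical:,}x")
print(f"Average physical qubits per logical qubit: ~{crqc_physical / crqc_logical:.0f}")
```

The roughly 417 physical qubits per logical qubit is a simple average across the whole machine, not a per-patch code size.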

Jiuzhang: 3,050 Photons and the Limits of Boson Sampling

China’s photonic quantum computing program, led by Lu Chaoyang at USTC, has produced the world’s largest photonic quantum demonstrations, and also the most frequently misunderstood. All four versions of the Jiuzhang series perform Gaussian boson sampling (GBS), a task believed, on the basis of standard complexity-theoretic conjectures, to be intractable for classical computers, but one with limited practical application. This is not a path to breaking cryptography. It is a scientific proof of principle, and an impressive one, but it should not be confused with universal quantum computation.
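The classical difficulty of GBS can be made concrete. In an idealized pure-state setup, each output probability is proportional to the squared hafnian of a submatrix set by the squeezing and the interferometer, and the hafnian sums over every perfect matching of that matrix. The brute-force recursion below is a minimal sketch of that definition, not how GBS experiments are actually simulated (tensor-network and approximate methods exist, and the best known exact algorithms are faster but still exponential); the function names are illustrative.

```python
# Minimal sketch: brute-force hafnian by recursive perfect matching.
# Shows why exact evaluation grows explosively with matrix size.
from math import prod


def hafnian(a: list[list[float]]) -> float:
    """Sum over all perfect matchings of a symmetric 2n x 2n matrix."""
    n = len(a)
    if n == 0:
        return 1.0
    if n % 2:
        return 0.0  # odd size: no perfect matching exists
    total = 0.0
    for j in range(1, n):
        # Pair vertex 0 with vertex j, then recurse on the remaining vertices.
        keep = [k for k in range(1, n) if k != j]
        sub = [[a[r][c] for c in keep] for r in keep]
        total += a[0][j] * hafnian(sub)
    return total


def matchings_count(n_pairs: int) -> int:
    """(2n - 1)!!, the number of perfect matchings on 2n vertices."""
    return prod(range(1, 2 * n_pairs, 2))


ones6 = [[1.0] * 6 for _ in range(6)]
print(hafnian(ones6))       # 15.0: every matching contributes 1
print(matchings_count(10))  # matchings a brute-force pass visits for a 20x20 submatrix
```

For a 20×20 submatrix the brute-force count is already 654,729,075 matchings, and it keeps growing faster than exponentially with photon number.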

The lineage is worth tracing briefly. Jiuzhang 1.0 (December 2020, Science 370, 1460; arXiv:2012.01625) detected 76 photons through a 100-mode interferometer — making China the second country, after the US, to demonstrate quantum computational advantage. Jiuzhang 3.0 (October 2023, PRL 131, 150601; arXiv:2304.12240) reached 255 detected photons using pseudo-photon-number-resolving detection.

Jiuzhang 4.0 (August 2025, preprint, not yet peer-reviewed or posted to arXiv as of April 2026) represents a qualitative leap: 3,050 detected photons from 1,024 squeezed states through an 8,176-mode spatial-temporal hybrid photonic circuit, claiming a 10⁵⁴× advantage over El Capitan. As I detailed in my analysis, the most consequential aspect was not the photon count but the fact that Jiuzhang 4.0 is the first programmable system in the series, explicitly designed to counter the 2024 Nature Physics paper by Oh et al. showing that classical matrix product state methods could simulate earlier GBS experiments. The team signaled future work toward fault-tolerant photonic quantum computing using three-dimensional cluster states.

The question for photonic quantum computing in China is whether the massive GBS demonstrations will evolve toward universal computation — or remain scientific showcases. Compared to Western photonic efforts, China leads in demonstration scale but trails in the path to universality. PsiQuantum, valued at $7 billion, is building directly toward a million-qubit fault-tolerant photonic computer using semiconductor fabrication. Xanadu demonstrated the first programmable photonic quantum advantage in 2022 and is pursuing GKP states for error correction.

China’s commercial photonic ecosystem is emerging. QBoson raised approximately $145 million (CNY 1 billion) in April 2026 and is building China’s first photonic quantum computing chip factory. TuringQ has raised over $128 million. But here I must flag an important finding: when Chinese researchers at Peking University demonstrated a photonic chip on an integrated microcomb platform generating multipartite entanglement (published in Nature, February 2025), Chinese state media celebrated it as a “quantum computing chip breakthrough.” As I analyzed, the chip is actually a remarkable continuous-variable quantum optics platform — but it is not a quantum computing chip in any operational sense. This kind of “quantum-washing” — inflating claims beyond what the science supports — is a recurring problem in the Chinese quantum ecosystem that makes honest assessment harder.

Beyond Superconducting and Photonic: The Broadening Portfolio

A narrow focus on Zuchongzhi and Jiuzhang would miss the breadth of China’s quantum hardware effort. Multiple modalities are advancing in parallel, some with results that genuinely lead the world.

Trapped Ions: Tsinghua’s 512-Ion Record

Professor Duan Luming’s group at Tsinghua University achieved a world record in May 2024, published in Nature 630, 613–618: 512 ions stably trapped in a two-dimensional crystal using a cryogenic monolithic ion trap, with 300 ions featuring single-qubit-resolved readout. This is the largest site-resolved trapped-ion quantum system demonstrated anywhere.

Four commercial trapped-ion startups have emerged. Hyqubit (a Tsinghua spinout) offers a 100+ qubit commercial system with a roadmap to 300–600 qubits. Huayi Quantum raised over CNY 100 million in an angel round with Sequoia China backing. Unitary Quantum completed China’s first 4K QCCD chip-type ion trap system in August 2025, pursuing a Quantinuum-style architecture. QuDoor is the oldest Chinese trapped-ion company, with defense-sector focus.

The critical gap here is transparency. Gate fidelity data for Chinese trapped-ion commercial systems remains largely unpublished. Quantinuum’s Helios reports 99.921% two-qubit fidelity. IonQ claims 99.99%. Chinese trapped-ion companies report qubit counts but not the fidelity metrics that actually determine computational power. Until those numbers appear in peer-reviewed journals, the trapped-ion sector in China remains promising but unverifiable.
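A crude model shows why fidelity, rather than qubit count, determines usable circuit depth. This sketch assumes independent errors, so a circuit of N two-qubit gates runs error-free with probability F^N; real devices also suffer single-qubit, readout, idle, and crosstalk errors, so the numbers are optimistic. The fidelities are those quoted in this article, and the function names are illustrative.

```python
# Crude illustration: how two-qubit gate fidelity compounds over a circuit.
# Assumption: independent errors, so P(no error in N gates) = F**N.
import math


def error_free_probability(fidelity: float, n_gates: int) -> float:
    return fidelity ** n_gates


def gates_until(fidelity: float, target: float = 0.5) -> int:
    """Largest N with F**N >= target."""
    return math.floor(math.log(target) / math.log(fidelity))


for label, f in (("Zuchongzhi 3.0 (99.62%)", 0.9962),
                 ("Helios (99.921%)", 0.99921),
                 ("IonQ claim (99.99%)", 0.9999)):
    print(f"{label}: P(100 gates) = {error_free_probability(f, 100):.3f}, "
          f"~{gates_until(f)} gates before success drops below 50%")
```

Under this model, a tenth of a percentage point of two-qubit fidelity changes the reachable depth several-fold, which is why unpublished fidelity figures leave a system's real capability unknown.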

Neutral Atoms: Hanyuan-1 and the 2,024-Atom Array

Neutral atoms may be the modality where China’s commercial trajectory is most surprising. Hanyuan-1, developed by the CAS Institute of Precision Measurement in Wuhan, is a 100-qubit neutral-atom system with 99.9% single-qubit and 98% two-qubit gate fidelity. It fits in three standard equipment racks at room temperature — no dilution refrigerator required — and began commercial deployment in October 2025, generating approximately $5.6 million in sales including an export order to Pakistan.

Meanwhile, Pan Jianwei’s USTC group demonstrated a 2,024-atom defect-free rubidium array using AI-driven optical tweezers in August 2025, roughly ten times the previous record at the time. Startups Buchou Quantum (claiming a 1,000-qubit prototype) and MatriQ are entering the space. This is relevant because neutral atoms are one of the modalities where CRQC-relevant scaling is being most actively pursued globally: QuEra’s roadmap targets 10,000+ qubit systems, and Harvard’s demonstration of 96 logical qubits used neutral atoms.

Silicon Spin Qubits: A Genuine World First

In perhaps the least-noticed but most significant result, the Shenzhen International Quantum Academy (SZIQA) demonstrated the first universal logical operations on a silicon quantum processor — published in Nature Nanotechnology on March 23, 2026. The team implemented a [[4,2,2]] quantum error-detecting code encoding four physical phosphorus donor nuclear spins into two logical qubits, with a full universal logical gate set. Physical gate fidelities reached 99.57% single-qubit and 97.76% two-qubit. They also ran a variational quantum eigensolver to compute the ground-state energy of H₂O using logical qubits — the first such demonstration in silicon globally.
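The [[4,2,2]] code is small enough to check by hand, and its limits follow directly from its structure. The sketch uses the binary symplectic picture of Pauli operators. The stabilizers XXXX and ZZZZ are standard for this code, but the logical-operator labeling is one common convention and an assumption, not necessarily the one used by the SZIQA team. All function names are illustrative.

```python
# Minimal sketch of the [[4,2,2]] error-detecting code (4 physical qubits,
# 2 logical qubits, distance 2) using binary symplectic Pauli arithmetic.
from itertools import product


def pauli(s: str) -> tuple[tuple[int, ...], tuple[int, ...]]:
    """Map a Pauli string like 'XIZY' to (x bits, z bits)."""
    x = tuple(1 if c in "XY" else 0 for c in s)
    z = tuple(1 if c in "ZY" else 0 for c in s)
    return x, z


def anticommute(p: str, q: str) -> bool:
    """Two Paulis anticommute iff their symplectic product is odd."""
    (x1, z1), (x2, z2) = pauli(p), pauli(q)
    return sum(a * d + b * c for a, b, c, d in zip(x1, z1, x2, z2)) % 2 == 1


STABILIZERS = ["XXXX", "ZZZZ"]
# Assumed logical-operator convention (one of several equivalent choices).
LOGICALS = {"X1": "XXII", "Z1": "ZIZI", "X2": "XIXI", "Z2": "ZZII"}


def syndrome(error: str) -> tuple[int, ...]:
    """One bit per stabilizer: 1 if the error anticommutes with it."""
    return tuple(int(anticommute(error, s)) for s in STABILIZERS)


def single_qubit_errors(n: int = 4):
    """All X, Y, Z errors acting on exactly one of n qubits."""
    for i, p in product(range(n), "XYZ"):
        yield "I" * i + p + "I" * (n - i - 1)


print(all(syndrome(e) != (0, 0) for e in single_qubit_errors()))  # True: every single error is detected
print(syndrome("XIII") == syndrome("IXII"))  # True: same syndrome, so it detects but cannot locate
```

The second check is the reason the code is error-detecting rather than error-correcting: distinct single-qubit errors share a syndrome, so the decoder cannot tell which qubit to fix.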

This matters because silicon spin qubits are the modality that could potentially leverage existing semiconductor manufacturing infrastructure. As I have covered in my silicon quantum computing series, the manufacturing claims around silicon qubits require heavy qualification — isotopic purification, ultra-clean gate oxides, and precision lithography are required, and this is not trivial CMOS adaptation. But a Chinese team achieving the first universal logical operations in silicon is a marker worth tracking.

Tianyan-504 and Origin Quantum: The Commercial Ecosystem

The Tianyan-504 system, built around the Xiaohong 504-qubit superconducting chip, was unveiled in December 2024 by China Telecom Quantum Group, CAS, and QuantumCTek. At 504 qubits, it is China’s largest-qubit-count processor. But qubit count without fidelity data is marketing, not science. The system’s primary significance is as measurement-and-control infrastructure — validating that Chinese-built electronics can manage 500+ qubit systems — rather than as a computational breakthrough. Claims of IBM-competitive performance remain unverified by independent benchmarking.

Origin Quantum is China’s most important commercial quantum computing company and deserves careful assessment. Founded in 2017 as a USTC/CAS spinout by Guo Guoping and Guo Guangcan, it is the sole domestic firm delivering full-stack superconducting quantum computers. Its 72-qubit Wukong processor, launched in January 2024, attracted over 30 million cloud platform visits from 145+ countries and executed 530,000+ quantum computing tasks — though these figures are company-reported via state media.

The honest technical assessment is mixed. Wukong’s published coherence metrics (T1: 15.22 μs, T2: 2.23 μs) lag significantly behind Western leaders — Google’s Willow achieves approximately 68 μs T1. Origin Quantum’s 2021 roadmap targeted 1,024 qubits by 2025; it reached 72. Roadmaps and reality diverge everywhere in quantum computing, but the gap between aspiration and delivery in this case is worth noting.

What is genuinely significant is Origin Quantum’s operating system. Origin Pilot OS (version 4.0), released for free public download in February 2026, is the world’s first freely downloadable quantum computer operating system. It handles hardware management, calibration, scheduling, and multi-user task orchestration across superconducting, trapped-ion, and neutral-atom platforms. This is a standards-setting play: any university building a quantum computer can download Origin Pilot for free from China rather than building an OS from scratch or licensing Western alternatives. The strategic parallel to DeepSeek’s open-source AI approach is intentional. As I analyzed when it launched, this is infrastructure colonization — building the software layer that others depend on.

In October 2025, CAS opened a Zuchongzhi 3.0-series superconducting quantum computer for commercial use via China Telecom’s Tianyan quantum cloud platform, which now hosts an 880-qubit cluster across multiple processors with over 37 million visits from 60+ countries. The broader Chinese quantum ecosystem grew from 93 companies in 2023 to 153 in 2024, an increase of roughly 65%, with the sector reaching RMB 11.56 billion ($1.61 billion) in 2025.

But the ecosystem’s structure reveals a vulnerability. Alibaba shut its quantum lab in November 2023. Baidu closed its lab in January 2024, donating equipment to universities. The private tech giants have exited, leaving the field to state-aligned entities. This concentrates resources but narrows the innovation base compared to the US ecosystem of approximately 300 quantum startups and multiple well-funded corporate research labs. In my assessment, this is the single biggest structural disadvantage in China’s quantum computing program — not hardware capability, but the thin commercial layer between state research and industrial deployment.

The Military Dimension Is Structural

This is the section that makes Western analysts most uncomfortable, because it requires acknowledging uncertainty rather than offering reassuring assessments.

The dual-use nature of China’s quantum computing program is not an afterthought. It is architectural. Pan Jianwei told local officials that technology developed at the Hefei National Laboratory “would be of immediate use to the armed forces.” The PLA has disclosed over 10 experimental quantum cyber warfare tools under development, led by the National University of Defense Technology’s (NUDT) supercomputing laboratory, combining quantum with AI and cloud computing. The USCC’s 2025 report states directly: the Commission assumes China is aggressively pursuing CRQC and deliberately obscuring its most advanced programs.

Seven Chinese quantum entities were added to the US Entity List in 2024–2025 for advancing quantum technology capabilities with military applications. Export controls on dilution refrigerators, cryogenic circuits, and quantum processors have created short-term disruption but triggered a paradoxical acceleration of domestic supply chains that I will examine in my forthcoming article on China’s quantum supply chain and self-sufficiency. The punchline: QuantumCTek now manufactures dilution refrigerators domestically, Origin Quantum has developed its own (SL1000/SL400) and is exporting them to Belt and Road countries, and photonic approaches are gaining investment partly because they sidestep cryogenic supply chain constraints entirely.

RUSI analysis concludes the controls “may even be counter-productive” in the long run, giving Chinese manufacturers a political mandate and a captive market. This pattern — sanctions accelerating domestic capability rather than constraining it — is exactly what happened with Huawei in 5G and with SMIC in semiconductors. As I detailed in my Leapfrog Doctrine article, the West keeps deploying the same containment strategy and keeps being surprised when it produces the same result.

Mapping China Against the CRQC Capability Framework

So where does China actually stand on the path to a CRQC — a quantum computer capable of running Shor’s algorithm at cryptographically relevant scale?

My CRQC Quantum Capability Framework tracks ten capability dimensions. Here is an honest assessment of where China’s published results sit:

B.1 Quantum Error Correction: Demonstrated. Surface code on Zuchongzhi 2.1, distance-7 on Zuchongzhi 3.2.

B.2 Syndrome Extraction: Demonstrated. Real-time syndrome measurement on Zuchongzhi 3.2 with all-microwave architecture.

B.3 Below-Threshold Operation: Demonstrated. Λ = 1.40 at distance-7 (December 2025). Second globally after Google’s Willow (Λ = 2.14).

B.4 Qubit Connectivity & Routing: Partial. 2D lattice with tunable couplers demonstrated. No published work on lattice surgery routing at scale.

C.1 High-Fidelity Logical Clifford Gates: Not demonstrated at scale. SZIQA’s silicon demonstration showed logical gates but on a 4-physical-qubit system. No surface-code-based logical gate demonstrations comparable to Quantinuum’s work.

C.2 Magic State Production: Not demonstrated. No published Chinese work on magic state distillation or injection at meaningful scale.

D.1 Algorithm Integration: Not demonstrated. No fault-tolerant algorithm compilation demonstrated.

D.2 Decoder Performance: Unknown. No published decoder latency benchmarks for Chinese systems.

D.3 Continuous Operation: Not demonstrated. No long-duration quantum computation published.

E.1 Engineering Scale & Manufacturability: Partial. 504-qubit chip fabricated. Domestic dilution refrigerator production. But no published yield data or manufacturing process benchmarks.
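Encoding the ten entries as a small data structure makes the tier pattern easy to check and to update as new results appear. This is a sketch rather than part of the framework itself: the status labels are copied from the list above, with C.1’s "not demonstrated at scale" folded into "Not demonstrated", and `ASSESSMENT` and `tally_by_tier` are hypothetical names.

```python
# The capability assessment above, tallied by tier (the letter before the dot).
from collections import Counter, defaultdict

ASSESSMENT = {
    "B.1": ("Quantum Error Correction", "Demonstrated"),
    "B.2": ("Syndrome Extraction", "Demonstrated"),
    "B.3": ("Below-Threshold Operation", "Demonstrated"),
    "B.4": ("Qubit Connectivity & Routing", "Partial"),
    "C.1": ("High-Fidelity Logical Clifford Gates", "Not demonstrated"),
    "C.2": ("Magic State Production", "Not demonstrated"),
    "D.1": ("Algorithm Integration", "Not demonstrated"),
    "D.2": ("Decoder Performance", "Unknown"),
    "D.3": ("Continuous Operation", "Not demonstrated"),
    "E.1": ("Engineering Scale & Manufacturability", "Partial"),
}


def tally_by_tier(assessment: dict[str, tuple[str, str]]) -> dict[str, dict[str, int]]:
    """Count statuses per tier, sorted by tier letter."""
    tiers: dict[str, Counter] = defaultdict(Counter)
    for cap_id, (_name, status) in assessment.items():
        tiers[cap_id.split(".")[0]][status] += 1
    return {tier: dict(counts) for tier, counts in sorted(tiers.items())}


for tier, counts in tally_by_tier(ASSESSMENT).items():
    print(tier, counts)
```

Updating a single status string when a new paper appears is enough to refresh the per-tier view.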

The pattern is clear: China has demonstrated the foundational capabilities (B-tier) but has not yet publicly shown the computational capabilities (C-tier and D-tier) that would constitute actual progress toward running Shor’s algorithm. This is exactly where I would expect a program to be if it were genuinely 12–24 months behind the US leaders. It is also where I would expect it to be if it were keeping its most advanced results classified.

I cannot resolve that ambiguity. Nobody outside China’s national security establishment can. What I can say is that the B-tier capabilities are real, peer-reviewed, and impressive — and the gap between China’s B-tier results and the Western leaders’ C-tier results is narrower than most analysts appreciated even a year ago.

The 15th Five-Year Plan and What Comes Next

As I detailed in my analysis of the 15th Five-Year Plan, the plan approved in March 2026 lists quantum technology first among seven “future industries” — elevated from second place behind AI in the 14th FYP. The National Venture Guidance Fund has allocated RMB 121.8 billion ($17.5 billion) across three regional quantum-focused funds. Near-term targets include a quantum computing measurement-and-control system supporting at least 1,000 qubits with feedback latency under one microsecond by 2026.

The strategic shift is from research grants to industrial policy: government procurement preferences, manufacturing subsidies, and mandated application deployments. This is how China scaled its semiconductor, solar, EV, and 5G industries. As I argued in my Leapfrog Doctrine analysis, the question is not whether China can do this — the pattern is well-established — but whether quantum computing’s fundamental scientific uncertainties make the industrial scaling playbook less applicable than in previous technology domains.

There is a legitimate argument that quantum is different. The engineering challenges are genuinely unprecedented. Nobody has solved the scaling problem — not Google, not IBM, not Quantinuum, not China. The difference between 107 qubits and 500,000 qubits is not a manufacturing problem that money can solve. It requires scientific breakthroughs that cannot be mandated by Five-Year Plans.

But that argument, while technically correct, misses the structural point. China does not need to be first to CRQC to change the strategic calculus. It needs to be close enough that the West cannot assume a comfortable lead. And on the published evidence — setting aside whatever classified programs may exist — China is already close enough to make that assumption dangerous.

The quantum computing race between the US and China is real, consequential, and — based on the published evidence — closer than the West wants to believe. What happens in the classified programs we cannot see may make it closer still.

Quantum Upside & Quantum Risk - Handled

My company - Applied Quantum - helps governments, enterprises, and investors prepare for both the upside and the risk of quantum technologies. We deliver concise board and investor briefings; demystify quantum computing, sensing, and communications; craft national and corporate strategies to capture advantage; and turn plans into delivery. We help you mitigate the quantum risk by executing crypto‑inventory, crypto‑agility implementation, PQC migration, and broader defenses against the quantum threat. We run vendor due diligence, proof‑of‑value pilots, standards and policy alignment, workforce training, and procurement support, then oversee implementation across your organization. Contact me if you want help.