QuEra Achieves 2:1 Physical-to-Logical Qubit Ratio With Ultra-High-Rate qLDPC Codes

20 Apr 2026 — A collaboration between QuEra Computing, Harvard University, and MIT has posted a preprint demonstrating quantum Low-Density Parity-Check (qLDPC) codes that achieve an encoding rate exceeding 1/2 – meaning each logical qubit requires fewer than two physical qubits to encode. The paper, “Towards Ultra-High-Rate Quantum Error Correction with Reconfigurable Atom Arrays” (Zhao, Duckering, Gu, Maskara, Zhou), presents circuit-level noise simulations achieving a per-logical-qubit, per-round error rate of approximately 1.3×10⁻¹³ at a physical error rate of 0.1% – performance in the “Teraquop” regime, corresponding to one error per trillion logical operations.

Note: This is a preprint. The results are based on simulations, not experimental demonstrations on quantum hardware.

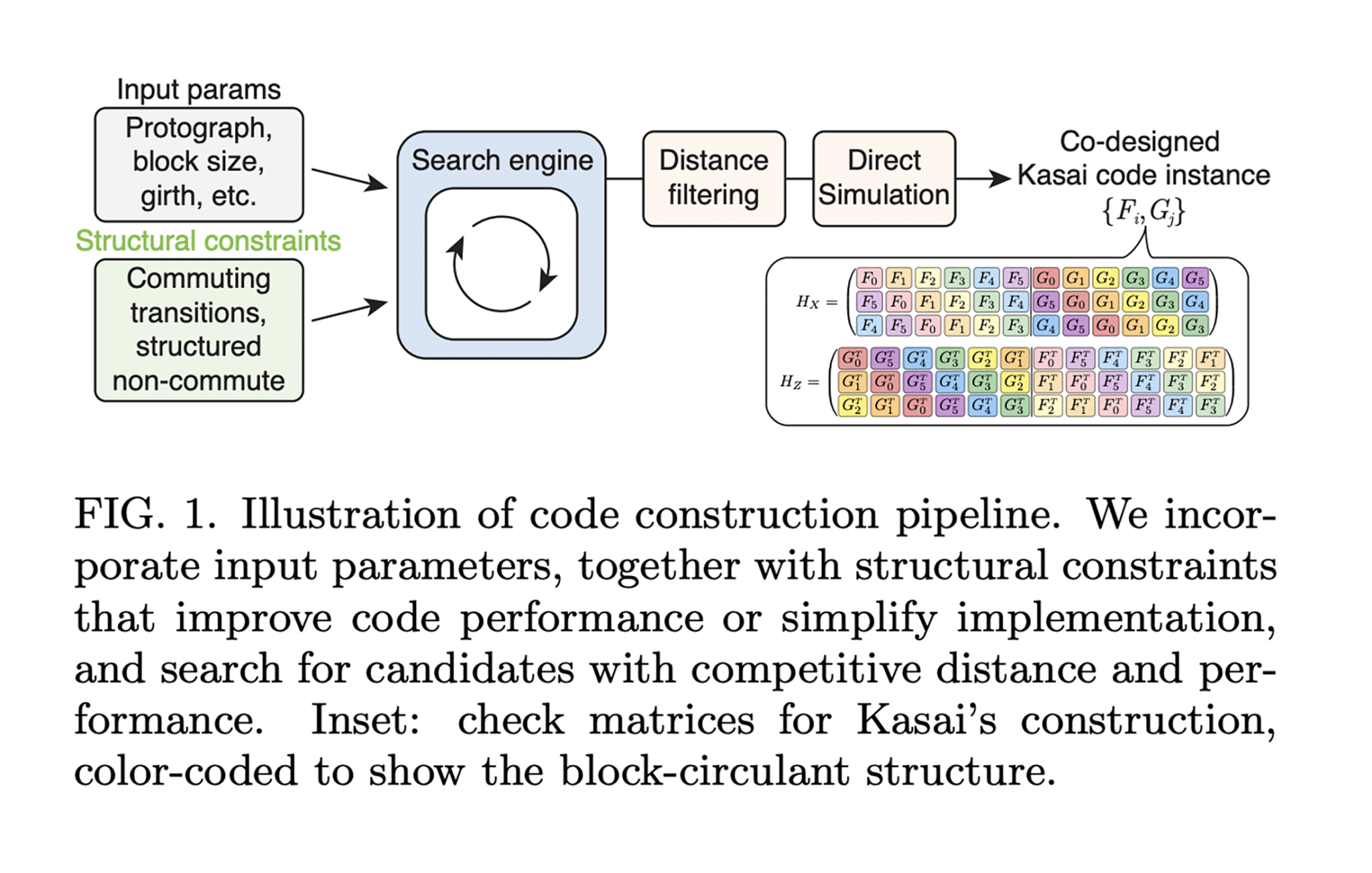

The work builds on a recent ultra-high-rate code construction by Kenta Kasai (arXiv:2601.08824), identifying new structural conditions on affine permutation matrices that make these codes compatible with the row-column movement constraints of reconfigurable neutral-atom arrays using Acousto-Optic Deflectors (AODs). The team verified two specific code instances:

- [[1152, 580, ≤12]]: 580 logical qubits encoded in 1,152 physical qubits (rate: 0.503), correcting up to 5 errors

- [[2304, 1156, ≤14]]: 1,156 logical qubits encoded in 2,304 physical qubits (rate: 0.502), correcting up to 6 errors
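In the [[n, k, d]] notation, n is the number of physical qubits, k the number of logical qubits, and d the code distance. As a minimal sketch (the function names are illustrative and not taken from the paper), the rates and correctable-error counts quoted above follow from k/n and ⌊(d−1)/2⌋, treating the reported upper bounds on distance as if they were the actual distances:

```python
def encoding_rate(n: int, k: int) -> float:
    """Fraction of physical qubits that carry logical information."""
    return k / n


def correctable_errors(d: int) -> int:
    """Number of arbitrary errors a distance-d code is guaranteed to correct."""
    return (d - 1) // 2


# (n, k, d) for the two instances; d is the reported upper bound on distance.
codes = [(1152, 580, 12), (2304, 1156, 14)]

for n, k, d in codes:
    print(
        f"[[{n}, {k}, <={d}]]: rate = {encoding_rate(n, k):.3f}, "
        f"physical per logical = {n / k:.3f}, "
        f"corrects up to {correctable_errors(d)} errors"
    )
```

Both instances come out just under two physical qubits per logical qubit, which is where the 2:1 figure in the headline comes from.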

The syndrome extraction circuit operates in constant time by exploiting the parallel movement capabilities of neutral-atom hardware, and a hierarchical decoder provides the accuracy and throughput needed to maintain these error rates.

My Analysis: The Most Important Number in Quantum Error Correction Just Changed

To appreciate why an encoding rate above 1/2 matters, consider the current landscape.

The surface code – the workhorse of nearly every major quantum error correction demonstration to date, including Google’s Willow chip – encodes one logical qubit per code block and requires roughly 2d²-1 physical qubits for a distance-d code. For the distances needed for cryptanalytically relevant computation (d=13 to d=27, depending on the architecture), that translates to hundreds or thousands of physical qubits per logical qubit. Even the most optimized surface code architectures, like those in Gidney’s 2025 RSA-2048 resource estimate, require close to a million physical qubits. The encoding rate of the surface code is effectively zero in the asymptotic limit – the fraction of its physical qubits that carries logical information vanishes as the distance grows.
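To make the surface code numbers concrete, here is a small sketch that evaluates the 2d²-1 footprint at the two ends of the distance range mentioned above. The function name is illustrative, and the formula is the rough per-logical-qubit count quoted in the text, not an exact figure for any particular architecture:

```python
def surface_code_physical_qubits(d: int) -> int:
    """Approximate physical qubits for one distance-d surface code patch (2d^2 - 1)."""
    return 2 * d * d - 1


for d in (13, 27):
    n = surface_code_physical_qubits(d)
    # One logical qubit per patch, so the encoding rate is simply 1/n.
    print(f"d={d}: {n} physical qubits per logical qubit, rate = {1 / n:.5f}")
```

At d=13 the patch needs 337 physical qubits, and at d=27 it needs 1,457, so the rate falls well below 1% across this range.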

The Pinnacle Architecture, which I covered earlier this year, used generalized bicycle qLDPC codes to bring RSA-2048 factoring down to approximately 100,000 physical qubits. Those codes achieve encoding rates around 1/6 to 1/10 at the distances needed for practical computation – a major improvement over the surface code, but still far from the theoretical limits.

This new QuEra–Harvard–MIT result pushes the encoding rate past 1/2. More than half of the physical qubits carry logical information, so the qubits spent on error correction are outnumbered by the payload they protect. To put it bluntly: this is a code family where quantum error correction costs less than the computation it protects. That is a qualitative threshold, not just a quantitative improvement.
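As a rough illustration of what these rates mean for a quantum memory, the sketch below counts the physical qubits implied by the encoding rate alone. The 1,000-logical-qubit target is a hypothetical placeholder, and the calculation deliberately ignores any extra qubits needed for syndrome extraction, logical gates, or routing:

```python
import math
from fractions import Fraction


def physical_qubits_for(logical_qubits: int, rate: Fraction) -> int:
    """Physical qubits implied by the encoding rate alone (memory only)."""
    return math.ceil(logical_qubits / rate)


# Exact fractions avoid floating-point rounding surprises inside ceil().
rates = {
    "rate 1/10": Fraction(1, 10),
    "rate 1/6": Fraction(1, 6),
    "rate 580/1152": Fraction(580, 1152),
}

target = 1000  # hypothetical number of logical qubits to store
for label, rate in rates.items():
    print(f"{label}: {physical_qubits_for(target, rate)} physical qubits")
```

Under these assumptions the same 1,000 logical qubits need 10,000 physical qubits at rate 1/10, 6,000 at rate 1/6, and 1,987 at the rate of the [[1152, 580, ≤12]] instance.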

The Teraquop Number Demands Attention

The 1.3×10⁻¹³ per-logical-qubit, per-round error rate at 0.1% physical noise is extraordinary – if it holds under experimental conditions. The “Teraquop” regime (one error per 10¹² operations) is the performance level required for the deep quantum circuits used in molecular simulation and, critically, cryptanalysis. Shor’s algorithm applied to RSA-2048 requires sustained computation over billions of logical operations; a Teraquop-class error rate means the algorithm can complete before accumulated logical errors corrupt the result.
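One way to read the figure is as a failure budget. The sketch below assumes logical errors are independent across qubits and rounds, which is a simplification. The round count is a hypothetical placeholder rather than a value from the paper, and the function name is illustrative:

```python
import math


def failure_probability(p_round: float, logical_qubits: int, rounds: int) -> float:
    """P(at least one logical error) = 1 - (1 - p)^(qubits * rounds), assuming independence."""
    trials = logical_qubits * rounds
    # log1p/expm1 preserve precision when p_round is extremely small.
    return -math.expm1(trials * math.log1p(-p_round))


p = 1.3e-13               # per-logical-qubit, per-round error rate quoted above
logical_qubits = 1156     # size of the larger code instance
rounds = 10**6            # hypothetical number of error correction rounds

print(f"Failure probability: {failure_probability(p, logical_qubits, rounds):.3e}")
print(f"Expected logical-qubit-rounds per error: {1 / p:.2e}")
```

With these placeholder numbers, holding 1,156 logical qubits for a million rounds fails with probability on the order of 10⁻⁴, and the expected number of logical-qubit-rounds between errors is several trillion.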

For context, Google’s Willow chip demonstrated below-threshold surface code performance at physical error rates of approximately 0.3%, but at much smaller code distances and without achieving Teraquop-class logical error rates. The gap between Willow’s demonstration and what this paper simulates is enormous – but it is a gap in code distance and system size, not in fundamental physics. The question is engineering, not principle.

What This Is, and Is Not

I want to be precise about the claim-evidence gap here, because it matters enormously.

This is a simulation study. The authors ran circuit-level noise models at p=0.1%, not experiments on actual neutral-atom hardware. The codes have not been physically implemented. No qubits were harmed, or helped, in the making of this paper.

That said, the simulation is not hand-waving. Circuit-level noise models include realistic error sources such as gate errors, measurement errors, idle errors, and atom loss, and the codes are specifically co-designed for hardware that already exists. QuEra and Harvard have demonstrated reconfigurable atom arrays with hundreds of qubits, parallel AOD-based rearrangement, and multi-round error correction on surface codes with below-threshold performance (Nature, January 2026). The hardware platform these codes are designed for is not hypothetical; it is running experiments today, just not at the scale needed for these specific codes.

The critical limitation the authors acknowledge is that this result establishes a quantum memory baseline, storing and protecting logical qubits, but does not yet demonstrate the full set of fault-tolerant logical gates needed for universal quantum computation. Performing gates on high-rate qLDPC codes remains an active and difficult research problem. The Pinnacle Architecture addressed this through generalized lattice surgery on lower-rate codes; how to perform computation on codes with rates exceeding 1/2 is a separate challenge that the authors leave to future work. This is an honest and appropriate scope limitation, but readers should not interpret a 2:1 memory ratio as meaning a fault-tolerant quantum computer requires only twice as many physical as logical qubits – the computation overhead will be additional.

Why Neutral Atoms Keep Winning the qLDPC Race

It is not a coincidence that this result comes from the neutral-atom platform. The unique capability of reconfigurable atom arrays to dynamically rearrange qubits in two dimensions, using optical tweezers and AODs, is precisely what qLDPC codes need. These codes require non-local connectivity (each qubit participates in checks involving distant qubits) that is impossible or prohibitively expensive on fixed-geometry platforms like superconducting circuits. Neutral atoms can physically move qubits to where they need to interact, then move them back.

The same Harvard–MIT group behind this paper published the foundational proposal for qLDPC implementation on atom arrays in Nature Physics in 2024 (Xu et al., “Constant-overhead fault-tolerant quantum computation with reconfigurable atom arrays”), showing that qLDPC architectures outperform the surface code with as few as several hundred physical qubits. The new preprint extends this line of work to the higher-rate codes of the Kasai construction and shows that they remain compatible with the same hardware constraints.

This creates a strategic dynamic worth watching. If qLDPC codes prove to be the path to low-overhead fault tolerance, and the evidence is accumulating that they are, then hardware platforms with native long-range connectivity have a structural advantage over those limited to nearest-neighbor interactions. Superconducting platforms, which dominate the current landscape, would need to find alternative ways to implement qLDPC syndrome extraction – through chip interconnects, multi-layer architectures, or entirely different code families optimized for their geometry. The Pinnacle Architecture’s requirement for degree-ten, non-planar connectivity reflects exactly this tension.

This result matters. It demonstrates that the overhead of quantum error correction may not be the insurmountable scaling barrier it once appeared to be. Two physical qubits per logical qubit for memory, at Teraquop-class error rates, is a whole different regime.

The race to add fault-tolerant logical gates to these ultra-high-rate codes is now one of the most consequential open problems in quantum computing. If that problem is solved, and the QuEra–Harvard–MIT collaboration is among the best-positioned teams in the world to attack it, the path to a CRQC becomes considerably shorter than most current estimates assume.
