Why Scaling Logical Qubits Gets Exponentially Harder — And Which Walls Hit First

This article is a companion to my Quantum Utility Ladder Deep Dive, which maps what fault-tolerant quantum computers will actually do at each logical qubit tier. Here, I examine the question that ladder deliberately sidesteps: why climbing from one rung to the next gets disproportionately harder, and which engineering dimensions become the binding constraint at each scale.

The Roadmap Illusion

Vendor roadmaps are seductive. IBM projects 200 logical qubits by 2029, 2,000 by 2033. IonQ claims 40,000–80,000 by 2030. QuEra targets 100 logical qubits by 2029 with a path to 10,000. Plot these on a chart and the line goes up and to the right, as lines in pitch decks always do.

The implicit message is that scaling logical qubits is a smooth, continuous process. Build a machine with 100 logical qubits, learn some lessons, then build one with 1,000. A thousand? Do it again for 10,000. The progression looks like building bigger factories or faster chips — hard, but fundamentally the same kind of hard, repeated at larger scale.

That is wrong. And the way it is wrong has direct consequences for how organizations should think about timelines for both quantum utility and quantum risk.

The bottom line: Scaling from 100 to 1,000 logical qubits is an engineering challenge where the path is visible and the problems are well-defined. Scaling from 1,000 to 100,000 is closer to a collection of open research problems that happen to also require engineering at unprecedented scale. Several dimensions of my CRQC Quantum Capability Framework hit qualitative walls, not just quantitative ones, and which wall hits hardest depends entirely on the hardware modality.

What 100 Logical Qubits Actually Requires

To understand why scaling gets harder, it helps to start with what it takes to build a machine at the bottom of the ladder.

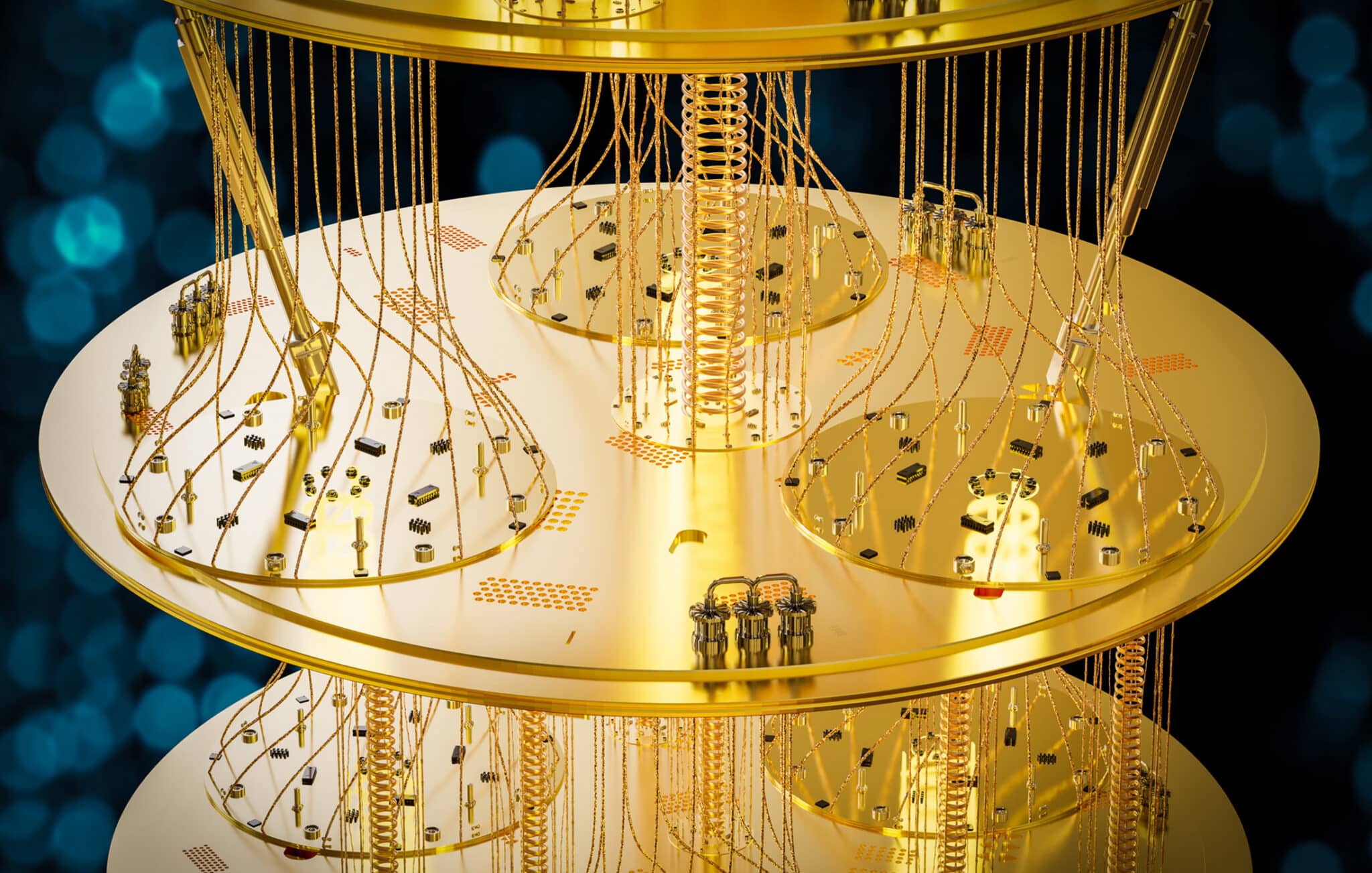

At 100 logical qubits using surface codes at distance 17 (a reasonable assumption for scientifically useful computations), you need roughly 58,000 physical qubits just for the logical data patches, at about 2d² − 1 = 577 physical qubits per logical qubit. Add magic state factories and routing overhead, and realistic estimates land between 100,000 and 200,000 physical qubits. With qLDPC codes, that number could compress to perhaps 10,000–30,000 physical qubits, though qLDPC implementation at this scale remains undemonstrated.
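The data-patch figure can be reproduced with a back-of-the-envelope calculation. The sketch below assumes a rotated surface code layout, with d² data qubits plus d² − 1 measurement qubits per logical qubit. The function name and the layout are illustrative assumptions, not any vendor's resource estimate, and the result excludes magic state factories and routing.

```python
def surface_code_physical_qubits(logical_qubits: int, distance: int) -> int:
    """Estimate physical qubits for the logical patches only.

    Assumes a rotated surface code: d*d data qubits plus d*d - 1 measurement
    qubits per logical qubit. Factories and routing space are NOT included.
    """
    per_logical = 2 * distance**2 - 1
    return logical_qubits * per_logical


# 100 logical qubits at distance 17, the tier discussed above.
print(surface_code_physical_qubits(100, 17))  # 57700
```

The same function applied to larger distances shows how quickly the quadratic term in d dominates the budget.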

The challenges at this tier are demanding but well-characterized. You need physical error rates comfortably below threshold (in practice roughly 10⁻³ for surface codes, whose threshold sits near 1%). You need real-time decoder performance that can keep pace with syndrome extraction on tens of thousands of qubits. You need functional magic state factories that can supply magic states fast enough for the algorithm's T-gates to execute within a reasonable timeframe. And you need the ability to run the computation continuously for hours without losing logical coherence.

Multiple vendors have credible paths to machines in this class by 2028–2030. IBM’s Starling targets 200 logical qubits by 2029. QuEra targets 100 logical qubits with 10,000 physical qubits. Quantinuum’s Apollo system aims for similar capability. The engineering challenges are severe, but the physics is understood and the component technologies have been demonstrated individually.

Now consider what happens when you try to build something ten times bigger.

The Three Walls That Hit Hardest at Scale

In my CRQC Quantum Capability Framework, I track ten capability dimensions that must all mature before a cryptographically relevant quantum computer becomes feasible. At the 100-logical-qubit level, progress across these dimensions can proceed somewhat independently: improve error rates here, demonstrate a decoder there, build a small magic state factory over there. The dimensions interact, but they don’t strangle each other.

At 10,000 logical qubits and above, three dimensions become disproportionately constraining — and they compound in ways that create problems qualitatively different from anything the field has solved.

Wall 1: Engineering Scale and Manufacturability (E.1)

For superconducting platforms, engineering scale and manufacturability is the dominant constraint at extreme scale. The arithmetic is unforgiving.

With surface codes, 100,000 logical qubits at distance 25 implies roughly 100 million to 1 billion physical qubits. Even with qLDPC codes offering 10–50x compression in the physical-to-logical ratio, you are still looking at millions of physical qubits that need to be fabricated with uniform quality, individually addressed, and cooled to roughly 15 millikelvin.

Nobody has a credible engineering design for a dilution refrigerator system at that scale. Current dilution refrigerators cool processors with perhaps 1,000–1,500 qubits. The thermal load of control wiring alone scales linearly with qubit count (or worse), and at some point the cryogenic cooling power simply cannot keep up. Proposals exist for cryogenic CMOS control electronics that would reduce wiring density, but these introduce their own noise and calibration challenges that remain unresolved at scale.

The Google Quantum AI team confronted this directly in their 2025 Willow paper: going from their current ~1,000-qubit processors to the ~500,000 physical qubits needed for a CRQC under their architecture represents a 500x scaling factor. That is a different kind of machine — one that has more in common with a particle accelerator or a semiconductor fabrication facility than with today’s quantum processors.

The manufacturing uniformity problem compounds this. At 1,000 qubits, you can tune each qubit individually and calibrate around defects. At 1 million qubits, fabrication yield becomes existential. If 1% of qubits are defective, you have 10,000 broken components scattered across your processor. The error correction scheme must either tolerate this (surface codes are somewhat robust to qubit loss, qLDPC codes less so) or the manufacturing process must achieve yields that approach semiconductor industry standards — a target that requires years of process engineering.
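A rough way to see why yield becomes existential is to ask how likely a single logical patch is to be entirely defect-free. The sketch below assumes defects are independent and uniformly distributed, and uses a distance-25 rotated surface code patch of 2·25² − 1 = 1,249 qubits. Both the independence model and the patch size are illustrative assumptions.

```python
def expected_defects(physical_qubits: int, defect_rate: float) -> float:
    """Expected number of defective qubits, assuming independent defects."""
    return physical_qubits * defect_rate


def defect_free_probability(patch_qubits: int, defect_rate: float) -> float:
    """Probability that a patch of `patch_qubits` contains no defective qubit."""
    return (1.0 - defect_rate) ** patch_qubits


total = expected_defects(1_000_000, 0.01)
# Distance-25 rotated surface code patch: 2 * 25**2 - 1 = 1249 qubits (assumed layout).
clean = defect_free_probability(2 * 25**2 - 1, 0.01)

print(f"expected defects across the chip: {total:,.0f}")
print(f"chance a distance-25 patch is defect-free: {clean:.2e}")
```

Under these assumptions essentially no patch is clean at a 1% defect rate, so the code has to be built around defects rather than hoping to avoid them.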

Wall 2: Decoder Performance (D.2)

Decoder performance is the scaling challenge that most roadmaps quietly ignore.

The decoder is the classical computer that processes syndrome measurement data in real time to determine which errors have occurred and how to correct them. At modest scales, this is manageable: a decoder handling a single distance-17 surface code patch processes syndromes from a few hundred physical qubits. Current implementations using minimum-weight perfect matching (MWPM) or Union-Find algorithms can do this in microseconds.

At 100,000 logical qubits, the decoder must process syndrome data from millions of physical qubits simultaneously. The data rate is staggering: if each physical qubit produces one syndrome measurement every microsecond, a 10-million-qubit machine generates about 10 terabits (1.25 terabytes) of raw syndrome data per second at one bit per measurement. The decoding must keep pace with this data stream, because any backlog means errors accumulate faster than they can be corrected, and the computation fails.
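The syndrome throughput depends on how each measurement outcome is encoded. At one bit per outcome, the raw stream for this scenario is 10 terabits per second (1.25 terabytes); it reaches 10 terabytes per second only if each outcome occupies a full byte. The sketch below makes that explicit. The function and parameter names are hypothetical, and compression and framing overhead are ignored.

```python
def syndrome_data_rate(physical_qubits: int,
                       measurements_per_second: int,
                       bits_per_measurement: int = 1) -> dict:
    """Raw syndrome throughput, before any compression or framing overhead."""
    bits = physical_qubits * measurements_per_second * bits_per_measurement
    return {
        "terabits_per_second": bits / 1e12,
        "terabytes_per_second": bits / 8 / 1e12,
    }


# 10 million qubits, one measurement per microsecond each.
print(syndrome_data_rate(10_000_000, 1_000_000))     # one bit per outcome
print(syndrome_data_rate(10_000_000, 1_000_000, 8))  # one byte per outcome
```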

This is fundamentally a classical computing and systems architecture problem, but it compounds in ways that are non-obvious. As code distance increases (which you need at larger scale to maintain error suppression), the decoder’s computational complexity grows super-linearly with the number of physical qubits it handles. MWPM scales as O(n³) in the worst case, where n is the number of syndrome nodes being matched, though approximate decoders like Union-Find achieve near-linear time at the cost of reduced accuracy. The accuracy-versus-speed tradeoff is a hard constraint: a faster but less accurate decoder means you need higher code distance (more physical qubits) to compensate, which in turn generates more syndrome data, which demands an even faster decoder.
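To get a feel for the super-linear growth, the sketch below compares how per-round decoding work grows when code distance rises from 17 to 25. It uses a cubic cost model for worst-case MWPM and an idealized linear model as a stand-in for Union-Find. This is a deliberate simplification: it counts only the d² − 1 checks of one rotated surface code patch per round and ignores the space-time decoding window. The function names are illustrative.

```python
def syndrome_nodes(distance: int) -> int:
    """Stabilizer checks per round in one rotated surface code patch (assumed layout)."""
    return distance**2 - 1


def relative_cost(d_small: int, d_large: int, exponent: float) -> float:
    """Growth in decoding work when cost scales as n**exponent per round."""
    return (syndrome_nodes(d_large) / syndrome_nodes(d_small)) ** exponent


# Compare a cubic worst case (MWPM) with an idealized linear decoder.
print(f"cubic:  {relative_cost(17, 25, 3.0):.1f}x")
print(f"linear: {relative_cost(17, 25, 1.0):.1f}x")
```

A roughly twofold increase in checks per patch becomes about a tenfold increase in worst-case matching work, before multiplying by the number of patches.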

At extreme scale, the decoder also faces a communication challenge. A single decoder processing the entire machine’s syndrome data is infeasible — the data cannot be shipped to a central location fast enough. Instead, you need distributed decoding, with multiple decoder units processing local regions and coordinating at boundaries. The boundary coordination introduces latency and can create correlated decoding failures that the error correction scheme was not designed to handle. This remains an active research problem with no proven solution at the scale required for 100,000 logical qubits.

Wall 3: Magic State Production (C.2)

Magic state production is the constraint that does not get enough attention.

Every non-Clifford gate (every T-gate or Toffoli gate) requires a specially prepared ancillary quantum state called a magic state. These states must be “distilled” to high fidelity through a process that consumes multiple lower-fidelity magic states to produce one higher-fidelity output. The distillation process is itself a quantum computation that requires dedicated physical qubits — the magic state factory.

At modest scale, the factory overhead is significant but manageable. For a 100-logical-qubit machine running an algorithm with 10⁸ T-gates, you might need 2–4 magic state factories, each consuming a few thousand physical qubits. The factories run continuously, producing magic states at a rate that matches the algorithm’s consumption.

At 100,000 logical qubits running algorithms with 10¹²–10¹⁵ T-gates, the magic state factory overhead can easily consume 80–90% of the total physical qubit budget. The headline “100,000 logical qubits” does not mean 100,000 logical qubits doing computation. It means perhaps 10,000–20,000 data qubits and the rest dedicated to manufacturing the magic states those data qubits consume. The machine is mostly factory, with a comparatively small computational core.

Recent advances in magic state cultivation (Gidney, Shutty, and Jones, 2024) significantly reduce this overhead by integrating magic state preparation into the computational fabric rather than requiring dedicated factory regions. And innovations like algorithmic fault tolerance from QuEra, Harvard, and Yale cut runtime overhead by running fewer error correction rounds per logical layer. These are genuine architectural shifts. But even with these advances, magic state overhead at 100,000-logical-qubit scale remains a dominant cost driver that scales with circuit depth and T-gate count in ways that no current technique fully resolves.

How the Walls Hit Differently Across Modalities

The three walls above are universal: every hardware modality faces them. But which wall dominates, and at what scale it becomes the binding constraint, varies dramatically across platforms. This is why “which quantum computer will scale best” has no single answer. It depends on what you are scaling to.

Superconducting (IBM, Google)

The dominant wall is E.1: engineering scale and manufacturability. Superconducting qubits have the fastest gate speeds (~10–100 nanoseconds), which is an advantage for algorithmic runtime. But everything about the physical infrastructure gets harder faster than the qubits get better.

The cryogenics problem is unique to superconducting systems. Dilution refrigerators capable of reaching 15 mK are expensive, power-hungry, and volume-limited. Current systems cool a single processor module. Scaling to millions of qubits likely requires either modular cryogenic architectures (multiple dilution refrigerators connected by quantum interconnects — an undemonstrated technology at the required fidelity) or radical redesigns of cryogenic infrastructure.

The wiring density problem is equally severe. Each qubit requires multiple microwave control lines. At 1 million qubits, the aggregate bandwidth of control signals entering the cryostat exceeds anything that current interconnect technology can deliver. Cryogenic CMOS multiplexing can help, but introduces its own heat load and calibration challenges.

IBM’s modular approach (quantum chiplets connected through classical and quantum communication channels) is the leading architectural response, but chiplet-to-chiplet gate fidelity has not been demonstrated at production quality. Google’s monolithic scaling approach pushes the fabrication uniformity challenge to the forefront.

Trapped Ion (Quantinuum, IonQ)

The dominant wall is D.3: continuous operation and gate speed. Trapped ion platforms offer the highest native gate fidelities (99.9%+ for two-qubit gates) and all-to-all connectivity within a trapping zone, which significantly reduces the routing overhead that plagues other platforms. But ion trap gates are roughly 1,000 times slower than superconducting gates: tens of microseconds versus tens of nanoseconds.

At 100 logical qubits running an algorithm with 10⁸ gates, this speed disadvantage is manageable. The computation takes hours or days. At 100,000 logical qubits running 10¹⁵ gates, the computation time stretches to months or years of continuous operation. That is not a realistic operating regime. No quantum system has demonstrated continuous coherent operation for more than hours, let alone months.

The modular scaling approach (multiple trapping zones connected by photonic interconnects) addresses the qubit count challenge but introduces its own bottleneck: inter-module entanglement rates. IonQ’s recent photonic interconnect demonstration between two commercial trapped-ion systems is a milestone, but the entanglement rate between modules is orders of magnitude slower than intra-module gate rates. At extreme scale, the inter-module communication bandwidth becomes the system’s throughput ceiling.

Neutral Atom (QuEra, Pasqal)

Neutral atom platforms occupy an interesting middle ground. Reconfigurable atom arrays offer flexible connectivity (atoms can be physically moved to create entangling gates between arbitrary pairs), which dramatically reduces the routing overhead that inflates qubit requirements in fixed-connectivity architectures. Current systems have demonstrated 6,100 trapped atoms and fault-tolerant operations on ~500 qubits at below-threshold error rates.

The dominant wall is a combination of D.3 (continuous operation) and a challenge unique to neutral atoms: atom loss. During long computations, individual atoms are stochastically lost from the trap through collisions with background gas, photon scattering, or excitation to untrapped states. Lost atoms must be replaced and re-initialized without disrupting the ongoing computation. At 100 logical qubits, the loss rate is manageable. At 100,000, the system must continuously replace atoms across a vast array while maintaining phase coherence of the ongoing computation — a challenge with no demonstrated solution.

Mid-circuit measurement fidelity is another area where neutral atoms lag. Efficient syndrome extraction requires non-destructive measurement of ancilla qubits. Neutral atom platforms have made rapid progress here, but the measurement process itself can still disturb neighboring atoms through stray photon scatter.

Photonic (PsiQuantum, Xanadu)

Photonic platforms face the E.1 wall in a different guise. Photonic qubits (single photons) do not need cryogenic cooling, which eliminates the dilution refrigerator constraint. But photonic entangling gates are inherently probabilistic: a photonic CNOT gate succeeds with some probability less than unity, and failure destroys the entangled state.

At scale, this probabilistic nature means massive multiplexing: to achieve deterministic computation, you need to attempt many entangling operations in parallel and select the successful ones. The resource overhead scales with the inverse of the gate success probability. PsiQuantum’s active-volume architecture addresses this through clever scheduling of operations across a large array of photonic components, but the underlying requirement is an enormous number of optical switches, delay lines, and single-photon detectors operating at production quality.
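The inverse-probability scaling can be illustrated with a toy multiplexing model. The sketch below assumes independent attempts with a fixed success probability p and asks two questions: how many attempts are needed on average per success, and how many parallel attempts are needed so that at least one succeeds with a chosen confidence. The probabilities are hypothetical values picked for illustration, and real photonic architectures use more elaborate schemes than this.

```python
import math


def expected_attempts(success_probability: float) -> float:
    """Mean number of independent attempts per successful gate (geometric distribution)."""
    return 1.0 / success_probability


def parallel_attempts_needed(success_probability: float, target: float) -> int:
    """Smallest n such that at least one of n parallel attempts succeeds
    with probability >= target, assuming independent attempts."""
    return math.ceil(math.log(1.0 - target) / math.log(1.0 - success_probability))


# Hypothetical success probabilities, chosen only for illustration.
for p in (0.5, 0.25, 0.1):
    n = parallel_attempts_needed(p, target=0.99)
    print(f"p={p}: mean attempts {expected_attempts(p):.0f}, "
          f"{n} parallel attempts for 99% success")
```

Each of those parallel attempts needs its own sources, switches, and detectors, which is where the component count comes from.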

PsiQuantum’s approach of manufacturing photonic quantum chips in a GlobalFoundries semiconductor fab is the most compelling response to the E.1 challenge in any modality — leveraging existing semiconductor manufacturing infrastructure rather than building bespoke quantum hardware. Whether this approach can achieve the optical loss budgets required for fault-tolerant computation at 100,000 logical qubits remains unproven.

The qLDPC Compression: Real but Not Magic

Much of the optimism about scaling timelines comes from qLDPC codes, and for good reason. IBM’s bivariate bicycle [[144,12,12]] code encodes 12 logical qubits in 144 data qubits, a 12:1 ratio (24:1 once the 144 check qubits are counted) versus surface code’s roughly 1,000:1 at comparable error suppression. That is roughly a 40x compression that transforms the scaling math.
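The compression factor depends on what is counted as a physical qubit. The sketch below computes the ratio for the [[144,12,12]] code two ways: data qubits only, and data plus the 144 check qubits used for syndrome measurement. The 1,000:1 surface code figure is the rough number quoted above, and the function name is an illustrative assumption.

```python
def physical_per_logical(data_qubits: int, check_qubits: int, logical_qubits: int) -> float:
    """Physical qubits (data + check) per encoded logical qubit."""
    return (data_qubits + check_qubits) / logical_qubits


SURFACE_CODE_RATIO = 1000  # rough surface code figure quoted above

data_only = physical_per_logical(144, 0, 12)
with_checks = physical_per_logical(144, 144, 12)

print(f"data only:   {data_only:.0f}:1, compression {SURFACE_CODE_RATIO / data_only:.0f}x")
print(f"with checks: {with_checks:.0f}:1, compression {SURFACE_CODE_RATIO / with_checks:.0f}x")
```

Counting check qubits gives a compression of about 40x, which is the accounting the 40x figure corresponds to.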

But qLDPC codes introduce their own challenges that become more severe at scale.

Non-local stabilizer checks. Unlike surface codes, where each stabilizer check involves only nearest-neighbor qubits on a 2D grid, qLDPC codes require stabilizer measurements connecting qubits that may be physically distant on the chip. On a planar superconducting processor, this means either long-range couplers (which degrade fidelity), a 3D chip architecture (which compounds the E.1 manufacturing challenge), or qubit routing (which introduces overhead that partially negates the encoding advantage).

Less mature decoders. Surface code decoding is a well-studied problem with multiple high-performance algorithms. qLDPC code decoding is newer and less optimized. The decoder complexity depends on the code structure, and for some promising qLDPC families, efficient decoding algorithms are still being developed. This compounds the D.2 wall at scale.

Less tolerance to qubit loss. Surface codes degrade gracefully when individual physical qubits fail — the code can be locally deformed around a defective qubit with modest overhead. qLDPC codes, with their more complex structure, are generally less tolerant of qubit loss, making manufacturing yield more critical.

These are engineering challenges, not fundamental barriers. qLDPC codes will almost certainly replace surface codes as the dominant error correction approach. But the idea that qLDPC adoption alone eliminates the scaling challenge is an oversimplification. It shifts the binding constraint from one dimension to another rather than dissolving it.

The Compounding Problem: When Walls Interact

The most pernicious aspect of scaling is that the three walls are not independent. They interact in ways that make the total challenge worse than the sum of its parts.

Consider a concrete scenario: you want to build a superconducting machine with 10,000 logical qubits using qLDPC codes. Even with 40x compression, you need roughly 500,000–1,000,000 physical qubits. At that scale:

- The fabrication challenge (E.1) requires manufacturing ~1 million qubits at uniform quality, with yield high enough that the qLDPC code’s reduced loss tolerance is not violated.

- The decoder (D.2) must process syndrome data from a million qubits in real time, using algorithms optimized for qLDPC codes that are still maturing.

- The magic state factories (C.2) must supply magic states at a rate matching the algorithm’s T-gate consumption across 10,000 logical qubits, which may require dedicated regions of the processor that compete for the same physical qubit budget.

Now add the temporal dimension: if the algorithm requires 10¹⁰ T-gates at a production rate of 1 million T-gates per second, the computation runs for ~3 hours. During those 3 hours, every one of the million physical qubits must maintain calibration. Drift in qubit frequencies, changing two-level-system defect configurations in the substrate, and thermal fluctuations in the cryostat all introduce time-varying error profiles that the decoder was not calibrated for at startup. This is the continuous operation (D.3) challenge, and it gets harder with both qubit count and runtime.
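The runtime figure follows directly from dividing the T-gate count by the production rate. The sketch below reproduces it under the simplifying assumption that magic state supply is the only bottleneck. The function name is hypothetical, and the second call uses a larger, purely illustrative T-count to show how runtime stretches at a fixed rate.

```python
def runtime_hours(t_gate_count: float, magic_states_per_second: float) -> float:
    """Wall-clock hours if magic state supply is the only bottleneck."""
    return t_gate_count / magic_states_per_second / 3600.0


print(f"{runtime_hours(1e10, 1e6):.2f} hours")      # the scenario above
print(f"{runtime_hours(1e12, 1e6) / 24:.1f} days")  # 100x more T-gates, same rate
```

Every hour added to the runtime is another hour over which calibration across the whole processor has to hold.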

The result is that scaling logical qubit count by 10x does not scale engineering difficulty by 10x. It scales it by something closer to 10x in each of several interacting dimensions. Because those factors multiply rather than add, the aggregate challenge is closer to 100x or 1,000x harder, depending on which modality you are working with and which dimensions are already near their limits.

What This Means for the Utility Ladder

In my Quantum Utility Ladder, I mapped what fault-tolerant quantum computers will actually be used for at each logical qubit tier: from Fermi-Hubbard models at ~130 logical qubits, through FeMoco and Cytochrome P450 at 1,000–5,000, to bulk solid-state physics at 100,000+.

The scaling analysis here adds a critical dimension to that picture. The time between reaching Rung 1 (25–100 logical qubits) and Rung 3 (300–1,000 logical qubits) will be measured in years — perhaps 3–5 years if error correction advances continue at their current pace. But the time between Rung 3 and Rung 5 (5,000–100,000+ logical qubits) is likely measured in decades. The rungs are not evenly spaced on the time axis, even though they look evenly spaced on the qubit axis.

This has a specific and uncomfortable implication for CRQC timelines. Breaking RSA-2048 requires roughly 1,200–1,400 logical qubits — solidly in the Rung 3–4 range. This sits at the boundary between “engineering challenge” (path visible, components demonstrated) and “research problem” (architectural innovations still needed). It is accessible sooner than the 100,000-logical-qubit applications that dominate the extreme end of the utility ladder, but it is not just a straightforward engineering scale-up from 100-logical-qubit machines.

For the cryptographic threat assessment, the honest framing is: a CRQC requires solving the scaling challenges at the 1,000–2,000 logical qubit tier, where E.1, D.2, and C.2 are all demanding but none has yet hit its qualitative wall. The challenges at this scale are within the range that concerted engineering effort can plausibly address within the 2030–2035 timeframe that vendor roadmaps project. Above 10,000 logical qubits, the challenges change character — and the timeline stretches accordingly.

Organizations waiting for 100,000-logical-qubit machines as their signal that quantum computing is “real” will miss the more consequential milestones that arrive much earlier. As I have argued elsewhere, the reason to begin PQC migration now is not that 100,000-logical-qubit machines are imminent, but that 1,000-logical-qubit machines are within a decade — and the migration itself takes years.

The Honest Summary

Growth in logical qubit count will not be linear, and it will not be exponential in the convenient direction either. Each tier of logical qubits introduces new binding constraints, and the nature of those constraints depends on both the scale you are targeting and the hardware modality you are building with.

At ~100 logical qubits, the challenges are severe but the path is visible: get below threshold, demonstrate real-time decoding, build magic state factories, prove continuous operation. Hard engineering. Known physics.

At ~1,000 logical qubits, the same challenges intensify and begin to interact: manufacturing uniformity matters more, decoder throughput becomes a real constraint, magic state overhead starts to dominate the physical qubit budget. This is the regime where current cryptographic algorithms become vulnerable, and where the most transformative chemistry applications begin. It is accessible with sustained engineering effort in the next decade.

At ~100,000 logical qubits, the challenges change character. Cryogenic infrastructure at this scale has no precedent. Distributed decoding across millions of qubits is an open research problem. Magic state production at the required rate may consume the vast majority of the machine. Continuous operation for the required runtime demands stability that no quantum system has approached. These are not problems that more funding and better engineers will solve on a predictable timeline. They require architectural innovations we cannot yet specify.

This is why, when someone asks me whether 100,000-logical-qubit applications will be feasible in ten years, the answer is not a qualified “maybe.” It is that the question itself mischaracterizes the challenge. Getting to 1,000 logical qubits is an engineering scaling problem. Getting to 100,000 is a set of open research problems that happen to also be engineering scaling problems. The distinction matters, both for investment strategy and for security planning.

The CRQC Quantum Capability Framework is designed precisely to track these distinctions: which capability dimensions are progressing on an engineering trajectory, and which still require fundamental advances. That granularity is what separates useful timeline analysis from roadmap extrapolation.
