Quantum Computing Simulates 12,635-Atom Protein — Largest Ever

May 6, 2026 – Four months ago, a collaboration between Cleveland Clinic, RIKEN, and IBM used quantum computing to simulate the 303-atom miniprotein Trp-cage for the first time. This week, the same team announced that it has simulated the electronic structure of a 12,635-atom protein-ligand complex: a roughly 40-fold increase in system size and a 210-fold improvement in accuracy on a key workflow step, achieved in about the span of a single academic semester.

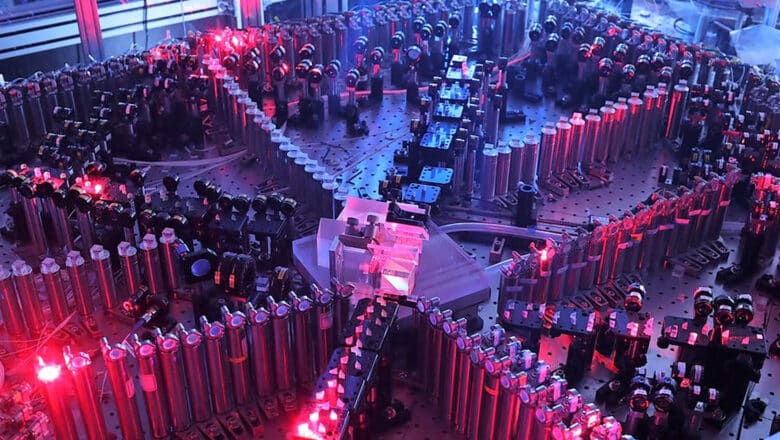

The preprint, led by Dr. Kenneth Merz of Cleveland Clinic, describes quantum-centric supercomputing (QCSC) calculations on T4-Lysozyme (11,608 atoms) and Trypsin (12,635 atoms), both modeled with bound ligands and immersed in explicit water solvent. Two IBM Heron r2 processors (156 qubits each) performed quantum sampling using up to 94 qubits, executing 9,200 circuits over 100 hours and collecting 1.3 billion measurement outcomes. The classical heavy lifting ran on RIKEN’s Fugaku and the University of Tokyo’s Miyabi-G supercomputers.
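
For a sense of scale, the figures quoted above can be turned into rough per-circuit averages. This is back-of-the-envelope arithmetic only: it assumes shots and runtime were spread evenly across all circuits, which the article does not state.

```python
# Rough per-circuit averages from the figures quoted in the article.
# Assumption: shots and runtime are spread evenly across circuits.
total_shots = 1.3e9     # measurement outcomes collected
num_circuits = 9_200    # circuits executed
total_hours = 100       # hours of execution

shots_per_circuit = total_shots / num_circuits
seconds_per_circuit = total_hours * 3600 / num_circuits

print(f"~{shots_per_circuit:,.0f} shots per circuit on average")
print(f"~{seconds_per_circuit:.0f} seconds per circuit on average")
```

That works out to roughly 141,000 shots and about 39 seconds per circuit, averaged over the whole run.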

Bottom line: This is the largest heterogeneous quantum-classical electronic structure calculation reported to date. It does not yet outperform the best purely classical methods for protein chemistry, but the trajectory of improvement (from 303 atoms to 12,635 atoms in four months) is striking. If the pace holds, quantum-centric approaches to computational chemistry could become competitive with classical alternatives within the next few years.

How the Workflow Scales

The technique underlying this result is Sample-based Quantum Diagonalization (SQD), the same method IBM has been developing as the flagship application for its QCSC architecture. In this workflow, a quantum processor generates samples representing electronic configurations (Slater determinants). Classical supercomputers then take those samples through configuration recovery, subsampling, and subspace diagonalization to estimate ground-state energies.
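
The core idea of sampling configurations and then diagonalizing in the sampled subspace can be illustrated with a toy NumPy sketch. Everything here is an assumption for illustration: `H` is a random symmetric matrix rather than a molecular Hamiltonian, the "quantum samples" are drawn classically from the exact ground state, and the configuration recovery and subsampling steps are omitted.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy stand-in for a Hamiltonian over 64 configurations.
# This is a random symmetric matrix, NOT a real molecular Hamiltonian.
dim = 64
A = rng.normal(size=(dim, dim))
H = (A + A.T) / 2
# A sloped diagonal so that a few low-index configurations dominate.
H += np.diag(np.linspace(-10.0, 10.0, dim))

# Exact ground state, used here only as a reference and a sampling source.
evals, evecs = np.linalg.eigh(H)
e_exact = evals[0]
ground = evecs[:, 0]

# Stand-in for quantum sampling: draw configuration indices with
# probability |amplitude|^2 (real hardware samples a prepared circuit).
probs = ground**2
probs = probs / probs.sum()
samples = rng.choice(dim, size=200, p=probs)

# Keep the unique sampled configurations and project H onto them.
subspace = np.unique(samples)
H_sub = H[np.ix_(subspace, subspace)]

# Diagonalize in the much smaller subspace.
e_sub = np.linalg.eigvalsh(H_sub)[0]

print(f"subspace size: {len(subspace)} of {dim}")
print(f"exact ground energy:    {e_exact:.4f}")
print(f"subspace ground energy: {e_sub:.4f}")
```

Because the subspace energy comes from a projection of the full matrix, it can never fall below the exact ground-state energy. The quality of the estimate depends on whether the samples cover the configurations that carry most of the ground-state weight.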

What makes this approach scalable is the embedding framework. Wave function-based embedding (EWF) fragments the protein into computationally manageable clusters. Classical methods handle the simpler clusters. The quantum processor, using SQD, tackles the clusters where electron correlation is strongest — where classical methods struggle most with accuracy.
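
The division of labor described above amounts to a routing decision per cluster. The sketch below is purely illustrative: the article does not say how clusters are assigned, so the `correlation_score` field, the threshold, and the cluster names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Cluster:
    name: str
    correlation_score: float  # hypothetical measure of correlation strength


def route(clusters, threshold=0.5):
    """Split clusters into (classical, quantum) name lists.

    The threshold and score are assumptions made for this sketch,
    not the criterion used in the preprint.
    """
    classical = [c.name for c in clusters if c.correlation_score < threshold]
    quantum = [c.name for c in clusters if c.correlation_score >= threshold]
    return classical, quantum


# Made-up clusters for illustration only.
clusters = [
    Cluster("backbone_A", 0.10),
    Cluster("ligand_site", 0.90),
    Cluster("solvent_shell", 0.05),
]
classical, quantum = route(clusters)
print("classical solver:", classical)
print("quantum (SQD) solver:", quantum)
```

The point of the modular design is that only the routing rule and the quantum solver need to change as hardware improves; the fragmentation and the classical path stay the same.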

The jump from 303 atoms to over 12,000 required more than bigger hardware. The critical algorithmic advance was applying linear-scaling methods to the MP2 step that determines how to fragment the molecule. In the original implementation, doubling the molecule size made this fragmentation step 32 times more expensive (fifth-power scaling). At 12,635 atoms, the naive approach would have been 24 million times more costly than the Trp-cage calculation.
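
The fifth-power arithmetic is easy to check. The sketch below assumes cost scales as size to the fifth power and nothing else; note that taking "size" to be the raw atom count gives a larger factor than the 24 million quoted above, which corresponds to an effective size ratio of about 30, so the quoted figure presumably rests on a different size measure than atom count.

```python
def cost_ratio(size_ratio, exponent=5):
    """Relative cost increase for a method that scales as size**exponent."""
    return size_ratio ** exponent


# Doubling the system size under fifth-power scaling.
doubling = cost_ratio(2)  # 32

# Using raw atom counts from the article as the size measure (an assumption).
atom_ratio = 12_635 / 303
by_atoms = cost_ratio(atom_ratio)  # roughly 1.26e8

# Size ratio implied by the article's "24 million times" figure.
implied_ratio = 24e6 ** (1 / 5)  # roughly 30

print(f"doubling: {doubling}x")
print(f"atom ratio {atom_ratio:.1f} -> {by_atoms:.3g}x")
print(f"24 million implies a size ratio of about {implied_ratio:.1f}")
```

Either way, the conclusion is the same: at this system size a fifth-power step is tens to hundreds of millions of times more expensive than it was for Trp-cage, which is why the fragmentation step had to be reformulated.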

Merz and his Cleveland Clinic colleagues recognized that in a large protein, electron entanglement is localized. Quantum mechanical correlations beyond about 7–10 angstroms are negligible. By restricting the MP2 calculation to a sphere around each atom, the team collapsed the scaling from O(N⁵) to effectively linear, making the calculation feasible on available hardware.
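
Why a distance cutoff leads to near-linear scaling can be seen by counting atom pairs. The sketch below is not an MP2 calculation: it places random points in a box at a fixed, made-up density and compares the number of pairs within an 8 angstrom cutoff to the number of all pairs. The density, geometry, and function name are assumptions chosen for illustration.

```python
import numpy as np


def pair_counts(n_atoms, density=0.01, cutoff=8.0, seed=0):
    """Return (pairs within cutoff, all pairs) for random points in a cube.

    density is in points per cubic angstrom and is a toy value;
    the box grows with n_atoms so the density stays fixed.
    """
    rng = np.random.default_rng(seed)
    side = (n_atoms / density) ** (1 / 3)
    xyz = rng.uniform(0.0, side, size=(n_atoms, 3))

    # Squared distances via the Gram matrix to keep memory modest.
    sq = np.sum(xyz**2, axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * (xyz @ xyz.T)

    within = d2 <= cutoff**2
    # Remove self-pairs on the diagonal and count each pair once.
    local_pairs = (np.count_nonzero(within) - n_atoms) // 2
    all_pairs = n_atoms * (n_atoms - 1) // 2
    return local_pairs, all_pairs


results = {n: pair_counts(n) for n in (500, 1000, 2000)}
for n, (local, total) in results.items():
    print(f"N={n}: {2 * local / n:.1f} neighbors per atom, "
          f"{local / total:.2%} of all pairs")
```

At fixed density the number of neighbors per atom stays bounded as the system grows, so the work restricted to local pairs grows roughly in proportion to N while the all-pairs count grows as N squared.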

What It Means, and What It Does Not

The honest assessment, which the IBM blog post itself provides, is that the quantum-centric method does not yet outperform the best classical approaches for protein electronic structure calculations. As I examined in Quantum Chemistry’s Honest Ledger, the quantum advantage in chemistry is real but narrow: it applies to specific strongly correlated subsystems where classical methods are least reliable, embedded in exactly the kind of hybrid workflow this protein simulation demonstrates. This matters because the value proposition depends on the quantum component eventually providing accuracy gains that purely classical methods cannot match.

The 210-fold accuracy improvement over previous QCSC approaches refers specifically to a step within the SQD workflow, not to the overall accuracy of the protein simulation compared to established classical chemistry methods like coupled-cluster or full configuration interaction. The quantum contribution here is a component within a larger classical workflow, and the bottleneck remains whether that component delivers enough accuracy improvement to justify the additional complexity.

Still, there are reasons this trajectory should be taken seriously.

The workflow architecture is sound. EWF plus SQD is a genuinely modular design where the quantum processor handles the hardest fragments and classical resources handle everything else. This is precisely the kind of hybrid approach that plays to the strengths of both computational paradigms. As quantum hardware improves (more qubits, lower error rates, deeper circuits), the quantum component can tackle progressively harder clusters without redesigning the workflow.

The scale of classical resources is also notable. This is the most resource-intensive known QCSC execution for quantum chemistry: 1.3 billion measurement samples across two quantum processors, processed on two of the world’s most powerful supercomputers. It demonstrates that the engineering infrastructure for production-scale quantum chemistry workflows is being actively built, not just theorized.

For readers who track my CRQC Quantum Capability Framework, this work connects most directly to Full Fault-Tolerant Algorithm Integration (D.1). The demonstration itself is pre-fault-tolerant, but SQD represents a concrete algorithmic pathway that benefits from both near-term noisy hardware and future error-corrected systems. The same workflow running on a fault-tolerant quantum processor could access the deep circuits needed for higher accuracy on the most strongly correlated fragments, potentially matching or exceeding the best classical chemistry methods. The Quantum Utility Ladder catalogs the resource estimates for these targets, from early active-space chemistry at 100–300 logical qubits to cytochrome P450 enzyme simulation at roughly 4,900 logical qubits.

The QCSC Ecosystem Is Consolidating

This protein simulation does not exist in isolation. It arrives alongside Q-CTRL’s Fermi-Hubbard simulation demonstrating 3,000x wall-clock speedup over classical tensor network methods, and IBM’s reference architecture paper laying out the systems blueprint for quantum-centric supercomputing. IBM CEO Arvind Krishna has publicly predicted that the first examples of quantum advantage on IBM hardware will arrive in 2026.

Taken together, these developments suggest that the quantum computing industry’s center of gravity is shifting from benchmarking isolated processors toward building integrated quantum-classical workflows. The protein simulation tells that story: quantum sampling, classical embedding, supercomputer-scale diagonalization, and algorithmic innovation coordinated across an international team spanning the United States and Japan.
