IBM’s Quantum-Centric Supercomputing Blueprint


March 20, 2026 – The most consequential document IBM Quantum has published this year may not be a research paper about qubits or error correction. It is a systems architecture blueprint, a 20-page reference design showing how quantum processors fit into the same data centers, schedulers, networking fabrics, and security frameworks that run today’s supercomputers.

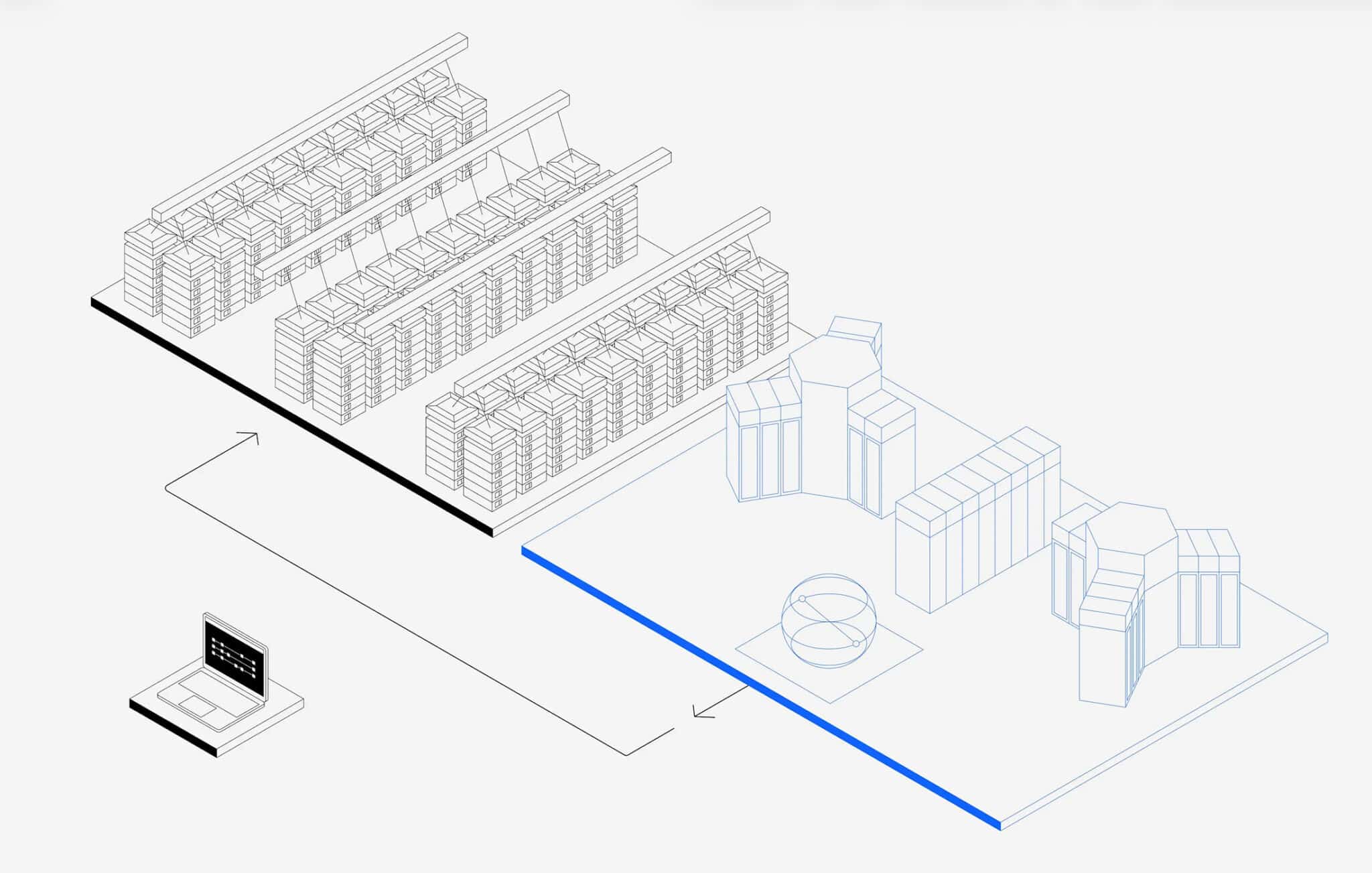

The paper, authored by a team including Jerry Chow, Jay Gambetta, Sarah Sheldon, and Abhinav Kandala, describes a Quantum-Centric Supercomputing (QCSC) architecture that integrates Quantum Processing Units (QPUs), GPUs, and CPUs across three evolutionary phases spanning roughly the next decade. The first phase is already operational at RIKEN in Japan and Rensselaer Polytechnic Institute in the United States, where IBM Quantum System installations sit alongside classical HPC infrastructure. IBM CEO Arvind Krishna has staked a specific timeline on this architecture delivering quantum advantage in 2026.

Bottom line: This architecture paper is IBM’s clearest statement yet that quantum computing’s near-term value lies in integration with heterogeneous compute workflows, not in standalone quantum processors. For organizations tracking quantum maturity, the message is concrete: the systems engineering (schedulers, networking, security, middleware) is being built now, well before fault-tolerant hardware arrives.

The Three Phases

IBM’s roadmap follows the trajectory that GPUs took in HPC, from loosely attached accelerators to fully co-designed system components. That analogy is instructive and, for once, appropriate.

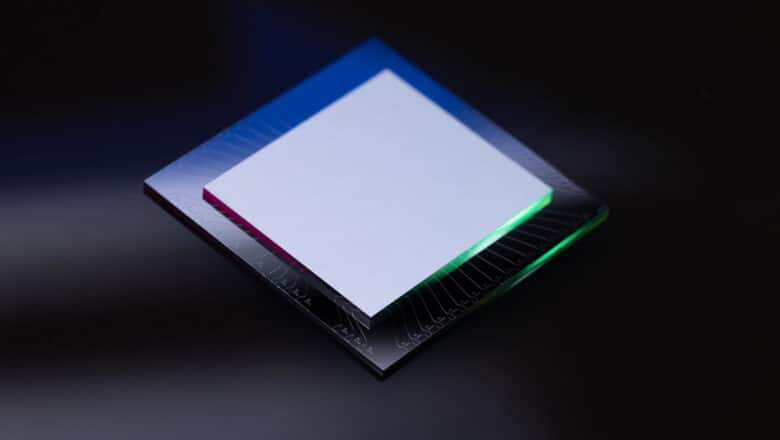

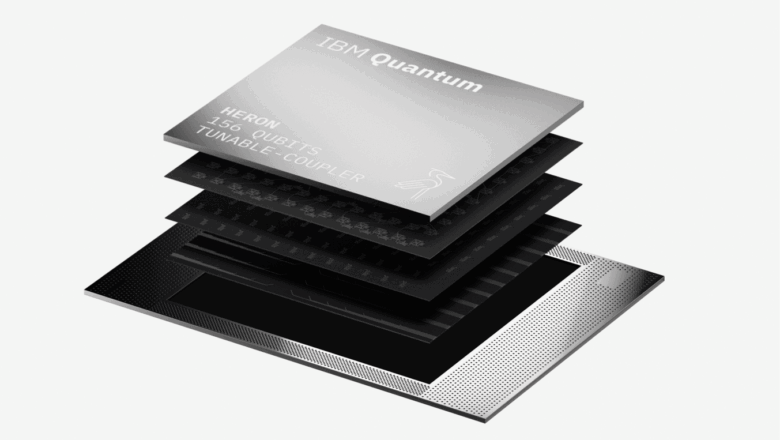

Phase 1 (2025–2027): Quantum as co-processor. Quantum systems function as specialized compute offload engines within existing HPC complexes. This is already happening. At RIKEN, an IBM Heron processor connects to the Fugaku supercomputer via Ethernet. The quantum system handles sampling workloads (generating bitstrings from quantum circuits), while Fugaku runs the heavy classical computation: configuration recovery, subsampling, subspace diagonalization. Orchestration between the two systems uses independent job queues with batch-time scheduling. The Cleveland Clinic collaboration that simulated a 12,635-atom protein is a production example of this phase.
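The split between quantum sampling and classical diagonalization can be illustrated with a toy sketch. It is not IBM's SQD implementation: the Hamiltonian is a random symmetric matrix, the "QPU" is a uniform random sampler, configuration recovery and subsampling are omitted, and the names `sample_bitstrings` and `subspace_ground_energy` are hypothetical. It only shows the core idea that sampled bitstrings select a subspace in which a classical machine diagonalizes the projected Hamiltonian.

```python
import numpy as np

def sample_bitstrings(probs, shots, rng):
    """Stand-in for the QPU: draw basis-state indices from a distribution."""
    return rng.choice(len(probs), size=shots, p=probs)

def subspace_ground_energy(hamiltonian, basis_indices):
    """Classical step: project H onto the sampled basis states and diagonalize."""
    idx = np.unique(basis_indices)
    projected = hamiltonian[np.ix_(idx, idx)]
    return np.linalg.eigvalsh(projected)[0]  # eigenvalues come back in ascending order

rng = np.random.default_rng(seed=7)
n_qubits = 6
dim = 2 ** n_qubits

# Placeholder Hamiltonian (assumption): a random real symmetric matrix.
a = rng.normal(size=(dim, dim))
hamiltonian = (a + a.T) / 2

# Reference value from full diagonalization, feasible only at toy sizes.
exact = np.linalg.eigvalsh(hamiltonian)[0]

# Placeholder sampler (assumption): uniform over all basis states.
probs = np.full(dim, 1.0 / dim)
samples = sample_bitstrings(probs, shots=40, rng=rng)
estimate = subspace_ground_energy(hamiltonian, samples)

print(f"exact ground energy:    {exact:.4f}")
print(f"subspace estimate:      {estimate:.4f}")
```

Because the projected matrix is a principal submatrix of the full Hamiltonian, the subspace estimate can never fall below the exact ground energy. The quality of the estimate depends entirely on how well the sampler concentrates on the important basis states, which is the job the quantum processor performs in the real workflow.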

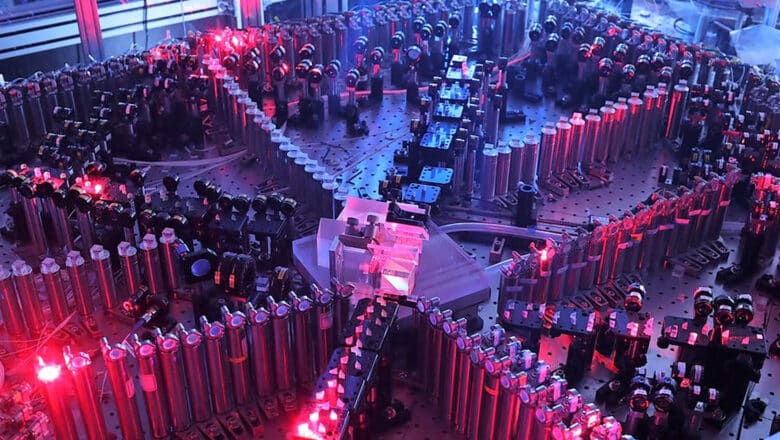

Phase 2 (2028–2030): Heterogeneous quantum-HPC systems. Quantum and classical resources become tightly coupled through advanced middleware, low-latency interconnects (Ultra Ethernet, NVQLink, UALink), and unified scheduling. The key shift is from sequential orchestration (classical pre-processes, quantum samples, classical post-processes) to iterative closed-loop workflows where outputs from one system feed directly into the next iteration on the other. The closed-loop SQD workflow demonstrated by Shirakawa et al., which used half of Fugaku (72,000+ nodes) alongside an IBM Heron processor with continuous data exchange, exemplifies this pattern. Phase 2 also targets outer-code quantum error correction research, where co-located GPU nodes process decoded syndrome streams from the quantum processor at near-time latencies (~100 µs to 1 ms).
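The move from sequential to closed-loop orchestration can be sketched with a toy loop in which each classical result reshapes the next round of sampling. This is an illustration under loud assumptions, not the workflow from Shirakawa et al.: the sampler is a classical random draw, the feedback rule (weighting basis states by the squared entries of H applied to the current ground vector) is a classical heuristic chosen for simplicity, and `closed_loop` is a hypothetical name.

```python
import numpy as np

def closed_loop(hamiltonian, rounds, shots, rng):
    """Alternate sampling and diagonalization, feeding each result into the next round."""
    dim = hamiltonian.shape[0]
    probs = np.full(dim, 1.0 / dim)      # round 0: no prior information
    kept = np.array([], dtype=int)       # basis states accumulated so far
    energies = []
    for _ in range(rounds):
        # "Quantum" step (stand-in): draw basis-state indices.
        samples = rng.choice(dim, size=shots, p=probs)
        kept = np.union1d(kept, samples)

        # Classical step: diagonalize H restricted to the accumulated subspace.
        sub = hamiltonian[np.ix_(kept, kept)]
        vals, vecs = np.linalg.eigh(sub)
        energies.append(vals[0])

        # Feedback step (assumed heuristic): favor states strongly coupled
        # to the current ground vector, mixed with uniform exploration.
        psi = np.zeros(dim)
        psi[kept] = vecs[:, 0]
        weights = (hamiltonian @ psi) ** 2
        probs = 0.5 * weights / weights.sum() + 0.5 / dim
    return energies

rng = np.random.default_rng(seed=11)
dim = 64
a = rng.normal(size=(dim, dim))
hamiltonian = (a + a.T) / 2              # placeholder Hamiltonian (assumption)
exact = np.linalg.eigvalsh(hamiltonian)[0]

energies = closed_loop(hamiltonian, rounds=5, shots=10, rng=rng)
for i, e in enumerate(energies):
    print(f"round {i}: estimate {e:.4f} (exact {exact:.4f})")
```

Since the subspace only grows from round to round, the estimates never increase. In a real deployment each round trip crosses the quantum-classical boundary, which is why the interconnect and scheduling latencies discussed above start to dominate once the loop runs many iterations.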

Phase 3 (2031+): Fully co-designed systems. Unified programming models span quantum and classical resources. Real-time quantum error correction runs on inner codes (qLDPC codes on custom ASICs/FPGAs) while outer codes execute on co-located GPU accelerators. This phase also introduces multi-tenant execution. IBM compares the end state to the NVIDIA Grace Blackwell architecture, where CPUs are designed around GPU requirements rather than GPUs being bolted onto CPU systems.

The Architecture’s Key Components

Several technical elements stand out for their maturity and implications.

QRMI (Quantum Resource Management Interface). This is a lightweight library that exposes quantum processors to traditional HPC schedulers like Slurm. Currently implemented as a Slurm SPANK plugin, QRMI lets system administrators manage QPUs as generic resources within familiar HPC job-scheduling frameworks. Users can request QPUs through Slurm while the plugin handles credential mediation, device discovery, and job submission via the quantum system’s REST APIs. This is unglamorous engineering, but it is exactly what HPC centers need to adopt quantum resources without rebuilding their software stacks.
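To make the "QPU as a generic resource" idea concrete, here is a toy allocator written for illustration. It is not the QRMI API and not Slurm code: the `Node`, `Job`, and `schedule` names, the node names, and the resource labels are all hypothetical. The point is only that a scheduler which already counts GPUs per node can count QPUs the same way, with the device-specific work hidden behind a plugin.

```python
from dataclasses import dataclass

@dataclass
class Node:
    name: str
    gres: dict  # resource kind -> number of free units, e.g. {"gpu": 4, "qpu": 1}

@dataclass
class Job:
    job_id: int
    requests: dict  # resource kind -> number of units requested

def schedule(job, nodes):
    """Reserve resources on the first node that satisfies every request.

    Returns the node name, or None if no node currently fits the job.
    """
    for node in nodes:
        fits = all(node.gres.get(kind, 0) >= count
                   for kind, count in job.requests.items())
        if fits:
            for kind, count in job.requests.items():
                node.gres[kind] -= count
            return node.name
    return None

# Hypothetical cluster: one classical node and one node with an attached QPU.
nodes = [
    Node("cpu-01", {"gpu": 0}),
    Node("hybrid-01", {"gpu": 4, "qpu": 1}),
]

first = schedule(Job(1, {"qpu": 1, "gpu": 2}), nodes)
second = schedule(Job(2, {"qpu": 1}), nodes)  # the only QPU is already reserved

print(first)   # hybrid-01
print(second)  # None
```

The scheduler logic never needs to know what a QPU is. That is the property that lets an HPC center adopt quantum resources without rewriting its job-management stack.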

Quantum Systems API (QSA). The QSA is the architectural boundary between the tightly coupled real-time layer (where FPGAs and custom ASICs handle syndrome extraction and mid-circuit measurement) and the rest of the stack. It abstracts the internal heterogeneity of the classical runtime and quantum hardware, presenting a stable interface for the orchestration layer. This is IBM's answer to how quantum systems can be vendor-portable at the API level even when the underlying hardware is deeply proprietary.

Hierarchical Error Correction. The architecture explicitly separates inner codes (hardware-adapted qLDPC codes operating on physical qubits at ~10 µs cycle times, running on co-located FPGAs/ASICs) from outer codes (algorithm-adapted codes operating on logical qubits at ~100 µs to 1 ms, running on nearby GPU nodes). This separation matters because outer-code research (new decoding algorithms, hierarchical partitioning strategies, AI-based decoders) can proceed on flexible classical hardware without being constrained by the microsecond latency budgets of the inner code. For my CRQC Quantum Capability Framework, this hierarchical approach directly addresses Decoder Performance (D.2) requirements. The broader qLDPC and magic state cultivation advances driving this hierarchy are covered in depth in my Error Correction Revolution analysis.
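A quick piece of arithmetic shows how much slack the separation buys. Using only the approximate figures quoted above (an inner-code cycle of about 10 µs and an outer-code window of about 100 µs to 1 ms), the sketch below counts how many inner cycles elapse per outer window. The constant and function names are illustrative, and the inputs are rough orders of magnitude rather than specifications.

```python
INNER_CYCLE_US = 10.0               # approximate inner-code cycle time
OUTER_WINDOW_US = (100.0, 1000.0)   # approximate outer-code latency range

def inner_cycles_per_outer_window(inner_us, outer_us):
    """Number of inner-code cycles that elapse during one outer-code window."""
    return outer_us / inner_us

ratios = [inner_cycles_per_outer_window(INNER_CYCLE_US, w) for w in OUTER_WINDOW_US]
print(ratios)  # [10.0, 100.0]
```

An outer decoder therefore has roughly 10 to 100 times the time budget of the inner one, which is the gap that makes general-purpose GPU nodes a plausible home for it.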

Confidential Data and Execution (CDE). The security architecture introduces a programming abstraction where users encode data access policies at the API level. Jobs are decomposed into tasks with verified execution envelopes running in hardware-backed Trusted Execution Environments (TEEs). The compartmentalization relies on mathematically verified firmware with proofs embedded in the continuous integration pipeline. This is targeted at the multi-tenant Phase 3 scenario, but the security model is being designed now. That matters for defense and intelligence organizations evaluating quantum systems for classified workloads.

My Analysis

I have been tracking quantum computing systems architecture for over a decade, and this paper marks an inflection point. Previous IBM quantum publications focused on processor roadmaps (qubit counts, error rates, coherence times) or algorithm demonstrations. This paper focuses on the engineering that makes quantum computing deployable, which is a different kind of maturity signal entirely.

A few observations for the PostQuantum.com audience.

The GPU analogy is more than marketing. IBM traces the evolution from GPUs-on-PCIe (loose coupling, limited bandwidth) to GPUs-on-NVLink (tight coupling, high bandwidth) to Grace Blackwell (co-designed CPU-GPU systems). Quantum processors are currently at the PCIe stage. The architecture paper charts a concrete path through the next two stages. This matters because it means quantum computing’s integration trajectory is not hypothetical; it follows a proven pattern with defined engineering milestones.

The five use cases tell you where quantum computing goes first. The paper grounds its architecture in five concrete use cases: electronic structure calculations (SQD), closed-loop SQD, error mitigation with tensor networks and Pauli propagation, open-loop QEC research, and outer-code fault-tolerant QEC research. Every one of these is a simulation or optimization problem. None involves cryptanalysis. This concentration is not accidental; as I argue in The Narrow Advantage, the evidence for quantum advantage is strongest in exactly these domains and structurally weak in the finance, logistics, and ML applications where quantum is most heavily marketed. The near-term quantum advantage pathway runs through physics and chemistry applications where quantum circuits map naturally onto the problem structure. Cryptanalysis, by contrast, requires fault-tolerant hardware. Phase 3 begins to address those capabilities, but the hardware likely will not reach CRQC scale until well beyond the 2034 horizon shown in the roadmap figure.

The systems infrastructure being built now will be repurposed later. QRMI, QSA, hierarchical error correction, and high-bandwidth interconnects between QPUs and GPUs are all general-purpose capabilities. The workflows running protein and Fermi-Hubbard simulations today will eventually be adapted for quantum algorithms targeting cryptographic problems. The organizational knowledge and engineering tooling being accumulated at RIKEN, Cleveland Clinic, and the growing network of QCSC installations are the same capabilities that a state-level actor would need to operate a CRQC.
