Q-PAC Goes Live: The First U.S. Quantum Open Architecture System Went From Announcement to Fully Operational in Five Months

March 16, 2026 – Five months. That is how long it took to go from announcing a plan to standing up a fully operational quantum computer in Denver, Colorado. Not a research prototype behind a locked lab door. Not a demonstration rig assembled for a conference. A commercially deployable, cloud-accessible quantum computing system assembled from components made by five different companies on two continents.

On March 16, 2026, Elevate Quantum and its partners announced that the Quantum Platform for the Advancement of Commercialization (Q-PAC) is now live and operational at Elevate Quantum’s Commercialization Lab on the Quantum Commons campus in Denver. The system represents the first Quantum Open Architecture (QOA) quantum computer deployed on U.S. soil.

The bottom line: Q-PAC’s five-month deployment timeline is the strongest evidence yet that the QOA model — assembling quantum computers from modular, multi-vendor components rather than buying monolithic proprietary stacks — is not just a theoretical argument. It is an operational reality. And the speed and cost at which it was done should concern every vertically integrated quantum computing company.

The News

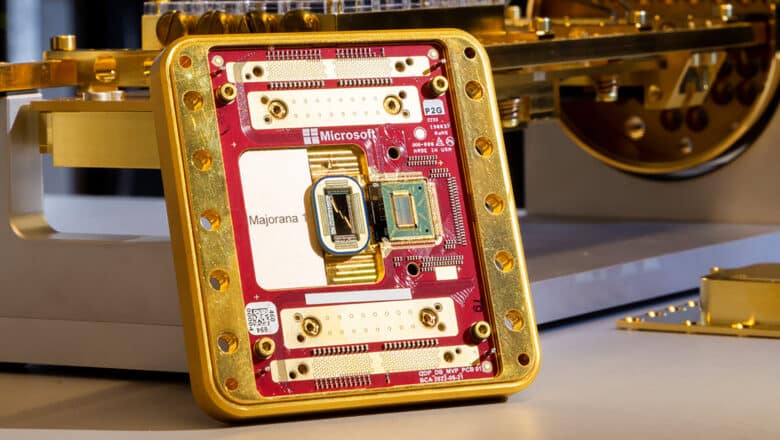

The Q-PAC system was first announced in November 2025 as a collaboration between Elevate Quantum, QuantWare (quantum processors), Qblox (control electronics), Q-CTRL (infrastructure software), and Maybell Quantum (cryogenic infrastructure). The March 2026 announcement confirms the system has advanced from concept to full operation, with a new partner (Arrow Electronics) joining the consortium to provide HPC integration capabilities.

The system is built on the Quantum Utility Block (QUB) architecture, a family of pre-validated, modular quantum computer reference designs jointly engineered by QuantWare, Qblox, and Q-CTRL. QUB was launched alongside the original Q-PAC announcement in November 2025 and comes in three configurations: Small (5 qubits), Medium (17 qubits), and Large (41 qubits).

Q-PAC launches with a 17-qubit QuantWare quantum processing unit (QPU), with an upgrade path aligned to QuantWare’s processor roadmap. The partnership says the same platform will support 100-qubit-class processors heading into 2027 without replacing infrastructure — a key claim that, if validated, demonstrates the modular upgradeability that QOA advocates have long promised.

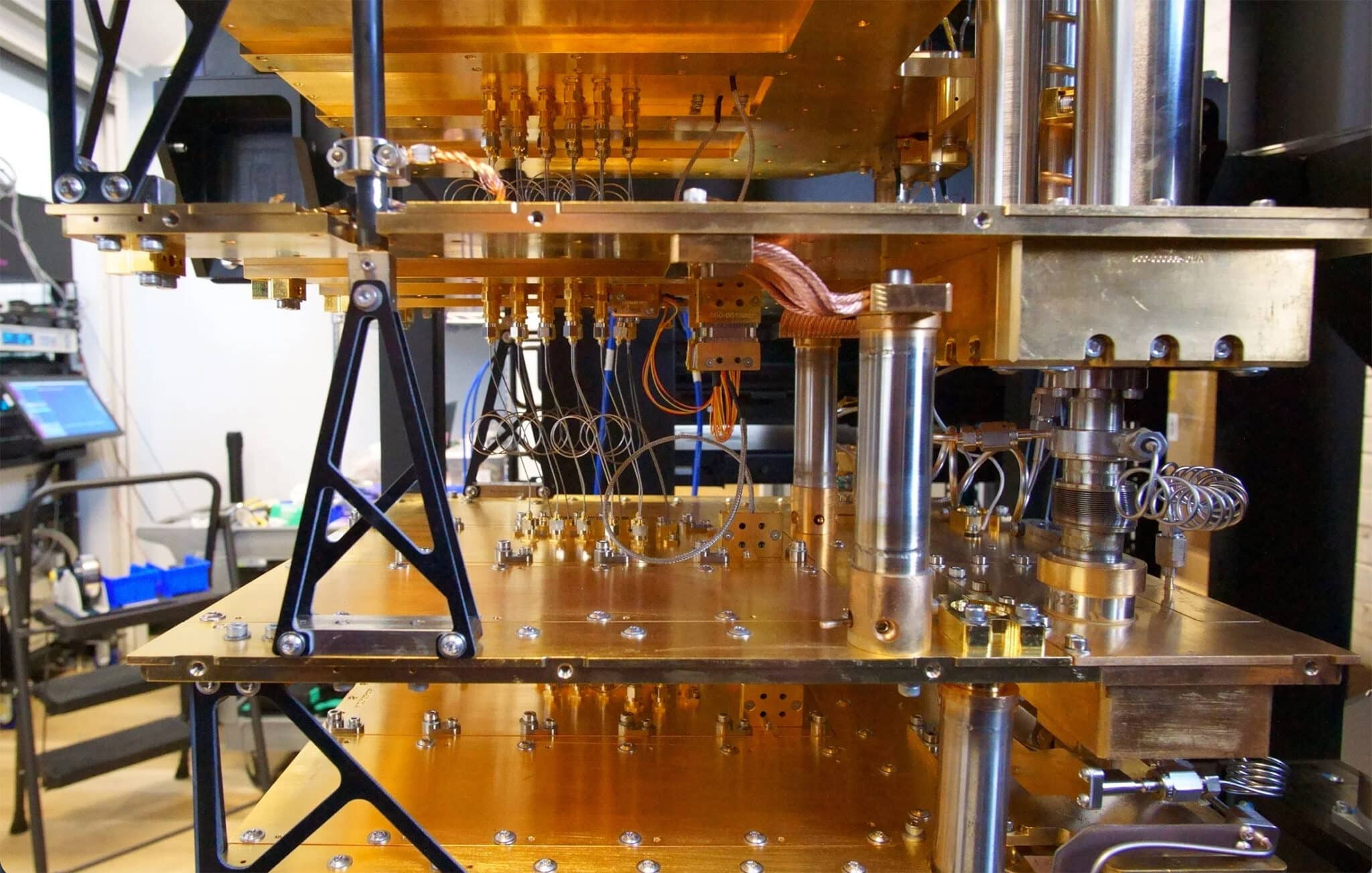

The software stack integrates Q-CTRL’s Boulder Opal Scale-Up for AI-driven autonomous calibration and control, and Fire Opal for error suppression and circuit performance management. Qblox supplies the modular control electronics. Maybell Quantum provides the cryogenic wiring and dilution refrigeration.

Looking ahead, Arrow Electronics will integrate a GPU-cluster reference server connected to the quantum hardware via NVIDIA NVQLink, enabling low-latency hybrid classical-quantum workflows without requiring FPGA programming. This positions Q-PAC as a hybrid compute platform for organizations operating within high-performance computing (HPC) environments.

Elevate Quantum is the federally designated quantum Tech Hub under the U.S. Department of Commerce, representing the nation’s largest quantum industry cluster across Colorado, New Mexico, and Wyoming.

My Analysis

Five Months Is the Number That Matters

Forget the qubit count for a moment. Seventeen qubits is not going to solve any commercially relevant problem. Everyone involved knows that. What matters here is the deployment velocity and the cost structure.

I wrote about this collaboration when it was first announced in November 2025, and at the time, the open question was whether the QOA model could deliver on its core promise: that modular, multi-vendor quantum systems could be assembled faster and cheaper than proprietary alternatives. Five months later, that question has an initial answer.

To put the timeline in context: procuring and deploying a comparable system from one of the vertically integrated vendors (IBM, Google, or IonQ’s on-premises offerings) typically involves multi-year engagement cycles, with extensive custom engineering, site preparation, and vendor-controlled installation processes. Q-PAC compressed that into less than half a year.

The cost claim is harder to verify independently. The announcement describes the deployment as happening “at a fraction of the cost compared to closed, full-stack systems,” but no specific figures were disclosed. I would welcome transparency here. The QOA thesis lives or dies on whether the economics actually pencil out at scale, and self-reported claims from partners in a consortium are exactly the kind of assertion that needs independent validation.

The QUB Architecture Passes Its First Real Test

The Quantum Utility Block is the reference architecture that made this deployment speed possible. As I covered in my deep dive on QOA and Quantum Systems Integration, the QUB model is essentially a bill of materials with pre-validated integration specifications — the quantum equivalent of a PC reference design.

Q-PAC is QUB’s first real deployment. And first deployments matter enormously in establishing reference architectures. The classical computing industry learned this with IBM’s PC in 1981: the technical specifications were less important than the proof that independent component manufacturers could build to a common design and produce working systems. Q-PAC serves a similar function for QOA. It proves that the blueprint works, which gives confidence to the next organization considering a QUB-based deployment.

The upgrade path claim is particularly worth watching. If Q-PAC can swap in a 100-qubit-class processor in 2027 without replacing the control electronics, cryogenic infrastructure, or software stack, that validates the core QOA proposition: that modularity enables non-disruptive scaling. If the upgrade turns out to require significant re-engineering of the surrounding infrastructure, it will raise legitimate questions about how “open” and “modular” the architecture really is in practice.
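One way to picture that test is as a comparison between two snapshots of the stack. The following is a minimal sketch, not anything published by the consortium: the `QuantumStack` class, its field names, and the placeholder label for the 2027 processor are all my own illustrative assumptions. It models each layer as one field and reports which layers differ after a QPU swap.

```python
from dataclasses import dataclass, fields, replace


@dataclass(frozen=True)
class QuantumStack:
    """Hypothetical model of a modular stack: one field per layer."""
    qpu: str
    control_electronics: str
    cryogenics: str
    software: str


def changed_layers(before: QuantumStack, after: QuantumStack) -> set:
    """Return the names of the layers that differ between two stacks."""
    return {
        f.name
        for f in fields(before)
        if getattr(before, f.name) != getattr(after, f.name)
    }


# Layer descriptions follow the announcement; the structure is illustrative.
q_pac_2026 = QuantumStack(
    qpu="QuantWare 17-qubit QPU",
    control_electronics="Qblox modular control electronics",
    cryogenics="Maybell Quantum dilution refrigeration",
    software="Q-CTRL Boulder Opal Scale-Up + Fire Opal",
)

# Placeholder name: no specific 100-qubit-class part has been identified.
q_pac_2027 = replace(q_pac_2026, qpu="100-qubit-class QPU (placeholder)")

print(changed_layers(q_pac_2026, q_pac_2027))  # {'qpu'}
```

If the real upgrade behaves like this sketch, only the `qpu` layer changes. If the set of changed layers also ends up including control electronics or cryogenics, that is the re-engineering scenario described above.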

Arrow Electronics and the HPC Bridge

The addition of Arrow Electronics to the consortium signals something important about where QOA is heading. Arrow is not a quantum company. It is one of the world’s largest distributors of electronic components and enterprise computing solutions, with deep relationships in the data center and HPC market.

Their role — integrating a GPU-cluster reference server via NVIDIA NVQLink — is about bridging quantum hardware into existing classical compute infrastructure. This is the quantum systems integration challenge I have been writing about: quantum computers do not operate in isolation. They need classical pre- and post-processing, data pipelines, orchestration layers, and low-latency interconnects to be useful in practice.

The NVQLink pathway is interesting because it avoids the FPGA bottleneck that has complicated many early quantum-classical integration efforts. If the GPU-cluster integration delivers on the promise of faster calibration and more efficient hybrid workflow execution, it makes Q-PAC more useful as a development platform than a standalone quantum system would be.

What This Means for the QOA Ecosystem

This Q-PAC deployment does not exist in a vacuum. The enabling technology layer beneath QOA has been maturing rapidly: QuantWare is now the highest-volume commercial QPU supplier worldwide, shipping to customers in over 20 countries. Qblox sells modular control stacks to more than 100 labs. Bluefors has installed 1,800 cryogenic systems globally. The components of an open quantum computing ecosystem are no longer hypothetical — they are shipping products with real install bases.

The pattern is becoming clear: quantum computing is disaggregating, much as classical computing did in the 1980s. Individual component companies are becoming the specialists. Systems integrators are emerging as the assemblers. Reference architectures like QUB are providing the interoperability specifications. And regional hubs like Elevate Quantum are providing the physical infrastructure and institutional support to bring it all together.

For organizations evaluating their quantum computing strategies, Q-PAC offers a useful signal. The QOA model is no longer theoretical. There is now a working, deployed, commercially accessible system in Denver that anyone can evaluate. The question has shifted from “can this work?” to “how fast does it scale?”

Caveats Worth Stating

A few important qualifications.

First, 17 qubits is a starting point, not a destination. The system’s value today is as a development, training, and integration testbed — not as a tool for solving hard problems. The real test comes when the 100-qubit-class upgrade arrives in 2027 and users attempt computationally meaningful workloads.

Second, the consortium’s self-reported speed and cost claims need independent benchmarking. The deployment velocity is impressive, but “fraction of the cost” needs a denominator. Until there are public price-performance comparisons between QUB-based deployments and vertically integrated alternatives, the economic argument for QOA remains an assertion rather than a demonstrated fact.

Third, the interoperability promise cuts both ways. As I noted in my QOA analysis, there is a strategic risk that one layer of the open stack becomes a chokepoint, creating a new form of vendor lock-in at the software or control electronics tier rather than the full-stack tier. The industry needs to watch whether genuine multi-vendor competition develops at every layer, or whether de facto standards consolidate around a single supplier at each level.

Finally, Q-PAC is currently one system in one location. The QOA thesis requires reproducibility at scale — multiple organizations deploying QUB-based systems independently, with different configurations and use cases. That is the next milestone to watch.

The Bigger Picture

The Q-PAC deployment sits at the intersection of two trends I have been tracking closely: the industrialization of quantum computing through open architecture and the growing role of regional quantum ecosystems in national technology strategy.

Colorado’s Quantum Commons campus, backed by Department of Commerce Tech Hub designation, is a deliberate bet that distributed, regionally anchored quantum infrastructure is a stronger foundation for national capability than relying exclusively on a handful of vertically integrated vendors in Silicon Valley or the Pacific Northwest. Whether that bet pays off depends on whether Q-PAC becomes a template that other hubs replicate, or remains a singular showcase.
