The SLH-DSA Exclusion: Why NSA Left Out NIST’s Conservative Fallback


Introduction

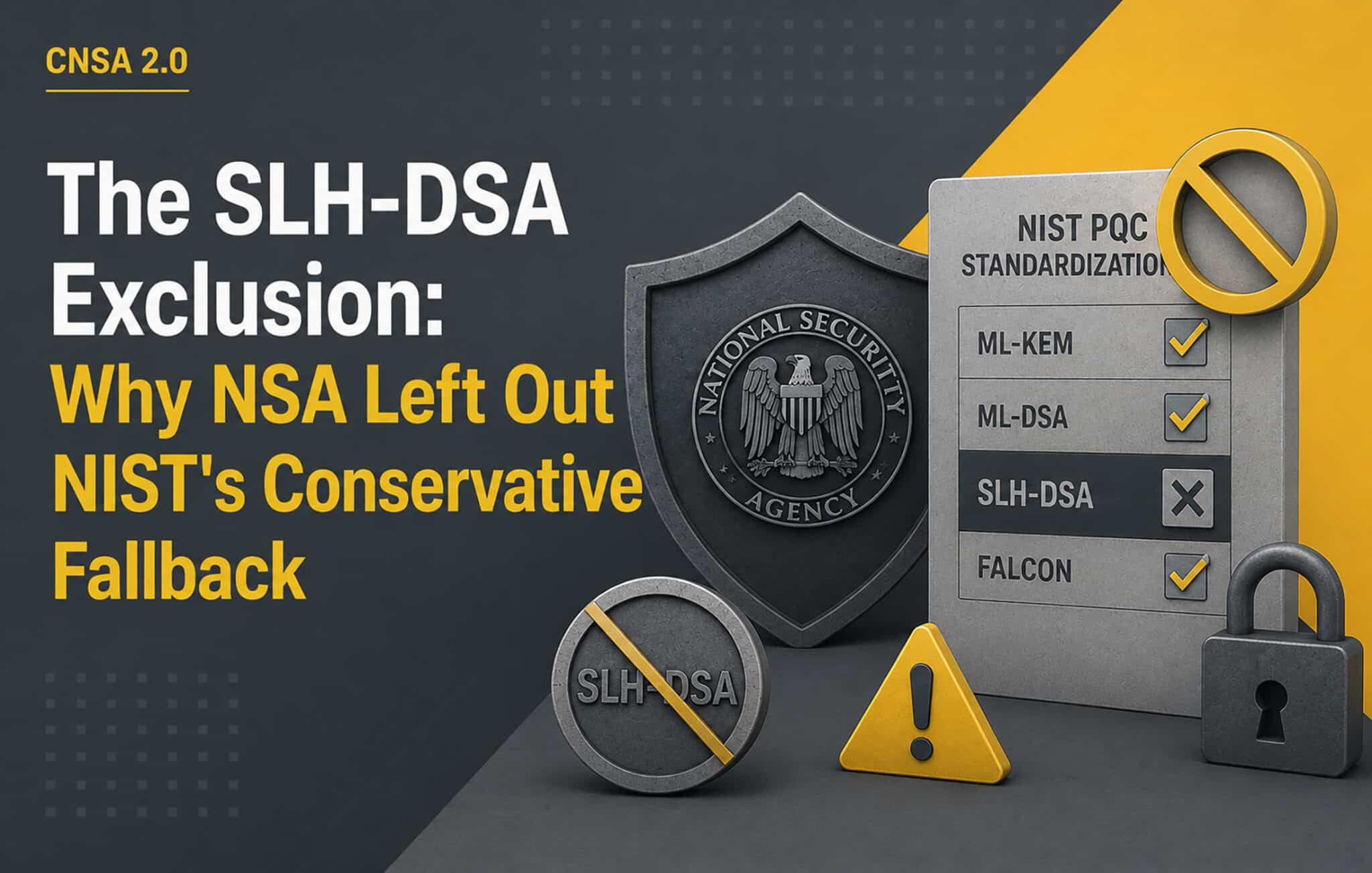

NIST standardized three post-quantum signature algorithms. NSA approved one of them for National Security Systems.

ML-DSA (FIPS 204), the lattice-based digital signature algorithm, is the sole general-purpose signature option in CNSA 2.0. FN-DSA (the lattice-based algorithm formerly called Falcon) will not be added, even after NIST finalizes it. And SLH-DSA (FIPS 205), the stateless hash-based signature that NIST standardized specifically to provide a conservative, non-lattice alternative, is excluded from all NSS use.

The exclusion of SLH-DSA is the single most revealing algorithm choice in the entire CNSA 2.0 framework. It tells you what NSA believes about lattice security, how they weight operational simplicity against algorithmic diversity, and where they draw the line between theoretical hedging and practical deployment. It also puts the United States on a different side of a consequential debate from Germany, France, and the broader European cryptographic community. This article examines the decision, the reasoning on both sides, and what it means for organizations planning their own post-quantum migration.

What SLH-DSA Is and Why NIST Standardized It

SLH-DSA (formerly SPHINCS+) is a stateless hash-based signature scheme. Its security rests only on properties of the underlying hash function (SHA-2 or SHAKE): essentially, that the function resists preimage and second-preimage attacks and behaves like a pseudorandom function. No lattice problems, no algebraic structure, no number-theoretic hardness assumptions. If the hash function is secure, SLH-DSA is secure. Period.
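To make "security rests only on the hash function" concrete, here is a minimal Python sketch of the Lamport one-time signature, the simplest hash-based scheme. It is not SLH-DSA: FIPS 205 builds WOTS+ one-time signatures, FORS few-time signatures, and a hypertree of Merkle trees on top of this idea so that one key pair can sign many messages without keeping state. The security intuition carries over, though: forging a signature below means finding a SHA-256 preimage (or a collision on the message digest).

```python
import hashlib
import secrets

# Toy Lamport one-time signature over SHA-256. Illustration only: each key pair
# may sign exactly ONE message, and this is NOT the SLH-DSA (FIPS 205) algorithm.
N_BITS = 256  # we sign the 256-bit SHA-256 digest of the message


def H(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def keygen():
    # Secret key: 256 pairs of random 32-byte values (one for bit 0, one for bit 1).
    sk = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(N_BITS)]
    # Public key: the hash of every secret value.
    pk = [(H(x0), H(x1)) for x0, x1 in sk]
    return sk, pk


def digest_bits(message: bytes):
    d = H(message)
    return [(d[i // 8] >> (7 - i % 8)) & 1 for i in range(N_BITS)]


def sign(sk, message: bytes):
    # Reveal one secret value per digest bit; the other half stays hidden.
    return [sk[i][b] for i, b in enumerate(digest_bits(message))]


def verify(pk, message: bytes, signature) -> bool:
    if len(signature) != N_BITS:
        return False
    # Each revealed value must hash to the public-key entry selected by that bit.
    return all(H(s) == pk[i][b]
               for i, (s, b) in enumerate(zip(signature, digest_bits(message))))
```

Even this toy produces 8,192-byte signatures (256 values of 32 bytes each), an early hint of why hash-based signatures trade size for their minimal assumptions.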

NIST chose to standardize SLH-DSA alongside ML-DSA precisely because it provides security diversity. ML-DSA and ML-KEM are both built on structured module lattices: ML-KEM on the Module-LWE (Learning With Errors) problem, and ML-DSA on Module-LWE together with the closely related Module-SIS (Short Integer Solution) problem. If a mathematical breakthrough were to weaken these module-lattice problems, both the key-establishment and the signature algorithms in CNSA 2.0 would be affected simultaneously. SLH-DSA exists as insurance against that scenario: an algorithm whose security comes from entirely different mathematical foundations.

The conservative case for SLH-DSA is easy to state, even if the operational trade-offs are not. Hash functions have been studied for decades. The one-wayness of SHA-256 is among the most thoroughly analyzed assumptions in all of cryptography. Lattice problems, while extensively studied since the mid-1990s, carry less cryptanalytic history. The theoretical worst-case to average-case reductions that underpin LWE’s security are strong, but for the specific structured (Module-LWE) constructions used in ML-KEM and ML-DSA, the practical security estimates rely more on empirical analysis of attack costs than on provable reductions. As one summary from the academic literature puts it, for practical lattice-based constructions “meaningful reduction-based guarantees of security are not known.”

None of this means ML-DSA is likely to be broken. The algorithm has survived years of intense scrutiny through NIST’s multi-round evaluation process, and no practical attack has emerged. But “survived scrutiny so far” and “rests on a well-understood assumption with decades of cryptanalytic history” are different levels of confidence, and NIST decided both levels should be available.

NSA’s Stated Reasoning

The CNSA 2.0 FAQ addresses SLH-DSA directly: “While SLH-DSA is hash-based, it is not part of CNSA and is not approved for any use in NSS.”

The FAQ goes further in a separate question about future algorithm additions: NSA “does not currently plan to add future NIST post-quantum standards to CNSA.” The rationale offered is interoperability. Adding more algorithms “generally makes interoperability more complex.”

Two operational arguments support the exclusion.

First, SLH-DSA signatures are large. At NIST security category 1, the “fast” variant (SLH-DSA-SHA2-128f) produces signatures of 17,088 bytes. At category 5 (SLH-DSA-SHA2-256f), signatures grow to 49,856 bytes, more than ten times the size of an ML-DSA-87 signature (4,627 bytes). In TLS handshakes, certificate chains, and bandwidth-constrained environments, this size penalty is operationally real. A hybrid TLS certificate chain carrying SLH-DSA signatures at every level would add tens of kilobytes to every connection establishment.
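The signature sizes quoted above follow directly from the SLH-DSA parameters. The Python sketch below recomputes them, assuming the parameter values from the FIPS 205 parameter-set table and the ML-DSA-87 signature size from FIPS 204. The three-signatures-per-handshake figure at the end is an illustrative assumption (two certificate signatures in a leaf-plus-intermediate chain, plus the server's CertificateVerify), not a measurement; it ignores public keys, SCTs, and OCSP data.

```python
# SLH-DSA parameter sets from FIPS 205: (n, h, d, a, k).
# Every set uses lg_w = 4, so each WOTS+ signature has len = 2n + 3 hash values.
SLH_DSA_PARAMS = {
    "SLH-DSA-SHA2-128s": (16, 63, 7, 12, 14),
    "SLH-DSA-SHA2-128f": (16, 66, 22, 6, 33),
    "SLH-DSA-SHA2-192s": (24, 63, 7, 14, 17),
    "SLH-DSA-SHA2-192f": (24, 66, 22, 8, 33),
    "SLH-DSA-SHA2-256s": (32, 64, 8, 14, 22),
    "SLH-DSA-SHA2-256f": (32, 68, 17, 9, 35),
}

ML_DSA_87_SIG_BYTES = 4_627  # from FIPS 204


def slh_dsa_sig_bytes(n, h, d, a, k):
    wots_len = 2 * n + 3
    # randomizer R (1 value)
    # + FORS: k trees, each one secret value plus an a-node authentication path
    # + hypertree: d WOTS+ signatures plus h authentication-path nodes in total
    return n * (1 + k * (a + 1) + d * wots_len + h)


def handshake_signature_bytes(sig_bytes: int, sigs_per_handshake: int = 3) -> int:
    # Assumption: leaf cert signature + intermediate cert signature + CertificateVerify.
    return sig_bytes * sigs_per_handshake


for name, params in SLH_DSA_PARAMS.items():
    size = slh_dsa_sig_bytes(*params)
    print(f"{name}: {size:,} bytes ({size / ML_DSA_87_SIG_BYTES:.1f}x ML-DSA-87)")

slh_128f = slh_dsa_sig_bytes(*SLH_DSA_PARAMS["SLH-DSA-SHA2-128f"])
print(f"Handshake signatures with SLH-DSA-SHA2-128f: {handshake_signature_bytes(slh_128f):,} bytes")
```

The formula reproduces the 17,088-byte and 49,856-byte figures, and the handshake estimate lands at roughly 51 KB of signature data, consistent with "tens of kilobytes".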

Second, NSA manages cryptographic transitions across thousands of NSS implementations. Every additional approved algorithm expands the testing matrix for interoperability, increases the certification burden for NIAP evaluation, and creates more configurations that must be verified to work correctly together. A tight suite with one general-purpose public-key algorithm per function (ML-KEM for key establishment, ML-DSA for signatures) minimizes this burden. Adding SLH-DSA as a second general-purpose signature option would increase the number of valid configurations without providing a capability that ML-DSA does not already offer, at least under current assumptions about lattice security.

These arguments are legitimate operational considerations. For an organization running a managed, centrally governed cryptographic ecosystem like NSS, minimizing algorithm count has genuine value.

The Implicit Signal

The operational arguments explain why NSA might prefer not to include SLH-DSA. They do not explain why NSA would exclude it entirely and preemptively state that no future NIST standards will be added.

That stronger position carries an implicit signal about NSA’s confidence in lattice cryptography. By declining to include a non-lattice fallback for national security applications, NSA is expressing a judgment that lattice-based constructions will remain secure for the multi-decade classification horizons that NSS data requires. This is a consequential assessment. National security information classified at the TOP SECRET level may need protection for 50 years or more. NSA is, implicitly, staking that assessment on the proposition that module-lattice problems such as Module-LWE will withstand cryptanalytic advances through the 2070s and beyond.

NSA has the advantage of internal cryptanalytic resources that are not publicly visible. The FAQ states that “NSA performed its own analysis of CNSA 2.0 algorithms and considers them appropriate for long-term use.” It is reasonable to assume that this analysis goes beyond what is available in the public literature. If NSA’s internal cryptanalysts had identified reasons to doubt lattice security, SLH-DSA would almost certainly be in the suite.

The absence of SLH-DSA, read through this lens, is a positive signal about lattice security from the organization with the deepest classified cryptanalytic capability in the Western world. That signal has value. But it is not the same as a proof, and not everyone finds it sufficient.

The BSI Counterpoint

Germany’s Federal Office for Information Security (BSI) reads the same public cryptanalytic literature and reaches a different policy conclusion. BSI recommends SLH-DSA alongside ML-DSA for digital signatures. BSI also recommends FrodoKEM (a KEM based on unstructured LWE, which is more conservative than the structured Module-LWE used by ML-KEM) alongside ML-KEM for key establishment. And BSI mandates that all lattice-based PQC algorithms be deployed in hybrid mode, combined with a classical algorithm, until confidence in their standalone security is established.
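The logic of BSI's hybrid requirement is easiest to see for key establishment: derive the session key from both a classical and a post-quantum shared secret, so an attacker has to break both components. The Python sketch below is a simplified concatenate-then-hash combiner, assuming SHA3-256 behaves like a random oracle. The label and field layout are hypothetical, not BSI's or any standard's exact construction, and the inputs are plain byte strings standing in for, say, ECDH and ML-KEM outputs.

```python
import hashlib


def combine_shared_secrets(ss_classical: bytes, ss_pq: bytes,
                           ct_classical: bytes, ct_pq: bytes,
                           label: bytes = b"example-hybrid-v1") -> bytes:
    """Derive one 32-byte session key from a classical and a post-quantum KEM.

    Hypothetical, simplified combiner: if SHA3-256 behaves like a random oracle,
    the output stays unpredictable unless an attacker learns BOTH shared secrets,
    so the hybrid holds as long as either component holds.
    """
    h = hashlib.sha3_256()
    for part in (label, ss_classical, ss_pq, ct_classical, ct_pq):
        # Length-prefix every field so different splits of the same bytes
        # cannot produce the same hash input.
        h.update(len(part).to_bytes(4, "big"))
        h.update(part)
    return h.digest()
```

For signatures, the analogous hybrid is an AND-combination: a message counts as signed only if both the classical and the post-quantum signature verify.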

The difference in philosophy is clear. NSA asks: “Are these algorithms strong enough to protect national secrets?” and concludes yes, with sufficient confidence to exclude alternatives. BSI asks: “What happens if we are wrong?” and structures its recommendations to provide fallback options in that scenario.

As I analyzed in the global PQC requirements comparison, ANSSI (France) and the ECCG (the EU’s cybersecurity certification coordination body) align with BSI on this question. The ECCG’s Agreed Cryptographic Mechanisms v2 states that LWE and MLWE-based mechanisms “shouldn’t be used in a standalone way.” ANSSI recommends SLH-DSA for signatures. The European position is that algorithmic diversity is a design requirement, not an optional extra.

Neither position is objectively wrong. They represent different risk appetites applied to the same evidence base. NSA optimizes for operational simplicity and interoperability, accepting the concentrated risk that lattice assumptions bear the full weight. BSI optimizes for resilience against unknown unknowns, accepting the operational cost of supporting more algorithms and larger signatures. The right choice depends on the threat model, the operational constraints, and the consequence of being wrong.

The Lattice Security Question

The security of lattice-based cryptography has been studied extensively since Ajtai’s foundational work in 1996. The LWE problem was introduced by Regev in 2005 together with a quantum worst-case to average-case reduction: any efficient algorithm that solves random LWE instances would yield an efficient quantum algorithm for worst-case approximate lattice problems such as GapSVP and SIVP. In other words, average-case LWE is at least as hard as those worst-case problems are for quantum computers. This theoretical foundation is strong.
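Concretely, an LWE instance is a public random matrix A and a vector b = As + e (mod q), where s is secret and e is a small error. Without e, Gaussian elimination recovers s immediately; with e, recovering s is believed hard at real parameter sizes. The Python sketch below is a toy Regev-style encryption of a single bit built on plain (unstructured) LWE. It is not ML-KEM, and its parameters (n = 16, m = 32, with q = 3329 borrowed from ML-KEM's modulus) are chosen only to keep the arithmetic readable; they are far too small to be secure.

```python
import random

# Toy Regev-style public-key encryption of one bit from plain LWE. INSECURE parameters.
N, M, Q = 16, 32, 3329  # secret dimension, number of LWE samples, modulus


def keygen(rng):
    s = [rng.randrange(Q) for _ in range(N)]                      # secret vector
    A = [[rng.randrange(Q) for _ in range(N)] for _ in range(M)]  # public random matrix
    e = [rng.choice((-1, 0, 1)) for _ in range(M)]                # small error terms
    b = [(sum(a_ij * s_j for a_ij, s_j in zip(row, s)) + e_i) % Q
         for row, e_i in zip(A, e)]                               # b = A s + e (mod q)
    return s, (A, b)


def encrypt(pk, bit, rng):
    A, b = pk
    r = [rng.randrange(2) for _ in range(M)]                      # random subset of samples
    u = [sum(r_i * A[i][j] for i, r_i in enumerate(r)) % Q for j in range(N)]
    v = (sum(r_i * b_i for r_i, b_i in zip(r, b)) + bit * (Q // 2)) % Q
    return u, v


def decrypt(s, ct):
    u, v = ct
    # v - <u, s> = <r, e> + bit * floor(q/2), and |<r, e>| <= M = 32, far below q/4,
    # so the result is near 0 for bit 0 and near q/2 for bit 1.
    d = (v - sum(u_j * s_j for u_j, s_j in zip(u, s))) % Q
    return 1 if Q // 4 < d < 3 * Q // 4 else 0
```

Decryption always succeeds here because the accumulated error can never exceed 32, while the decision threshold sits at q/4 = 832.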

The practical picture is more nuanced. ML-KEM and ML-DSA use Module-LWE, a structured variant that introduces algebraic relationships between the lattice components to improve performance. These algebraic relationships are also, in principle, potential attack surfaces. The concrete security estimates for Module-LWE parameter sets rely on evaluating the cost of known lattice reduction algorithms (primarily BKZ and its variants) applied to the specific dimensions and noise distributions used. If a new algorithmic technique were discovered that exploited the algebraic structure of Module-LWE more efficiently than generic lattice reduction, the security estimates could shift.
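The "algebraic relationships" can be made concrete. In the polynomial rings used by ML-KEM and ML-DSA (degree-256 polynomials modulo X^256 + 1), multiplying by a polynomial a(X) is the same as multiplying by an n × n negacyclic matrix generated from just the n coefficients of a. The Python sketch below checks that equivalence for a toy degree n = 8 with q = 3329; the small n is an illustrative choice, and real schemes additionally arrange such polynomials into small module matrices.

```python
import random

# Toy illustration of lattice "structure": multiplication in Z_q[X]/(X^n + 1)
# equals multiplication by a negacyclic matrix built from n coefficients.
N, Q = 8, 3329


def poly_mul(a, b):
    # Schoolbook multiplication reduced modulo X^n + 1 (X^n wraps around to -1).
    c = [0] * N
    for i in range(N):
        for j in range(N):
            k = i + j
            if k < N:
                c[k] += a[i] * b[j]
            else:
                c[k - N] -= a[i] * b[j]
    return [x % Q for x in c]


def negacyclic_matrix(a):
    # Column j holds the coefficients of X^j * a(X) mod (X^n + 1).
    mat = [[0] * N for _ in range(N)]
    for j in range(N):
        for i in range(N):
            k = i + j
            if k < N:
                mat[k][j] = a[i] % Q
            else:
                mat[k - N][j] = (-a[i]) % Q
    return mat


def mat_vec(mat, v):
    return [sum(m * x for m, x in zip(row, v)) % Q for row in mat]
```

An unstructured LWE matrix of the same shape needs n² = 64 independent entries; here 8 numbers determine all 64. That compression is where the performance of Module-LWE comes from, and it is also the structure a hypothetical structure-exploiting attack would target. FrodoKEM, by contrast, uses unstructured matrices and gives up this compression.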

No such technique has been demonstrated. The NIST evaluation process subjected the lattice candidates to years of public cryptanalysis, and the parameter sets that survived reflect the community’s current understanding of attack costs. Recent research has also provided evidence that LWE likely remains hard even against novel quantum attack strategies, including approaches based on quantum holographic techniques.

But cryptanalysis is not a closed problem. Novel attack vectors continue to emerge. The SALSA project from Meta AI demonstrated that machine learning techniques (specifically transformers) could be combined with statistical cryptanalysis to attack small-to-mid-size LWE instances with sparse binary secrets. While this specific attack does not scale to threaten production parameter sets, it illustrates that the attack surface for lattice problems is broader than the classical lattice reduction framework that dominates current security estimates.

Side-channel attacks on PQC implementations, lattice-based and hash-based alike, are another active research area. Work presented at NIST’s Fifth PQC Standardization Conference showed that a naive SLH-DSA implementation leaks its master key through side channels, but also that hardware-accelerated implementations with protection measures can be made resistant. Similar side-channel concerns apply to ML-DSA and ML-KEM. These are implementation vulnerabilities rather than algorithmic weaknesses, but they illustrate that the overall security of a deployed PQC system depends on far more than the theoretical hardness of the underlying mathematical problem.

What This Means for Organizations

If you are building exclusively for CNSA 2.0 compliance and your products will only ever operate within U.S. National Security Systems, the guidance is clear: use ML-DSA-87 for signatures, do not implement SLH-DSA, and move on. NSA has made its assessment, and deviating from it within the NSS context creates compliance risk without regulatory benefit.

For everyone else, the calculus is different.

Organizations subject to European requirements (BSI, ANSSI, or the ECCG framework) should support SLH-DSA. It is recommended, and in some configurations expected, by the authorities that govern those environments. As I detail in the financial services article in this series, financial institutions voluntarily adopting CNSA 2.0 as a benchmark should consider adding SLH-DSA even though CNSA 2.0 does not require it, because the European regulatory direction clearly favors it and because it provides insurance that a CNSA 2.0-only implementation lacks.

Organizations designing for crypto-agility should support SLH-DSA regardless of their current compliance obligations. The entire point of crypto-agility is the ability to respond to changes in the cryptographic environment without emergency re-engineering. If lattice assumptions weakened tomorrow, an organization that had SLH-DSA support ready could activate it as a configuration change. An organization that had built exclusively around ML-DSA would face a retrofit under pressure, with all the risk and cost that entails.

The practical cost of supporting SLH-DSA is real but bounded. The algorithm is standardized (FIPS 205), reference implementations exist, and major cryptographic libraries include it. The primary cost is engineering effort to integrate and test a second signature algorithm, plus the bandwidth overhead of larger signatures in contexts where SLH-DSA is actually used. For most organizations, the sensible approach is to implement SLH-DSA support in the cryptographic layer but default to ML-DSA for production use, keeping SLH-DSA available as a fallback that can be activated if needed.
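What "activate it as a configuration change" can look like in the cryptographic layer: every signature carries an algorithm identifier, verification dispatches on that identifier, and the signing default is a setting rather than a hard-coded call. The Python sketch below is hypothetical (the class names and the choice of SLH-DSA-SHA2-256s as the fallback are illustrative assumptions), and its backends are placeholders that a real system would wire to a vetted library's ML-DSA and SLH-DSA implementations.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple

# Hypothetical crypto-agility layer. Backends bind their own keys; the registry
# only decides WHICH algorithm signs and routes verification by algorithm ID.


@dataclass(frozen=True)
class SignatureBackend:
    sign: Callable[[bytes], bytes]          # message -> signature
    verify: Callable[[bytes, bytes], bool]  # (message, signature) -> valid?


class SignatureRegistry:
    def __init__(self, default_alg: str):
        self._backends: Dict[str, SignatureBackend] = {}
        self.default_alg = default_alg

    def register(self, alg_id: str, backend: SignatureBackend) -> None:
        self._backends[alg_id] = backend

    def set_default(self, alg_id: str) -> None:
        # The "configuration change": only algorithms already integrated can be selected.
        if alg_id not in self._backends:
            raise KeyError(f"no backend registered for {alg_id}")
        self.default_alg = alg_id

    def sign(self, message: bytes) -> Tuple[str, bytes]:
        # Tag every signature with its algorithm so verifiers never have to guess.
        return self.default_alg, self._backends[self.default_alg].sign(message)

    def verify(self, message: bytes, signed: Tuple[str, bytes]) -> bool:
        alg_id, sig = signed
        backend = self._backends.get(alg_id)
        # Unknown or removed algorithm: reject rather than fall back silently.
        return backend is not None and backend.verify(message, sig)
```

Under this design, existing ML-DSA signatures keep verifying after the default switches to SLH-DSA, and retiring an algorithm is a matter of unregistering its backend rather than re-engineering callers.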

The SLH-DSA public key is actually much smaller than ML-DSA’s: 64 bytes versus 2,592 bytes at security category 5 (SLH-DSA-*-256 versus ML-DSA-87), and 32 bytes versus 1,312 bytes at the lowest parameter levels. Systems that cache or store many public keys may therefore find SLH-DSA advantageous in that dimension even though its signatures are larger. For firmware verification (where a single signature is checked infrequently, and the verifier stores the public key for the device’s lifetime), SLH-DSA’s profile may be a better fit than its TLS performance would suggest. Recent hardware acceleration research has shown that SLH-DSA’s “small” parameter sets offer verification performance competitive with ML-DSA on root-of-trust hardware targets.
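A back-of-envelope Python sketch of that trade-off, splitting each algorithm's cost into what a verifier stores permanently (public keys) and what travels with each signed artifact (the signature). The sizes are the category-5 values from FIPS 204 and FIPS 205; the 1,000-key cache in the usage example is an illustrative assumption.

```python
# Category-5 sizes in bytes, from FIPS 204 (ML-DSA) and FIPS 205 (SLH-DSA).
SIZES = {
    "ML-DSA-87":         {"public_key": 2_592, "signature": 4_627},
    "SLH-DSA-SHA2-256s": {"public_key": 64,    "signature": 29_792},
    "SLH-DSA-SHA2-256f": {"public_key": 64,    "signature": 49_856},
}


def key_store_bytes(alg: str, num_keys: int) -> int:
    """Bytes needed to hold num_keys trusted public keys (cache, ROM, or fuses)."""
    return SIZES[alg]["public_key"] * num_keys


def firmware_overhead(alg: str) -> dict:
    """What the device keeps for its lifetime vs. what ships with every image."""
    s = SIZES[alg]
    return {"stored_on_device": s["public_key"], "per_image": s["signature"]}


for alg in SIZES:
    print(f"{alg}: 1,000 keys = {key_store_bytes(alg, 1_000):,} bytes; "
          f"firmware = {firmware_overhead(alg)}")
```

With 1,000 cached keys, ML-DSA-87 needs about 2.6 MB of key storage against 64 KB for SLH-DSA, while each SLH-DSA-signed firmware image carries a much larger signature. The “small” (s) variant is the natural firmware choice because it has the smaller signature and faster verification, and its slow signing happens only at release time.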

The Broader Pattern

The SLH-DSA exclusion is one instance of a broader pattern in CNSA 2.0: NSA consistently chose the narrower, simpler option at every decision point. Only the highest parameter levels. Only single-tree hash-based schemes. No HashML-DSA. No FN-DSA. No SHA-3 as a general-purpose hash. The suite is engineered for a specific kind of operational environment where interoperability constraints dominate, where central governance can enforce uniformity, and where the organization has enough internal cryptanalytic capability to make its own assessment of algorithm security rather than hedging against uncertainty.

Most organizations do not operate in that environment. They face multiple jurisdictions with conflicting requirements, as I map in the global comparison. They lack NSA’s internal cryptanalytic resources and must rely on the public literature plus the diversity of expert opinion to calibrate their risk. They cannot centrally enforce algorithm choices across their entire ecosystem and must design for environments where different components may support different algorithms.

For these organizations, CNSA 2.0’s algorithm choices are a useful starting point, not a complete answer. ML-KEM-1024 and ML-DSA-87 should be the primary algorithms. AES-256 and SHA-384/512 remain unchanged. But the SLH-DSA exclusion is one CNSA 2.0 choice that most organizations should not follow. The insurance value of a non-lattice signature option, available and tested in the cryptographic layer even if not used in daily operations, is worth the engineering investment. It is the definition of a no-regret decision: if lattice assumptions hold, the cost is minimal; if they weaken, the value is immense.

The PQC Migration Framework builds crypto-agility into the migration methodology from the outset, and its algorithm selection phase explicitly addresses the question of which backup algorithms to support alongside the primary suite. Whether your primary compliance driver is CNSA 2.0, BSI TR-02102, or your own risk assessment, the framework provides a structured approach to making these choices and implementing them sustainably. And for the organizational strategy question, including how to explain the SLH-DSA decision to a board that just heard “we’re using the same algorithms as the NSA,” Quantum Ready covers the governance and communication dimensions.

NSA made its bet. BSI made a different one. Your organization needs to make its own, with clear eyes about what each choice gains and what it gives up.

Quantum Upside & Quantum Risk - Handled

My company - Applied Quantum - helps governments, enterprises, and investors prepare for both the upside and the risk of quantum technologies. We deliver concise board and investor briefings; demystify quantum computing, sensing, and communications; craft national and corporate strategies to capture advantage; and turn plans into delivery. We help you mitigate the quantum risk by executing crypto‑inventory, crypto‑agility implementation, PQC migration, and broader defenses against the quantum threat. We run vendor due diligence, proof‑of‑value pilots, standards and policy alignment, workforce training, and procurement support, then oversee implementation across your organization. Contact me if you want help.