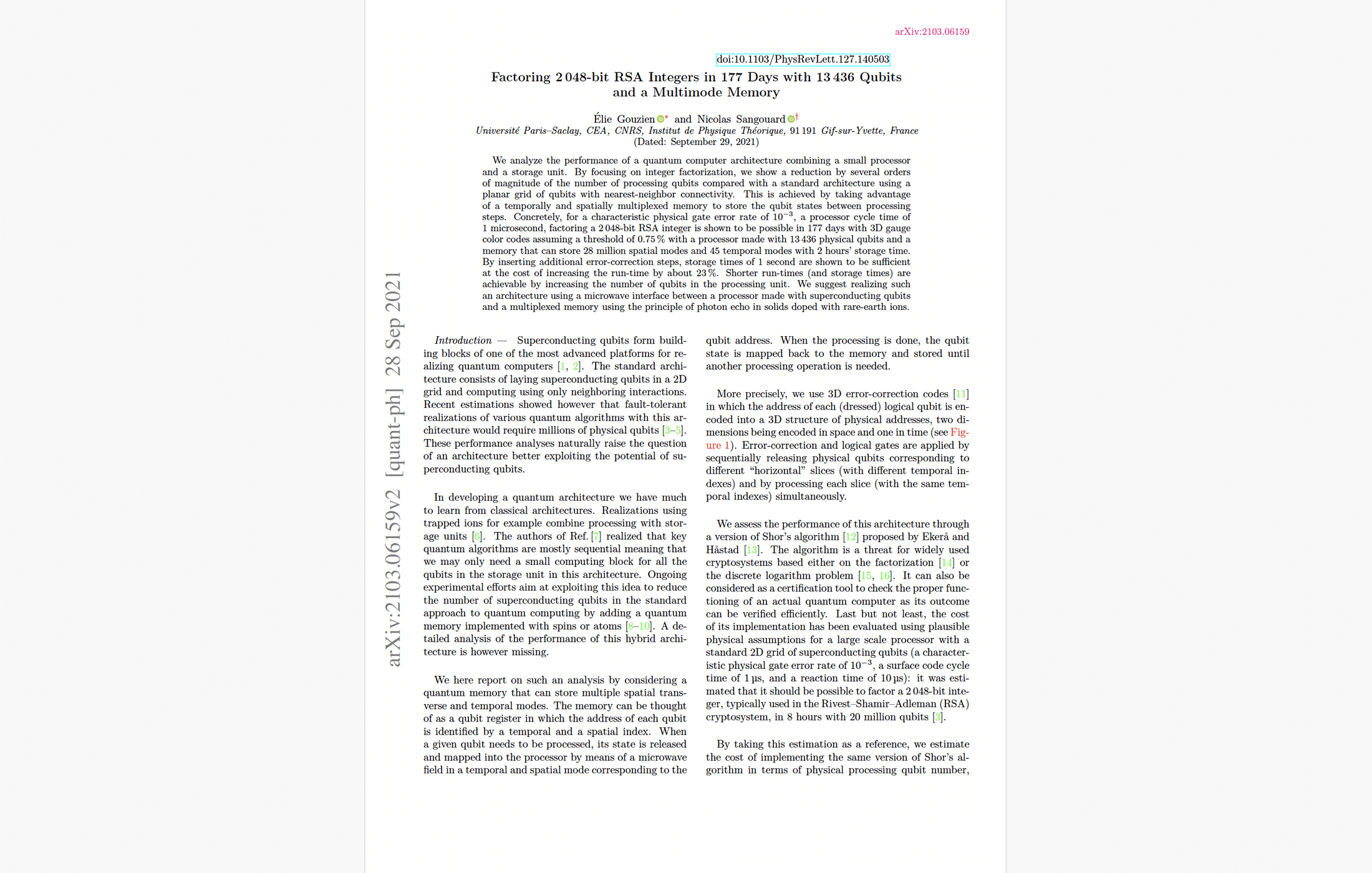

RSA-2048 Cracked in 177 Days With ~13K Processing Qubits? New Preprint Trades Qubits for Quantum Memory.


1 Oct 2021 – A new preprint by Elie Gouzien and Nicolas Sangouard proposes an architectural trade: replace the “millions of qubits in one giant chip” model with a small quantum processor plus a very large multimode quantum memory. Under optimistic – but clearly stated – fault-tolerance assumptions, the authors estimate that factoring an RSA-2048 integer could be done in ~177 days with ~13,436 physical qubits in the processor, provided the system also includes a massive quantum memory storing ~430 million qubit-modes (with a peak slice width of ~27.8 million spatial modes, spread over 45 temporal modes) and supports error correction based on 3D gauge color codes.

Conceptually, this does not “make RSA fall next year.” It does, however, highlight a potentially important direction for fault-tolerant quantum engineering: shift complexity from individually controlled processing qubits to a memory-centric architecture, at the cost of runtime and extremely ambitious memory requirements.

What the paper claims and how it gets there

The paper analyzes a hybrid architecture in which quantum information mostly resides in a storage unit (a multimode quantum memory) and is streamed through a smaller 2D processor only when gates or error-correction steps are needed. The memory acts like a “qubit register” whose addresses are indexed by both space and time (spatial and temporal modes).

For factoring, the authors adopt the Ekerå–Håstad variant of Shor’s approach – recasting factoring into a short discrete logarithm computation – so that the dominant cost remains modular exponentiation, but with reduced register requirements relative to textbook Shor. The circuit-level arithmetic is built from (i) windowed arithmetic (lookup-based batching of controls) and (ii) coset representations that approximate modular addition with cheaper adders.
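As a rough classical analogy for the windowing idea (not the quantum circuit from the paper), the sketch below performs fixed-window modular exponentiation: the exponent is consumed `window` bits at a time, and each chunk selects a precomputed table entry instead of triggering one controlled multiplication per bit. The function names and the test values are illustrative assumptions; only the exponent length of ~3029 bits and the window size of 3 come from the figures quoted later in this article.

```python
def windowed_modexp(base: int, exponent: int, modulus: int, window: int = 3) -> int:
    """Classical fixed-window modular exponentiation (analogy only)."""
    if window < 1 or exponent < 0 or modulus < 1:
        raise ValueError("need window >= 1, exponent >= 0, modulus >= 1")

    # Lookup table: table[k] = base**k mod modulus for every window value k.
    table = [1 % modulus] * (1 << window)
    for k in range(1, 1 << window):
        table[k] = (table[k - 1] * base) % modulus

    n_windows = window_count(max(exponent.bit_length(), 1), window)
    mask = (1 << window) - 1
    result = 1 % modulus
    # Process windows from most significant to least significant.
    for i in reversed(range(n_windows)):
        for _ in range(window):
            result = (result * result) % modulus
        chunk = (exponent >> (i * window)) & mask
        # One table multiplication per window, rather than one per bit.
        result = (result * table[chunk]) % modulus
    return result


def window_count(n_bits: int, window: int) -> int:
    """Number of windows needed to cover n_bits (ceiling division)."""
    return -(-n_bits // window)


if __name__ == "__main__":
    print(windowed_modexp(7, 12345, 1_000_003) == pow(7, 12345, 1_000_003))
    print(window_count(3029, 3))  # windows for a 3029-bit exponent
```

In the quantum setting the table lookup is itself a circuit with a cost that grows with the window size, which is why the window sizes are optimization parameters rather than "as large as possible".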

Fault tolerance is modeled using 3D gauge color codes (a subsystem-code family) with code switching / gauge fixing to realize a universal gate set without relying on magic-state distillation in the standard surface-code style. In the proposal, the “third dimension” of the 3D code is effectively supplied by time-slicing via the memory, allowing a 2D processor to manipulate 3D code slices sequentially.

Under a representative physical error rate p = 10⁻³, a processor cycle time t₍c₎ = 1 μs, and an assumed gauge-color-code threshold of pₜₕ ≈ 0.75%, their optimization over arithmetic window sizes and code distance yields, for RSA-2048: d = 47, processor size 13,436 physical qubits, and expected runtime ~177 days. Their memory requirements at this optimum include ~8,284 logical qubits worth of encoded data, totaling ~430,229,540 stored modes, with peak “slice width” corresponding to ~27,825,956 spatial modes and 45 temporal modes.

What is actually new compared to prior RSA-2048 benchmarks

I previously wrote about the Craig Gidney and Martin Ekerå estimate: RSA-2048 in ~8 hours using ~20 million noisy qubits, assuming a planar superconducting architecture, surface-code fault tolerance, 1 μs cycle time, 10 μs classical reaction time, and 10⁻³ physical error rates.

Gouzien–Sangouard’s novelty is not a new factoring algorithmic breakthrough on the level of changing asymptotics; instead it is an architectural reframing:

- They target “processing-qubit count” rather than total stored qubits. The processor falls from ~20,000,000 physical qubits (Gidney–Ekerå surface-code layout) to ~13,436 physical qubits – over three orders of magnitude reduction – by making computation highly sequential and by offloading almost all qubits into memory between operations.

- They aim to eliminate the surface-code magic-state factory bottleneck by using 3D gauge color codes with transversal gates and gauge fixing.

- They quantify the memory/processor trade end-to-end for a Shor/Ekerå–Håstad-style construction, rather than treating memory as a hand-wavy add-on.

Importantly, the authors themselves provide an internal control comparison: if one keeps a surface-code-like approach but introduces a memory to offload idle qubits, they estimate RSA-2048 in ~68 days with a processor of ~184k qubits (most for a magic-state factory) plus a memory storing ~5 million modes. Their fully “memory + 3D gauge color code” design is then the step that collapses processor size further to ~13k.
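To put the three designs side by side, here is a small sketch that tabulates the figures quoted above and derives two comparison numbers: the processor-size ratio and a crude "processor qubit-days" product. The qubit-days metric is my own illustrative assumption, not a quantity from either paper, and it deliberately ignores the memory, which is where the new design pays its cost.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Estimate:
    name: str
    processor_qubits: float  # physical qubits in the processor
    runtime_days: float
    memory_modes: float      # stored modes in a separate memory (0 if none)

    @property
    def processor_qubit_days(self) -> float:
        # Crude space-time product for the processor only (illustrative metric).
        return self.processor_qubits * self.runtime_days


ESTIMATES = [
    Estimate("Gidney-Ekerå, surface code", 20e6, 8 / 24, 0),
    Estimate("Surface code + memory", 184e3, 68, 5e6),
    Estimate("3D gauge color code + memory", 13_436, 177, 430_229_540),
]

baseline = ESTIMATES[0]
for est in ESTIMATES:
    ratio = baseline.processor_qubits / est.processor_qubits
    print(f"{est.name:32s} processor x{ratio:8.1f} smaller, "
          f"{est.processor_qubit_days:.3g} qubit-days, "
          f"{est.memory_modes:.3g} memory modes")
```

On these inputs the processor shrinks by a factor of roughly 1,500 while the processor qubit-days product falls only by a factor of about three, which is another way of saying that most of the saving is bought with runtime and memory.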

This “code switching vs distillation” theme was being actively debated. A notable counterpoint is the Michael E. Beverland, Aleksander Kubica, and Krysta M. Svore analysis, which finds that code switching to a 3D color code for T gates may not yield major overhead savings in a detailed circuit-noise model and can have a substantially lower threshold for the switching procedure in their setting. This does not directly refute Gouzien–Sangouard (different architecture, different code choices), but it underlines how sensitive end-to-end “no distillation” stories are to realistic circuit-level noise and decoding assumptions.

Technical assessment of the resource estimates

The paper’s headline number, 13,436 qubits, is best read as the count of physical qubits in the processor core needed to stream slices of encoded data and perform gates plus syndrome extraction for a 3D gauge color code at distance d = 47. It is not the total system size.

Qubits and memory modes

For RSA-2048, the memory must store the encoded data for thousands of logical qubits, totaling roughly 430 million stored qubit-modes. The peak slice width corresponds to ~27.8 million “spatial modes” and the code requires 45 temporal slices (consistent with their slicing of a 3D code into a sequence of 2D cross-sections).
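The exact figures quoted earlier in this article can be cross-checked with a few lines of arithmetic. The sketch below assumes the standard qubit count for the tetrahedral 3D color code family, (d³ + d)/2 physical qubits at odd distance d (15 qubits at d = 3, 65 at d = 5). That the paper uses exactly this count is my inference from the numbers matching, and the per-logical-qubit slice size and the factor of four are observations from the division, not statements taken from the paper.

```python
def color_code_qubits(d: int) -> int:
    """Physical qubits in a tetrahedral 3D color code of odd distance d."""
    if d < 3 or d % 2 == 0:
        raise ValueError("distance must be odd and >= 3")
    return (d**3 + d) // 2


# Figures quoted in the article for RSA-2048.
D = 47
LOGICAL_QUBITS = 8_284
TOTAL_MODES = 430_229_540
SPATIAL_MODES = 27_825_956
TEMPORAL_MODES = 45
PROCESSOR_QUBITS = 13_436

per_logical = color_code_qubits(D)                 # qubits per logical qubit
peak_slice = SPATIAL_MODES // LOGICAL_QUBITS       # widest 2D slice per logical qubit
avg_slice = per_logical / TEMPORAL_MODES           # mean slice width

print("qubits per logical qubit:", per_logical)
print("total matches:", LOGICAL_QUBITS * per_logical == TOTAL_MODES)
print("peak slice per logical qubit:", peak_slice)
print("processor / peak slice:", PROCESSOR_QUBITS / peak_slice)
print("temporal modes equal d - 2:", TEMPORAL_MODES == D - 2)
print(f"average slice width: {avg_slice:.0f}")
```

One consequence worth noting: the total stored modes are not the product of spatial and temporal modes, because the slices of a tetrahedral code vary in width. The spatial-mode figure reflects the widest slice, while the total is the sum over all slices.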

Practically, this sets a daunting target for any proposed microwave/solid-state memory technology – not only in coherence time, but in multimode capacity, routing, and addressability.

Gate counts and depth sanity check

Using the paper’s leading-order arithmetic cost expressions (based on windowed exponentiation/multiplication and lookup-add-unlookup structure), their optimized parameters for RSA-2048 (n = 2048, nₑ ≈ 3029, m = 30, wₑ = 3, wₘ = 3) imply order-of-magnitude logical work on the scale of:

- ~1.2 × 10¹¹ two-qubit gates (CNOT-class), and

- ~5.8 × 10⁹ Toffoli-equivalent operations (via AND compute/uncompute accounting),

before translating into fault-tolerant protected gates. These back-of-the-envelope numbers align with the paper’s framing that the computation is dominated by modular exponentiation and its heavy lookup-driven structure.

On timing: with t₍c₎ = 1 μs and their gate-time approximation of ~2(d−2)t₍c₎ per logical gate, distance d = 47 implies ~90 μs per logical gate. Multiplying through, a 177-day runtime corresponds to ~10¹¹–10¹² logical-gate-time quanta, consistent with the gate-count scale above.
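The timing sanity check above can be reproduced in a few lines. This is a back-of-the-envelope sketch: it uses the cycle time, distance, runtime, and gate counts quoted in this article, and it additionally assumes (my simplification, not the paper's model) that every logical gate occupies exactly one gate-time slot and that gates run strictly one after another.

```python
SECONDS_PER_DAY = 86_400

T_CYCLE_S = 1e-6        # processor cycle time, 1 microsecond
DISTANCE = 47
RUNTIME_DAYS = 177
CNOT_COUNT = 1.2e11     # two-qubit gates, order of magnitude
TOFFOLI_COUNT = 5.8e9   # Toffoli-equivalent operations, order of magnitude


def logical_gate_time(d: int, t_cycle: float) -> float:
    """Gate-time approximation 2 * (d - 2) * t_cycle, in seconds."""
    return 2 * (d - 2) * t_cycle


gate_time = logical_gate_time(DISTANCE, T_CYCLE_S)
slots = RUNTIME_DAYS * SECONDS_PER_DAY / gate_time

# Naive serial estimate: one slot per logical gate, no parallelism, no overheads.
serial_days = (CNOT_COUNT + TOFFOLI_COUNT) * gate_time / SECONDS_PER_DAY

print(f"logical gate time: {gate_time * 1e6:.0f} us")
print(f"gate-time slots in {RUNTIME_DAYS} days: {slots:.2e}")
print(f"naive serial runtime: {serial_days:.0f} days")
```

The naive serial figure lands in the same range as the paper's 177 days, which is all a check at this level of precision can show.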

Error correction assumptions and thresholds

A central uncertainty is the assumed fault-tolerance threshold pₜₕ ≈ 0.75% for their gauge-color-code treatment. The authors explicitly acknowledge that the circuit-level threshold is not settled and adopt 0.75% as a working hypothesis, providing sensitivity plots versus p/pₜₕ.
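To get a feel for why the threshold assumption matters so much, the sketch below uses a generic textbook heuristic for logical error suppression, p_L ≈ A · (p/pₜₕ)^((d+1)/2). This is an assumption for illustration only: it is not the paper's error model, and the prefactor A = 0.1 is a placeholder. It asks what odd distance would be needed to match the suppression obtained at d = 47 with pₜₕ = 0.75% if the true threshold were lower.

```python
def logical_error_heuristic(p: float, p_th: float, d: int, prefactor: float = 0.1) -> float:
    """Generic heuristic p_L ~ A * (p / p_th) ** ((d + 1) / 2); illustrative only."""
    return prefactor * (p / p_th) ** ((d + 1) / 2)


def min_distance(p: float, p_th: float, target: float, prefactor: float = 0.1) -> int:
    """Smallest odd distance whose heuristic logical error rate is <= target."""
    if not 0 < p < p_th:
        raise ValueError("heuristic only applies below threshold (0 < p < p_th)")
    d = 3
    while logical_error_heuristic(p, p_th, d, prefactor) > target:
        d += 2
    return d


P_PHYS = 1e-3
baseline = logical_error_heuristic(P_PHYS, 0.0075, 47)

for p_th in (0.0100, 0.0075, 0.0050, 0.0025):
    d_needed = min_distance(P_PHYS, p_th, baseline)
    print(f"p_th = {p_th:.2%}: distance needed = {d_needed}")
```

Because the qubit count of a 3D code grows roughly with the cube of the distance, even a modest increase in required distance translates into a much larger memory, which is why the sensitivity plots versus p/pₜₕ deserve as much attention as the headline numbers.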

The broader gauge-color-code literature supports that thresholds can be “surface-code-like” under some models and decoders, and also that 3D gauge color codes have special properties such as single-shot error correction (in principle, one round of local measurements suffices). But the devil is in the circuit-level details: measurement scheduling, correlated faults, leakage, and decoder performance at huge scale.

Connectivity and classical control

The scheme assumes that the memory can map each stored “address” to three processor locations (control, target, and an error-correction/1-qubit-gate role), effectively enabling all-to-all logical connectivity while keeping the processor small. This is an extremely strong hardware assumption: the advantage hinges on fast, low-error, high-fanout routing between memory modes and the processor, plus a classical control plane that can keep up with syndrome extraction and feedforward (even if corrections are delayed).

Memory feasibility relative to 2021 demonstrations

The paper points to a plausible hardware direction: microwave photon-echo-style memories in rare-earth doped solids coupled to superconducting resonators. Experimental work by 2020 had demonstrated multimode storage of microwave fields with ~100 ms storage in spin ensembles (a meaningful step for modular architectures), but this remains vastly short of the paper’s “hundreds of millions of modes” ambition.

Practical impact on RSA-2048 and CRQC timelines

Interpreting this result for real-world RSA-2048 risk requires holding two points in mind at once:

First, it is a genuine reduction in processor qubits under a plausible error rate (10⁻³) and aggressive architectural assumptions. If the hardest bottleneck were “we cannot individually control tens of millions of qubits on a chip,” then a memory-centric design is a serious attempt to route around that.

Second, it is not a near-term cryptanalytic threat because the architecture substitutes one bottleneck for another: the memory is not a footnote; it is the dominant physical scale (hundreds of millions of stored qubit-modes), and the runtime (months) is long unless the processor is scaled up substantially.

For an attacker, a 177‑day single-instance runtime could still be meaningful for high-value targets, but only if the underlying fault-tolerant machine exists. Conversely, the earlier “8 hours with 20 million qubits” benchmark remains a more direct model for a future high-throughput cryptanalytic quantum computer based on surface-code factories, because it already assumes heavy parallelism and mature error correction.

A reasonable, conservative position is that cryptanalytically relevant quantum computers are still at least a decade away, with the critical milestones being: (i) sustained logical qubit operation below threshold, (ii) scalable decoding/control infrastructure, (iii) a manufacturable path to millions of physical qubits or a comparably scalable memory-centric alternative, and (iv) system-level demonstrations that integrate computation, memory movement, and error correction without catastrophic correlated errors. This paper contributes primarily by sharpening milestone (iii): it sketches what “memory-centric fault tolerance” would have to look like quantitatively.

Caveats, open questions, and a balanced take

The most important caveat is that the paper optimizes processor qubits, not “total physical qubits in the full machine.” For RSA-2048 the memory scale – hundreds of millions of stored modes – would be a world-class engineering project on its own, likely requiring massive multiplexing, many parallel resonators/cavities, and a control stack comparable in difficulty to scaling a very large chip.

Second, the assumed gauge-color-code threshold is a key sensitivity. The gauge-code literature supports encouraging thresholds in certain models, but detailed circuit-level overhead and decoder behavior at the required scale remain uncertain, and other 2021 analyses caution that code-switching approaches can suffer from lower effective thresholds under circuit noise.

Third, the timing assumptions bundle memory load/store, multi-qubit gauge measurements, and re-storage into a 1 μs cycle-time abstraction. Even if plausible someday, it is far from demonstrated today, especially given the bandwidth and fanout implied by addressing tens of millions of spatial modes.

Conclusion

This is interesting and valuable research because it expands the design space for fault-tolerant quantum computers and provides concrete numbers that force clarity about what “memory-centric” really entails. But it should not be read as implying imminent cryptographic collapse. If anything, it reframes the question from “how fast can we build tens of millions of qubits on a grid” to “can we build any architecture – grid-based or memory-centric – that reliably supports fault tolerance at enormous scale.” On conservative assumptions, reaching a genuine CRQC remains plausibly ≥10 years out, and likely longer unless multiple hard milestones (large-scale QEC, low correlated error, scalable memory, and integrated control) fall rapidly.

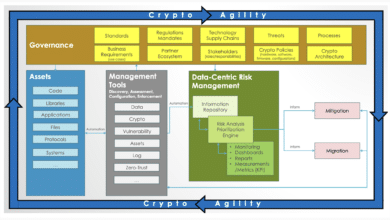
