China’s Zuchongzhi 3.2 Crosses the Error Correction Threshold – and Takes a Different Path Than Google to Get There


30 Dec 2025 – Exactly one year after Google’s Willow became the first quantum processor to operate below the surface code threshold, China’s USTC has matched the feat – and done it without the extra hardware that Google required.

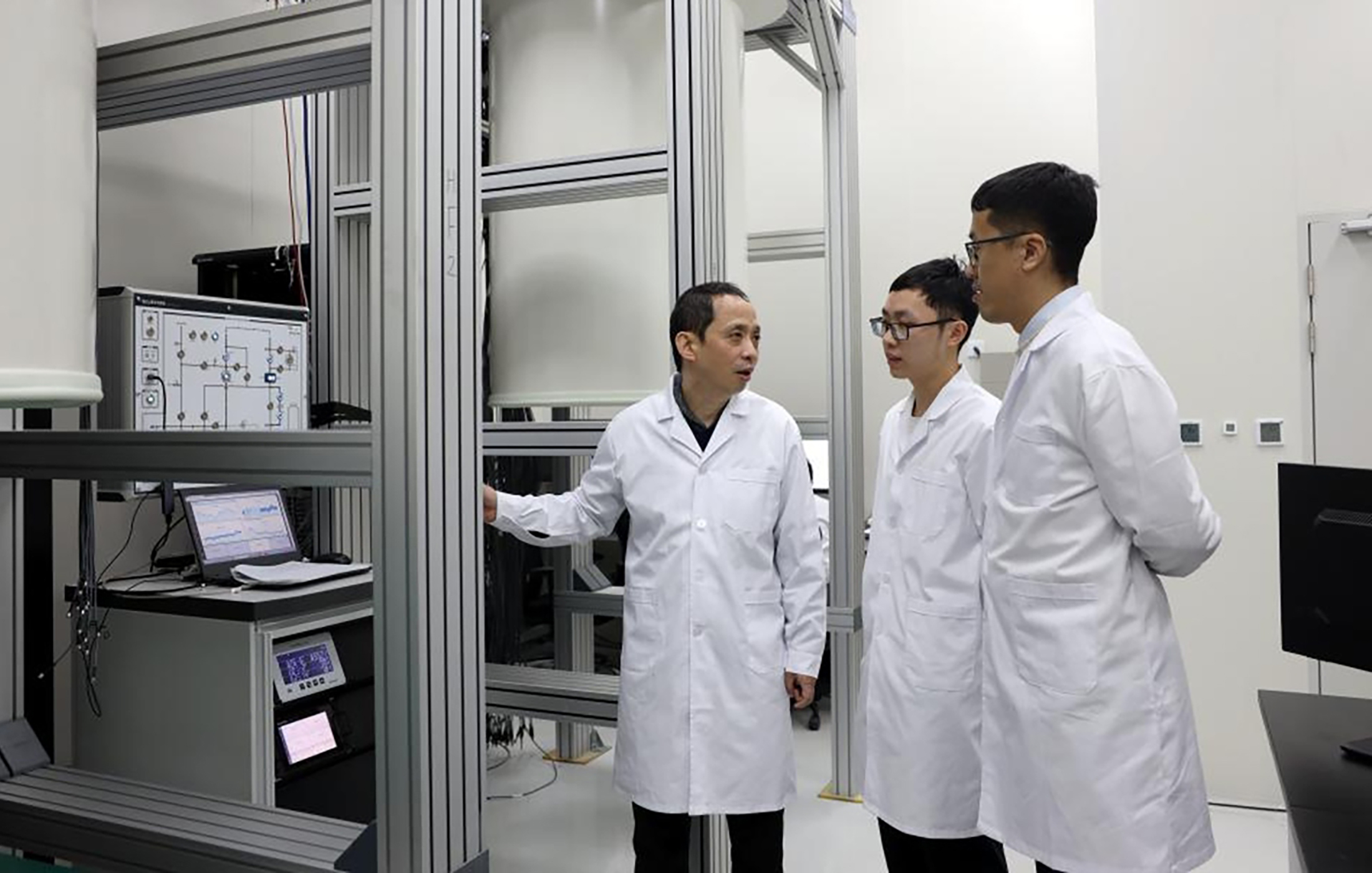

Researchers at the University of Science and Technology of China (USTC) have demonstrated quantum error correction operating below the fault-tolerance threshold on their 107-qubit Zuchongzhi 3.2 superconducting processor, making China only the second country, and USTC only the second research group, to reach this critical milestone.

The results, published December 22, 2025 in Physical Review Letters as both a cover article and an Editors’ Suggestion, show that the USTC team achieved a logical error suppression factor of Λ = 1.40 on a distance-7 surface code – meaning that as they increased the size of their error-correcting code, logical errors decreased rather than accumulated. This reversal is the defining signature of below-threshold operation, the point at which quantum error correction begins working as theory has long promised.
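Λ is the factor by which the logical error per cycle falls each time the code distance grows by two (d to d + 2), so Λ > 1 means bigger codes help. A minimal sketch of estimating it from per-distance error rates follows. The error rates are invented for illustration and are not values from either paper, and the geometric-mean estimator is a simplification; real analyses typically fit the scaling across distances with uncertainties.

```python
import math

def suppression_factor(rates_by_distance):
    """Estimate Lambda from logical error rates at consecutive odd distances.

    Takes the geometric mean of eps_d / eps_(d+2); Lambda > 1 means the
    larger code has fewer logical errors (below-threshold behavior)."""
    distances = sorted(rates_by_distance)
    if len(distances) < 2:
        raise ValueError("need error rates at two or more distances")
    if any(b - a != 2 for a, b in zip(distances, distances[1:])):
        raise ValueError("expects consecutive odd distances, e.g. 3, 5, 7")
    log_ratios = [math.log(rates_by_distance[d] / rates_by_distance[d + 2])
                  for d in distances[:-1]]
    return math.exp(sum(log_ratios) / len(log_ratios))

# Hypothetical logical error rates per cycle, NOT measured values.
rates = {3: 6.0e-3, 5: 4.3e-3, 7: 3.1e-3}
print(f"Lambda ≈ {suppression_factor(rates):.2f}")  # prints "Lambda ≈ 1.39"
```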

The team, led by Pan Jianwei, Zhu Xiaobo, and Peng Chengzhi, with associate professor Chen Fusheng, used 97 of the processor’s 107 qubits to implement a full distance-7 surface code. But the headline technical innovation is not the code distance itself. It is how they suppressed leakage errors: through an entirely new all-microwave architecture that eliminates the need for dedicated hardware-based leakage reduction.

What is leakage, and why does it matter?

Superconducting qubits are designed to operate in two energy states – the 0 and 1 of a quantum bit. But these are artificial atoms with many energy levels, and during computation, quantum information can “leak” into higher, unintended states. Once a qubit leaks, it corrupts not just itself but its neighbors, spreading correlated errors across the chip and across successive error correction cycles. Leakage is one of the most pernicious failure modes in surface code quantum error correction, because it violates the independence assumptions that error correction relies on.

When Google demonstrated below-threshold operation on its Willow processor in December 2024, it tackled leakage using direct-current (DC) pulse-based hardware: a dedicated data qubit leakage removal (DQLR) mechanism that shifts qubit frequencies to dump leaked population. It worked – Willow achieved a stronger error suppression factor of Λ = 2.14 – but the approach requires additional hardware control lines routed into the dilution refrigerator, adding wiring complexity and constraining chip layout as systems scale.

The USTC team took a fundamentally different approach. Their all-microwave leakage suppression architecture uses precisely timed microwave pulses – the same type of signals already used to manipulate qubits – to perform two operations simultaneously: a leakage reduction unit (LRU) that nudges data qubits back from higher energy states into the computational subspace, and a fast unconditional reset that clears ancilla qubits between error correction cycles. The result: leakage population was suppressed by a factor of 72 after 40 error correction cycles, driving it down to just 6.4 × 10⁻⁴.
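To see why a per-cycle LRU pulls leakage down so sharply, consider a toy two-state rate model. This is an assumption for illustration, not the model in the USTC paper. Each cycle, an unleaked qubit leaks with probability `p_leak`, and a leaked one returns with probability `p_return`. Without an LRU, `p_return` reflects only slow natural decay; with one, it is large. The rates below are made up and are not fitted to the reported 72× suppression.

```python
def leakage_trajectory(cycles, p_leak, p_return):
    """Toy model of one qubit's leaked population after each QEC cycle.

    p_leak:   hypothetical per-cycle probability of leaving {|0>, |1>}
    p_return: hypothetical per-cycle probability a leaked qubit comes back
              (natural decay alone, or decay plus an LRU/reset)
    """
    leaked = 0.0
    history = []
    for _ in range(cycles):
        # Leaked population partly returns; unleaked population partly leaks.
        leaked = leaked * (1 - p_return) + (1 - leaked) * p_leak
        history.append(leaked)
    return history

# Illustrative rates only: the same leak rate, without and with an LRU.
no_lru = leakage_trajectory(40, p_leak=1e-3, p_return=0.01)
with_lru = leakage_trajectory(40, p_leak=1e-3, p_return=0.9)
print(f"after 40 cycles: {no_lru[-1]:.2e} (no LRU) vs {with_lru[-1]:.2e} (LRU)")
print(f"suppression ratio: {no_lru[-1] / with_lru[-1]:.0f}x")
```

In this model the leaked population settles at `p_leak / (p_leak + p_return)`, so raising the return probability is what drives the floor down.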

Critically, the LRU adds negligible computational error. The team verified this using interleaved randomized benchmarking, showing nearly identical error distributions with and without the leakage suppression active. And because microwave signals can be multiplexed – multiple control tones sharing a single physical line – this approach avoids the wiring bottleneck that plagues hardware-intensive alternatives.
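Interleaved randomized benchmarking compares the decay of a reference random-Clifford sequence, F(m) = A·p_ref^m + B, with the decay when the operation under test is inserted after every Clifford. The standard estimate of the interleaved operation's error is r = (d − 1)(1 − p_int/p_ref)/d, with d = 2ⁿ for n qubits. A minimal sketch with hypothetical decay parameters; it illustrates the textbook formula and does not reproduce the USTC analysis.

```python
def interleaved_gate_error(p_ref, p_int, n_qubits):
    """Standard interleaved-RB error estimate for the interleaved operation.

    p_ref: decay parameter of the reference RB curve
    p_int: decay parameter with the operation interleaved after each Clifford
    """
    d = 2 ** n_qubits  # Hilbert-space dimension
    return (d - 1) * (1 - p_int / p_ref) / d

# Hypothetical single-qubit decay parameters.
r = interleaved_gate_error(p_ref=0.998, p_int=0.997, n_qubits=1)
print(f"estimated error of interleaved operation: {r:.2e}")
```

An operation that adds almost no error leaves `p_int` close to `p_ref`, so the estimate approaches zero.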

The numbers in context

The achievement sits alongside Google’s Willow result as only the second demonstration of below-threshold surface code error correction on a superconducting processor. Here is how the two compare on key metrics:

| Metric | Google Willow (Dec 2024) | USTC Zuchongzhi 3.2 (Dec 2025) |
|---|---|---|
| Processor size | 105 qubits | 107 qubits |
| Surface code distance | 7 | 7 |
| Physical qubits used | 101 | 97 |
| Error suppression factor (Λ) | 2.14 ± 0.02 | 1.40 ± 0.06 |
| Logical error per cycle (d=7) | 0.143% | Not publicly reported at comparable precision |
| Leakage suppression method | DC-pulse hardware (DQLR) | All-microwave (LRU + reset) |
| Beyond breakeven | Yes (2.4× best physical qubit) | Not claimed |
| Real-time decoding | Yes (distance-5, 63 µs latency) | Not demonstrated |
| Published in | Nature | Physical Review Letters (cover + Editors’ Suggestion) |

Google’s Willow remains ahead on raw error suppression performance, and demonstrated additional capabilities – real-time decoding and beyond-breakeven logical qubit lifetime – that the Zuchongzhi 3.2 paper does not claim. But the USTC result is not simply a replication of Google’s achievement. The all-microwave pathway is a distinct technical contribution with potentially significant implications for scalability.

Joseph Emerson, a physicist at the University of Waterloo who was not involved in the research, noted that the study addressed one of the most difficult problems in the field: qubits drifting out of their intended states and spreading errors through the system.

Timeline of the Zuchongzhi–Willow leapfrog

This result is the latest move in a multi-year back-and-forth between USTC and Google in surface code error correction:

In 2022, Pan’s group used an earlier Zuchongzhi processor to demonstrate a minimal distance-3 surface code – the smallest meaningful unit. Google then advanced to distance-5 in 2023. Neither had yet crossed the threshold. Then in December 2024, Google’s Willow achieved the breakthrough: a distance-7 code operating definitively below threshold, published in Nature. Now, exactly one year later, USTC has matched the distance-7 milestone – and done so with a leakage suppression method that is architecturally simpler and potentially easier to scale.

The result follows USTC’s separate achievement earlier in 2025 with Zuchongzhi 3.0, which set a new record in random circuit sampling – a different benchmark focused on raw computational speed rather than error correction. With Zuchongzhi 3.2, the same team has now demonstrated competitiveness on both fronts.

My Analysis – Don’t Underestimate China’s Quantum Program

I’ve been tracking the Zuchongzhi program since its early iterations, and I covered the 3.0 announcement in detail earlier this year. At that time, I noted that China and the US were effectively trading different kinds of bragging rights – China claiming the speed crown in raw quantum processing, while Google held the edge in error-corrected quantum computing. That framing is now obsolete. With the Zuchongzhi 3.2 result, China has closed the error correction gap.

Not entirely, of course. Google’s Willow still has the superior error suppression factor (Λ = 2.14 vs. 1.40), and Google demonstrated real-time decoding and beyond-breakeven logical qubit lifetime – capabilities the USTC paper does not claim. If this were a boxing match, you’d score the round for Google on points.

But scoring this on points misses the strategic picture.

The gap closed faster than anyone expected

When I compared Willow, Heron, Ocelot, Majorana, and Zuchongzhi 3.0 earlier this year, the implicit assumption in most Western analysis was that China was perhaps two to three years behind Google on error correction. USTC had demonstrated distance-3 in 2022. Google had leapt to below-threshold distance-7 by late 2024. The USTC team was working from the same 105-qubit class hardware, but hadn’t published error correction results at comparable scale.

Twelve months later, they’ve matched the code distance and crossed the threshold. And they did it with a technique that Google’s team hadn’t used – one that may turn out to be more practical at million-qubit scale.

This pattern should be familiar to anyone who has watched China’s technology trajectory over the past two decades. The initial result lags. The gap closes rapidly. And when it closes, it often closes with a twist – a different engineering path that carries its own advantages.

The scalability argument is real

The most important line in the USTC paper isn’t the error suppression factor. It’s the engineering argument for all-microwave control.

Every superconducting quantum computer already uses microwave signals to manipulate qubits. Gates, measurements, state preparation – it’s all done with microwaves. Google’s approach to leakage suppression added a separate hardware layer on top of this: DC pulse channels that shift qubit frequencies to dump leaked population. It works, but each additional hardware channel means more wiring threading down into a dilution refrigerator that already operates at temperatures colder than deep space.

This is the scaling bottleneck that everyone in the field talks about in private and nobody has fully solved. Current 100-qubit processors already push the limits of cryogenic wiring. Getting to 1,000 qubits is an engineering headache. Getting to a million – the scale required for cryptanalytically relevant computation – is a different class of problem entirely.

The USTC approach sidesteps a piece of this problem. By handling leakage suppression through the existing microwave control layer rather than adding new hardware, they reduce the wiring density and simplify chip packaging. Microwave signals can be multiplexed, meaning multiple control tones can share a single physical line. That’s a tangible advantage when you’re trying to scale from hundreds to thousands to millions of qubits.
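Multiplexing works because tones at distinct frequencies are orthogonal over a suitable time window. They can be summed onto one line, and each can be recovered by mixing with its own reference and averaging. The toy sketch below uses arbitrary units and pure Python. The tone frequencies and amplitudes are hypothetical, and real cryogenic control stacks use analog and digital up/down-conversion at GHz frequencies rather than anything this simple.

```python
import math

SAMPLE_RATE = 1_000.0  # samples per unit time (arbitrary units)
N = 1_000              # one unit-time window: integer-frequency tones complete whole cycles

def multiplex(tones):
    """Sum several (frequency, amplitude) tones onto one shared 'line'."""
    return [sum(a * math.cos(2 * math.pi * f * n / SAMPLE_RATE) for f, a in tones)
            for n in range(N)]

def demodulate(signal, freq):
    """Recover one tone's amplitude by mixing with a reference and averaging."""
    acc = sum(s * math.cos(2 * math.pi * freq * n / SAMPLE_RATE)
              for n, s in enumerate(signal))
    return 2 * acc / len(signal)

# Three hypothetical control tones (frequency, amplitude) sharing one line.
tones = [(50.0, 0.8), (120.0, 0.3), (210.0, 0.5)]
line = multiplex(tones)
for f, a in tones:
    print(f"tone {f:g}: recovered {demodulate(line, f):.6f}, sent {a}")
```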

Now, whether this advantage survives the brutal engineering realities of scaling is an open question. At Λ = 1.40, the USTC system is just barely below threshold. Errors do decrease as code distance grows, but slowly: each step up in code distance (from d to d + 2) divides the logical error rate by only about 1.4. Google’s Λ = 2.14 divides it by 2.14 at each such step, so errors fall much faster with scale, and fewer physical qubits per logical qubit are needed to reach a given target error rate. In a regime where physical qubits are expensive and rare, the stronger error suppression matters more than the simpler wiring.
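The standard below-threshold scaling model makes the Λ comparison concrete: each step from d to d + 2 divides the logical error per cycle by Λ. The sketch below assumes both systems start from the same error at d = 7 (Google's reported 0.143%; USTC's comparable figure is not reported, so this isolates the effect of Λ). It also assumes Λ stays constant at larger distances and uses a hypothetical target of 10⁻⁶ per cycle. Qubit counts use the 2d² − 1 rotated-layout formula, which gives the 97 qubits USTC used at d = 7.

```python
def logical_error(d, eps_ref, lam, d_ref=7):
    """Projected logical error per cycle at odd distance d, assuming each
    d -> d + 2 step divides the error by lam."""
    return eps_ref / lam ** ((d - d_ref) / 2)

def distance_for_target(target, eps_ref, lam, d_ref=7):
    """Smallest odd distance >= d_ref whose projected error meets the target."""
    if lam <= 1:
        raise ValueError("lam must exceed 1 (below threshold) for errors to shrink")
    d = d_ref
    while logical_error(d, eps_ref, lam, d_ref) > target:
        d += 2
    return d

EPS_7 = 1.43e-3  # assumption: same d = 7 starting error for both systems
TARGET = 1e-6    # hypothetical target logical error per cycle
for lam in (2.14, 1.40):
    d = distance_for_target(TARGET, EPS_7, lam)
    print(f"Lambda = {lam}: d = {d}, {2 * d * d - 1} physical qubits per logical qubit")
```

Under these assumptions, Λ = 2.14 reaches the target at d = 27 (1,457 qubits per logical qubit), while Λ = 1.40 needs d = 51 (5,201 qubits), roughly 3.6 times the footprint.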

But the two are not necessarily in tension. There is no fundamental reason why the all-microwave approach can’t achieve higher Λ values with better qubits. If USTC can improve their gate fidelities – which currently lag Google’s by roughly a factor of two in two-qubit error rates – the same architecture could yield significantly stronger error suppression without adding hardware complexity.

What Western analysts keep getting wrong

There is a persistent tendency in Western quantum analysis to treat China’s quantum program as a follower – technically capable but always a step behind, replicating results that the US achieves first. This framing is comfortable and it is wrong.

Consider the record. USTC pioneered quantum advantage demonstrations in photonic systems with Jiuzhang in 2020 – a capability no Western lab has matched at comparable scale. They were among the first to deploy a quantum computer for commercial cloud access via the Tianyan platform. And their classical simulation team is arguably the best in the world at finding weaknesses in quantum advantage claims – it was USTC researchers who demolished Google’s 2019 supremacy claim by simulating it classically in 14 seconds.

When the same team that broke your benchmark then rebuilds their own hardware and crosses the threshold you crossed, that is not a follower. That is a peer competitor.

The Zuchongzhi 3.2 result also reflects something that is easy to miss from the outside: the depth of China’s quantum talent pipeline. This isn’t one lucky lab. Pan Jianwei’s group at USTC has been producing world-class quantum results for over a decade, across multiple modalities and multiple applications. They have hundreds of researchers, sustained government funding, and a national laboratory infrastructure purpose-built for quantum science. And they are not the only Chinese quantum team – the neutral-atom program in Wuhan recently deployed a 100-qubit commercial system, and companies like Origin Quantum are building the commercial ecosystem alongside the academic research.

What this means for quantum security

Let me be direct about what this does and does not mean for cryptographic security.

The Zuchongzhi 3.2 result does not bring us closer to a cryptanalytically relevant quantum computer (CRQC) in any immediate, measurable sense. A distance-7 surface code with Λ = 1.40 is so far from what would be needed to run Shor’s algorithm against real keys – where you’d need logical error rates on the order of 10⁻¹² and millions of physical qubits – that the direct cryptographic relevance is zero.
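The same scaling model gives a rough sense of the gap. The code distance needed for a logical error per cycle of 10⁻¹² follows from solving ε₇ / Λ^((d − 7)/2) ≤ 10⁻¹² for d. The sketch assumes a d = 7 starting error of 0.143% (Google's reported value, used here for both systems) and a Λ that holds constant out to very large distances, which is a strong assumption. It also uses the 2d² − 1 qubit count and covers a single logical qubit only; a cryptographic attack would need many.

```python
import math

def required_distance(target, eps_7, lam):
    """Smallest odd d >= 7 with eps_7 / lam**((d - 7) / 2) <= target,
    under an assumed constant-Lambda scaling model."""
    if lam <= 1:
        raise ValueError("at or above threshold, larger codes do not help")
    steps = max(0, math.ceil(math.log(eps_7 / target) / math.log(lam)))
    return 7 + 2 * steps

for lam in (2.14, 1.40):
    d = required_distance(1e-12, 1.43e-3, lam)
    print(f"Lambda = {lam}: d = {d}, {2 * d * d - 1} physical qubits per logical qubit")
```

Under those assumptions, Λ = 2.14 needs d = 63 (7,937 physical qubits per logical qubit) and Λ = 1.40 needs d = 133 (35,377).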

What it does tell us is that the error correction race is now genuinely international. The assumption that the US would be the sole source of breakthroughs in fault-tolerant quantum computing – and that security planners could therefore gauge the threat by watching Google, IBM, and a handful of American startups – needs to be updated.

China’s quantum program is advancing on all fronts simultaneously: raw computational speed (Zuchongzhi 3.0), error correction capability (Zuchongzhi 3.2), commercial deployment (Tianyan platform), and alternative modalities (neutral atoms, photonics). It is backed by an estimated $15 billion in cumulative government investment and organized around a national laboratory system designed for precisely this kind of sustained, multi-front push.

For CISOs and security leaders already engaged in post-quantum cryptography migration, this changes nothing about the urgency or the playbook. If anything, it reinforces the case that the quantum threat is not hypothetical and not distant – it is being actively pursued by multiple technically capable nation-states with enormous resources. The right response is the same as it has been: conduct your cryptographic inventory, prioritize systems with long-lived data, and begin your migration to post-quantum algorithms. Don’t wait for a single dramatic breakthrough to force your hand. By the time one arrives, it may already be too late for organizations that haven’t started.

The real competition is at higher code distances

Looking forward, the next milestone to watch is not who gets to distance-7 next – that race is effectively over, with Google and USTC both there. The next milestone is distance-9 and beyond. At distance-9, a standard surface code would use 161 physical qubits (81 data qubits plus 80 measure qubits), and the error suppression scaling would become much more informative about whether a given architecture can reach the error rates needed for practical fault-tolerant computation.
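The qubit count follows from the standard rotated surface-code layout: d² data qubits plus d² − 1 measure qubits, or 2d² − 1 in total. A minimal sketch, assuming that layout and no extra qubits for leakage removal:

```python
def rotated_surface_code_qubits(d):
    """Data (d*d) and measure (d*d - 1) qubit counts for a distance-d rotated surface code."""
    if d < 3 or d % 2 == 0:
        raise ValueError("expects an odd distance >= 3")
    data = d * d
    measure = d * d - 1
    return data, measure, data + measure

for d in (3, 5, 7, 9, 11):
    data, measure, total = rotated_surface_code_qubits(d)
    print(f"d={d}: {data} data + {measure} measure = {total} qubits")
```

It reproduces the 97 qubits USTC used at d = 7 and gives 161 at d = 9 and 241 at d = 11.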

Google has the head start here. Their Willow simulations suggest they can maintain error suppression scaling out to distance-11 with their current noise model. USTC has stated publicly that they are pursuing distance-9 and distance-11 on the Zuchongzhi platform. The question is whether the all-microwave approach can sustain below-threshold performance as codes grow – because at some point, the weaker error suppression factor starts to matter, requiring more physical qubits and more cycles to achieve the same logical error rate.

This is where the competition gets genuinely interesting, and genuinely consequential. The team that first demonstrates strong error suppression at distance-9 or higher on a scalable architecture will have the strongest claim to a credible path toward fault-tolerant quantum computing. Right now, both Google and USTC are in the running.

Don’t count China out.

Quantum Upside & Quantum Risk - Handled

My company - Applied Quantum - helps governments, enterprises, and investors prepare for both the upside and the risk of quantum technologies. We deliver concise board and investor briefings; demystify quantum computing, sensing, and communications; craft national and corporate strategies to capture advantage; and turn plans into delivery. We help you mitigate the quantum risk by executing crypto‑inventory, crypto‑agility implementation, PQC migration, and broader defenses against the quantum threat. We run vendor due diligence, proof‑of‑value pilots, standards and policy alignment, workforce training, and procurement support, then oversee implementation across your organization. Contact me if you want help.