The Dark Horse: How Silicon Quietly Assembled Every Building Block for Fault-Tolerant Quantum Computing

The quantum computing modality race has had a clear narrative for most of the past decade. Superconducting qubits were the frontrunners: Google’s quantum supremacy demonstration in 2019, IBM’s steadily growing processor roadmap, the first below-threshold surface code results in 2024. Trapped ions were the precision contenders, with the highest individual gate fidelities of any platform and companies like Quantinuum and IonQ pushing toward commercial systems. Photonic quantum computing offered the exotic long shot, with room-temperature operation and optical interconnects, championed by PsiQuantum and Xanadu.

Then, starting in late 2023 and accelerating through 2025, neutral atoms exploded onto the scene. The Harvard-MIT-QuEra collaboration demonstrated a logical quantum processor built from 280 physical qubits, followed by a universal fault-tolerant architecture with 448 atoms, below-threshold error correction, and continuous operation of over 3,000 qubits. Microsoft and Atom Computing announced plans for a 50-logical-qubit error-corrected machine. Neutral atoms went from dark horse to serious contender in under two years.

But while the world was watching neutral atoms surge, something quieter — and potentially more consequential — was happening in silicon.

Over the past four years, silicon spin qubits have systematically demonstrated every fundamental capability required for fault-tolerant quantum computing. Not with the flash of a single landmark paper, but through a steady, methodical progression across multiple research groups, multiple continents, and multiple approaches within the platform itself. Gates above threshold. Error correction. Algorithms. Multi-register scaling. Stabilizer-based error detection. And now, as of March 2026, universal logical operations and distillable magic states.

Silicon has not demonstrated the largest quantum processors. It has not set error correction distance records. It has not generated the most headlines. But it has assembled all the pieces — and it is the only platform that can leverage the most powerful manufacturing infrastructure on earth to put them together.

This is the case for silicon as the dark horse that matters most.

The Progression: Four Years, Six Milestones

The story of silicon’s rise to fault-tolerance readiness can be told through six papers, each clearing a specific milestone on the path from bare physical qubits to logical quantum computation.

January 2022: Gates above the fault-tolerance threshold. Three independent teams — at RIKEN (Japan), QuTech (Netherlands), and UNSW (Australia) — simultaneously published results in Nature showing silicon spin qubit gate fidelities exceeding 99%. For the first time, silicon met the quality standard required by the surface code. The simultaneity of the result, across three continents and two different qubit implementations (quantum dots and donor spins), signalled that the achievement was reproducible — not a one-lab anomaly.

August 2022: First error correction. RIKEN’s Takeda et al. demonstrated a three-qubit phase-flip correcting code using an efficient single-step iToffoli gate. Separately, QuTech’s van Riggelen et al. implemented a phase-flip code in germanium hole-spin qubits. Neither result achieved fault-tolerant performance, but both proved that the procedural machinery of quantum error correction could run on semiconductor spin qubits.

February 2025: First multi-qubit algorithm above threshold. Silicon Quantum Computing (SQC) demonstrated Grover’s search algorithm on a four-qubit silicon processor with ~95% success probability and with every single operation in the processor above the fault-tolerance threshold. This was the first time silicon executed a meaningful algorithm beyond two qubits, proving that gate quality could be maintained across the depth of a real circuit.

December 2025: Modular scaling with record fidelity. SQC unveiled an 11-qubit atom processor linking two phosphorus donor registers via electron exchange coupling, with gate fidelities from 99.1% to 99.9%. The 99.9% two-qubit gate is a silicon record. More importantly, the result proved that connecting multiple registers does not degrade performance — the foundational experiment for a modular scaling strategy.

January 2026: Stabilizer-based error detection. SZIQA demonstrated the first stabilizer-based error detection in silicon, using four nuclear spin qubits to detect arbitrary single-qubit errors. The experiment revealed strongly biased noise in the system — a finding with profound implications for error correction overhead.

March 2026: Universal logical operations. The same SZIQA team demonstrated the complete universal logical gate set — including the non-Clifford T gate via gate-by-measurement — on two logical qubits encoded with the [[4,2,2]] code. Magic states were prepared above the Bravyi-Kitaev distillation threshold. A VQE algorithm was executed on encoded logical qubits to compute the ground-state energy of a water molecule. This is the first time any silicon platform has operated at the logical level.

Read that list again. It is the complete checklist for fault-tolerant quantum computing: physical gate quality ✓, error correction protocols ✓, algorithmic execution above threshold ✓, multi-register connectivity ✓, stabilizer-based syndrome extraction ✓, universal logical gates ✓, magic state preparation above distillation threshold ✓.

No other platform demonstrated all of these capabilities in four years. Superconducting qubits took a decade to get from the first above-threshold gates (2014) to below-threshold error correction (2024). Silicon compressed this timeline dramatically, in part because it could learn from the superconducting roadmap and in part because nuclear spin coherence times give silicon circuits more room to accumulate operations before decoherence limits performance.
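For readers who want to see what "stabilizer-based error detection" means concretely, here is a minimal sketch of the [[4,2,2]] code mentioned in the March 2026 milestone. It assumes the textbook stabilizer generators XXXX and ZZZZ; the exact encoding and circuits used in the experiment are not described in this article, and the function names are my own illustration. The sketch checks that every single-qubit Pauli error flips at least one stabilizer measurement.

```python
from itertools import product

# Assumption: textbook stabilizer generators of the [[4,2,2]] code.
STABILIZERS = ["XXXX", "ZZZZ"]


def anticommutes(p: str, q: str) -> bool:
    """Two Pauli strings anticommute iff they hold different
    non-identity Paulis on an odd number of qubits."""
    clashes = sum(1 for a, b in zip(p, q) if a != "I" and b != "I" and a != b)
    return clashes % 2 == 1


def syndrome(error: str) -> tuple:
    """One bit per stabilizer; 1 means that stabilizer measurement flips."""
    return tuple(int(anticommutes(error, s)) for s in STABILIZERS)


def single_qubit_errors(n: int = 4):
    """Yield every weight-one Pauli error on n qubits."""
    for qubit, pauli in product(range(n), "XYZ"):
        yield "I" * qubit + pauli + "I" * (n - qubit - 1)


if __name__ == "__main__":
    for err in single_qubit_errors():
        print(err, syndrome(err))
    # A weight-two error can commute with both stabilizers and go unnoticed.
    print("XXII", syndrome("XXII"))
```

Every weight-one error produces a non-zero syndrome, which is what "detect arbitrary single-qubit errors" means. The weight-two error XXII returns an all-zero syndrome, which is why a distance-2 code can detect errors but cannot correct them.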

How Silicon Compares to the Competition

Silicon’s checklist completion is impressive, but raw milestone-counting obscures important differences in scale and maturity. A fair comparison requires acknowledging where silicon leads, where it lags, and why the differences matter.

Versus superconducting qubits: Google’s Willow processor demonstrated below-threshold surface code error correction with over 100 qubits — a landmark achievement in late 2024. IBM has operated processors with over 1,000 qubits. In terms of system-level integration, superconducting platforms are years ahead of silicon. But superconducting qubits are physically large (hundreds of micrometres per qubit), require bespoke fabrication, and face fundamental wiring constraints as systems scale. The 20-million-qubit estimate for RSA-2048 factoring under standard surface-code assumptions reflects, in part, these overhead costs. Silicon’s potential advantage is manufacturing density: a million silicon spin qubits could fit on a chip the size of a fingernail.

Versus trapped ions: Quantinuum has demonstrated the highest individual gate fidelities of any platform (~99.9%+ for two-qubit gates) and operates commercially available quantum computers. Trapped ions offer excellent connectivity and long coherence. But scaling faces mechanical challenges — trapping and controlling millions of individual ions requires complex electrode arrays and shuttling protocols. Clock speeds are also slow, with gate times in the milliseconds rather than the nanoseconds of silicon. For cryptanalytic applications where wall-clock time matters — as the Google cryptocurrency analysis detailed — this speed difference is critical.

Versus neutral atoms: This is silicon’s most interesting comparison. Neutral atoms have had a remarkable run: Bluvstein et al.’s work at Harvard demonstrated up to 448-atom fault-tolerant architectures with below-threshold error correction, mid-circuit readout, and logical teleportation — capabilities silicon has not yet matched at comparable scale. QuEra has delivered machines with 37 logical qubits to research institutions. Microsoft and Atom Computing are targeting 50 logical qubits by early 2027.

But neutral atoms have their own constraints. Gate speeds are two to three orders of magnitude slower than silicon. Atom loss during computation remains a challenge (though the 3,000-qubit continuous operation result addresses this). And the “manufacturing” story is fundamentally different: neutral atom systems are laboratory apparatus — vacuum chambers, laser arrays, optical tweezers — not semiconductor chips. There is no equivalent of a semiconductor foundry for neutral atom quantum computers. Each system is assembled, not manufactured.

Silicon’s unique position: What sets silicon apart is not that it leads on any single metric — it doesn’t. It is that silicon is the only platform where every demonstrated capability has a credible path to industrial-scale manufacturing. The three approaches within silicon — atomically precise donor qubits delivering the best fidelities, gate-defined quantum dots enabling industrial-scale fabrication, and foundry-compatible devices bridging the two — represent a diversified bet on the same material, backed by the largest industrial infrastructure on the planet. The ecosystem pursuing these approaches now spans companies on four continents, national laboratories, and major semiconductor foundries.

No other qubit platform has an analogue of this. Superconducting fabrication is specialised. Trapped ion and neutral atom systems are assembled from optical and electrical components. Photonic systems require custom photonic integrated circuits. Only silicon offers the prospect of leveraging the existing semiconductor manufacturing infrastructure — the same tools, cleanrooms, lithography machines, and process engineering expertise — to build a quantum computer.

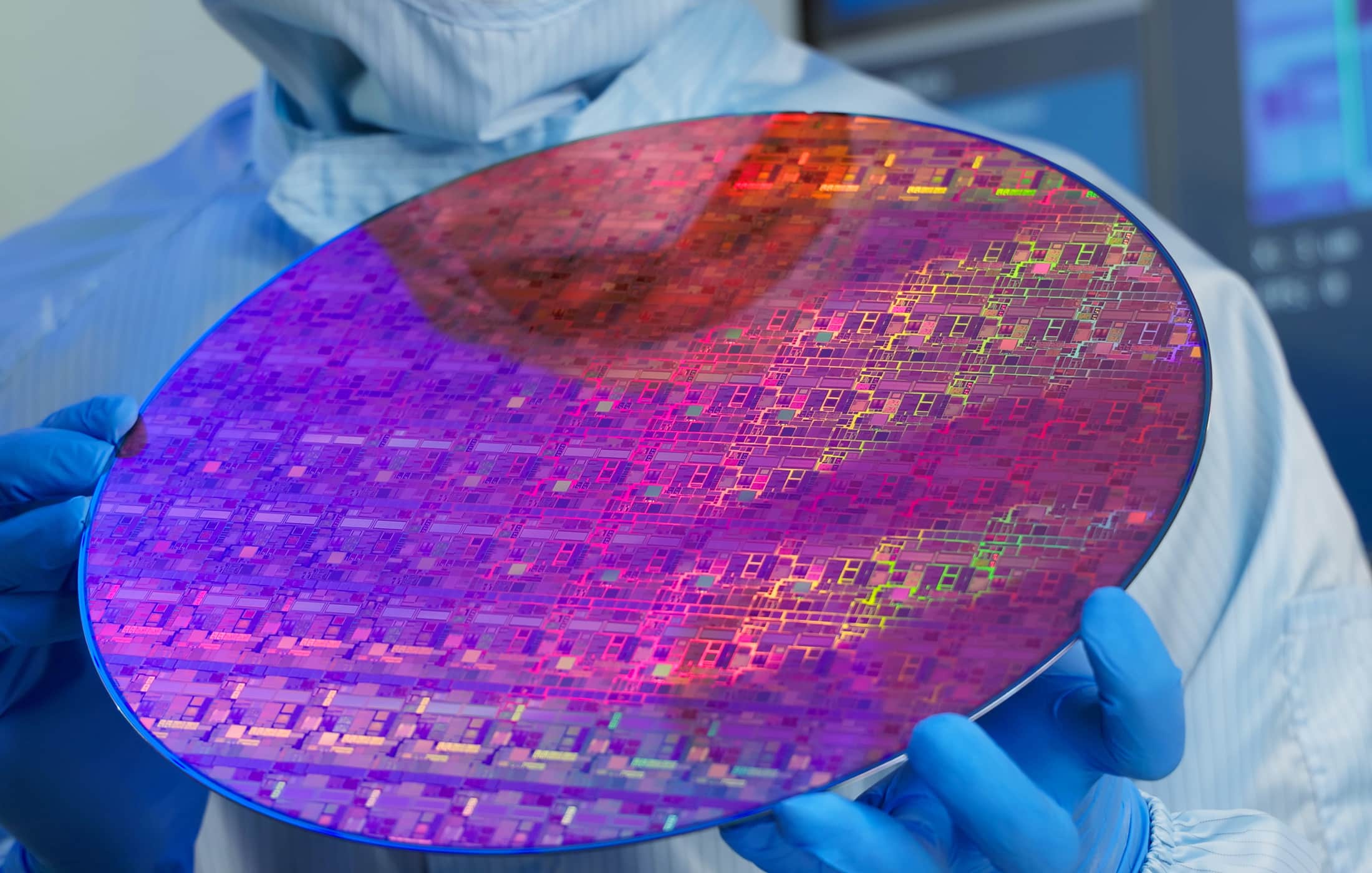

A necessary caveat: leveraging is not the same as copying. You cannot walk into a standard CMOS fab today and produce quantum-grade silicon chips without significant process modifications. Silicon qubits require isotopically purified ²⁸Si substrates (natural silicon contains ~5% ²⁹Si, whose nuclear spin dephases qubits — this must be reduced to below 0.1%), ultra-clean gate oxide interfaces far beyond what standard logic processes demand, and lithographic precision at the aggressive end of what EUV can deliver. imec spent close to a decade optimising these processes. Intel adapted its D1 fab with what it describes as “few changes” to standard CMOS flow, but those few changes encode years of materials science. The ²⁸Si supply chain itself is only now being commercialised — ASP Isotopes began commercial production from South Africa in early 2025, and Quobly is running the first ²⁸Si wafers through STMicroelectronics’ 300 mm lines in Crolles, France.

The bridge from CMOS to quantum silicon is real, but it is an engineering bridge — not a trivial walk across a flat road. What makes it qualitatively different from other platforms is that the bridge uses existing infrastructure. The EUV scanners, the CVD chambers, the metrology tools, the cleanroom protocols are the same ones that produce classical chips. The modifications are targeted, not architectural. No other qubit platform can say this.

The Biased Noise Advantage

There is one more factor that deserves dedicated attention, because it is underappreciated in most comparisons and directly relevant to CRQC resource estimates. I cover it in depth in a separate analysis, but the executive summary belongs here.

The SZIQA team’s error detection and logical operations experiments both revealed that silicon donor qubits exhibit strongly biased noise — phase-flip errors dominate overwhelmingly while bit-flip errors are essentially absent. This is not a device quirk. It is a consequence of fundamental physics: nuclear spins in silicon have effectively infinite T₁ lifetimes. They do not spontaneously flip. They only lose phase coherence.

The theoretical work of Tuckett, Bartlett, Flammia, and Brown at the University of Sydney has shown that under biased noise, fault-tolerance thresholds can exceed 5% — more than five times the standard ~1% threshold under symmetric noise. Tailored codes like the XZZX surface code push these gains further. The practical consequence is that silicon may need significantly fewer physical qubits per logical qubit than any estimate based on standard symmetric noise assumptions suggests — potentially a factor-of-several reduction in total physical qubit counts for a given computation. As I detail in my full analysis of this advantage, this changes the math on how many qubits a silicon-based CRQC would actually require, and it means the standard resource estimates — Gidney RSA-2048, the Pinnacle Architecture, Google’s ECC analysis — are likely conservative when applied to silicon.
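To make the "fewer physical qubits per logical qubit" argument tangible, here is a rough back-of-the-envelope sketch. It assumes the commonly used surface-code heuristic p_L ≈ A·(p/p_th)^((d+1)/2) with A = 0.1 and a footprint of 2d² − 1 physical qubits per logical qubit. Applying that same formula to a biased-noise threshold is a simplification, and the physical error rate and target below are illustrative placeholders, not measured values from any of the experiments discussed here.

```python
def min_code_distance(p_phys: float, p_th: float, target: float,
                      prefactor: float = 0.1) -> int:
    """Smallest odd distance d with prefactor * (p/p_th)^((d+1)/2) <= target.

    Heuristic scaling only; real codes and decoders deviate from it.
    """
    if p_phys >= p_th:
        raise ValueError("physical error rate must be below threshold")
    d = 3
    while prefactor * (p_phys / p_th) ** ((d + 1) / 2) > target:
        d += 2
    return d


def physical_per_logical(d: int) -> int:
    """Rotated surface code footprint: d^2 data qubits plus d^2 - 1 ancillas."""
    return 2 * d * d - 1


if __name__ == "__main__":
    p_phys = 2e-3    # hypothetical physical error rate
    target = 1e-12   # hypothetical logical error target per operation
    for label, p_th in [("symmetric noise, ~1% threshold", 0.01),
                        ("biased noise, ~5% threshold", 0.05)]:
        d = min_code_distance(p_phys, p_th, target)
        print(label, "-> distance", d, "-> qubits per logical",
              physical_per_logical(d))
```

With these illustrative inputs the required distance drops from 31 to 15, and the footprint from 1,921 to 449 physical qubits per logical qubit. The point of the sketch is the direction and rough size of the effect, not the specific numbers.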

The noise bias itself is not speculative. It is a measured property of the hardware. And it is unique to silicon among platforms with a credible path to industrial-scale manufacturing.

What’s Still Missing — and What to Watch For

Silicon has assembled the building blocks. It has not assembled the building. Several critical capabilities remain to be demonstrated, and they need to come roughly in the following order:

1. Mid-circuit measurement and real-time feedback. This is the single most important missing capability. All silicon error correction to date has been performed through postprocessing — measuring at the end of the circuit and discarding erroneous results after the fact. True fault-tolerant operation requires measuring syndrome qubits in the middle of a computation, processing the result classically in real time, and applying corrections without disturbing the data qubits. Neutral atoms and superconducting qubits have both demonstrated this. Silicon has not.

Watch for: Any silicon experiment demonstrating non-destructive, mid-circuit syndrome extraction. This is the bridge between error detection (demonstrated) and real-time error correction (needed).

2. Repeated error correction cycles with net benefit. The “break-even” point — where an encoded logical qubit retains fidelity longer than the best unencoded physical qubit — has been demonstrated in superconducting qubits and is being approached in neutral atoms. Silicon needs to demonstrate this, ideally with multiple rounds of syndrome extraction showing that errors are being suppressed rather than accumulated.

Watch for: A silicon experiment showing that logical qubit lifetime exceeds physical qubit lifetime under repeated error correction.

3. Scaling beyond a single cluster. SQC’s 11-qubit processor connected two donor registers. The next step is arrays of clusters — four, eight, sixteen registers — demonstrating that the modular architecture scales with maintained performance. This is where the engineering of precise inter-cluster spacing and exchange coupling becomes critical.

Watch for: SQC or SZIQA publications demonstrating multi-cluster arrays. The companion pre-print on “quantum computer based on donor-cluster arrays in silicon” (Zhang et al., arXiv:2509.24749) from the SZIQA group signals this work is underway.

4. Foundry qubits reaching logical operation. The Diraq/imec result proved foundry-fabricated qubits can cross the fault-tolerance threshold. But foundry qubits have not yet demonstrated multi-qubit algorithms, error correction, or logical operations. Closing this gap — proving that industrially manufactured qubits can do what bespoke qubits have done — is essential for the manufacturing thesis to hold.

Watch for: Any foundry-fabricated silicon device demonstrating error correction or algorithmic performance above threshold. This would be the decisive proof point for silicon’s scaling story.

5. Cryo-CMOS integration. Controlling millions of qubits with room-temperature electronics connected by cables is physically impossible — the wiring alone would overwhelm any dilution refrigerator. Silicon’s unique advantage is that classical CMOS control circuits can, in principle, be co-fabricated with qubits on the same chip or in the same cryogenic environment. Quantum Motion and others are pursuing this, but fully integrated qubit-plus-control systems have not been demonstrated.

Watch for: Demonstrations of qubit operation controlled by co-located cryo-CMOS electronics without performance degradation.
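Returning to the first item on this list, a short sketch shows why postprocessed error detection cannot substitute for real-time correction. It assumes a deliberately simple model in which each detection round flags an error independently with the same probability, and a run is kept only if no round flags anything. The 5% flag probability is a hypothetical placeholder.

```python
def acceptance_rate(p_flag: float, rounds: int) -> float:
    """Fraction of runs kept when any flagged round discards the run.

    Assumes independent, identical flag probability per round.
    """
    return (1.0 - p_flag) ** rounds


def shots_per_kept_run(p_flag: float, rounds: int) -> float:
    """Average number of attempts needed to obtain one accepted run."""
    return 1.0 / acceptance_rate(p_flag, rounds)


if __name__ == "__main__":
    p_flag = 0.05  # hypothetical per-round detection probability
    for rounds in (1, 10, 100, 1000):
        print(rounds, acceptance_rate(p_flag, rounds),
              shots_per_kept_run(p_flag, rounds))
```

Under this model the kept fraction falls exponentially with circuit depth: about 95% after one round, but only roughly 0.6% after 100 rounds. Discarding bad runs works for shallow demonstrations and becomes impractical for long computations, which is why mid-circuit measurement with feedback sits at the top of the list.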

What This Means for Quantum Security

For CISOs and security leaders calibrating PQC migration timelines, the message from silicon’s recent progress is not that threat timelines have shortened — they haven’t, at least not from silicon alone. Two logical qubits with postprocessed error detection is a very long way from the ~1,400 logical qubits needed to factor RSA-2048 or the ~1,200 needed for elliptic curve attacks.

The message is about portfolio risk. The probability that some platform achieves a CRQC is higher than the probability that any specific platform achieves it. And silicon’s recent demonstration of every fault-tolerance building block, combined with its unique manufacturing scalability, adds a credible new pathway to that outcome — one that was easy to dismiss two years ago and is not easy to dismiss today.
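The portfolio argument is simple arithmetic. The sketch below assumes, purely for illustration, that each platform's chance of success is independent of the others. The probabilities are placeholders I made up to show the calculation, not estimates for any real platform.

```python
from math import prod


def p_any_success(probabilities) -> float:
    """Probability that at least one of several independent efforts succeeds."""
    return 1.0 - prod(1.0 - p for p in probabilities)


if __name__ == "__main__":
    # Hypothetical per-platform probabilities, illustration only.
    placeholders = [0.20, 0.15, 0.15, 0.10, 0.10]
    print("best single platform:", max(placeholders))
    print("at least one platform:", p_any_success(placeholders))
```

With these placeholder values the best single platform sits at 20% while the chance that at least one succeeds is about 53%. Independence is an assumption, but the qualitative conclusion does not depend on it: the probability that some platform succeeds can never be lower than the probability for the single most likely one.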

Moreover, silicon’s classification as a “fast-clock” architecture — with nanosecond-scale gates and microsecond-scale error correction cycles — means that a silicon-based CRQC would pose the most acute form of the quantum threat. Unlike slow-clock architectures (neutral atoms, trapped ions) where cryptanalytic attacks might take hours or days, a fast-clock CRQC could complete attacks in minutes, enabling the “on-spend” attack scenarios that the Google cryptocurrency analysis detailed and that the U.S. Intelligence Community’s threat assessment implicitly encompasses.
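The clock-speed point can be sketched the same way. This assumes an attack whose runtime is dominated by a fixed number of sequential error correction cycles, so wall-clock time is simply cycles multiplied by cycle time. The cycle count and both cycle times are hypothetical round numbers chosen to show the scaling, not figures from any resource estimate.

```python
def attack_runtime_seconds(sequential_cycles: float, cycle_time_s: float) -> float:
    """Wall-clock time when runtime is dominated by sequential cycles."""
    return sequential_cycles * cycle_time_s


if __name__ == "__main__":
    cycles = 1e9  # hypothetical number of sequential error correction cycles
    for label, cycle_time in [("fast clock, 1 microsecond cycle", 1e-6),
                              ("slow clock, 1 millisecond cycle", 1e-3)]:
        seconds = attack_runtime_seconds(cycles, cycle_time)
        print(label, "->", seconds / 60, "minutes,", seconds / 86400, "days")
```

For the same hypothetical cycle count, a microsecond cycle gives about 17 minutes and a millisecond cycle gives about 11.6 days. A thousandfold difference in cycle time is the difference between an attack that fits inside a transaction window and one that does not.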

The practical takeaway for risk management: do not wait for the modality race to produce a winner before beginning PQC migration. The race is diversifying, not converging. Silicon’s progress reinforces what the Applied Quantum PQC Migration Framework has long argued: the correct posture is migration now, hedged against architectural diversity. The platform that ultimately breaks your cryptography may be one you’re not currently watching.

The Material That Built the World

There is something poetically appropriate about silicon’s candidacy for quantum computing. The material that enabled the classical information revolution — that turned computing from room-sized vacuum-tube machines into the ubiquitous, invisible infrastructure of modern life — may yet enable the quantum one.

The path is not guaranteed. Silicon lags the leaders in system scale by a wide margin. The fabrication challenges for atomically precise qubits remain severe. The performance of foundry-manufactured qubits, while promising, is unproven at scale. Every milestone described in this article series is a necessary step, not a sufficient one.

But the building blocks are all in place. The physics works. The noise profile is favourable. Multiple independent approaches are converging. The manufacturing infrastructure exists. And the pace of progress — from threshold-crossing to logical operations in four years — suggests that the remaining obstacles are engineering challenges, not fundamental barriers.

Silicon is no longer the platform that might matter someday. It is the platform that matters now — the dark horse that, quietly and systematically, has put itself in position to change the race.
