The Quantum Computer That Breaks Your Encryption Won’t Be a Single Chip

(Updated in November 2025)

There’s a question that has quietly bothered me for years, one the quantum computing industry has mostly avoided asking out loud: why are we trying to build a quantum computer the way we stopped building classical computers fifty years ago?

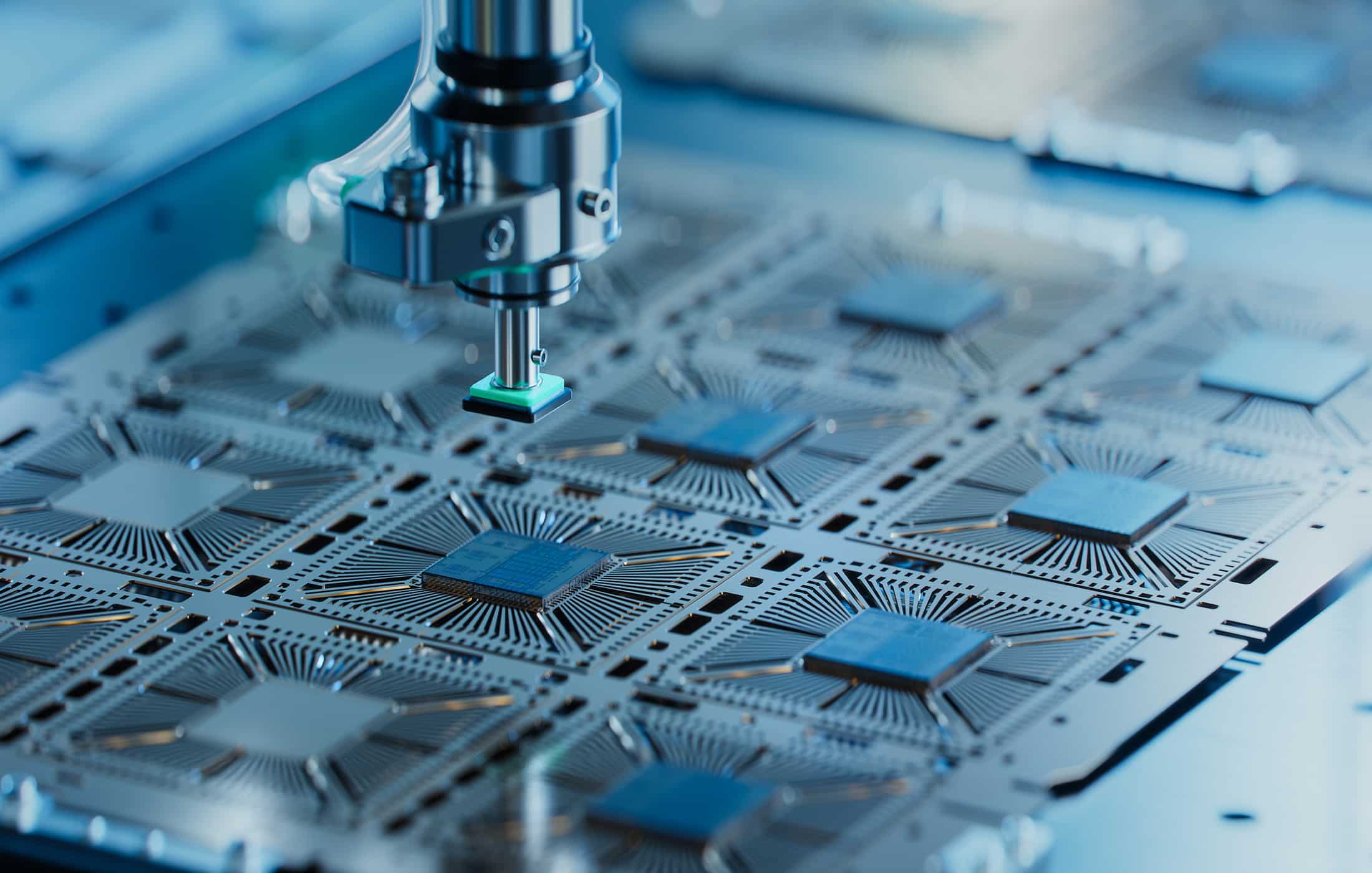

The modern data center doesn’t run on one kind of chip. It runs on CPUs, GPUs, TPUs, FPGAs, DPUs, and increasingly specialized accelerators – each optimized for a narrow class of operations, connected by high-bandwidth fabrics, orchestrated by software that routes workloads to whichever processing element handles them best. The smartphone in your pocket contains more types of specialized silicon than most people can name. This is not an accident. It is the result of a fundamental lesson the classical computing industry learned the hard way: no single processor architecture is optimal for all tasks, and the system that wins is the one that specializes its components and integrates them intelligently.

Quantum computing, by contrast, remains largely trapped in a monolithic mindset. The prevailing narrative is a horse race: superconducting vs. trapped ion vs. neutral atom vs. photonic vs. silicon spin. Pick your modality, scale it up, and win. The implicit assumption is that one qubit technology will emerge as the “winner” – the quantum equivalent of the x86 architecture – and everything else will fade away.

I think that assumption is wrong. And I think the quantum computer that eventually poses a real threat to today’s cryptography will look nothing like what most people imagine when they hear “quantum computer.” It won’t be a single chip. It won’t use a single qubit modality. And it won’t be orchestrated by a human writing gate sequences. It will be a heterogeneous system of specialized quantum and classical processing elements, dynamically orchestrated by AI.

I want to be clear upfront: this is speculation. Everything I describe here depends on solving engineering problems that remain formidable – chief among them actually building fault-tolerant quantum processors of any kind, which no one has yet achieved at cryptographically relevant scale. But I believe the architectural logic is sound, and understanding it matters for how we think about the timeline and nature of the quantum threat.

The QPU Is an Accelerator, Not a Computer

The first conceptual shift is to stop thinking of a quantum processing unit (QPU) as a standalone computer. A QPU is an accelerator – a specialized coprocessor that handles specific mathematical operations faster than a classical processor, just as a GPU handles matrix multiplications faster than a CPU.

No one runs an entire application on a GPU. You run the parts that benefit from massive parallelism on the GPU, and everything else on the CPU. The same logic applies to quantum computing. Shor’s algorithm doesn’t run entirely on quantum hardware. The quantum part – modular exponentiation followed by the quantum Fourier transform that exposes the period – is a subroutine within a larger classical computation that includes number-theoretic pre- and post-processing (continued-fraction expansion, greatest-common-divisor computations) and repeated measure-and-retry cycles. Grover’s algorithm similarly provides a quadratic speedup on an unstructured-search subroutine; it does not replace the entire computational stack.
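To make that division of labor concrete, here is a minimal Python sketch of the classical side of Shor’s algorithm. The quantum period-finding step is replaced by a brute-force stand-in (`find_order_classically`, a hypothetical helper that only works for toy moduli such as 15). The continued-fraction and gcd steps are the standard textbook post-processing, not a description of any particular CRQC software stack.

```python
from fractions import Fraction
from math import gcd

# Illustrative sketch; all function names are hypothetical.


def find_order_classically(a: int, n: int) -> int:
    """Stand-in for the QPU's period finding (toy sizes only).

    Returns the multiplicative order r of a modulo n, i.e. the smallest r > 0
    with a**r % n == 1. Brute force is exponential in the bit length of n;
    on a CRQC this is the step delegated to quantum hardware.
    """
    if gcd(a, n) != 1:
        raise ValueError("a must be coprime to n")
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def period_from_measurement(measured: int, num_bits: int, n: int) -> int:
    """Continued-fraction step: an outcome y satisfies y / 2**num_bits ~ s / r,
    so the closest fraction with denominator <= n gives a candidate r."""
    return Fraction(measured, 2 ** num_bits).limit_denominator(n).denominator


def factors_from_period(a: int, r: int, n: int):
    """Turn a candidate period into a nontrivial factorization of n.

    Returns None when the candidate is wrong or unlucky; the surrounding
    classical loop then reruns the quantum subroutine or picks a new a.
    """
    if pow(a, r, n) != 1 or r % 2 == 1:
        return None
    x = pow(a, r // 2, n)
    if x == n - 1:
        return None
    p = gcd(x - 1, n)
    return (p, n // p) if 1 < p < n else None
```

For n = 15 and a = 7, an outcome of 64 on an 8-bit register yields r = 4 and the factors (3, 5), while an outcome of 128 yields r = 2, which fails the `pow(a, r, n) == 1` check and sends the loop back to the QPU for another attempt.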

This isn’t a minor architectural detail. It means every practical quantum computation already requires tight integration between quantum and classical processing. The QPU produces intermediate results that must be classically decoded, error-corrected, and fed back into the next quantum operation – often on microsecond timescales. The classical control system is not a peripheral; it is an integral part of the quantum computer’s execution engine.
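The decode-and-feed-back loop can be illustrated with the simplest possible code: a 3-bit repetition code protecting against bit flips, simulated classically in Python. This is a toy under stated assumptions (independent bit-flip noise only, perfect syndrome measurement, a lookup-table decoder); real machines use codes such as the surface code with far more sophisticated real-time decoders. The function names are illustrative.

```python
import random

# Toy sketch; names are hypothetical. Lookup decoder for the 3-bit
# repetition code: syndrome (b0 XOR b1, b1 XOR b2) -> index of bit to flip.
SYNDROME_TABLE = {(0, 0): None, (1, 0): 0, (1, 1): 1, (0, 1): 2}


def measure_syndrome(bits):
    """Parity checks; on hardware these come from ancilla measurements."""
    return (bits[0] ^ bits[1], bits[1] ^ bits[2])


def decode_and_correct(bits):
    """Classical step: decode the syndrome and apply the correction."""
    flip = SYNDROME_TABLE[measure_syndrome(bits)]
    corrected = list(bits)
    if flip is not None:
        corrected[flip] ^= 1
    return corrected


def run_cycles(logical_bit, p_flip, cycles, rng=None):
    """Noise, syndrome extraction, decoding, and feedback, once per cycle."""
    if rng is None:
        rng = random.Random()
    bits = [logical_bit] * 3
    for _ in range(cycles):
        # Each physical bit flips independently with probability p_flip.
        bits = [b ^ int(rng.random() < p_flip) for b in bits]
        # The correction is applied before the next cycle begins.
        bits = decode_and_correct(bits)
    return bits[0]  # after correction all three bits agree
```

With `p_flip = 0.01`, a cycle produces a logical error only when two or more bits flip, which happens roughly 3p² ≈ 3 × 10⁻⁴ of the time.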

Once you accept that the QPU is an accelerator, the next question follows naturally: accelerator for what, exactly? And this is where the monolithic mindset breaks down.

No Single Qubit Modality Excels at Everything

Each quantum computing modality has a distinctive profile of strengths and weaknesses – and those profiles are not converging. Consider what each brings to the table, using the lens of my CRQC Quantum Capability Framework:

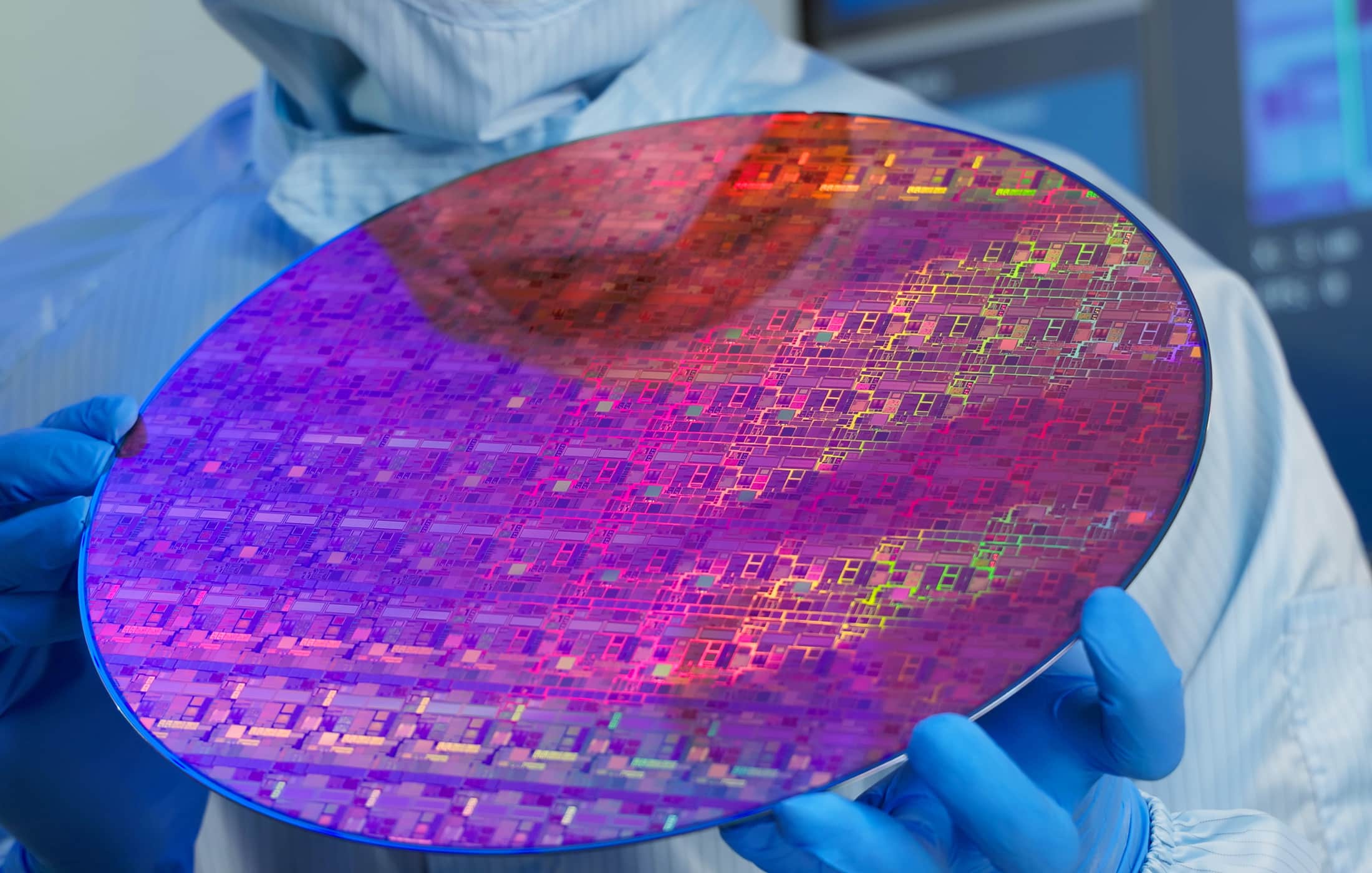

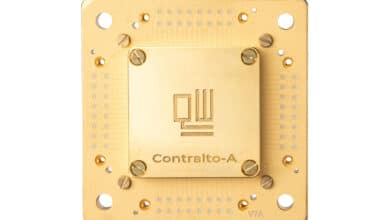

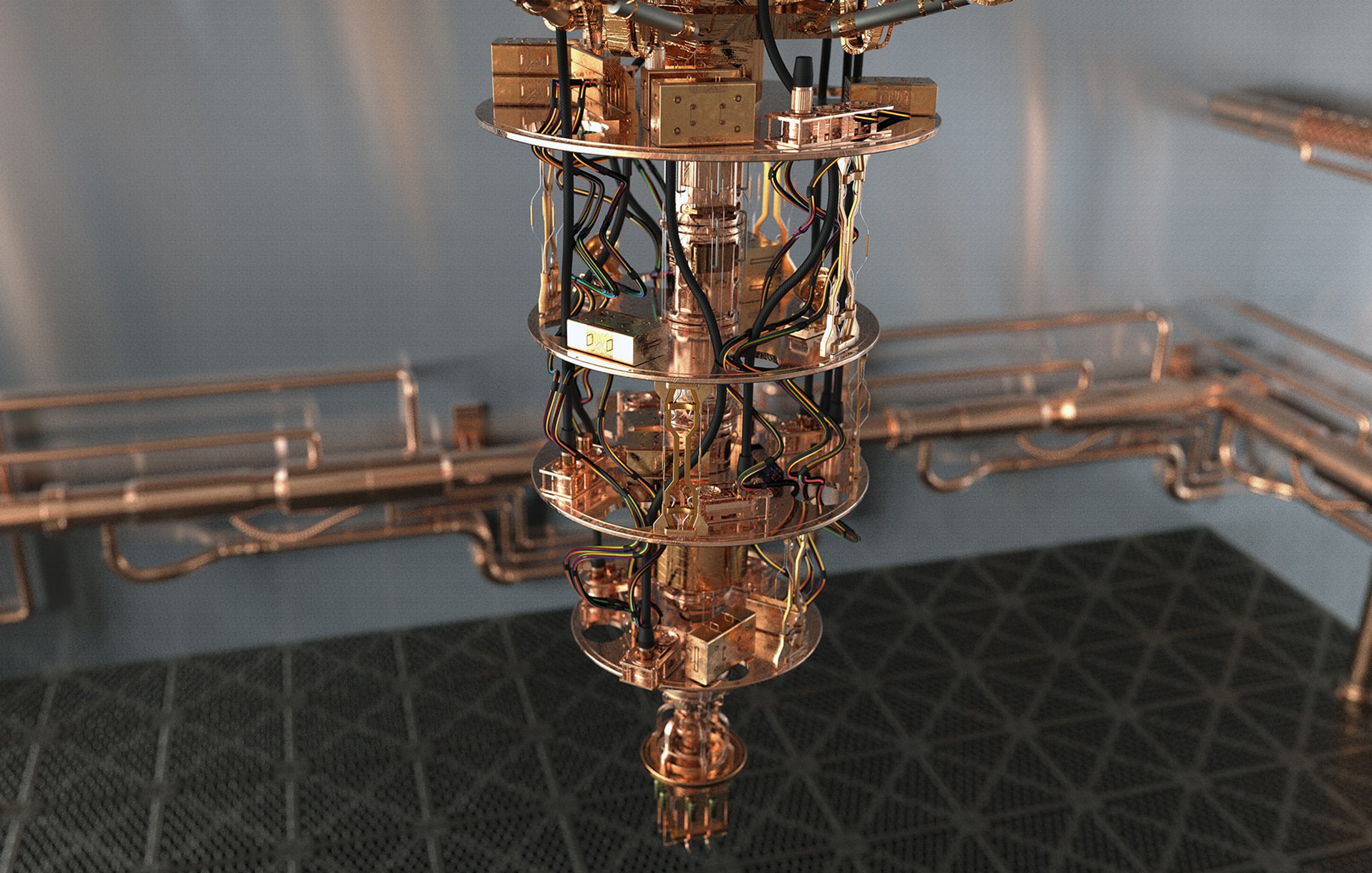

Superconducting qubits are fast. Gate times in the nanosecond range, QEC cycle times around a microsecond, and a deep industrial base from companies like Google, IBM, and Rigetti. But they require millikelvin cryogenic temperatures, suffer from relatively short coherence times (hundreds of microseconds), and face brutal scaling challenges in wiring density and frequency collision management as chip sizes grow. IBM’s 1,121-qubit Condor processor reportedly required approximately a mile of cryogenic flex I/O wiring within a single dilution refrigerator.

Trapped-ion qubits have exceptional coherence – seconds to minutes – and the highest demonstrated two-qubit gate fidelities in the field. But they are slow. Gate times are measured in microseconds to milliseconds, and QEC cycle times in the tens of milliseconds. For a computation like RSA-2048 factoring that requires days of sustained operation even on fast hardware, a trapped-ion-only machine might require months or years.
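A back-of-the-envelope way to see why clock speed matters is to hold the number of sequential QEC cycles fixed and scale by cycle time. The Python sketch below does exactly that; the assumption that both machines need the same cycle count is a deliberate simplification, and the function names and example inputs are hypothetical.

```python
# Naive sketch; names and inputs are hypothetical.


def sequential_qec_cycles(runtime_s: float, cycle_s: float) -> float:
    """Number of back-to-back QEC cycles that fit in a given runtime."""
    return runtime_s / cycle_s


def naive_runtime_days(fast_runtime_days: float, fast_cycle_s: float,
                       slow_cycle_s: float) -> float:
    """Scale a runtime from a fast-clock machine to a slow-clock machine.

    Assumes both machines execute the same number of sequential QEC cycles,
    so runtime grows by the ratio of cycle times. Real designs differ in
    code choice, parallelism, and compilation.
    """
    seconds_per_day = 86_400
    cycles = sequential_qec_cycles(fast_runtime_days * seconds_per_day, fast_cycle_s)
    return cycles * slow_cycle_s / seconds_per_day
```

With hypothetical inputs of 5 days at a 1 µs cycle and a 1 ms cycle on the slower machine, the naive estimate is 5,000 days, roughly 13.7 years. Parallelism and different compilation strategies could shrink that figure, so it is an intuition for the size of the gap rather than a prediction.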

Neutral-atom qubits offer massive parallelism and reconfigurable connectivity through optical tweezer arrays. They’ve demonstrated impressive fault-tolerant operations on hundreds of qubits. But their clock speeds are similar to trapped ions (millisecond-scale cycles), and their scaling path depends on solving atom loss, crosstalk, and continuous operation challenges.

Photonic qubits operate at room temperature and can leverage existing telecommunications infrastructure for interconnects. But generating and manipulating photonic qubits deterministically is extremely difficult.

Silicon spin qubits promise compatibility with existing CMOS fabrication processes – potentially enabling quantum chips manufactured in conventional semiconductor fabs. But they’re early in development.

Now here’s the key observation: the optimal qubit modality depends on the function being performed. Fast gates? Superconducting. Long-term storage with minimal error accumulation? Trapped ions or rare-earth ions. Massively parallel operations with flexible connectivity? Neutral atoms. Long-distance quantum state transfer? Photonics. Each modality is a specialist, not a generalist.

The industry’s current approach of asking each modality to be good at everything is like asking a sprint car to also be a long-haul truck. You can engineer compromises, but you’ll never match the performance of purpose-built vehicles doing what they do best.

The Heterogeneous Quantum Computer

If we accept that different qubit modalities excel at different functions, and that a CRQC requires multiple functions performed at extreme precision for extended durations, the logical conclusion is a heterogeneous quantum computer that combines multiple modalities, each assigned to its optimal role.

What might this look like? I’ll sketch a speculative architecture, drawing on the functional decomposition that any large-scale fault-tolerant quantum computation requires:

Quantum Processing Units (fast-clock modality). The computational core, where universal fault-tolerant logic operations happen, would likely use the fastest available qubit modality. Today, superconducting qubits are the leading candidate, with microsecond-scale QEC cycles. The QPU doesn’t need to be large. Multiple small QPU cores could operate in parallel on different parts of the algorithm, much like CPU cores in a modern processor.

Quantum Memory (long-coherence modality). Most qubits in any fault-tolerant computation spend most of their time idle, waiting for other operations to complete before they’re needed again. In Gidney’s implementation of Shor’s algorithm, each qubit is inactive for approximately 96–97% of all logical clock cycles. Storing these idle qubits in the same expensive, fast, high-error-rate QPU hardware is enormously wasteful. A dedicated quantum memory tier using long-coherence qubits (trapped ions, neutral atoms, or ultra-long-coherence systems like rare-earth ions with coherence times exceeding hours) could store idle quantum states with dramatically lower error rates and hardware overhead. Different memory tiers could serve different latency needs, much like the L1/L2/L3 cache hierarchy in classical processors.
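The idle-fraction figure translates directly into how little of the logical machine needs to sit in fast QPU hardware at any moment. A minimal Python sketch, assuming a hypothetical 1,000 logical qubits and the 96–97% idle fraction cited above; it computes averages only, and the function name is illustrative.

```python
# Averages-only sketch; the function name is hypothetical.


def split_by_tier(logical_qubits: int, idle_fraction: float):
    """Average logical qubits working in the QPU vs. idling in memory.

    A real design must size the QPU for peak demand and for the traffic of
    moving qubits in and out of memory, not just for the average.
    """
    if not 0.0 <= idle_fraction <= 1.0:
        raise ValueError("idle_fraction must be between 0 and 1")
    active = logical_qubits * (1.0 - idle_fraction)
    return active, logical_qubits - active
```

At 96.5% idle, 1,000 logical qubits leave about 35 active in the QPU on average and about 965 that could, in principle, live in a memory tier.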

Quantum State Factories (specialized generation). Magic state distillation – the process of creating the high-fidelity resource states needed for non-Clifford gates like T-gates – is one of the dominant overheads in fault-tolerant quantum computing. Dedicated factory modules, potentially using photonic circuits capable of extremely high-rate state generation, could feed purified resource states to the QPU on demand.
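Factory sizing is mostly arithmetic: the QPU consumes magic states at some rate, and the factories must produce them at least that fast or the computation stalls. A sketch in Python, assuming one magic state per T gate and steady demand; the function names and the rates in the usage note are hypothetical.

```python
import math

# Sizing sketch; names are hypothetical. Assumes one magic state per T gate.


def factories_needed(t_states_per_s: float, states_per_factory_per_s: float) -> int:
    """Smallest number of factories whose combined output meets the demand."""
    return math.ceil(t_states_per_s / states_per_factory_per_s)


def factory_limited_runtime_s(t_count: float, factories: int,
                              states_per_factory_per_s: float) -> float:
    """Lower bound on runtime if magic-state supply is the only bottleneck."""
    return t_count / (factories * states_per_factory_per_s)
```

For example, a demand of 250,000 T states per second against factories producing 100,000 each per second calls for 3 factories, and a hypothetical T-count of 10⁹ served by 10 such factories takes at least 1,000 seconds.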

Quantum Interconnect (photonic modality). Moving quantum states between modules requires a quantum bus – a communication layer that can transfer entangled states over distances without destroying them. Photonic interconnects are the natural candidate here, using Bell-pair generation and teleportation-based protocols to move logical qubits between QPUs, memories, and factories. This is analogous to the optical interconnects in modern data centers, and several companies (Nu Quantum, Qunnect, Aliro) are specifically developing this technology.
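The teleportation primitive these interconnects rely on can be checked with a small state-vector simulation. The Python sketch below implements the textbook single-qubit protocol (one Bell pair, two measured bits, Pauli corrections) in pure Python. It assumes ideal gates and a perfect Bell pair; moving a logical qubit between modules would in practice consume many Bell pairs plus error-corrected operations. All helper names are illustrative.

```python
import math
import random

# Ideal-gate sketch; helper names are hypothetical.
N_QUBITS = 3  # q0: message, q1: Alice's Bell half, q2: Bob's Bell half
S = 1 / math.sqrt(2)
H = [[S, S], [S, -S]]
X = [[0, 1], [1, 0]]
Z = [[1, 0], [0, -1]]


def mask(qubit):
    """Bit mask for a qubit; q0 is the most significant bit of the index."""
    return 1 << (N_QUBITS - 1 - qubit)


def apply_1q(state, gate, qubit):
    """Apply a 2x2 gate to one qubit of the state vector."""
    m = mask(qubit)
    out = list(state)
    for i in range(len(state)):
        if not i & m:
            j = i | m
            out[i] = gate[0][0] * state[i] + gate[0][1] * state[j]
            out[j] = gate[1][0] * state[i] + gate[1][1] * state[j]
    return out


def apply_cnot(state, control, target):
    """Flip `target` on every basis state where `control` is 1."""
    mc, mt = mask(control), mask(target)
    out = list(state)
    for i in range(len(state)):
        if i & mc and not i & mt:
            out[i], out[i | mt] = state[i | mt], state[i]
    return out


def measure(state, qubit, rng):
    """Projective Z measurement: sample an outcome, collapse, renormalize."""
    m = mask(qubit)
    p1 = sum(abs(a) ** 2 for i, a in enumerate(state) if i & m)
    outcome = 1 if rng.random() < p1 else 0
    norm = math.sqrt(p1 if outcome else 1 - p1)
    collapsed = [a / norm if bool(i & m) == bool(outcome) else 0j
                 for i, a in enumerate(state)]
    return outcome, collapsed


def teleport(alpha, beta, rng=None):
    """Teleport alpha|0> + beta|1> from q0 to q2; return q2's amplitudes."""
    if rng is None:
        rng = random.Random()
    state = [0j] * 2 ** N_QUBITS
    state[0b000], state[0b100] = alpha, beta  # message on q0; q1 = q2 = |0>
    state = apply_1q(state, H, 1)             # Bell pair (|00> + |11>)/sqrt(2)
    state = apply_cnot(state, 1, 2)           # shared between q1 and q2
    state = apply_cnot(state, 0, 1)           # Alice: rotate into Bell basis
    state = apply_1q(state, H, 0)
    m0, state = measure(state, 0, rng)        # two classical bits for Bob
    m1, state = measure(state, 1, rng)
    if m1:
        state = apply_1q(state, X, 2)         # Bob's corrections: X^m1, then Z^m0
    if m0:
        state = apply_1q(state, Z, 2)
    base = m0 * mask(0) + m1 * mask(1)
    return state[base], state[base | mask(2)]
```

Calling `teleport(0.6, 0.8j, random.Random(7))` returns Bob’s amplitudes as approximately `(0.6, 0.8j)` whatever the measurement outcomes; the two classical bits are what make the corrections possible, which is why the interconnect also needs a classical channel.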

Classical HPC Integration. The classical control stack – real-time decoders, syndrome processors, scheduling engines, and application software – would run on conventional high-performance computing hardware tightly coupled to the quantum modules. This is not an afterthought; the classical processing requirements for a CRQC-scale computation are themselves formidable. Real-time decoding of surface codes at scale generates data rates that challenge the fastest classical processors.
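To give a sense of scale, the raw syndrome bandwidth and the decoder-backlog effect can be estimated in a few lines of Python. The sketch assumes one syndrome bit per measurement qubit per cycle and roughly half the physical qubits serving as measurement qubits (a distance-d rotated surface code uses d² data and d² − 1 measurement qubits); the function names, qubit count, and cycle times are hypothetical.

```python
# Scale sketch; names and inputs are hypothetical.


def syndrome_bits_per_second(physical_qubits: int, measure_fraction: float,
                             cycle_s: float) -> float:
    """Raw syndrome bandwidth: one bit per measurement qubit per QEC cycle."""
    return physical_qubits * measure_fraction / cycle_s


def decoder_backlog_cycles(cycles: int, cycle_s: float,
                           decode_s_per_cycle: float) -> float:
    """Undecoded cycles queued after `cycles` rounds of syndrome extraction.

    If the decoder is slower than the QPU, the backlog grows without bound,
    stalling any operation that must wait for a decoded result.
    """
    if decode_s_per_cycle <= cycle_s:
        return 0.0
    decoded = cycles * cycle_s / decode_s_per_cycle
    return cycles - decoded
```

With a hypothetical 1,000,000 physical qubits and a 1 µs cycle, the raw stream is about 5 × 10¹¹ bits per second. A decoder that needs 2 µs per cycle falls 500 cycles behind after only 1,000 cycles, and the gap keeps widening for as long as the computation runs.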

The Orchestration Problem: Why AI Ends Up in the Middle

Here’s where the complexity becomes genuinely daunting and where, I believe, AI becomes not just useful but necessary.

A heterogeneous quantum computer with multiple QPU cores, tiered quantum memories, state factories, and photonic interconnects, all operating at different clock rates with different error profiles and latency characteristics, presents a scheduling and orchestration problem of extraordinary complexity. At any given moment, the system must decide:

- Which qubits should stay in the QPU and which should be moved to memory?

- Which memory tier – fast cache or dense long-term storage – is optimal for a given idle duration?

- When should states be pre-fetched from memory to minimize QPU wait times?

- How should magic states be scheduled for delivery from factories?

- When does the cost of transferring a state between modules exceed the cost of just letting it accumulate errors in place?

- How should operations be reordered to maximize QPU utilization without violating algorithmic dependencies?
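Two of these questions, which memory tier to use and when a transfer beats leaving a state in place, can be framed as a simple cost comparison. A minimal Python sketch, assuming a linear error-accumulation model and entirely hypothetical tier parameters; it decides greedily for one qubit at a time and ignores capacity limits, contention, and prefetching. The class and function names are illustrative.

```python
import math
from dataclasses import dataclass

# Greedy cost-model sketch; names and parameters are hypothetical.


@dataclass(frozen=True)
class Tier:
    name: str
    error_per_us: float    # idle error accumulated per microsecond (linear model)
    transfer_error: float  # error added by one move into or out of the tier
    transfer_us: float     # latency of one move into or out of the tier


def placement_cost(tier: Tier, idle_us: float) -> float:
    """Expected error for parking a qubit in `tier` during an idle window.

    The qubit must move out and back before it is needed, so a tier whose
    round trip exceeds the idle window is infeasible (infinite cost).
    """
    round_trip_us = 2 * tier.transfer_us
    if round_trip_us > idle_us:
        return math.inf
    return 2 * tier.transfer_error + tier.error_per_us * (idle_us - round_trip_us)


def choose_tier(tiers, idle_us: float) -> Tier:
    """Pick the cheapest tier for one qubit; ties go to the first listed."""
    return min(tiers, key=lambda t: placement_cost(t, idle_us))


# Hypothetical parameters, chosen only to illustrate the trade-off.
TIERS = [
    Tier("stay in QPU", error_per_us=1e-6, transfer_error=0.0, transfer_us=0.0),
    Tier("fast cache", error_per_us=1e-8, transfer_error=2e-5, transfer_us=10.0),
    Tier("dense storage", error_per_us=1e-11, transfer_error=1e-4, transfer_us=1000.0),
]
```

With these numbers, moving to the fast cache only pays off for idle windows longer than about 40 µs, and the dense tier only wins for windows beyond roughly 16 ms, once its lower idle error has amortized its larger transfer error.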

These decisions must be made continuously, in real time, across thousands or millions of quantum states, with the optimization landscape changing every QEC cycle. The cost function is multi-dimensional – balancing error accumulation, transfer latency, memory capacity, factory throughput, and algorithmic dependencies simultaneously.

This is precisely the kind of optimization problem where AI excels and human-designed heuristics struggle. A learned scheduler trained on simulations and refined through online optimization could potentially discover scheduling strategies that no human engineer would find. It would need to operate at the speed of the quantum system’s classical control loop (microseconds for superconducting QPUs). That may be achievable for inference on specialized AI hardware, provided the heavy training happens offline.
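Any learned scheduler would have to beat strong classical baselines. One standard human-designed heuristic is greedy critical-path list scheduling, sketched here in Python for unit-time operations on identical QPU cores. It illustrates the dependency-respecting reordering problem, not an AI scheduler, and it ignores memory transfers, factory supply, and differing operation durations. Function and operation names are hypothetical.

```python
# Baseline heuristic sketch; names are hypothetical.


def critical_path_lengths(deps):
    """Length of the longest dependency chain starting at each op (itself included).

    `deps` maps every op to the ops it depends on; every op must be a key.
    Raises ValueError if the dependencies contain a cycle.
    """
    successors = {op: [] for op in deps}
    indegree = {op: len(preds) for op, preds in deps.items()}
    for op, preds in deps.items():
        for p in preds:
            successors[p].append(op)
    order, frontier = [], [op for op, d in indegree.items() if d == 0]
    while frontier:  # Kahn's algorithm for a topological order
        op = frontier.pop()
        order.append(op)
        for s in successors[op]:
            indegree[s] -= 1
            if indegree[s] == 0:
                frontier.append(s)
    if len(order) != len(deps):
        raise ValueError("dependency graph has a cycle")
    length = {}
    for op in reversed(order):  # successors are finalized before predecessors
        length[op] = 1 + max((length[s] for s in successors[op]), default=0)
    return length


def list_schedule(deps, cores):
    """Greedy critical-path list scheduling of unit-time ops on identical cores.

    Each step runs up to `cores` ready ops, preferring those that head the
    longest remaining dependency chain. Returns one list of ops per step.
    """
    if cores < 1:
        raise ValueError("need at least one core")
    priority = critical_path_lengths(deps)
    done, remaining, schedule = set(), set(deps), []
    while remaining:
        ready = sorted(
            (op for op in remaining if all(p in done for p in deps[op])),
            key=lambda op: (-priority[op], op),
        )
        step = ready[:cores]
        schedule.append(step)
        done.update(step)
        remaining.difference_update(step)
    return schedule
```

For dependencies a → c → d and b → e on two cores, the scheduler runs a and b first, then c and e, then d: three steps, which matches the longest chain and is therefore optimal for this toy graph.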

I am speculating here. No one has built such a system, and the control latency requirements are demanding. But the pattern is consistent with what has happened in every other domain where heterogeneous computing has emerged: the orchestration layer eventually becomes the hardest and most valuable part of the stack, and it eventually becomes AI-assisted.

The Geopolitical Dimension

There’s a dimension to this that extends beyond pure engineering: quantum sovereignty. If the path to a CRQC requires assembling a heterogeneous system from components that span multiple qubit modalities, interconnect technologies, and classical computing platforms, then no single company or country needs to master every modality. The game becomes one of systems integration – assembling the best available components into a working whole.

This has profound implications. A nation that has strong superconducting qubit programs but weak photonic interconnect capabilities might partner with, or acquire from, nations or companies that have the missing pieces. The CRQC becomes a supply chain and integration challenge as much as a physics challenge. DARPA’s Heterogeneous Architectures for Quantum (HARQ) initiative signals that the U.S. defense establishment already sees this. My forthcoming book Quantum Sovereignty explores these dynamics in depth.

The Engineering Chasms That Remain

I want to be explicit about what stands between the architecture I’ve described and reality, because the gaps are real:

Fault-tolerant QPUs don’t exist yet at useful scale. No quantum processor has demonstrated the sustained, low-error logical operations needed for cryptographically relevant computations.

Quantum memory at the required scale is unproven. Rare-earth ion memories with multi-hour coherence have been demonstrated in laboratories, but not integrated with superconducting QPUs or operated as part of a computing stack. The interface between cryogenic superconducting processors and memory systems that operate at room temperature or under different cryogenic conditions is an unsolved engineering challenge.

Quantum interconnects need massive improvement. Teleportation-based state transfer between modules has been demonstrated, but at rates and fidelities far below what a CRQC would require. Sustaining high-fidelity Bell-pair generation at rates sufficient for a multi-module architecture is among the hardest near-term challenges.

The AI orchestration layer is hypothetical. No one has trained a scheduling AI for heterogeneous quantum systems at scale. Whether the latency requirements are compatible with AI inference, whether the optimization landscape is tractable, and whether simulation-trained models transfer to real hardware are all open questions.

Classical control at scale is itself a major challenge. A CRQC-scale computation generates enormous volumes of syndrome data that must be decoded in real time. The classical computing requirements for the control stack are substantial and growing.

None of these problems are known to be fundamentally impossible. But all of them are genuinely hard, and the integration challenge of making them all work together as a system is harder than any individual component.

Why This Matters for Quantum Readiness

If I’m right that the CRQC will be a heterogeneous system rather than a monolithic chip, several implications follow for organizations planning their quantum readiness:

The “which modality will win?” question is the wrong question. The relevant question is which modalities will combine most effectively, and how quickly the integration challenges will be solved. Monitoring only one quantum computing platform is no longer sufficient for threat assessment.

Progress may be non-linear. In a monolithic paradigm, building a CRQC requires one modality to solve all its problems. In a heterogeneous paradigm, breakthroughs in any modality can contribute to the overall system. A sudden advance in quantum memory, or in photonic interconnects, or in AI-based scheduling could accelerate the entire ecosystem. This makes the timeline harder to predict and the tail risks fatter.

The “million qubit” mental model is obsolete. Between algorithmic improvements, QEC code innovations, and now architectural optimization, the physical qubit requirements for breaking RSA-2048 have dropped from 20 million to potentially under 200,000 in the space of five years. The heterogeneous architecture approach suggests this trend will continue. Planning your PQC migration around “it takes a million qubits and no one has that” is not a defensible strategy.

Systems integration capability is the key variable. The entity – whether a nation, corporation, or defense agency – that first assembles a working heterogeneous quantum system may not be the one with the best qubits. It may be the one with the best integration engineering and the most effective orchestration software. This shifts the competitive landscape in ways that conventional qubit-count comparisons miss entirely.

The quantum computer that breaks your encryption won’t look like what you expect. It will be a system – distributed, specialized, AI-orchestrated, and assembled from the best components multiple modalities can offer.

Quantum Upside & Quantum Risk - Handled

My company, Applied Quantum, helps governments, enterprises, and investors prepare for both the upside and the risk of quantum technologies. We deliver concise board and investor briefings; demystify quantum computing, sensing, and communications; craft national and corporate strategies to capture advantage; and turn plans into delivery. We help you mitigate the quantum risk by executing crypto‑inventory, crypto‑agility implementation, PQC migration, and broader defenses against the quantum threat. We run vendor due diligence, proof‑of‑value pilots, standards and policy alignment, workforce training, and procurement support, then oversee implementation across your organization. Contact me if you want help.