The Nervous System of Quantum Computing: A Deep Dive into Quantum Control Systems

(Updated in March 2026 after the Origin Pilot OS release)

In July 2025, Keysight Technologies shipped a piece of equipment to a research institute in Tsukuba, Japan, that most people outside the quantum industry had never heard of – yet without it, the 1,000-qubit quantum computer it was destined for would have been little more than an extraordinarily expensive refrigerator. The device was a quantum control system: a dense rack of electronics designed to translate human-readable quantum programs into the precisely timed electromagnetic pulses that manipulate individual qubits. It was, at the time, the largest such system ever commercially delivered.

That milestone tells a story the quantum computing industry has been slow to acknowledge. For years, the public narrative has centered on qubit counts, error rates, and algorithmic breakthroughs – the glamorous physics of the quantum processor itself. But between the quantum program written by a developer and the fragile quantum states dancing inside a dilution refrigerator or vacuum chamber, there sits a vast classical infrastructure that most roadmap presentations gloss over entirely. This is the domain of the quantum control system (QCS): the layer that generates, times, shapes, and measures the signals that make quantum computation physically happen.

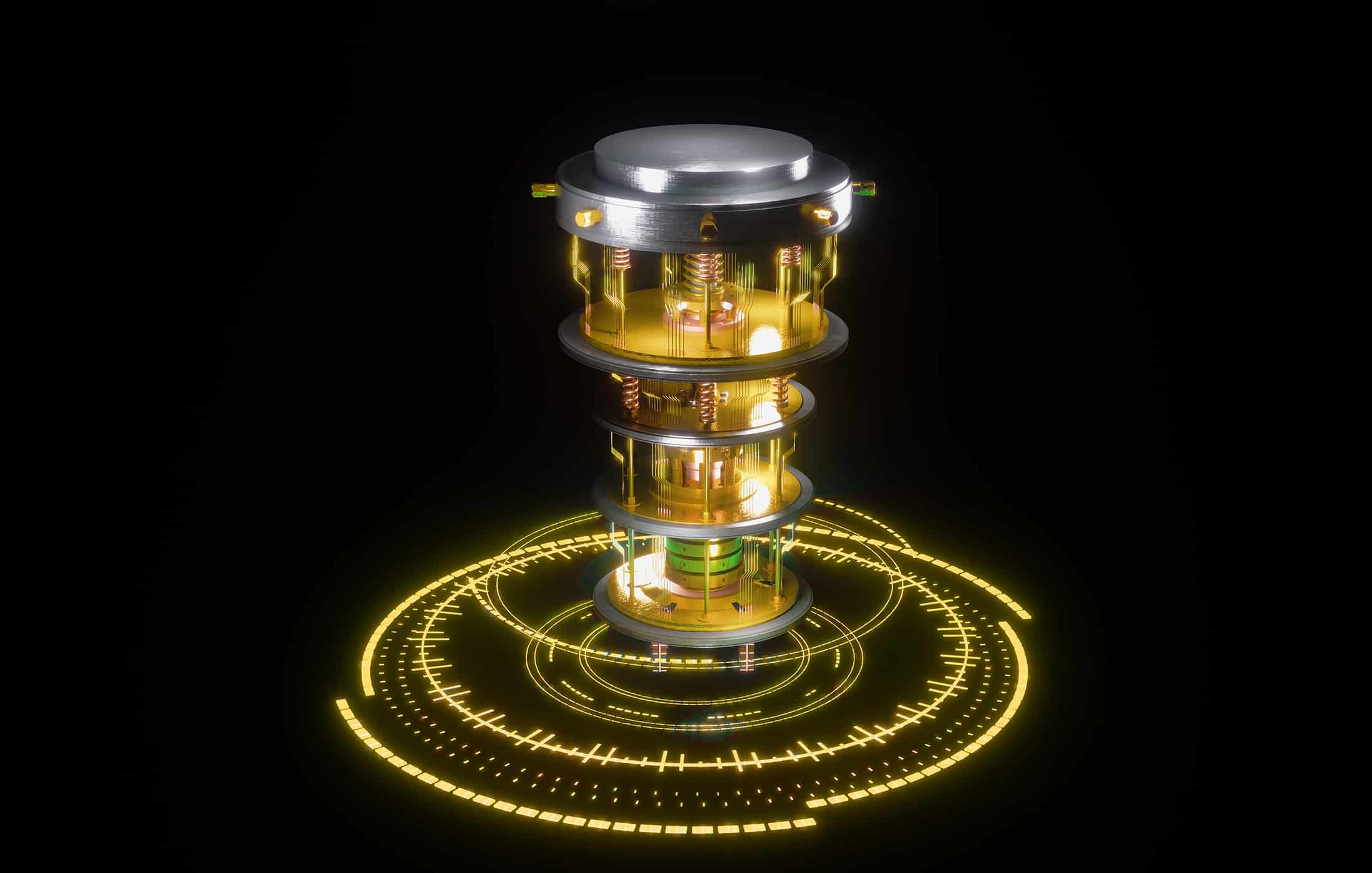

If the quantum processor is the brain of a quantum computer, the control system is its nervous system – the intricate wiring that converts intention into action and sensation into data. And as quantum computers scale from tens of qubits toward the thousands and millions needed for fault-tolerant computation, the control system is rapidly emerging as the most critical engineering bottleneck in the entire stack.

What a Quantum Control System Actually Does

At its most fundamental level, a quantum control system performs three functions: it generates the signals that manipulate qubits, it captures the signals that reveal qubit states, and it processes those signals fast enough to keep up with the quantum hardware’s unforgiving clock.

That sounds deceptively simple. In practice, it is anything but.

Consider a superconducting qubit – the kind used by IBM, Google, and dozens of startups. To perform a single-qubit gate, the control system must generate a microwave pulse at a precise frequency (typically between 4 and 8 GHz), with a carefully calibrated amplitude, duration (often around 20 nanoseconds), and phase. The pulse shape matters: a sloppy pulse excites unwanted transitions and introduces errors. A pulse that arrives even a nanosecond too early or too late can significantly degrade the intended operation. And because neighboring qubits interact with each other, the control system must account for crosstalk – the electromagnetic equivalent of shouting in a library.
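To make the pulse-shaping point concrete, here is a minimal sketch, assuming a resonant drive in the rotating frame and a truncated Gaussian envelope (a common textbook choice, not any particular vendor's default). The envelope is scaled so that its time integral equals π, which for a resonant drive is the rotation angle of an X gate. The optional quadrature component follows the DRAG idea of adding a scaled time derivative to suppress leakage into the next transmon level. The 20 ns duration matches the figure above; the 5 ns width, 0.5 ns sample spacing, and `drag_beta_ns` value are hypothetical, and in practice the DRAG coefficient and its sign convention are calibrated on hardware.

```python
import math

def trapezoid(values, dt):
    """Trapezoidal-rule integral of uniformly sampled values."""
    return dt * (sum(values) - 0.5 * (values[0] + values[-1]))

def gaussian_drag(duration_ns=20.0, sigma_ns=5.0, dt_ns=0.5,
                  rotation_rad=math.pi, drag_beta_ns=0.0):
    """Return (times, i_env, q_env) for a truncated Gaussian drive envelope.

    i_env is an angular Rabi rate in rad/ns, scaled so that its time integral
    equals rotation_rad (for a resonant drive, that integral is the rotation angle).
    q_env is a DRAG-style quadrature, -beta * dI/dt (beta and sign are assumptions).
    """
    n = int(round(duration_ns / dt_ns))
    t_mid = duration_ns / 2
    times = [k * dt_ns for k in range(n + 1)]
    # Subtract the edge value so the pulse starts and ends at exactly zero,
    # avoiding a sharp step that would broaden the pulse spectrum.
    edge = math.exp(-0.5 * (t_mid / sigma_ns) ** 2)
    shape = [math.exp(-0.5 * ((t - t_mid) / sigma_ns) ** 2) - edge for t in times]
    scale = rotation_rad / trapezoid(shape, dt_ns)
    i_env = [scale * s for s in shape]
    # Numerical derivative: one-sided at the ends, central differences inside.
    deriv = [0.0] * (n + 1)
    deriv[0] = (i_env[1] - i_env[0]) / dt_ns
    deriv[n] = (i_env[n] - i_env[n - 1]) / dt_ns
    for k in range(1, n):
        deriv[k] = (i_env[k + 1] - i_env[k - 1]) / (2 * dt_ns)
    q_env = [-drag_beta_ns * d for d in deriv]
    return times, i_env, q_env

times, i_env, q_env = gaussian_drag(drag_beta_ns=0.5)  # hypothetical beta
peak_mhz = max(i_env) / (2 * math.pi) * 1e3  # convert rad/ns to MHz
print(f"{len(times)} samples, peak Rabi rate {peak_mhz:.1f} MHz, "
      f"rotation {trapezoid(i_env, 0.5):.6f} rad")
```

Smoothing the edges costs a little pulse area, but a rectangular pulse of the same duration has a sinc-shaped spectrum with slowly decaying sidelobes, which is what makes it more likely to drive unwanted transitions.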

Now multiply that challenge across hundreds or thousands of qubits, each requiring its own calibrated control signals, each drifting slightly in frequency over time, each needing to be measured and re-measured in real time. The control system must orchestrate all of this with nanosecond precision across hundreds or thousands of synchronized analog channels, while also running classical feedback loops that process measurement outcomes and adjust subsequent operations – all within the coherence window of the qubits, which might be as short as a hundred microseconds.

This is part of why the quantum control systems market is projected to grow at more than 27 percent annually, reaching nearly $384 million by 2031. The physics of the qubit gets the headlines, but the engineering of the control system determines whether that physics can be harnessed at scale.

Control System vs. Quantum Operating System: Drawing the Line

A question that increasingly surfaces in industry discussions is where a quantum control system ends and a quantum operating system begins. The answer matters, because the two concepts address fundamentally different problems, and conflating them leads to muddled thinking about the quantum stack.

A quantum control system operates at the physical layer. It is concerned with signals: generating waveforms, timing pulses, digitizing readout responses, running real-time feedback loops. It deals in volts, gigahertz, and nanoseconds. Its job is to faithfully execute the lowest-level instructions that physically manipulate qubits – what the QNodeOS team at QuTech calls the “QDevice” layer. Most control systems today are built around FPGAs (field-programmable gate arrays) that can process and respond to signals with deterministic, sub-microsecond latency. They are, in effect, specialized real-time embedded systems.

A quantum operating system, by contrast, operates at the system software layer. It is concerned with abstraction: managing quantum resources, scheduling jobs, interfacing with compilers, coordinating classical and quantum processing, and, critically, providing a hardware-abstraction layer that decouples applications from specific physical implementations. When QuTech published the first implementation of QNodeOS in Nature in March 2025, they demonstrated a system that could execute quantum network applications in platform-independent high-level software across different types of quantum processors – a fundamentally different problem than generating the right microwave pulse at the right time.

The distinction is analogous to the relationship between a network interface card (NIC) and a network operating system in classical computing. The NIC handles the physical transmission and reception of electrical signals on the wire. The network OS manages connections, routes packets, allocates bandwidth, and presents a uniform interface to applications regardless of the underlying hardware. Both are essential, and they must work together seamlessly, but they solve different problems at different levels of abstraction.

In the quantum world, this boundary is still being defined – and some systems blur it deliberately. Quantum Machines’ Quantum Orchestration Platform (QOP), for example, combines a hardware control system (the OPX family) with a software layer (the QUA programming language) that incorporates elements of compilation, scheduling, and real-time decision-making that could reasonably be called “operating system” functions. Similarly, Zurich Instruments’ LabOne Q software provides a programming abstraction that sits above the hardware control layer. And Origin Pilot, the quantum OS by China’s Origin Quantum, incorporates job scheduling, resource management, and compilation alongside hardware control – a vertical integration that mirrors the early days of classical computing, when operating systems and hardware were tightly coupled.

The Quantum Open Architecture (QOA) movement is, in part, an effort to formalize this boundary – to define clean interfaces between control systems, operating systems, compilers, and applications so that the quantum ecosystem can mature in the same modular, interoperable way that the classical computing industry did. But today, the boundaries remain fluid, and understanding the control system layer on its own terms is essential for anyone trying to evaluate the maturity and scalability of a quantum computing platform.

The Anatomy of a Quantum Control System

Despite the diversity of qubit technologies – superconducting circuits, trapped ions, neutral atoms, photonic systems, silicon spin qubits – every quantum control system shares a common architectural backbone with three primary stages.

Signal Generation: Speaking the Qubit’s Language

The first stage translates digital instructions into analog signals that physically manipulate qubits. For superconducting qubits, this means generating precisely shaped microwave pulses in the 4–8 GHz range. For trapped ions, it means modulating laser beams with acousto-optic modulators or driving microwave transitions. For neutral atoms, it means controlling the intensity, frequency, and spatial addressing of laser fields that trap and excite atoms into Rydberg states. For photonic systems, it means generating photons (single photons or squeezed light, depending on the architecture) and routing them through networks of beam splitters and phase shifters.

The key specifications here are bandwidth, phase noise, spurious-free dynamic range (SFDR), and timing resolution. A control system with high phase noise will introduce phase errors that accumulate across a quantum circuit. Insufficient SFDR means unwanted spectral components can drive parasitic transitions. And timing jitter – variations in when pulses actually arrive – directly degrades gate fidelity, especially for two-qubit gates where the relative timing between control pulses on different qubits must be held to sub-nanosecond precision.
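A common rule of thumb connects phase noise and timing jitter: shifting a carrier of frequency f by a time dt shifts its phase by 2π·f·dt. The sketch below applies that relation and, as a simplification, treats the result as a static error in the rotation axis of a π pulse, for which the overlap with the ideal gate is |cos δ|. The 5 GHz carrier and the jitter values are illustrative assumptions; real jitter is random rather than static and also shifts the pulse envelope, so this only indicates orders of magnitude.

```python
import math

def jitter_to_phase_rad(carrier_hz, jitter_s):
    """Phase shift (radians) of a carrier of frequency f delayed by dt: 2*pi*f*dt."""
    return 2 * math.pi * carrier_hz * jitter_s

def pi_pulse_overlap(axis_error_rad):
    """|Tr(U_ideal^dagger U_actual)| / 2 for a pi rotation whose axis is off by the given angle."""
    return abs(math.cos(axis_error_rad))

for jitter_ps in (0.1, 1.0, 10.0):
    phi = jitter_to_phase_rad(5e9, jitter_ps * 1e-12)  # hypothetical 5 GHz carrier
    print(f"{jitter_ps:5.1f} ps at 5 GHz -> {math.degrees(phi):6.3f} deg, "
          f"pi-pulse overlap {pi_pulse_overlap(phi):.6f}")
```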

Signal Acquisition: Reading the Quantum Tea Leaves

The second stage captures and processes the faint signals that encode qubit measurement outcomes. In superconducting systems, this typically involves reading the frequency shift of a coupled microwave resonator – a signal so weak it often passes through a chain of quantum-limited amplifiers (such as Josephson parametric amplifiers) before reaching the control electronics. The readout electronics must digitize this signal, apply matched filtering or weighted integration to discriminate between qubit states, and deliver a binary outcome – all within microseconds.

Readout fidelity is a direct bottleneck for quantum error correction. If a control system cannot reliably distinguish |0⟩ from |1⟩, no amount of algorithmic cleverness can compensate. This is why vendors compete fiercely on readout specifications: multiplexed readout channel counts, integration times, and state discrimination fidelity.
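The readout chain described above can be sketched with a toy model, assuming a single quadrature, white Gaussian noise, and a resonator response that rings up exponentially toward opposite values for |0⟩ and |1⟩. For white noise, weighting each sample by the difference of the two mean traces is the matched filter; the integrated score is then compared with a threshold halfway between the two expected scores. All amplitudes, time constants, and noise levels are hypothetical, and the model ignores qubit relaxation during readout, which in real systems is a major source of assignment error.

```python
import math
import random

def mean_trace(n_samples, tau_samples, amplitude):
    """Toy resonator ring-up: the quadrature approaches `amplitude` with time constant tau."""
    return [amplitude * (1.0 - math.exp(-t / tau_samples)) for t in range(n_samples)]

def noisy(trace, sigma, rng):
    return [x + rng.gauss(0.0, sigma) for x in trace]

def integrate(trace, weights):
    """Weighted integration: collapses one shot's trace into a single score."""
    return sum(w * x for w, x in zip(weights, trace))

def assignment_fidelity(noise_sigma, n_shots=500, n_samples=200, tau_samples=20.0, seed=1):
    rng = random.Random(seed)
    ring = mean_trace(n_samples, tau_samples, 1.0)
    trace0 = [-x for x in ring]  # toy model: |0> moves the quadrature negative
    trace1 = ring                # ... and |1> moves it positive
    # Matched-filter weights for white noise: difference of the two mean traces.
    weights = [b - a for a, b in zip(trace0, trace1)]
    threshold = 0.5 * (integrate(trace0, weights) + integrate(trace1, weights))
    correct = 0
    for _ in range(n_shots):
        correct += integrate(noisy(trace0, noise_sigma, rng), weights) < threshold
        correct += integrate(noisy(trace1, noise_sigma, rng), weights) >= threshold
    return correct / (2 * n_shots)

for sigma in (1.0, 5.0, 20.0):
    print(f"noise sigma {sigma:5.1f}: assignment fidelity {assignment_fidelity(sigma):.4f}")
```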

Real-Time Processing: The Feedback Imperative

The third, and increasingly critical, stage is real-time classical processing that closes the loop between measurement outcomes and subsequent control actions. In the current era, this is most important for mid-circuit measurements (where a qubit is measured partway through a computation and the result determines what happens next) and for quantum error correction, where syndrome measurements must be decoded and correction operations applied before errors propagate.

The latency of this feedback loop is a hard engineering constraint. If it takes too long to process a measurement outcome and generate the corresponding corrective pulse, the qubit may have already decohered. For superconducting qubits with coherence times of a few hundred microseconds, the feedback loop must complete in single-digit microseconds – a requirement that pushes toward FPGA-based or ASIC-based processing rather than general-purpose CPUs, which cannot guarantee deterministic latency.
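The latency budget can be estimated with the amplitude-damping formula p = 1 - exp(-t/T1), the probability that an excited qubit relaxes while it waits for a feedback decision. The T1 of 200 µs and the three latencies below are illustrative assumptions, and the estimate ignores dephasing, which usually makes waiting even more costly.

```python
import math

def relaxation_probability(wait_s, t1_s):
    """Probability that an excited qubit decays (amplitude damping) while idling for wait_s."""
    return 1.0 - math.exp(-wait_s / t1_s)

T1 = 200e-6  # hypothetical T1 of 200 microseconds
for label, latency in [("FPGA-class, 1 us", 1e-6),
                       ("10 us", 10e-6),
                       ("hypothetical host round trip, 1 ms", 1e-3)]:
    print(f"{label:>36}: {relaxation_probability(latency, T1):.2%} chance of relaxation")
```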

This is where the distinction between a control system and an operating system becomes especially important. The real-time feedback loop is fundamentally a control-system function – it operates at the physical timescale of the qubits. But the decisions being made within that loop (which error correction code to apply, how to decode a syndrome, when to discard a qubit and reallocate) carry the flavor of operating-system resource management. The companies that can bridge this gap most elegantly will have a significant competitive advantage as the industry moves toward fault-tolerant computing.

The Control System Landscape by Qubit Modality

One of the most important things to understand about quantum control systems is that different qubit technologies impose radically different control requirements. A system designed for superconducting qubits is not trivially repurposed for trapped ions or neutral atoms. This modality dependence has spawned a diverse ecosystem of control system vendors and open-source projects, each optimized for the physics of a specific qubit type – though a few players are making credible bids for cross-modality relevance.

Superconducting Qubits: The Most Contested Battleground

Superconducting qubits – the technology used by IBM, Google, Rigetti, IQM, Alice & Bob, Qilimanjaro, and many others – require microwave control signals in the 4–8 GHz range, along with lower-frequency flux-control signals (DC to a few hundred MHz) for frequency-tunable qubits. Readout involves probing coupled resonators and discriminating qubit states from the reflected or transmitted microwave signal. The control electronics are almost always at room temperature, connected to the quantum processor inside a dilution refrigerator via a tangle of coaxial cables – a “wiring problem” that is itself a major scaling challenge.

Three companies dominate the commercial control system market for superconducting qubits, each with a distinct philosophy:

Quantum Machines (Tel Aviv, Israel) has positioned itself as the category leader with its OPX family of controllers, built around a proprietary processor architecture called the Pulse Processing Unit (PPU). Unlike traditional arbitrary waveform generators (AWGs) that replay pre-stored waveforms from memory, the PPU synthesizes pulses in real time from parametric descriptions – an approach that dramatically reduces memory requirements and enables adaptive, feedback-driven sequences without round-trip communication to a host computer. The company’s QUA programming language provides a pulse-level abstraction that is expressive enough for complex quantum error correction protocols yet accessible to researchers who are not hardware engineers. The latest OPX1000 platform supports scaling to over 1,000 qubits with any combination of low-frequency and microwave front-end modules, and Quantum Machines has deepened its position in the quantum hardware stack through the acquisition of Danish cryogenic electronics company QDevil, whose QDAC ultra-low-noise DACs and QFilter cryogenic filters complement the room-temperature control electronics. More than 200 deployments across academic labs, national laboratories, and commercial quantum computer builders – including a notable partnership with QuantWare to offer pre-integrated QPU-plus-control-system packages – make Quantum Machines the most widely adopted commercial control system in the superconducting qubit ecosystem.
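The memory argument can be illustrated with a deliberately simplified model: a replay instrument stores every distinct pulse as I/Q samples, while a parametric approach stores only a few numbers per pulse and renders samples on the fly. The `PulseSpec` class, the sine-squared envelope, the 2 GS/s sample rate, and the 16-bit sample size are hypothetical choices; this is not how QUA or the PPU are implemented, and real AWGs often soften the problem with hardware amplitude scaling and sequencing.

```python
import math
from dataclasses import dataclass

@dataclass(frozen=True)
class PulseSpec:
    """Hypothetical parametric pulse: three numbers instead of a list of samples."""
    amplitude: float
    duration_ns: float
    phase_rad: float

    def render(self, sample_rate_gsps=2.0):
        """Expand into (I, Q) samples with a sine-squared envelope (what a replay AWG would store)."""
        n = max(2, int(round(self.duration_ns * sample_rate_gsps)))
        env = [self.amplitude * math.sin(math.pi * k / (n - 1)) ** 2 for k in range(n)]
        return [(e * math.cos(self.phase_rad), e * math.sin(self.phase_rad)) for e in env]

def replay_bytes(specs, sample_rate_gsps=2.0, bytes_per_sample=2):
    """Memory if every distinct pulse is pre-rendered and stored as I and Q samples."""
    return sum(len(s.render(sample_rate_gsps)) * 2 * bytes_per_sample for s in specs)

def parametric_bytes(specs, bytes_per_param=4):
    """Memory if only the three parameters of each pulse are stored."""
    return len(specs) * 3 * bytes_per_param

# A 100-point amplitude sweep of 20 ns pulses, e.g. for a Rabi calibration.
sweep = [PulseSpec(amplitude=k / 100, duration_ns=20.0, phase_rad=0.0) for k in range(100)]
print("replay:", replay_bytes(sweep), "bytes; parametric:", parametric_bytes(sweep), "bytes")
```

The gap grows with pulse length: in this model a single 1 µs readout pulse already needs 8,000 bytes as stored samples but still only 12 bytes as parameters.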

Zurich Instruments (Zurich, Switzerland) takes a more test-and-measurement-rooted approach with its Quantum Computing Control System (QCCS). Built around a family of purpose-built instruments – the SHFSG+ signal generator, SHFQA+ quantum analyzer, SHFQC+ qubit controller, HDAWG arbitrary waveform generator, and PQSC programmable quantum system controller – the QCCS emphasizes analog signal quality above all else. Zurich Instruments’ double-superheterodyne frequency conversion scheme avoids the IQ mixer calibration headaches that plague many competing systems, and its SHF+ product line delivers signal-to-noise ratios that are among the highest on the market – an advantage that matters more and more as qubit coherence times improve and the control electronics can become the limiting factor in gate fidelity. The LabOne Q software provides a declarative, Python-based programming interface that abstracts away hardware configuration details while maintaining pulse-level control. Zurich Instruments’ customer base tends toward groups that prioritize signal purity and measurement precision, including several of the world’s leading academic quantum computing labs and spin-out companies from ETH Zurich.
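To see why IQ mixer calibration matters, consider the standard image-rejection formula for an IQ modulator with gain ratio g between its two arms and quadrature phase error φ: IRR = (1 + g² + 2g cos φ) / (1 + g² - 2g cos φ). The sketch below evaluates it for a few hypothetical imbalance values. It says nothing about Zurich Instruments' actual signal chain; it only quantifies the error that direct IQ upconversion has to be calibrated against.

```python
import math

def image_rejection_db(gain_ratio, phase_error_deg):
    """Image rejection ratio (dB) of an IQ mixer with gain imbalance and quadrature phase error.

    IRR = (1 + g^2 + 2 g cos(phi)) / (1 + g^2 - 2 g cos(phi)).
    A perfectly balanced mixer (g = 1, phi = 0) has infinite rejection.
    """
    phi = math.radians(phase_error_deg)
    num = 1 + gain_ratio ** 2 + 2 * gain_ratio * math.cos(phi)
    den = 1 + gain_ratio ** 2 - 2 * gain_ratio * math.cos(phi)
    if den <= 0.0:
        return math.inf
    return 10 * math.log10(num / den)

for g, phi in [(1.01, 1.0), (1.05, 3.0), (1.001, 0.1)]:
    print(f"gain ratio {g}, phase error {phi} deg -> image {image_rejection_db(g, phi):.1f} dB down")
```

With a 1% gain mismatch and a 1° phase error the image sits only about 40 dB below the wanted tone, and because mixer imbalance varies with frequency and drifts over time, that calibration has to be repeated.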

Keysight Technologies (Santa Rosa, USA) entered the quantum control market more recently but has moved aggressively, leveraging its deep legacy in RF test and measurement. The company’s PXI-based Quantum Control System, introduced in early 2023, has been adopted by Fujitsu and RIKEN for their 256-qubit quantum computer at the RIKEN RQC-FUJITSU Collaboration Center – and in July 2025, Keysight delivered the first commercial control system capable of supporting more than 1,000 superconducting qubits, installed at Japan’s National Institute of Advanced Industrial Science and Technology (AIST). Keysight’s differentiator is its broader quantum engineering ecosystem: in addition to control systems, the company offers Quantum EDA tools for chip design (QuantumPro), electromagnetic simulation, and the recently launched Quantum System Analysis platform for system-level simulation of superconducting qubit systems. This design-to-control integration appeals to organizations building quantum computers from the chip level up.

Beyond these three, several quantum computer builders have developed proprietary control systems tailored to their own hardware. IBM’s control electronics are deeply integrated into its quantum systems and not sold separately. Google has built custom control electronics for its processors, including the Sycamore and Willow chips. And a notable frontier is the integration of control electronics directly into the cryogenic environment – a recent Nature Electronics paper demonstrated a quantum processor unit where superconducting digital control electronics based on single-flux quantum (SFQ) logic were integrated with qubits in a single multi-chip module via flip-chip bonding, achieving single-qubit fidelities above 99% and up to 99.9%. This approach could eventually break the linear scaling of wiring that currently limits superconducting quantum computers.

Trapped Ions: ARTIQ and the Open-Source Tradition

Trapped-ion quantum computing – the technology pursued by Quantinuum (formed from the merger of Honeywell Quantum Solutions and Cambridge Quantum), IonQ, Alpine Quantum Technologies, eleQtron, and Oxford Ionics, among others – has a notably different control culture than the superconducting world. Where superconducting qubit labs have increasingly standardized on commercial control systems, the trapped-ion community has a deep tradition of building its own control infrastructure – and the dominant framework is open source.

ARTIQ (Advanced Real-Time Infrastructure for Quantum physics) is the de facto standard control system for trapped-ion experiments, and its influence extends well beyond that modality. Developed by M-Labs in partnership with the Ion Storage Group at NIST – the group long led by Nobel laureate David Wineland – ARTIQ provides a Python-based programming environment that compiles experiment code onto dedicated FPGA hardware with 1 ns timing resolution and sub-microsecond latency for conditional branching. The system was designed from the ground up for the complex, feedback-intensive control sequences that trapped-ion quantum computing demands: sequences where laser pulses must be precisely timed relative to ion motional modes, where measurement outcomes determine subsequent gate operations, and where calibration routines must run autonomously to compensate for slow drifts.
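One idea behind ARTIQ's design is that experiment code attaches an explicit timestamp to every output event, so pulse timing is fixed by the program rather than by how fast the code happens to run. The toy model below illustrates that idea in plain Python. The `Timeline` class, its methods, and the channel names are hypothetical and are not ARTIQ's API, which compiles kernels for the FPGA rather than building Python lists.

```python
from dataclasses import dataclass, field

@dataclass
class Timeline:
    """Toy timestamped-output model with 1 ns resolution (hypothetical; not ARTIQ's API).

    A cursor (now_ns) marks where the next event goes. Events are stored with
    absolute integer timestamps, so their timing is fixed before anything runs.
    """
    now_ns: int = 0
    events: list = field(default_factory=list)

    def delay(self, ns):
        self.now_ns += int(round(ns))  # quantize onto the 1 ns grid

    def pulse(self, channel, duration_ns):
        self.events.append((self.now_ns, channel, "on"))
        self.delay(duration_ns)
        self.events.append((self.now_ns, channel, "off"))

    def parallel(self, *blocks):
        """Start every block at the current time; continue after the longest one."""
        start = end = self.now_ns
        for block in blocks:
            self.now_ns = start
            block(self)
            end = max(end, self.now_ns)
        self.now_ns = end

t = Timeline()
t.pulse("cooling_aom", 1000)                       # 1 us of cooling
t.parallel(lambda tl: tl.pulse("raman_a", 10.4),   # rounded to 10 ns
           lambda tl: tl.pulse("raman_b", 15))
t.pulse("detection", 200)
for event in sorted(t.events):
    print(event)
```

Because `parallel` rewinds the cursor for each branch, the two Raman pulses start at the same nanosecond and the detection pulse begins only after the longer one ends.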

ARTIQ is tightly integrated with the Sinara open-source hardware ecosystem, which comprises over 50 modular boards – including high-speed DACs, digital-to-RF upconverters, and timing distribution systems – all licensed under the CERN Open Hardware License. This combination of open software and open hardware has made ARTIQ the backbone of dozens of trapped-ion labs worldwide, including groups at the University of Oxford, the University of Maryland, the Army Research Lab, Duke University, and ETH Zurich. Duke’s DAX (Duke ARTIQ Extensions) framework adds a modular software architecture on top of ARTIQ that enables code portability across different trapped-ion systems – and the QisDAX project bridges ARTIQ to the Qiskit ecosystem, providing the first open-source, end-to-end pipeline for remote submission of quantum programs to trapped-ion devices.

The Open Quantum Design (OQD) project is building what it describes as the world’s first open-source quantum computer on top of the ARTIQ/Sinara stack, with an architecture that spans from high-level quantum circuit descriptions (via OpenQASM and QIR) through analog Hamiltonian simulation interfaces down to the ARTIQ real-time control layer.

Commercial trapped-ion companies have largely built proprietary control systems, though many incorporate elements of or lessons from the ARTIQ ecosystem. Quantinuum’s System Model H-series machines use custom control electronics optimized for their specific trap architectures. IonQ has developed its own control stack tailored to its photonic interconnect approach. But even commercial teams often use ARTIQ or ARTIQ-derived systems for prototyping and early-stage hardware bring-up, a testament to the framework’s flexibility and the community’s trust in its reliability.

Quantum Machines has also made inroads into the trapped-ion world – the OPX platform’s flexibility in handling diverse signal types has attracted several trapped-ion research groups – but ARTIQ remains the community’s center of gravity.

Neutral Atoms: Laser Control and the Pulser Framework

Neutral-atom quantum computing – led by Pasqal, QuEra, and Atom Computing – presents a control challenge that is fundamentally optical rather than electronic. Qubits are individual atoms (typically alkali atoms such as rubidium or cesium, although alkaline-earth-like species such as strontium and ytterbium are also used) suspended in vacuum by tightly focused laser beams called optical tweezers. Quantum operations are performed by modulating laser fields to drive transitions between atomic energy levels, including the highly excited Rydberg states that enable long-range atom-atom interactions.

The control system for a neutral-atom machine therefore revolves around laser management: spatial light modulators (SLMs) and acousto-optic deflectors (AODs) that arrange atoms into arbitrary 2D or 3D configurations, precisely tuned lasers that drive qubit transitions, and camera-based imaging systems that read out atomic states by detecting fluorescence. The timing requirements are somewhat more relaxed than for superconducting systems – qubit coherence times can exceed one second for certain encoding schemes – but the spatial complexity is higher, as the control system must manage the positions, velocities, and internal states of hundreds or thousands of individual atoms simultaneously.

Pulser, developed by Pasqal and released as open source under the Apache 2.0 license, is the leading software framework for programming neutral-atom quantum processors. Unlike gate-level quantum programming frameworks, Pulser is designed at the pulse level – users define qubit arrangements (the spatial geometry of the atomic array) and then compose sequences of laser pulses that act on the system, specifying parameters like Rabi frequency, detuning, and phase as functions of time. This pulse-level approach is essential for neutral-atom systems, which can operate in both digital (gate-based) and analog (Hamiltonian simulation) modes, and Pulser supports both paradigms natively.
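For intuition about the parameters Pulser exposes, the simplest case is a single atom driven with constant Rabi frequency Ω and detuning Δ, for which the excited-state population follows the textbook Rabi formula P(t) = Ω²/(Ω² + Δ²) · sin²(√(Ω² + Δ²)·t/2). This closed form only illustrates the physics and is not Pulser's API; real sequences use time-dependent waveforms and interacting atoms, which is why a simulator is needed. The 1 MHz Rabi frequency and the rad/µs units are assumptions for the example.

```python
import math

def excited_population(rabi_rad_per_us, detuning_rad_per_us, t_us):
    """Two-level excitation probability from the ground state, constant Omega and Delta.

    P(t) = Omega^2 / (Omega^2 + Delta^2) * sin^2(sqrt(Omega^2 + Delta^2) * t / 2)
    """
    omega_eff = math.hypot(rabi_rad_per_us, detuning_rad_per_us)
    if omega_eff == 0.0:
        return 0.0
    return (rabi_rad_per_us / omega_eff) ** 2 * math.sin(omega_eff * t_us / 2) ** 2

omega = 2 * math.pi * 1.0  # hypothetical 1 MHz Rabi frequency, in rad/us
for delta in (0.0, omega, 3 * omega):
    t_peak = math.pi / math.hypot(omega, delta)  # time of the first population maximum
    print(f"detuning {delta / omega:.0f} x Omega: "
          f"peak population {excited_population(omega, delta, t_peak):.3f}")
```

Even this simple case shows why detuning must be controlled as carefully as amplitude: a detuning equal to Ω caps the excitation at 50%.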

Pulser includes a classical simulation backend built on QuTiP that allows users to emulate the behavior of their pulse sequences before submitting them to hardware, along with noise models that account for physical effects like Doppler shifts from atomic motion. Higher-level interfaces – including Pasqal’s Qadence library for quantum machine learning – build on Pulser to provide more abstract programming models. Pulser is used to program Pasqal’s cloud-accessible Fresnel processor and is the software entry point for the broader Pasqal ecosystem.

QuEra has taken a different approach, making its Aquila processor accessible through Amazon Braket and providing a higher-level interface that abstracts away much of the pulse-level detail. At the hardware level, QuEra’s control system manages the complex laser infrastructure – including the SLMs, AODs, and Rydberg excitation lasers – through custom electronics and software that are not publicly documented to the same degree as Pulser.

The intersection of neutral-atom control with optimization is an active area: Q-CTRL’s Boulder Opal toolkit has demonstrated significant performance improvements on QuEra’s Aquila hardware by applying model-based pulse optimization – designing laser control pulses that are inherently robust against amplitude fluctuations and other noise sources. In one demonstration, optimized control pulses delivered by Boulder Opal achieved over 3x higher probability on target states compared to standard flat-top pulses.

Photonic Systems: A Different Kind of Control

Photonic quantum computers – built by Xanadu, PsiQuantum, Quandela, and ORCA Computing, among others – require control systems that are fundamentally different from those used for matter-based qubits. Instead of manipulating the internal states of atoms or circuits, photonic control systems manage the generation, routing, and measurement of photons through networks of optical components.

For Xanadu’s continuous-variable approach, the control system manages squeezed light sources, programmable beam splitter networks implemented on silicon nitride photonic chips, phase shifters, and photon-number-resolving detectors. The company’s Aurora system – the first universal photonic quantum computer prototype, unveiled in early 2025 – operates at room temperature across four photonically interconnected server racks containing 35 photonic chips and 13 kilometers of fiber optics. The system is described as fully automated, capable of running for hours without human intervention – a testament to the maturity of its classical control infrastructure. Xanadu has developed its own software stack, including the open-source Strawberry Fields library for programming continuous-variable quantum circuits and the PennyLane framework for quantum machine learning.

PsiQuantum’s approach uses single-photon qubits generated and processed on silicon photonic chips manufactured at GlobalFoundries’ semiconductor fab. Because photons interact only weakly with heat and electromagnetic interference, PsiQuantum can colocate control electronics close to the qubits – a significant architectural advantage over today’s superconducting systems, where the control electronics typically sit at room temperature, connected to millikelvin qubits through a long, lossy chain of cables. PsiQuantum’s Omega chipset integrates photon sources, interferometric circuits, and single-photon detectors on chip, with inter-chip communication via standard telecom optical fiber.

The control challenge for photonic systems is less about generating precisely shaped electromagnetic pulses and more about managing optical alignment, stabilizing interferometric phases, synchronizing photon generation with detection, and implementing the classical feed-forward logic required by measurement-based quantum computing architectures. This is a different flavor of control engineering – more optical systems engineering than RF electronics engineering – and it explains why photonic quantum computing companies tend to build their control infrastructure entirely in-house rather than purchasing from the same vendors that serve the superconducting community.

Silicon Spin Qubits: Borrowing from the Semiconductor Toolbox

Silicon spin qubits – pursued by Intel, Diraq, CEA-Leti, IMEC, and several academic groups – encode quantum information in the spin states of individual electrons confined in quantum dots fabricated on silicon chips. The control signals are a mix of microwave pulses (for spin manipulation) and low-frequency voltage signals (for tuning the electrostatic potentials that define the quantum dots), operating at millikelvin temperatures in a dilution refrigerator.

The control requirements overlap significantly with those of superconducting qubits, and silicon spin qubit groups have adopted commercial control systems from both Quantum Machines and Zurich Instruments. Diraq, the UNSW Sydney spin-off founded by silicon spin qubit pioneer Andrew Dzurak, has specifically credited Quantum Machines’ OPX platform for its real-time capabilities in enabling their research. The QUA programming language’s ability to express complex feedback sequences is particularly valuable for semiconductor qubit systems, where real-time Hamiltonian estimation and adaptive tuning protocols are essential for maintaining qubit operation in the face of charge noise.

The long-term vision for silicon spin qubits – leveraging CMOS manufacturing to produce millions of qubits on a chip – will eventually demand a control paradigm shift. Today’s room-temperature control systems connected by cables cannot scale to millions of qubits. The industry’s answer is cryo-CMOS: classical control electronics fabricated in advanced semiconductor processes and operated at cryogenic temperatures, positioned close to or on the same chip as the qubits themselves. Intel’s Horse Ridge and Horse Ridge II cryogenic controllers are early steps in this direction, integrating qubit control functions onto a single chip that operates at approximately 4 kelvin. This approach echoes the SFQ-based cryogenic control demonstrated for superconducting qubits, and it may eventually make the room-temperature control rack a relic of the early quantum era.

The Firmware Layer: Q-CTRL and the Art of Pulse Optimization

Between the raw signal generation of a control system and the gate-level abstractions of a quantum programming framework sits an increasingly important intermediate layer that the Australian company Q-CTRL calls “quantum firmware.” This layer uses optimal control theory – the mathematics of finding the best way to drive a dynamical system toward a desired state – to design control pulses that are inherently robust against noise and hardware imperfections.

The concept is straightforward: rather than applying a simple square pulse to rotate a qubit, you apply a carefully shaped pulse that achieves the same rotation but is far less sensitive to amplitude fluctuations, frequency drift, crosstalk, and other error sources. The math is well understood – it’s a direct descendant of decades of work in NMR spectroscopy and aerospace control theory – but the computational challenge of optimizing pulses for multi-qubit systems with realistic hardware constraints is formidable, which is why Q-CTRL’s cloud-based optimization infrastructure has found a market.
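Composite pulses from NMR give a compact, concrete example of this idea. The sketch below compares a plain π pulse with Wimperis's BB1 sequence, which combines the target rotation θ with a correction block of π(φ1), 2π(3φ1), π(φ1) rotations, where φ1 = arccos(-θ/4π), here applied before the target pulse. Every rotation angle is scaled by the same fractional amplitude error ε. BB1 is a swapped-in classic technique chosen for illustration, not Q-CTRL's optimization method, and the fidelity measure |Tr(U†V)|/2 is a simple phase-insensitive overlap.

```python
import cmath
import math

def rotation(theta, phi):
    """2x2 unitary rotating by theta about the Bloch-sphere axis (cos phi, sin phi, 0)."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    return [[c, -1j * s * cmath.exp(-1j * phi)],
            [-1j * s * cmath.exp(1j * phi), c]]

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)] for i in range(2)]

def sequence_unitary(pulses, amp_error):
    """Apply (theta, phi) pulses in time order; every angle is scaled by (1 + amp_error)."""
    u = [[1, 0], [0, 1]]
    for theta, phi in pulses:
        u = matmul(rotation(theta * (1 + amp_error), phi), u)
    return u

def overlap_fidelity(u, v):
    """|Tr(u^dagger v)| / 2: equals 1 when v matches u up to a global phase."""
    tr = sum(u[i][j].conjugate() * v[i][j] for i in range(2) for j in range(2))
    return abs(tr) / 2

def square_pi(amp_error):
    return sequence_unitary([(math.pi, 0.0)], amp_error)

def bb1_pi(amp_error):
    theta = math.pi
    phi1 = math.acos(-theta / (4 * math.pi))
    correction = [(math.pi, phi1), (2 * math.pi, 3 * phi1), (math.pi, phi1)]
    return sequence_unitary(correction + [(theta, 0.0)], amp_error)

target = rotation(math.pi, 0.0)
for eps in (0.0, 0.05, 0.1, 0.2):
    print(f"eps={eps:4.2f}  square={overlap_fidelity(target, square_pi(eps)):.6f}  "
          f"BB1={overlap_fidelity(target, bb1_pi(eps)):.6f}")
```

At ε = 0.1 the plain pulse's overlap drops to cos(πε/2) ≈ 0.988, while BB1 cancels the error to first and second order in ε, so its overlap stays much closer to 1, at the cost of five times the pulse duration at a fixed drive strength.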

Q-CTRL’s product suite reflects the breadth of this firmware layer. Boulder Opal provides professional-grade tools for hardware developers to design, automate, and scale quantum control – compatible with superconducting, trapped-ion, and silicon qubit architectures. Fire Opal, aimed at algorithm developers, provides automated error suppression and performance optimization for quantum circuits run on cloud-accessible hardware, with independently validated performance improvements of up to 9,000x over baseline techniques. The company has partnerships with IBM, NVIDIA, AWS, IonQ, and Quantum Machines, whose OPX platform integrates Q-CTRL’s firmware as an additional optimization layer.

The emergence of quantum firmware as a distinct commercial category underscores a broader truth about the quantum control stack: raw hardware performance is necessary but not sufficient. The intelligence embedded in the control signals – the shapes, timings, and adaptive strategies – is increasingly what separates a quantum computer that can run meaningful algorithms from one that cannot.

The Scaling Crisis: Why Control Systems Are the Next Bottleneck

As quantum computers scale from the current era of hundreds of qubits toward the thousands and millions needed for fault-tolerant computation, the control system faces a set of engineering challenges that are every bit as daunting as the physics of the qubits themselves.

The most obvious challenge is the wiring problem. Today’s superconducting quantum computers require multiple coaxial cables per qubit – typically 2–3 for control and 1 for readout – running from room-temperature electronics through the stages of a dilution refrigerator to the millikelvin quantum processor. A 1,000-qubit system might need 3,000 or more cables, each contributing heat load to the cryogenic environment and introducing opportunities for signal degradation. At 10,000 qubits, this approach becomes physically untenable. Solutions under development include cryogenic multiplexing (sharing cables among multiple qubits using frequency-division or time-division techniques), cryogenic control electronics that operate inside the refrigerator, and photonic interconnects that replace coaxial cables with optical fiber.
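The cable arithmetic above is easy to reproduce. The sketch counts dedicated control lines per qubit plus readout lines, optionally shared by several frequency-multiplexed qubits; the default of two control lines and the 8:1 readout multiplexing ratio are illustrative assumptions, not figures for any specific system.

```python
import math

def coax_lines(n_qubits, control_per_qubit=2, readout_qubits_per_line=1):
    """Cable count: dedicated control lines per qubit, plus readout lines each shared
    by `readout_qubits_per_line` frequency-multiplexed qubits."""
    readout_lines = math.ceil(n_qubits / readout_qubits_per_line)
    return n_qubits * control_per_qubit + readout_lines

for n in (100, 1_000, 10_000):
    print(f"{n:>6} qubits: {coax_lines(n):>6} lines unmultiplexed, "
          f"{coax_lines(n, readout_qubits_per_line=8):>6} with 8:1 readout multiplexing")
```

Multiplexing readout alone still leaves more than 21,000 lines at 10,000 qubits in this model, because the per-qubit control lines dominate; that is the motivation for moving control electronics into the refrigerator or multiplexing control as well.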

The second challenge is calibration drift. Qubit parameters – frequencies, coupling strengths, coherence times – shift over time due to environmental fluctuations, material aging, and quasi-particle poisoning. A control system that was perfectly calibrated in the morning may be significantly miscalibrated by afternoon. Today, recalibration is a semi-manual process that consumes a substantial fraction of system uptime. At scale, it must become fully autonomous – a control system that continuously monitors and adapts to hardware drift without human intervention.
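Moving from semi-manual to autonomous recalibration amounts to closing a feedback loop around the hardware. The Python below is a toy model that rests on several stated assumptions:

- The qubit frequency drifts linearly by a hypothetical 2 kHz per step.
- Each step yields one noisy detuning measurement, which stands in for a Ramsey-type experiment.
- The hypothetical `DriftTracker` re-centers its frequency estimate only when the apparent detuning exceeds an assumed 20 kHz threshold.

Real calibration stacks track many coupled parameters, not a single frequency.

```python
import random
from dataclasses import dataclass


@dataclass
class DriftTracker:
    """Hypothetical closed-loop tracker for a single qubit frequency."""
    estimate_hz: float              # frequency the control system currently drives at
    threshold_hz: float = 20e3      # assumed tolerance before recalibrating
    recalibrations: int = 0

    def update(self, measured_detuning_hz: float) -> None:
        """Re-center the estimate if the measured detuning is out of tolerance."""
        if abs(measured_detuning_hz) > self.threshold_hz:
            self.estimate_hz += measured_detuning_hz
            self.recalibrations += 1


def simulate(steps: int = 200, drift_per_step_hz: float = 2e3,
             noise_hz: float = 3e3, seed: int = 1) -> tuple[float, float, int]:
    """Return (worst tracked error, error without recalibration, recalibrations)."""
    rng = random.Random(seed)
    true_hz = 5.0e9                                   # assumed starting qubit frequency
    tracker = DriftTracker(estimate_hz=true_hz)
    worst_tracked_hz = 0.0
    for _ in range(steps):
        true_hz += drift_per_step_hz                  # slow environmental drift
        detuning_hz = true_hz - tracker.estimate_hz
        # Noisy measurement standing in for a Ramsey-type experiment.
        tracker.update(detuning_hz + rng.gauss(0.0, noise_hz))
        worst_tracked_hz = max(worst_tracked_hz, abs(true_hz - tracker.estimate_hz))
    untracked_hz = steps * drift_per_step_hz          # offset if never recalibrated
    return worst_tracked_hz, untracked_hz, tracker.recalibrations


if __name__ == "__main__":
    worst, untracked, n = simulate()
    print(f"worst tracked error {worst / 1e3:.1f} kHz vs {untracked / 1e3:.0f} kHz "
          f"untracked, after {n} recalibrations")
```

The threshold trades calibration overhead against accuracy. A lower threshold keeps the drive closer to resonance but spends more machine time recalibrating. A threshold set too close to the measurement noise triggers recalibrations on noise alone.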

The third challenge is real-time decoding for quantum error correction. Fault-tolerant quantum computing requires measuring syndromes (error indicators) at every error correction cycle – potentially millions of times per second – and decoding those syndromes into correction operations faster than errors accumulate. The computational demands of syndrome decoding scale with code size, and the latency requirements are brutally strict. If the decoder processes syndromes more slowly than the hardware produces them, unprocessed data piles up in a backlog that grows with every cycle. Every operation that depends on knowing the correction is then delayed. At scale, this is not a problem that can realistically be solved in software running on a general-purpose processor; it calls for dedicated hardware (FPGAs or ASICs) tightly integrated into the control system.
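A deliberately tiny example can illustrate both halves of the decoding problem: producing a correction, and keeping up with the syndrome stream.

- **Producing a correction.** The Python sketch below decodes a bit-flip repetition code, assuming perfect syndrome measurements. For this code, choosing the lighter of the two error patterns consistent with the syndrome gives the minimum-weight correction. Production decoders for codes such as the surface code are far more complex.
- **Keeping up.** The `backlog_rounds` helper models a serial decoder. It assumes a 1 µs cycle time and uses hypothetical decode times.

All function names are illustrative.

```python
def measure_syndrome(bits: list[int]) -> list[int]:
    """Parity of each adjacent pair of data bits (1 means they disagree)."""
    return [bits[i] ^ bits[i + 1] for i in range(len(bits) - 1)]


def decode_repetition(syndrome: list[int]) -> list[int]:
    """Minimum-weight correction for a bit-flip repetition code.

    Assumes perfect syndrome measurements (no measurement errors).
    """
    # Rebuild one error pattern consistent with the syndrome, taking bit 0 as unflipped.
    candidate = [0]
    for s in syndrome:
        candidate.append(candidate[-1] ^ s)
    # The only other consistent pattern is its complement; keep the lighter one.
    complement = [1 - b for b in candidate]
    return candidate if sum(candidate) <= sum(complement) else complement


def backlog_rounds(rounds: int, cycle_ns: int, decode_ns: int) -> int:
    """Approximate rounds still queued when the hardware finishes `rounds` cycles.

    A serial decoder needing decode_ns per round completes
    (elapsed // decode_ns) rounds in the time the hardware produces `rounds`.
    """
    elapsed_ns = rounds * cycle_ns
    decoded = min(rounds, elapsed_ns // decode_ns)
    return rounds - decoded


if __name__ == "__main__":
    received = [0, 1, 1, 0, 0]          # logical 0 (00000) with flips on bits 1 and 2
    syndrome = measure_syndrome(received)
    correction = decode_repetition(syndrome)
    corrected = [r ^ c for r, c in zip(received, correction)]
    print(syndrome, corrected)          # [1, 0, 1, 0] [0, 0, 0, 0, 0]
    print(backlog_rounds(1_000_000, cycle_ns=1_000, decode_ns=1_200))  # 166667
    print(backlog_rounds(1_000_000, cycle_ns=1_000, decode_ns=800))    # 0
```

In this model, a decoder only 20% slower than the cycle time falls about 167,000 rounds behind after one second of operation. Sustained throughput, not just the latency of a single decode, must therefore match the hardware's cycle rate.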

These challenges are driving a fundamental rethinking of quantum control system architecture. The future control system will not be a rack of room-temperature electronics connected to a refrigerator by cables. It will be a distributed, heterogeneous computing system spanning multiple temperature stages, incorporating cryogenic processors alongside room-temperature FPGAs and GPUs, connected by a mix of electrical and photonic links, and running a complex stack of real-time software that manages calibration, error correction, and classical-quantum orchestration simultaneously.

This is, in essence, the quantum systems integration challenge – and it is why the control system layer, far from being a solved problem, is one of the most active frontiers in quantum engineering.

Strategic Implications: What This Means for the Industry

For organizations evaluating quantum computing platforms – whether as end users, investors, or technology partners – the control system landscape offers several strategic insights.

First, control system maturity is a reliable proxy for platform maturity. A quantum computing company that has invested heavily in its control infrastructure – whether building its own or integrating with established vendors – is signaling that it understands the full-stack engineering challenge of quantum computing. Conversely, a company that presents impressive qubit metrics but glosses over its control architecture may be further from a scalable system than its headlines suggest.

Second, the control system ecosystem is consolidating around a few key players, but modality dependence limits winner-take-all dynamics. Quantum Machines, Zurich Instruments, and Keysight are competing fiercely for the superconducting qubit market, while ARTIQ dominates in trapped ions and Pulser is the standard for neutral atoms. A control system vendor that can credibly serve multiple modalities – as Quantum Machines is attempting – will have a significant structural advantage as the industry matures and cross-modality integration becomes more important.

Third, the control-to-OS boundary will define competitive dynamics in the next era. As quantum computers scale, the control system will absorb more and more functions that we currently think of as operating system responsibilities: autonomous calibration, real-time error correction, dynamic resource allocation. Companies that can build this integrated control-OS stack – whether from the bottom up (Quantum Machines, Zurich Instruments) or the top down (IBM, Google, Origin Quantum) – will be best positioned to deliver fault-tolerant quantum computing as a service.

Fourth, for organizations building or acquiring quantum computers, the choice of control system is not merely a procurement decision – it is an architectural commitment that will constrain and enable future capabilities. The control system determines which quantum error correction protocols are feasible, how fast calibration can run, how tightly classical and quantum processing can be integrated, and how far the system can scale. It deserves the same strategic attention that organizations devote to choosing a cloud provider or an enterprise operating system.

The Road Ahead

The quantum control system is, in many ways, the unsung hero of quantum computing – the layer that translates theoretical possibility into physical reality. For years, it has been treated as plumbing: necessary, unglamorous, and somebody else’s problem. That era is ending.

As the industry pushes toward fault-tolerant quantum computation, the control system is moving from the background to center stage. The challenges it must solve – cryogenic integration, autonomous calibration, real-time error decoding, multi-modality support – are among the hardest engineering problems in quantum computing. And the companies and research groups that solve them will hold the keys to the quantum future.

Quantum Upside & Quantum Risk - Handled

My company, Applied Quantum, helps governments, enterprises, and investors prepare for both the upside and the risk of quantum technologies. We deliver concise board and investor briefings; demystify quantum computing, sensing, and communications; craft national and corporate strategies to capture advantage; and turn plans into delivery. We help you mitigate the quantum risk by executing crypto‑inventory, crypto‑agility implementation, PQC migration, and broader defenses against the quantum threat. We run vendor due diligence, proof‑of‑value pilots, standards and policy alignment, workforce training, and procurement support, then oversee implementation across your organization. Contact me if you want help.