The CRQC Scorecard: How Close Is Each Quantum Modality to Breaking Your Encryption?


Yesterday, two papers landed that set social media on fire.

Google Quantum AI published a landmark resource estimate showing that fewer than 500,000 superconducting qubits could break Bitcoin’s elliptic curve cryptography in under nine minutes. Hours later, a team from Oratomic, Caltech, and UC Berkeley — including some of the most credible names in fault-tolerant quantum computing — dropped a paper claiming that Shor’s algorithm can be executed at cryptographically relevant scales with as few as 10,000 neutral atom qubits.

Predictably, the reaction was a mix of breathless hype and genuine concern. “Q-Day is around the corner!” proclaimed dozens of posts. Crypto Twitter panicked. Vendors rushed to sell quantum-safe solutions.

I understand the alarm. These are serious papers from serious researchers. The numbers are genuinely lower than anyone expected. And they come on top of a cascade of resource estimation breakthroughs over the past year that have collectively reduced the estimated cost of breaking RSA-2048 by roughly 2,000×: from 20 million physical qubits to potentially around 10,000.

But the gap between “a paper says 10,000 qubits could work” and “a machine actually breaks your encryption” remains vast. Not because the papers are wrong, but because actually building a quantum computer that meets the paper’s assumptions is an engineering challenge of extraordinary complexity.

This article provides the reality check. Using my CRQC Quantum Capability Framework and its three executive-level metrics — LQC, LOB, and QOT — I’ll map every major resource estimation paper published in the past year against the latest hardware achievements from each quantum computing modality. I’ll show you the gap in concrete, quantified terms that a CISO can take to their board.

My bottom line, though, is nuanced. The gap is real and significant today. But I also believe we’ve crossed a threshold: the remaining challenges are primarily engineering problems, not fundamental scientific ones. And when this much money, talent, and geopolitical urgency is flowing into solving engineering problems, those problems tend to fall — one after another, and often faster than anyone predicted. I do believe we will see a cryptographically relevant quantum computer (CRQC) within a few years. Which is precisely why the time to act is now.

The Three Metrics That Define the CRQC Threat

Before diving into the numbers, a word on methodology. My CRQC Quantum Capability Framework decomposes the path to a CRQC into nine interdependent hardware capabilities across four layers — from foundational physics (quantum error correction, syndrome extraction, below-threshold operation, qubit connectivity) through logical gates (Clifford gates, magic state production) to system-level execution (algorithm integration, decoder performance, continuous operation) plus the cross-cutting challenge of engineering scale and manufacturability.

Tracking all nine capabilities individually is essential for researchers and engineers. But for executive communication — and for the kind of cross-modality comparison this article attempts — I compress them into three aggregate metrics, as I described in my CRQC Readiness Benchmark:

Logical Qubit Capacity (LQC) — How many error-corrected logical qubits can the system maintain simultaneously? This is the “workspace” for the algorithm. For RSA-2048, current best estimates require roughly 1,399 logical qubits. LQC is primarily driven by QEC code efficiency, below-threshold operation, qubit connectivity, and raw physical qubit count.

Logical Operations Budget (LOB) — How many non-trivial logical operations (Toffoli/T-gates) can the machine execute before accumulated errors corrupt the result? Think of this as computational endurance. For RSA-2048, the requirement is roughly 6.5 billion Toffoli gates at error rates so low that the entire computation has a >93% chance of completing without a single logical error. LOB depends on logical gate fidelity, magic state production quality, decoder accuracy, and code distance.

Quantum Operations Throughput (QOT) — How fast can those logical operations be performed? This determines whether the computation finishes in days or centuries. For RSA-2048, the machine needs to sustain roughly a million error correction cycles per second for approximately five continuous days. QOT is driven by physical gate speed, decoder latency, syndrome extraction speed, and the ability to operate continuously without interruption.
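The LOB and QOT figures above reduce to simple arithmetic. The sketch below assumes independent, identically distributed logical faults (a simplification introduced here for illustration, not part of the cited estimates). It derives the per-Toffoli error budget implied by a 93% success target and the number of error correction cycles in a five-day run. The function names are illustrative.

```python
import math

SECONDS_PER_DAY = 86_400


def max_error_per_gate(num_gates: float, target_success: float) -> float:
    """Largest per-gate error p with (1 - p)**num_gates >= target_success.

    Assumes independent, identical gate failures (illustrative simplification).
    """
    # Solve (1 - p)^N = S for p; expm1 keeps precision when p is tiny.
    return -math.expm1(math.log(target_success) / num_gates)


def total_qec_cycles(cycles_per_second: float, runtime_days: float) -> float:
    """Number of error correction cycles in one continuous run."""
    return cycles_per_second * runtime_days * SECONDS_PER_DAY


# Figures quoted in the text for RSA-2048.
p_max = max_error_per_gate(num_gates=6.5e9, target_success=0.93)
cycles = total_qec_cycles(cycles_per_second=1e6, runtime_days=5)

print(f"Per-Toffoli logical error budget: {p_max:.1e}")
print(f"QEC cycles over five days: {cycles:.2e}")
```

Under these assumptions the budget comes out on the order of 10⁻¹¹ per Toffoli, and the run spans a few hundred billion cycles.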

I want to be transparent about the limitations of this approach. Compressing nine deeply interdependent capabilities into three metrics requires assumptions — and those assumptions can be wrong. Different QEC codes produce different LQC-to-physical-qubit ratios. Different architectures distribute the LOB burden differently between magic state factories and logical gate layers. Different modalities have fundamentally different QOT profiles. This three-metric framework is a deliberate simplification — useful for executive comparison, but no substitute for the full capability-level analysis when making detailed risk assessments.

Mapping individual experimental papers to these aggregate metrics is also inherently imperfect. I may have missed relevant papers, or there may be better ways to link specific achievements to these complex, interdependent metrics. The relationships between the nine underlying capabilities are non-linear — a breakthrough in one can shift the requirements on others in ways that are hard to predict. This is, in fact, one reason why predicting Q-Day is so difficult: we don’t know which engineering challenge will fall first, and when it does, it may dramatically reshape what the remaining challenges look like. I welcome corrections and suggestions from readers — please reach out via my contact page.

The Shrinking Target: How Resource Estimates Have Evolved

Before evaluating how close hardware is, we need to understand what it’s aiming at. The “demand side” of the CRQC equation — the algorithmic resource requirements — is a moving target, and it’s been moving in the wrong direction for defenders.

Gidney & Ekerå 2021: The 20-Million-Qubit Baseline

The foundational modern estimate established that breaking RSA-2048 would require roughly 20 million physical qubits, approximately 6,189 logical qubits (LQC), about 3 billion Toffoli gates (LOB), and around 8 hours of runtime — using surface codes on a square grid of superconducting qubits with 0.1% physical error rates and 1 µs cycle times.

Gidney 2025: Under One Million Qubits

In May 2025, Google’s Craig Gidney published “How to factor 2048 bit RSA integers with less than a million noisy qubits,” combining approximate residue arithmetic, yoked surface codes, and magic state cultivation to achieve: approximately 897,864 physical qubits, only 1,399 logical qubits (LQC — a 4.4× reduction from 2021), 6.5 billion Toffoli gates (LOB), and about 4.96 days runtime. Same physical assumptions as 2021.

Gidney himself noted that he saw “no way to reduce the qubit count by another order of magnitude” under the same assumptions; he couldn’t plausibly claim that a hundred thousand noisy qubits would suffice. Yet, as he wrote, “attacks always get better.”

The Pinnacle Architecture (February 2026): Under 100,000 Qubits

Nine months later, Iceberg Quantum got there by changing the assumptions. The Pinnacle Architecture used quantum LDPC (qLDPC) codes instead of surface codes to estimate RSA-2048 factoring with fewer than 100,000 physical qubits.

But — and this is a crucial nuance that many commentators missed — Pinnacle didn’t simply make the problem easier. It shifted the challenge. The 10× reduction in physical qubits came from assuming efficient qLDPC codes with much higher encoding rates than surface codes. These codes require non-local connectivity between physical qubits (not just nearest-neighbor), sophisticated “magic engines” for continuous T-gate production, and fast, accurate decoders for codes with much more complex syndrome structures than surface codes. None of these have been demonstrated on real hardware at the required scale. So while Pinnacle reduced the demand on physical qubit count (Capability E.1), it increased the demands on qubit connectivity (B.4), magic state production (C.2), and decoder performance (D.2). The total difficulty didn’t vanish — it migrated. This is a perfect illustration of why predicting Q-Day is so difficult: breakthroughs in one capability can shift — not eliminate — requirements onto others.

Critically, Pinnacle also provided estimates for non-superconducting hardware. With millisecond cycle times (typical of trapped-ion or neutral-atom platforms), factoring is feasible in about one month at ~100,000 physical qubits and 10⁻⁴ error rates. This opened the CRQC conversation beyond superconducting-only architectures.

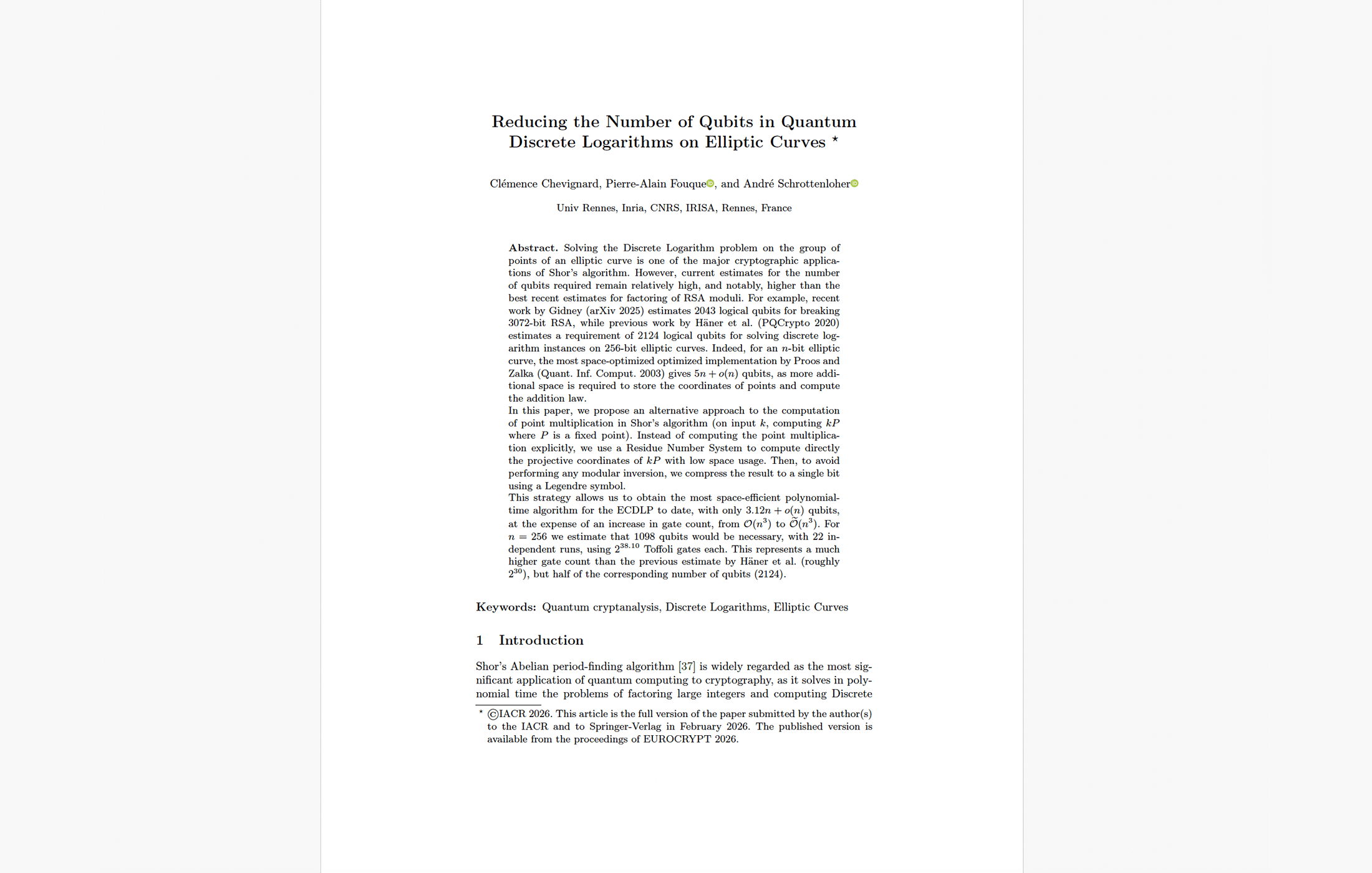

Google Quantum AI (March 31, 2026): Breaking Cryptocurrency in Minutes

Yesterday’s Google paper on ECDLP-256 achieved a 10× reduction in the resources needed to break elliptic curve cryptography. Using surface codes on superconducting qubits, the team estimated fewer than 500,000 physical qubits could solve the ECDLP on 256-bit curves in under 9 minutes. Google’s optimized circuits require no more than 1,200–1,450 logical qubits (LQC) and just 70–90 million Toffoli gates (LOB). Against the ~2.7 × 10¹¹ gates of the earlier Chevignard et al. estimate, that is roughly 3,000–4,000× fewer gates, which is why the runtime drops from hours to minutes.

Oratomic/Caltech/UC Berkeley (March 31, 2026): 10,000 Qubits

Published the same day, this paper claims Shor’s algorithm can run with as few as 10,000 reconfigurable neutral atom qubits — using Google’s optimized circuits on a fundamentally different architecture with high-rate qLDPC codes at approximately 30% encoding rate. Their largest code encodes 1,480 logical qubits in just 5,278 physical qubits. For RSA-2048, the underlying algorithmic requirements remain similar to Gidney 2025: ~1,399 logical qubits (LQC) and ~6.5 × 10⁹ Toffoli gates (LOB).

The same trade-off dynamic applies here as with Pinnacle: the dramatic physical qubit reduction comes from assuming qLDPC codes that have never been implemented on any hardware, and that place even greater demands on qubit connectivity and decoder complexity.

The qLDPC Revolution: A Cross-Cutting Trend

What ties the Pinnacle and Oratomic papers together — and connects to IBM’s independently developed bicycle codes and Photonic Inc.’s SHYPS codes — is the rise of quantum low-density parity-check (qLDPC) codes. These codes encode multiple logical qubits per code block with far higher efficiency than surface codes, potentially reducing physical-to-logical overhead by 10–20×. This is the single most impactful cross-cutting trend in quantum error correction today. But it comes with a trade-off: qLDPC codes require non-local qubit connectivity that is challenging for fixed-grid architectures like superconducting chips, and their decoding is significantly more complex.
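To make "overhead" concrete, the following sketch computes physical qubits per logical qubit for the Oratomic code block quoted above and for a textbook rotated surface code patch, which uses d² data qubits plus d² − 1 measurement qubits. It counts memory only: magic state factories, routing space, and the fact that the two codes offer different levels of error protection are all ignored, so the numbers are not an apples-to-apples comparison.

```python
def physical_per_logical(physical: int, logical: int) -> float:
    """Physical qubits spent per encoded logical qubit (memory only)."""
    return physical / logical


def rotated_surface_code_qubits(distance: int) -> int:
    """One logical qubit: d*d data qubits plus d*d - 1 measurement qubits."""
    return 2 * distance * distance - 1


# Oratomic's largest code as quoted in the text: 1,480 logical in 5,278 physical.
qldpc_overhead = physical_per_logical(physical=5278, logical=1480)
qldpc_rate = 1 / qldpc_overhead

print(f"qLDPC block: {qldpc_overhead:.2f} physical per logical (rate {qldpc_rate:.0%})")
for d in (3, 5, 7):  # illustrative distances only
    print(f"surface code d={d}: {rotated_surface_code_qubits(d)} physical per logical")
```

The distances in the loop are arbitrary examples. A real comparison would fix the target logical error rate first and then pick the distance of each code to match it.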

Summary: The Demand Curve Is Falling Fast

| Estimate | Year | Physical Qubits | Logical Qubits (LQC) | Non-Clifford Gates (LOB) | Runtime |
|---|---|---|---|---|---|
| Gidney & Ekerå | 2021 | ~20,000,000 | ~6,189 | ~3 × 10⁹ | ~8 hours |
| Gidney | 2025 | ~900,000 | ~1,399 | ~6.5 × 10⁹ | ~5 days |
| Pinnacle | Feb 2026 | ~100,000 | ~1,399 | ~6.5 × 10⁹ | ~1 month |
| Chevignard et al. ECC-256 | 2026 | n/a | ~1,098 | ~2.7 × 10¹¹ | n/a |
| Google ECDLP-256 | Mar 2026 | ~500,000 | ~1,200–1,450 | ~7–9 × 10⁷ | ~9 min |
| Oratomic ECC-256 | Mar 2026 | ~10,000–13,300 | ~1,200–1,450 | ~7–9 × 10⁷ | months |
| Oratomic RSA-2048 | Mar 2026 | ~11,000–102,000 | ~1,399 | ~6.5 × 10⁹ | weeks–months |

Figure 1: The shrinking target. Scatter chart (log scale) of estimated physical qubits over time, with RSA-2048 and ECC-256 estimates as separate series. Estimated physical qubits needed to break cryptography have fallen by over 2,000× in five years, from 20M (2021) to ~10K (2026), driven primarily by algorithmic optimizations and the shift from surface codes to qLDPC codes. Note: lower physical qubit counts come with trade-offs in runtime and architectural complexity.

In short, the algorithmic target has shrunk dramatically — but only on paper. The hardware supply side has not kept pace.
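The reduction factors behind that trend can be recomputed from the summary table. This sketch uses the table's rounded RSA-2048 physical qubit counts and takes the low end of the Oratomic range. The dictionary layout is an illustrative choice.

```python
# Physical qubit estimates for RSA-2048, rounded as in the summary table.
rsa_estimates = {
    "Gidney & Ekerå 2021": 20_000_000,
    "Gidney 2025": 900_000,
    "Pinnacle Feb 2026": 100_000,
    "Oratomic Mar 2026 (low end)": 11_000,
}

baseline = rsa_estimates["Gidney & Ekerå 2021"]

# Reduction factor of each estimate relative to the 2021 baseline.
reductions = {name: baseline / qubits for name, qubits in rsa_estimates.items()}

for name, factor in reductions.items():
    print(f"{name:<30} {factor:>8,.0f}x fewer physical qubits than 2021")
```

Each step trades something for the lower count (runtime, connectivity, decoder complexity), so the factors measure qubit count only and not overall difficulty.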

The Supply Side: What Has Each Modality Actually Achieved?

Now let’s measure the hardware against those targets. For each modality, I assess the latest demonstrated achievements on Logical Qubit Capacity (LQC), Logical Operations Budget (LOB), and Quantum Operations Throughput (QOT) — and quantify the gap to the CRQC requirement.

Superconducting Qubits

Key players: Google Quantum AI, IBM, Rigetti, USTC (China)

Logical Qubit Capacity (LQC): 1 logical qubit demonstrated

The maximum number of simultaneously operating logical qubits demonstrated on superconducting hardware is one. Multiple groups have achieved this milestone using different error correction codes, but none have gone beyond it:

Google’s Willow processor (105 physical qubits, published in Nature, February 2025) demonstrated the first below-threshold surface code memory at distance 7 — a single logical qubit encoded across 101 physical qubits. The error suppression factor was Λ = 2.14 ± 0.02 per distance-2 increase, the per-cycle logical error rate was 0.143%, and the logical qubit lifetime exceeded its best physical qubit by 2.4×, surpassing the break-even point. USTC’s Zuchongzhi 3 achieved comparable distance-7 results using a microwave-based leakage suppression approach. A separate team demonstrated a color code scaled from d=3 to d=5 with a logical error suppression factor of 1.56 and transversal Clifford gates exceeding 99% fidelity — still one logical qubit but demonstrating a different, potentially more efficient, code family. Alice & Bob demonstrated a cat qubit repetition code at distance 5, below threshold — again one logical qubit, using a bosonic approach.

IBM’s qLDPC architecture (announced June 2025 with the Starling roadmap) targets 200 logical qubits on 10,000 physical qubits by 2029, but no logical qubits have been demonstrated on IBM production hardware yet. The Kookaburra processor (2026) will be the first QEC-enabled module; Cockatoo (2027) will demonstrate entanglement between modules.

The Logical Qubit Capacity (LQC) gap: ~1,399×. From 1 to ~1,399 simultaneously active logical qubits.

Logical Operations Budget (LOB): No fault-tolerant logical gates at Willow-class distances

Willow demonstrated that a logical qubit can be stored with below-threshold error suppression across millions of QEC cycles, but it did not implement logical gates on that distance-7 code. Other superconducting experiments have demonstrated logical Clifford gates and magic state injection in smaller codes — notably the color code d=3→d=5 result with transversal gates exceeding 99% fidelity — but these remain at lower code distances and shallower depths than the trapped-ion and neutral-atom logical gate results. IBM’s current Heron processors execute approximately 5,000 physical gates per circuit.

The Logical Operations Budget (LOB) gap: ~10⁷–10⁸×. No logical gates have been demonstrated at Willow-class distances, and the smaller-code experiments reach only ~10¹–10² logical operations, against 6.5 billion required. IBM’s Starling target (10⁸ gates by 2029) would still be 65× short.
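Willow's reported suppression factor Λ gives a rough feel for how far code distance would have to grow to close this gap. The sketch below assumes that Λ stays constant at every distance, which is a strong assumption, and uses a per-cycle target of 10⁻¹¹ as a purely illustrative placeholder. Neither assumption comes from the Google paper.

```python
import math


def distance_for_target(eps_ref: float, d_ref: int, lam: float, eps_target: float) -> int:
    """Smallest distance d = d_ref + 2k whose extrapolated error meets eps_target.

    Model (assumption): each distance-2 increase divides the logical error by lam.
    """
    if eps_ref <= eps_target:
        return d_ref
    steps = math.ceil(math.log(eps_ref / eps_target) / math.log(lam))
    return d_ref + 2 * steps


# Willow figures quoted above: 0.143% per cycle at distance 7, Lambda = 2.14.
# The 1e-11 target is a hypothetical placeholder, not a published requirement.
d_needed = distance_for_target(eps_ref=1.43e-3, d_ref=7, lam=2.14, eps_target=1e-11)

# Rotated surface code layout: d*d data qubits plus d*d - 1 measurement qubits.
qubits_per_logical = 2 * d_needed**2 - 1

print(f"distance needed: {d_needed}, physical qubits per logical qubit: {qubits_per_logical}")
```

With these inputs the extrapolation lands at distance 57, or roughly 6,500 physical qubits per logical qubit. Doubling Λ would cut the required distance sharply, which is one way to see why physical fidelity matters as much as qubit count.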

Quantum Operations Throughput (QOT): ~1 µs cycle time — matches the CRQC target

This is where superconducting shines and where it has the strongest competitive advantage. Google’s Willow achieved a ~1.1 µs surface code cycle time with real-time decoding — matching the physical assumption in Gidney’s resource estimates. Physical gates operate at 10–100 ns, roughly 1,000× faster than any competing modality. Recent work demonstrated end-to-end QEC decoding-feedback latency of just 446 nanoseconds for a distance-3 code. IBM achieved a 10× speedup in classical decoding to under 480 ns.

The Quantum Operations Throughput (QOT) gap: ≈0 for cycle speed. The ~1 µs cycle requirement is already met. The limitation is sustained operation — running continuously for five days, not seconds to minutes. This maps to Capability D.3 (Continuous Operation), which remains undemonstrated at the required scale.

Trapped-Ion Qubits

Key players: Quantinuum (Helios), IonQ, Oxford Ionics

Logical Qubit Capacity (LQC): 94 error-detected / 48 error-corrected logical qubits

Quantinuum’s Helios system (98 barium-137 ions, launched November 2025) achieved a remarkable encoding efficiency. Using iceberg codes, the team produced 94 error-detected logical qubits, or 48 fully error-corrected logical qubits, from just 98 physical qubits — a roughly 2:1 encoding ratio that was considered impossible a few years ago. All demonstrated “beyond break-even” performance, meaning encoded operations were more accurate than raw physical operations. Two-qubit gate fidelity: 99.921% across all qubit pairs. Single-qubit: 99.9975%.

Earlier, in collaboration with Microsoft, Quantinuum had demonstrated 4 logical qubits with 800× error rate improvement (April 2024), then 12 logical qubits with a 22× circuit error rate improvement (September 2024) on the H2 system.

Growth factor: LQC has grown from 4 (April 2024) → 12 (September 2024) → 48–94 (November 2025–March 2026) on Quantinuum’s platform — roughly a 12–24× improvement in under two years.

The Logical Qubit Capacity (LQC) gap: ~15–29×. From 48–94 to ~1,399. The smallest gap of any modality. But scaling to 1,399 logical qubits would require ~2,800–3,000 physical ions — far beyond any demonstrated trapped-ion system. IonQ targets 2 million physical qubits by 2030 but has not published comparable logical qubit demonstrations.

Logical Operations Budget (LOB): ~10⁻⁴ logical gate error → ~10⁴ operations before failure

Quantinuum is the only platform to have demonstrated a complete universal fault-tolerant gate set at the logical level — including logical Clifford gates, magic state generation via code switching (Daguerre et al., Phys. Rev. X, October 2025), and a below-threshold non-Clifford controlled-Hadamard (CH) gate at ≤2.3 × 10⁻⁴ error. The March 2026 paper showed logical gate error rates of approximately 10⁻⁴ across dozens of logical qubits. Oxford achieved a world-record single-qubit gate error of <10⁻⁷ — the most accurate qubit operation ever recorded. IonQ (Oxford Ionics) demonstrated 99.99% two-qubit gate fidelity.

At a 10⁻⁴ logical gate error rate, the expected number of operations before failure is roughly 10,000.

The Logical Operations Budget (LOB) gap: ~650,000×. From ~10⁴ to ~6.5 × 10⁹. To bridge this, logical error rates would need to drop to ~10⁻¹⁰ or below, requiring higher code distances and significantly more physical qubits per logical qubit.

Quantum Operations Throughput (QOT): ~55 ms per circuit layer

Trapped-ion gates operate at roughly 100 µs to 1 ms — about 1,000× slower than superconducting. The Helios system’s benchmarked “depth-1 time” is approximately 55 ms per dense program layer, implying roughly 18 layers per second. All-to-all connectivity reduces the SWAP overhead that plagues fixed-grid architectures, partially offsetting the raw speed disadvantage.

The Quantum Operations Throughput (QOT) gap: ~1,000× for raw cycle speed. At current speeds, the ~5-day RSA computation would take ~13 years. The Pinnacle and Oratomic papers help here: with ms cycle times and parallelization, factoring is feasible in months at trapped-ion error rates (10⁻⁴). But even months-long computations require continuous, uninterrupted operation.
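The runtime figures above follow from straightforward scaling. This sketch reproduces them. It assumes the computation slows down linearly with cycle time and gains nothing from parallelism or all-to-all connectivity, which is the pessimistic case.

```python
DAYS_PER_YEAR = 365.25


def scaled_runtime_years(baseline_days: float, slowdown: float) -> float:
    """Runtime in years if every cycle is `slowdown` times slower than the baseline."""
    return baseline_days * slowdown / DAYS_PER_YEAR


def layers_per_second(layer_time_s: float) -> float:
    """Circuit layers executed per second for a given time per layer."""
    return 1.0 / layer_time_s


# 4.96-day baseline (Gidney 2025) and the ~1,000x raw speed gap quoted above.
runtime_years = scaled_runtime_years(baseline_days=4.96, slowdown=1_000)

# Helios benchmark quoted above: about 55 ms per dense program layer.
helios_layers = layers_per_second(0.055)

print(f"Naively scaled RSA-2048 runtime: {runtime_years:.1f} years")
print(f"Helios dense layers per second: {helios_layers:.1f}")
```

The result is between 13 and 14 years, which is why the architecture-level changes in the Pinnacle and Oratomic papers matter more for slow-cycle platforms than raw gate speedups alone.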

Neutral-Atom Qubits

Key players: QuEra Computing, Harvard/MIT (Lukin group), Oratomic, Pasqal, Atom Computing

Logical Qubit Capacity (LQC): 96 logical qubits (below-threshold); 3,000+ physical qubits continuously

The Harvard/MIT/QuEra collaboration delivered arguably the most complete fault-tolerant architecture demonstration of 2025. Using reconfigurable arrays of up to 448 rubidium atoms, the team demonstrated all core elements of universal fault-tolerant computation in a single system (published in Nature, January 2026): below-threshold surface code QEC (2.14× error suppression), up to 96 logical qubits with error rates that improved as the system scaled, transversal gates, lattice surgery, and teleportation-based universality. Atom Computing separately demonstrated 24 entangled logical qubits using a Bacon-Shor code.

In a separate experiment, a 3,000-qubit array operated continuously for over two hours (Nature, September 2025) with mid-computation atom replenishment at 300,000 atoms/sec — over 50 million atoms cycled through. A Caltech team demonstrated a 6,100-qubit array, though for only ~13 seconds.

The Oratomic paper’s architecture theoretically encodes ~1,480 logical qubits in ~5,278 physical qubits — but this is a paper estimate using qLDPC codes never demonstrated on neutral-atom hardware.

Growth factor: Physical qubit count has grown from 256 (2023 Harvard logical qubit paper) → 448 (2025 fault-tolerant demo) → 3,000 (continuous operation) → 6,100 (Caltech pulsed) in about two years: roughly 24× overall, or about 5× per year.

The Logical Qubit Capacity (LQC) gap: ~15× from demonstrated hardware. The physical qubit scaling is the most favorable of any modality, but current two-qubit (Rydberg) fidelities of ~99.5% are below the ~99.9% level that enables efficient error correction at high code distances.

Logical Operations Budget (LOB): Below-threshold logical gates; magic state distillation achieved

The 448-atom architecture demonstrated transversal logical gates (27 transversal CNOTs in 17.7 ms) and the first logical magic state distillation on a neutral-atom platform. The Algorithmic Fault Tolerance (AFT) framework (QuEra/Harvard/Yale, Nature September 2025) demonstrated that runtime overhead of error correction can be reduced by 10–100× by performing logical gates with a single error-checking round instead of d rounds.
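Two quantities in this paragraph are easy to derive: the average time per transversal CNOT, and the reduction in syndrome extraction rounds when each logical gate needs one round instead of d. The code distance used below is a hypothetical value chosen for illustration.

```python
def time_per_gate_us(total_time_ms: float, num_gates: int) -> float:
    """Average time per gate in microseconds."""
    return total_time_ms * 1_000 / num_gates


def syndrome_rounds(num_gates: int, rounds_per_gate: int) -> int:
    """Total error-checking rounds for a sequence of logical gates."""
    return num_gates * rounds_per_gate


# Figures quoted above: 27 transversal CNOTs in 17.7 ms.
per_cnot_us = time_per_gate_us(total_time_ms=17.7, num_gates=27)

# Hypothetical code distance, for illustration only.
d = 25
conventional = syndrome_rounds(num_gates=1_000, rounds_per_gate=d)
single_round = syndrome_rounds(num_gates=1_000, rounds_per_gate=1)

print(f"Average time per transversal CNOT: {per_cnot_us:.1f} µs")
print(f"Round reduction at d={d}: {conventional // single_round}x")
```

In this simple count the saving equals the code distance itself, which is consistent with the 10–100× range quoted for AFT at the distances relevant to a CRQC.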

The Logical Operations Budget (LOB) gap: ~5–6 orders of magnitude (comparable to trapped-ion).

Quantum Operations Throughput (QOT): ~655 µs per transversal CNOT; massive parallelism; 2+ hours continuous

Individual gate speeds are in the ~100 µs–1 ms range. But neutral atoms offer two unique advantages: massive parallelism (hundreds of gates simultaneously across the array) and demonstrated continuous operation. The Harvard team projects that 3–5× gate error reduction and ~10× clock speedup are achievable with known techniques, which would enable hundreds of logical qubits with ~10⁻⁸ error rates.

The Quantum Operations Throughput (QOT) gap: ~100–1,000× for raw speed, but partially offset by parallelism, AFT, and — uniquely — the demonstrated ability to run for hours. Continuous operation addresses Capability D.3 in a way no other modality has demonstrated.

Photonic Qubits

Key players: Xanadu, PsiQuantum, Photonic Inc., ORCA Computing

Logical Qubit Capacity (LQC): 0 demonstrated logical qubits

No photonic system has demonstrated a logical qubit. Xanadu’s Aurora (Nature, 2025) is the first universal photonic quantum computer: a 12-qubit machine integrating all fault-tolerant subsystems in a single architecture. Xanadu also demonstrated on-chip GKP state generation (Nature, June 2025), producing error-resistant photonic qubits on silicon nitride chips with >99% detector efficiency. Photonic Inc. introduced SHYPS qLDPC codes (March 2025) claiming 20× fewer physical qubits than surface codes. PsiQuantum was selected by DARPA for its US2QC program but no public experimental results have been released.

All gaps: effectively the entire journey remains — from 0 to CRQC targets on every metric. Photonic has potentially the fastest raw throughput (GHz range in theory) and room-temperature operation, but these advantages are unrealized at the logical level.

Silicon Spin Qubits

Key players: Intel, Diraq/imec, SQC/UNSW, QuTech, SIQSE

I include silicon in this analysis because, despite being the least mature modality for logical qubit demonstrations, it is arguably the dark horse of quantum computing — the only platform where the path to millions of qubits runs directly through existing semiconductor fabrication lines that already produce billions of transistors per chip.

Logical Qubit Capacity (LQC): 2 logical qubits (error-detected)

In March 2026, researchers at SIQSE published the first universal logical operations in silicon (Nature Nanotechnology) — using five phosphorus-donor nuclear spins to encode two logical qubits via the [[4,2,2]] code. They demonstrated fault-tolerant state preparation, a complete universal gate set including the T gate via gate-by-measurement, and executed a VQE algorithm on the logical qubits. SQC/UNSW demonstrated an 11-qubit processor with 99.9% two-qubit gates (Nature, December 2025) that maintained performance as qubit numbers increased. Diraq/imec showed >99% fidelity on standard 300-mm CMOS wafer lines (Nature, September 2025) — a manufacturing milestone with 95% yield and 24,000+ devices per wafer.

The Logical Qubit Capacity (LQC) gap: ~700×. Logical Operations Budget (LOB) and Quantum Operations Throughput (QOT) gaps are comparably large. Silicon’s coherence times are exceptional (T1 up to 9.5 seconds in isotopically purified silicon), and gate speeds are moderate (~1–10 µs). The long-term bet is manufacturing: if CMOS fabs can produce millions of qubits per wafer, silicon wins on scale.

The Complete Gap to CRQC Analysis

Figure 2: Gap to CRQC by modality and metric. Grouped bar chart of the orders-of-magnitude gap for LQC, LOB, and QOT across superconducting, trapped-ion, neutral atom, and silicon (photonic excluded: no logical qubits demonstrated). Bars show the orders-of-magnitude gap to the CRQC target; lower is closer. The Logical Operations Budget (LOB) is the universal bottleneck: a ~650,000× gap even for the best-performing platforms. Superconducting uniquely meets the QOT (speed) target but trails on LQC and LOB.

| Metric | CRQC Target | Superconducting | Trapped-Ion | Neutral Atom | Photonic | Silicon |
|---|---|---|---|---|---|---|
| LQC | ~1,399 | 1 | 48–94 | 96 | 0 | 2 |
| LQC gap | n/a | ~1,399× | ~15–29× | ~15× | ∞ | ~700× |
| LOB | ~6.5 × 10⁹ | ~10¹–10² | ~10⁴ | ~10⁴ | 0 | ~10¹ |
| LOB gap | n/a | ~10⁷–10⁸× | ~650,000× | ~650,000× | ∞ | ~10⁸× |
| QOT | ~1 µs | ~1 µs | ~100–1,000 µs | ~100–1,000 µs | Unknown | ~1–10 µs |
| QOT gap | n/a | ≈0 | ~100–1,000× | ~100–1,000×* | Unknown | ~10–100× |
| Continuous operation | ~5 days | Seconds | Minutes–hours | 2+ hours | Unknown | Seconds |

*Neutral atom QOT gap partially offset by massive parallelism and AFT.

LOB Is the Universal Bottleneck

The most important insight from this analysis: across every modality, the Logical Operations Budget is the binding constraint — and it’s not close. The best demonstrated logical circuit depth on any platform is roughly 10,000 operations (Quantinuum, March 2026). The CRQC needs 6.5 billion. That’s approximately 650,000×, or nearly six orders of magnitude. For ECC-256, the picture is more concerning: Google’s optimized circuits need only 70–90 million Toffoli gates, shrinking the LOB gap to roughly 7,000–9,000× — still large, but only four orders of magnitude rather than six. This is why elliptic curve cryptography is the more immediate quantum target.

Bridging this requires exponentially better error rates at the logical level — which means higher code distances, more physical qubits per logical qubit, and faster, more accurate decoders running in real time. No modality is close.
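The LOB gaps for the two targets can be checked directly. The sketch below takes the best demonstrated depth of roughly 10⁴ logical operations and the Toffoli counts quoted above, and reports each gap both as a multiplier and in orders of magnitude. Variable names are illustrative.

```python
import math

BEST_DEMONSTRATED_OPS = 1e4  # roughly 10,000 logical operations, as quoted above

# Toffoli counts quoted in the text, as (low, high) ranges.
targets = {
    "RSA-2048": (6.5e9, 6.5e9),
    "ECC-256": (70e6, 90e6),
}

gaps = {}
for name, (low, high) in targets.items():
    gap_low = low / BEST_DEMONSTRATED_OPS
    gap_high = high / BEST_DEMONSTRATED_OPS
    gaps[name] = (gap_low, gap_high)
    print(
        f"{name}: gap {gap_low:,.0f}x to {gap_high:,.0f}x "
        f"({math.log10(gap_low):.1f} to {math.log10(gap_high):.1f} orders of magnitude)"
    )
```

The roughly two orders of magnitude separating the RSA-2048 and ECC-256 gaps come entirely from the difference in Toffoli counts, since the demonstrated hardware figure is the same in both cases.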

What Should CISOs Do About It?

I enjoy these discussions immensely. Analyzing resource estimates, tracking modality progress, debating Q-Day predictions — it’s intellectually stimulating, and I believe it’s important for cutting through both Q-FUD (the quantum panic industry) and quantum denialism. I love clarifying the misconceptions that surround all of this.

But the bottom line for anyone responsible for organizational security is this: the precise date of Q-Day shouldn’t be your risk management approach. The ecosystem has already moved. NIST has finalized post-quantum cryptography standards. NSA’s CNSA 2.0 mandates migration by 2030–2033. Harvest Now, Decrypt Later (HNDL) attacks are happening today. And Trust Now, Forge Later attacks against digital signatures are an emerging concern.

As I’ve argued in detail: forget Q-Day predictions — regulators, insurers, investors, and clients are your new quantum clock. The 650,000× LOB gap is reassuring if you’re asking “will my data be broken tomorrow?” It is not reassuring if you’re asking “will data I send today be readable in 2035?” — because PQC migration typically takes 5–10 years, and the gap is closing from both directions simultaneously.

Start your migration. The quantum computer that breaks your encryption doesn’t exist yet. The deadline to protect against it already does.

Try It Yourself: The Q‑Day Estimator

The scorecard above gives you a snapshot — here’s the tool to run your own scenarios. Pick a resource estimation paper on the left (the “demand” — how many logical qubits, operations, and throughput a specific cryptographic attack requires) and a quantum modality on the right (the “supply” — where that technology stands today). The tool calculates a Composite CRQC Readiness Score and projects when it crosses 1.0 — the estimated Q‑Day.

Each modality comes pre-loaded with the values from my scorecard above and per-metric growth factors. Where a growth rate is derived from observed hardware progress (e.g., trapped-ion LQC scaling ~12×/year), it’s shown as-is; where I’ve used a default assumption, it’s flagged with ⚑. Adjust any value to test your own assumptions — what if neutral-atom LOB grows faster than I’ve estimated? What if Gidney’s 2025 resource estimates are further optimized?
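For readers who want to reason about this kind of projection offline, here is a minimal sketch of one way a gap and a growth factor combine into a crossing date. It is not the estimator's actual formula, which is described on the methodology page. It assumes constant exponential growth per metric and treats the slowest metric as the binding constraint. The `Metric` class, the function names, and the growth factors in the example are hypothetical placeholders.

```python
import math
from dataclasses import dataclass


@dataclass
class Metric:
    name: str
    gap: float            # demand / supply today; 1.0 or less means the target is met
    annual_growth: float  # multiplicative improvement per year


def years_to_close(metric: Metric) -> float:
    """Years until the gap reaches 1.0 under constant exponential growth."""
    if metric.gap <= 1.0:
        return 0.0
    if metric.annual_growth <= 1.0:
        return math.inf  # no improvement means the gap never closes
    return math.log(metric.gap) / math.log(metric.annual_growth)


def years_to_target(metrics: list[Metric]) -> float:
    """The slowest metric to close sets the overall date (illustrative rule)."""
    return max(years_to_close(m) for m in metrics)


# Gaps taken from the scorecard's trapped-ion column; growth factors are
# placeholder assumptions, not measured rates.
example = [
    Metric("LQC", gap=29.0, annual_growth=4.0),
    Metric("LOB", gap=650_000.0, annual_growth=10.0),
]

for m in example:
    print(f"{m.name}: {years_to_close(m):.1f} years at {m.annual_growth}x per year")
print(f"Binding constraint: {years_to_target(example):.1f} years")
```

The output is only as good as the growth factors fed in, and the whole point of the non-linearity discussed earlier is that those factors are unlikely to stay constant.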

For the full methodology behind the three metrics and scoring, see the CRQC Readiness Benchmark — Methodology & Assumptions. For the permanent version of this tool with additional context, visit the CRQC Readiness Benchmark (Q‑Day Estimator) page.

