Architecture Matters as Much as the Algorithm: Q-CTRL’s Heterogeneous Quantum Computer Design Cuts RSA-2048 to 190k-381k Qubits

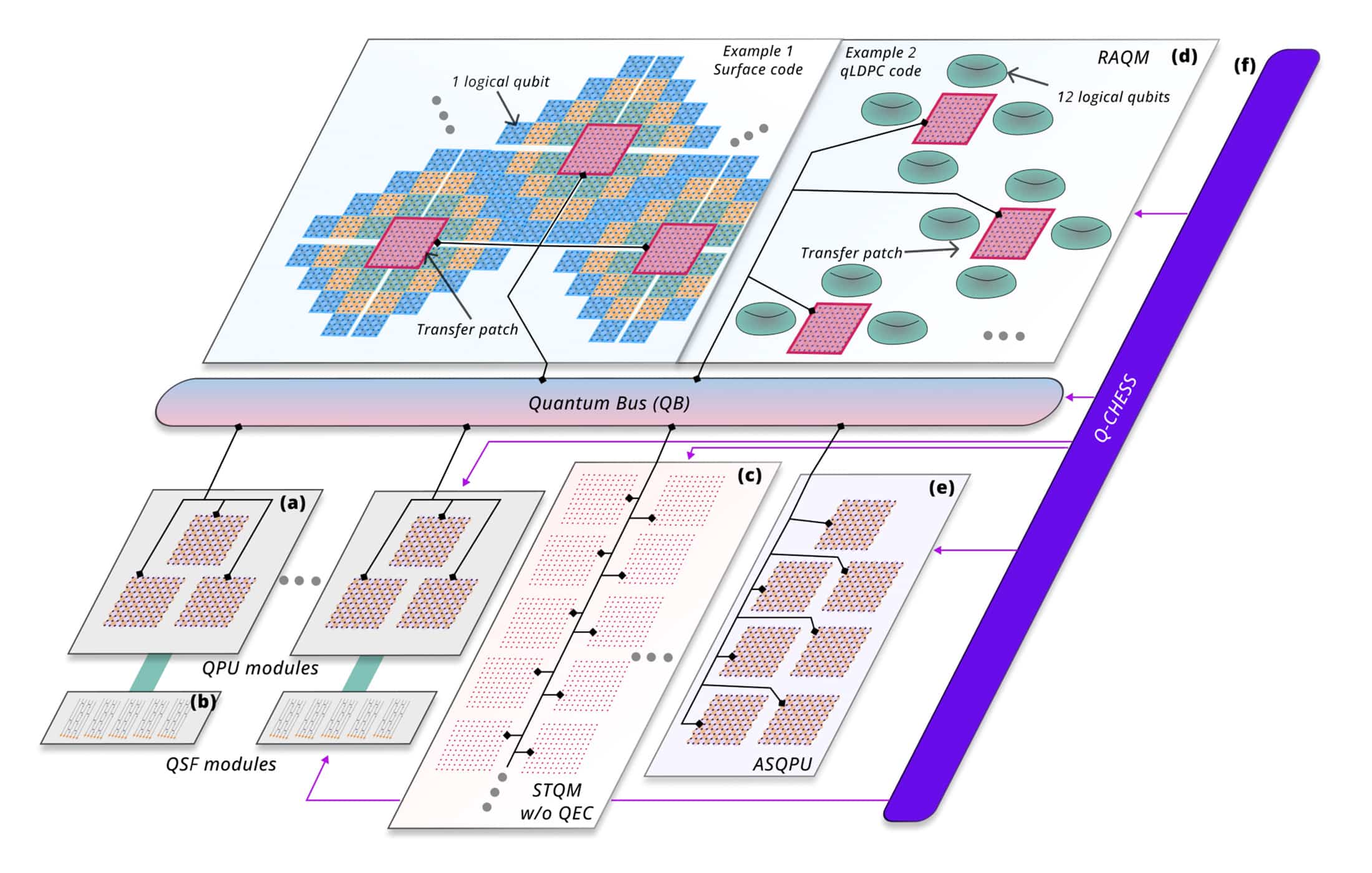

9 Apr 2026 – Researchers at Q-CTRL, a quantum infrastructure software company headquartered in Los Angeles and Sydney, have published a paper introducing Q-NEXUS, a heterogeneous quantum computing architecture that claims to reduce the physical qubit requirements for factoring 2048-bit RSA integers to as few as 190,000 physical qubits — a roughly 4.7× reduction from the current monolithic baseline.

The paper, titled “Heterogeneous architectures enable a 138× reduction in physical qubit requirements for fault-tolerant quantum computing under detailed accounting”, was just posted to arXiv. The research team, led by Pranav S. Mundada, Aleksei Khindanov, and Yulun Wang and including Q-CTRL founder Michael J. Biercuk, proposes separating quantum computation into specialized functional modules (processing units, quantum memory, state factories, and a quantum bus) rather than building a single monolithic qubit array.

The core result for RSA-2048 factorization: using an experimentally demonstrated grid-coupling topology and Gidney’s 2025 algorithm, the architecture requires 381,000 physical qubits and 9.2 days. Adding an application-specific accelerator for the dominant Adder subroutine reduces factorization time to 4.9 days at a cost of 439,000 qubits. If hypothetical long-range qubit coupling is assumed, enabling qLDPC codes in memory, the requirement drops to 190,000 qubits in under 10 days.

The paper also introduces Q-CHESS, a compiler framework that produces machine-level instructions for the heterogeneous architecture, explicitly modeling timing mismatches, scheduling, and error accumulation across modules with different clock rates.

Notably, Q-CTRL’s approach does not modify the underlying cryptanalytic algorithm. The gains come entirely from how the computation is organized and executed across specialized hardware, drawing explicit parallels to the von Neumann stored-program architecture that underpins all modern classical computing.

The Architectural Revolution I’ve Been Arguing For

I’ll confess some satisfaction with this paper. For years, I’ve been arguing that the most likely path to a cryptographically relevant quantum computer (CRQC) won’t be a single monolithic quantum chip, but rather a heterogeneous system where different qubit modalities and classical HPC are dynamically orchestrated – with AI-supported scheduling routing the right parts of problems to the most suitable QPU, memory tier, or classical processor. The industry’s obsession with finding the single “Goldilocks qubit” that excels at everything has always struck me as a conceptual dead end, analogous to trying to build a supercomputer from a single type of transistor rather than combining CPUs, GPUs, memory hierarchies, and interconnects.

Q-CTRL’s paper is the most rigorous validation to date of this thesis on a real cryptographic benchmark. They didn’t invent a new algorithm. They didn’t assume exotic hardware. They took the existing best-in-class factoring algorithm and showed that how you organize and orchestrate the computation matters as much as the computation itself.

If March 2026 was the month that algorithmic breakthroughs and aggressive QEC code optimization dominated the headlines, April opens with what I consider the third independent lever for reducing CRQC resource requirements – and potentially the one that tells us the most about how (in my opinion, and obviously Q-CTRL’s) these machines will actually get built.

What the paper does is apply a principle that the classical computing industry learned sixty years ago: you don’t build a supercomputer by making one giant chip – you build it by specializing components for what they’re good at, and connecting them intelligently.

That sounds obvious. But the quantum computing field has been surprisingly resistant to this idea, and Q-CTRL makes a compelling case for it.

Why Qubits Spend 97% of Their Time Doing Nothing

The paper’s most striking observation is also its simplest: in Gidney’s efficient implementation of Shor’s algorithm, each qubit is inactive for approximately 96–97% of all logical clock cycles. In a monolithic architecture, those idle qubits sit in the same expensive, high-error-rate, actively error-corrected hardware as the qubits doing computation. They accumulate errors. They consume cryogenic cooling capacity. They demand the same dense wiring and control infrastructure as working qubits.

This is the quantum equivalent of running your entire data center at full power to serve a single database query. It’s wildly inefficient and it’s how essentially every major resource estimation paper has modeled fault-tolerant quantum computing until now.

Q-CTRL’s insight is to separate storage from processing. Idle qubits get shipped to quantum memory – either a “static” memory (STQM) using ultra-long-coherence qubits like rare-earth ions with coherence times exceeding 13 hours, or a “random-access” memory (RAQM) using actively error-corrected but slower qubit modalities like neutral atoms or trapped ions. The quantum processing unit (QPU) stays small at just three logical qubits per core, and operates at microsecond timescales on superconducting hardware.

The result? Idling errors, which dominate the error budget in monolithic architectures, are effectively suppressed. The paper shows that for a 1,000-logical-qubit approximate quantum Fourier transform, idling accounts for the vast majority of total algorithmic error in a monolithic machine but becomes negligible in the heterogeneous design. For 1,000-logical-qubit benchmarks, this architectural change produces up to a 59× reduction in algorithmic error for the Quantum Fourier Transform (and a staggering 551× for the Quantum Adder subroutine), along with up to a 138× reduction in physical qubit count – the latter depending on whether memory is implemented as static (STQM) or random-access (RAQM).
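To make the idle-fraction figure concrete, here is a minimal toy sketch. It is not the paper's model or Gidney's actual circuit: it assumes a hypothetical 100-qubit register swept serially by a three-qubit active window (mirroring the three-logical-qubit core), and simply counts idle (qubit, cycle) slots. The function names and the register size are illustrative assumptions.

```python
from typing import List, Set


def sliding_window_schedule(num_qubits: int, window: int) -> List[Set[int]]:
    """Toy schedule (assumption): each cycle, `window` adjacent logical qubits are active."""
    return [set(range(start, start + window))
            for start in range(num_qubits - window + 1)]


def idle_fraction(schedule: List[Set[int]], num_qubits: int) -> float:
    """Fraction of (qubit, cycle) slots in which a qubit is not being operated on."""
    total_slots = len(schedule) * num_qubits
    active_slots = sum(len(active) for active in schedule)
    return 1.0 - active_slots / total_slots


# Hypothetical register size; only the window of 3 echoes the article's QPU core.
schedule = sliding_window_schedule(num_qubits=100, window=3)
print(f"idle fraction: {idle_fraction(schedule, 100):.2%}")
```

In this toy, 97% of slots are idle, which is the same order as the 96–97% the paper reports. In a monolithic design every one of those slots sits in fast, actively error-corrected hardware, while in the heterogeneous design they would be served by memory.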

How This Compares to the March 2026 Blockbusters

To put Q-CTRL’s numbers in context, consider the cascade of resource estimation papers, most of them from the past year, each of which I’ve analyzed for my CRQC Scorecard (which I’ll now have to update for the fourth time in two weeks):

| Paper | Physical Qubits (RSA-2048) | Runtime | Key Assumption |
|---|---|---|---|
| Gidney & Ekerå 2021 | 20 million | 8 hours | Surface code, grid topology |
| Gidney 2025 | ~1 million | ~1 week | Surface code, grid topology, yoked storage |
| Pinnacle (Webster et al. 2026) | ~100,000 | ~1 month | qLDPC codes, non-local connectivity |
| Cain et al. 2026 | ~10,000 (space-efficient) | ~120 years | Neutral atoms, reconfigurable connectivity |
| Q-CTRL Q-NEXUS (2026) | 190,000-381,000 | 9.2 days | Heterogeneous modules, grid topology (QPU) |
| Q-CTRL Q-NEXUS + accelerator (2026) | 250,000-439,000 | 4.9 days | As above, plus dedicated Adder ASQPU |
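One rough way to compare rows that trade qubits against time is to multiply the two. The sketch below computes "qubit-days" for each row. This is my own crude yardstick, not a metric from any of the papers. It assumes "~1 month" means 30 days and a 365-day year, and it uses the grid-topology figures (381,000 and 439,000 qubits) for the two Q-NEXUS rows because those are the ones paired with the quoted runtimes.

```python
# (label, physical qubits, runtime in days), taken from the table above.
ROWS = [
    ("Gidney & Ekera 2021", 20_000_000, 8 / 24),
    ("Gidney 2025", 1_000_000, 7),
    ("Pinnacle 2026", 100_000, 30),                        # "~1 month" as 30 days (assumption)
    ("Cain et al. 2026 (space-efficient)", 10_000, 120 * 365),  # 365-day years (assumption)
    ("Q-NEXUS (grid)", 381_000, 9.2),
    ("Q-NEXUS + accelerator", 439_000, 4.9),
]


def qubit_days(physical_qubits: float, runtime_days: float) -> float:
    """Crude space-time cost: physical qubits multiplied by runtime in days."""
    return physical_qubits * runtime_days


volumes = {label: qubit_days(qubits, days) for label, qubits, days in ROWS}

# Print rows from cheapest to most expensive under this yardstick.
for label, volume in sorted(volumes.items(), key=lambda item: item[1]):
    print(f"{label:<38} {volume:>14,.0f} qubit-days")
```

The ordering is sensitive to the rounding in the "~" entries, so treat it as a sanity check on the tradeoffs rather than a ranking.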

What immediately stands out is that Q-CTRL occupies a unique position in this landscape. It doesn’t chase the lowest possible qubit count – Cain et al.’s 10,000-qubit result wins that race, though at the cost of a runtime measured in decades (their more practical time-efficient configuration scales to ~102,000 qubits and 97 days). And it doesn’t assume the aggressive non-local connectivity that makes the Pinnacle architecture’s 100,000-qubit result contingent on hardware capabilities that haven’t been demonstrated in superconducting devices.

Instead, Q-CTRL takes Gidney’s 2025 algorithm, the current gold standard, and asks: what if we just organized the hardware better? Starting from Gidney’s ~900,000-qubit monolithic baseline, architectural reorganization alone delivers a 2.4× to 4.7× reduction in physical qubits while maintaining comparable or better runtime. No new algorithm. No exotic connectivity. Just better engineering.
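The 2.4× to 4.7× range follows directly from the qubit counts quoted in this article. A quick check, using only those figures (the dictionary labels are my own shorthand):

```python
MONOLITHIC_BASELINE = 900_000  # Gidney 2025 monolithic baseline, as quoted above

Q_NEXUS_CONFIGS = {
    "grid topology": 381_000,
    "long-range coupling (hypothetical)": 190_000,
}


def reduction_factor(baseline: int, qubits: int) -> float:
    """How many times fewer physical qubits than the baseline."""
    return baseline / qubits


for name, qubits in Q_NEXUS_CONFIGS.items():
    factor = reduction_factor(MONOLITHIC_BASELINE, qubits)
    print(f"{name}: {factor:.1f}x fewer physical qubits")
```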

This is the paper’s real contribution, and it’s one that hasn’t received enough attention in the resource estimation discourse. The community has been fixated on algorithmic innovation (Chevignard-Fouque-Schrottenloher’s approximate residue arithmetic) and QEC code innovation (qLDPC codes, bivariate bicycle codes). Q-CTRL demonstrates that architecture is a third, independent lever – and one that compounds with the other two.

It also acts as a force multiplier for Google’s ECDLP result. When Google estimated that fewer than 500,000 superconducting qubits could break Bitcoin’s elliptic curve cryptography in minutes, a reasonable skeptic could argue: cramming 500,000 microwave-controlled superconducting qubits with their dense wiring into dilution refrigerators is an engineering nightmare. Q-CTRL’s answer is: you don’t have to. You only need a tiny superconducting processing core, literally three logical qubits per core in their model, networked with a dense, easily scalable, slow-clock quantum memory bank. The compiler masks the memory latency, and the system operates at the speed of the fast-clock QPU. The monolithic wiring nightmare isn’t solved; it’s bypassed.

The Real Significance: Architecture Creates a Path for Today’s Hardware

Here’s what I think the quantum computing community is underweighting: Q-CTRL’s approach dramatically lowers the bar for which qubit modalities can contribute to a CRQC, and how.

In the monolithic paradigm, you need a single qubit modality that excels at everything – fast gates, long coherence, high connectivity, low errors, and scalable fabrication. No modality checks all these boxes. Superconducting qubits are fast but have poor coherence. Trapped ions have excellent coherence but are slow. Neutral atoms offer reconfigurability but face scaling challenges.

Q-NEXUS breaks this deadlock. It lets each modality do what it does best:

- Superconducting qubits for the QPU – fast gates, microsecond cycle times, well-understood surface codes.

- Rare-earth ions or similar ultra-long-coherence systems for static memory – coherence times of hours to days, no active error correction needed.

- Neutral atoms or trapped ions for random-access memory – long coherence enabling lower code distances and higher storage density.

- Photonic interconnects for the quantum bus – enabling module-to-module communication without dense physical wiring.

This is, in my assessment, a much more realistic path to a CRQC than any architecture that demands one modality to do everything. It also explains why DARPA launched its Heterogeneous Architectures for Quantum (HARQ) initiative – the U.S. defense establishment clearly sees this architectural approach as strategically significant.

The Rise of the Quantum ASIC

There’s another concept buried in Q-CTRL’s paper that deserves its own spotlight: the Application-Specific QPU (ASQPU). In classical computing, we long ago stopped running everything on general-purpose CPUs. We use GPUs for graphics and machine learning, ASICs for Bitcoin mining, TPUs for tensor operations. Each is a piece of hardware custom-designed to execute a specific class of operations with ruthless efficiency.

Q-CTRL proposes the same logic for quantum computing. Their analysis of RSA-2048 reveals that approximately 70% of the total factoring runtime is consumed by a single subroutine: the Adder. This routine is fundamentally serial – you can’t speed it up by adding more general-purpose QPU cores. But you can build a dedicated 37-logical-qubit hardware accelerator optimized specifically for that arithmetic operation.

The result: a runtime reduction of roughly 47% (from 9.2 to 4.9 days) for a roughly 15% increase in physical qubits (from 381,000 to 439,000). That’s an extraordinary return on a modest investment – the kind of asymmetric tradeoff that makes an attacker’s job significantly easier.
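The accelerator tradeoff can be read through Amdahl's law, which is my framing rather than the paper's. Taking the quoted figures at face value (the Adder is 70% of runtime, and total runtime falls from 9.2 to 4.9 days), and assuming the non-Adder 30% is unchanged, the sketch below solves for the speedup the accelerator must deliver on the Adder alone.

```python
def implied_speedup(fraction_accelerated: float,
                    runtime_before: float,
                    runtime_after: float) -> float:
    """Solve Amdahl's law for the speedup s of the accelerated part.

    runtime_after / runtime_before = (1 - f) + f / s
    """
    ratio = runtime_after / runtime_before
    remaining = ratio - (1.0 - fraction_accelerated)
    if remaining <= 0:
        raise ValueError("runtime_after is below the Amdahl floor for this fraction")
    return fraction_accelerated / remaining


def runtime_floor(fraction_accelerated: float, runtime_before: float) -> float:
    """Runtime left over if the accelerated part took no time at all."""
    return (1.0 - fraction_accelerated) * runtime_before


ADDER_FRACTION = 0.70  # "approximately 70%" from the article, treated as exact (assumption)

speedup = implied_speedup(ADDER_FRACTION, runtime_before=9.2, runtime_after=4.9)
floor_days = runtime_floor(ADDER_FRACTION, runtime_before=9.2)

print(f"implied Adder speedup: {speedup:.1f}x")
print(f"runtime floor with an infinitely fast Adder: {floor_days:.2f} days")
```

Under these assumptions the accelerator needs to run the Adder about 3× faster, and even an infinitely fast Adder would leave roughly 2.8 days of other work. So further gains would have to come from accelerating the remaining 30%.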

This matters for threat modeling. An adversary building a CRQC wouldn’t construct a general-purpose quantum mainframe. They would build a purpose-optimized system – with custom accelerators for the specific subroutines that dominate their target workload, whether that’s RSA factoring, ECDLP for cryptocurrency attacks, or something else entirely. In classical computing, this is how every major performance breakthrough has been achieved: not by making CPUs faster, but by identifying the bottleneck and building specialized hardware to eliminate it. There’s no reason to assume quantum computing will be different.

The Caveats: What Q-CTRL Doesn’t Have Yet

I would be failing my editorial mandate if I didn’t note the significant engineering gaps between this paper and a working machine.

The quantum bus is the hard part – and the new metric to watch. The entire architecture depends on high-fidelity, high-rate transfer of quantum states between modules via Bell-pair generation and teleportation. Q-CTRL’s paper uses experimentally demonstrated Bell-pair purification schemes, but sustaining the required logical state transfer rate of 0.1 MHz across potentially thousands of interconnect channels while maintaining fidelity is a formidable engineering challenge. The paper is transparent about this – they note the quantum bus is “technically demanding” – but it remains the critical integration point that could make or break the timeline.

This has a strategic implication that goes beyond Q-CTRL’s paper. If heterogeneous architectures are indeed the most practical path to a CRQC, then the race to Q-Day becomes fundamentally a quantum networking race. The traditional threat indicator has been “how many qubits can platform X fabricate?” The new indicator should be “who has demonstrated high-fidelity, high-bandwidth quantum state transfer between a fast-clock QPU and a slow-clock quantum memory?” A breakthrough in quantum interconnects or microwave-to-optical transduction would do more to accelerate the CRQC timeline than adding another thousand qubits to a monolithic chip. Companies like Nu Quantum, Qunnect, and Aliro, along with the interconnect acquisitions by IonQ (Lightsynq) and Pasqal (Aeponyx), are where I’d focus monitoring attention.

The memory technologies are early-stage. Rare-earth ion memories with 13-hour coherence times have been demonstrated in laboratory settings. They have not been demonstrated at scale, integrated with superconducting QPUs, or operated as part of a quantum computing stack. Similarly, the random-access quantum memory using neutral atoms or trapped ions with active QEC at the parameters Q-CTRL assumes has not been built.

The compiler is simulated, not demonstrated. Q-CHESS produces impressive compiled schedules for algorithms up to 1,000 logical qubits, but this is a software artifact. No physical quantum computer has been orchestrated by this compiler, and the real-world behavior of heterogeneous timing mismatches, cross-module synchronization errors, and classical control latencies may introduce costs not captured in the model.

These are real gaps. But, and this is important, they are all engineering gaps, not fundamental physics barriers. Every component Q-CTRL proposes has been demonstrated individually in some form. The challenge is integration at scale, which is precisely the kind of problem that money, talent, and time can solve.

What This Means for the CRQC Scorecard

My CRQC Scorecard assesses the gap between current hardware capabilities and CRQC requirements across three metrics: Logical Qubit Capacity (LQC), Logical Operations Budget (LOB), and Quantum Operations Throughput (QOT). The Q-CTRL paper has important but precisely bounded implications for each — and getting this distinction right matters.

The core demand metrics do not change. This is crucial. Q-CTRL uses Gidney’s RSA-2048 circuit unchanged: ~1,399 logical qubits (LQC), ~6.5 billion Toffoli gates (LOB), and ~5–9 days of sustained operation (QOT). The architecture doesn’t reduce the logical workload – it reduces the physical hardware needed to execute that workload. The LOB bottleneck I identified as the universal weakness across all modalities – the 650,000× gap between demonstrated logical operations and what RSA-2048 demands – remains completely intact.
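The scale of the LOB gap is easier to see as plain numbers. The sketch below uses only the figures quoted above (~6.5 billion Toffoli gates, a 650,000× gap, and the 9.2- and 4.9-day runtimes). It assumes gates are spread evenly over the runtime, which a real schedule would not do exactly.

```python
TOFFOLI_GATES = 6.5e9      # logical Toffoli count quoted for RSA-2048
LOB_GAP = 650_000          # quoted gap between demonstrated and required logical operations
SECONDS_PER_DAY = 86_400


def implied_demonstrated_ops(required_ops: float, gap: float) -> float:
    """Logical operations demonstrated to date, as implied by the quoted gap."""
    return required_ops / gap


def average_rate_hz(total_ops: float, runtime_days: float) -> float:
    """Average logical gate rate needed to finish in the given runtime."""
    return total_ops / (runtime_days * SECONDS_PER_DAY)


demonstrated = implied_demonstrated_ops(TOFFOLI_GATES, LOB_GAP)
rate_baseline = average_rate_hz(TOFFOLI_GATES, 9.2)
rate_accelerated = average_rate_hz(TOFFOLI_GATES, 4.9)

print(f"implied demonstrated logical ops: {demonstrated:,.0f}")
print(f"average Toffoli rate over 9.2 days: {rate_baseline:,.0f} per second")
print(f"average Toffoli rate over 4.9 days: {rate_accelerated:,.0f} per second")
```

The gap figure implies about 10,000 demonstrated logical operations, against a requirement to sustain thousands of Toffoli gates per second for days.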

What changes is the physical qubit target. On the demand side of the scorecard’s “Shrinking Target” table, Q-CTRL adds a new data point that sits between Gidney 2025 (~1M) and the Pinnacle architecture (~100k). For fast-clock superconducting RSA-2048 scenarios, the responsible planning range shifts from “roughly a million” to “a few hundred thousand” – and that range is now architecture-dependent rather than algorithm-dependent.

What also changes is how we think about the supply side. Currently, my scorecard assesses each modality independently. Q-CTRL argues persuasively that the relevant question shifts from “which modality wins?” to “which modalities combine best?” This changes the gap analysis: the QOT and LQC gaps shrink if you allow different modalities to handle different functions, even as the LOB gap persists.

My updated assessment: I plan to add Q-NEXUS as a new row in the Scorecard’s “Shrinking Target” table and add a heterogeneous architecture section on the supply side. I would not change the LQC/LOB/QOT bars or the qualitative conclusion that LOB is the universal bottleneck. Q-CTRL makes the hardware target less daunting in qubit count, but the machine still needs to sustain billions of logical operations at error rates below 10⁻¹⁰ for days on end. That endurance challenge hasn’t gotten any easier. I also plan to add quantum interconnect maturity as a new supplementary tracking metric – because in a heterogeneous world, the quantum bus becomes the critical enabler, and monolithic qubit counts alone no longer capture the full threat picture.

The Two-Front Squeeze: Demand Drops, Then Drops Again

Here’s the strategic picture that should worry defenders. The resource estimates for breaking cryptography are being compressed from two directions simultaneously: the algorithm gets more efficient (fewer logical qubits, fewer Toffoli gates), and then the machine that runs the algorithm gets more efficient (fewer physical qubits per logical qubit, better utilization, less waste). These two improvements are largely independent – they multiply rather than add.

This is exactly the pattern that matters for security planners: the demand curve drops because the circuits improve, and then it drops again because someone figures out how to build the machine more efficiently. The uncomfortable corollary: each “resource reduction” paper doesn’t eliminate difficulty, it redistributes it. qLDPC codes push difficulty into connectivity and decoder complexity. Fast-clock superconducting designs push difficulty into continuous operation and cryogenic scaling. Heterogeneous architectures push difficulty into interconnect reliability, quantum memory integration, and orchestration software. The total difficulty hasn’t vanished, but it has been decomposed into sub-problems that are each independently tractable, which is how engineering makes progress.

The Broader Pattern: The Finish Line Is Moving — In Both Directions

Zoom out and the pattern of the past 12 months is unmistakable. The resource estimates for breaking RSA-2048 have fallen from 20 million qubits to potentially under 200,000 – a two-order-of-magnitude reduction driven by three independent lines of innovation:

- Algorithmic innovation – Chevignard-Fouque-Schrottenloher’s approximate residue arithmetic, streamlined by Gidney, reduced logical qubit requirements from ~3n to ~0.5n.

- QEC code innovation – qLDPC codes (bivariate bicycle, Gross codes) increased storage density by orders of magnitude.

- Architectural innovation – Q-CTRL’s Q-NEXUS demonstrates that separating processing from storage yields another 2–5× reduction, independently of the other two.

These three levers multiply. A future system combining all three innovations could plausibly require well under 100,000 physical qubits to factor RSA-2048 – a number that is starting to overlap with industry roadmaps for the late 2020s to early 2030s.

The Q-CTRL paper isn’t the scariest resource estimation paper of 2026 – that honor goes to Google’s 9-minute Bitcoin result or the 10,000-qubit Shor’s result from Cain et al. But it may be the most strategically significant, because it illuminates how a CRQC will most likely get built: not as a monolithic miracle chip, but as an engineered system of specialized components. For security practitioners, Google’s paper changes the operational threat model more. For anyone trying to understand the path to Q-Day, this paper is another important one to read.
