Three Labs, One Week, One Threshold: Silicon Qubits Cross the Fault-Tolerance Line

26 Jan 2022 – For a decade, silicon spin qubits have been quantum computing’s most tantalizing “not yet.” The pitch was always compelling: qubits built from the same material that powers every smartphone and data centre on earth, manufactured using processes the semiconductor industry has spent half a century perfecting. But the performance gap was real. While superconducting circuits and trapped ions had long since demonstrated the gate fidelities required for quantum error correction, silicon lagged — its two-qubit operations stuck below the critical 99% threshold that the surface code demands.

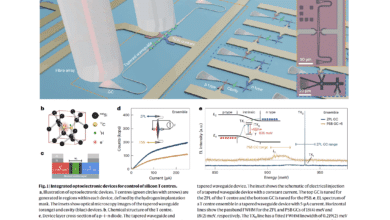

That gap closed this week. Three independent research groups — at RIKEN in Japan, QuTech at TU Delft in the Netherlands, and UNSW in Australia — have simultaneously published results in Nature demonstrating silicon quantum gates that exceed the fault-tolerance threshold. The papers appear back-to-back in the same issue, a coordinated editorial statement about the maturity of a platform that many in the quantum community had relegated to “promising but unproven.”

The message is unambiguous: silicon is now a first-class contender for fault-tolerant quantum computing.

Three Approaches, One Conclusion

The three papers take notably different paths to the same destination, which strengthens the result considerably. This is not a single lab getting lucky with one device. Three separate groups, on three continents, working with two distinct qubit types (quantum dots and donor spins), independently converged on the same conclusion.

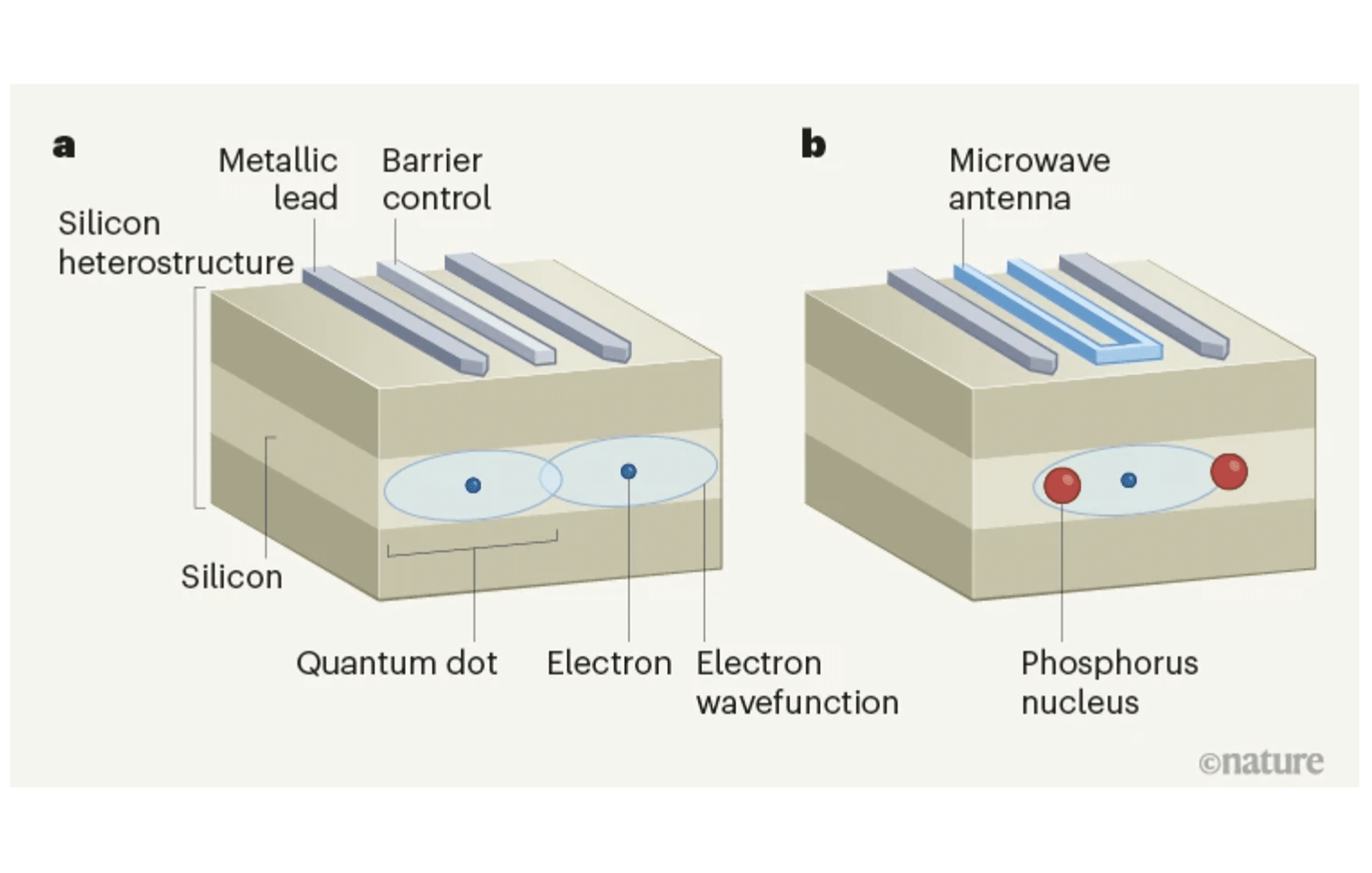

Noiri et al. (RIKEN/QuTech) demonstrated a two-qubit gate fidelity of 99.5% alongside single-qubit gate fidelities of 99.8%, using electron spin qubits in silicon-germanium (Si/SiGe) quantum dots. The key innovation was speed: by exploiting a micromagnet-induced gradient field and tunable two-qubit coupling, they achieved fast electrical control that suppressed the decoherence errors which had limited previous silicon two-qubit gates to ~98% fidelity. The two-qubit gate executes in just 100 nanoseconds — fast enough that environmental noise has minimal time to corrupt the operation.

Xue et al. (QuTech/TU Delft) achieved single-qubit and two-qubit gate fidelities all above 99.5%, using a similar Si/SiGe quantum dot platform but characterized with the rigorous gate-set tomography (GST) method rather than conventional randomized benchmarking. GST provides a more complete picture of gate errors, including coherent errors and crosstalk that benchmarking can miss. The fact that fidelities remain above threshold even under this more demanding characterization is significant — it means the result is robust, not an artefact of a forgiving metric.
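
To see why a single fidelity number can hide error structure, consider a minimal sketch. It is an illustration added here, not the analysis used in any of the three papers. It compares a coherent over-rotation with a stochastic bit-flip error that has the same single-gate error probability. The function names and the 0.02 rad over-rotation are hypothetical choices.

```python
import math

def coherent_error(eps, n):
    """Flip probability after n gates in a sequence that should act as the identity,
    where each gate adds an unwanted extra X rotation of eps radians.

    The rotation angles add up, so starting from |0> the result is sin^2(n * eps / 2).
    """
    return math.sin(n * eps / 2) ** 2

def stochastic_error(r, n):
    """Flip probability after n gates that each flip the qubit with probability r.

    The qubit ends up flipped after an odd number of flips: (1 - (1 - 2r)^n) / 2.
    """
    return (1 - (1 - 2 * r) ** n) / 2

eps = 0.02                    # hypothetical over-rotation per gate, in radians
r = coherent_error(eps, 1)    # give both models the same single-gate error

for n in (1, 10, 50):
    print(n, coherent_error(eps, n), stochastic_error(r, n))
```

For short sequences the coherent error grows roughly as n² while the stochastic error grows roughly as n, so two gates with the same headline fidelity can behave very differently in a long circuit. A characterization that separates the two kinds of error is therefore the more demanding test.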

Mądzik et al. (UNSW) took an entirely different physical approach: phosphorus donor nuclear spins in silicon, controlled via a shared electron. They demonstrated a nuclear two-qubit controlled-Z gate with 99.37% fidelity and single-qubit gates reaching 99.95% — the highest single-qubit fidelity reported in the trio. They also prepared entangled Bell states with fidelities up to 94.2% and demonstrated a three-qubit processor, the first multi-qubit donor system characterized with full gate-set tomography.

The donor approach is architecturally distinct from quantum dots. Where quantum dots confine individual electrons using electrical gates (analogous to tiny transistors), donor qubits use the nuclear spins of phosphorus atoms embedded directly in the silicon lattice. The nuclear spins offer exceptionally long coherence times — seconds rather than microseconds — at the cost of more demanding fabrication, since each phosphorus atom must be placed with near-atomic precision.

Why the 99% Threshold Matters

The significance of 99% is not arbitrary. It derives from the theoretical requirements of the surface code, currently the most practical and best-understood quantum error-correcting code. The surface code can tolerate physical gate error rates up to approximately 1% (equivalently, fidelities above 99%) and still correct errors faster than they accumulate. When fidelity falls below 99%, that is, when the error rate exceeds roughly 1%, errors accumulate faster than the code can fix them, and the computation fails.

Crossing this threshold does not mean fault-tolerant quantum computing is here. It means that, in principle, silicon qubits now have the raw operational quality needed to benefit from error correction. The distinction matters enormously: without crossing the threshold, no amount of additional qubits helps — you are simply amplifying noise. Above the threshold, adding more qubits genuinely improves reliability, opening the path to large-scale, fault-tolerant computation.
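
The threshold behaviour can be sketched with a commonly used heuristic for the surface code, in which the logical error rate scales as A · (p / p_th)^((d+1)/2) for physical error rate p and code distance d. The snippet below is a rough illustration under stated assumptions: the prefactor A = 0.1 and the threshold p_th = 1% are placeholder values, the function name is hypothetical, and the formula is only quantitatively meaningful well below threshold.

```python
def logical_error_rate(p, d, p_th=0.01, prefactor=0.1):
    """Heuristic surface-code scaling: A * (p / p_th) ** ((d + 1) / 2).

    p         physical error rate per operation
    d         code distance (odd integer)
    p_th      assumed threshold error rate (about 1% for the surface code)
    prefactor assumed constant A; its exact value depends on the noise model
    """
    return prefactor * (p / p_th) ** ((d + 1) // 2)

# One error rate below the assumed threshold, one slightly above it.
for p in (0.005, 0.012):
    rates = [logical_error_rate(p, d) for d in (3, 5, 7, 9)]
    trend = "shrinks" if rates[-1] < rates[0] else "grows"
    print(f"p = {p}: logical error {trend} with distance -> {rates}")
```

With p below p_th, each increase in distance multiplies the logical error by a factor smaller than one, so adding qubits helps. With p above p_th the same factor exceeds one, and a larger code only makes things worse.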

Until this week, only three qubit platforms had cleared this bar: superconducting circuits, trapped ions, and nitrogen-vacancy centres in diamond. Silicon is now the fourth.

What This Means for Quantum Threat Timelines

For organizations tracking the trajectory toward a cryptanalytically relevant quantum computer (CRQC), this result reshapes the competitive landscape in a specific and important way.

Silicon has always had the strongest scaling story of any qubit platform. A single silicon spin qubit occupies roughly 50 × 50 nanometres — about the size of a modern transistor and roughly a million times smaller than a superconducting qubit. In principle, millions of qubits could fit on a single chip, manufactured using the same lithographic processes that produce today’s processors. Companies like Intel are already fabricating silicon qubit test chips on 300 mm wafers in their existing fabs.

The missing ingredient was performance. If silicon qubits couldn’t meet the fault-tolerance threshold, their manufacturing advantages were academic. These three papers remove that objection.

This does not mean silicon will be the first platform to achieve a CRQC. Superconducting qubits have a significant head start in system integration, with Google and IBM operating processors with dozens to hundreds of qubits. Trapped-ion systems from IonQ and Honeywell have demonstrated high-fidelity operations at small scale. But silicon now enters the race with a credible path from lab demonstration to industrial-scale manufacturing — a path that no other platform can match.

The practical implication for security planners: the number of viable pathways to a CRQC just increased. Any threat model that dismissed silicon as “too far behind” needs revision. The platform has now demonstrated the fundamental quality requirement; what remains is engineering — scaling qubit counts, integrating control electronics, and implementing error correction protocols. These are hard problems, but they are the kind of problems the semiconductor industry has been solving for decades.

The Road From Threshold to Scale

Crossing the fault-tolerance threshold is necessary but far from sufficient. Several major challenges remain before silicon can deliver on its scaling promise.

The first is qubit count. The largest silicon quantum processors today contain fewer than ten qubits. Fault-tolerant quantum computing will require thousands of physical qubits per logical qubit, meaning millions of physical qubits for practically useful computations. The path from six qubits to six million is not merely quantitative: it requires solving problems of interconnect routing, frequency crowding, crosstalk management, and classical control integration that do not arise at small scale.
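
As a rough sense of scale, the sketch below counts physical qubits for a rotated surface code, which uses d² data qubits and d² − 1 measurement qubits per logical qubit at code distance d. The distance of 27 and the 1,000 logical qubits are hypothetical inputs chosen for illustration, and the count ignores routing and magic-state distillation overhead.

```python
def physical_qubits_per_logical(d):
    """Rotated surface code: d*d data qubits plus d*d - 1 measurement qubits."""
    return 2 * d * d - 1

def total_physical_qubits(logical_qubits, d):
    """Physical qubits for a given number of logical qubits, all at distance d."""
    return logical_qubits * physical_qubits_per_logical(d)

distance = 27           # hypothetical code distance
logical_qubits = 1000   # hypothetical size of a useful computation

print(physical_qubits_per_logical(distance))            # 1457
print(total_physical_qubits(logical_qubits, distance))  # 1457000
```

Under these assumptions a single logical qubit already needs over a thousand physical qubits, and a thousand of them pushes the total past a million.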

The second is measurement. Current silicon qubit read-out relies on charge sensing with single-electron transistors — a technique that is slow and difficult to parallelise. Scalable architectures will likely require dispersive read-out through microwave resonators, an approach that is standard in superconducting systems but still being developed for silicon.

The third is operating temperature. Most silicon qubit experiments run at ~20 millikelvin in dilution refrigerators. While some recent work has shown operation above 1 kelvin — dramatically relaxing cooling requirements — maintaining high fidelity at elevated temperatures remains an open challenge.

These are real obstacles. But they are engineering obstacles, not fundamental physics obstacles. The papers published this week establish that the physics works. The question is now how fast the engineering can follow.

The Significance of Simultaneity

There is one more aspect of this result worth noting: the simultaneity. Three independent groups, using different device architectures and fabrication methods, all reporting above-threshold fidelities in the same issue is not coincidence. It is a signal that the field has matured to a tipping point. The techniques needed to achieve high-fidelity silicon gates (isotopic purification, advanced pulse shaping, careful characterization) are no longer confined to a single group with unique expertise. They have become reproducible.

Reproducibility is what separates a laboratory curiosity from a technology. Silicon spin qubits just crossed that line.
