Capability E.1: Engineering Scale & Manufacturability

This piece is part of a ten‑article series mapping the capabilities needed to reach a cryptanalytically relevant quantum computer (CRQC). For definitions, interdependencies, and the Q‑Day roadmap, begin with the overview: The CRQC Quantum Capability Framework.

(Updated in Nov 2025)

(Note: This is a living document. I update it as credible results, vendor roadmaps, or standards shift. Figures and timelines may lag new announcements; no warranties are given; always validate key assumptions against primary sources and your own risk posture.)

Introduction

Building a cryptography-breaking quantum computer (the machine whose arrival is often dubbed Q-Day) will demand far more than just better algorithms or a few more qubits. It requires a massive scale-up in engineering – reaching hundreds of thousands or even millions of physical qubits – and doing so in a practical, manufacturable way.

Engineering Scale & Manufacturability (Capability E.1) is about bridging the gap between today’s laboratory prototypes and tomorrow’s industrial quantum machines. This means not only increasing qubit counts, but also ensuring those qubits can be fabricated with high yield, integrated into large modules, controlled efficiently, kept stable for long periods, and produced at a reasonable cost. In essence, E.1 addresses the question: Can we build a million-qubit quantum computer with the reliability and economy of classical computing systems?

To answer that, we look at several key engineering dimensions – from chip yields and packaging to cryogenics and system uptime. Below we outline the main areas to consider, along with signals to watch for that indicate progress in each area. When these engineering challenges are overcome together, the feasibility of building a large-scale quantum computer dramatically increases – laying the groundwork for Q-Day.

Signals That Engineering Feasibility Is Improving:

- Economic feasibility plans: Signs that scaling isn’t just possible in principle but planned in practice – for example, vendor roadmaps or government programs targeting deployable systems of >100k qubits (not just lab prototypes), with budgets and manufacturing partners aligned.

- Module yield milestones: Demonstrations of high-yield multi-chip QPU modules (e.g. chips with 500–5,000 qubits each) that have few or no defective qubits or couplers.

- Cryo-integrated control electronics: Successful use of cryogenic controllers (cryo-CMOS chips, cryo-ASIC decoders, microwave multiplexers) inside the fridge, dramatically cutting the wiring count and heat load per qubit.

- Reduced cryogenic power per qubit: Evidence that newer systems require significantly less cooling power per qubit – for example, each generation of hardware reduces per-qubit power draw by 2×–10× – indicating better efficiency and thermal management.

- Reliable modular QPU interconnects: High-fidelity links between separate quantum chips or cryostats becoming routine (not just one-off experiments), showing that multiple modules can function as one large processor.

- Industrial-grade uptime: Reports of multi-hour or multi-day quantum runs with minimal downtime, thanks to automated recalibration, self-healing of faulty components, and redundant subsystems.

When we start to see these signals appear together, it means E.1 is improving – the hardware isn’t just working in physics experiments, but is becoming buildable at scale. This level of engineering maturity is a prerequisite for Q-Day.

QPU Module Size and Yield

A foundational step toward scalable quantum computing is the ability to create large quantum processing unit (QPU) modules with high yield. Instead of a monolithic million-qubit chip (which is impractical), engineers envision tiling together many smaller QPU modules – each a chip containing perhaps a few hundred to a few thousand physical qubits. Achieving E.1 means defining an optimal module size and demonstrating that these modules can be manufactured reliably and reproducibly.

Yield is a critical metric here: if a chip has 1,000 qubits, how many of those qubits (and their couplers or control circuits) work as intended? Early quantum chips have often been limited in size partly because yield drops dramatically as you pack in more qubits – tiny fabrication defects or variations can disable a qubit or a connection. An encouraging sign is when hardware teams report high yields on larger chips. For instance, if a company can produce a 1,000-qubit chip where 995+ qubits are functional, that’s a huge leap in manufacturability. We look for announcements of yield milestones (e.g. “95%+ qubit yield on a 1,024-qubit chip”) as evidence that scaling to bigger modules is becoming practical.
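A quick back-of-the-envelope calculation shows why per-qubit yield matters so much at this scale. The sketch below is illustrative only: the function names are hypothetical, and it assumes defects strike each qubit independently, which real fabrication processes may not satisfy.

```python
def functional_fraction(working: int, total: int) -> float:
    """Fraction of qubits on a chip that work as intended."""
    if total <= 0 or not 0 <= working <= total:
        raise ValueError("need total > 0 and 0 <= working <= total")
    return working / total


def defect_free_probability(per_qubit_yield: float, n_qubits: int) -> float:
    """Chance that every qubit on a chip works.

    Assumption: each qubit fails independently with the same probability.
    """
    if not 0.0 <= per_qubit_yield <= 1.0:
        raise ValueError("per_qubit_yield must be between 0 and 1")
    return per_qubit_yield ** n_qubits


if __name__ == "__main__":
    # The 995-of-1,000 example from the text.
    print(functional_fraction(995, 1000))
    # Probability that a 1,000-qubit chip is entirely defect-free
    # when each qubit works with probability 0.995.
    print(defect_free_probability(0.995, 1000))
```

Under this simplified model, even a 99.5% per-qubit yield leaves fewer than 1% of 1,000-qubit chips completely free of defects, which is why the way a design copes with bad qubits matters as much as the raw yield figure.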

Equally important is how designs cope with the inevitable defects. In classical processors, it’s common to include spare elements or to map around defective regions; similarly, a scalable QPU design might include extra “backup” qubits on each tile or flexible wiring that can bypass a bad qubit. When evaluating progress, we ask: Does the architecture have a plan for faulty qubits or bad tiles? For example, some proposals suggest dynamic remapping of logical qubits to different physical qubits if one goes bad, or using error correction to simply ignore a few non-functioning qubits. A manufacturable million-qubit system will likely tolerate a percentage of defective components without performance loss. If a team explicitly mentions strategies like spare qubit regions, fuse-able qubit clusters, or error-tolerant tiling, it shows they are tackling the real-world yield problem head-on.
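The remapping idea can be sketched in a few lines. This is a deliberately simplified illustration, not any vendor's method: `remap_around_defects` is a hypothetical helper, and it ignores the connectivity constraints that a real qubit layout would have to respect.

```python
from typing import Dict, Iterable


def remap_around_defects(
    n_physical: int, defective: Iterable[int], n_required: int
) -> Dict[int, int]:
    """Assign each required slot to a working physical qubit, skipping defects.

    Hypothetical helper: treats qubits as interchangeable, which real
    hardware with fixed couplers does not allow in general.
    """
    bad = set(defective)
    working = [q for q in range(n_physical) if q not in bad]
    if len(working) < n_required:
        raise ValueError(
            f"only {len(working)} working qubits for {n_required} required slots"
        )
    # Slot i gets the i-th working qubit; leftover working qubits stay as spares.
    return {slot: working[slot] for slot in range(n_required)}


if __name__ == "__main__":
    # Six physical qubits, two of them defective, four needed.
    print(remap_around_defects(6, {1, 4}, 4))
```

The point of the sketch is the bookkeeping: a tile built with spare qubits can still deliver its required count after defects are masked out, and the call fails loudly when the spares run out.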

In summary, module yield and scalability are about both making bigger quantum chips and making them work. A clear indicator of progress is a team defining a module size (say 500 or 1,000 qubits per chip) that it plans to replicate, along with evidence that this size is at or near the sweet spot for high yield. As those modules grow and improve, the road to millions of qubits comes into view.

Packaging, Wiring & Control Electronics

Scaling up isn’t just about qubit chips – it’s also about how you package and control those chips. Today’s small quantum computers are notoriously wiring-heavy: each qubit might have multiple control and readout lines (coaxes, wires, optical fibers, etc.) running from room-temperature instruments down into a dilution refrigerator. That approach does not scale to thousands of qubits, let alone millions – you’d end up with an impractical tangle of millions of wires and an immense heat load into the cryostat. Thus, a key aspect of E.1 is engineering dense, efficient wiring and control systems for large qubit counts.

One sign of progress is the use of multiplexing and on-chip control to reduce the number of wires per qubit. Multiplexing can be done in frequency (sending different qubit signals over different frequency bands on the same line) or in time (using a single line to address many qubits sequentially at high speed). We are seeing new techniques where a single coax line can deliver control pulses to dozens of qubits by using cryogenic switch networks or resonant circuits.

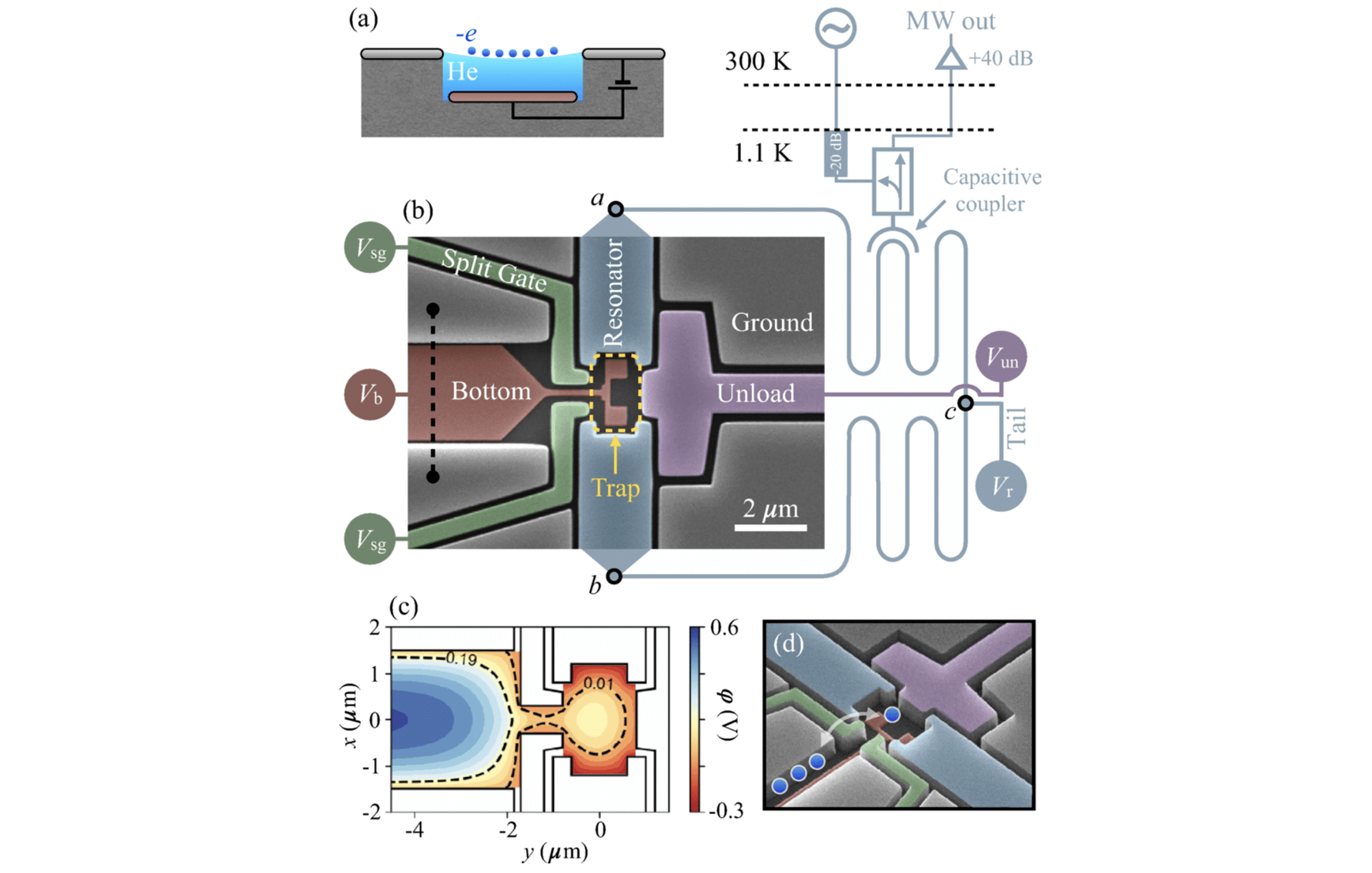

Another breakthrough is integrating control electronics directly into the cryogenic environment. For example, some research teams have developed cryo-CMOS and cryo-ASIC chips that sit inside the fridge, very close to the qubit chip, and handle signal generation, routing (Capability B.4), and decoding (Capability D.2). By doing so, they can send one high-bandwidth cable into the fridge which the cryo-controller then distributes to many qubits internally – dramatically cutting down the external wiring. A notable example is Intel’s development of cryogenic control chips (like “Horse Ridge” and “Pando Tree”), which act as signal multiplexers at low temperature. Such approaches have demonstrated that controlling, say, 1,000 qubits might only require on the order of 10 input lines instead of 1,000 separate wires, by encoding many control signals onto a few channels. This kind of innovation directly addresses the wiring bottleneck and is a strong signal of manufacturable design.
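The wiring arithmetic behind that claim is simple enough to sketch. The function name and the idea of a single uniform "qubits per line" multiplexing factor are assumptions for illustration; real systems mix several line types with different multiplexing ratios.

```python
import math


def feedlines_needed(n_qubits: int, qubits_per_line: int) -> int:
    """Number of input lines needed if each line serves `qubits_per_line` qubits.

    Assumption: one uniform multiplexing factor for the whole system.
    """
    if n_qubits < 0 or qubits_per_line < 1:
        raise ValueError("need n_qubits >= 0 and qubits_per_line >= 1")
    return math.ceil(n_qubits / qubits_per_line)


if __name__ == "__main__":
    print(feedlines_needed(1_000, 1))        # one wire per qubit
    print(feedlines_needed(1_000, 100))      # 100-way multiplexing
    print(feedlines_needed(1_000_000, 100))  # same factor at a million qubits
```

Going from 1,000 wires to roughly 10 implies a multiplexing factor of about 100. Holding that factor constant at a million qubits would still leave on the order of 10,000 lines, so the factor itself has to keep growing as systems scale.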

When examining a scalability plan, we ask: How many control lines per qubit will this architecture need, and how will the design achieve that? A credible large-scale design will articulate how it avoids a linear explosion of wires. It might involve bus lines on the chip, advanced 3D packaging (stacking chips so that control circuitry sits right below the qubit layer), or optical control (using lasers and photonic waveguides instead of electrical wires, as in some ion trap and photonic qubit systems). The presence of integrated control schemes – whether it’s microwave multiplexers, FPGA/ASIC controllers at cryogenic temps, or photonic control planes – is a sign that the team is grappling with the practical realities of control at scale.

In summary, packaging and control engineering improvements are vital for E.1. Look for evidence like custom chip packaging solutions, reduced cable counts, on-chip filtering and routing networks, and explicit statements like “our new 1000-qubit system uses the same number of feedlines as our old 100-qubit system.” Those are the kinds of breakthroughs that enable scaling without the system literally getting strangled by its own wiring.

Cryogenic Cooling & Power Budget

Large quantum machines, especially those based on superconducting qubits or spin qubits, must operate at extremely low temperatures (millikelvins). Cryogenics thus becomes a major engineering concern: the bigger the system, the more heat it generates that must be removed by the refrigerator. One way to gauge engineering scalability is by examining the cryogenic power budget per qubit – essentially, how much cooling power is needed per qubit and whether that is improving over time.

In early small systems, the overhead per qubit (from control electronics, cabling, and the qubit’s own dissipation) is very high. If we simply extrapolated today’s power requirements, a million-qubit superconducting machine might require tens of megawatts of input power for the fridge, which is clearly infeasible (that’s like a small power plant!). Therefore, to scale, each qubit’s incremental heat load must shrink dramatically. Signals of progress include statements like “our new design reduces the heat load per qubit by a factor of 5” or concrete figures such as milliwatts or microwatts per qubit at various temperature stages. Some improvements come from the aforementioned cryo-electronics (since sending signals as digital pulses and demultiplexing at low temperature is often more efficient than hundreds of analog cables).

Other improvements might come from using higher-temperature superconductor materials (so that some stages can run a bit warmer) or from clever thermal engineering and insulation. When hardware teams report lower power consumption or more efficient cooling for bigger systems, it indicates they’re actively managing the cryogenic scaling issue.

We also look for total system power estimates for a full-scale quantum computer. Has anyone put forward a design and said “we think a 1,000,000-qubit machine can run on ~1 megawatt of power” (just to use a round number)? Or perhaps “our 10,000-qubit prototype draws 20 kilowatts, which suggests linear scaling to a few MW at a million qubits.” Such estimates, if under a few megawatts, would be a positive sign – a few megawatts is on par with large classical supercomputers or data centers, and might be acceptable.
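The linear extrapolation in that hypothetical quote can be checked directly. The sketch below is an assumption-laden illustration: the function name and the optional `per_qubit_reduction` parameter are invented here, and real systems will not scale perfectly linearly.

```python
def extrapolate_power_mw(
    prototype_kw: float,
    prototype_qubits: int,
    target_qubits: int,
    per_qubit_reduction: float = 1.0,
) -> float:
    """Linearly extrapolate total power (in MW) from a prototype.

    Assumptions: power scales linearly with qubit count, and
    `per_qubit_reduction` models a hypothetical efficiency gain
    (e.g. 5.0 means each qubit needs one fifth of the power).
    """
    if prototype_qubits <= 0 or per_qubit_reduction <= 0:
        raise ValueError("prototype_qubits and per_qubit_reduction must be positive")
    watts_per_qubit = prototype_kw * 1_000 / prototype_qubits / per_qubit_reduction
    return watts_per_qubit * target_qubits / 1_000_000


if __name__ == "__main__":
    # 20 kW for 10,000 qubits, scaled to a million qubits.
    print(extrapolate_power_mw(20, 10_000, 1_000_000))
    # Same prototype with a hypothetical 5x per-qubit improvement.
    print(extrapolate_power_mw(20, 10_000, 1_000_000, per_qubit_reduction=5))
```

With the figures from the example, 20 kW across 10,000 qubits is 2 W per qubit, or about 2 MW at a million qubits. A 5× reduction in per-qubit load would bring that to roughly 0.4 MW.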

On the other hand, if the projections are much higher, that indicates a serious hurdle. A realistic E.1 roadmap will acknowledge this and aim to keep the cooling and control power within a manageable envelope.

Finally, there’s the question of cryostat architecture. Instead of one monolithic fridge trying to house a million qubits (which might be physically enormous and unwieldy), many plans suggest multiple smaller cryostats or cooling zones networked together. We check if a project envisions a modular cryogenic setup – for example, ten refrigerators each holding 100k qubits, networked optically or via quantum links. Multiple cryostats could also mean easier maintenance (you can warm up one unit for service while others keep running) and easier manufacturing (many smaller identical units vs. one giant bespoke unit). If a quantum computing roadmap mentions something like a “fridge farm” or a distributed quantum data center with many cryomodules, that’s likely a mark of a practical, scalable mindset.

In summary, solving the cryo and power challenge is crucial for E.1. We want to see evidence that each added qubit doesn’t break the thermal budget – in fact, each generation of the machine should handle more qubits per watt of cooling. A combination of reducing per-qubit heat and partitioning the system intelligently will indicate that the cryogenic barrier to scaling is coming under control.

Modular Architecture & Inter-QPU Connectivity

Because purely monolithic scaling (everything on one chip in one fridge) is impractical beyond a point, modularity is a central theme in engineering large quantum computers. This involves dividing the processor into multiple QPU modules (as discussed) and then connecting those modules so they function as a single coherent quantum machine (Capability B.4). Achieving high-quality connectivity between modules is arguably as important as the quality of qubits within a module – it ensures that quantum information can be shared and error-corrected across the whole system.

A clear modular architecture by design is a promising sign. For instance, some superconducting quantum roadmaps explicitly plan for chips connected by superconducting links or through cavity resonators. Ion trap systems often talk about multiple ion traps linked via photonic interfaces (each trap might hold, say, 50–100 ions, and multiple traps network together with fiber optics and photonic entanglement). Even within one cryostat, there might be multiple chips bridged by superconducting buses or bonded connections. When evaluating E.1, we ask: Does the team have a strategy for scaling beyond a single chip or single module? If so, have they demonstrated at least a prototype of it – e.g., entangling qubits that reside on different chips?

Inter-module links can take various forms: electrical (superconducting wires, couplers, microwave cavities bridging two chips), or optical (fiber optic cables carrying entangled photons between cryostats). Each approach has trade-offs in speed, fidelity, and complexity. A major signal of progress is when such links move from one-off experiments to routine usage. For example, if a vendor announces that their 500-qubit system is actually 5 chips of 100 qubits each with reliable connections between chips, that’s a milestone in modular scalability.

We also look for quantitative targets: what fidelity and latency do they claim for these links? For quantum error correction to work seamlessly across modules, the interconnects likely need to be high-fidelity (error rates perhaps in the 1% or even 0.1% range per operation or better, comparable to on-chip gates) and low-latency (maybe microseconds to at most milliseconds, so as not to slow down the error correction cycles). If a design glosses over the difficulty of linking modules, that’s a red flag; conversely, a design that provides specifics like “we will use optical Bell-state distribution with X rate and Y fidelity between any two modules” shows a concrete path forward.
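One way to make such targets concrete is to write them down as a checkable spec. The sketch below is purely illustrative: `LinkSpec` and `meets_targets` are hypothetical names, and the default thresholds (1% error, 1 ms latency) are simply the loose upper ends of the ranges mentioned above, not established requirements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinkSpec:
    """Claimed performance of an inter-module link (hypothetical structure)."""

    error_rate: float  # error per link operation, e.g. 0.001 for 0.1%
    latency_s: float   # time per link operation, in seconds


def meets_targets(
    link: LinkSpec, max_error: float = 1e-2, max_latency_s: float = 1e-3
) -> bool:
    """True if the link is within both the error and latency budgets.

    Assumption: the default budgets are illustrative, not derived
    from any specific error-correction analysis.
    """
    return link.error_rate <= max_error and link.latency_s <= max_latency_s


if __name__ == "__main__":
    print(meets_targets(LinkSpec(error_rate=1e-3, latency_s=5e-6)))  # within both budgets
    print(meets_targets(LinkSpec(error_rate=5e-2, latency_s=5e-6)))  # too noisy
    print(meets_targets(LinkSpec(error_rate=1e-3, latency_s=1e-2)))  # too slow
```

A roadmap that states its link claims in this form, with numbers for both fields, is far easier to evaluate than one that only promises "high-fidelity interconnects."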

In summary, modular interconnects are the glue that holds a large-scale quantum computer together. Progress in E.1 is marked by demonstrating that multiple smaller quantum processors can be reliably networked into one larger one. It’s not enough to have big chips; they must talk to each other effectively. As technologies like quantum networking, photonic transduction, and chip-to-chip couplers mature, we anticipate seeing modular quantum architectures becoming standard in the quest for scale.

Reliability, Uptime & Automated Operations

Even if you can build a huge quantum computer, it won’t be useful if it’s only operational for a few minutes a day (Capability D.3). Today’s quantum devices often require painstaking calibration and can drift out of tune quickly. Reliability and uptime thus form another pillar of engineering scalability: a viable million-qubit machine must function more like an industrial system – stable, continuously available, with automated maintenance – rather than a fragile lab experiment.

One thing we watch for is evidence of long-duration runs or improved stability. For example, has a group kept a smaller quantum computer running computations for hours or days straight? Do they report metrics like “99% uptime over 24 hours” (excluding scheduled maintenance)? Such reports are currently rare, but even incremental improvements count. Perhaps an experiment ran a quantum error correction loop non-stop for several hours, or a system executed a series of jobs overnight without manual recalibration. These would be important proof points that the hardware and control software can handle extended operation.

Achieving high uptime usually requires automation and self-correction. We look for mentions of auto-calibration routines – software that periodically tunes qubit frequencies, laser powers, or voltage biases without human intervention. Some advanced setups might run calibration in the background or during idle cycles, so the system never strays far from optimal.

Similarly, self-healing or fault management is crucial: if a particular qubit or coupler fails or becomes erratic, can the system detect it and route around it on the fly? Maybe it can drop a bad qubit from a logical qubit encoding or substitute in a spare qubit (if the hardware architecture provided one). Robust systems will have diagnostic monitors and error logs, much like classical servers, and will be able to alert operators or take corrective action automatically when something goes out of spec.

We also consider the operational concept for a large quantum computer. Will it be run in a 24/7 data center environment? If so, how do they plan for maintenance and downtime? A practical design might include redundant cryostats or modules such that the system can continue running even if one module is offline for service. Or it might allow rolling updates – tuning one part of the system while the rest computes. If a roadmap or team talks about things like “remote system health monitoring dashboards,” “automated qubit recalibration every 30 minutes,” or “mean time between failures” in the context of their quantum machine, those are strong indicators that they are treating it as an engineering product, not just a physics experiment.
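Uptime and mean-time-between-failures figures are linked by a standard reliability formula, steady-state availability = MTBF / (MTBF + MTTR), where MTTR is the mean time to repair. The sketch below applies it; the function names are hypothetical and the numbers are illustrative rather than measured on any quantum system.

```python
def availability(mtbf_hours: float, mttr_hours: float) -> float:
    """Steady-state availability from mean time between failures and mean time to repair."""
    if mtbf_hours <= 0 or mttr_hours < 0:
        raise ValueError("need mtbf_hours > 0 and mttr_hours >= 0")
    return mtbf_hours / (mtbf_hours + mttr_hours)


def downtime_budget_minutes(uptime_fraction: float, window_hours: float) -> float:
    """Minutes of downtime permitted by an uptime target over a time window."""
    if not 0.0 <= uptime_fraction <= 1.0 or window_hours < 0:
        raise ValueError("need 0 <= uptime_fraction <= 1 and window_hours >= 0")
    return (1.0 - uptime_fraction) * window_hours * 60.0


if __name__ == "__main__":
    # Illustrative: a failure every 99 hours on average, one hour to recover.
    print(availability(99, 1))
    # Downtime allowed by a "99% uptime over 24 hours" target.
    print(downtime_budget_minutes(0.99, 24))
```

A "99% uptime over 24 hours" target leaves only about 14 minutes of downtime per day, which shows why recalibration has to be automated and run in the background rather than done by hand.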

In short, industrial-grade reliability is a key aspect of E.1. The ultimate goal is a quantum computer that can run around the clock, much like a classical supercomputer, with minimal babysitting. Watching for longer and longer stable runs, and for the implementation of automated tuning and error handling, helps us gauge progress on this front. When quantum hardware behaves less like a delicate science project and more like a robust appliance, we’ll know we’ve crossed an important threshold in scalability.

Economic Feasibility & Deployment Plans

The final piece of the engineering scalability puzzle is economics and real-world deployment. Even if all the technical hurdles can be overcome, a million-qubit machine must be buildable within reasonable cost and infrastructure limits. Otherwise, Q-Day will remain a purely theoretical milestone. Thus, we examine whether there are concrete plans or estimates for actually deploying such a system.

One aspect is whether any cost estimates or resource estimates have been published for a full-scale cryptographically relevant quantum computer. For instance, has a company or government study said anything about the capital expenditure (CapEx) or operating costs (OpEx) expected for, say, a 1 million qubit facility? A credible plan might not need precise dollars, but it should show awareness of factors like the number of dilution refrigerators required, the cleanroom fabrication capacity needed for qubit chips, the manpower to operate the system, energy costs, etc. If the discussion remains “we’ll worry about cost later,” that’s concerning.

On the other hand, if someone like a national lab says “we estimate a million-qubit quantum datacenter would cost on the order of $X hundred million and consume Y megawatts,” it signals that the feasibility is being assessed in practical terms.

We also track funding programs and roadmaps that target large-scale systems. Are governments, large tech companies, or consortia explicitly aiming for >100,000 qubits in a deployable system? In recent years, there have been increasing mentions of “quantum foundry” initiatives and multi-year plans to scale qubit counts by orders of magnitude. For example, a government might launch a “Quantum Moonshot” program that provides significant funding to build a prototype million-qubit machine by a certain year. Large tech companies (the so-called hyperscalers) like Amazon, IBM, Google, or Microsoft often publish roadmaps – if those roadmaps start talking about practical quantum machines with hundreds of thousands of qubits (not just laboratory milestones), that’s a sign of growing confidence in manufacturability. Essentially, we’re looking for signals that people are willing to invest big money and effort into actually constructing these machines, not just researching them in theory.

Another important factor is the connection to the existing manufacturing ecosystem. A strong indicator of eventual deployment is if quantum hardware teams are partnering with established semiconductor fabs, cryogenic equipment suppliers, and so on. For instance, using standard CMOS fabrication processes for certain qubit technologies (like silicon spin qubits or superconducting circuits) can leverage billions of dollars of existing infrastructure. If a company says “we’ve signed a deal with TSMC to produce our next-gen qubit chips” or “our design uses commercial off-the-shelf components for 90% of the system,” that bodes well for scalability.

Similarly, having a supply chain in place – from high-purity materials to dilution refrigerators (which are currently artisanal, limited-quantity devices) – is crucial. We watch for news like new facilities being built for quantum chip production, or partnerships with cryostat manufacturers to mass-produce fridges. These down-to-earth steps indicate that the community is serious about deploying quantum computers out of the lab and into the field.

In summary, economic and deployment considerations are where all the engineering work meets reality. E.1 isn’t fully achieved until we not only know how to build a million-qubit machine, but also have a plausible path to actually building it within real-world constraints. When you see detailed roadmaps that cover technology, timelines, cost, and partners – essentially, when quantum computing companies start sounding a bit like aerospace companies planning a moon mission – that’s when E.1 is on a firm footing.

Bringing it all together, Engineering Scale & Manufacturability is about the maturation of quantum hardware from lab prototypes to something akin to today’s classical computing infrastructure. It encompasses making qubit modules larger and high-yield, integrating control systems smartly, managing heat and power, linking modules into one coherent system, ensuring the whole thing runs reliably, and doing it all at a reasonable cost. Progress in any one of these areas is valuable, but it’s the combination of all of them that will determine when a large-scale quantum computer can actually be built.

Each of the signals and checkpoints discussed above serves as a benchmark on the road to that goal. By tracking these, we can gauge how close we are to the hardware capabilities necessary for Q-Day – the moment when quantum computers become powerful enough to upend our current cryptographic systems and solve problems previously out of reach. Each engineering breakthrough brings that moment closer, turning what was once a theoretical possibility into an impending reality.
