Google Quantum AI Achieves 10x Reduction in Resources to Break Bitcoin’s Cryptography

31 Mar 2026 – Google Quantum AI has published a 57-page whitepaper demonstrating that the quantum resources needed to break the elliptic curve cryptography protecting Bitcoin, Ethereum, and virtually every major cryptocurrency are roughly an order of magnitude smaller than previously estimated.

The paper, titled “Securing Elliptic Curve Cryptocurrencies against Quantum Vulnerabilities: Resource Estimates and Mitigations” and co-authored with researchers from the Ethereum Foundation and Stanford University, presents two optimized quantum circuits for solving the 256-bit Elliptic Curve Discrete Logarithm Problem (ECDLP-256) on the secp256k1 curve, the cryptographic foundation of Bitcoin and Ethereum transaction signatures. The circuits achieve a roughly 10x improvement in spacetime volume over the best prior single-instance estimates, translating to fewer than 500,000 physical qubits and a runtime measured in minutes on a superconducting architecture.

In a move unprecedented in quantum cryptanalysis, the team withheld the circuits themselves and instead published a cryptographic zero-knowledge proof — built with SP1 zkVM and Groth16 SNARK — allowing anyone to verify the claims without accessing the attack details. The paper was accompanied by a responsible disclosure blog post in which Google stated it had engaged with the U.S. government prior to publication and urged other quantum computing research teams to adopt similar disclosure practices.

The Core Numbers

Google reports two circuit variants for ECDLP-256 on secp256k1:

- The low-qubit variant uses no more than 1,200 logical qubits and 90 million Toffoli gates.

- The low-gate variant uses no more than 1,450 logical qubits and 70 million Toffoli gates.

When compiled onto a superconducting architecture using surface code error correction — with planar degree-four connectivity, 10⁻³ physical error rates, 1-microsecond code cycle time, and 10-microsecond control system reaction time — these circuits require fewer than 500,000 physical qubits. This represents approximately a 20-fold reduction over the prior best physical qubit estimate for ECDLP-256 (Litinski, 2023: roughly 9 million physical qubits on a photonic architecture).

Crucially, the hardware assumptions are deliberately conservative. These are the same “benign” parameters used in Craig Gidney’s landmark 2025 RSA-2048 factoring paper and are consistent with a scaled-up version of Google’s own experimentally demonstrated superconducting processors. The improvement is purely algorithmic and compilational — no exotic hardware is assumed.

The 9-Minute Attack Window

The runtime estimates carry the paper’s most operationally significant implication. At 70 million Toffoli gates in reaction-limited execution, the low-gate variant resolves in approximately 18 minutes. But Shor’s algorithm can be “primed” — the first half of the computation depends only on fixed curve parameters and can be precomputed. Once a specific public key is revealed, the remaining computation takes approximately 9 minutes.

Bitcoin’s average block time is 10 minutes. Under idealized conditions, Google estimates a roughly 41% probability that a primed superconducting CRQC could derive a private key before a transaction is finalized — making “on-spend” attacks against active Bitcoin transactions a plausible threat scenario for fast-clock CRQCs.

The probability drops sharply for faster blockchains: less than 3% for Litecoin (2.5-minute blocks), less than 0.08% for Zcash (75-second blocks), and less than 0.01% for Dogecoin (1-minute blocks).
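The quoted probabilities can be roughly reproduced with a simple model. The sketch below assumes block arrivals are memoryless (exponentially distributed inter-block times) and that the attack succeeds whenever the key is derived before the next block arrives. Both are my simplifying assumptions, not necessarily the model used in the paper, and the function name is illustrative.

```python
import math

def on_spend_success_probability(attack_minutes: float, mean_block_minutes: float) -> float:
    """Probability that the next block takes longer than the attack,
    assuming exponentially distributed inter-block times (an assumption)."""
    return math.exp(-attack_minutes / mean_block_minutes)

ATTACK_MINUTES = 9.0  # primed runtime quoted in the article

# Average block times quoted in the article, in minutes.
MEAN_BLOCK_MINUTES = {
    "Bitcoin": 10.0,
    "Litecoin": 2.5,
    "Zcash": 1.25,
    "Dogecoin": 1.0,
}

probabilities = {
    chain: on_spend_success_probability(ATTACK_MINUTES, mean)
    for chain, mean in MEAN_BLOCK_MINUTES.items()
}

for chain, p in probabilities.items():
    print(f"{chain:9s} {100 * p:.4f}%")
```

Under these assumptions Bitcoin comes out near 41%, Litecoin under 3%, and Zcash under 0.08%, in line with the figures above. Dogecoin lands at roughly 0.012%, slightly above the quoted bound, which suggests the paper's model or attack time differs in detail from this sketch.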

How This Compares to RSA-2048 Estimates

| Metric | Gidney 2019 (RSA-2048) | Gidney 2025 (RSA-2048) | Pinnacle 2026 (RSA-2048) | Google 2026 (ECDLP-256) |
|---|---|---|---|---|
| Logical Qubits | ~6,000 | ~1,400 | ~1,000-1,500 (qLDPC) | 1,200-1,450 |
| Toffoli Gates | ~3 billion | ~6.5 billion | (optimized) | 70-90 million |
| Physical Qubits | ~20 million | <1 million | <100,000 | <500,000 |
| Runtime | ~8 hours | <1 week | ~1 month | 9-23 minutes |
| Architecture | Surface code | Surface code | qLDPC (degree-10) | Surface code |
| Error Rate | 10⁻³ | 10⁻³ | 10⁻³ | 10⁻³ |

The comparison reveals two facts simultaneously. ECC-256 requires roughly 100x fewer Toffoli gates than RSA-2048 (70-90 million vs. 6.5 billion), which is why the runtime drops from days to minutes. And ECC requires roughly half the physical qubits of RSA-2048 on comparable surface code assumptions (500,000 vs. 1 million). ECC is, by these measures, the more immediately vulnerable primitive — and the one protecting essentially all cryptocurrency value.

The Pinnacle Architecture achieves lower physical qubit counts (~100,000 for RSA-2048) but relies on qLDPC codes requiring degree-ten non-planar connectivity that has not been demonstrated at scale, and its runtime stretches to roughly a month. Google explicitly acknowledges Pinnacle in the paper, noting that while such architectures could theoretically reduce ECDLP-256 qubit counts below 100,000, the planar surface code path at 500,000 qubits is “better understood and also likely more feasible.”

What This Paper Does NOT Claim

The paper does not claim that a working CRQC exists or is imminent. It does not claim that Bitcoin is broken today. It does not predict when a 500,000-qubit machine will be built. And it explicitly notes that most Bitcoin UTXOs — those using P2PKH or P2WPKH script types without address reuse — hide their public keys behind cryptographic hashes and remain safe from at-rest attacks.

What it does claim, with mathematical precision backed by a zero-knowledge proof, is that the engineering target for breaking cryptocurrency cryptography is substantially smaller, faster, and less exotic than the community has been led to believe.

Co-Authors and Context

The paper’s author list is itself significant: Ryan Babbush (Director, Quantum Algorithms, Google), Craig Gidney (author of the RSA-2048 estimates), Adam Zalcman, Michael Broughton, Tanuj Khattar, and Hartmut Neven (VP of Engineering) from Google Quantum AI, alongside Thiago Bergamaschi (UC Berkeley), Justin Drake (Ethereum Foundation), and Dan Boneh (Stanford). Google’s accompanying blog post names Coinbase, the Stanford Institute for Blockchain Research, and the Ethereum Foundation as collaborators on responsible approaches.

This paper almost certainly explains why Google announced its 2029 PQC migration deadline earlier this year. When the organization building the hardware publishes resource estimates this specific on their own demonstrated architecture, the timeline implications are difficult to dismiss.

My Analysis — What This Changes and What It Doesn’t

The Real Achievement: Compressing the Spacetime Volume

The headline numbers — 500,000 physical qubits, 9 minutes — are important. But the technically precise achievement is the roughly 10x reduction in spacetime volume for solving a single instance of ECDLP-256 compared to the best prior published work.

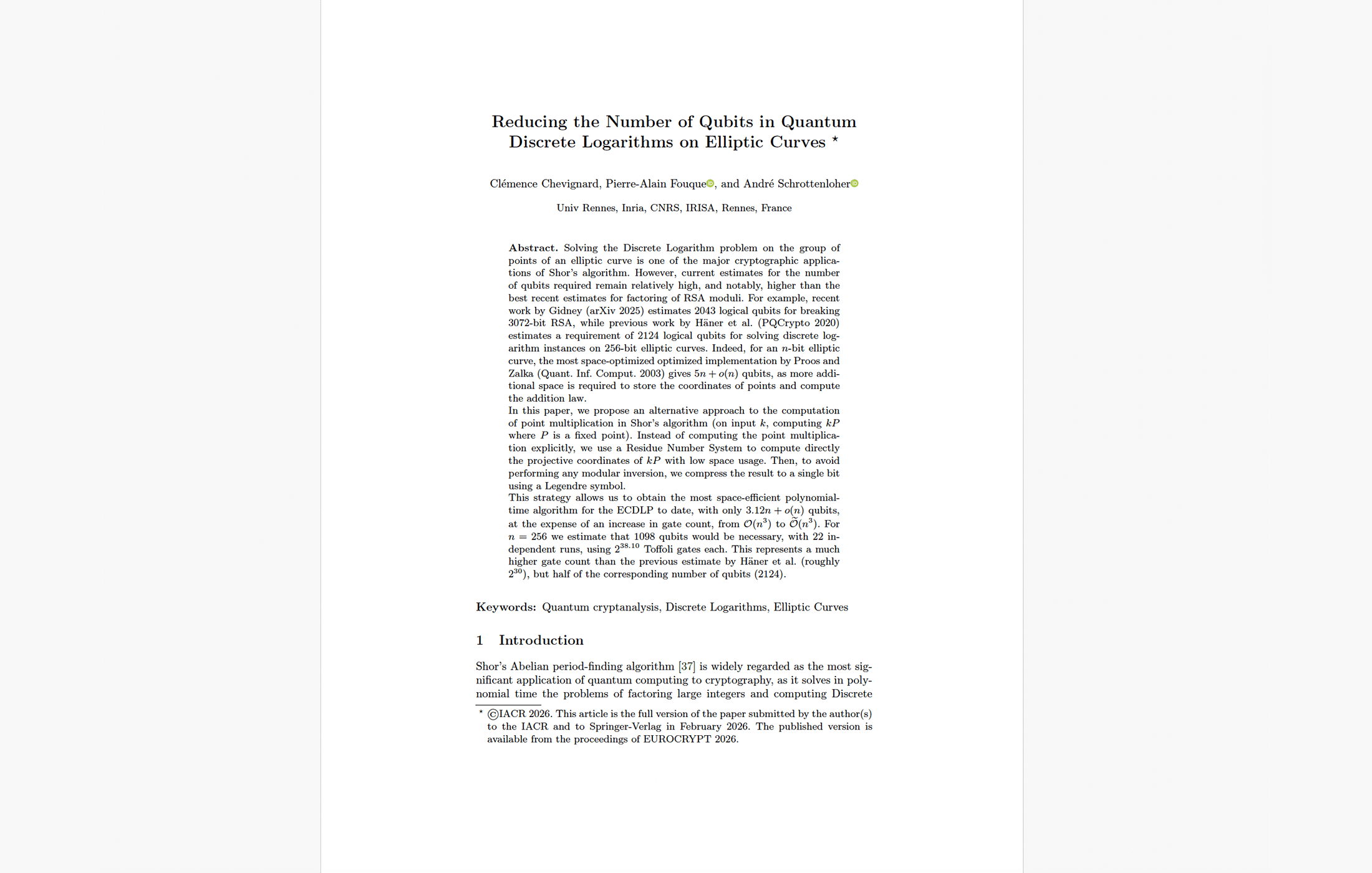

Spacetime volume is the product of logical qubits and gate count, and it is the metric that ultimately drives physical resource overhead because it determines both the size of the machine and the duration of error correction. Previous estimates forced a brutal choice: Chevignard et al.’s EUROCRYPT 2026 paper achieved ~1,100 logical qubits but required more than 100 billion Toffoli gates. Litinski (2023) achieved ~200 million Toffoli gates but needed ~2,500 logical qubits. Google’s team found the sweet spot between these extremes, with close to Chevignard’s qubit count at close to Litinski’s gate count, and the result is a spacetime volume roughly 10x smaller than the best prior single-instance estimate.
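To make the metric concrete, here is a minimal sketch that applies the simple definition above (logical qubits times Toffoli count) to the rounded figures quoted in this article. The class and variable names are illustrative, and the inputs are the approximate numbers from the text, not exact values from the papers.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Estimate:
    name: str
    logical_qubits: float
    toffoli_gates: float

    @property
    def spacetime_volume(self) -> float:
        # Simple proxy: logical qubits multiplied by Toffoli count.
        return self.logical_qubits * self.toffoli_gates

# Rounded figures as quoted in the article.
PRIOR = [
    Estimate("Chevignard et al.", 1_100, 100e9),
    Estimate("Litinski 2023", 2_500, 200e6),
]
GOOGLE = [
    Estimate("Google low-qubit", 1_200, 90e6),
    Estimate("Google low-gate", 1_450, 70e6),
]

best_google = min(GOOGLE, key=lambda e: e.spacetime_volume)

# Ratio of each prior estimate's volume to Google's best variant.
ratios = {
    prior.name: prior.spacetime_volume / best_google.spacetime_volume
    for prior in PRIOR
}

for name, ratio in ratios.items():
    print(f"{name}: {ratio:,.1f}x larger than {best_google.name}")
```

With these rounded inputs, the naive product gives roughly a 5x gap to Litinski's figures and roughly a 1,000x gap to Chevignard's. The paper's headline of about 10x presumably rests on more detailed accounting than this qubits-times-gates proxy captures.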

This is the continuation of a pattern I have been tracking since Gidney’s 2019 RSA work and that the paper itself highlights in a figure showing how physical qubit estimates for RSA-2048 have dropped by roughly 20x per publication cycle. The paper’s own words are apt: “attacks always get better.”

What makes this particular reduction significant — and, frankly, vindicating — is that it was entirely predictable. For years, I have argued that ECC was receiving disproportionately little attention from quantum cryptanalysis researchers relative to its real-world importance. RSA-2048 became the unofficial benchmark for quantum computing progress, attracting the bulk of algorithmic optimization effort. But the cryptographic primitive actually protecting the largest pool of immediately stealable value — ECC on secp256k1 — had far fewer researchers working on efficient Shor implementations. I wrote about this extensively when covering Chevignard, Fouque, and Schrottenloher’s work on reduced-qubit ECDLP and in my broader analysis of why Bitcoin’s quantum risk was structurally closer than most assumed.

The thesis was simple: once the same caliber of algorithmic talent that had been optimizing RSA circuits for a decade turned its attention to ECC, the resource estimates would drop dramatically. And that is exactly what happened. Google’s paper explicitly acknowledges this dynamic, noting that RSA and quantum chemistry “have been the focus of significantly more published research historically than quantum algorithms for breaking ECDLP so it may be the case that algorithms for those applications are closer to optimal than they are for ECDLP.” In other words, there may be more optimization headroom remaining for ECC attacks than for RSA attacks — which means these estimates could continue to tighten.

Fast-Clock vs. Slow-Clock: The Throughput Fork

One of the paper’s most analytically valuable contributions is the distinction between “fast-clock” CRQCs (superconducting, photonic, silicon spin) and “slow-clock” CRQCs (neutral atom, ion trap). This maps directly onto my CRQC Readiness Benchmark and clarifies a distinction that most previous analyses glossed over.

The key isn’t just that slow-clock machines operate more slowly. It’s that the magic state throughput requirement changes the physical resource equation fundamentally. Executing 70 million Toffoli gates in 9 minutes requires generating roughly 500,000 T states per second. On a fast-clock architecture with 1-microsecond error correction rounds, this requires allocating approximately 25,000 physical qubits to magic state production — a small fraction of the ~500,000 total. But on a slow-clock architecture with 100-microsecond rounds, that same throughput demands roughly 2.5 million physical qubits for magic state production alone — five times the total qubit budget of the fast-clock machine.
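The arithmetic behind these throughput figures can be sketched as follows. Two assumptions are mine rather than the paper's: that each Toffoli consumes four T states, and that the qubits needed for magic state production scale linearly with the error correction cycle time at fixed throughput.

```python
TOFFOLI_GATES = 70e6           # low-gate variant
ATTACK_SECONDS = 9 * 60        # primed runtime
T_STATES_PER_TOFFOLI = 4       # assumption: a 4-T Toffoli construction

# Required magic state throughput in T states per second.
t_states_per_second = TOFFOLI_GATES * T_STATES_PER_TOFFOLI / ATTACK_SECONDS

FAST_CYCLE_US = 1.0            # fast-clock error correction round
FAST_FACTORY_QUBITS = 25_000   # factory allocation quoted in the article
TOTAL_FAST_QUBITS = 500_000    # total fast-clock machine size

def factory_qubits(cycle_us: float) -> float:
    """Factory qubits needed for the same throughput at a slower cycle time,
    assuming output per qubit falls in proportion to the cycle time."""
    return FAST_FACTORY_QUBITS * cycle_us / FAST_CYCLE_US

slow_factory_qubits = factory_qubits(100.0)
ratio_to_fast_machine = slow_factory_qubits / TOTAL_FAST_QUBITS

print(f"T states per second: {t_states_per_second:,.0f}")
print(f"Slow-clock factory qubits: {slow_factory_qubits:,.0f}")
print(f"Multiple of fast-clock total: {ratio_to_fast_machine:.1f}x")
```

Under these assumptions the throughput comes out a little above 500,000 T states per second, and the 100-microsecond case needs 2.5 million factory qubits, five times the fast-clock machine's total.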

This means that for slow-clock architectures, at-rest attacks (which can run for days and tolerate low T-state throughput) become feasible long before on-spend attacks. The two attack types decouple. For fast-clock architectures, they arrive essentially simultaneously. The cryptocurrency community needs contingency plans for both scenarios.

Where the Difficulty Shifts — Not Disappears

Through the lens of the CRQC Readiness Benchmark, Google’s paper primarily advances the algorithm and circuit dimension while keeping hardware assumptions constant. This is the cleanest form of resource reduction — it cannot be dismissed as relying on unproven hardware capabilities.

But the remaining engineering challenges have not disappeared. They have been clarified — and they map directly onto specific capabilities within my CRQC Quantum Capability Framework:

Fabrication scale (Capability E: Engineering Scale & Manufacturability and B.3: Below-Threshold Operation & Scaling): Producing ~500,000 physical qubits at 10⁻³ error rates on a planar grid remains a manufacturing challenge roughly 500x beyond today’s largest demonstrated devices. Maintaining below-threshold operation as you scale through orders of magnitude of qubit count is a qualitatively different challenge from demonstrating it on a single logical qubit.

Sustained operation (Capability D.3: Continuous Operation / Long-Duration Stability): The algorithm runs for 18-23 minutes of continuous fault-tolerant computation. Current experimental demonstrations of below-threshold surface code operation span hours at most, on far fewer logical qubits. Drift, calibration decay, and correlated errors over sustained runtimes at this scale remain largely uncharacterized.

Decoder throughput (Capability D.2: Decoder Performance): Real-time syndrome decoding at the rate demanded by a 500,000-qubit device — processing terabytes of measurement data per second — remains an unsolved system engineering problem at scale. Sub-microsecond decoders have been demonstrated for small code blocks, but scaling this to the full machine introduces latency that can itself become a source of logical error.

Control electronics (Capability D.1: Full Fault-Tolerant Algorithm Integration): Reaction-limited execution at 10 microseconds across half a million qubits requires classical control infrastructure — real-time feedforward, adaptive gate scheduling, magic state routing — that has not been demonstrated beyond small-scale prototypes.

These are engineering problems, not physics problems. That distinction matters. Physics problems may be impossible to solve; engineering problems are a matter of resources, time, and determination. When a company with Google’s resources publishes estimates on their own architecture and announces a 2029 PQC migration deadline, it is reasonable to infer that their internal assessment of these engineering timelines is more aggressive than what they publish.

The Zero-Knowledge Proof: Verification Without Weaponization

The ZK proof approach deserves careful analysis — both for what it accomplishes and what it signals about the future of quantum cryptanalysis disclosure.

The technical construction is sound. Google committed to their secret circuits via SHA-256 hash, generated 9,024 test inputs using the Fiat-Shamir heuristic (SHAKE256 XOF seeded with the circuit bytes), simulated the circuits inside SP1 zkVM, and wrapped the result in a Groth16 SNARK. The 9,024-test threshold provides 128-bit cryptographic security that the circuits work correctly on at least 99% of inputs — sufficient for Shor’s algorithm, which tolerates small error rates.
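A minimal sketch of the two ideas in this construction, under stated assumptions: the soundness bound treats the 9,024 tests as independent uniform samples, and the input derivation uses a hypothetical 32-byte encoding per test input with placeholder circuit bytes. The paper's actual serialization and input format are not described here, so the helper names and sizes are illustrative only.

```python
import hashlib
import math

def soundness_bits(num_tests: int, min_pass_fraction: float) -> float:
    """Bits of security: a circuit that is correct on less than
    min_pass_fraction of inputs passes all tests with probability
    below min_pass_fraction ** num_tests."""
    return -num_tests * math.log2(min_pass_fraction)

def min_tests(bits: float, min_pass_fraction: float) -> int:
    """Smallest number of tests that reaches the requested security level."""
    return math.ceil(bits / -math.log2(min_pass_fraction))

def derive_test_inputs(circuit_bytes: bytes, num_tests: int,
                       bytes_per_input: int = 32) -> list[bytes]:
    """Fiat-Shamir style derivation: the inputs depend only on the circuit,
    so the prover cannot pick favorable tests after the fact.
    bytes_per_input is a hypothetical encoding choice."""
    stream = hashlib.shake_256(circuit_bytes).digest(num_tests * bytes_per_input)
    return [stream[i:i + bytes_per_input]
            for i in range(0, len(stream), bytes_per_input)]

circuit = b"placeholder circuit encoding"           # stand-in for the secret circuit
commitment = hashlib.sha256(circuit).hexdigest()    # public commitment
test_inputs = derive_test_inputs(circuit, 9_024)

bits = soundness_bits(9_024, 0.99)
needed = min_tests(128, 0.99)

print(f"commitment: {commitment[:16]}...")
print(f"derived {len(test_inputs)} test inputs")
print(f"security from 9,024 tests at 99%: {bits:.1f} bits")
print(f"tests needed for 128 bits: {needed}")
```

Under this simple bound, 9,024 passing tests give a little over 130 bits, so the threshold clears 128-bit security with some margin.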

The Appendix transparently provides the formulas connecting the point addition circuit costs to the full ECDLP algorithm. With window size w=16, the total computation requires 28 windowed point additions for 256-bit ECDLP. The low-qubit circuit’s point addition subroutine uses at most 2,700,000 non-Clifford gates and 1,175 logical qubits; the low-gate variant uses 2,100,000 non-Clifford gates and 1,425 qubits. These numbers, plugged into the provided formulas, reproduce the full-algorithm resource estimates.
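The paper's formulas are not reproduced in this article, so the sketch below only checks that the quoted point addition costs fit under the headline bounds. It assumes the per-addition non-Clifford counts can be compared directly with the headline Toffoli counts, which is my simplification, and the dictionary layout is illustrative.

```python
POINT_ADDITIONS = 28  # windowed point additions at w = 16, per the article

VARIANTS = {
    "low-qubit": {
        "per_add_gates": 2_700_000, "per_add_qubits": 1_175,
        "total_gates_bound": 90_000_000, "total_qubits_bound": 1_200,
    },
    "low-gate": {
        "per_add_gates": 2_100_000, "per_add_qubits": 1_425,
        "total_gates_bound": 70_000_000, "total_qubits_bound": 1_450,
    },
}

summary = {}
for name, v in VARIANTS.items():
    addition_gates = POINT_ADDITIONS * v["per_add_gates"]
    summary[name] = {
        "addition_gates": addition_gates,
        # Budget left for everything outside the point additions.
        "remaining_gates": v["total_gates_bound"] - addition_gates,
        "extra_qubits": v["total_qubits_bound"] - v["per_add_qubits"],
    }

for name, s in summary.items():
    print(f"{name}: additions use {s['addition_gates']:,} gates, "
          f"{s['remaining_gates']:,} left under the bound, "
          f"{s['extra_qubits']} extra logical qubits")
```

In both variants the point additions account for most of the gate budget, and the full algorithm needs 25 logical qubits beyond the point addition subroutine.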

The paper’s authors note the irony that their Groth16 SNARK relies on pairing-friendly elliptic curves (BLS12-381) — themselves vulnerable to quantum attacks. The proof’s soundness holds only because CRQCs do not yet exist.

What matters most strategically is the precedent. If this ZK disclosure model is adopted by other research teams, the quantum cryptanalysis community will transition from full transparency to verified-but-opaque claims. This is consistent with responsible disclosure norms in cybersecurity, but it will make independent threat assessment harder for defenders who currently rely on open publications to calibrate their migration timelines.

The Comprehensive Blockchain Vulnerability Taxonomy

The paper’s coverage extends far beyond resource estimates. It provides the most systematic public taxonomy of quantum vulnerabilities across the cryptocurrency ecosystem to date. A few elements deserve particular attention from my readers:

The on-setup attack vector for Ethereum’s DAS. The KZG polynomial commitment scheme used in Ethereum’s Data Availability Sampling relies on a trusted setup ceremony. A CRQC can recover the “toxic waste” — the secret scalar — from publicly available parameters. Once extracted, this creates a permanent, reusable classical exploit that can forge data availability proofs without further quantum computation. This means a single successful quantum attack creates a tradeable classical exploit. In the context of my coverage of quantum risks to cryptocurrencies, this on-setup vector is arguably more insidious than direct key theft because it is persistent and does not require ongoing access to a CRQC.

Ethereum’s five-vulnerability taxonomy. The paper identifies Account Vulnerability (~20.5M ETH in the top 1,000 exposed accounts), Admin Vulnerability (~2.5M ETH plus >$200B in stablecoins/RWAs), Code Vulnerability (~15M ETH in L2 TVS), Consensus Vulnerability (~37M staked ETH), and Data Availability Vulnerability. The total potential exposure dwarfs Bitcoin’s, though Ethereum’s faster block time (12-second slots) makes on-spend attacks impractical for first-generation CRQCs.

The dormant asset analysis. Roughly 1.7 million BTC sits in P2PK scripts from the Satoshi era with permanently exposed public keys. An additional ~600,000 BTC is vulnerable due to address reuse and other exposure vectors, bringing the total dormant vulnerable supply to roughly 2.3 million BTC. These assets cannot be migrated because the private keys are lost. The paper’s “digital salvage” framework — treating CRQC-based recovery of these assets as a regulated activity analogous to maritime salvage law — is the most detailed public policy treatment of this problem I have seen.

Connecting the Dots: Why Google Published This Now

We should be clear-eyed about what this paper represents in context. Google has:

- Demonstrated quantum error correction below the surface code threshold (Willow, 2024)

- Published Gidney’s RSA-2048 estimate showing <1 million qubits on their own architecture (May 2025)

- Announced a 2029 internal PQC migration deadline (early 2026)

- Now published ECDLP-256 estimates showing <500,000 qubits and minutes-scale runtime on the same architecture (March 2026)

These are not isolated research papers. They are sequential signals from an organization that builds quantum hardware, develops quantum algorithms, and runs a global technology infrastructure that depends on the cryptographic primitives being analyzed. When Google tells the world that the engineering specification for a machine that breaks cryptocurrency cryptography is half the size of what breaks RSA-2048, and compatible with hardware they are already building, that carries a different weight than a university preprint.

As I have written since 2018 — when I first introduced the “Sign Today, Forge Tomorrow” concept — the trajectory of quantum cryptanalysis has been consistent and directional. Each year, the resource estimates tighten. Each year, the hardware matures. This paper does not predict Q-Day. But it narrows the margin for error in every migration timeline built on the assumption that ECC would remain safe for years beyond RSA.

What To Do With This Information

For CISOs and security leadership: this paper provides the most authoritative, defensible resource estimate for quantum vulnerability of ECC-based cryptocurrency systems published to date. The 500,000-qubit, 9-minute figure — from Google’s own quantum hardware team, on their own architecture — is the number to present to boards and risk committees.

For cryptocurrency protocol developers: the paper provides specific, implementable interim mitigations. Eliminate public key reuse. Avoid P2TR addresses where possible. Support BIP-360 (Pay-to-Merkle-Root). Implement private mempools and commit-reveal schemes. But none of these are durable solutions. The only long-term remedy is full migration to post-quantum cryptography — and the paper explicitly notes that Algorand, Solana, the XRP Ledger, QRL, Abelian, and Mochimo have already made real progress on this path.

For Ethereum stakeholders: the on-setup vulnerability in DAS and the Admin Vulnerability across stablecoin contracts warrant immediate attention. The consensus layer (BLS12-381 signatures) needs a migration path to post-quantum multi-signature schemes.

For everyone tracking quantum readiness: update your ECDLP-256 physical qubit threshold from ~9 million (Litinski 2023) to ~500,000 (Google/Babbush et al. 2026) for surface code architectures. The ECDLP challenge ladder remains a useful tracking mechanism, but as this paper warns — if a leading architecture overcomes all scaling barriers before demonstrating even 32-bit ECDLP, there may be little time between breaking 32-bit and breaking 256-bit curves. A public demonstration of Shor on a 32-bit curve should be treated not as a wake-up call to adopt PQC but as a signal that adoption has already failed.

The paper closes with a line worth repeating: “It is conceivable that the existence of early CRQCs may first be detected on the blockchain rather than announced.”

That is not Q-FUD. That is the considered assessment of the team building the hardware. Act accordingly.
