A New Algorithm Shrinks the Quantum Attack Surface for ECC

Table of Contents

15 Mar 2026 – When Clémence Chevignard, Pierre-Alain Fouque, and André Schrottenloher submitted their latest paper to EUROCRYPT 2026, they already had a track record that the cryptographic community was watching closely. At CRYPTO 2025, the same team at INRIA Rennes had published a method that slashed the qubit requirements for quantum factoring of RSA integers – work that Craig Gidney at Google Quantum AI subsequently used as a foundation to bring the estimated physical qubit cost of breaking RSA-2048 from 20 million down to under one million. That result sent tremors through every risk committee tracking quantum timelines.

Now the Rennes team has turned its attention to the other pillar of modern public-key cryptography – and the results should concern anyone responsible for systems that depend on elliptic curves.

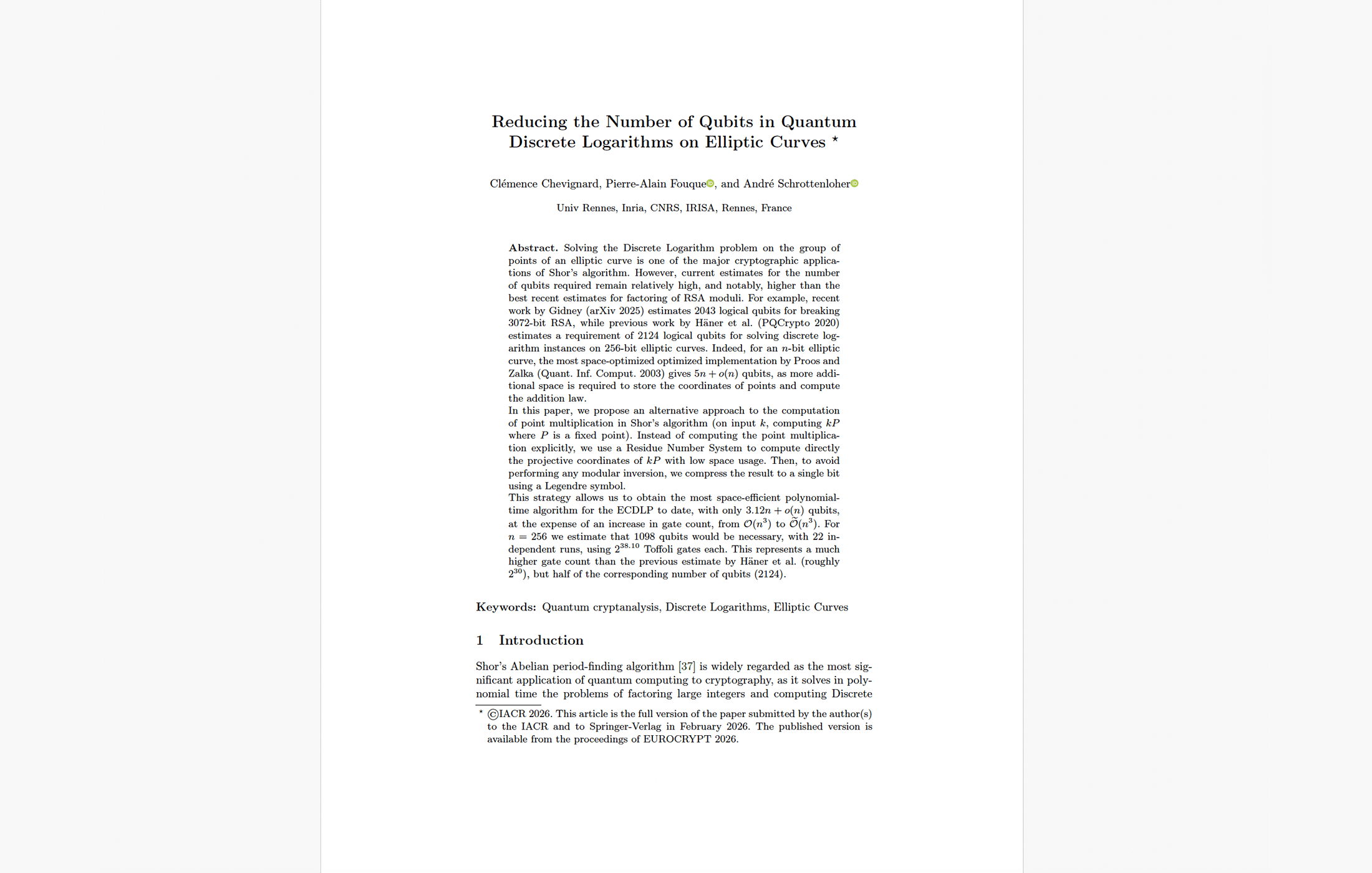

Their new paper, published in the proceedings of EUROCRYPT 2026, presents a quantum algorithm that solves the Elliptic Curve Discrete Logarithm Problem (ECDLP) on a 256-bit prime curve using just 1,193 logical qubits – roughly half the 2,124 qubits required by the previous best estimate from Häner et al. (2020). For the smaller P-224 curve, the count drops even further, to just 1,098. For P-384, it falls from 3,151 to 1,494. For P-521, from 4,258 to 1,895.

The catch is that this qubit reduction comes at the cost of a dramatically higher gate count. But as we will examine, that tradeoff may matter less than the headline numbers suggest, because in the race toward a cryptanalytically relevant quantum computer (CRQC), qubits have consistently been the harder constraint to satisfy.

The Quantum Security Inversion Gets Worse

PostQuantum.com has been tracking the convergence of quantum resource estimates for RSA and ECC for some time. The story, until recently, was straightforward: ECC was already the easier quantum target at equivalent classical security levels, requiring fewer qubits and fewer gates than RSA. As we detailed in our analysis of why Bitcoin’s quantum risk is closer than most realize, the industry’s fixation on RSA-2048 qubit estimates was obscuring the fact that the most widely deployed cryptographic primitive, ECC, was vulnerable at lower quantum thresholds.

This new paper widens that gap considerably. Consider the comparison at the 128-bit classical security level:

The previous state of the art, from Häner et al., required 2,124 logical qubits to break P-256. Gidney’s 2025 paper, building on the Rennes team’s RSA work, estimated 2,043 logical qubits for RSA-3072. At that point, RSA and ECC were roughly comparable in logical qubit cost – an already surprising result given that ECC was supposed to be the more compact, more efficient system.

Now, the Chevignard-Fouque-Schrottenloher algorithm drops P-256 to 1,193 logical qubits (1,098 for P-224). RSA-3072 still sits at 2,043. The qubit gap has flipped decisively: breaking a 256-bit elliptic curve now requires 42% fewer logical qubits than breaking an RSA key of equivalent classical security. For organizations that migrated from RSA to ECC precisely because of its efficiency advantages, this is a particularly uncomfortable irony.

Even more striking is the comparison at the 112-bit security level. Breaking P-224 now requires 1,098 logical qubits. Gidney’s estimate for RSA-2048 – the most commonly cited benchmark in quantum threat discussions – is 1,399 logical qubits. An elliptic curve instance is now breakable with 21.5% fewer logical qubits than the RSA key it was considered equivalent to.

How They Did It: Legendre Symbols, Residue Number Systems, and Ghost Pebbles

The technical ingenuity behind this result merits close examination, because it illustrates a broader pattern in quantum cryptanalysis: the most impactful advances often come not from new quantum algorithms, but from cleverer classical mathematics applied to quantum circuit design.

The fundamental challenge in implementing Shor’s algorithm for ECDLP is the arithmetic. Elliptic curve point addition is far more complex than the modular multiplication needed for RSA factoring. A point on an elliptic curve is represented by coordinates, and adding two points requires a sequence of field multiplications, additions, and, critically, a modular inversion. This inversion is expensive, and it is the reason that previous implementations required more qubits for ECC than for RSA despite the much smaller numbers involved.

The Rennes team’s approach eliminates the need for modular inversion entirely, through three interlocking innovations.

The Legendre symbol as a hash function. The first insight concerns output compression. In the standard implementation of Shor’s algorithm, the quantum computer must maintain a register large enough to hold the full output of the function being analyzed – in this case, a point on an elliptic curve. The May-Schlieper compression technique allows this output to be hashed down to a few bits, dramatically reducing the output register size. But for elliptic curves, applying this technique requires computing the affine x-coordinate of a point, which means performing a modular inversion.

Chevignard et al. sidestep this by using the Legendre symbol – a number-theoretic function that determines whether an integer is a quadratic residue modulo a prime – as a single-bit hash function. The Legendre symbol is multiplicative, which means it can be computed directly from the projective coordinates of a point (X and Z) without ever computing X/Z. This reduces the problem to computing the product XZ mod q, avoiding the costly inversion altogether.

The paper proves (under a heuristic about the pseudorandomness of Legendre symbols applied to point multiples, which is supported by prior work and experimental validation) that this hash function family is “almost universal” in the sense required by the May-Schlieper theorem, so the compressed algorithm produces measurement outcomes with essentially the same distribution as the uncompressed version.

Lifting to the integers via a Residue Number System. The second innovation addresses how to compute XZ mod q with minimal qubit overhead. Rather than performing elliptic curve arithmetic modulo q (which requires expensive modular reductions at each step), the authors lift all computations to the integers. They represent point coordinates as unbounded integers, performing additions in a binary tree structure without any modular reduction.

The resulting integers are enormous – on the order of n³ bits for an n-bit curve, meaning roughly 2²⁰ bits for P-256. But the algorithm never actually needs to store these giant numbers in quantum memory. Instead, it uses a Residue Number System: for each of roughly n³/log(n) small primes, it computes the residue of the result modulo that prime using a compact circuit, then reconstructs the final answer modulo q using the Chinese Remainder Theorem.

Each individual residue computation requires only O((log n)²) qubits of working space – a dramatic reduction from the O(n) qubits needed to store a full elliptic curve point.

Spooky pebbling for space-efficient tree evaluation. The binary tree of point additions poses a memory management problem: naive evaluation would require storing all intermediate nodes simultaneously. The authors apply the “spooky pebbling” technique introduced by Gidney, which uses quantum measurement-based uncomputation to “ghost” intermediate results – removing them from quantum memory at the cost of leaving behind a classical phase correction that must be cleaned up later. This keeps the number of simultaneously stored tree nodes logarithmic in the tree size.

The combination of these three techniques yields a total qubit count of 3.12n + o(n) for an n-bit curve, compared to the previous best of 5n + o(n) from Proos and Zalka (2003).

The Gate Count Question: How Much Does It Matter?

The elephant in the circuit is the gate count. Häner et al.’s implementation for P-256 used approximately 233 T-gates (roughly 231 Toffoli gates). The new algorithm requires approximately 239 Toffoli gates per run – and 22 independent runs, bringing the total to approximately 243.4 Toffoli gates. That is more than a thousand-fold increase.

This is not a number to dismiss. More gates mean longer runtime, more opportunities for errors to accumulate, and greater demands on the error correction infrastructure. In a fault-tolerant quantum computer using surface codes, the runtime is roughly proportional to the Toffoli gate count divided by the number of magic state factories, so a 1,000x increase in gates could translate to a 1,000x increase in wall-clock time – or require 1,000x more magic state distillation capacity.

But there are three reasons this tradeoff may be more favorable than the raw numbers suggest.

First, qubits have historically been the binding constraint in quantum hardware roadmaps. Every major quantum computing platform – superconducting circuits, trapped ions, neutral atoms, photonics – faces fundamental engineering challenges in scaling qubit count. Runtime, by contrast, can be addressed through parallelism and improved error correction protocols. A machine that needs 1,193 logical qubits running for a week is closer to the engineering frontier than a machine that needs 2,124 logical qubits running for an hour.

Second, the gate count is likely to decrease with further optimization. The authors themselves note that using Montgomery representation for modular multiplication could yield a factor of 2–4 improvement, and that the point addition circuit (Algorithm 1) has not been fully optimized. Gidney’s work on RSA demonstrated exactly this pattern: the Rennes team’s initial RSA algorithm had an astronomically high gate count, which Gidney then reduced by more than 100x while preserving the qubit savings. There is no reason to expect a similar optimization pipeline would not emerge for this ECC algorithm.

Third, the relevant comparison is not against the old algorithm, but against hardware progress. When we ask “how long until a CRQC can break P-256?”, the answer depends on the intersection of hardware scaling curves with algorithmic requirements. An algorithm that requires half the qubits but more time could reach the feasibility threshold sooner than the previous algorithm if qubit scaling is the bottleneck – which, by all indications, it is.

What the Numbers Mean for Each Curve

The paper provides detailed estimates for the NIST prime curves and related parameters. The results paint a consistent picture: approximately 2x qubit reduction across all security levels, with the gate cost increasing by three orders of magnitude.

For P-224 (112-bit security): 1,098 logical qubits, 2⁴²·⁴ total Toffoli gates across 20 runs. Compare this to Gidney’s estimate of 1,399 logical qubits for RSA-2048 at the same security level. The ECC instance is now clearly easier.

For P-256 (128-bit security): 1,193 logical qubits, 2⁴³·⁴ total Toffoli gates across 22 runs. The previous best was 2,124 qubits with 2³³ T-gates. This is the curve that underpins the vast majority of deployed ECC: TLS certificates, Bitcoin’s secp256k1 (which has similar parameters), ECDSA signatures in authentication systems, and ECDH key agreement in messaging protocols.

For P-384 (192-bit security): 1,494 logical qubits, 2⁴⁶ total Toffoli gates across 26 runs. Down from 3,151 qubits.

For P-521 (256-bit security): 1,895 logical qubits, 2⁴⁸ total Toffoli gates across 32 runs. Down from 4,258 qubits.

One detail worth noting: the Eker˚a-H˚astad variant of Shor’s algorithm used here requires multiple independent runs of the quantum subroutine, with the results combined through classical post-processing involving lattice sieving. This is different from RSA factoring using the same framework, where the number of runs is similarly small. The classical post-processing is efficient and well-understood, so the number of runs is not a practical barrier – but it does mean the total computational effort is the per-run cost multiplied by 20–32 depending on the curve.

The Same Team, the Same Pattern: Watch the Optimization Pipeline

There is a meta-lesson in the trajectory of this research group’s work that security planners should internalize.

In 2024, Chevignard, Fouque, and Schrottenloher circulated a preprint of their qubit-reduction technique for RSA factoring, which was subsequently published at CRYPTO 2025. The gate count was impractically high – on the order of 2 × 10¹² Toffoli gates. Within months, Craig Gidney at Google Quantum AI took their approach, optimized the circuit implementations, and reduced the Toffoli count by more than 100x – enough to bring the physical qubit estimate for RSA-2048 below one million for the first time.

The EUROCRYPT 2026 paper follows the same playbook: achieve a breakthrough in qubit reduction using novel mathematical techniques, accept a high gate count as the initial price, and leave the optimization to subsequent work. The authors have published their implementation code on Inria’s GitLab, explicitly inviting the community to improve their circuits.

If history is any guide, those improvements will come – and they could arrive faster than hardware scaling. This is the pattern that makes quantum resource estimation a moving target: algorithmic improvements can compress timelines in ways that hardware roadmaps alone would not predict.

Strategic Implications: What This Means for Your Migration

For CISOs and security architects, this paper sharpens several conclusions that were already becoming clear.

ECC is now definitively the easier quantum target. The debate about whether RSA or ECC would fall first to a quantum computer is effectively settled at the logical-qubit level. A quantum computer capable of fielding approximately 1,200 logical qubits – fewer than the number needed for RSA-3072 – would be sufficient to break P-256. Any organization whose quantum risk assessment benchmarks against RSA qubit estimates is using the wrong yardstick.

The “harvest now, decrypt later” window for ECC is wider than for RSA. This may seem counterintuitive – wouldn’t a lower qubit threshold mean a shorter window? – but consider the deployment landscape. ECC-based key agreement (ECDH, ECDHE) protects the confidentiality of data in transit. Any session encrypted today with ECDHE using a classical curve could be recorded and decrypted once a sufficiently powerful quantum computer exists. The lower the quantum threshold for breaking ECC, the more adversaries will be willing to invest in “harvest now, decrypt later” (HNDL) collection, because the expected payoff arrives sooner.

Bitcoin and other blockchain systems using secp256k1 face heightened urgency. As we previously analyzed, Bitcoin’s reliance on the secp256k1 curve (a 256-bit curve similar in structure to P-256, though defined over a different prime) means that quantum threat timelines derived from RSA estimates are misleading. The Chevignard et al. paper analyzes only NIST P-curves, not secp256k1 directly – but because secp256k1 is also a 256-bit prime-field curve with similar arithmetic properties, the asymptotic result of 3.12n + o(n) qubits applies, and the concrete qubit count should be closely comparable to the P-256 estimate of 1,193. The qubit threshold for breaking Bitcoin’s signature scheme is now well below the threshold for breaking RSA-2048, and the gap is widening.

Post-quantum migration for key agreement should be the top priority. The combination of HNDL risk and lower qubit thresholds makes migrating key agreement mechanisms – TLS key exchange, VPN tunnel establishment, secure messaging – the most time-sensitive element of any PQC transition plan. ML-KEM (formerly CRYSTALS-Kyber) is standardized and available. There is no technical barrier to deploying it today for key encapsulation, and the risk calculus increasingly favors urgency over caution.

Signature migration is also important, but the threat model is different. Breaking ECC signatures (ECDSA) requires a quantum computer in real time – an attacker cannot pre-record a signature challenge and solve it later. This means the threat to signatures materializes only when a CRQC actually exists, not before. But the governance and infrastructure changes required for signature migration (new certificate hierarchies, firmware signing keys, code signing workflows) take years to implement, so starting the planning process now is essential.

The Bigger Picture: Convergence of Algorithmic and Hardware Progress

This paper does not, by itself, move the estimated date of a CRQC. No 256-bit elliptic curve will be broken by a quantum computer tomorrow, or next year. The largest quantum processors today operate with dozens of logical qubits – a far cry from the 1,193 needed for P-256, even before accounting for the physical-to-logical qubit overhead imposed by error correction.

But the paper contributes to a trend that should make any complacent security leader uncomfortable. The algorithmic side of the equation is improving faster than most risk models assume. In the space of roughly 18 months, the same research team has:

- Halved the logical qubit count for RSA factoring (CRYPTO 2025)

- Enabled a 20x reduction in physical qubit estimates for RSA-2048 when combined with Gidney’s optimizations (arXiv 2025)

- Now halved the logical qubit count for ECDLP (EUROCRYPT 2026)

Meanwhile, on the hardware side, quantum computing companies are scaling rapidly. Quantinuum recently demonstrated 94 logical qubits on a trapped-ion processor with error rates approaching the fault-tolerance threshold. IBM’s roadmap targets 200 logical qubits with 100 million gates by 2029. Multiple neutral-atom startups are projecting 10,000+ physical qubits within the next two years.

The gap between “where we are” and “what’s needed to break ECC” is shrinking from both directions simultaneously. Algorithmic advances lower the target. Hardware advances raise the capability. Neither trend shows signs of slowing.

What to Do Now

Organizations that have not yet begun their PQC migration should treat this paper as another data point confirming that the window for orderly transition is finite. Specific actions include:

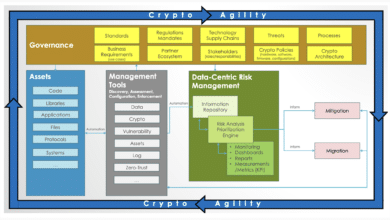

Prioritize cryptographic inventory for ECC dependencies. Identify every system, protocol, and certificate chain that relies on ECDH, ECDHE, ECDSA, or EdDSA. This is your primary quantum attack surface – not RSA.

Deploy ML-KEM for key agreement in all new systems. NIST has finalized ML-KEM (FIPS 203). Hybrid deployments combining ML-KEM with classical ECDH are supported by major TLS libraries and provide protection against both quantum and classical attacks during the transition period.

Begin signature migration planning. ML-DSA (FIPS 204) and SLH-DSA (FIPS 205) are standardized. Evaluate certificate chain implications, key sizes, and performance impacts for your specific infrastructure. The governance and compliance aspects of signature migration are typically more complex than the technical implementation.

Update quantum risk models with ECC-specific thresholds. If your risk model pegs quantum timelines to RSA-2048 estimates, it is overestimating the time available for ECC-protected systems. Recalibrate using ECC-specific qubit counts: approximately 1,200 logical qubits for P-256, not the 2,000+ that RSA-3072 requires.

Monitor the optimization pipeline. The Rennes team has published their code. Gidney and others in the quantum algorithms community will almost certainly work on reducing the gate count, as happened with the RSA result. Each optimization iteration brings the practical attack threshold closer.

The Shrinking Distance

In the early 2000s, the idea that a quantum computer might break ECC with just over a thousand logical qubits would have seemed absurd. The best estimates at the time required tens of thousands. The steady drumbeat of algorithmic improvements – from Proos and Zalka’s 5n qubits, to Häner et al.’s optimized circuits, to this paper’s 3.12n – represents exactly the kind of incremental-but-relentless progress that catches organizations off guard when they extrapolate linearly from a single point-in-time estimate.

The distance between current quantum hardware and a machine capable of breaking P-256 is still measured in orders of magnitude. But orders of magnitude are not what they used to be. In 2019, breaking RSA-2048 required an estimated 20 million physical qubits. In 2025, it required fewer than a million. That is a 20x reduction in six years, driven almost entirely by algorithmic and error-correction advances – not by building a bigger machine.

The same compression is now underway for ECC, and it starts from a lower baseline. The question for security leaders is no longer whether post-quantum migration is necessary, but whether their migration timeline accounts for the pace at which the target is moving.

The paper “Reducing the Number of Qubits in Quantum Discrete Logarithms on Elliptic Curves” by Clémence Chevignard, Pierre-Alain Fouque, and André Schrottenloher was published at EUROCRYPT 2026 and is available on the IACR Cryptology ePrint Archive. The authors’ implementation is available on Inria’s GitLab.

Quantum Upside & Quantum Risk - Handled

My company - Applied Quantum - helps governments, enterprises, and investors prepare for both the upside and the risk of quantum technologies. We deliver concise board and investor briefings; demystify quantum computing, sensing, and communications; craft national and corporate strategies to capture advantage; and turn plans into delivery. We help you mitigate the quantum risk by executing crypto‑inventory, crypto‑agility implementation, PQC migration, and broader defenses against the quantum threat. We run vendor due diligence, proof‑of‑value pilots, standards and policy alignment, workforce training, and procurement support, then oversee implementation across your organization. Contact me if you want help.