10,000 Qubits to Run Shor’s Algorithm

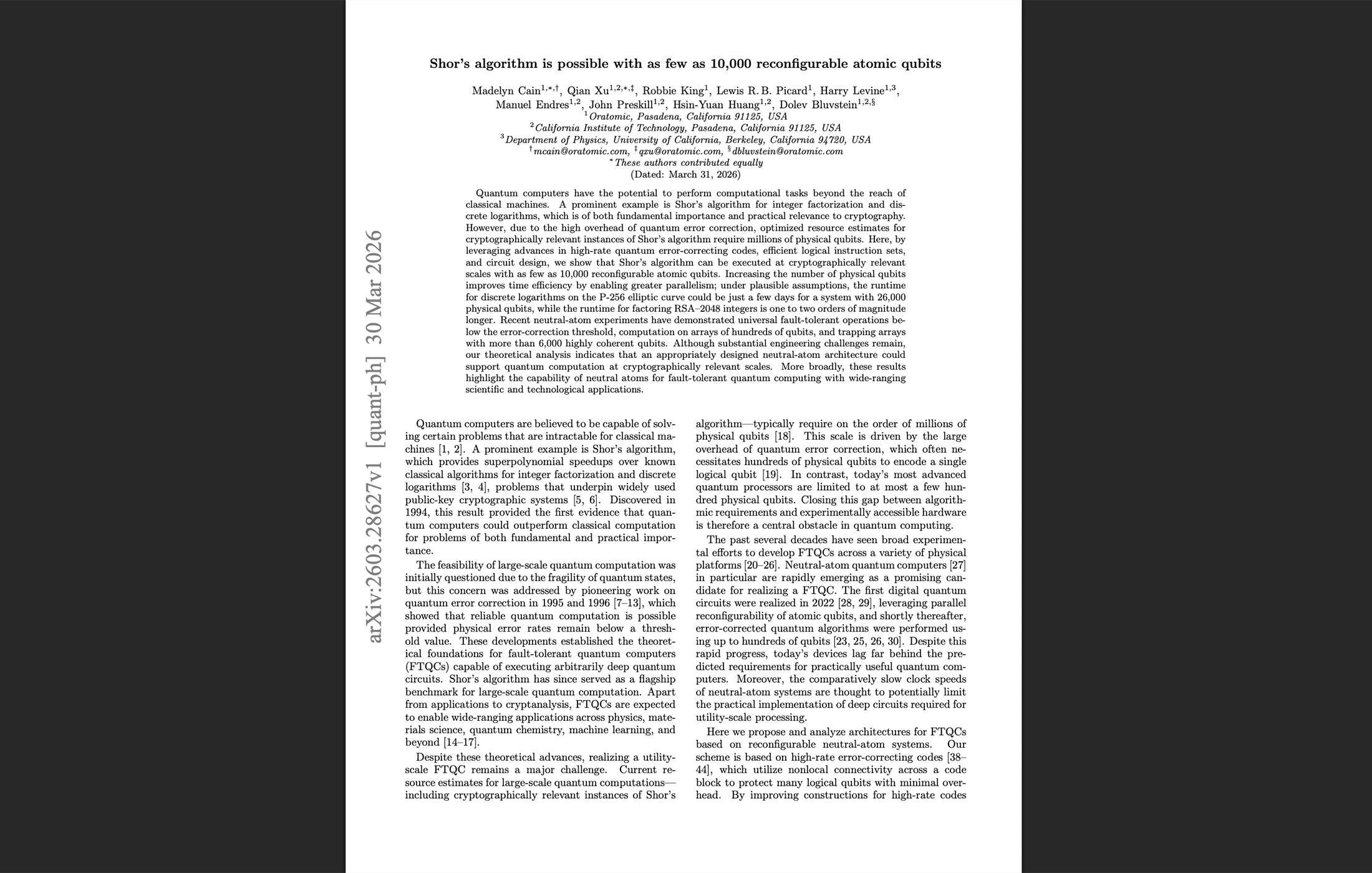

31 Mar 2026 – On the same day that Google Quantum AI published its landmark ECDLP-256 resource estimates showing fewer than 500,000 superconducting qubits could break cryptocurrency cryptography in minutes, a team from Oratomic, Caltech, and UC Berkeley quietly dropped a paper making an even more startling claim about qubit count: Shor’s algorithm can be executed at cryptographically relevant scales with as few as 10,000 reconfigurable neutral atom qubits.

The paper, titled “Shor’s algorithm is possible with as few as 10,000 reconfigurable atomic qubits,” is authored by Madelyn Cain, Qian Xu, Robbie King, Lewis Picard, Harry Levine, Manuel Endres, John Preskill, Hsin-Yuan Huang, and Dolev Bluvstein. The team spans Oratomic (a new quantum computing startup based in Pasadena), Caltech, and UC Berkeley. For those tracking the field, the names Bluvstein (who led Harvard’s landmark neutral atom fault-tolerance demonstrations), Preskill (one of the founders of quantum error correction theory), and Endres (Caltech atomic physics) signal exceptional technical credibility.

The two papers are not independent. Oratomic’s ECC-256 resource estimates explicitly use Google’s newly published circuit compilations — the same circuits verified by Google’s zero-knowledge proof. Google showed the circuits are efficient. Oratomic shows those circuits can run on dramatically fewer physical qubits — but on a fundamentally different type of machine, with fundamentally different implications for the threat timeline.

The Core Numbers

For elliptic curve discrete logarithms on 256-bit curves (ECC-256), Oratomic explores architectures ranging from approximately 10,000 to 26,000 physical qubits. Their “space-efficient” architecture uses roughly 9,700–11,000 physical qubits. Their “balanced” architecture — which enables more parallel computation — uses roughly 12,000–13,300 physical qubits. A “time-efficient” architecture with greater parallelism scales to approximately 26,000 qubits.

For RSA-2048, the space-efficient and balanced architectures require roughly 11,000–13,300 physical qubits, while a parallelized time-efficient architecture scales to approximately 102,000 qubits.

At the low end of roughly 10,000 physical qubits, these numbers are about 50x lower than Google’s 500,000-qubit estimate and about 100x lower than Gidney’s roughly 1-million-qubit RSA-2048 estimate. This is the first credible architecture paper showing cryptographically relevant Shor instances below 15,000 physical qubits on any platform. The reduction comes from one primary source: high-rate quantum Low-Density Parity-Check (qLDPC) codes with approximately 30% encoding rate, compared to roughly 4% for the surface codes used in Google’s and Gidney’s estimates. These lifted-product codes pack far more logical qubits per physical qubit. For example, their largest code encodes 1,480 logical qubits in just 5,278 physical qubits with distance 24.
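To see how the headline reduction depends on which Oratomic architecture is compared, the ratios can be worked out directly. This is a minimal sketch using only the approximate figures quoted in this article; the variable and function names are illustrative, and the "balanced" value of 13,000 is a rounded stand-in for the quoted 12,000–13,300 range.

```python
# Approximate physical-qubit figures as quoted in this article.
GOOGLE_ECC256 = 500_000      # surface code, superconducting (upper bound)
GIDNEY_RSA2048 = 1_000_000   # surface code, superconducting (upper bound)

# Oratomic ECC-256 architectures (rounded, illustrative values).
ORATOMIC_ECC256 = {
    "space-efficient": 10_000,
    "balanced": 13_000,
    "time-efficient": 26_000,
}


def reduction_factor(baseline: int, estimate: int) -> float:
    """Return how many times smaller `estimate` is than `baseline`."""
    return baseline / estimate


for name, qubits in ORATOMIC_ECC256.items():
    factor = reduction_factor(GOOGLE_ECC256, qubits)
    print(f"{name:>15}: about {factor:.0f}x fewer qubits than Google's estimate")
```

The 50x figure applies to the space-efficient end of the range; the faster architectures give up part of that advantage in exchange for shorter runtimes.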

The Runtime Tradeoff: Days and Months, Not Minutes

The 10,000-qubit number comes with a fundamental tradeoff that must not be glossed over: runtime.

Neutral atom platforms are “slow-clock” architectures. Where superconducting qubits operate with error correction cycle times around 1 microsecond, neutral atoms currently require milliseconds — roughly 1,000 times slower. Oratomic assumes a 1-millisecond stabilizer measurement cycle, which they note represents an engineering target that has not yet been achieved at scale (current experimental systems run at several milliseconds per cycle on much smaller arrays).

For ECC-256, the balanced architecture requires approximately 264 days. The time-efficient architecture with 26,000 qubits and parallelized operations brings this down to approximately 10 days. For RSA-2048, runtimes are one to two orders of magnitude longer still — meaning months to years depending on the architecture.
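A rough way to separate "slow clock" from "more work" is to convert the quoted wall-clock runtimes into error-correction cycle counts. The sketch below assumes the cycle times stated in this article (1 μs and 1 ms) and deliberately ignores parallelism, code differences, and everything else about the architectures; the names are illustrative.

```python
from datetime import timedelta

# Cycle times as quoted in the article (the 1 ms figure is Oratomic's assumed target).
SUPERCONDUCTING_CYCLE = timedelta(microseconds=1)
NEUTRAL_ATOM_CYCLE = timedelta(milliseconds=1)


def qec_cycles(runtime: timedelta, cycle_time: timedelta) -> float:
    """Number of error-correction cycles that fit in `runtime`."""
    return runtime / cycle_time


clock_ratio = NEUTRAL_ATOM_CYCLE / SUPERCONDUCTING_CYCLE
print(f"clock ratio: {clock_ratio:.0f}x")

estimates = [
    ("Google, ECC-256, 9 min", timedelta(minutes=9), SUPERCONDUCTING_CYCLE),
    ("Google, ECC-256, 23 min", timedelta(minutes=23), SUPERCONDUCTING_CYCLE),
    ("Oratomic, time-efficient, 10 days", timedelta(days=10), NEUTRAL_ATOM_CYCLE),
    ("Oratomic, balanced, 264 days", timedelta(days=264), NEUTRAL_ATOM_CYCLE),
]
for label, runtime, cycle in estimates:
    print(f"{label:<36} {qec_cycles(runtime, cycle):.2e} cycles")
```

Under these assumptions the 10-day neutral atom run and the 9-minute superconducting run land at a similar order of magnitude in cycle count, which suggests that most of the wall-clock gap in that comparison comes from the clock speed itself.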

Compare this directly to Google’s estimates: 9–23 minutes for ECC-256 on a fast-clock superconducting machine.

This is the difference between being able to intercept a live Bitcoin transaction and being limited to attacking dormant wallets with long-exposed public keys. On-spend attacks, which Google showed are plausible for fast-clock CRQCs operating within Bitcoin’s 10-minute block time, are completely out of reach for a neutral atom CRQC running Shor’s algorithm over days or months.

The Same-Day Publication Is Not a Coincidence

The coordinated timing of these two papers tells a story that neither paper tells alone.

Google’s cryptocurrency whitepaper explicitly defines two scenarios for the emergence of CRQCs:

- Scenario 1: The first CRQCs are fast-clock devices (superconducting, photonic, silicon spin). Both at-rest and on-spend attacks become viable simultaneously. Google’s own resource estimates provide the detailed numbers for this scenario.

- Scenario 2: Slow-clock platforms (neutral atom, ion trap) advance more rapidly. At-rest attacks become viable well before on-spend attacks. The Oratomic paper provides the detailed numbers for this scenario.
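The two scenarios can be expressed as a simple classification on runtime. The sketch below is a deliberately crude model: it assumes the on-spend window equals Bitcoin's 10-minute average block time and uses the best-case 9-minute and 10-day runtimes quoted above. The class name, field names, and threshold rule are hypothetical and are not taken from either paper.

```python
from dataclasses import dataclass
from datetime import timedelta

# Assumption: treat Bitcoin's ~10-minute average block time as the on-spend window.
ON_SPEND_WINDOW = timedelta(minutes=10)


@dataclass(frozen=True)
class CrqcScenario:
    name: str
    runtime: timedelta  # wall-clock time to recover one private key

    def viable_attacks(self, window: timedelta = ON_SPEND_WINDOW) -> list[str]:
        """At-rest attacks are always possible; on-spend only if fast enough."""
        attacks = ["at-rest"]
        if self.runtime <= window:
            attacks.append("on-spend")
        return attacks


fast_clock = CrqcScenario("Scenario 1 (fast-clock)", timedelta(minutes=9))
slow_clock = CrqcScenario("Scenario 2 (slow-clock)", timedelta(days=10))

for scenario in (fast_clock, slow_clock):
    print(scenario.name, "->", ", ".join(scenario.viable_attacks()))
```

A real assessment would need to account for mempool timing, confirmation depth, and any precomputation an attacker can do, none of which this threshold captures.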

Published on the same day, these papers present the complete threat landscape across both scenarios. Regardless of which hardware modality reaches cryptographic relevance first, the conclusion is identical: PQC migration must begin now.

The interrelationship goes deeper than timing. Google’s paper uses Oratomic’s work as a reference point, and Oratomic’s resource estimates build directly on Google’s circuit compilations. Google explicitly acknowledges in its paper that while its estimates focus on planar surface code architectures, “resource estimates could be reduced substantially by making more aggressive assumptions about hardware capabilities” — a direct nod to exactly the kind of high-rate qLDPC architecture Oratomic presents.

How This Compares Across the Full Landscape

| | Google (Mar 2026) | Oratomic (Mar 2026) | Gidney (May 2025) | Pinnacle (Feb 2026) |
|---|---|---|---|---|
| Target | ECC-256 | ECC-256 + RSA-2048 | RSA-2048 | RSA-2048 |
| Physical qubits | <500,000 | ~10,000–26,000 (ECC) | <1,000,000 | <100,000 |
| Error correction | Surface code (planar) | qLDPC (~30% rate) | Surface code (planar) | qLDPC (bicycle) |
| Cycle time | 1 μs | 1 ms | 1 μs | 1 μs |
| Platform | Superconducting | Neutral atom | Superconducting | Superconducting |
| ECC-256 runtime | ~9–23 min | ~10–264 days | N/A | N/A |
| RSA-2048 runtime | N/A | months–years | <1 week | ~1 month |
| On-spend viable? | Yes | No | N/A | N/A |

The pattern across these four papers is now clear. Surface code estimates on superconducting hardware have converged around 500,000–1,000,000 physical qubits with runtimes from minutes (ECC) to days (RSA). High-rate qLDPC estimates — from both Pinnacle (superconducting) and Oratomic (neutral atom) — push qubit counts down by 5–50x, but at the cost of either runtime (Oratomic: days-to-months), hardware maturity (Pinnacle: undemonstrated degree-10 connectivity), or both.

What This Paper Does NOT Claim

The paper does not claim that 10,000 neutral atom qubits exist today in a configuration capable of running Shor’s algorithm. Current neutral atom systems have demonstrated individually controlled tweezer arrays of up to 6,100 atoms (Caltech) and fault-tolerant operations on up to roughly 500 qubits. Separately, optical lattice experiments have loaded and imaged over 10,000 strontium atoms with high fidelity (Zeiher/planqc, 2023), though in a bulk-loaded lattice configuration rather than individually addressed tweezer qubits. The gap between demonstrated capability and the paper’s requirements — while smaller than for superconducting architectures — remains substantial.

The paper does not claim that its surgery gadgets for logical operations on high-rate qLDPC codes are optimized or fully mature. The authors explicitly describe their construction as “a theoretically consistent existence proof, which may not be optimal in terms of resource costs.” They note that constructing and verifying surgery gadgets for the largest codes becomes “computationally prohibitive.”

And the paper does not claim that 1-millisecond cycle times have been achieved. Current experimental systems operate at several milliseconds per cycle on far fewer qubits. Achieving the assumed cycle time at 10,000+ qubits requires significant engineering development in atom transport, readout speed, and laser beam management.

My Analysis — The Slow-Clock Scenario Gets Real

The 30% Encoding Rate: Where the Qubit Reduction Actually Comes From

The 50x reduction in physical qubits relative to Google’s surface code estimates is not magic — it is the direct consequence of switching from ~4% encoding rate codes to ~30% encoding rate codes. Surface codes encode one logical qubit per code block, with physical overhead scaling as roughly 2(d+1)² physical qubits per logical qubit (where d is the code distance). Oratomic’s lifted-product codes encode hundreds of logical qubits per block: their lp₂₄ code packs 1,480 logical qubits into 5,278 physical qubits at distance 24.
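The encoding rate of the lp₂₄ code follows directly from the two numbers quoted above. The sketch below computes it and compares it with the roughly 4% surface-code rate cited in this article. It looks only at the storage density of a single code block and does not model ancilla qubits, processor codes, or differences in code distance, so it should not be read as reproducing the headline reduction. Names are illustrative.

```python
def encoding_rate(logical_qubits: int, physical_qubits: int) -> float:
    """Encoding rate k/n of a quantum code block."""
    return logical_qubits / physical_qubits


# lp24 parameters as quoted in the article: 1,480 logical in 5,278 physical qubits.
LP24_LOGICAL = 1_480
LP24_PHYSICAL = 5_278

# Surface-code rate as quoted in the article (approximate).
SURFACE_CODE_RATE_QUOTED = 0.04

lp24_rate = encoding_rate(LP24_LOGICAL, LP24_PHYSICAL)
physical_per_logical = LP24_PHYSICAL / LP24_LOGICAL
density_gain = lp24_rate / SURFACE_CODE_RATE_QUOTED

print(f"lp24 encoding rate:        {lp24_rate:.1%}")
print(f"physical per logical:      {physical_per_logical:.2f}")
print(f"gain over quoted 4% rate:  {density_gain:.1f}x")
```

The computed rate is about 28%, consistent with the "approximately 30%" figure, at roughly 3.6 physical qubits per stored logical qubit.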

This is the same class of advantage that the Pinnacle Architecture claimed for RSA-2048 using bivariate bicycle codes. The fundamental insight is identical: high-rate codes use non-local connectivity to achieve dramatically better qubit density. The difference is that Pinnacle proposed this on superconducting hardware (requiring engineered non-planar connectivity that does not exist), while Oratomic proposes it on neutral atoms (where reconfigurable connectivity is a native capability of the platform).

This is a legitimate architectural advantage for neutral atoms. Reconfigurable atom arrays can move qubits into arbitrary configurations by physically repositioning optical tweezers, enabling the non-local connectivity that high-rate qLDPC codes demand. Superconducting qubits, by contrast, are fabricated in fixed positions on a chip. Achieving the degree-7 or degree-10 connectivity required by high-rate codes on a superconducting platform requires either physically wiring distant qubits (introducing noise and fabrication complexity) or using intermediary communication qubits (adding overhead).

Put differently: neutral atoms solve the Pinnacle routing problem with physics, not wiring. Where Pinnacle required hypothetical non-planar fabrication to exploit qLDPC density, neutral atoms achieve the same non-local connectivity through the native reconfigurability of optical tweezer arrays. Both papers point in the same direction — the field is moving beyond surface codes toward high-rate qLDPC architectures — but Oratomic’s hardware substrate makes the connectivity assumptions substantially more credible near-term. That convergence across two independent groups using different code families (Pinnacle: bivariate bicycle; Oratomic: lifted-product) reinforces that sub-100,000-qubit CRQCs are a serious architectural design space, not a single speculative proposal.

The Surgery Problem Is the Real Bottleneck

The headline qubit count is seductive, but the technical section that demands the most scrutiny is the code surgery construction. High-rate qLDPC codes are excellent at storing quantum information densely. Operating on that information — performing the logical gates needed to actually run an algorithm — is where the difficulty concentrates.

On surface codes, lattice surgery for logical operations is well-understood, extensively simulated, and has been demonstrated experimentally. On high-rate qLDPC codes, the situation is fundamentally different. The authors use a processor/memory architecture specifically because performing complex operations directly on the large memory codes is impractical: “the complication in actually executing logic on high-rate codes hides inside of the code surgery implementation, which can present substantial challenges.”

Their approach is to teleport logical qubits from a large, dense memory code to a smaller processor code (either a 248-qubit bivariate bicycle code or a 1,122-qubit LP code), perform operations there, and teleport back. This is clever, but each teleportation step requires multiple Pauli product measurements (PPMs), each of which is itself a surgery operation requiring ancilla qubits and multiple error correction cycles. The overhead per Toffoli gate ranges from roughly 8d/3 cycles (theoretical minimum) to 72·2d/3 cycles (space-efficient architecture for ECC-256), depending on how many qubits the processor code can handle at once.
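To get a feel for what those per-Toffoli overheads mean in wall-clock terms, the two bounds can be evaluated for a concrete distance. This sketch reads "72·2d/3" as 72 × (2d/3), assumes the 1 ms cycle time discussed earlier, and uses d = 24 purely as an illustrative value borrowed from the lp₂₄ memory code; the distance actually relevant to each processor code is not stated in this article.

```python
CYCLE_TIME_S = 1e-3  # assumed 1 ms stabilizer measurement cycle


def toffoli_cycles_minimum(d: int) -> float:
    """Theoretical minimum quoted in the article: 8d/3 cycles per Toffoli."""
    return 8 * d / 3


def toffoli_cycles_space_efficient(d: int) -> float:
    """Space-efficient ECC-256 figure, read here as 72 * (2d/3) cycles."""
    return 72 * (2 * d / 3)


d = 24  # illustrative distance only
for label, cycles in [
    ("theoretical minimum", toffoli_cycles_minimum(d)),
    ("space-efficient", toffoli_cycles_space_efficient(d)),
]:
    seconds = cycles * CYCLE_TIME_S
    print(f"{label:<20} {cycles:7.0f} cycles  ~{seconds:.3f} s per Toffoli")
```

Under these assumptions the space-efficient architecture spends 18 times as many cycles per Toffoli as the theoretical minimum, independent of d.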

The authors are transparent about the limitations. Their surgery gadgets are “existence proofs” verified numerically only for the smaller processor codes, and even that verification required extensive computational effort. For the largest codes, verification was not feasible. More advanced decoders could improve performance, but those decoders do not yet exist for these code families. One notable methodological detail: the paper mentions that several of its code instances were found via an “LLM-assisted heuristic computer search” — a sign of the times, and a construction approach the community will want to independently reproduce and validate.

This maps directly to Capabilities B.1 (QEC) and D.2 (Decoder Performance) in my CRQC Quantum Capability Framework. The codes themselves are impressive. The operational infrastructure to use them at algorithmic scale remains largely theoretical.

The Hardware Gap Is Smaller — But Still Real

One argument in Oratomic’s favor is that the gap between current experimental demonstrations and the paper’s requirements is narrower than for superconducting platforms. Current neutral atom systems have demonstrated 6,100 individually trapped atoms in tweezer arrays (Caltech, without computation) and fault-tolerant operations on ~500 qubits at 2x below threshold (also Caltech). Optical lattice experiments have separately demonstrated loading and high-fidelity imaging of over 10,000 atoms (Zeiher/planqc, 2023), though in a bulk configuration without individual qubit control. The paper requires ~10,000 individually addressable qubits at the same or better error rates. That is a roughly 1.6x scaling in individually controlled trap size (from 6,100 atoms), though the lattice experiments demonstrate that trapping at the 10K scale is already physically feasible.

Compare this to Google’s scenario: scaling from current superconducting devices of ~1,000 qubits to 500,000 — a 500x increase.
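The two scale-up factors can be checked with a few lines of arithmetic. This is a sketch using only the approximate figures quoted above; it compares raw qubit counts and says nothing about error rates, control, or computation at that scale.

```python
def scale_up(current: int, required: int) -> float:
    """Factor by which the demonstrated qubit count must grow."""
    return required / current


# Neutral atoms: 6,100 trapped atoms (tweezer array) vs ~10,000 required.
neutral_atom_gap = scale_up(6_100, 10_000)

# Superconducting: ~1,000 qubits today vs 500,000 in Google's estimate.
superconducting_gap = scale_up(1_000, 500_000)

print(f"neutral atom scale-up:    {neutral_atom_gap:.1f}x")
print(f"superconducting scale-up: {superconducting_gap:.0f}x")
```

The neutral atom figure compares against trapped atoms rather than atoms used in fault-tolerant computation; measured against the ~500-qubit fault-tolerance demonstrations instead, the same function gives a 20x gap.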

The paper identifies a specific path to bridging the gap. Current neutral atom systems use continuous-wave lasers with only ~0.1% duty cycle for entangling operations (~200ns gates separated by ~200μs idle). By rastering the beam dynamically to increase duty cycle, they argue the number of atoms addressable at high fidelity could increase by three orders of magnitude. Metasurface-generated tweezer arrays with 360,000 trapping sites have already been demonstrated (without atoms), and arrays with 6,100 trapped atoms exist.
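The duty-cycle figure and the claimed headroom follow from the gate and idle times quoted above. The sketch below assumes the idealized limit in which the laser could be kept busy the entire time; the constant names are illustrative.

```python
GATE_TIME_S = 200e-9   # ~200 ns entangling gate
IDLE_TIME_S = 200e-6   # ~200 us idle between gates

# Fraction of time the entangling laser is actually doing useful work.
duty_cycle = GATE_TIME_S / (GATE_TIME_S + IDLE_TIME_S)

# Idealized upper bound on the gain from rastering the beam to fill the idle time.
max_gain = 1 / duty_cycle

print(f"duty cycle: {duty_cycle:.3%}")
print(f"ideal gain: ~{max_gain:.0f}x")
```

The result, about 0.1% duty cycle and roughly 1,000x of ideal headroom, matches the "three orders of magnitude" argument, though any real system would recover only part of that bound.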

These are plausible engineering arguments, though none have been demonstrated in an integrated system. The relevant CRQC framework capabilities are E (Engineering Scale & Manufacturability), B.3 (Below-Threshold Operation & Scaling), and D.3 (Continuous Operation). The last is particularly demanding given that the ECC-256 computation would need to run continuously for days to months.

What This Means for Threat Timeline Assessment

The practical impact of the Oratomic paper is not that Q-Day is closer — it is that the range of plausible CRQC architectures threatening ECC has broadened dramatically.

If your threat model assumed that a CRQC would require millions of physical qubits, this paper is a direct rebuttal. The era of using “millions of qubits” as a comfortable proxy for “decades away” is over. Two credible architecture papers, Google’s and Oratomic’s, now show CRQC-scale Shor execution below 500,000 qubits, with Oratomic pushing that number below 30,000.

Before these two papers, the implicit assumption in most threat assessments was that a CRQC would be a massive superconducting machine requiring millions of physical qubits. The “comfort margin” was the sheer engineering difficulty of building such a machine.

After these two papers, the picture is: a superconducting CRQC at ~500,000 qubits could break ECC in minutes (Google), OR a neutral atom CRQC at ~10,000–26,000 qubits could break ECC in days (Oratomic), OR a qLDPC-enabled superconducting machine at ~100,000 qubits could break RSA in a month (Pinnacle).

And a 10-day runtime, while useless for on-spend attacks, is devastating for at-rest targets. Consider what a slow-clock CRQC could systematically harvest at that pace. The ~1.7 million BTC in Satoshi-era P2PK scripts, where public keys sit directly on the blockchain, can be cracked one wallet at a time — and many individual wallets hold thousands of BTC. The roughly 5.2 million BTC in reused P2PKH addresses, where prior spend transactions exposed the keys. Every Ethereum account that has ever initiated a transaction — including the top 1,000 accounts holding ~20.5 million ETH. Every exposed smart contract admin key controlling stablecoin minting, bridge liquidity, or DeFi governance. And the on-setup targets — Ethereum’s KZG trusted setup parameters, Tornado Cash parameters, Mimblewimble commitment generators — where a single 10-day quantum computation produces a permanent classical exploit reusable without further quantum access.
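A back-of-envelope throughput estimate shows what "patient" means in practice. The sketch below assumes a single machine breaking one key per 10-day run, strictly sequentially, with no downtime and no amortization across keys. Those are simplifying assumptions for illustration and are not claims from the paper.

```python
DAYS_PER_KEY = 10  # time-efficient ECC-256 runtime quoted in the article

# Exposed-key BTC figures as quoted in the article.
P2PK_BTC = 1_700_000
REUSED_P2PKH_BTC = 5_200_000
exposed_btc = P2PK_BTC + REUSED_P2PKH_BTC


def keys_per_year(days_per_key: float, machines: int = 1) -> float:
    """Keys recoverable per year under strictly sequential operation."""
    return machines * 365 / days_per_key


print(f"exposed BTC (article figures): {exposed_btc:,}")
print(f"keys per year, one machine:    {keys_per_year(DAYS_PER_KEY):.1f}")
print(f"keys per year, ten machines:   {keys_per_year(DAYS_PER_KEY, machines=10):.0f}")
```

At a few dozen keys per machine per year, an attacker would have to prioritize the highest-value exposed keys, which is why wallets holding thousands of BTC each and one-time setup parameters are the natural first targets.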

A slow-clock CRQC does not need to be fast. It needs to be patient. And at 10,000–26,000 qubits, it needs to be far smaller than anyone was planning for.

The diversity of viable paths is itself the threat. It means that monitoring only one hardware modality — only superconducting, or only neutral atoms — provides an incomplete picture. And it means that multiple independent actors, with access to different technologies, could reach cryptographic relevance through different routes.

Google’s cryptocurrency paper made this point explicitly: “the final stages of the race to build a large fault-tolerant quantum computer may see ‘late-joiners’ attempting a rapid breakout toward a CRQC” and “the existence of early CRQCs may first be detected on the blockchain rather than announced.” Oratomic’s paper makes that scenario more concrete by showing that a relatively small, room-temperature neutral atom system — not a massive cryogenic superconducting installation — could be sufficient.

The ECC Thesis, Confirmed Again

This paper reinforces the same pattern I highlighted in my analysis of the Google paper: ECC is the more immediately vulnerable primitive. Oratomic’s own comparison shows ECC-256 requiring roughly 10,000–26,000 qubits, with runtimes from about 10 days (time-efficient architecture) to about 264 days (balanced architecture), while RSA-2048 on the same architectures requires 1–2 orders of magnitude more time. The paper’s Figure 1b shows how resource estimates for both problems have dropped by five orders of magnitude over two decades, with ECC consistently requiring less.

As I have argued for years, the quantum security community’s fixation on RSA-2048 as the benchmark has created a false sense of timeline distance for ECC-dependent systems. RSA is being phased out. ECC protects cryptocurrency. And ECC falls first, on every architecture.

What CISOs Should Take From Both Papers Together

The Google and Oratomic papers, published on the same day, form a complete threat assessment:

If the first CRQC is superconducting (Google’s scenario): ~500,000 qubits, minutes. Both at-rest and on-spend attacks. Immediate threat to all cryptocurrency transactions.

If the first CRQC is neutral atom (Oratomic’s scenario): ~10,000–26,000 qubits, days to months. At-rest attacks only. Immediate threat to dormant wallets and exposed-key assets (~6.9 million BTC, ~20.5 million ETH in top-1000 accounts), but active transactions with unexposed keys are safe for now.

Either way: PQC migration is urgent. The only difference between the scenarios is whether you also need on-spend mitigations (private mempools, commit-reveal schemes) immediately, or whether you have a brief window where key hygiene alone provides interim protection.

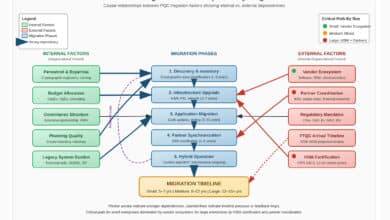

Organizations tracking quantum readiness should now maintain parallel threat models for both fast-clock and slow-clock CRQC scenarios, and update physical qubit thresholds accordingly: ~500,000 for surface code architectures, ~10,000–26,000 for high-rate qLDPC on neutral atoms.

The message converging from four papers in under a year is unambiguous: sub-100,000-qubit CRQCs are no longer science fiction. They are an engineering problem. The technical path to post-quantum security is clear: NIST-standardized algorithms exist and real-world deployments are underway on multiple blockchains. The remaining challenge is execution, coordination, and will. Two papers, one day, two architectures, one conclusion: migrate now.
