Silicon Catches Its First Errors

29 Jan 2026 – Quantum error correction is the wall that separates toy quantum computers from useful ones. Every qubit platform must eventually climb it. The first step up that wall is not correcting errors — that requires mid-circuit measurement and real-time feedback, capabilities that push the engineering envelope of any architecture. The first step is detecting them: identifying that an error has occurred, which qubit it affected, and what type of error it was, without destroying the quantum information you are trying to protect.

For silicon spin qubits, that first step has now been taken. A team at the Shenzhen International Quantum Academy (SZIQA) and Southern University of Science and Technology (SUSTech), led by Yu He and Dapeng Yu, has demonstrated stabilizer-based quantum error detection in a donor-based silicon quantum processor. Published in Nature Electronics, the work uses four phosphorus nuclear spin qubits and one electron spin auxiliary qubit to detect arbitrary single-qubit errors using the same stabilizer measurement framework that underpins the surface code and other leading error-correcting codes.

Previous error correction experiments in semiconductor spin qubits, by Takeda et al. in silicon and van Riggelen et al. in germanium (both 2022), demonstrated phase-flip error correction using coherent conditional rotations, a different and more limited approach. This is the first time stabilizer-based error detection, the technique compatible with fault-tolerant architectures such as the surface code, has been realized in a silicon quantum processor.

The Processor and Its Capabilities

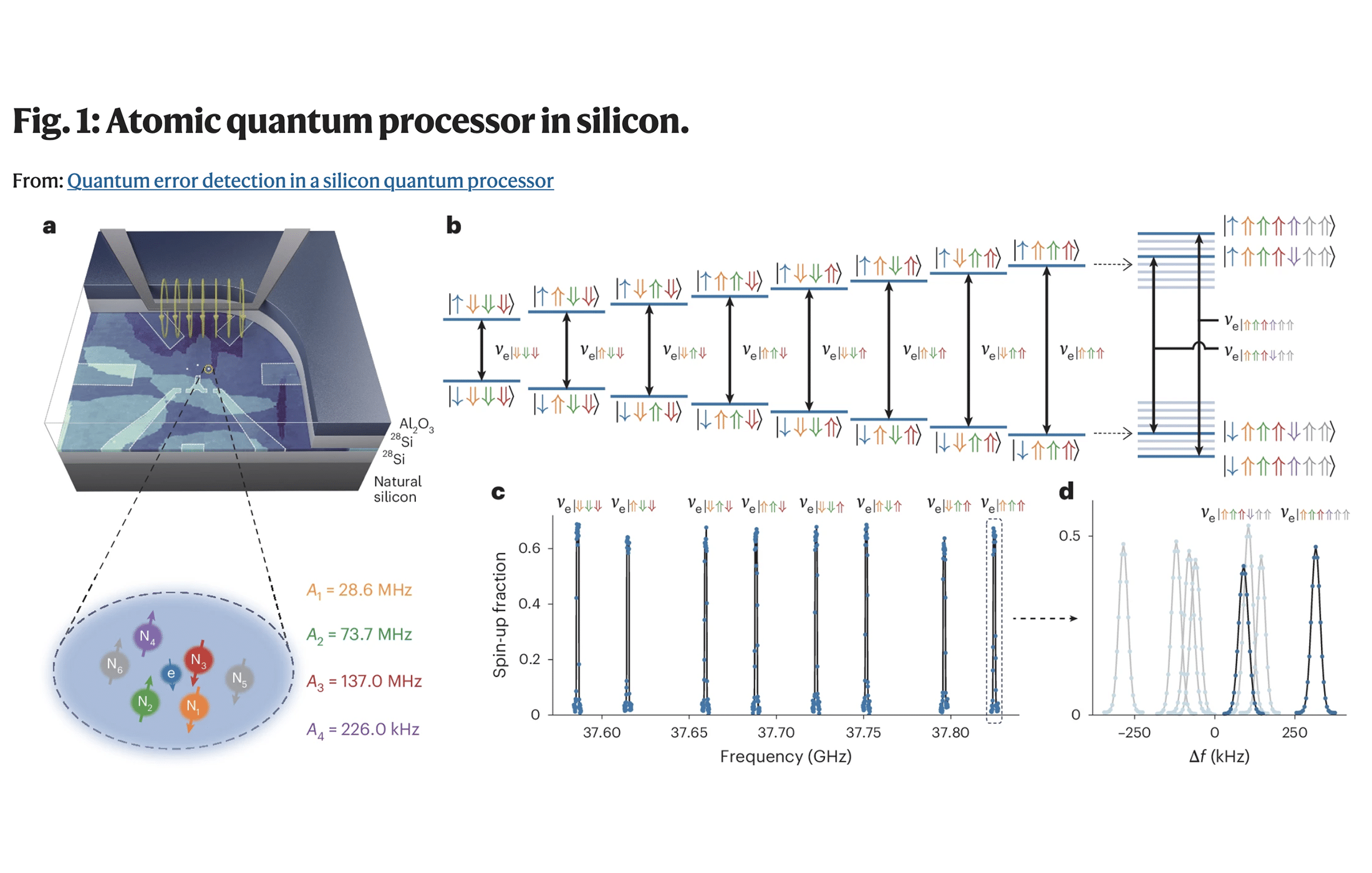

The SZIQA team’s processor is a phosphorus donor cluster in isotopically purified ²⁸Si, fabricated using STM hydrogen lithography. The cluster contains five ³¹P nuclei and one fortuitous hydrogen nucleus, all coupled to a shared electron through hyperfine interactions. Four of the five phosphorus nuclear spins serve as data qubits, while the electron spin functions as an auxiliary qubit for readout.

The device is controlled through a combination of NMR pulses (for nuclear spin rotations) and ESR transitions (for multi-qubit gates mediated by the electron). The hyperfine coupling strengths vary across the five nuclei — ranging from 226 kHz to 137 MHz — enabling individual addressability and high-connectivity multi-qubit gates within the cluster.

The team reports single-qubit Clifford gate fidelities of 99.57% and two-qubit CZ gate fidelities of 97.76%. These are solid but fall short of the level achieved by the 11-qubit processor from Silicon Quantum Computing (SQC), which reached 99.9% on two-qubit gates. The difference likely reflects both device-specific factors and the more challenging spectral environment of a five-donor cluster compared to SQC’s carefully separated two-register architecture.

The processor’s entanglement capability was first validated through the preparation of two-qubit Bell states between nuclear spins, followed by a four-qubit Greenberger-Horne-Zeilinger (GHZ) state — the maximally entangled state across all four data qubits — with a fidelity of 88.5 ± 2.3%. This four-qubit entanglement is a prerequisite for the error detection protocol, which relies on the ability to create and measure multi-qubit correlations.
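To make the fidelity figure concrete, here is a minimal NumPy sketch of how a GHZ-state fidelity is defined. The white-noise model and the mixing parameter `p` are illustrative assumptions, not the paper's noise model or its tomography procedure.

```python
import numpy as np

def ghz_state(n):
    """Return the n-qubit GHZ state (|0...0> + |1...1>)/sqrt(2) as a vector."""
    psi = np.zeros(2**n, dtype=complex)
    psi[0] = 1 / np.sqrt(2)
    psi[-1] = 1 / np.sqrt(2)
    return psi

def fidelity(rho, psi):
    """Fidelity <psi|rho|psi> of a density matrix with a pure target state."""
    return float(np.real(np.vdot(psi, rho @ psi)))

n = 4
psi = ghz_state(n)
ideal = np.outer(psi, psi.conj())

# Toy noise model (an assumption): mix the ideal state with white noise.
p = 0.1
rho = (1 - p) * ideal + p * np.eye(2**n) / 2**n

print(f"GHZ fidelity under toy noise: {fidelity(rho, psi):.4f}")
```

In an experiment the density matrix is not known in advance; the fidelity has to be estimated from measurements, which is why the reported value carries an uncertainty.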

How Stabilizer Error Detection Works

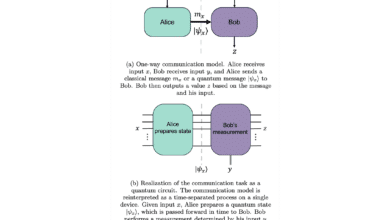

The paper’s central contribution is the implementation of stabilizer measurements for error detection, the same theoretical framework that underlies the surface code and most mainstream quantum error-correcting codes.

The idea, stripped to its essentials: in a quantum error-correcting code, the valid (error-free) quantum states satisfy specific mathematical relationships called stabilizer conditions. The stabilizers are multi-qubit Pauli operators, like XXXX or ZZZZ, that return +1 when measured on any valid code state. An error that anticommutes with a stabilizer flips that stabilizer’s outcome to −1, so the pattern of flipped outcomes forms a “syndrome” that reveals both the presence and the type of the error.

The SZIQA team’s error detection circuit implements exactly this. After preparing an encoded state across four nuclear spins, they measure the stabilizer operators by mapping their eigenvalues onto the electron spin and reading it out. A syndrome of +1 on all stabilizers means no detectable error; a flipped stabilizer indicates which type of error occurred.
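The syndrome logic can be checked numerically. The sketch below assumes the two stabilizers are XXXX and ZZZZ and uses a four-qubit GHZ state as the encoded state. Both choices are illustrative, and the code computes expectation values directly instead of modelling the electron-mediated readout used in the experiment.

```python
from functools import reduce
import numpy as np

I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)
Y = 1j * X @ Z
PAULIS = {"X": X, "Y": Y, "Z": Z}
N = 4  # number of data qubits

def kron_all(ops):
    """Tensor product of a list of single-qubit operators."""
    return reduce(np.kron, ops)

def single_qubit_error(name, qubit):
    """Pauli `name` acting on `qubit`, identity on the other qubits."""
    ops = [I2] * N
    ops[qubit] = PAULIS[name]
    return kron_all(ops)

# Assumed stabilizer set for this sketch.
STABILIZERS = {"XXXX": kron_all([X] * N), "ZZZZ": kron_all([Z] * N)}

def ghz4():
    psi = np.zeros(2**N, dtype=complex)
    psi[0] = psi[-1] = 1 / np.sqrt(2)
    return psi

def syndrome(state):
    """Return the (XXXX, ZZZZ) outcomes, each +1 or -1, for an eigenstate."""
    return tuple(
        int(np.rint(np.vdot(state, S @ state).real)) for S in STABILIZERS.values()
    )

code_state = ghz4()
table = {"none": syndrome(code_state)}
for name in PAULIS:
    # Apply the same error type to each qubit in turn and collect syndromes.
    results = {syndrome(single_qubit_error(name, q) @ code_state) for q in range(N)}
    assert len(results) == 1  # same syndrome whichever qubit is hit
    table[name] = results.pop()

print(table)
```

In this sketch an X error flips only ZZZZ, a Z error flips only XXXX, and a Y error flips both. The syndrome is the same whichever of the four qubits is hit, so these two stabilizers reveal the type of a single-qubit error but not its location.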

The experiment demonstrated detection of all three Pauli error types: bit-flip (X) errors, phase-flip (Z) errors, and the combination (Y errors). By introducing controlled decoherence delays, the team showed that their detection scheme could identify errors that accumulated naturally during idle time — not just artificially injected errors. And crucially, the encoded entanglement could be recovered after detection via a Pauli frame update (applied in classical postprocessing), confirming that the detection did not itself destroy the quantum information.
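A Pauli frame update can be pictured as bookkeeping: instead of applying a physical correction gate, software records the Pauli that should have been applied and reinterprets later measurement results. The sketch below is a generic illustration of that idea. The `PauliFrame` class is hypothetical, it is not the team's postprocessing code, and it assumes the error's type and location are already known (for example, because the error was injected deliberately).

```python
class PauliFrame:
    """Classical record of pending Pauli corrections on n qubits."""

    def __init__(self, n):
        self.x = [0] * n  # 1 where an X component is pending
        self.z = [0] * n  # 1 where a Z component is pending

    def record(self, pauli, qubit):
        """Fold a Pauli (X, Y or Z) on `qubit` into the frame."""
        if pauli in ("X", "Y"):
            self.x[qubit] ^= 1
        if pauli in ("Z", "Y"):
            self.z[qubit] ^= 1

    def correct_z_basis(self, bits):
        """Z-basis outcomes are flipped by pending X components."""
        return [b ^ fx for b, fx in zip(bits, self.x)]

    def correct_x_basis(self, bits):
        """X-basis outcomes are flipped by pending Z components."""
        return [b ^ fz for b, fz in zip(bits, self.z)]


frame = PauliFrame(4)
frame.record("X", 2)            # assumed: a bit-flip on qubit 2 was identified
raw = [0, 0, 1, 0]              # raw Z-basis readout showing the flip
fixed = frame.correct_z_basis(raw)
print(fixed)
```

Because the correction lives entirely in software, no extra gate acts on the qubits, which is why this step can be done after the circuit has finished.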

The Biased Noise Discovery

Perhaps the most consequential finding for the quantum security community is not the error detection itself but what the detection revealed about the nature of noise in silicon donor systems.

The stabilizer measurements showed that the noise is strongly biased: dephasing (phase-flip) errors dominate overwhelmingly, while relaxation (bit-flip) errors are minimal. This is fundamentally different from the noise profile of superconducting qubits, where errors are more symmetric between types.

The bias arises from the physics of nuclear spins in silicon. Nuclear spin lifetimes (T₁) in these systems are extraordinarily long — effectively infinite on the timescale of any current experiment. The nuclear spin simply does not spontaneously flip from |↑⟩ to |↓⟩ in any measurable timeframe. But the nuclear spin can lose its phase coherence (T₂) through fluctuations in its magnetic environment — primarily from the shared electron and any residual ²⁹Si nuclear spins nearby.

This asymmetry has direct implications for error correction design. Theoretical work by Tuckett, Bartlett, Flammia, and Brown has shown that quantum error-correcting codes can be dramatically more efficient when noise is biased: the fault-tolerance threshold for the surface code can exceed 5% under strongly biased noise, compared to approximately 1% under symmetric (depolarizing) noise. Tailored codes designed specifically for biased noise — such as the XZZX code — can push these advantages even further.

The practical translation: silicon donor qubits may require significantly fewer physical qubits per logical qubit than standard resource estimates assume, because those estimates typically model noise as symmetric. If the bias observed in this experiment persists at scale, the overhead of quantum error correction on silicon platforms could be substantially lower than on platforms with more symmetric error profiles.
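A rough back-of-the-envelope calculation shows why the threshold matters for overhead. The sketch below uses the common heuristic that the logical error rate scales as `prefactor * (p / p_th) ** ((d + 1) / 2)` for code distance `d`, and counts `2 * d**2 - 1` physical qubits per rotated surface code patch. The physical error rate, target logical error rate, and prefactor are illustrative assumptions. The two thresholds also come from different noise models and are not strictly comparable, so the output is an indication of the trend, not a resource estimate.

```python
def logical_error_rate(p, p_th, d, prefactor=0.1):
    """Heuristic logical error rate for distance d (an assumed scaling law)."""
    return prefactor * (p / p_th) ** ((d + 1) / 2)

def min_distance(p, p_th, target, max_d=201):
    """Smallest odd distance whose heuristic logical error rate meets target."""
    for d in range(3, max_d + 1, 2):
        if logical_error_rate(p, p_th, d) <= target:
            return d
    raise ValueError("target not reachable below max_d")

def physical_qubits(d):
    """Data plus measurement qubits in one rotated surface code patch."""
    return 2 * d * d - 1

P_PHYS = 2e-3    # assumed physical error rate (illustrative)
TARGET = 1e-12   # assumed target logical error rate (illustrative)

for label, p_th in [("symmetric noise, ~1% threshold", 0.01),
                    ("biased noise, ~5% threshold", 0.05)]:
    d = min_distance(P_PHYS, p_th, TARGET)
    print(f"{label}: distance {d}, {physical_qubits(d)} physical qubits per logical qubit")
```

Under these toy numbers the higher threshold roughly halves the required code distance, and since the qubit count grows with the square of the distance, the per-logical-qubit overhead falls by about a factor of four.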

For organizations using standard CRQC resource estimates to calibrate their PQC migration timelines, this is an important correction. The commonly cited estimates — Gidney’s RSA-2048 analysis, for example — assume surface code error correction with parameters that do not account for platform-specific noise bias. A silicon-optimized error correction architecture exploiting biased noise could meaningfully reduce the physical qubit requirements for cryptanalytic computations.

A Chinese Lab at the Frontier

This result comes from the Shenzhen International Quantum Academy, a research institute established under SUSTech and affiliated with the Hefei National Laboratory. China’s quantum computing efforts have been most prominently associated with photonic (Jiuzhang) and superconducting (Zuchongzhi) platforms. The SZIQA result demonstrates that Chinese researchers are now also operating at the frontier of silicon quantum computing — a platform whose CMOS compatibility gives it unique strategic significance.

The silicon donor qubit field has historically been dominated by Australian groups — particularly SQC and Andrea Morello’s team at UNSW — along with European contributors. The emergence of a competitive Chinese group working with the same STM lithography technique adds a new dimension to the geography of silicon quantum computing.

What Remains: From Detection to Correction

Error detection is necessary but not sufficient for fault-tolerant quantum computing. Detection tells you an error has occurred; correction fixes it in real time without interrupting the computation. The gap between the two is substantial.

Real-time error correction requires mid-circuit measurement — reading out syndrome information from ancilla qubits while the data qubits remain coherent — followed by fast classical processing to determine the appropriate correction, followed by the application of that correction, all within the coherence time of the system. The SZIQA team performs their stabilizer measurements destructively, at the end of the circuit, and applies corrections in classical postprocessing. This is a necessary step on the road, but not yet the destination.

The next milestone for silicon would be non-destructive, mid-circuit syndrome extraction with real-time feedback — the capability that Google demonstrated in superconducting qubits with their below-threshold surface code result, and that several trapped-ion and neutral-atom groups are pursuing. Achieving this in silicon will require advances in measurement speed, ancilla integration, and classical control infrastructure.

But the foundation is now in place. Silicon has demonstrated that its errors can be detected using the same stabilizer framework that the rest of the field has converged on. The noise profile is favourable. The physics is compatible. What remains is engineering.
