QuiX Quantum Achieves First Below-Threshold Error Mitigation in Photonic Quantum Computing

5 Apr 2026 – QuiX Quantum, a Netherlands-based photonic quantum computing company, announced it has demonstrated below-threshold error mitigation on a photonic quantum computer for the first time, in collaboration with NASA’s Quantum Artificial Intelligence Laboratory (QuAIL), the University of Twente, and Freie Universität Berlin.

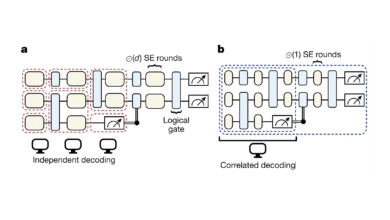

The result, described in a pre-print on arXiv currently undergoing peer review, demonstrates a technique called photon distillation on QuiX’s Bia cloud quantum computing platform. Using a programmable 20-mode silicon-nitride photonic processor, the team showed that quantum interference among multiple imperfect photons can be used to produce cleaner, more indistinguishable photons — reducing photon indistinguishability error by a factor of 2.2.

Critically, the team reports that the protocol achieves net-gain error mitigation even after accounting for noise introduced by the distillation gate itself, delivering a 1.2× net reduction in total error. This meets the two conditions considered essential for meaningful error mitigation in any quantum computing platform: the protocol removes more errors than it introduces, and it does not impede the operation of the rest of the computer.

QuiX claims this is the first time either condition has been demonstrated on photonic hardware, and the first European demonstration of production-ready error reduction on any quantum computing platform.

Numerical modeling presented in the paper suggests that integrating photon distillation with quantum error correction could reduce the number of photon sources required per logical qubit by up to a factor of four — a significant potential reduction in system complexity, since photon sources constitute the vast majority of components in a photonic quantum computer.

The project was partially funded by the Netherlands Ministry of Defense’s Purple NECtar Quantum Challenges initiative, through a project called QSHOR — a name that suggests the defense establishment is interested in photonic paths toward running Shor’s algorithm.

My Analysis: Photonics Finally Gets on the Board

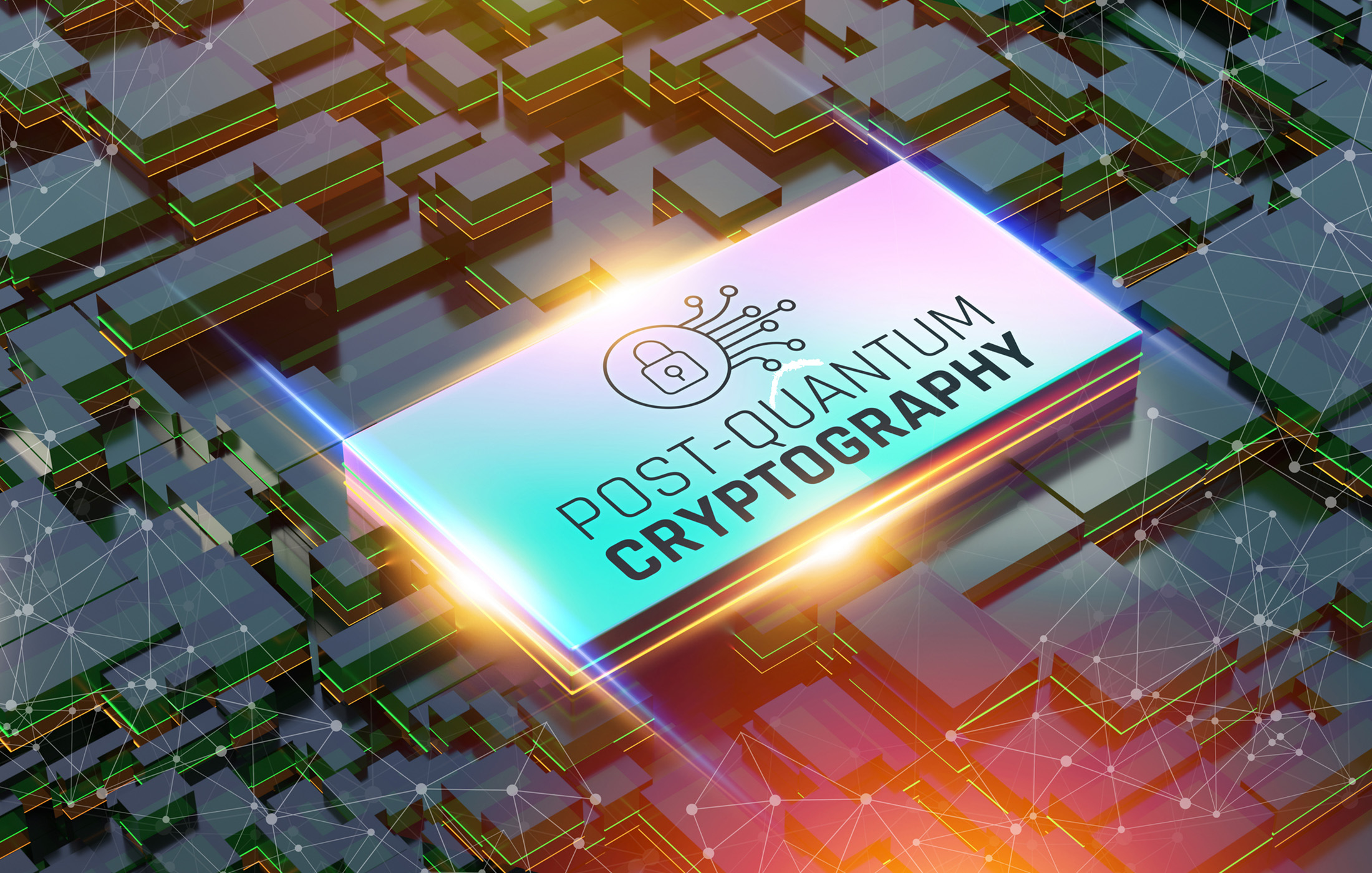

I published my CRQC Scorecard on April 1st — a comprehensive gap analysis mapping every major quantum computing modality against the engineering requirements for a cryptographically relevant quantum computer (CRQC). In that analysis, the photonic section was the starkest: zero demonstrated logical qubits, zero logical gates, and effectively the entire journey remaining on every metric. While superconducting, trapped-ion, and neutral-atom platforms have all demonstrated below-threshold operation, logical qubits, and — in some cases — fault-tolerant gate sets, photonics had nothing comparable to show.

Twenty-four hours later, QuiX has given photonics its first entry on the board.

What This Actually Demonstrates

Let me be precise about what was achieved and what wasn’t, because the press release language is, characteristically, more expansive than the paper warrants.

Photon distillation is not quantum error correction. It is not a logical qubit. It does not demonstrate logical gate operations, magic state production, syndrome extraction, or any of the system-level capabilities in my CRQC Quantum Capability Framework.

What it demonstrates is something more foundational: the ability to address photonic quantum computing’s specific dominant error source – photon indistinguishability – using a hardware-level, coherent technique that operates below threshold and is compatible with fault-tolerant architectures.

Every quantum computing modality has its characteristic noise problem. For superconducting qubits, it’s gate infidelity and decoherence. For trapped ions, it’s crosstalk and heating. For neutral atoms, it’s Rydberg gate fidelity. For photonics, it’s photon indistinguishability – the fact that photons from real-world sources carry subtle which-path information that degrades the quantum interference on which the entire computational model depends.

This is not a secondary concern. In measurement-based photonic quantum computing, everything – resource state generation, entanglement, computation – relies on high-quality quantum interference between indistinguishable photons. If your photons aren’t clean enough, you can’t build the cluster states, and no amount of downstream error correction can save you efficiently. The paper makes this point explicitly: indistinguishability errors both reduce the probability of successful resource state generation and introduce computation errors.
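
To make the link between indistinguishability and interference concrete, here is a minimal sketch of the textbook two-photon (Hong-Ou-Mandel) case: two single photons enter a 50:50 beamsplitter at separate ports, and the chance that they exit at different ports depends on how well their wavefunctions overlap. The function names and the `overlap_sq` parameter are my own illustration, not quantities taken from the QuiX paper, and the formula assumes pure single-photon states.

```python
def hom_coincidence_probability(overlap_sq: float) -> float:
    """Probability that two photons leave a 50:50 beamsplitter at different ports.

    overlap_sq is |<psi1|psi2>|^2, the squared overlap of the two photons'
    wavefunctions: 1.0 means perfectly indistinguishable, 0.0 means fully
    distinguishable. Assumes pure single-photon input states.
    """
    if not 0.0 <= overlap_sq <= 1.0:
        raise ValueError("overlap_sq must lie in [0, 1]")
    return 0.5 * (1.0 - overlap_sq)


def hom_visibility(overlap_sq: float) -> float:
    """Depth of the interference dip relative to fully distinguishable photons."""
    distinguishable_baseline = 0.5
    dip = distinguishable_baseline - hom_coincidence_probability(overlap_sq)
    return dip / distinguishable_baseline


if __name__ == "__main__":
    for overlap in (1.0, 0.95, 0.9, 0.5, 0.0):
        p = hom_coincidence_probability(overlap)
        print(f"overlap={overlap:.2f}  coincidence={p:.3f}  visibility={hom_visibility(overlap):.2f}")
```

Perfectly indistinguishable photons never produce a coincidence; any shortfall in overlap shows up directly as unwanted coincidence events, which is the error that distillation targets.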

The QuiX result shows that a scalable protocol exists – demonstrated on real hardware, not just in theory – that can clean up photons before they enter the computational pipeline. The 2.2× raw error reduction and 1.2× net reduction (including gate noise) are modest numbers. But the significance is in the below-threshold nature of the result: the protocol crosses the threshold where it removes more error than it adds, meaning that in principle, it can be iterated and scaled.
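
A back-of-the-envelope sketch shows how the 2.2× and 1.2× figures can fit together. It assumes a simple additive error budget (residual indistinguishability error plus noise added by the distillation gate), which is my simplification and not necessarily how the paper does its accounting. The input error of 0.05 is a hypothetical placeholder.

```python
def net_reduction(eps_in: float, raw_factor: float, eps_gate: float) -> float:
    """Net error-reduction factor under an assumed additive error model.

    eps_in     : indistinguishability error before distillation
    raw_factor : raw reduction of that error (2.2 in the reported result)
    eps_gate   : extra error introduced by the distillation gate itself
    """
    eps_out = eps_in / raw_factor + eps_gate
    return eps_in / eps_out


def implied_gate_error(eps_in: float, raw_factor: float, net_factor: float) -> float:
    """Gate error that would turn raw_factor into net_factor in this toy model."""
    return eps_in * (1.0 / net_factor - 1.0 / raw_factor)


def is_net_gain(eps_in: float, raw_factor: float, eps_gate: float) -> bool:
    """True when the protocol removes more error than it introduces."""
    return net_reduction(eps_in, raw_factor, eps_gate) > 1.0


if __name__ == "__main__":
    eps_in = 0.05  # hypothetical input error, for illustration only
    eps_gate = implied_gate_error(eps_in, raw_factor=2.2, net_factor=1.2)
    print(f"implied gate error: {eps_gate:.4f} ({eps_gate / eps_in:.0%} of input error)")
    print(f"net reduction:      {net_reduction(eps_in, 2.2, eps_gate):.2f}x")
    print(f"net gain?           {is_net_gain(eps_in, 2.2, eps_gate)}")
```

In this toy model the gate could add error up to a fraction 1 − 1/2.2 (about 55%) of the input error before the net gain disappears, so a 1.2× net figure means the gate noise sits below that break-even point.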

Where This Sits in the CRQC Framework

In terms of my CRQC Quantum Capability Framework, photon distillation maps most directly to Capability B.3: Below-Threshold Operation & Scaling – the demonstration that error mitigation or correction can suppress errors faster than they accumulate. This is the foundational capability that every other capability depends on. Without below-threshold operation, scaling is impossible.

For superconducting qubits, this milestone was achieved by Google’s Willow processor in 2024 – a surface code memory qubit at distance 7 with error suppression that improved as the code grew. For neutral atoms, the Harvard/MIT/QuEra collaboration demonstrated below-threshold surface codes in their 448-atom fault-tolerant architecture. For trapped ions, Quantinuum has shown it across multiple demonstrations on the H2 and Helios platforms.

Photonics just achieved its equivalent, but at a pre-QEC level. Photon distillation sits below quantum error correction in the stack: it cleans up the physical inputs so that QEC can work efficiently on top of it. The paper’s modeling suggests that combining photon distillation with QEC could reduce the number of photon sources required per logical qubit by up to 4×, which is a genuine architectural insight: don’t try to error-correct your way out of bad photons – fix the photons first, then error-correct.

This layered approach – hardware-level error mitigation before QEC – is something the photonic community has long theorized but never demonstrated in practice. It’s now demonstrated.

Does This Change the Scorecard?

Honestly? Not dramatically. My CRQC Scorecard assessment of photonic quantum computing listed the gap as “effectively the entire journey” across all three metrics – Logical Qubit Capacity, Logical Operations Budget, and Quantum Operations Throughput. That assessment remains correct. QuiX has not demonstrated a logical qubit, a logical gate, or any system-level fault-tolerant operation.

What changes is the narrative. Photonics is no longer the modality with zero demonstrated error reduction capabilities. It now has a proven, hardware-level technique for addressing its dominant error source – one that the paper shows is optimal (they prove that the error reduction achieved by Fourier-based distillation is the best possible over all unitaries) and scalable (the protocol extends to arbitrary photon numbers without concatenation).
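
For readers unfamiliar with the term, "Fourier-based" refers to an interferometer whose mode transformation is the discrete Fourier transform. The sketch below builds that N-mode unitary in plain Python and checks that it is unitary. It illustrates only the matrix itself; the phase convention is an assumption on my part, and the heralding and post-selection steps of the actual distillation protocol are not modeled here.

```python
import cmath
import math


def fourier_unitary(n: int) -> list[list[complex]]:
    """N-mode discrete Fourier transform matrix, U[j][k] = exp(2*pi*i*j*k/n) / sqrt(n)."""
    norm = 1.0 / math.sqrt(n)
    return [
        [norm * cmath.exp(2j * math.pi * j * k / n) for k in range(n)]
        for j in range(n)
    ]


def is_unitary(u: list[list[complex]], tol: float = 1e-9) -> bool:
    """Check that the rows of u are orthonormal, i.e. U U^dagger = I."""
    n = len(u)
    for a in range(n):
        for b in range(n):
            inner = sum(u[a][k] * u[b][k].conjugate() for k in range(n))
            expected = 1.0 if a == b else 0.0
            if abs(inner - expected) > tol:
                return False
    return True


if __name__ == "__main__":
    u3 = fourier_unitary(3)
    print("3-mode Fourier matrix is unitary:", is_unitary(u3))
    # Every entry has squared magnitude 1/n: a photon entering any one
    # input mode is spread evenly across all output modes.
    print("squared magnitudes:", [[round(abs(x) ** 2, 3) for x in row] for row in u3])
```

The same function works for any mode count, which matches the article's point that the protocol extends to larger photon numbers without concatenation, though the matrix alone says nothing about the achievable error reduction.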

The modeled reduction of up to 4× in photon sources per logical qubit is also significant for Capability E.1: Engineering Scale & Manufacturability. Photonic quantum computing’s biggest architectural challenge is the sheer number of photon sources required: millions for any useful computation. A 4× reduction doesn’t solve the problem, but it meaningfully relaxes the engineering constraints. And this modeling is based on current source performance. If photon sources themselves keep improving, as they are doing rapidly, particularly quantum-dot and integrated sources, the combined effect of better sources plus distillation could be multiplicative.
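
As a rough illustration of the "multiplicative" point, the sketch below treats the distillation saving and any future source improvement as independent factors that multiply. That independence is an assumption for illustration, not a result from the paper, and the baseline of 4,000,000 sources is a hypothetical stand-in for "millions".

```python
def sources_needed(
    baseline_sources: float,
    distillation_factor: float = 4.0,
    source_improvement_factor: float = 1.0,
) -> float:
    """Photon sources required after applying both reduction factors.

    baseline_sources          : sources needed without distillation (hypothetical)
    distillation_factor       : reduction from distillation plus QEC (up to 4 in the modeling)
    source_improvement_factor : further reduction from better sources (assumed, 1.0 = none)
    """
    if distillation_factor <= 0 or source_improvement_factor <= 0:
        raise ValueError("reduction factors must be positive")
    return baseline_sources / (distillation_factor * source_improvement_factor)


if __name__ == "__main__":
    baseline = 4_000_000  # hypothetical baseline
    print(f"distillation only:       {sources_needed(baseline):,.0f}")
    print(f"plus 2x better sources:  {sources_needed(baseline, source_improvement_factor=2.0):,.0f}")
```

Even in the optimistic case the count stays in the hundreds of thousands, which is why the saving relaxes the manufacturing problem without removing it.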

The Broader Context: Why Photonics Matters Even at This Stage

I’ve been tracking photonic quantum computing with a mix of skepticism and respect. Skepticism because the demonstrated capabilities lag every other modality by a wide margin. Respect because the theoretical advantages of photonics – room-temperature operation, GHz-range clock speeds, silicon-compatible manufacturing, natural fiber-optic networking – are genuinely transformational if they can be realized, and because I once tried to build a photonic quantum computing startup myself.

Xanadu’s Aurora is the first universal photonic quantum computer – 12 qubits with all fault-tolerant subsystems integrated. PsiQuantum has a DARPA selection to its name but no public results. Photonic Inc. has proposed SHYPS qLDPC codes, claiming 20× fewer physical qubits than surface codes. And now QuiX has demonstrated a concrete, hardware-level error mitigation capability.

None of these individually moves the needle on the CRQC timeline. But collectively, they suggest photonics is maturing from a theoretical promise into an experimental program. The QuiX result in particular is notable because it was conducted on a production cloud platform (Bia), not a one-off lab setup – suggesting this is a capability that can be integrated into real systems.

The Defense Funding Angle

It’s worth noting that this work was partially funded by the Netherlands Ministry of Defense through the “QSHOR” project. The name is not subtle. Defense establishments don’t fund photonic error mitigation research out of academic curiosity — they fund it because photonic quantum computing has unique advantages for certain deployment scenarios (no cryogenics, compact form factor, potential for distributed architectures) and because any path to running Shor’s algorithm at scale is of strategic interest.

NATO nations are increasingly hedging their CRQC bets across multiple modalities — and photonics is in the portfolio.

My Bottom Line

The QuiX photon distillation result is a genuine first for photonic quantum computing — the first below-threshold error mitigation demonstrated on real photonic hardware. It’s the kind of foundational result that, years from now, may be recognized as the moment photonics entered the fault-tolerant era.

But let’s maintain perspective. This is a single-photon purification protocol operating at the pre-QEC level. Photonics still has zero demonstrated logical qubits. The gap between cleaning up individual photons and running Shor’s algorithm on a million-photon cluster state remains immense.

What the result does is remove one of the arguments used by photonic skeptics: that the indistinguishability problem is fundamentally intractable at scale. It’s not. There’s now a demonstrated, optimal, scalable protocol for addressing it. The question is no longer whether photon errors can be mitigated below threshold – it’s how fast the remaining engineering challenges can be solved.

And as I argued in my CRQC Scorecard, the remaining challenges across all modalities are primarily engineering problems, not scientific ones. Photonics just provided another data point in support of that thesis.
