Quantinuum Squeezes 94 Logical Qubits from 98 Physical — But What Does It Actually Mean?

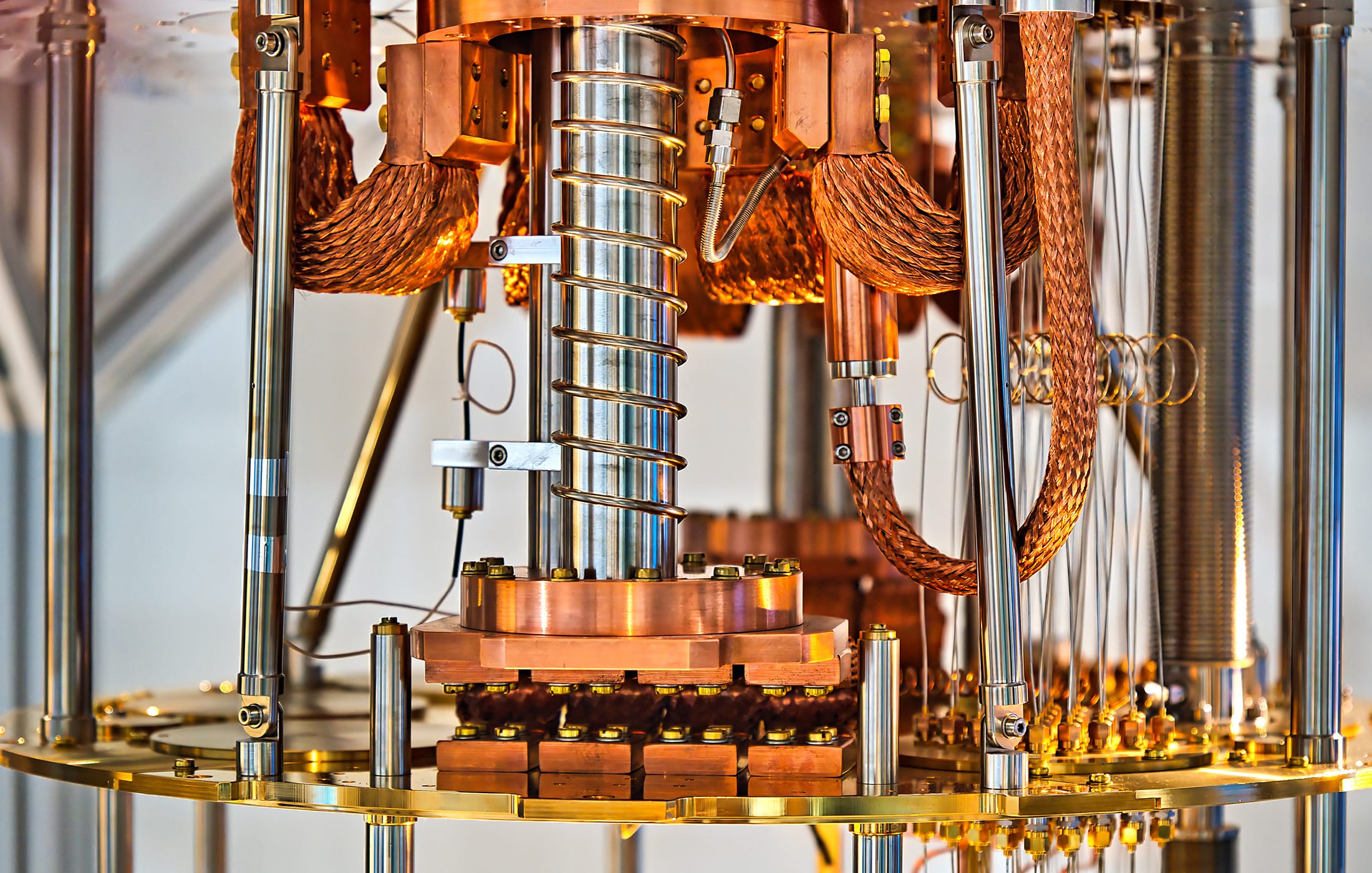

10 Mar 2026 – Quantinuum researchers have demonstrated quantum computations using up to 94 error-detected logical qubits and 48 error-corrected logical qubits on the company’s 98-qubit Helios trapped-ion quantum processor. The work, published as a pre-print on arXiv, reports “beyond break-even” performance across multiple benchmarks — meaning the encoded logical qubits outperformed their unencoded physical counterparts.

The team used a family of error-detection and error-correction schemes known as iceberg codes, which achieve a near 1:1 ratio of physical to logical qubits by requiring only two additional physical qubits for error-detection overhead. By concatenating — or nesting — iceberg codes, the researchers created distance-4 error-correcting codes that can both detect and correct certain classes of errors.

Key results include logical gate error rates of approximately 1 × 10⁻⁴ (one error per ten thousand operations), significantly lower than Helios’s bare physical two-qubit gate error rate of approximately 8 × 10⁻⁴. The team also demonstrated a 94-qubit GHZ (Greenberger-Horne-Zeilinger) entangled state with approximately 95% fidelity, and used 64 error-detected logical qubits to perform a quantum simulation of a three-dimensional XY model of quantum magnetism — a system whose forward time evolution the authors suggest may challenge classical simulation methods.

In a blog post, the researchers stated: “This is just the beginning: we are officially entering the era of large-scale logical computing.”

My Analysis

The Overhead Problem — and Iceberg’s Clever Workaround

To understand why this result matters, you need to understand the fundamental tension at the heart of quantum error correction (QEC). The dominant approach in QEC — the surface code, which Google famously demonstrated below threshold in late 2024 — requires enormous physical-to-logical qubit ratios. In practical surface code architectures, you might need hundreds or even thousands of physical qubits to encode a single logical qubit with error rates low enough for useful computation. That’s fine if you have a million physical qubits. It’s crippling if you have 98.

Iceberg codes take a radically different approach to this tradeoff. In their simplest form, they encode k logical qubits using just k + 2 physical qubits — two qubits of overhead to detect any single-qubit error across the entire block. The catch, of course, is that detection is not correction. When an error is detected, the computation is discarded and restarted. This postselection approach works when error rates are low enough that acceptance rates remain reasonable, but it doesn’t scale to arbitrary circuit depths.
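To make the detection claim concrete, here is a minimal sketch. It assumes the usual two-stabilizer form of the iceberg code, an all-X and an all-Z Pauli product on an even number n = k + 2 of qubits. The helper names are illustrative, not taken from the paper. It checks that every single-qubit Pauli error trips at least one stabilizer, while a weight-2 error can slip through, which is why the bare code has distance 2.

```python
def anticommutes(p: str, q: str) -> bool:
    """Two Pauli strings anticommute iff they clash (both non-identity
    and different) on an odd number of sites."""
    clashes = sum(1 for a, b in zip(p, q) if a != "I" and b != "I" and a != b)
    return clashes % 2 == 1


def iceberg_stabilizers(k: int) -> list[str]:
    """Assumed stabilizer generators for k logical qubits on n = k + 2 physical qubits."""
    n = k + 2
    if n % 2:
        raise ValueError("n = k + 2 must be even so the two stabilizers commute")
    return ["X" * n, "Z" * n]


def is_detected(error: str, stabilizers: list[str]) -> bool:
    """An error is flagged if it anticommutes with at least one stabilizer."""
    return any(anticommutes(error, s) for s in stabilizers)


def single_qubit_errors(n: int):
    """Yield every weight-1 Pauli error on n qubits."""
    for site in range(n):
        for pauli in "XYZ":
            yield "I" * site + pauli + "I" * (n - site - 1)


k = 4                       # illustrative block size
n = k + 2
stabs = iceberg_stabilizers(k)

all_single_detected = all(is_detected(e, stabs) for e in single_qubit_errors(n))
pair_error = "XX" + "I" * (n - 2)   # a weight-2 error
pair_detected = is_detected(pair_error, stabs)

print(all_single_detected)  # True
print(pair_detected)        # False
```

The undetected weight-2 error is exactly the failure mode that concatenation is meant to address.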

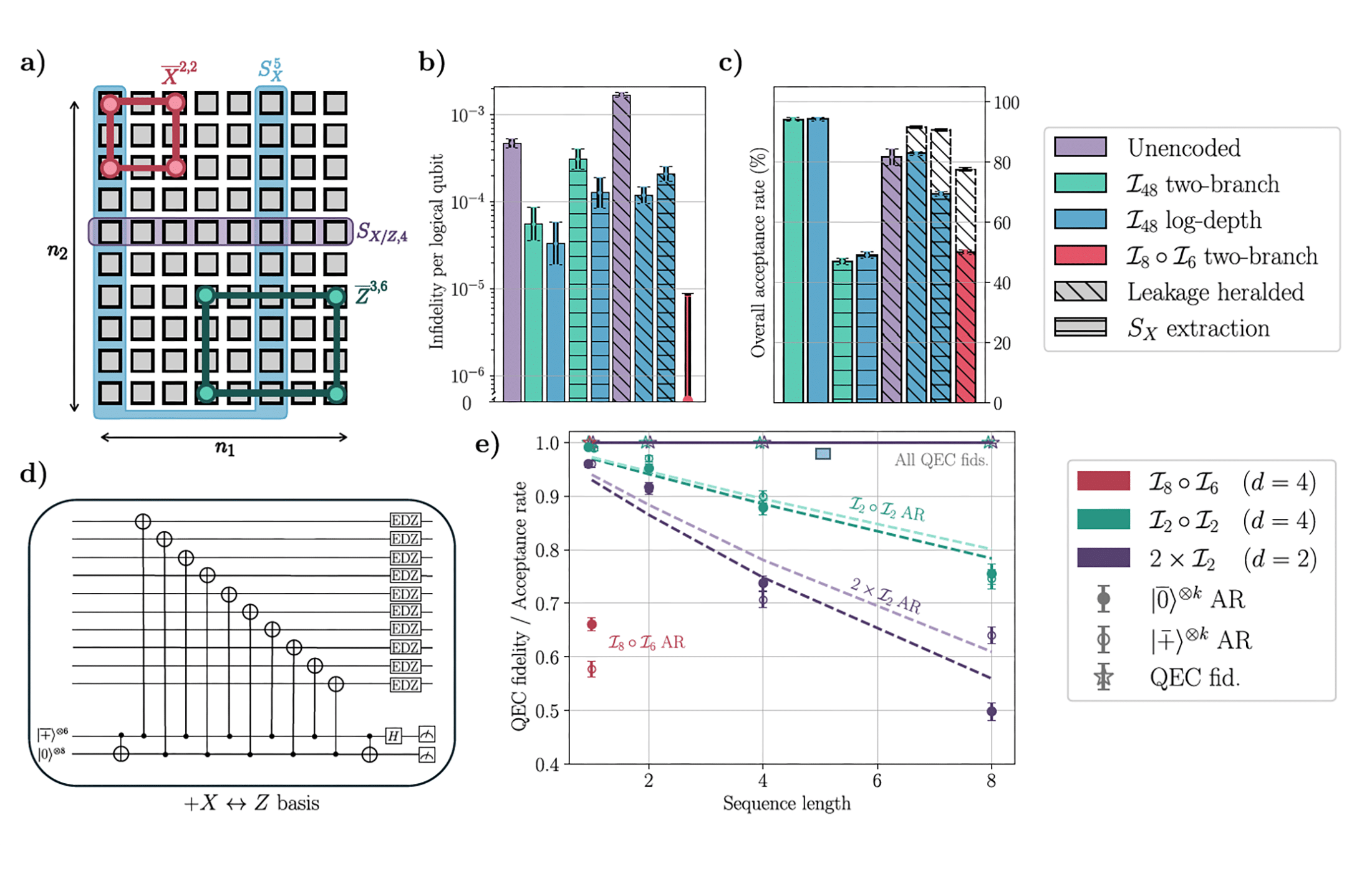

This is where concatenation enters. By nesting iceberg codes — using the logical qubits of one iceberg code as the physical qubits of another — the team created codes with distance 4, capable of actually correcting single errors and detecting double errors. The resulting [[80, 48, 4]] concatenated code (I₈ ∘ I₆) still achieves a remarkably efficient 80:48 physical-to-logical ratio, roughly 1.67:1. Compare that to a distance-4 surface code, which would require approximately 32 physical qubits per logical qubit.
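The arithmetic behind those numbers can be sketched in a few lines. This assumes that code parameters simply multiply under the concatenation used here (n, k and d each multiply), which reproduces the [[80, 48, 4]] figure quoted above, and it takes the roughly 32 physical qubits per logical qubit for a distance-4 surface code directly from the text. The `Code` class and function names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Code:
    n: int  # physical qubits
    k: int  # logical qubits
    d: int  # code distance


def iceberg(k: int) -> Code:
    """Iceberg code on k logical qubits: [[k + 2, k, 2]]."""
    return Code(n=k + 2, k=k, d=2)


def concatenate(a: Code, b: Code) -> Code:
    """Assumed parameter rule for the concatenation: n, k and d multiply."""
    return Code(n=a.n * b.n, k=a.k * b.k, d=a.d * b.d)


cat = concatenate(iceberg(8), iceberg(6))
ratio = cat.n / cat.k

# Figure quoted in the article for a distance-4 surface code.
SURFACE_D4_PHYSICAL_PER_LOGICAL = 32
surface_total = cat.k * SURFACE_D4_PHYSICAL_PER_LOGICAL

print(cat)                   # Code(n=80, k=48, d=4)
print(f"{ratio:.2f}")        # 1.67
print(surface_total)         # 1536
```

Under these assumptions, 48 distance-4 logical qubits cost 80 physical qubits with concatenated iceberg codes versus roughly 1,536 with surface codes, before counting any ancillas either scheme needs.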

The efficiency is striking. But it comes with important caveats.

What “Beyond Break-Even” Actually Means Here

The phrase “beyond break-even” is becoming the quantum computing industry’s equivalent of “our AI is the best” — technically meaningful but requiring careful parsing.

In this context, break-even means that the encoded logical operations produce fewer errors than the unencoded physical operations would produce on the same task. That’s a genuine milestone. It means the overhead of encoding, syndrome extraction, and decoding is less than the error reduction gained from the code — the error correction is actually helping rather than making things worse.

But it’s important to understand the specific comparisons being made. The logical SPAM (state preparation and measurement) error was measured at approximately 3 × 10⁻⁵ per logical qubit for the I₄₈ code with log-depth preparation, compared to 4.8 × 10⁻⁴ for unencoded physical SPAM — an order of magnitude improvement. For the concatenated I₈ ∘ I₆ code, no logical errors were observed across 4,000 shots in the SPAM experiment, bounding the error at below 8.3 × 10⁻⁶ per logical qubit.

The logical gate benchmarking showed error rates of roughly 1.0–1.2 × 10⁻⁴ per gate, compared to the physical two-qubit gate error of approximately 8 × 10⁻⁴ (from isolated randomized benchmarking) and an effective error rate of approximately 2 × 10⁻³ (from full-circuit benchmarks including transport and memory errors). These are genuine improvements, though the comparison point you choose matters.

The XY model simulation showed a more modest improvement: roughly a one-third reduction in effective per-gate error rate when encoded, from ε̃₂Q ≈ 10.7 × 10⁻⁴ (unencoded) to ε̃₂Q ≈ 7.1 × 10⁻⁴ (encoded with leakage heralding). This is the most realistic test of the encoding’s practical value, and the improvement, while genuine, is far from the order-of-magnitude gains seen in simpler benchmarks.
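To see how much the choice of comparison point matters, the figures quoted above can be put side by side. This is a rough sketch using only the approximate values in this article; the logical gate error is taken as 1.0 × 10⁻⁴, the low end of the quoted range, and the labels are my own shorthand.

```python
# (unencoded error, encoded error) pairs, as quoted in the text above.
benchmarks = {
    "SPAM, I48 vs physical": (4.8e-4, 3e-5),
    "gate vs isolated 2Q RB": (8e-4, 1.0e-4),
    "gate vs full-circuit effective": (2e-3, 1.0e-4),
    "XY model effective 2Q": (10.7e-4, 7.1e-4),
}


def improvement_factor(unencoded: float, encoded: float) -> float:
    """How many times smaller the encoded error is."""
    return unencoded / encoded


def reduction_percent(unencoded: float, encoded: float) -> float:
    """Relative reduction in error, as a percentage."""
    return 100.0 * (1.0 - encoded / unencoded)


for name, (unenc, enc) in benchmarks.items():
    factor = improvement_factor(unenc, enc)
    pct = reduction_percent(unenc, enc)
    print(f"{name:32s} {factor:5.1f}x  ({pct:4.1f}% lower)")
```

The spread is the point: the same hardware yields anything from about 1.5x to about 20x depending on which baseline and which workload you pick.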

The Postselection Question

There’s an elephant in the lab that all coverage of this result should address: postselection overhead.

Many of the benchmarks rely on discarding runs where errors are detected. The acceptance rates tell the story. For the I₈ ∘ I₆ QEC cycle experiment, the overall acceptance rate was approximately 0.62. For the 94-qubit GHZ state preparation, it was approximately 0.25 (with leakage heralding). For the deepest XY model simulation circuits (10 Trotter steps), the acceptance rate dropped to approximately 0.032 (3.2%).

This means that for the most computationally interesting experiments, more than 96% of the computational shots are discarded. The researchers are transparent about this — and they correctly note that postselection overhead should be exponentially suppressed with increasing code distance, since both the error rate and the discard probability decrease. The improvement in acceptance rates when going from distance 2 to distance 4 supports this argument: the average acceptance rate per QEC cycle improved from 0.945 to 0.972.
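A back-of-the-envelope sketch shows what these acceptance rates cost in practice. It assumes each shot is accepted independently, so the expected number of shots per kept result is 1/acceptance, and that per-cycle acceptance compounds geometrically across QEC cycles. That second assumption is a simplification of mine, not a claim from the paper.

```python
def shots_per_accepted(acceptance: float) -> float:
    """Expected shots needed to obtain one accepted shot."""
    return 1.0 / acceptance


def cycles_until_below(per_cycle: float, floor: float) -> int:
    """Smallest number of cycles after which the cumulative acceptance
    drops below `floor`, assuming independent cycles."""
    cycles, cumulative = 0, 1.0
    while cumulative >= floor:
        cumulative *= per_cycle
        cycles += 1
    return cycles


# Overall acceptance rates quoted in the article.
for label, acc in [("I8∘I6 QEC cycles", 0.62),
                   ("94-qubit GHZ", 0.25),
                   ("XY model, 10 Trotter steps", 0.032)]:
    print(f"{label:28s} ~{shots_per_accepted(acc):.1f} shots per accepted shot")

# Per-cycle acceptance at distance 2 versus distance 4.
d2_cycles = cycles_until_below(0.945, 0.5)
d4_cycles = cycles_until_below(0.972, 0.5)
print(d2_cycles, d4_cycles)
```

Under this simple model, the distance-4 code runs roughly twice as many cycles as the distance-2 code before half the shots are lost, which is the direction the scaling argument predicts.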

But the practical implication remains: scaling to deeper circuits with these codes still requires either higher code distances (meaning more physical qubits and more complex concatenation) or significantly lower physical error rates.

Why Trapped Ions Are Uniquely Suited

As I analyzed in my detailed look at the Helios architecture, Quantinuum’s trapped-ion approach has a specific architectural advantage that makes iceberg codes viable: all-to-all qubit connectivity. Iceberg codes require global Pauli product stabilizers — meaning every qubit in the code block needs to interact with shared ancilla qubits. On a nearest-neighbor architecture like Google’s superconducting chips, this would require extensive SWAP networks that would likely destroy the encoding advantage. On Helios, where any qubit can interact with any other qubit through the QCCD (quantum charge-coupled device) transport mechanism, these global operations are natural.

This is an important point for understanding the QEC landscape. Different hardware architectures naturally favor different code families. Surface codes favor nearest-neighbor architectures. Iceberg codes favor all-to-all connectivity. qLDPC codes require intermediate connectivity. The optimal error correction strategy is becoming inseparable from the hardware architecture, and Quantinuum is showing that its architectural choice has real QEC benefits.

The three-dimensional XY model simulation is a particularly good demonstration of this advantage. Mapping a 4 × 4 × 4 periodic cubic lattice onto a physically two-dimensional set of qubits requires exactly the kind of flexible, non-local connectivity that trapped-ion systems provide.
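The connectivity demand is easy to quantify. The sketch below enumerates the bonds of a 4 × 4 × 4 cubic lattice with periodic boundaries, assuming nearest-neighbor couplings only (the article does not spell out the interaction graph, so treat that as an assumption). Every site ends up with six neighbors, more than the four a planar square grid of qubits can offer.

```python
from collections import Counter
from itertools import product


def site_index(x: int, y: int, z: int, size: int) -> int:
    """Flatten 3D lattice coordinates into a single qubit index."""
    return (x * size + y) * size + z


def periodic_bonds(size: int) -> set[tuple[int, int]]:
    """Nearest-neighbor bonds of a size^3 cubic lattice with periodic boundaries."""
    bonds = set()
    for x, y, z in product(range(size), repeat=3):
        a = site_index(x, y, z, size)
        # Stepping +1 along each axis visits every bond exactly once.
        for dx, dy, dz in ((1, 0, 0), (0, 1, 0), (0, 0, 1)):
            b = site_index((x + dx) % size, (y + dy) % size, (z + dz) % size, size)
            bonds.add((min(a, b), max(a, b)))
    return bonds


L = 4
bond_set = periodic_bonds(L)

# Count how many bonds touch each site.
degree = Counter()
for a, b in bond_set:
    degree[a] += 1
    degree[b] += 1

print(L ** 3)                # 64 sites
print(len(bond_set))         # 192 bonds
print(set(degree.values()))  # {6}
```

On hardware with fixed planar wiring, the bonds that do not fit the grid, including the wrap-around ones, would have to be routed with SWAPs; with all-to-all connectivity they cost the same as any other pair.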

How Does This Compare to Google’s QEC Results?

Google’s late-2024 demonstration of below-threshold operation with the surface code on their Willow processor and Quantinuum’s iceberg code results represent fundamentally different — and complementary — approaches to the same problem.

Google showed that increasing the code distance from d=3 to d=5 to d=7 in the surface code consistently suppressed logical error rates, demonstrating the exponential error suppression that the theory predicts below threshold. This is the canonical demonstration of scalable QEC — showing that the path to arbitrarily low error rates exists.

Quantinuum’s result shows something different: that you can extract practical value from error correction today, with far fewer physical qubits, by using codes specifically designed for the available hardware. Encoding 94 error-detected logical qubits in 98 physical qubits is dramatically more efficient than anything surface codes can offer. But the distance-2 iceberg codes can only detect errors, not correct them, and the distance-4 concatenated codes still rely partly on postselection.

Neither result, on its own, is sufficient for a cryptographically relevant quantum computer (CRQC). Both are advancing different capabilities within my CRQC Quantum Capability Framework. Google’s work advances B.3: Below-Threshold Operation & Scaling for surface codes. Quantinuum’s work advances multiple capabilities — B.1: Quantum Error Correction and C.1: High-Fidelity Logical Clifford Gates — while demonstrating that high-rate codes are viable alternatives to surface codes for near-term fault-tolerant computation.

The CRQC Implications — Keep Calm and Carry On

Let me put this in the context that PostQuantum.com readers care about most: does this bring Q-Day closer?

The short answer is: not directly, and not meaningfully in isolation. As I detailed in my coverage of Quantinuum’s earlier quantum advantage claims, there remains a vast gap between impressive QEC demonstrations and the resources needed for cryptanalysis. The most optimized current estimates for breaking RSA-2048 — such as the Gidney 2025 estimate — still require on the order of hundreds of thousands of physical qubits, even with aggressive architectural assumptions. Ninety-four error-detected logical qubits is many orders of magnitude away from what’s needed for Shor’s algorithm to threaten real cryptographic keys.

That said, the result is significant for the trajectory. The demonstrated logical gate error rates of ~10⁻⁴ are promising — they need to reach ~10⁻⁸ to ~10⁻¹⁰ for practical fault-tolerant computation at scale, but the path through higher code distances is now experimentally validated. And the extremely efficient encoding rates of iceberg codes suggest that trapped-ion systems with hundreds or thousands of physical qubits could support meaningful fault-tolerant computations sooner than surface-code-based systems with comparable qubit counts.
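A crude model illustrates why the gap between 10⁻⁴ and 10⁻⁸ matters so much. Assuming independent errors at a fixed rate per logical gate (a simplification of mine, not a model from the paper), the probability that a circuit of N gates runs cleanly is (1 − ε)^N, which caps the usable circuit size for a given success target.

```python
import math


def max_gates(error_per_gate: float, target_success: float = 0.5) -> int:
    """Largest gate count N with (1 - error_per_gate)**N >= target_success,
    assuming independent errors at a fixed rate per gate."""
    return math.floor(math.log(target_success) / math.log1p(-error_per_gate))


budgets = {eps: max_gates(eps) for eps in (1e-4, 1e-8, 1e-10)}

for eps, n_gates in budgets.items():
    print(f"error {eps:.0e} per gate -> about {n_gates:.2e} gates at 50% success")
```

At today's demonstrated rate the budget is a few thousand logical gates; the target range buys tens of millions to billions, which is the regime large algorithms need.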

What to Watch Next

Quantinuum’s blog post mentions plans to package iceberg codes into “QCorrect,” a tool for developers to automatically improve application performance. If this materializes effectively, it could make error-detected computation accessible to a broader set of users and applications — moving QEC from a physics experiment to an engineering tool.

The more technically interesting direction is whether concatenated iceberg codes can be pushed to higher distances. The paper presents strong evidence for distance 4; distance 6 or 8 would require more physical qubits but could potentially demonstrate the kind of aggressive error suppression needed for practical fault tolerance. As I noted when Helios launched, the company’s roadmap targets hundreds of qubits, and as Quantinuum scales to larger processors the iceberg-concatenation approach could become increasingly powerful.

The interaction between this work and the emerging family of quantum LDPC (qLDPC) codes is also worth watching. The recently published Pinnacle Architecture from Iceberg Quantum (confusingly, no relation to the iceberg code) proposes breaking RSA-2048 with fewer than 100,000 physical qubits using qLDPC codes with high-connectivity hardware. Trapped-ion systems naturally provide the kind of non-local connectivity that both iceberg codes and qLDPC codes require, positioning Quantinuum to potentially adopt these more advanced code families as its hardware scales.

The Bottom Line

This is a genuinely impressive piece of QEC engineering. Squeezing 94 error-detected or 48 error-corrected logical qubits from 98 physical qubits, while demonstrating beyond-break-even performance across multiple benchmarks, is a clear technical achievement. The magnetism simulation with 64 logical qubits represents the first use of error-detected logical qubits for a computation at a scale that begins to challenge classical methods.

But let’s keep perspective. This is not fully fault-tolerant quantum computing. It is not a path to imminent cryptographic relevance. It is one important step — among many still needed — on the road from noisy intermediate-scale quantum devices to practical, error-corrected quantum computation.

For organizations watching the quantum landscape, the implication is what I continue to argue: the exact arrival date of a CRQC is less important than the fact that the deadlines for action are already set by regulators, insurers, and clients. Whether fault-tolerant quantum computing arrives in 2035 or 2040, the PQC migration needs to start now.
