PQC Standards Fragmentation: What Multinationals Must Plan For Now


One Migration Plan. Four Algorithm Families. Five Jurisdictions.

You are the CISO of a global financial institution. Your New York trading operations must comply with CNSA 2.0, which mandates NIST-standardized algorithms: ML-KEM (FIPS 203) for key encapsulation and ML-DSA (FIPS 204) for digital signatures. Your Frankfurt office falls under German BSI guidance recommending hybrid configurations that layer PQC alongside classical algorithms, and France’s ANSSI has expressed a preference for FrodoKEM as a conservative lattice alternative. Your Shanghai branch processes data subject to China’s Cryptography Law and will need to support whatever algorithms emerge from China’s ICCS standardization process, which has not yet announced its selections. Your Seoul subsidiary handles Korean government contracts that require the KpqC competition winners: HAETAE for signatures and SMAUG-T for key encapsulation. Your Dubai office serves Gulf state clients who are generally NIST-aligned but building domestic cryptographic capability.

You need one PQC migration plan. How many algorithm families do you support? How many PKI hierarchies do you operate? How many HSM configurations do you maintain? What happens when a system in Frankfurt needs to establish a secure session with a system in Shanghai, and neither jurisdiction’s mandated algorithms are accepted by the other?

This is not a hypothetical scenario for a future decade. These standardization efforts are underway now. The KpqC winners were announced in January 2025. China’s ICCS launched its global call for proposals in February 2025 and a Chinese preprint proposed releasing initial GB/T standards (KEM plus signatures) before end of 2026. NIST’s standards are finalized and operational. The EU’s coordinated PQC roadmap directs organizations to begin transitioning by end of 2026. The jurisdictional clock is running on multiple independent timelines, and the CISO who plans for a single global algorithm family is planning for a world that will not exist.

In Sovereignty in the PQC Era, I surveyed the geopolitical motivations driving this fragmentation. In Quantum Sovereignty, I devoted a full chapter to cryptographic sovereignty and its implications. This article is the operational companion to that analysis: it maps the concrete consequences for enterprise architecture, PKI design, procurement, vendor management, and cross-border data flows, and provides a practical framework for building a migration plan that survives the fragmentation.

The Map: Who Is Standardizing What

The era of global cryptographic consensus, where the world defaulted to a single set of algorithms selected by NIST, is ending. It was never as complete as the industry pretended (China has maintained SM-series algorithms for years, Russia its GOST suite), but PQC is the inflection point where fragmentation becomes a first-order operational problem.

NIST (United States). The anchor standards for the Western world. ML-KEM (FIPS 203) for key encapsulation, ML-DSA (FIPS 204) for digital signatures, SLH-DSA (FIPS 205) for stateless hash-based signatures. FN-DSA (FIPS 206, formerly FALCON) is expected. HQC has been selected as a backup KEM. The stateful hash-based schemes LMS and XMSS (SP 800-208) are approved for firmware and code signing. CNSA 2.0 sets aggressive timelines for US national security systems, and NIST IR 8547 targets deprecation of quantum-vulnerable algorithms by 2030 and disallowance by 2035. A separate analysis of the complete US PQC regulatory framework maps the full compliance picture. For allied nations (UK, Canada, Australia, Japan, most of NATO), NIST selections are the de facto standard.

China (ICCS/NGCC). The State Cryptography Administration, through the Institute of Commercial Cryptography Standards (ICCS), launched a global call for Next-Generation Commercial Cryptography (NGCC) proposals in February 2025. The program solicits algorithms for public-key cryptography (primarily PQC), cryptographic hash functions, and block ciphers. No selections have been announced as of mid-2026, but the program is actively evaluating submissions. China’s pattern with classical cryptography provides the predictive model: the SM-series (SM2 for ECC, SM3 for hashing, SM4 for block ciphers) uses the same mathematical families as Western standards but with different specific constructions, different parameters, and domestic control over the specification. The algorithms are technically sound, but they are not interchangeable with their Western counterparts. A system that implements SM2 cannot interoperate with a system expecting NIST P-256 ECDSA, even though both are elliptic curve-based.

The PQC outcome will likely follow the same pattern: lattice-based and code-based algorithms drawing on the same mathematical foundations as NIST’s selections, but with different constructions, different parameter choices, and different performance characteristics. A Chinese preprint from August 2025 proposed a phased roadmap targeting initial GB/T standards (KEM plus signature) before end of 2026, with consistency test methods and a “main algorithm + backup algorithm” framework. The preprint was explicit about the urgency: without a systematic PQC national standards plan within three years, China risks being passively aligned with US standards, with consequences for supply chain autonomy, compliance sovereignty, and long-term confidentiality protection.

For enterprise CISOs, the critical question is not what specific algorithms China selects (that will be determined by the ICCS process) but whether Chinese regulations will mandate those algorithms for commercial use within China. Given the precedent set by the SM-series, the answer is almost certainly yes. Any organization processing data subject to China’s Cryptography Law, operating critical information infrastructure in China, or serving Chinese government clients should plan for mandatory use of Chinese-selected PQC algorithms, on a timeline that may be accelerated relative to the ICCS evaluation schedule.

South Korea (KpqC). The Korean Post-Quantum Cryptography competition, launched in 2021 by the National Intelligence Service in collaboration with the National Security Research Institute, announced its final winners in January 2025. For digital signatures: HAETAE (a lattice-based scheme closely related to ML-DSA but using a different, more efficient rejection sampling technique that produces more compact signatures) and AIMer (based on the MPC-in-the-Head paradigm, developed jointly by Samsung SDS and KAIST). For key encapsulation: SMAUG-T and NTRU+. These are distinct algorithms, not interchangeable with NIST’s selections, though HAETAE’s mathematical similarity to ML-DSA suggests functional convergence is possible. HAETAE was also submitted to NIST’s additional digital signature standardization process but was not selected for its second round, so for now it remains a Korean-specific requirement.

South Korea’s NIS roadmap targets PQC transition completion by 2035, with the current phase (2025-2026) focused on establishing procedures and supporting systems for cryptographic transformation. Samsung’s involvement in developing AIMer is significant: it signals that Korean conglomerates (chaebol) are positioning sovereign PQC algorithms as strategic national capabilities, not just academic exercises. For any organization with Korean government contracts, defense supply chain relationships, or operations subject to Korean national security requirements, KpqC compliance is a procurement and contract requirement, not an optional technical preference.

Europe (BSI/ANSSI/ETSI). Europe is not running its own standardization competition and will generally adopt NIST’s selections, but individual member states are layering additional requirements. Germany’s BSI recommends hybrid configurations that combine PQC with classical algorithms. France’s ANSSI has expressed a preference for FrodoKEM as a conservative alternative to ML-KEM, citing concern that ML-KEM’s structured lattice problem has not received sufficient cryptanalytic attention relative to FrodoKEM’s unstructured variant. The Netherlands has adopted a hybrid policy requiring both classical and PQC algorithms simultaneously. The EU’s coordinated PQC roadmap (June 2025) sets transition milestones (begin by end 2026, protect high-risk systems by 2030, complete by 2035) but does not mandate specific algorithms beyond acknowledging NIST’s. The practical effect: a multinational operating across European jurisdictions may face a patchwork of hybrid requirements, parameter set preferences, and algorithm hedging strategies.

Russia. Russia maintains independent GOST cryptographic standards and is developing PQC variants, including the Shipovnik (Rosehip) signature family. Less immediately relevant for most Western multinationals, but significant for any organization with Russian-linked operations, supply chains, or legacy systems.

Emerging players. India is exploring sovereign cryptographic programs. Gulf states are generally NIST-aligned but investing in domestic capability. BRICS discussions have included exploration of alternative cryptographic standards independent of Western institutions.

The Operational Implications: What This Actually Means

The standards picture described above translates into concrete architectural and operational problems. These are the challenges that will consume budget, architecture hours, and procurement negotiations for multinational CISOs over the next five years.

Multiple PKI Hierarchies

If China mandates ICCS algorithms for systems handling data subject to its Cryptography Law, and NIST algorithms are mandated for US government-facing systems, a multinational may need to operate separate PKI hierarchies for each jurisdiction: separate root CAs, separate intermediate CAs, separate certificate issuance and renewal processes, separate revocation infrastructure, and separate HSMs backing each hierarchy’s root keys.

The operational weight of multiple PKI hierarchies is easy to underestimate. A single enterprise PKI is already a complex operational system. It involves root key ceremonies (multi-person, audited, physically secured events), certificate lifecycle management (issuance, renewal, revocation), OCSP responder infrastructure, CRL distribution, certificate transparency compliance (for publicly trusted certificates), and HSM management. Each additional PKI hierarchy multiplies this operational footprint. A multinational operating three PKI hierarchies (US/NIST, China/ICCS, Korea/KpqC) is running three parallel instances of all of this infrastructure, with three sets of root keys to protect, three sets of procedures to audit, and three sets of compliance requirements to satisfy.

European hybrid requirements add further complexity. If a European subsidiary must issue certificates carrying both a classical and a PQC signature (per BSI guidance), the PKI must support composite certificate formats, which are still being standardized by the IETF LAMPS working group. The certificate issuance pipeline must generate dual-algorithm key pairs, produce composite certificates, and distribute them through a certificate management system that understands the hybrid format.

Cross-certification between algorithm families is the unsolved problem at the center of this challenge. A certificate signed with ML-DSA cannot be verified by a system that only supports HAETAE, even though both are lattice-based signature schemes. The mathematics are similar; the implementations are not interchangeable. For an internal CA that must issue certificates trusted across all of an organization’s jurisdictions, the options are: operate multiple CA hierarchies (one per jurisdiction), issue multi-algorithm certificates (not yet standardized for cross-family use), or designate specific systems as jurisdiction-specific and segment the trust infrastructure accordingly. None of these options is simple, and the organization’s PKI team is likely already stretched thin managing the existing classical infrastructure.
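To make the segmentation trade-off concrete, here is a minimal Python sketch of the question a relying party asks: does this certificate carry at least one signature my jurisdiction accepts? The jurisdiction-to-algorithm table and the "ICCS-SIG" placeholder are assumptions for illustration. The "any accepted signature suffices" rule is precisely the cross-family behavior that is not yet standardized; the IETF composite drafts instead require every component signature to verify.

```python
from dataclasses import dataclass

# Hypothetical trust policy: signature algorithms each jurisdiction's verifiers
# accept. "ICCS-SIG" is a placeholder; China has not announced its selections.
ACCEPTED_SIGNATURES = {
    "US": {"ML-DSA"},
    "KR-GOV": {"HAETAE"},
    "CN": {"ICCS-SIG"},
}


@dataclass(frozen=True)
class Certificate:
    subject: str
    # One entry per signature the certificate carries; a multi-algorithm
    # certificate carries more than one.
    signature_algorithms: frozenset[str]


def verifiable_in(cert: Certificate, jurisdiction: str) -> bool:
    """Assumed rule: valid if any signature uses an accepted algorithm."""
    return bool(cert.signature_algorithms & ACCEPTED_SIGNATURES[jurisdiction])


def coverage(cert: Certificate) -> list[str]:
    """Jurisdictions in which the certificate can be validated."""
    return sorted(j for j in ACCEPTED_SIGNATURES if verifiable_in(cert, j))


single = Certificate("api.example.com", frozenset({"ML-DSA"}))
multi = Certificate("api.example.com", frozenset({"ML-DSA", "HAETAE"}))

print(coverage(single))  # ['US']
print(coverage(multi))   # ['KR-GOV', 'US']
```

No real signatures are computed here; the sketch only shows that a single-algorithm certificate covers one jurisdiction and that each added signature widens coverage, which is why the multi-algorithm option is attractive and why its missing standard matters.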

Interoperability Across Jurisdictions

The most operationally painful consequence of standards fragmentation is cross-border interoperability. When a system in Frankfurt needs to establish a TLS session with a system in Shanghai, which algorithm does the session negotiate? When a signed document must be validated by parties in both jurisdictions, which signature algorithm does it carry?

If Chinese regulations mandate ICCS algorithms and do not accept NIST algorithms for sensitive communications, and US regulations require NIST algorithms, there is no single algorithm family that satisfies both jurisdictions simultaneously. The TLS cipher suite negotiation cannot find common ground because no common algorithm exists. The signed document cannot carry a single signature that both jurisdictions accept as compliant.
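The negotiation failure can be sketched in a few lines of Python. The group labels and per-jurisdiction policies are illustrative assumptions, and the selection rule (first client-preferred group the server permits) is a simplification; real TLS stacks may apply server preference instead.

```python
from typing import Optional

# Hypothetical per-jurisdiction policies: key-establishment groups each endpoint
# may offer or accept. "ICCS-KEM" stands in for an algorithm not yet selected.
US_POLICY = ["MLKEM1024", "MLKEM768"]
CN_POLICY = ["ICCS-KEM"]
DE_POLICY = ["X25519MLKEM768", "MLKEM768"]


def negotiate(client_offer: list[str], server_policy: list[str]) -> Optional[str]:
    """Return the first client-preferred group the server permits, else None."""
    permitted = set(server_policy)
    for group in client_offer:
        if group in permitted:
            return group
    return None  # no common algorithm: the handshake cannot complete


print(negotiate(DE_POLICY, US_POLICY))  # MLKEM768
print(negotiate(DE_POLICY, CN_POLICY))  # None
```

The Frankfurt-to-New York case finds a shared group; the Frankfurt-to-Shanghai case returns nothing, and no amount of cipher-suite tuning changes that while the two policy lists are disjoint.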

The likely engineering solution is gateway translation at jurisdictional boundaries: a controlled network boundary point that terminates one TLS session using one algorithm family and establishes a new session using a different algorithm family. The data transits in the clear at the gateway (within a secured hardware enclave or HSM), then is re-encrypted for the destination jurisdiction. This architecture reintroduces trusted intermediaries and complicates end-to-end security properties. It is architecturally retrograde (the industry has spent two decades trying to eliminate exactly these decrypt-and-re-encrypt points), but it may be the only operationally viable path when jurisdictions mandate incompatible algorithms.
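A boundary-routing decision might be modelled as follows. This is a hypothetical sketch: the jurisdiction-to-family table is assumed, and treating NIST and NIST-hybrid endpoints as able to agree on a common group is an illustrative assumption that must be checked against each jurisdiction's actual policy.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical mapping of jurisdictions to the KEM family their endpoints use.
KEM_FAMILY = {
    "US": "NIST",
    "DE": "NIST-HYBRID",
    "CN": "ICCS",
    "KR-GOV": "KPQC",
}

# Assumption for illustration: these family pairs can agree on a common group.
COMPATIBLE = {frozenset({"NIST", "NIST-HYBRID"})}


@dataclass(frozen=True)
class Route:
    direct: bool
    gateway_legs: Optional[tuple[str, str]]  # (inbound family, outbound family)


def plan_route(src: str, dst: str) -> Route:
    a, b = KEM_FAMILY[src], KEM_FAMILY[dst]
    if a == b or frozenset({a, b}) in COMPATIBLE:
        return Route(direct=True, gateway_legs=None)
    # No shared family: terminate at a boundary gateway (inside an HSM or
    # enclave) and re-establish the session under the destination's family.
    return Route(direct=False, gateway_legs=(a, b))


print(plan_route("DE", "US"))  # Route(direct=True, gateway_legs=None)
print(plan_route("DE", "CN"))  # Route(direct=False, gateway_legs=('NIST-HYBRID', 'ICCS'))
```

Keeping this decision in one function makes every translation point explicit and enumerable, which is what the audit and trust-model questions in the following paragraphs depend on.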

The gateway translation model has precedents. Financial institutions operating in China already maintain data localization gateways that handle encryption translation between Chinese SM-series algorithms and Western algorithms. The PQC version of this problem will be structurally similar but operationally more complex, because the algorithm families involved are newer, the tooling is less mature, and the migration must happen under time pressure from regulatory deadlines. Organizations that already operate cross-border data gateways for GDPR or Chinese Cybersecurity Law compliance have an architectural foundation to build on. Those starting from scratch face a more substantial buildout.

The identity and authentication layer adds another dimension. If a SAML assertion is signed with ML-DSA in New York but the Shanghai system only accepts ICCS-approved signatures, the identity token cannot cross the jurisdictional boundary without being re-signed. This means the gateway is re-encrypting transport-layer data and re-signing application-layer identity assertions, which raises significant security and audit questions about the trust model at the boundary point.
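One way to keep the gateway's trust model auditable is to bind every re-signed assertion to a record of what the gateway received. The sketch below assumes the inbound signature has already been verified under the source jurisdiction's trust anchors. The function names are hypothetical, and HMAC-SHA256 stands in for the real ML-DSA or ICCS signer only so the example runs; it is not a post-quantum signature.

```python
import hashlib
import hmac
import time
from typing import Callable

# A signer maps bytes to a signature. Production code would call an HSM-backed
# ML-DSA or ICCS operation; HMAC here is a runnable placeholder only.
Signer = Callable[[bytes], bytes]


def hmac_signer(key: bytes) -> Signer:
    return lambda data: hmac.new(key, data, hashlib.sha256).digest()


def resign_assertion(assertion: bytes, inbound_sig: bytes, inbound_alg: str,
                     outbound_alg: str, outbound_signer: Signer) -> tuple[bytes, dict]:
    """Re-sign an (already verified) identity assertion for the destination.

    The audit record ties the new signature to hashes of the original
    assertion and signature, so auditors can trace what the gateway vouched for.
    """
    new_sig = outbound_signer(assertion)
    record = {
        "assertion_sha256": hashlib.sha256(assertion).hexdigest(),
        "inbound_alg": inbound_alg,
        "inbound_sig_sha256": hashlib.sha256(inbound_sig).hexdigest(),
        "outbound_alg": outbound_alg,
        "outbound_sig_sha256": hashlib.sha256(new_sig).hexdigest(),
        "timestamp": int(time.time()),
    }
    return new_sig, record


assertion = b"<saml:Assertion ID='a1'/>"
sig, audit = resign_assertion(assertion, b"inbound-signature-bytes",
                              "ML-DSA-65", "ICCS-SIG", hmac_signer(b"demo-key"))
```

The record does not remove the need to trust the gateway, but it turns each re-signing event into evidence that can be reviewed after the fact.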

This is what I mean in Quantum Sovereignty when I write that geopolitics becomes architecture. The political decision to standardize different algorithms in different jurisdictions produces a technical requirement for gateway translation, which produces a procurement requirement for multi-algorithm gateway appliances, which produces a staffing requirement for teams that can manage them, which produces a budget requirement that someone needs to justify to the board. Political sovereignty translates directly into engineering cost and architectural complexity.

Cloud Provider Dependencies

The cloud provider ecosystem will mirror the standards fragmentation. AWS, Azure, and Google Cloud Platform will support NIST algorithms as their primary PQC offerings. Alibaba Cloud, Tencent Cloud, and Huawei Cloud will support Chinese ICCS algorithms. South Korean cloud providers (NHN Cloud, KT Cloud) may prioritize KpqC algorithms for government workloads.

A multinational using both AWS for its Western operations and Alibaba Cloud for its Chinese operations has no unified cryptographic architecture. Key management, certificate lifecycle, encryption-at-rest, and TLS termination all operate on different algorithm families across the two providers. Data migration between regions requires re-encryption. Key material cannot be transferred between providers because the key formats and algorithm identifiers are incompatible.

The vendor lock-in risk is real: an organization that deeply integrates with a single cloud provider’s PQC implementation may find itself architecturally locked to that jurisdiction’s algorithm family. Moving workloads between providers becomes an infrastructure migration and a cryptographic migration simultaneously. Cloud-agnostic PQC architecture, where cryptographic operations are abstracted from the cloud provider’s specific implementation, is the hedge against this risk, but it is far more difficult to achieve than cloud-agnostic compute or storage.
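The abstraction can be sketched with a Python `Protocol`. `MockProviderKms` is a hypothetical in-memory stand-in, not any cloud provider's API, and it stores plaintext rather than encrypting it; the point is the shape of the interface and the fact that cross-provider migration is a decrypt-and-re-encrypt, not a copy.

```python
import secrets
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Envelope:
    provider: str
    algorithm_family: str
    handle: str  # opaque reference understood only by the issuing provider


class KeyService(Protocol):
    name: str
    algorithm_family: str

    def encrypt(self, plaintext: bytes) -> Envelope: ...
    def decrypt(self, envelope: Envelope) -> bytes: ...


class MockProviderKms:
    """In-memory stand-in for a provider KMS (no real encryption performed)."""

    def __init__(self, name: str, algorithm_family: str) -> None:
        self.name = name
        self.algorithm_family = algorithm_family
        self._store: dict[str, bytes] = {}

    def encrypt(self, plaintext: bytes) -> Envelope:
        handle = secrets.token_hex(8)
        self._store[handle] = plaintext
        return Envelope(self.name, self.algorithm_family, handle)

    def decrypt(self, envelope: Envelope) -> bytes:
        if envelope.provider != self.name:
            raise ValueError(f"{self.name} cannot open an envelope from {envelope.provider}")
        return self._store[envelope.handle]


def migrate(envelope: Envelope, src: KeyService, dst: KeyService) -> Envelope:
    """Application code depends only on KeyService, never on a provider SDK."""
    return dst.encrypt(src.decrypt(envelope))


western = MockProviderKms("western-cloud", "NIST")
china = MockProviderKms("china-cloud", "ICCS")
moved = migrate(western.encrypt(b"customer record"), western, china)
```

Swapping a provider then means writing one new adapter behind `KeyService`; the harder part, which no interface hides, is that every stored envelope must still be opened and re-wrapped.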

HSM and Hardware Fragmentation

Western HSM vendors (Thales, Entrust, Utimaco, Marvell) are building FIPS 140-3 validated PQC support around NIST algorithms. Several have already obtained CMVP validation for ML-KEM, ML-DSA, and SLH-DSA. Chinese HSM vendors will build support around ICCS algorithms and Chinese national validation requirements. An organization operating across jurisdictions may need different HSM platforms in different regions, with different key management processes, different audit procedures, different firmware update cycles, and different vendor relationships.

Key ceremony processes, which are already among the most operationally intensive security procedures in any organization, multiply with each additional HSM platform. A key ceremony for a NIST-algorithm root CA in New York follows different procedures than a key ceremony for an ICCS-algorithm root CA in Shanghai, using different HSMs, different algorithm parameters, and potentially different regulatory witnesses. The key management team must be trained on multiple platforms, maintain multiple vendor relationships, and manage multiple firmware update schedules.

The procurement dimension is equally complex. Western organizations procuring HSMs from Chinese vendors may face scrutiny from their own regulators or national security agencies. Chinese organizations procuring HSMs from Western vendors face reciprocal concerns. The result may be that HSM procurement becomes jurisdiction-specific by necessity, with Western vendors serving Western regions and Chinese vendors serving Chinese operations. This hardware fragmentation is a permanent architectural constraint that software-level crypto-agility cannot fully abstract away.

Regulatory Compliance Collision

Data flowing between jurisdictions may be subject to conflicting cryptographic requirements. China’s Cryptography Law may require Chinese-approved algorithms for data processed within China. The GDPR’s requirement for “appropriate technical and organizational measures” will be interpreted, in practice, as requiring PQC algorithms that European regulators consider adequate. US ITAR and EAR export controls may restrict which algorithms can be deployed in which countries.

The collision scenarios are concrete. Consider a multinational bank that processes a cross-border payment between a Chinese counterparty and a US counterparty. The Chinese leg of the transaction may need to be encrypted with ICCS algorithms. The US leg needs NIST algorithms. The transaction data flows through both jurisdictions. Which algorithm applies to the data at rest? To the data in transit? To the cryptographic audit trail? To the non-repudiation signature on the payment authorization?
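The payment example can be framed as a lookup that either finds one algorithm family satisfying every jurisdiction a data element touches or reports a conflict. The requirement table below is illustrative only; in practice its contents come out of the legal review described in the next paragraph, and an unmapped cell is a question for counsel rather than a default for engineering.

```python
from dataclasses import dataclass, field

# Hypothetical requirement table: (jurisdiction, data state) -> required family.
REQUIREMENTS = {
    ("CN", "in_transit"): "ICCS",
    ("CN", "at_rest"): "ICCS",
    ("US", "in_transit"): "NIST",
    ("US", "at_rest"): "NIST",
}


@dataclass
class Resolution:
    families: set[str]
    conflict: bool  # True if no single family satisfies every jurisdiction
    unmapped: list[str] = field(default_factory=list)  # jurisdictions with no rule


def resolve(jurisdictions: list[str], data_state: str) -> Resolution:
    families: set[str] = set()
    unmapped: list[str] = []
    for j in jurisdictions:
        family = REQUIREMENTS.get((j, data_state))
        if family is None:
            unmapped.append(j)
        else:
            families.add(family)
    return Resolution(families, conflict=len(families) > 1, unmapped=unmapped)


payment_in_transit = resolve(["CN", "US"], "in_transit")   # conflict
authorization_sig = resolve(["CN", "US"], "non_repudiation")  # unmapped
```

The value of writing it down this way is that every conflict and every gap becomes an explicit row someone must own.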

The CISO managing this environment is not solving a technical problem. They are solving a legal-technical compliance problem that requires input from general counsel, regulatory affairs, data protection officers, and counterparty risk teams. The technical architecture must be designed with legal compliance as a primary constraint, not an afterthought.

The US export control dimension adds further friction. The Export Administration Regulations (EAR) classify strong encryption as a controlled technology. While exemptions exist for mass-market and open-source implementations, the export control framework has not been fully updated for PQC. An organization deploying NIST PQC algorithms in a foreign subsidiary may need to verify that the specific implementation and key lengths are covered by applicable exemptions. Conversely, deploying Chinese ICCS algorithms in a US environment may trigger import-side regulatory questions. The intersection of cryptographic mandates (which jurisdiction’s algorithms you must use) with export controls (which algorithms you may use in which jurisdictions) creates a compliance matrix that no single regulatory framework governs.

For organizations in regulated industries (financial services, healthcare, defense supply chain), the compliance collision is compounded by sector-specific requirements. PCI DSS has not yet published standalone PQC guidance, though PCI DSS v4.0’s requirement for cryptographic inventory (Requirement 12.3.3) aligns with PQC readiness. Financial regulators in different jurisdictions will interpret “adequate cryptographic protection” differently based on their domestic PQC standards. A bank that uses NIST algorithms may be compliant in New York but non-compliant in Shanghai if Chinese regulators require ICCS algorithms for payment processing.

The Fragmentation Scenarios: How Bad Does It Get?

Three scenarios bound the range of outcomes. The prudent planning approach is to design for the middle scenario while monitoring for signals of the worst case.

Scenario A: Functional Convergence. China and South Korea select algorithms from the same mathematical families as NIST (lattice-based KEMs, lattice-based signatures). The specific implementations differ (just as SM2 differs from NIST P-256, both being ECC), but the security properties are equivalent and the mathematical foundations are shared. Interoperability is solvable by supporting multiple implementations of similar constructions. Protocol-level algorithm negotiation can bridge the gap. This is the most optimistic scenario, and there is evidence to support it: South Korea’s HAETAE is closely related to ML-DSA, and SMAUG-T uses the same module-lattice approach as ML-KEM. As Akamai’s analysis noted, Korea’s algorithm selections suggest industry consensus around module-lattice-based constructions. Experts like Joppe Bos (NXP) and Michael Osborne (IBM) have suggested that functional convergence across the lattice-based family is likely.

Scenario B: Partial Divergence. Major regions standardize on different specific algorithms from the same or related mathematical families, but mandate their own suite for domestic use. Multinationals need dual or triple implementations. Interoperability requires protocol-level negotiation with algorithm-family-aware gateways at jurisdictional boundaries. Management complexity is high but tractable with proper architecture. This is the most likely outcome based on current trajectories: China will almost certainly mandate its own algorithms for domestic use, even if those algorithms are mathematically related to NIST’s selections. Korea already has distinct algorithms that it will require for government contracts. Europe will layer hybrid requirements. The result is a world where any given data flow may need to support two or three algorithm families depending on which jurisdictions it touches.

Under Scenario B, a multinational bank’s TLS infrastructure might look like this: US-facing endpoints negotiate ML-KEM plus ML-DSA. China-facing endpoints negotiate ICCS-selected algorithms (likely a lattice-based KEM and signature with different constructions). Korean government-facing endpoints negotiate SMAUG-T plus HAETAE. European endpoints use hybrid configurations combining ML-KEM with classical ECDH. Internal backbone connections between regions use gateway translation at jurisdictional boundaries. The bank operates three HSM platforms, three PKI hierarchies, and a unified management layer that tracks which algorithm family applies to which system. This is operationally expensive but architecturally coherent. It is also the planning baseline I recommend.
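The Scenario B layout can be expressed as a single policy table, which is roughly what the unified management layer has to track. The ICCS entries are placeholders, and the European signature algorithm is an assumption because the scenario specifies only the hybrid key exchange.

```python
# Illustrative Scenario B policy. "ICCS-KEM"/"ICCS-SIG" are placeholders for
# selections China has not announced; the EU signature choice is assumed.
ENDPOINT_POLICY = {
    "us-facing":     {"kem": "ML-KEM",      "sig": "ML-DSA"},
    "cn-facing":     {"kem": "ICCS-KEM",    "sig": "ICCS-SIG"},
    "kr-gov-facing": {"kem": "SMAUG-T",     "sig": "HAETAE"},
    "eu-facing":     {"kem": "ECDH+ML-KEM", "sig": "ML-DSA"},
}


def suite_for(endpoint_class: str) -> dict[str, str]:
    try:
        return ENDPOINT_POLICY[endpoint_class]
    except KeyError:
        # Fail closed: an unclassified endpoint must not silently get a default.
        raise LookupError(f"no cryptographic policy for {endpoint_class!r}") from None


def pki_hierarchies() -> set[str]:
    """One hierarchy per distinct signature family in use."""
    return {suite["sig"] for suite in ENDPOINT_POLICY.values()}


print(sorted(pki_hierarchies()))  # ['HAETAE', 'ICCS-SIG', 'ML-DSA']
```

Under these assumptions the table yields the three PKI hierarchies the scenario describes, and the count updates automatically if a new endpoint class introduces another signature family.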

Scenario C: Full Fragmentation. Regions adopt algorithms from genuinely different mathematical families with incompatible security assumptions. A jurisdiction mandates a code-based KEM while another mandates a lattice-based KEM, with no common family for negotiation. Interoperability becomes structurally difficult. Digital trade barriers emerge, with cryptographic compliance serving as a non-tariff barrier to cross-border data flows, similar to how data localization requirements have already constrained cloud architecture. This is the worst case and currently less likely, but it cannot be ruled out if geopolitical tensions escalate further or if a cryptanalytic breakthrough against lattice-based constructions forces some jurisdictions to adopt fundamentally different mathematical foundations. The BRICS exploration of alternative cryptographic standards, if it produces a genuinely distinct algorithm suite, could push toward this scenario.

My assessment: plan for Scenario B as the baseline. The evidence points toward mathematical convergence (lattice-based constructions dominating across jurisdictions) but implementation divergence (different specific algorithms mandated by each jurisdiction). This means the interoperability problem is solvable but the operational complexity is real and persistent. Organizations that build for Scenario B will handle Scenario A easily and have a reasonable foundation for Scenario C if it materializes.

Crypto-Agility as the Strategic Response

The only sustainable response to standards fragmentation is genuine crypto-agility: the architectural ability to support multiple algorithm families simultaneously, driven by policy rather than code changes. This is not a nice-to-have future capability. It is the prerequisite for operating across jurisdictions in the fragmented PQC world.
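A minimal sketch of policy-driven selection, assuming a JSON policy document held in a central, versioned store: callers request an algorithm for a jurisdiction and operation, and a new jurisdiction arrives as a new policy version rather than a code change. The document format and the `CryptoPolicy` class are hypothetical.

```python
import json

# Hypothetical policy document; in a crypto-agile design it lives in a central
# policy store, not in application code.
POLICY_JSON = """
{
  "version": 7,
  "rules": [
    {"jurisdiction": "US", "operation": "kem", "algorithm": "ML-KEM-1024"},
    {"jurisdiction": "US", "operation": "sign", "algorithm": "ML-DSA-87"},
    {"jurisdiction": "KR-GOV", "operation": "kem", "algorithm": "SMAUG-T"},
    {"jurisdiction": "KR-GOV", "operation": "sign", "algorithm": "HAETAE"}
  ]
}
"""


class CryptoPolicy:
    def __init__(self, document: str) -> None:
        data = json.loads(document)
        self.version = data["version"]
        self._rules = {(r["jurisdiction"], r["operation"]): r["algorithm"]
                       for r in data["rules"]}

    def select(self, jurisdiction: str, operation: str) -> str:
        """Callers ask what to use here instead of naming an algorithm."""
        key = (jurisdiction, operation)
        if key not in self._rules:
            raise LookupError(f"policy v{self.version} has no rule for {key}")
        return self._rules[key]


policy = CryptoPolicy(POLICY_JSON)
print(policy.select("KR-GOV", "sign"))  # HAETAE

# Adding a jurisdiction is a policy publication, not a code change.
updated = json.loads(POLICY_JSON)
updated["version"] = 8
updated["rules"].append({"jurisdiction": "CN", "operation": "kem", "algorithm": "ICCS-KEM"})
policy_v8 = CryptoPolicy(json.dumps(updated))
print(policy_v8.select("CN", "kem"))  # ICCS-KEM
```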

I covered the architectural details of what real crypto-agility requires in a companion article on crypto-agility architecture. The short version: crypto-agility requires centralized cryptographic services where algorithm selection is policy-driven, hardware that supports firmware-upgradeable algorithm implementations, protocols that negotiate rather than hard-code algorithms, and continuous cryptographic inventory management through a living CBOM.

In the context of standards fragmentation specifically, crypto-agility means: your KMS can generate and manage keys for ML-KEM, HAETAE, SMAUG-T, and whatever ICCS selects, switching between them based on which jurisdiction a given operation serves. Your PKI platform can issue certificates with ML-DSA for US-facing systems and ICCS-selected algorithms for China-facing systems, from a unified management interface. Your TLS termination points can negotiate algorithm suites based on the counterparty’s jurisdictional requirements. Your signing services can apply different signature algorithms to the same artifact depending on its intended distribution jurisdiction.
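The signing-service behavior in the last sentence can be sketched as a registry of backends keyed by algorithm name. The registry, the distribution policy, and the placeholder signers (which return a tagged SHA-256 digest and provide no security) are assumptions; a real service would bind each name to an HSM or to a library such as liboqs.

```python
import hashlib
from typing import Callable

SignFn = Callable[[bytes], bytes]

# Hypothetical backend registry. These lambdas are labelled placeholders so the
# plumbing runs; they are NOT signatures.
SIGNERS: dict[str, SignFn] = {
    "ML-DSA-65": lambda data: b"placeholder-mldsa:" + hashlib.sha256(data).digest(),
    "HAETAE": lambda data: b"placeholder-haetae:" + hashlib.sha256(data).digest(),
}

# Signature algorithm required per distribution jurisdiction (assumed).
DISTRIBUTION_POLICY = {"US": "ML-DSA-65", "KR-GOV": "HAETAE"}


def sign_for_distribution(artifact: bytes, targets: list[str]) -> dict[str, tuple[str, bytes]]:
    """Produce one detached signature per target jurisdiction over the same bytes."""
    bundle = {}
    for jurisdiction in targets:
        alg = DISTRIBUTION_POLICY[jurisdiction]
        bundle[jurisdiction] = (alg, SIGNERS[alg](artifact))
    return bundle


signatures = sign_for_distribution(b"firmware-1.2.bin", ["US", "KR-GOV"])
```

Detached signatures keep the artifact bytes identical across jurisdictions, so only the signature bundle differs per distribution channel, and adding a jurisdiction means registering a backend and a policy entry.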

The cost of crypto-agility is real. Building and maintaining multi-algorithm infrastructure is more expensive than single-algorithm infrastructure. But the cost of not having it is higher: it is the cost of executing a second full PQC migration when a new jurisdictional requirement arrives. If the 120,000-task migration I led for a single algorithm family took years, repeating that effort for each additional jurisdiction is not an option most organizations can sustain. Crypto-agility is the architectural investment that converts future migration programs into platform configuration changes.

An organization that has hard-coded ML-KEM throughout its stack has solved the US migration problem but has created a second migration problem for every other jurisdiction that mandates a different algorithm. An organization that has built crypto-agile infrastructure can add a new algorithm family through a platform configuration change rather than a program-level migration. The difference in cost, timeline, and operational risk between those two architectures is the business case for crypto-agility.

What to Do Now

The fragmentation I have described is coming. Some of it is already here. The following actions are designed for a multinational CISO who needs to build a migration plan that works across jurisdictions rather than for a single one.

Map your jurisdictional cryptographic exposure. Before you can plan for fragmentation, you need to understand where you are exposed to it. Which systems serve which jurisdictions? Which data flows cross jurisdictional boundaries? Which vendor relationships are jurisdiction-specific? Which regulatory obligations apply to which data? This is a legal-technical exercise that requires collaboration between security architecture, legal, compliance, and business operations. The deliverable is a matrix: systems on one axis, jurisdictions on the other, with each cell indicating the applicable cryptographic requirements and the current algorithm in use. The PQC Readiness Self-Assessment Scorecard provides the assessment framework; the jurisdictional mapping is the additional dimension that multinational organizations must add. Without this map, your migration plan is built on assumptions about which requirements apply where, and those assumptions will be wrong.
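The matrix deliverable lends itself to a simple gap report. The sketch below is illustrative: the system names, family labels, and cells are invented, and each cell records only the mandated family and the family in use today.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Cell:
    required: Optional[str]  # family mandated for this system in this jurisdiction
    current: Optional[str]   # family the system actually uses today

    @property
    def gap(self) -> bool:
        return self.required is not None and self.required != self.current


# Illustrative entries only; real cells come out of the legal-technical review.
MATRIX = {
    ("payments-gateway", "US"): Cell(required="NIST-PQC", current="RSA/ECC"),
    ("payments-gateway", "CN"): Cell(required="ICCS", current="SM2/SM3/SM4"),
    ("hr-portal", "US"): Cell(required="NIST-PQC", current="NIST-PQC"),
    ("gov-contracts", "KR-GOV"): Cell(required="KPQC", current="RSA/ECC"),
}


def gaps(matrix: dict[tuple[str, str], Cell]) -> list[tuple[str, str, str, str]]:
    """Return (system, jurisdiction, current, required) for each non-compliant cell."""
    return sorted(
        (system, jur, cell.current or "unknown", cell.required)
        for (system, jur), cell in matrix.items()
        if cell.gap
    )


for row in gaps(MATRIX):
    print(row)
```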

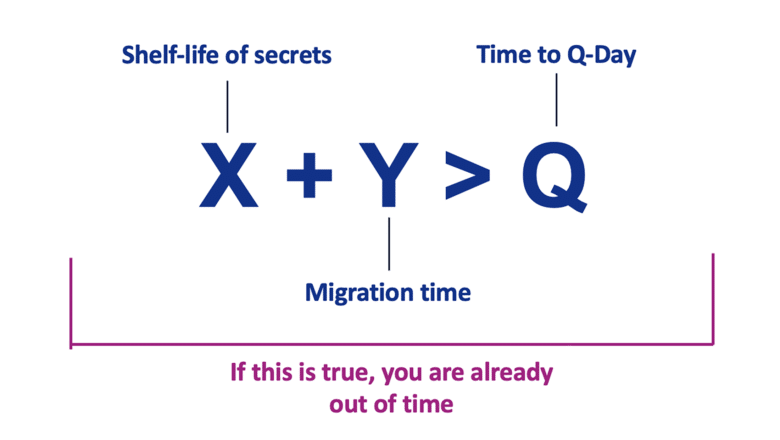

Start your NIST-based migration now. Do not wait for convergence. NIST’s algorithms are finalized, the tooling is mature, and the regulatory deadlines are set. Waiting for China’s ICCS to finalize its selections or for Korea’s KpqC algorithms to be fully deployed delays the work you can do today. NIST algorithms will be required for US operations regardless of what other jurisdictions mandate, and they are the de facto standard for allied nations. The migration work itself (inventory, architecture, procurement, testing) is also largely algorithm-independent; you need to do it regardless of which algorithm family each jurisdiction ultimately mandates. If you build with crypto-agility from the start, adding support for ICCS or KpqC algorithms later is a platform configuration addition, not a re-do of your entire migration.

Build crypto-agility as an architecture requirement, not an afterthought. Every new system, every procurement contract, every vendor relationship should specify support for multiple algorithm families. “Supports NIST PQC algorithms” is necessary but insufficient for a multinational procurement requirement. The requirement should read: “Supports configurable PQC algorithm families including NIST ML-KEM/ML-DSA and the ability to add additional algorithm families through configuration or firmware update within 12 months of their standardization.” This language protects the organization against the fragmentation scenarios described above. The PQC Migration Framework at PQCFramework.com embeds crypto-agility as a core architecture principle across all eight migration phases. Quantum Ready covers the organizational strategy for making the business case.
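Vendor responses can be screened against that clause mechanically before the deeper technical review. A hypothetical sketch, assuming each response is reduced to three facts: supported NIST algorithms, whether the algorithm family is configurable, and the committed number of months to add a newly standardized family.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class VendorResponse:
    vendor: str
    nist_algorithms: set[str]            # PQC algorithms supported today
    configurable_families: bool          # family selectable via config/firmware
    months_to_add_family: Optional[int]  # committed delivery after standardization


REQUIRED_NIST = {"ML-KEM", "ML-DSA"}
MAX_MONTHS = 12  # taken from the procurement clause


def meets_requirement(r: VendorResponse) -> tuple[bool, list[str]]:
    problems = []
    missing = REQUIRED_NIST - r.nist_algorithms
    if missing:
        problems.append(f"missing {sorted(missing)}")
    if not r.configurable_families:
        problems.append("algorithm family not configurable")
    if r.months_to_add_family is None or r.months_to_add_family > MAX_MONTHS:
        problems.append("no commitment to add new families within 12 months")
    return (not problems, problems)


vendor_a = VendorResponse("vendor-a", {"ML-KEM", "ML-DSA", "SLH-DSA"}, True, 9)
vendor_b = VendorResponse("vendor-b", {"ML-KEM", "ML-DSA"}, False, None)
```

A screen like this only filters out responses that fail the letter of the clause; whether a vendor can actually deliver a new family in 12 months still needs technical due diligence.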

Engage with the standardization processes you depend on. If your organization operates in China, track the ICCS process actively. Monitor the State Cryptography Administration’s announcements. Build relationships with Chinese cryptographic vendors who will implement ICCS algorithms. If you operate in South Korea, track KpqC implementation requirements and the NIS roadmap for cryptographic transformation. Engage with industry working groups (ETSI QSC, ISO/IEC JTC 1/SC 27, GSMA QSTF) that are working on international interoperability. Do not be surprised by mandates you could have anticipated with six months of monitoring. The cost of tracking these processes is trivial compared to the cost of being caught unprepared when a mandate arrives.

Plan for gateway translation at jurisdictional boundaries. If end-to-end cross-jurisdictional encryption with a single algorithm family becomes impossible (Scenario B or C), design for controlled re-encryption at jurisdictional boundary points. This means identifying your cross-border data flows, designating specific network boundary points as cryptographic translation gateways, procuring gateway hardware that supports multiple algorithm families, and establishing the operational procedures for managing the trust model at the boundary. Start with the highest-volume, highest-sensitivity cross-border flows (typically financial transactions, inter-office communications, and shared infrastructure management) and work outward.
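The boundary decision can be sketched as a small routing function: if the two sides share an approved KEM, encrypt end to end; if not, terminate at a designated gateway and re-encrypt with each side’s preferred algorithm. This is a hypothetical Python sketch, assuming each site publishes an ordered list of approved KEM identifiers. `plan_route` and `Route` are illustrative names, the site policies are invented for the example, and `ICCS-KEM-PLACEHOLDER` stands in for algorithms China has not yet announced.

```python
from typing import NamedTuple


class Route(NamedTuple):
    mode: str               # "direct" (end to end) or "gateway" (re-encrypt at boundary)
    kems: tuple[str, ...]   # KEM used on each hop, in order


def plan_route(src_allowed: list[str], dst_allowed: list[str]) -> Route:
    """Pick end-to-end encryption if both sides share a KEM, else a gateway.

    Both lists are in preference order; the source's preference wins when
    more than one algorithm is acceptable to both sides.
    """
    if not src_allowed or not dst_allowed:
        raise ValueError("each side needs at least one approved KEM")
    common = [alg for alg in src_allowed if alg in dst_allowed]
    if common:
        return Route("direct", (common[0],))
    # No shared algorithm: terminate at the boundary gateway and re-encrypt,
    # using each side's preferred KEM on its own hop.
    return Route("gateway", (src_allowed[0], dst_allowed[0]))


# Hypothetical site policies; the Shanghai entry is a placeholder.
frankfurt = ["X25519+ML-KEM-768", "ML-KEM-1024"]
new_york = ["ML-KEM-1024"]
shanghai = ["ICCS-KEM-PLACEHOLDER"]

print(plan_route(frankfurt, new_york))   # direct over ML-KEM-1024
print(plan_route(frankfurt, shanghai))   # gateway: hybrid hop, then placeholder hop
```

The sketch covers only key encapsulation; signatures and PKI trust anchors would need the same treatment. It also makes the trust trade-off explicit: in gateway mode the boundary device handles plaintext between hops, which is why the operational procedures around that device matter as much as the hardware.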

Contribute to and invest in multi-algorithm open-source implementations. Libraries like liboqs (Open Quantum Safe) that support multiple algorithm families are critical infrastructure for a fragmented world. The broader the ecosystem of multi-algorithm tooling, the lower the cost of supporting multiple jurisdictions. Organizations that invest in this ecosystem today (through direct contribution, funding, or procurement preference for vendors that use open multi-algorithm libraries) are building the commons that will make their own fragmentation management cheaper. The alternative is paying each vendor separately to implement each jurisdiction’s algorithms, which is more expensive and less interoperable.

The World That Is Coming

The era of global cryptographic consensus is ending. The assumption that the world would converge on a single set of PQC algorithms selected by NIST was always optimistic, and the evidence now shows it was wrong. China is building its own suite, with a roadmap targeting initial standards before end of 2026. South Korea has already selected four distinct algorithms and is implementing them through Samsung and other national champions. European regulators are layering hybrid requirements and conservative hedges that go beyond NIST’s selections. Russia maintains its independent path with GOST PQC development.

For most of the classical cryptography era, cryptographic fragmentation was manageable. The SM-series algorithms were a China-specific requirement; most organizations handled them by maintaining a parallel cryptographic implementation for Chinese operations. The GOST algorithms were relevant only for Russian operations. The overhead was real but contained. PQC fragmentation is different in two fundamental ways.

First, it is arriving simultaneously with a migration deadline. Organizations must migrate away from quantum-vulnerable algorithms by 2030-2035, and they must do so into an environment where the destination is not a single standard but a jurisdiction-dependent set of standards. The migration and the fragmentation are concurrent challenges that compound each other. You cannot defer the fragmentation planning until after you complete your NIST-based migration, because the NIST-based migration’s architecture must be designed to accommodate fragmentation from the start. Retrofitting crypto-agility after completing a single-algorithm migration is substantially more expensive than building it in from the beginning.

Second, the number of jurisdictions with independent requirements is growing. Classical cryptographic fragmentation involved two or three independent standards tracks (NIST, SM-series, GOST). PQC fragmentation involves at least five (NIST, ICCS, KpqC, European hybrid mandates, GOST PQC), with the potential for more as India, Brazil, and other nations develop sovereign cryptographic capabilities. The combinatorial complexity of supporting five algorithm families across jurisdictional boundaries, with cross-border data flows requiring gateway translation, is qualitatively different from managing two or three.
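A quick count shows why the jump from two or three tracks to five matters. Assuming, as a worst case, that no pair of standards tracks shares an algorithm, so every pair needs its own translation path, the number of boundaries grows as n(n-1)/2. The track labels come from the paragraph above; `boundary_pairs` is a hypothetical helper written for this illustration.

```python
from itertools import combinations
from math import comb


def boundary_pairs(tracks: list[str]) -> list[tuple[str, str]]:
    """Return every unordered pair of standards tracks.

    Worst-case assumption: each pair shares no algorithm, so each pair
    is a boundary that needs its own gateway translation path.
    """
    return list(combinations(tracks, 2))


classical = ["NIST", "SM-series", "GOST"]
pqc = ["NIST", "ICCS", "KpqC", "European hybrid", "GOST PQC"]

for label, tracks in (("classical", classical), ("PQC", pqc)):
    n = len(tracks)
    pairs = boundary_pairs(tracks)
    # combinations(tracks, 2) always yields n * (n - 1) / 2 pairs.
    assert len(pairs) == comb(n, 2) == n * (n - 1) // 2
    print(f"{label}: {n} tracks -> {len(pairs)} pairwise boundaries")
```

Running it prints 3 boundaries for the three classical tracks and 10 for the five PQC tracks; with only two tracks there is a single boundary. In practice the count is lower wherever tracks overlap (European hybrid configurations often build on NIST algorithms, for example), so treat this as an upper bound on boundary combinations rather than a forecast.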

For the geopolitical analysis behind this fragmentation, see Sovereignty in the PQC Era and Quantum Sovereignty. For the architectural foundations of crypto-agility, see the companion article on crypto-agility architecture. For practical migration steps, start with PQCFramework.com and Practical Steps to Quantum Readiness.

Quantum Upside & Quantum Risk - Handled

My company - Applied Quantum - helps governments, enterprises, and investors prepare for both the upside and the risk of quantum technologies. We deliver concise board and investor briefings; demystify quantum computing, sensing, and communications; craft national and corporate strategies to capture advantage; and turn plans into delivery. We help you mitigate the quantum risk by executing crypto‑inventory, crypto‑agility implementation, PQC migration, and broader defenses against the quantum threat. We run vendor due diligence, proof‑of‑value pilots, standards and policy alignment, workforce training, and procurement support, then oversee implementation across your organization. Contact me if you want help.