Inside Quantum Computing’s Modular Revolution – Discussion with QuantWare’s CEO Matt Rijlaarsdam

Quantum computing is entering a new phase where scaling up isn’t just about qubit counts – it’s about how those qubits are built and integrated.

A recent discussion with QuantWare’s CEO, Matt Rijlaarsdam, shed light on a “quantum open architecture” (QOA) approach that could transform the industry. By focusing on modular design and specialization (instead of monolithic, end-to-end systems), companies hope to break through scaling bottlenecks and democratize access to quantum machines. QuantWare – a Dutch startup supplying superconducting quantum processors – is at the forefront of this movement, but the implications go far beyond any single company. The vision: quantum computing could evolve more like the classical PC industry, where interchangeable components and shared standards turbocharged progress, rather than like the isolated mainframes of old.

From Lab Monoliths to Quantum Open Architecture

In the early days of quantum computing, academic labs and a few tech giants built vertically integrated machines – essentially doing everything in-house from qubits to cryogenics. This all-in-one approach produced pioneering systems, but it doesn’t scale well. Just as in classical computing history (where early mainframes gave way to an ecosystem of specialized chipmakers, component suppliers, and software firms), quantum tech is now seeing a shift toward specialization. This trend is epitomized by the Quantum Open Architecture (QOA) model, which enables different organizations to contribute modular pieces of a quantum computer. Instead of buying a closed “black box” system or trying to build everything from scratch, a university or company can assemble a quantum computer from plug-and-play components – sourcing a processor from one vendor, control electronics from another, a cryogenic fridge from yet another, and so on.

This modular approach lowers the barrier to entry. Until recently, organizations seeking a quantum computer had to either buy a closed system from a full-stack provider or build one from scratch. QOA is changing that by democratizing the ability to build quantum machines. A prime example is at the University of Naples in Italy, where researchers recently assembled the country’s largest quantum computer using a 64-qubit Tenor processor from QuantWare. They did so in a fraction of the time and cost it would have taken to develop a processor internally or purchase a proprietary system. As Prof. Francesco Tafuri, who leads the Naples lab, put it: “QuantWare’s Tenor QPU significantly accelerated our timeline and allowed us to focus on building the system and its applications,” instead of reinventing the wheel on processor fabrication.

The QOA philosophy mirrors how classical computing evolved into a multi-vendor ecosystem. Just as Intel became the go-to CPU supplier while others built motherboards, PCs, and software, the quantum industry is now seeing a similar division of labor. In our interview, Rijlaarsdam explicitly invoked this analogy: “We kind of think of ourselves as the Intel of quantum in the sense that in the 1950s, everyone made a whole mainframe computer, including the CPU. Then by the 1970s, Intel emerged and had a near-monopoly on CPUs… The play we made is that quantum has the exact same laws of economics.” In other words, as devices get exponentially more complex, specialization and volume production win out. By focusing narrowly on quantum processors (and related chip technology) and selling them widely, QuantWare aims to amortize R&D costs over many customers and drive down unit costs, much as classical chip vendors do. “If you deliver twice the number of chips as your competitor, you can amortize your equipment over twice the number of chips, so your margins are better,” Rijlaarsdam explained, highlighting the volume advantage. QuantWare says it has among the highest chip shipment volumes in the market, shipping QPUs and components to customers in over 20 countries. This scale, he argues, feeds a virtuous cycle: higher volume leads to lower costs and better yield, which attracts more customers, further increasing volume.
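
The amortization argument is simple arithmetic, and a small sketch makes it concrete. All figures below are hypothetical and chosen only for illustration; the interview gives no actual equipment or per-chip costs, and the function name `unit_cost` is an assumption of this sketch.

```python
def unit_cost(equipment_cost: float, variable_cost_per_chip: float,
              chips_shipped: int) -> float:
    """Cost per chip when a fixed equipment spend is spread over all chips shipped."""
    if chips_shipped <= 0:
        raise ValueError("chips_shipped must be positive")
    return equipment_cost / chips_shipped + variable_cost_per_chip


# Hypothetical inputs (not from the interview).
EQUIPMENT_COST = 10_000_000.0  # fixed equipment spend, assumed
VARIABLE_COST = 20_000.0       # materials and labour per chip, assumed

competitor = unit_cost(EQUIPMENT_COST, VARIABLE_COST, chips_shipped=100)
leader = unit_cost(EQUIPMENT_COST, VARIABLE_COST, chips_shipped=200)

print(f"100 chips shipped: {competitor:,.0f} per chip")
print(f"200 chips shipped: {leader:,.0f} per chip")
```

Doubling volume halves only the fixed-cost share of each chip, so the advantage is largest when equipment dominates the cost structure.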

Key Players in the QOA Ecosystem

Under QOA, different specialists provide the building blocks of a quantum computer. Some examples include:

- Quantum Processors (QPUs) – the “brain” of the system. E.g., QuantWare develops and fabricates superconducting transmon qubit chips (17-qubit, 64-qubit, and upcoming larger devices) that can be integrated into various setups. By supplying QPUs to third parties, companies like QuantWare enable others to build a quantum computer at a fraction of the cost of a full-stack solution.

- Cryogenic Infrastructure – the ultra-cold refrigerators and wiring needed to keep qubits at millikelvin temperatures. E.g., Bluefors and Maybell Quantum specialize in dilution refrigerators, while Delft Circuits provides cryogenic microwave cabling. These ensure qubits stay coherent and properly connected without each quantum builder needing to master fridge engineering.

- Control Electronics – the hardware to manipulate and read out qubits with precision. E.g., Qblox, Quantum Machines, and Zurich Instruments offer high-fidelity microwave pulse generators, control modules, and software for operating qubits. This allows quantum system integrators to buy a ready-made control stack instead of developing everything in-house (much like classical computers use off-the-shelf GPU/CPU controllers).

- Calibration and Software – tools to tune qubit performance and run algorithms. E.g., QuantrolOx provides automated calibration software to optimize qubit parameters, and various quantum middleware firms ensure that different components can talk to each other smoothly.

- System Integration – pulling all the pieces together into a functional machine. E.g., Applied Quantum, ParTec and Treq act as integrators that can assemble a full quantum system for an end-user by combining the best available components. They orchestrate everything from the cryostat to the control software, delivering a turnkey solution without having built each part themselves.

One nuance from the interview: as systems scale, even the definition of a “processor” starts to blur. Rijlaarsdam argued that the quantum processor is no longer just “a qubit chip,” but a packaged stack that increasingly absorbs what used to sit around it – filters, amplifiers, and other cryogenic “chandelier” components that shape and protect the signals.

He also suggested that, eventually, “driver software” belongs in the same mental box as the processor itself. His analogy was pointed: imagine buying an NVIDIA GPU and being told to write your own drivers – it’s not a technical inconvenience, it’s a billion-dollar integration problem. In that framing, QOA is not only about swapping hardware modules; it’s about deciding what interfaces become standardized enough that system builders don’t spend years rewriting the same plumbing and control abstractions.

This disaggregated model means a lab or startup can mix-and-match QOA-compliant components to suit their needs. If a better microwave amplifier or a new qubit chip comes along, it can be swapped in, much as one might upgrade a graphics card in a classical computer. The CEO of QuantWare emphasizes that QOA at this stage is “more a long-term design philosophy and paradigm rather than something deeply technical today.” Rijlaarsdam offered a pragmatic way to think about how “standards” emerge in young industries. One path is the telecom-style route – committees, lobbying, and negotiated interfaces. The other is the semiconductor route: “volume begets standards.” When a platform is shipped widely enough and performs well enough, compatibility becomes a market outcome rather than a committee output. That’s an important nuance for QOA: it may not crystallize first as a formal spec sheet. It may crystallize as a de facto set of design assumptions that follow the highest-volume components – the parts everyone ends up designing around because they’re available, affordable, and proven in multiple integration contexts.

In practice, many current components are already cross-compatible (for example, most superconducting qubit chips can work with standard control electronics and fridges), but the QOA movement is about formalizing and embracing this modularity. Stakeholders are even forming collaborations to showcase multi-vendor quantum systems. In late 2025, a coalition in Colorado announced the first QOA-based quantum computer in the U.S., integrating QuantWare qubits, Qblox control hardware, Q-CTRL software, and Maybell’s cryogenics into a cloud-accessible platform. Each partner supplied their piece of the stack, demonstrating a “Lego block” approach to building quantum machines that can be replicated elsewhere.
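
As a loose illustration of the “Lego block” idea, the Colorado system can be written down as a record with one slot per layer of the stack. This is only a sketch: the `QuantumStack` type and its field names are hypothetical, not a QOA specification, and the replacement vendor is a placeholder.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class QuantumStack:
    """One vendor per layer; the field names are illustrative, not a standard."""
    qpu: str
    control: str
    software: str
    cryogenics: str


# Vendors as named for the Colorado system described above.
colorado = QuantumStack(
    qpu="QuantWare",
    control="Qblox",
    software="Q-CTRL",
    cryogenics="Maybell Quantum",
)

# Swapping one layer leaves the others untouched (placeholder vendor name).
upgraded = replace(colorado, qpu="hypothetical next-generation QPU vendor")

print(colorado)
print(upgraded)
```

In a real system the hard part is everything this record hides: the electrical, mechanical, and software interfaces that decide whether a swap is actually plug-and-play.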

“Intel of Quantum”: Specialization, Volume, and Quantum Sovereignty

QuantWare’s business strategy exemplifies the specialization trend. Founded in 2021 as a spin-off from TU Delft’s QuTech institute, the company explicitly set out to become a dedicated quantum chip provider rather than a full-stack vendor. “We develop, design and fabricate scalable superconducting quantum processors, and by supplying these to third parties, we allow them to build a quantum computer for one-tenth the cost of competing solutions,” its founders noted. This approach is analogous to a semiconductor foundry or a CPU company in the classical realm – focus on one critical piece of hardware and sell it to many customers. By 2025, QuantWare had grown to serve clients in 20+ countries and was delivering some of the largest QPUs commercially available (e.g. 64-qubit chips). Those customers range from academic groups and national labs to startups and corporations, all leveraging the open-market availability of quantum processors to accelerate their own development.

Crucially, this model is enabling what policymakers call quantum sovereignty for smaller players and nations. Governments and industries outside the U.S. are keen to ensure they aren’t entirely dependent on a few foreign tech giants (like IBM or Google) for quantum computing capabilities. The EU, for instance, has made “full-stack quantum sovereignty” a strategic goal – meaning Europe should control the entire supply chain from chip fabrication to software. QuantWare’s forthcoming KiloFab in the Netherlands – scheduled to open in 2026 – is a direct response to this imperative. It will be Europe’s first dedicated quantum chip factory, capable of producing the complex multi-layer wafers needed for next-gen QPUs. By investing in domestic fabrication capacity, Europe can reduce reliance on U.S. or Asian foundries for cutting-edge quantum devices. “This is a strategic move to ensure manufacturing keeps pace with the ambitious chip design,” I wrote in my analysis when KiloFab was announced. It positions QuantWare not just as a product company but as a potential quantum foundry for the industry – a one-stop shop that could fabricate processors for others, akin to how TSMC builds chips for many chip designers.

Rijlaarsdam confirmed this direction in our interview, noting that in addition to selling its own QPU designs, QuantWare offers foundry services (fabricating custom chip designs for select clients) and packaging services (using its proprietary 3D stacking technology to help other chipmakers scale up their devices). “We operate in three business models… but the majority of our volume is our standard QPU chips,” he said. The foundry and packaging offerings, he explained, are ways to bring more players onto QuantWare’s platform. If a national lab or a rival startup has a unique qubit design but no way to scale it beyond a small chip, QuantWare’s VIO-based integration could allow them to slot their “chiplet” into QuantWare’s modules, leveraging the engineering that QuantWare has already done on interconnects, amplification, and I/O (input-output) handling. By helping even competitors scale up, QuantWare hopes to make its architecture a de facto standard – “volume begets standards,” as Rijlaarsdam phrased it, meaning the platform with the most adoption naturally becomes the common platform.

What’s especially interesting is how that “packaging” role could become a subtle lever for ecosystem alignment. Rijlaarsdam described a strategy where other superconducting teams can keep their own qubit chip designs, but scale them by packaging their chiplets into a VIO-style stack. In his words, QuantWare can act “as a packaging house” – not replacing the qubit designer, but offering an on‑ramp to a scaling architecture.

Strategically, this is how open architectures often spread in practice: not by asking every incumbent to abandon their roadmap, but by offering an incremental compatibility path that solves an urgent bottleneck (I/O and scale) while letting the customer keep their “secret sauce” in the qubit layer.

This collaborative-yet-competitive approach reflects a maturing industry. It is notable that QuantWare’s customers already include not just research labs but commercial endeavors across 22 countries, according to the CEO. In the first couple of years, universities were the main buyers of its smaller chips, “but that has transitioned to be majority commercial now, especially as you get to larger chip sizes,” Rijlaarsdam said. Building a 50+ qubit system – let alone one with hundreds or thousands of qubits – is expensive and complex, so only well-funded companies or government-backed programs can attempt it. Instead of each of those efforts duplicating processor R&D, QOA allows them to purchase a state-of-the-art processor and concentrate on integration and applications.

This is precisely how Italy’s 64-qubit system came together: the QuantWare chip was paired with locally sourced cryogenics and control electronics, delivering Italy a sovereign quantum machine much faster than if they had waited for a tech giant’s black box.

We’re seeing similar interest in regions like the Middle East and Asia, where nations are investing in quantum computing programs and want hands-on capability – not just cloud access to someone else’s computer. Open architecture provides a pathway for them to build local competence (and pride) by assembling machines on their soil, with guidance from specialists. In our conversation, Rijlaarsdam acknowledged the geopolitical tightrope: quantum technology is strategic, and there are restrictions on working with certain countries, but the company views itself as a global supplier. “We continuously evaluate which geographies we can and want to operate in, given all the security constraints,” he noted, adding that they already have customers across Asia and the Middle East. For emerging quantum hubs (from Abu Dhabi to Tokyo to Toronto), having a menu of components to buy is extremely attractive – it means they can stand up a functional quantum computer in a couple of years, for perhaps single-digit millions of dollars, rather than embarking on a decade-long, open-ended research project.

To be clear, none of this implies that full-stack giants like IBM are out of the picture; rather, a parallel model is growing alongside. Industry giants still pursue vertically integrated designs (often citing tight integration as necessary for optimal performance). But even they are embracing modularity in some ways – IBM’s latest roadmaps, for example, involve modular quantum processors connected by high-speed links to scale beyond the limits of a single chip. The difference with QOA is who provides the pieces and how openly they’re shared. In the coming years, we might see a scenario akin to PCs vs. Mac: open-architecture quantum systems assembled from multivendor components on one side, and closed proprietary quantum machines on the other. Each approach has pros and cons, but if history is any guide, open ecosystems tend to spur faster adoption and innovation once standard interfaces take hold.

Breaking the Scaling Bottleneck: QuantWare’s VIO-40K Chip Architecture

One of the hardest engineering challenges in scaling quantum computers is getting control signals in and out of thousands of qubits. In present-day superconducting quantum chips (the technology used by IBM, Google, and QuantWare among others), each qubit requires multiple control lines – for example, microwave drive tones to flip the qubit state, flux bias lines to tune frequencies, and readout lines to measure outputs. On a 50-qubit chip, you might manage this with a few hundred microwires and coaxial lines wired to the chip perimeter. On a 1,000-qubit chip, however, you’d need thousands of microwave lines – a routing nightmare on a 2D plane. In fact, QuantWare estimates that in today’s designs, as much as 90% of the chip area gets eaten up by wiring and routing infrastructure, leaving only ~10% for the qubits themselves. This “I/O bottleneck” is a key reason superconducting chips have stalled in the low hundreds of qubits; beyond that, you simply can’t snake all the control lines across the chip without causing interference and consuming too much space.

QuantWare’s proposed solution – announced in late 2025 and codenamed VIO-40K – attacks this problem by going vertical. Instead of spreading control wiring alongside the qubits on the same layer, VIO-40K uses a 3D stack of chiplets to deliver signals from above to the qubit layer. In essence, the qubit chip sits at the bottom of this vertical stack of chips that carry the signal to the qubit plane, but also contain integrated components like filtering, amplification, and interconnections. The vertical chip stack allows control lines to come in through the qubit plane rather than sprawling outwards. By eliminating the “fan-out” of wiring on a single layer, a VIO-40K module can accommodate an enormous number of qubits without running out of real estate or introducing excessive cross-talk. The design supports up to 40,000 I/O connections (hence the name VIO-40K), which theoretically enables controlling 10,000 qubits with about four lines per qubit (for drive, flux, readout, etc.). Those control lines are delivered via ultra-high-fidelity chip-to-chip bonds within the stack, which the company says are as good as (or even better than) on-chip connections. In our interview, Rijlaarsdam noted that both their internal tests and results from others have shown negligible fidelity loss across chiplet boundaries – meaning two qubits on adjacent chiplets can interact as if they were on the same monolithic die. If done right, a processor built from tiled chiplets behaves like one large chip electrically and quantum-mechanically, but is much easier to manufacture (since each tile is smaller) and easier to wire up (since the wiring is partitioned into layers).
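
The I/O arithmetic behind the name is easy to check. The sketch below takes the 40,000-connection figure from the announcement and treats lines-per-qubit as an average; the function name and the alternative values of 3 and 5 lines are assumptions added for comparison.

```python
def max_qubits(io_connections: int, lines_per_qubit: int) -> int:
    """Number of qubits that a fixed I/O budget can serve."""
    if lines_per_qubit <= 0:
        raise ValueError("lines_per_qubit must be positive")
    return io_connections // lines_per_qubit


VIO_40K_IO = 40_000  # I/O connections quoted for VIO-40K

# 4 lines per qubit is the figure in the text; 3 and 5 are for comparison only.
for lines in (3, 4, 5):
    print(f"{lines} lines/qubit -> {max_qubits(VIO_40K_IO, lines):,} qubits")
```

The qubit count is quite sensitive to the per-qubit line budget, which is one reason the wiring scheme matters as much as the raw connection count.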

One small-but-telling detail from the interview: Rijlaarsdam described the vertical stack as a sequence of routing layers alternating with “spacer chips.” The claim is that this creates unusually well-shielded signal channels – a very different electromagnetic environment than a crowded 2D plane where lines run close together and cross.

The implication (and the bet) is counterintuitive: if the stack is engineered correctly, fidelity doesn’t have to degrade as the architecture becomes more complex; it could improve because the stack reduces crosstalk and isolates signal paths more cleanly than traditional planar layouts.

“The whole premise is that the connections between these chiplets are as good as on-chip connections,” Rijlaarsdam emphasized. “When made correctly, fidelity should be higher [than a conventional 2D design], because you have less crosstalk… signals are super well shielded in the vertical channels, compared to a planar design where everything is criss-crossing.” In other words, by adding the third dimension, the team actually expects to improve qubit coherence and gate fidelity, not just maintain it. This addresses a common skepticism in quantum circles: it’s hard enough to get, say, 99.9% fidelity on a single chip – won’t adding more components make things worse? If each junction between chiplets introduced even a small error or loss, those would compound. QuantWare’s bet is that advances in 3D packaging (much borrowed from classical 3D integration techniques) can make those interfaces essentially transparent to the qubits.

The modular nature of the design also has a big practical benefit: yield. Rather than fabricating one enormous 10,000-qubit die (which would almost certainly have zero yield – one defect among thousands of Josephson junctions can kill the whole chip), you fabricate many smaller chiplets and assemble them. If one chiplet in a batch is bad, you discard or rework that piece, but the others can still form a complete unit. This divide-and-conquer strategy is the only plausible way to reach thousands or millions of qubits in a superconducting platform without yield issues, as Rijlaarsdam pointed out. “The only way you’re going to make a device that size is by making a large chip out of many smaller ones that you can make with high yield, and then your connections should have high enough yield to tie them together,” he said. This is exactly how modern CPUs and GPUs have scaled to billions of transistors – by using chiplets, multi-die modules, and advanced packaging, rather than monolithic “mega-chips.” QuantWare is essentially translating that playbook to quantum.
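
The yield argument can be sketched with a deliberately crude model: every Josephson junction works independently with the same probability, and a single bad junction kills the die. The per-junction yield, the junctions-per-qubit count, and the 100-qubit chiplet size below are all hypothetical, and chiplet-to-chiplet bond yield is left out entirely.

```python
import math


def die_yield(junction_yield: float, junctions: int) -> float:
    """Probability that every junction on a die works, assuming independent defects."""
    return junction_yield ** junctions


# Hypothetical inputs (not from QuantWare).
JUNCTION_YIELD = 0.9995   # chance that a single junction is good, assumed
JUNCTIONS_PER_QUBIT = 2   # assumed
TOTAL_QUBITS = 10_000
QUBITS_PER_CHIPLET = 100  # assumed tile size

monolithic = die_yield(JUNCTION_YIELD, TOTAL_QUBITS * JUNCTIONS_PER_QUBIT)
chiplet = die_yield(JUNCTION_YIELD, QUBITS_PER_CHIPLET * JUNCTIONS_PER_QUBIT)

# Expected number of chiplets to fabricate to end up with enough good ones.
chiplets_needed = TOTAL_QUBITS // QUBITS_PER_CHIPLET
chiplets_to_fabricate = math.ceil(chiplets_needed / chiplet)

print(f"monolithic 10,000-qubit die yield: {monolithic:.6f}")
print(f"100-qubit chiplet yield:           {chiplet:.4f}")
print(f"chiplets to fabricate for {chiplets_needed} good ones: {chiplets_to_fabricate}")
```

With these made-up inputs the monolithic die almost never comes out defect-free, while roughly nine in ten chiplets do. The exponential dependence on junction count is the point; the specific numbers are not.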

The challenge then shifts from qubit physics to complex engineering: designing and manufacturing the 3D stack reliably. The company appears to be well aware of this. They are investing heavily in automated assembly techniques and facilities (hence the KiloFab) to ensure that stacking thousands of chips with precision is feasible at scale. Rijlaarsdam noted that superconducting qubits, being relatively large (tens of micrometers in size), actually allow for looser fabrication tolerances than modern semiconductor chips: “Our tolerances are basically 1970s [semiconductor] technology,” he said, implying they don’t need cutting-edge EUV lithography or 3nm transistors to make this work. The heavy lifting is in the packaging and alignment – which is why the team has the largest number of engineers devoted to quantum chip packaging of perhaps any organization. “By far the majority of our team is focused on quantum processor architectures [and packaging]. We have, in my view (of course I would say that), the best and also objectively the largest team working on it,” Rijlaarsdam said. That focus, he contends, is a strategic differentiator: while big integrated quantum companies also have packaging groups, those tend to be smaller or less central compared to their algorithm or software efforts. QuantWare, by contrast, is staking its whole business on solving the hardware scaling problem. As my previous analysis put it, this kind of innovation “lives in the unglamorous layer of quantum engineering that usually gets skipped in the first wave of headlines” – wiring density, chip packaging, cryogenic heat loads, etc. Yet these are precisely the issues that could make the difference between an “impressive lab device” and a truly usable quantum computer.

So what exactly has QuantWare announced and when will we see results? The VIO-40K architecture was unveiled in December 2025 with claims that it “enables 10,000-qubit processors with 40,000 I/O lines” and that first devices will ship to customers by 2028. In tandem, the company is building the KiloFab to produce the required 3D-stacked chips in volume by that date. Effectively, 2028 is the target for a fully realized 10k-qubit QPU in one cryostat. Achieving this means not just stacking chips but also handling the immense heat load and wiring at the fridge level. 10,000 qubits with 40,000 connections will generate significantly more heat (due to filtering, attenuation, etc.) and require far more physical wiring than today’s fridges typically support. But apparently that, too, is in hand: “Up to and including 10,000 qubits, today’s equipment will work,” Rijlaarsdam told me, hinting that their cryogenic partners have solutions on the way. [1] If true, that means by the time the chips are ready, the refrigeration technology will also be ready to house them – an important coordination in quantum engineering. The aim is to fit a 10k-qubit processor in a single dilution refrigerator, rather than requiring a network of many fridges (which some competing approaches would need). One big machine is easier (and likely cheaper per qubit) to operate than ten smaller ones, “if you can make it work,” as I wrote in my analysis. QuantWare is essentially saying: we’ll give you the blueprint to build a KiloQubit quantum computer as one integrated unit, so you don’t need a “fridge farm” or exotic quantum interconnects between systems.

It’s worth noting that QuantWare’s announcement was bold – and the company knows it. Until now, they had been relatively quiet in public about long-term plans, to avoid adding to the hype that often plagues quantum tech. “In December it was a bit of an experiment for us – the first time we were talking boldly publicly about what we’re working on,” Rijlaarsdam said. The gamble seems to be about finding the right balance between boldness and credibility. On one hand, the industry as a whole has been skewing more optimistic lately (some might say hyping), and a small startup could get ignored if it doesn’t make big claims. On the other hand, over-promising is dangerous in a field where technical realities eventually catch up. QuantWare tried to keep its claims grounded in engineering, not magic. Rather than saying “we have 10,000 qubits now,” they framed VIO-40K as an architecture – a plan to build such processors – and gave a concrete timeline (2028) along with evidence of each piece (patents on the vertical routing, a new fab under construction, partner relationships for other parts of the stack). “At least those things are all verifiable,” Rijlaarsdam noted. Indeed, outsiders can visit the Delft site when KiloFab opens, or track whether a 10k QPU gets delivered in a few years. By making a measurable promise, QuantWare puts pressure on itself to execute, but also sets a marker that could attract talent and collaborators who see that vision as credible. As I previously commented, QuantWare’s bold vision “represents a maturation of the quantum computing industry… a shift from one-off laboratory feats toward semi-standardized manufacturing and scalable architectures.” In other words, it’s the kind of step you’d expect as the field grows up.

Not Q-Day Yet: Keeping 10,000 Qubits in Perspective

Whenever a headline trumpets a big qubit number, it inevitably raises the question, especially among the readers of PostQuantum.com: does this mean we can break RSA encryption now? Let’s address that head-on – no, a 10,000-qubit chip in 2028 is not going to instantly crack the world’s cryptography, and QuantWare’s team is quick to acknowledge that. Those 10,000 are physical qubits, which in the near term will still be noisy. The whole point of reaching such high qubit counts is to run quantum error correction, which devours physical qubits to produce a much smaller number of stable logical qubits. The industry rule of thumb is that you might need hundreds or thousands of physical qubits to get one error-corrected logical qubit, depending on error rates and codes. In our interview, Rijlaarsdam estimated that a 10k-qubit device could yield on the order of “10 to 100 logical qubits,” though he cautioned that the exact number depends on many factors (coherence times, gate fidelities, the error correction code used, etc.). He paired that estimate with an infrastructure datapoint that’s easy to overlook: on the order of 200 kW of power for such a setup (not a desktop machine – closer to small data-center territory). It’s a useful reminder that “how many qubits?” is only one axis; power, cooling, and control complexity increasingly define what “scaling” really means. The logical-qubit range sounds modest, and it is – but it’s also extremely valuable. Having tens of logical qubits would allow experiments with true fault-tolerant computation for the first time in history. Researchers could run small algorithms to test error-corrected circuit depth, explore logical qubit connectivity, and maybe even perform some basic computations that surpass what uncorrected “NISQ” devices can do.
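
The physical-to-logical overhead can be made concrete with a back-of-the-envelope sketch. It assumes a rotated surface code, where one logical qubit at code distance d occupies 2d² − 1 physical qubits (17 at distance 3). The interview does not say which code or distance Rijlaarsdam had in mind, and the function names are illustrative. Routing space, magic-state factories, and defective qubits are ignored, so real counts would be lower.

```python
def physical_per_logical(distance: int) -> int:
    """Rotated surface code patch: d*d data qubits plus d*d - 1 measurement qubits."""
    if distance < 3 or distance % 2 == 0:
        raise ValueError("distance must be an odd integer >= 3")
    return 2 * distance * distance - 1


def logical_qubits(physical_qubits: int, distance: int) -> int:
    """Upper bound on logical qubits, ignoring routing and distillation overhead."""
    return physical_qubits // physical_per_logical(distance)


PHYSICAL_QUBITS = 10_000  # the VIO-40K target discussed above

for d in (3, 5, 7, 9, 11, 15, 21):
    print(f"d={d:2d}: {physical_per_logical(d):4d} physical per logical, "
          f"at most {logical_qubits(PHYSICAL_QUBITS, d):3d} logical qubits")
```

Under these assumptions, code distances of roughly 9 to 21 give between about 60 and 11 logical qubits, which is consistent with the quoted range. Which distance is actually needed depends on the physical error rates the hardware achieves.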

For comparison, IBM has openly stated a goal of ~200 logical qubits by 2029 as part of its roadmap to full fault tolerance. IBM’s target is tied to a concept of a machine (codenamed Quantum Starling in their plan) that can run 100 million quantum gates on those 200 logical qubits. In other words, by the end of the decade IBM hopes to demonstrate a medium-sized error-corrected quantum computer – still probably not enough to break RSA, which might require thousands of logical qubits, but certainly enough to do some useful algorithms. IonQ, a leader in trapped-ion quantum tech, likewise projects scaling to 2,000,000 physical qubits by 2030, which they claim could produce on the order of 40,000-80,000 logical qubits in their architecture. (IonQ’s approach relies on networking ion trap modules with photonic links, and they’ve laid out interim steps like ~10,000 physical qubits on a chip by 2027 and 20,000 via two chips in 2028.) And PsiQuantum, the photonic quantum startup, has consistently talked about needing a million physical qubits to achieve a fault-tolerant machine, and has plans for a million-qubit silicon-photonics based quantum computer within the next decade (they recently broke ground on a large facility in Illinois for this purpose).

Placed against that landscape, QuantWare’s “10k by 2028” ambition is not an outlier at all – it slots into a broader industry consensus that the late 2020s will be the dawn of small-scale fault-tolerant prototypes. The difference is in emphasis: QuantWare is addressing the hardware scaling and packaging problem for superconducting qubits (how to physically get to thousands on one device), whereas IBM is emphasizing an end-to-end logical qubit goal (with heavy focus on software and error correction protocols), IonQ is emphasizing total qubit count and modular networking, and PsiQuantum is focusing on manufacturing techniques for huge photonic systems. All are valid pieces of the puzzle, and in fact all these approaches might converge – e.g. a 10k-qubit QuantWare processor could be one module within an IBM logical-qubit architecture or part of an IonQ-like network, if standards allow. The key point is that everyone in the race sees the next 4-5 years as critical for scaling up, and each is attacking a different bottleneck. QuantWare’s focus on I/O and fabrication capacity complements others’ focus on error-correcting codes or photonic interconnects. No single breakthrough will make quantum computing useful overnight; it’s about pushing all the metrics (qubit quantity, quality, connectivity, speed, etc.) to the levels needed for practical algorithms.

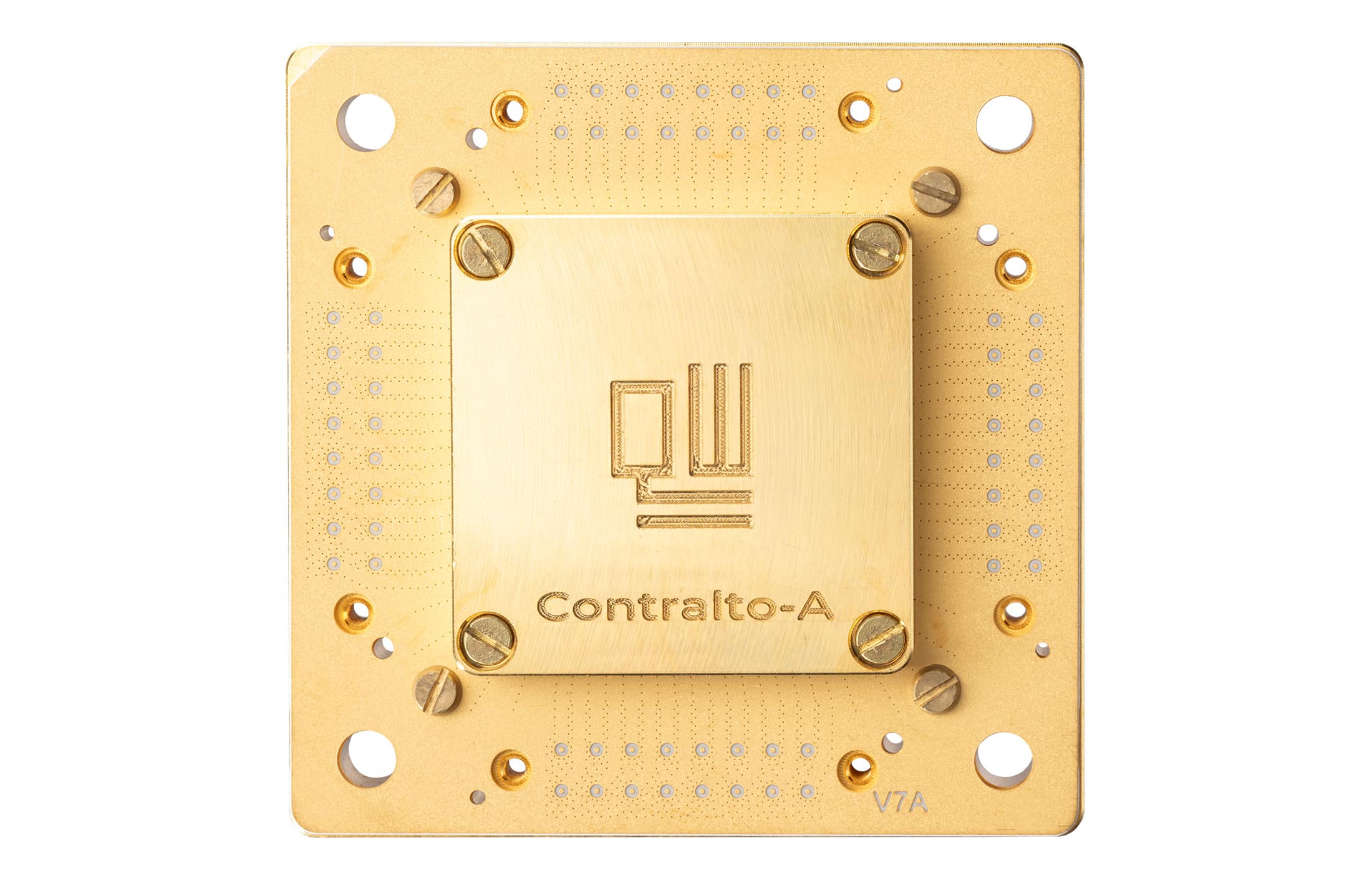

QuantWare itself is very transparent that 10,000 qubits is one step on a longer journey, not the final destination. On their website and in internal roadmaps, they outline a stepwise progression: first a 17-qubit error-correction test chip (Contralto-A17, optimized for a distance-3 surface code) which is shipping now; next a 41-qubit chip (Tenor-A41, planned in 2026) to demonstrate logical qubit operations like a logical two-qubit gate; then around 2027 a ~250-qubit device (Baritone-A250) aimed at running small logical circuits and more complex QEC routines; and only after those milestones comes the 10k-qubit “Bass” processor in 2028, envisioned to tackle the first algorithms beyond the reach of uncorrected quantum machines. In parallel, they have a “D-Line” of processors for NISQ applications and integrators, which goes from a tiny 5-qubit starter chip to a 64-qubit device in 2026 (of which the first devices have been shipped already), a 450-qubit device in 2027 and even a projected ~18,000-qubit module by 2028 (focused on analog or hybrid quantum computing without full error correction). The details aren’t as important as the philosophy: each generation is meant to reach a specific capability target – not just a qubit count. As I noted previously, “the meaningful milestones are not just ‘more qubits,’ but hitting certain functional targets – real-time QEC, then logical qubits, then logical gates, then logical circuits, and only then full-blown algorithms”. QuantWare’s roadmap reflects that understanding, and even its 10k-qubit goal is framed as “the beginning of solving new problems, not as ‘we solved quantum computing’”.

This perspective resonated during our interview as well. Rijlaarsdam was careful not to oversell the 10k figure in terms of immediate impact. “Whether you get a useful fidelity [out of 10k qubits] is up to the rest of the stack – the control electronics, the software – to implement error correction,” he pointed out. QuantWare can provide the hardware with certain coherence times and connection fidelities, but turning physical qubits into powerful logical qubits is a shared responsibility. This admission underscores that the industry is still figuring out the division of labor: who guarantees the error rate? If something doesn’t work, is it the qubit maker’s fault or the calibrator’s fault? Today, it’s a bit of finger-pointing. In time, as reference QOA systems get built, best practices will emerge and everyone will know what piece they need to perfect. For now, the takeaway is that a 10,000-qubit device in 2028 would be a major enabler – a platform to try large-scale error correction and algorithms – but it won’t magically solve cryptography or outperform supercomputers on all problems on day one. It moves the frontier, potentially achieving the first fault-tolerant operations, and that is hugely significant even if it’s not “Q-Day.” In fact, as I wrote, QuantWare’s news is “a legitimate data point in an industry-wide convergence toward demonstrating fault-tolerance by the end of this decade… If anything, it reinforces that everyone in the race sees the next 4-5 years as critical for scaling”. Rather than heralding instant quantum supremacy, the announcement adds momentum to a collective push: the race to the first true quantum computers is on, and it’s not science fiction – it’s an engineering marathon.

Rijlaarsdam put it bluntly: a supplier can promise coherence time (if installed correctly), but “fidelity” is often a system outcome – it depends on calibration quality, control electronics, software, and operator skill. That creates an accountability gap that feels familiar in complex tech stacks: when performance misses the target, everyone can plausibly blame someone else.

His proposed antidote is also borrowed from mature hardware industries: reference setups. If vendors can publish “if you assemble A+B+C and operate it competently, you should see X,” it becomes much easier to define responsibilities, compare components, and industrialize integration.

And even if the error-correction math works, scaling also has a mundane enemy: the economics of everything that connects to the qubits.

The interview put concrete numbers on this “unsexy” bottleneck, starting with cables. Rijlaarsdam noted that a single superconducting line can cost on the order of €1,500 today – which is manageable at current scales but becomes absurd at “million-qubit” ambition levels. His point wasn’t that the physics is impossible, but that the bill of materials becomes the story unless the supply chain shifts from bespoke to industrial. He argued the material cost of these cables is closer to “cents,” and that the gap between cents and thousands of euros is largely a function of yield, labor, and low volume – the kind of curve that can move dramatically if the market actually orders at scale.

He made a similar argument for control electronics. Cryogenic control may be “at least a decade away” in his view, but room-temperature control racks are physically tractable – he suggested even extremely large systems could fit in a surprisingly modest footprint. The real barrier is cost: today’s control stacks are expensive because many channels are built around FPGAs and low-volume instrumentation economics. His thesis is that once volumes are high enough to justify ASICs, the cost curve collapses – and suddenly “how much compute per dollar” becomes the relevant metric, not whether the hardware is theoretically buildable.

These figures were presented as directional estimates to illustrate scaling economics rather than as a formal cost model.
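A back-of-the-envelope sketch makes the cabling argument concrete. Only the roughly €1,500 per line figure comes from the interview. The number of lines per qubit and the "industrialized" price per line are hypothetical placeholders chosen purely for illustration, and the helper name is an assumption as well.

```python
COST_PER_LINE_TODAY_EUR = 1_500.0  # order-of-magnitude figure from the interview

# Hypothetical placeholders, not figures from the interview:
ASSUMED_LINES_PER_QUBIT = 1.0        # real systems may need more or fewer
ASSUMED_INDUSTRIAL_COST_EUR = 15.0   # an arbitrary 100x reduction


def cabling_cost_eur(qubits: int, lines_per_qubit: float,
                     cost_per_line_eur: float) -> float:
    """Total cabling cost as a simple product; no volume discounts modeled."""
    return qubits * lines_per_qubit * cost_per_line_eur


if __name__ == "__main__":
    for qubits in (100, 10_000, 1_000_000):
        today = cabling_cost_eur(qubits, ASSUMED_LINES_PER_QUBIT,
                                 COST_PER_LINE_TODAY_EUR)
        industrial = cabling_cost_eur(qubits, ASSUMED_LINES_PER_QUBIT,
                                      ASSUMED_INDUSTRIAL_COST_EUR)
        print(f"{qubits:>9,} qubits: about EUR {today:,.0f} today, "
              f"about EUR {industrial:,.0f} at the assumed industrial price")
```

Under these assumptions, cabling alone runs to about €15 million at 10,000 qubits and €1.5 billion at a million qubits at today's price, which illustrates why the per-line cost matters more than whether the wiring is physically possible.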

Outlook: Engineering the Quantum Future, Openly

Talking to QuantWare’s CEO and surveying the broader landscape, one can’t help but sense that quantum computing is at an inflection point. The easy days of lab demonstrations are behind us; ahead lie years of grinding engineering work to turn fragile prototypes into reliable, large-scale machines. Specialized startups, big tech firms, and national programs are all working on different pieces of this puzzle. The emergence of Quantum Open Architecture is a sign of the field’s maturation – a recognition that no one player will build the quantum future single-handedly, and that progress will come faster through collaboration, standardization, and yes, competition to provide the best “building blocks.”

QuantWare’s approach – betting on modular hardware and selling it to all comers – encapsulates both the promise and the challenge of this next phase. If they succeed in delivering a 10,000-qubit processor by 2028 that works as advertised, it could fast-forward the entire industry by giving many groups access to a piece of hardware that was previously unimaginable. We could see a flourishing of experiments and applications on machines built from such processors, much like the PC clone boom of the 1980s unlocked creativity with a standard platform. It would also validate the open architecture model: showing that a small company focusing on one layer can out-innovate the vertically integrated giants in that niche, and in turn empower others to innovate on top of their achievement. “Through the Quantum Open Architecture, we empower the whole ecosystem to build upon our processors,” Rijlaarsdam said, reflecting on the Naples deployment, “This milestone shows the effectiveness of that approach: [they] are now operating a quantum computer that is beyond most of the systems built by closed architecture players.”

However, execution is everything. As the saying goes, “hardware is hard,” and scaling quantum hardware is extraordinarily hard. Significant challenges remain on the road to 10,000 qubits: maintaining uniform qubit quality across a huge device, minimizing crosstalk and errors, automating the assembly of thousands of delicate components, and developing control systems that can handle the data-crunching and feedback for error correction in real-time. And of course, there’s the business challenge – can a startup marshal the necessary capital, talent, and partnerships to pull this off before incumbents catch up? QuantWare is roughly doubling its team to over 150 people, indicating the scale of effort in the coming 1-2 years (for context, that’s approaching the size of some corporate quantum hardware teams). The company’s strategy of multiple revenue streams – selling off-the-shelf chips now, doing joint projects with early adopters, and offering foundry services to strategic partners – is meant to keep money coming in and knowledge flowing as they build the KiloFab and the 10k-qubit modules. It’s a delicate balance of staying afloat today while building for tomorrow’s moonshot. Rijlaarsdam put it succinctly: “The key problems are solved; now it’s an execution game… of course things always come up, but by far the majority is an engineering challenge and there’s very little science left – at least up to these sizes.” In his view, reaching 10k qubits is mostly about scaling known techniques and managing complexity, not waiting on new physics. That’s a confident claim – and perhaps a necessary mindset to take on such an audacious goal.

The next few years will reveal whether the open architecture approach truly gives quantum computing the boost its proponents hope. I’ll be watching for interim milestones: perhaps a 100+ qubit chiplet demonstration in 2026, early prototypes of the 3D stacked modules, and other QOA-based systems (like the Colorado initiative) coming online and showing what they can do. If multiple independent groups around the world can assemble 50-100 qubit QOA machines and start running useful experiments, it will validate the model and attract more contributors to the ecosystem. Success will breed more success: as more institutions deploy open-architecture systems, demand for specialized components will increase, driving economies of scale and further cost reductions. It could create a positive feedback loop, much as the PC industry saw an explosion of compatible peripherals and software once the market grew. On the other hand, if the engineering proves tougher than expected (e.g., if yields at KiloFab are low or the 3D integration introduces too much overhead), then hype could turn to disillusionment, and the pendulum might swing back to more tightly controlled R&D at a few big companies.

One thing is certain: quantum computing is no longer just a physics experiment – it’s becoming an engineering project and an industry, with all the messiness and opportunity that implies. The drive toward modularity and open standards is a sign of that maturation. It echoes the history of other technologies – openness and interoperability often unleash creativity and broad growth, whereas closed approaches can initially leap ahead but eventually hit limits. The words of QuantWare’s CEO ring optimistic but grounded: “It’s really important to balance boldness with credibility in this space.” Bold goals like 10,000 qubits are motivating and show what’s possible; credible execution will determine if we get there on time and in one piece. If the QOA vision holds, by the end of this decade we might see universities, startups, and countries running homegrown quantum computers built from globally sourced, standardized parts – an outcome that spreads the technology far and wide. And somewhere in Delft, a little “Intel of quantum” could be quietly supplying the brains for all of those machines, content to let others shine in delivering the final products. That, at its heart, is the promise of quantum open architecture: do what you do best, and cooperate on the rest, so that together we can achieve what none could do alone.

Editorial disclosure: PostQuantum.com does not accept sponsored content or paid editorial. QuantWare did not sponsor this article and provided no compensation or other consideration. I chose to cover the company because of its relevance to open quantum architecture and systems integration; for transparency, my firm Applied Quantum is also focused on systems integration.
