The Infrastructure Beneath the Qubit: Four Enabling Technologies That Will Determine Which Quantum Computers Actually Scale

Sometime in 2025, a quantum engineer at a European research lab ran into a problem that had nothing to do with qubit physics. Her team’s dilution refrigerator had developed a leak. The replacement lead time was seven months. The helium-3 needed to recharge the system was backordered. The control electronics they’d planned to upgrade were stuck in customs, delayed by new export screening procedures. And the FPGA boards at the heart of their error correction decoder were allocated to a defense contractor with higher priority.

The qubits were fine. The infrastructure to operate them was broken.

This scenario – composited from real experiences shared at the 2025 Silicon Quantum Electronics Workshop and the American Physical Society meeting – captures a truth that no quantum computing press release will tell you: the rate of progress in quantum computing is not limited only by the cleverness of qubit designs. It is also limited by the availability, performance, and scalability of the enabling technologies that surround them. The cryogenics that cool them. The electronics that control them. The decoders that correct their errors. The materials that make all of it possible.

If you’ve followed PostQuantum.com’s modality-by-modality mapping of the quantum supply chain – superconducting, trapped-ion, photonic, neutral-atom, and silicon spin – you’ve seen how each modality draws from a distinct supplier ecosystem. Superconducting needs dilution refrigerators and microwave electronics. Trapped ions need precision lasers and vacuum systems. Neutral atoms need spatial light modulators and high-power laser sources. Silicon spin needs isotopically purified substrates and CMOS foundries. Photonics needs single-photon detectors and silicon photonics fabs.

But beneath these modality-specific layers sits a shared infrastructure – technologies that every quantum computer needs, regardless of how its qubits are encoded. This article maps four of the most critical: the control electronics that orchestrate computation, the cryogenic systems that maintain quantum coherence, the error correction hardware that bridges the gap between noisy physical qubits and reliable logical ones, and the critical materials whose supply chains create chokepoints for the entire industry.

These are the layers where supply chain constraints become gating factors, where a handful of companies hold disproportionate leverage, and where investments made today will determine which quantum modalities can actually scale – and which will remain trapped in the lab.

This analysis examines technology and market dynamics. It does not constitute financial or investment advice.

I. Control Electronics: The Brain That Every Quantum Computer Shares

Every qubit, regardless of modality, must be told what to do. A microwave pulse shapes a superconducting transmon gate. A laser pulse drives a trapped-ion transition. A voltage pulse adjusts the exchange coupling between silicon spin qubits. An RF signal controls an acousto-optic deflector steering light to neutral atoms. In every case, the instruction originates in a piece of classical electronics – an arbitrary waveform generator, a signal processor, an FPGA-based controller – that converts a quantum algorithm into the precise analog signals that manipulate physical qubits.

This layer – often called quantum control electronics or quantum instrumentation – is simultaneously the most modality-agnostic and most strategically important enabling technology in the quantum stack. A company that builds control electronics for superconducting qubits can, with firmware changes and RF front-end modifications, serve trapped-ion, spin, or neutral-atom systems. This cross-modality flexibility makes control electronics one of the most investable layers in quantum computing: the customer base grows with every quantum computer deployed, regardless of which modality wins.

The Competitive Landscape

The quantum control electronics market was estimated at roughly $83.5 million in 2025 for superconducting systems alone, projected to reach $211 million by 2034 – and the total addressable market across all modalities is substantially larger. The market is growing at a compound annual rate exceeding 17%, driven by every new quantum computer installation requiring a control stack.
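As a quick sanity check on figures like these, the growth rate implied by two endpoint values can be computed directly. The sketch below uses only the superconducting-only endpoints quoted above; the function name is illustrative. Those two endpoints by themselves imply roughly 11% per year, so the 17%-plus rate presumably reflects a broader market scope or a different baseline than the superconducting-only estimate.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Endpoints quoted in the text (superconducting systems only), in millions of USD.
rate = implied_cagr(start_value=83.5, end_value=211.0, years=2034 - 2025)
print(f"Implied CAGR: {rate:.1%}")
```

When comparing market forecasts, it helps to run this check before treating a headline growth rate and a pair of endpoint values as describing the same market.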

Four companies dominate the Western market, each with a distinct strategic position:

Zurich Instruments (Switzerland), acquired by test-and-measurement giant Rohde & Schwarz in 2021, launched its next-generation ZQCS Quantum Control System in March 2026. Engineered for the “logical qubit era,” the ZQCS supports over 1,000 channels per rack with microsecond-scale feedback for quantum error correction. Its modular AdvancedTCA architecture and direct-RF front end eliminate external mixers and reduce signal chain complexity. Zurich Instruments’ position is bolstered by the Rohde & Schwarz parent – a $3 billion test-and-measurement company with global service infrastructure, defense relationships, and deep RF engineering expertise. The ZQCS was selected for Fujitsu and RIKEN’s 256-qubit superconducting system in Japan, among other deployments.

Qblox (Netherlands), a QuTech spinout based in Delft, has emerged as the control electronics standard for Quantum Open Architecture (QOA) systems. Qblox supplied the control stack for the Q-PAC system – the first commercially deployable QOA quantum computer in the United States – and was selected by the DOE and Fermilab to manufacture and distribute the QICK quantum control platform for U.S. research institutions. With approximately $32 million in total funding, 146 employees, and partnerships spanning Bluefors (for cryogenic integration), Q-CTRL (for autonomous calibration), and QuantWare (for the Quantum Utility Block), Qblox is building the “picks and shovels” business model that benefits regardless of which QPU maker wins.

Quantum Machines (Israel) develops the OPX+ platform, which serves as the orchestration backbone for Israel’s Quantum Computing Center (IQCC) and installations worldwide. Quantum Machines has positioned itself not merely as an electronics vendor but as a quantum computing infrastructure company – offering the hardware, software, and cloud platform for operating quantum computers. The company has raised over $96 million and serves customers across superconducting, trapped-ion, and neutral-atom modalities.

Keysight Technologies (U.S., NYSE: KEYS) is the largest publicly traded company with significant quantum control exposure. With a market capitalization exceeding $30 billion, Keysight brings institutional scale, a global sales force, and decades of RF and microwave measurement expertise. Its Quantum Control System was selected for Fujitsu and RIKEN’s 256-qubit system, and the company has signed multi-year agreements with Singapore’s quantum ecosystem. For investors seeking quantum exposure through established public companies, Keysight offers the most direct control-electronics play.

Additional players include Tabor Electronics (Israel), Swabian Instruments (Germany), and several Chinese companies – QuantumCTek, Chengdu ZWDX, and Origin Quantum’s control division – that serve China’s rapidly growing domestic quantum ecosystem.

The Software Layer: Control Beyond Hardware

Hardware generates signals. Software decides what signals to generate. A parallel ecosystem of quantum control software companies has emerged to optimize qubit performance through algorithmic calibration, error suppression, and automated tuning:

Q-CTRL (Australia) develops AI-driven firmware that sits between the quantum algorithm and the control hardware, suppressing noise and optimizing gate performance across multiple hardware platforms. Q-CTRL’s Boulder Opal product integrates with control stacks from Quantum Machines, Qblox, Zurich Instruments, Tabor, and Keysight – making it a modality-agnostic performance layer. The company recently partnered with QuantWare and Qblox to launch the Quantum Utility Block.

QuantrolOx (UK/Finland) uses machine learning for automated qubit tuning – a challenge that becomes exponentially more complex as qubit counts grow, particularly for gate-defined quantum dots where each qubit requires individual voltage calibration.

Riverlane (UK), while primarily known for quantum error correction (covered in Section III), also provides control-layer software through its Deltakit platform.

Cryogenic Control: The Next Frontier

The most consequential development in quantum control electronics is not happening at room temperature. It’s happening inside the cryostat.

Every cable that carries a control signal from a room-temperature electronics rack to a millikelvin quantum processor adds heat to the cryogenic system. At current qubit counts (dozens to hundreds), this is manageable. At thousands or millions of qubits, it becomes physically impossible – the heat load from the wiring overwhelms the refrigerator’s cooling capacity. This is the wiring bottleneck, and it is arguably the single most important scaling constraint across all gate-based quantum computing modalities.
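The wiring bottleneck can be made concrete with a back-of-the-envelope heat budget. The sketch below is illustrative only: the cooling power at the coldest stage, the heat leaked per control line, and the number of lines per qubit are all assumed placeholder values, not measurements from any specific refrigerator or cable.

```python
def stage_heat_load_nw(n_qubits: int, heat_per_line_nw: int, lines_per_qubit: int) -> int:
    """Total heat delivered to the coldest stage by control wiring, in nanowatts."""
    return n_qubits * lines_per_qubit * heat_per_line_nw


def max_supported_qubits(cooling_power_nw: int, heat_per_line_nw: int, lines_per_qubit: int) -> int:
    """Largest qubit count whose wiring heat load fits within the cooling budget."""
    return cooling_power_nw // (heat_per_line_nw * lines_per_qubit)


# Assumed, illustrative values (not from the article or any datasheet).
COOLING_POWER_NW = 20_000   # assume 20 microwatts available at the coldest stage
HEAT_PER_LINE_NW = 10       # assume 10 nanowatts leaked per control line
LINES_PER_QUBIT = 2         # assume two dedicated lines per qubit

for n_qubits in (100, 1_000, 1_000_000):
    load = stage_heat_load_nw(n_qubits, HEAT_PER_LINE_NW, LINES_PER_QUBIT)
    fits = load <= COOLING_POWER_NW
    print(f"{n_qubits:>9} qubits: {load:>12} nW, within budget: {fits}")

print("Budget exhausted at about",
      max_supported_qubits(COOLING_POWER_NW, HEAT_PER_LINE_NW, LINES_PER_QUBIT),
      "qubits")
```

Under these assumptions the budget runs out around a thousand qubits. The real numbers vary widely with cable type, attenuation, and multiplexing, but the linear scaling of heat load with line count is the point.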

The solution is to move control electronics from room temperature into the cryostat – either to an intermediate temperature stage (1–4 K) or, ambitiously, to the millikelvin stage alongside the qubits. This cryo-CMOS and cryogenic digital logic approach is being pursued across multiple fronts:

Intel’s Horse Ridge chips operate at 4 K, generating qubit control signals from inside the cryostat using Intel’s 22-nanometer FinFET process, and its Pando Tree chip pushes signal routing down to the millikelvin stage. SEEQC’s Single Flux Quantum (SFQ) technology uses superconducting digital logic at millikelvin temperatures, potentially eliminating most external wiring. SEEQC’s partnership with Taiwan’s ITRI for SFQ chip manufacturing adds a semiconductor-industry dimension. SemiQon (Finland) has developed transistors specifically optimized for cryogenic operation, consuming 0.1% of the power of conventional transistors at sub-kelvin temperatures. Quobly and CEA-Leti demonstrated an 18.5 μW/qubit cryo-CMOS readout circuit at ISSCC 2025, using FD-SOI technology to co-integrate classical control with silicon spin qubits.

The cryogenic control layer is where quantum computing’s modality-specific supply chains may converge. Whether the QPU is superconducting, spin-based, or even built on certain trapped-ion architectures, the classical electronics operating at cryogenic temperatures face the same physics: shifted threshold voltages, altered noise characteristics, and extreme thermal constraints. Companies that master cryo-CMOS design serve all of them.

The investor read: Control electronics is the most modality-agnostic investable layer in the quantum stack. The market grows with every quantum computer deployed. The competitive landscape includes both venture-backed pure plays (Qblox, Quantum Machines) and established test-and-measurement companies with quantum exposure (publicly traded Keysight at $30B+ market cap, and privately held Rohde & Schwarz via Zurich Instruments). The cryo-CMOS frontier represents the next wave of value creation – companies solving the wiring bottleneck unlock scaling for the entire industry. Watch for the convergence of semiconductor process expertise (Intel, SEEQC, SemiQon) with quantum system integration (Qblox, Bluefors) as the most promising partnerships.

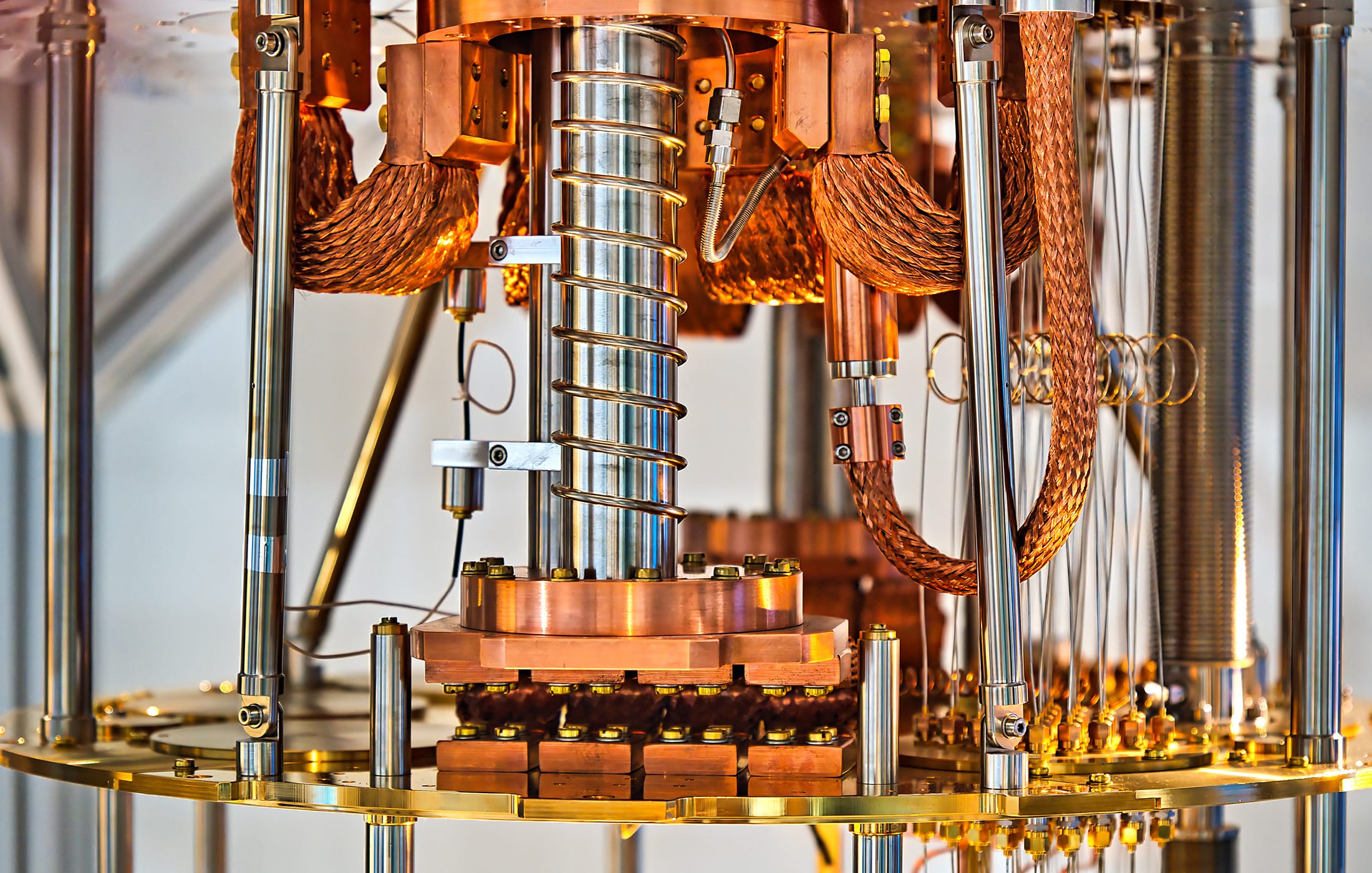

II. Cryogenics: The Thermal Foundation That Most Modalities Cannot Escape

Quantum coherence is fragile. Thermal energy – the random jiggling of atoms at room temperature – is its mortal enemy. The most direct way to protect a quantum state is to remove thermal energy by cooling the system to temperatures where thermal noise becomes negligible compared to the quantum energy scales being manipulated.

For superconducting qubits, this means approximately 10–15 millikelvin – more than one hundred times colder than interstellar space. For silicon spin qubits, typically 10 millikelvin to 1 kelvin, depending on the approach. For trapped ions, the atoms are laser-cooled to microkelvin temperatures, but the surrounding vacuum chamber sits at room temperature. For neutral atoms, similarly, laser cooling handles the atoms while the apparatus operates near ambient. For photonic systems, the single-photon detectors often require cryogenic cooling (around 1–2 K for superconducting nanowire detectors), even though the photonic circuits themselves may operate at room temperature.

The result: cryogenics is not universal across modalities, but it touches most of them – and for the two modalities with the largest installed base (superconducting and, increasingly, silicon spin), it is absolutely essential.

The Dilution Refrigerator Market

The dilution refrigerator is the defining piece of infrastructure for cryogenic quantum computing. As detailed in our superconducting supply chain analysis, the global market was valued at roughly $117–173 million in 2024 and is projected to reach $190–270 million by 2031. The market is extraordinarily concentrated:

Bluefors (Finland) is the dominant player for quantum applications, with over 1,500 dilution refrigerators delivered worldwide. Its KIDE Cryogenic Platform supports IBM’s Quantum System Two, and the company has expanded U.S. manufacturing in Syracuse, New York. Bluefors’ acquisition of Cryomech secured an upstream cryocooler dependency, and its Interlune helium-3 supply agreement (up to 10,000 liters per year from 2028–2037) signals the scale of anticipated demand.

Oxford Instruments (UK, LSE: OXIG) is a publicly traded alternative with a dilution refrigerator product line serving both quantum and condensed-matter physics markets.

Leiden Cryogenics (Netherlands), CryoConcept (France, now part of Air Liquide), and FormFactor (U.S.) round out the established Western suppliers.

The disruptors are arriving from three directions. Maybell Quantum (U.S.) claims three times the qubit capacity in one-tenth the footprint and supplied the cryogenic platform for the Q-PAC QOA system. Kiutra (Germany) eliminates helium-3 entirely through solid-state magnetic cooling, securing €13 million in 2025 specifically to address quantum supply chain resilience. And China has built out at least ten domestic dilution refrigerator manufacturers, part of a deliberate vertical integration strategy to eliminate Western dependencies.

The Helium Problem (Both Isotopes)

The quantum cryogenics supply chain rests on two helium isotopes with very different supply dynamics.

Helium-3 is the working fluid in dilution refrigerators. It does not exist in meaningful natural quantities on Earth; the primary source is tritium decay from nuclear weapons programs. Prices range from roughly $2,000 to $15,000 per liter, making it per-unit-mass more valuable than gold. Each dilution refrigerator uses dozens of liters; next-generation systems may require hundreds or thousands. The Bluefors-Interlune and Maybell-Interlune contracts for lunar helium-3 mining signal the severity of the supply constraint.
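The comparison with gold follows from simple unit conversion. The sketch below assumes the quoted prices are per liter of gas at standard temperature and pressure (the usual convention for helium-3, but an assumption here) and uses a round placeholder gold price, since the actual price fluctuates.

```python
HE3_MOLAR_MASS_G = 3.016          # grams per mole of helium-3
MOLAR_VOLUME_STP_L = 22.414       # liters per mole of ideal gas at 0 °C, 1 atm
GOLD_USD_PER_GRAM = 100.0         # assumed round placeholder, not a quoted price


def he3_usd_per_gram(usd_per_liter_stp: float) -> float:
    """Convert a helium-3 price per liter of gas (STP) into a price per gram."""
    grams_per_liter = HE3_MOLAR_MASS_G / MOLAR_VOLUME_STP_L  # about 0.135 g/L
    return usd_per_liter_stp / grams_per_liter


for price_per_liter in (2_000, 15_000):   # price range quoted in the text
    per_gram = he3_usd_per_gram(price_per_liter)
    ratio = per_gram / GOLD_USD_PER_GRAM
    print(f"${price_per_liter:,}/L is about ${per_gram:,.0f}/g, "
          f"roughly {ratio:,.0f}x the assumed gold price")
```

Because a liter of helium-3 gas weighs only a fraction of a gram, even the low end of the price range works out to well over a hundred times the assumed gold price per gram.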

Helium-4 is the commodity gas used in cryocoolers, MRI systems, semiconductor fabrication, and as a pre-coolant in dilution refrigerators. While more abundant than helium-3, helium-4 supply has experienced repeated shortages – driven by competition from healthcare (MRI), aerospace, and semiconductor industries. The U.S.-Qatar geopolitical relationship affects helium-4 supply because Qatar is a major producer; disruptions there could cascade through the broader cryogenic ecosystem.

Strategic responses are emerging across three fronts: lunar mining (Interlune), helium-3-free cooling (Kiutra’s magnetic refrigeration), and closed-loop recycling (helium recovery and recirculation systems).

Temperature Regimes and Modality Dependencies

A critical nuance often lost in supply chain analysis: not all cryogenic requirements are equal. The temperature at which a quantum system operates determines which cooling technology it needs, and the technologies at different temperature regimes have very different supply chain characteristics.

At 10–20 millikelvin (superconducting qubits, some spin qubits): Dilution refrigerators are essential. Market dominated by five companies. Helium-3 dependent. Six-to-nine-month lead times. Costs exceeding $500,000 per unit.

At 1–4 kelvin (some silicon spin qubits, photonic detectors, cryo-CMOS electronics): Gifford-McMahon or pulse tube cryocoolers suffice. Broader, more competitive supplier base. Established industrial supply chains from MRI and semiconductor applications.

At room temperature (trapped ions, neutral atoms, some photonic systems): No cryogenic dependency for the qubits themselves, though ancillary components (photon detectors, some electronics) may still need cooling.

This hierarchy has strategic implications. Modalities that can operate at 1 K or above (Diraq’s silicon spin qubits, for instance) decouple from the most constrained layer of the cryogenic supply chain. Modalities that require millikelvin operation (superconducting, most current spin systems) remain dependent on the dilution refrigerator oligopoly and its helium-3 supply chain.

The investor read: Cryogenics is the enabling technology with the most concentrated supplier base and the most constrained input materials. Bluefors is the single most critical company in the quantum computing supply chain – a disruption to Bluefors’ operations would halt superconducting quantum development globally within months, as the NATO Transatlantic Quantum Community study noted. For investors, the cryogenics layer offers exposure through publicly traded Oxford Instruments (LSE: OXIG) and through the private companies (Bluefors, Maybell, Kiutra) that are diversifying the supply base. The helium supply chain creates commodity-level risk that is unusual for a technology sector – analogous to how rare earth supply concentration affects the electric vehicle industry. Companies developing helium-free cooling (Kiutra) or helium recycling technologies represent supply chain resilience plays.

III. Quantum Error Correction Hardware: The Emerging Layer Nobody Can Afford to Ignore

There is a widespread misconception that quantum error correction (QEC) is a software problem. It is not. Or rather, it is not only a software problem. Real-time QEC – the kind required for fault-tolerant quantum computing – is a hardware problem of staggering proportions.

Here’s why. A quantum error-correcting code works by distributing quantum information across many physical qubits, then repeatedly measuring “syndrome” qubits to detect errors without disturbing the encoded data. For the surface code – the most widely studied QEC approach – each round of syndrome measurement must be decoded (interpreted and corrected) before the next round begins. In a superconducting quantum computer, that cycle time is roughly one microsecond. In that microsecond, the decoder must process the syndrome data from every patch of logical qubits, identify the most likely errors, and send correction instructions back to the control electronics.
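The idea of locating an error from syndrome measurements alone can be illustrated with a much simpler code than the surface code. The sketch below swaps in a three-bit classical repetition code as an analogy: parity checks between neighbors play the role of syndrome measurements, and a lookup table plays the role of the decoder. It is not a surface-code decoder, and all names are illustrative.

```python
from typing import List, Optional, Tuple


def measure_syndrome(bits: List[int]) -> Tuple[int, ...]:
    """Parity of each neighboring pair; reveals where bits disagree, not their values."""
    return tuple(bits[i] ^ bits[i + 1] for i in range(len(bits) - 1))


# Lookup decoder for the 3-bit code: syndrome -> index of the single flipped bit.
SYNDROME_TABLE = {
    (0, 0): None,  # no disagreement, assume no error
    (1, 0): 0,     # only the first pair disagrees -> bit 0 flipped
    (1, 1): 1,     # both pairs disagree -> middle bit flipped
    (0, 1): 2,     # only the second pair disagrees -> bit 2 flipped
}


def decode_and_correct(bits: List[int]) -> List[int]:
    """Apply the most likely single-bit correction implied by the syndrome."""
    flipped: Optional[int] = SYNDROME_TABLE[measure_syndrome(bits)]
    corrected = list(bits)
    if flipped is not None:
        corrected[flipped] ^= 1
    return corrected


noisy = [1, 0, 1]                      # logical 1 (1, 1, 1) with the middle bit flipped
print(measure_syndrome(noisy))         # syndrome identifies the error location
print(decode_and_correct(noisy))       # correction restores the codeword
```

A real-time surface-code decoder faces the same task on a two-dimensional lattice, with faulty measurements and many rounds to reconcile, which is why a lookup table stops being an option and dedicated hardware becomes necessary.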

For a system with a few hundred physical qubits, this is computationally tractable. For a system with millions of physical qubits – the scale required for commercially relevant quantum computing – the data throughput reaches petabytes per second, and the decoding must happen in real-time with sub-microsecond latency. No software decoder running on conventional CPUs or GPUs can keep up. The decoding must be done in specialized hardware: FPGAs, ASICs, or potentially superconducting digital logic operating at cryogenic temperatures.
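How large the data rate gets depends heavily on what is being counted. The sketch below is a rough estimate under stated assumptions: half of the physical qubits are measured each cycle, the cycle time is one microsecond as in the text, and a raw readout trace is assumed to be 500 samples of 4 bytes each. The trace size in particular is an illustrative placeholder, not a figure from any vendor.

```python
def measurements_per_second(n_physical_qubits: int,
                            syndrome_fraction: float,
                            cycle_time_s: float) -> float:
    """Number of syndrome-qubit measurements produced per second."""
    return n_physical_qubits * syndrome_fraction / cycle_time_s


def data_rate_bytes_per_second(meas_per_s: float, bytes_per_measurement: float) -> float:
    """Data rate for a given payload size per measurement."""
    return meas_per_s * bytes_per_measurement


N_PHYSICAL = 1_000_000          # "millions of physical qubits" scale from the text
SYNDROME_FRACTION = 0.5         # assumption: about half the qubits are syndrome qubits
CYCLE_TIME_S = 1e-6             # roughly one microsecond per round, as in the text
BYTES_PER_OUTCOME = 1 / 8       # one discriminated bit per measurement
BYTES_PER_RAW_TRACE = 500 * 4   # assumption: 500 samples x 4 bytes per readout trace

meas_rate = measurements_per_second(N_PHYSICAL, SYNDROME_FRACTION, CYCLE_TIME_S)
decoder_input_rate = data_rate_bytes_per_second(meas_rate, BYTES_PER_OUTCOME)
raw_readout_rate = data_rate_bytes_per_second(meas_rate, BYTES_PER_RAW_TRACE)

print(f"Discriminated syndrome bits: {decoder_input_rate / 1e9:.1f} GB/s")
print(f"Raw digitized readout:       {raw_readout_rate / 1e15:.1f} PB/s")
```

Under these assumptions, the already-discriminated syndrome bits amount to tens of gigabytes per second, while the raw digitized readout signals reach the petabyte-per-second range. Either way, the data must be reduced and decoded close to the hardware rather than shipped to a general-purpose computer.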

This realization – that QEC is a hardware infrastructure requirement, not just an algorithmic research topic – is what created Riverlane’s market opportunity and is now spawning an emerging supply chain layer that didn’t exist three years ago.

The QEC Hardware Landscape

Riverlane (Cambridge, UK) is the undisputed leader in purpose-built QEC hardware. Its Deltaflow stack is deployed with partners including Infleqtion, Oxford Quantum Circuits, Rigetti, and Oak Ridge National Laboratory – spanning superconducting, neutral-atom, and other modalities. The company’s Local Clustering Decoder (LCD), published in Nature Communications in December 2025, was the first hardware decoder to simultaneously deliver sub-microsecond decoding speed, high accuracy, and adaptive noise modeling on FPGA hardware.

Riverlane’s numbers are striking. The LCD achieves a four-fold reduction in the physical qubits required for a given logical error rate – meaning that a quantum computer using Riverlane’s decoder needs roughly 75% fewer qubits to achieve the same computational reliability. The company claims this can accelerate the path to utility-scale quantum computing by three to five years. Riverlane’s roadmap targets MegaQuOp capability (one million reliable quantum operations) by the end of 2026, with GigaQuOp (one billion) targeted for the early 2030s.

In March 2026, Riverlane published a comprehensive QEC roadmap defining the milestones required to scale from current experimental systems to commercially useful fault-tolerant quantum computers. The company partners with over 60% of the world’s quantum computer manufacturers, making it a de facto infrastructure provider for the QEC layer.

Riverlane also publishes the annual Quantum Error Correction Report, which in 2025 documented an explosion in QEC research: 120 new peer-reviewed papers in the first ten months of 2025, up from 36 in all of 2024 – a clear signal that the field is transitioning from theoretical exploration to practical engineering. The report also flagged a severe talent gap as the “ultimate bottleneck” for QEC progress.

Google Quantum AI develops internal QEC decoding capabilities, demonstrated in its landmark 2024 below-threshold surface code result on the Willow chip. Google’s approach integrates decoding tightly with its superconducting hardware, using custom FPGA and potentially ASIC implementations. While not a commercial QEC product, Google’s internal capabilities set the performance benchmarks that other players must match.

IBM has pivoted toward quantum Low-Density Parity-Check (qLDPC) codes, which promise more efficient use of physical qubits than the surface code but require different decoder architectures. IBM’s internal decoding research represents a potential fork in QEC standards – if qLDPC becomes the dominant error correction approach, the decoder hardware requirements will differ from those optimized for surface codes.

Quantinuum has demonstrated QEC on its trapped-ion systems and develops internal decoding capabilities. Trapped ions’ lower error rates and all-to-all connectivity may enable different (and potentially simpler) QEC architectures than those required for superconducting or spin qubits.

Several academic and national-lab groups are also contributing decoder hardware research. The DOE’s national laboratories – particularly Oak Ridge, Argonne, and Fermilab – host QEC research programs that feed into the broader ecosystem. University-based groups at ETH Zurich, TU Delft, and Yale have published decoder implementations on FPGAs.

The FPGA Dependency

A less visible but strategically important dependency underlies the entire QEC hardware ecosystem: FPGAs. Field-Programmable Gate Arrays from AMD/Xilinx and Intel/Altera are the substrate on which most quantum control and QEC systems are built. Riverlane’s Deltaflow runs on FPGAs. Qblox’s control electronics use FPGAs. Quantum Machines’ OPX+ is FPGA-based. HRL’s spinQICK uses Xilinx RFSoC FPGAs.

This creates an upstream dependency on two companies (AMD and Intel) for a critical component across the entire quantum computing stack. The FPGAs used in quantum systems are typically the highest-performance variants – the same parts sought by defense, telecommunications, and AI industries. Allocation conflicts are already occurring, as the anecdote opening this article illustrated.

As QEC requirements grow, purpose-built ASICs (Application-Specific Integrated Circuits) may eventually replace FPGAs for production systems. Riverlane, SEEQC, and potentially others are expected to pursue ASIC development for high-volume decoder deployments. This transition – from flexible FPGAs to optimized ASICs – would create a new semiconductor fabrication requirement and a new supply chain node.

The investor read: QEC hardware is the most nascent of the four enabling technology layers, but it may be the most consequential. Without real-time error correction, no quantum computer achieves fault tolerance – and without fault tolerance, no quantum computer reaches commercial utility. Riverlane is the clear first-mover, with a cross-modality deployment model and a defensible IP position (the LCD technology). The company’s claim that its decoder accelerates utility-scale quantum computing by three to five years, if validated, positions QEC hardware as the highest-leverage investment in the quantum stack. The broader QEC ecosystem also creates demand for FPGA hardware (AMD, Intel), cryogenic electronics (SEEQC, for millikelvin-stage decoders), and classical computing (for QEC simulation and training). The 2025 “QEC code explosion” – 120 papers in ten months – signals that this layer is entering its rapid growth phase.

IV. Critical Materials: The Periodic Table’s Quantum Tax

Quantum computers are not built from abundant, commodity materials. They demand substances that are rare, expensive, difficult to purify, geopolitically concentrated, or all four at once. The NATO Transatlantic Quantum Community study identified this as one of the most urgent vulnerabilities in the quantum supply chain: over 90% of high-purity material processing for quantum applications occurs outside NATO territories.

Each modality imposes its own material requirements, but several critical materials cross modality boundaries or create supply chain chokepoints that affect the industry as a whole.

Helium-3 and Helium-4

Already covered in Section II, but worth re-emphasizing in the materials context: helium-3 is not merely a coolant input. It is a strategic material whose supply is intertwined with nuclear weapons programs, whose price has increased tenfold over two decades, and whose alternatives (magnetic cooling, lunar mining) remain years from commercial scale. Helium-4, while more available, faces competition from healthcare, semiconductor, and aerospace industries that creates price volatility and periodic shortages.

Silicon-28

As detailed in our silicon spin supply chain analysis, isotopically enriched silicon-28 is essential for silicon spin qubits. Natural silicon contains 4.7% silicon-29, whose nuclear spin destroys qubit coherence. Enrichment to 99.99% silicon-28 extends coherence times by three orders of magnitude.
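The enrichment figures translate into a simple bound on how many spin-carrying nuclei remain. The sketch below assumes the 99.99% figure is an atomic fraction and takes the worst case in which all of the residual material is silicon-29; it estimates isotope concentration only, not coherence time.

```python
NATURAL_SI29_FRACTION = 0.047   # natural abundance of silicon-29, from the text


def max_residual_si29_ppm(si28_purity: float) -> float:
    """Upper bound on silicon-29 content, assuming all residual atoms are silicon-29."""
    return (1.0 - si28_purity) * 1e6


def min_si29_reduction_factor(si28_purity: float) -> float:
    """Lower bound on how much the silicon-29 concentration drops versus natural silicon."""
    return NATURAL_SI29_FRACTION / (1.0 - si28_purity)


purity = 0.9999                 # enrichment level quoted in the text
print(f"Residual silicon-29: at most {max_residual_si29_ppm(purity):.0f} ppm")
print(f"Reduction versus natural silicon: at least {min_si29_reduction_factor(purity):.0f}x")
```

In other words, enrichment to 99.99% cuts the density of nuclear spins around each qubit by a factor of several hundred or more relative to natural silicon.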

The supply chain is nascent. ASP Isotopes (NASDAQ: ASPI) began commercial production in South Africa in March 2025, with initial capacity exceeding 80 kg per year. Silex Systems provides laser-separation enrichment in Australia. Historical supplies came from Russia’s Isotope JSC (via gas centrifuge processing of SiF₄ from the Avogadro Project), but geopolitical constraints have reduced accessibility. The transition from kilogram-scale research supply to tonne-scale commercial production has not yet occurred, and no current facility approaches the throughput that widespread silicon spin deployment would require.

Niobium

Niobium is the workhorse metal of superconducting quantum computing. It forms the superconducting wiring in processors from D-Wave and is used in the niobium-titanium (NbTi) cables inside cryostats. Niobium-based qubits are experiencing a research revival at Argonne National Laboratory and elsewhere.

The geopolitical concentration is severe: Brazil accounts for roughly 90% of global niobium production. While niobium is not scarce in absolute terms, the processing of high-purity niobium for quantum applications – where parts-per-billion impurities can destroy qubit performance – is a specialized capability with limited providers. The NATO study flagged niobium as a material “sourced from potentially unstable or non-allied regions.”

Tantalum

Tantalum has emerged as a promising material for superconducting qubits, with multiple groups reporting improved coherence times in tantalum-based transmon devices compared to aluminum. Tantalum supply is concentrated in Central Africa (primarily the Democratic Republic of Congo and Rwanda), creating a supply chain with well-documented ethical and governance challenges. For quantum applications, the required purity levels add a processing bottleneck on top of the mining concentration.

Rare Earth Elements

Quantum systems use specific rare earth elements depending on their design. Erbium and ytterbium are essential for the optical amplifiers and detectors used in photonic quantum computing and quantum networking. Europium and neodymium are used in quantum memory research. Rare earths are not geologically scarce, but the processing infrastructure is overwhelmingly concentrated in China, which controls over 60% of global rare earth mining and over 85% of rare earth processing.

For quantum applications, the required purity levels (often 99.999% or higher) narrow the supplier base even further. The NATO study noted that dependence on non-NATO sources for rare earth processing creates a supply chain vulnerability that parallels the better-known challenges in electric vehicles and defense electronics.

Sapphire and Specialty Substrates

Superconducting quantum processors are fabricated on either high-resistivity silicon or sapphire (single-crystal aluminum oxide) substrates. Sapphire offers superior dielectric properties but is more expensive and less available in semiconductor-standard formats. The sapphire substrate market is dominated by a small number of crystal growers – primarily serving the LED and semiconductor industries – with quantum computing representing a tiny but growing fraction of demand.

The Materials Vulnerability Map

The cross-modality view of critical materials reveals a pattern: the quantum computing industry depends on materials that are either geopolitically concentrated (niobium in Brazil, rare earths in China, tantalum in Central Africa), inherently scarce (helium-3), or require processing capabilities that are concentrated in a handful of facilities globally (high-purity quantum-grade materials processing). The NATO study’s finding that over 90% of high-purity material processing occurs outside NATO territories is not about any single material – it’s about a systemic vulnerability across the materials palette.

The investor read: Critical materials create a different risk profile from other enabling technology layers. The risk is not that a single company dominates a market (as Bluefors does in dilution refrigerators), but that entire material supply chains are geopolitically fragile or have no commercial-scale source. ASP Isotopes is the most investable pure-play on quantum-grade materials (through silicon-28), but the broader materials challenge – niobium processing, rare earth refining, tantalum sourcing – is more naturally addressed through government industrial policy than through venture capital. For investors, the materials layer is most relevant as a risk factor in evaluating quantum computing companies: does the company’s technology roadmap depend on materials with constrained supply chains? If so, has it secured long-term supply agreements? Companies that have addressed their materials dependencies (as Bluefors did with the Interlune helium-3 deal) are better positioned than those that have not.

The Cross-Modality View: Where Dependencies Converge

One of the most useful frameworks for understanding quantum enabling technologies is to map which modalities depend on which layers. The result reveals both universal dependencies and modality-specific advantages.

Dilution refrigerators are essential for superconducting qubits, for silicon spin MOS qubits operated at millikelvin temperatures (which covers most current systems), and for donor-based silicon spin qubits. They are not required by trapped ions, neutral atoms, or photonic systems, though photonic systems may need cryocoolers for their single-photon detectors.

Control electronics (room temperature) are required by every modality – this is the universal dependency. The specific signal types differ (microwave for superconducting and spin, RF and laser drivers for trapped ions and neutral atoms, optical modulators for photonics), but the FPGA-based real-time processing and arbitrary waveform generation are shared infrastructure.

QEC hardware will be required by every modality that pursues fault-tolerant quantum computing, which is all of them. The demands differ by modality: superconducting and silicon spin are pursuing surface-code QEC, which requires the highest decoder throughput. Trapped ions may use different codes that are less decoder-intensive. Photonic systems using measurement-based approaches (like PsiQuantum’s) have distinct QEC architectures. But the fundamental requirement for real-time classical processing of syndrome data is universal.

Isotopically enriched silicon-28 is required only by silicon spin qubits – but the semiconductor-grade substrates required by other modalities (high-resistivity silicon for superconducting, silicon photonics wafers for photonic systems) create a broader dependency on specialized silicon supply.

Helium-3 is required by any system using a dilution refrigerator, making it a shared dependency for superconducting and millikelvin spin systems. Niobium is primarily a superconducting dependency but also appears in cryogenic cabling used across modalities.

This convergence analysis reveals an important strategic insight: the modalities with the fewest enabling technology dependencies – trapped ions and neutral atoms – also happen to be the modalities with the most challenging modality-specific bottlenecks (precision lasers, vacuum systems, ion trap fabrication). There is no free lunch in quantum engineering. Every modality trades one set of supply chain risks for another.
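The mapping described above can be sketched as a small lookup table. This is a minimal illustration, not a definitive model: the layer names, the `DEPENDENCIES` table, and the helper functions are hypothetical labels introduced here, and the table simplifies the text in two ways. It lists niobium only under its primary (superconducting) dependency and ignores its use in shared cryogenic cabling, and it folds both silicon spin variants into a single entry.

```python
from typing import Dict, List, Set

# Modalities discussed in this article.
MODALITIES: List[str] = [
    "superconducting", "silicon_spin", "trapped_ion", "neutral_atom", "photonic",
]

# Enabling layer -> modalities that depend on it, as described in the text.
# Assumption: niobium is recorded only for its primary (superconducting) use.
DEPENDENCIES: Dict[str, Set[str]] = {
    "dilution_refrigerator": {"superconducting", "silicon_spin"},
    "control_electronics": set(MODALITIES),
    "qec_hardware": set(MODALITIES),
    "silicon_28": {"silicon_spin"},
    "specialized_silicon_substrates": {"superconducting", "photonic"},
    "helium_3": {"superconducting", "silicon_spin"},
    "niobium": {"superconducting"},
}


def layers_for(modality: str) -> List[str]:
    """Return the enabling layers a modality depends on, sorted by name."""
    return sorted(
        layer for layer, users in DEPENDENCIES.items() if modality in users
    )


def universal_layers() -> List[str]:
    """Return the layers that every modality depends on."""
    everyone = set(MODALITIES)
    return sorted(
        layer for layer, users in DEPENDENCIES.items() if users == everyone
    )


def dependency_counts() -> Dict[str, int]:
    """Count shared enabling-layer dependencies per modality."""
    return {m: len(layers_for(m)) for m in MODALITIES}


if __name__ == "__main__":
    # Print modalities from fewest to most shared dependencies.
    for modality, count in sorted(dependency_counts().items(), key=lambda kv: kv[1]):
        print(f"{modality:16s} {count}  {', '.join(layers_for(modality))}")
    print("universal:", universal_layers())
```

Under these assumptions, trapped ions and neutral atoms come out with the fewest shared dependencies, and control electronics and QEC hardware are the only universal layers. The count says nothing about modality-specific bottlenecks such as lasers or vacuum systems, which this table deliberately leaves out.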

What This Means for Quantum Strategy

For Investors

The enabling technology layers offer something rare in quantum investing: opportunities to profit from the growth of the quantum computing industry without betting on which modality wins. Control electronics (Keysight, Qblox, Quantum Machines, Zurich Instruments) and QEC hardware (Riverlane) serve all modalities. Cryogenics companies (Bluefors, Oxford Instruments) serve most modalities. Materials companies (ASP Isotopes) serve specific modalities but operate in markets with few competitors.

The highest-conviction enabling technology investments share a common characteristic: they solve problems that get harder as quantum computers scale. Control electronics must handle more channels. Cryogenics must cool larger systems. Decoders must process more data faster. Materials must be available in larger quantities at higher purity. Companies positioned on the right side of these scaling dynamics have structural growth drivers independent of modality outcomes.

For Technology Executives

Organizations evaluating quantum readiness should assess not just qubit quality and count, but the enabling technology maturity of their chosen provider’s stack. Questions to ask include: what cryogenic system does the quantum computer use, and what is its provider’s lead time? What control electronics platform underlies the system, and is it upgradeable? Does the provider have a QEC strategy, and does it rely on commercially available decoder technology or internal R&D? Are there materials in the bill of materials that face supply chain concentration risk?

The answers to these questions may be more predictive of near-term system availability and reliability than raw qubit specifications.

For Policymakers

The NATO study’s recommendations deserve amplification. The enabling technology layers contain the quantum computing industry’s most acute sovereignty vulnerabilities: concentrated dilution refrigerator manufacturing, non-NATO rare earth processing, single-source material dependencies, and FPGA allocation pressures. National quantum sovereignty strategies that focus exclusively on qubit development while neglecting the enabling technology supply chain are building capability on a fragile foundation.

Four policy priorities emerge from this analysis: coordinate with semiconductor initiatives to ensure FPGA and ASIC availability for quantum applications; develop domestic high-purity material processing capabilities; diversify the dilution refrigerator supplier base through targeted manufacturing incentives; and invest in QEC workforce development to address the talent gap that Riverlane’s 2025 report identified as the field’s ultimate bottleneck.

For CISOs

The enabling technology analysis does not change the fundamental calculus of post-quantum cryptography migration: the urgency remains. But it adds nuance to threat timeline modeling. The supply chain constraints documented here, particularly in cryogenics, helium-3, and QEC hardware, function as natural brakes on quantum computing scaling. A cryptographically relevant quantum computer (CRQC) requires not just a breakthrough in qubit quality but a scaling of the entire enabling technology stack. The pace of that scaling is not determined by physics alone; it is determined by industrial capacity, material availability, and engineering talent.

This offers modest comfort but no grounds for complacency. The enabling technology supply chains are being actively developed: Riverlane’s decoder could compress QEC timelines by three to five years, and hot-qubit silicon spin approaches are relaxing cryogenic constraints. The brakes are real, but they are being released.

The Stack Beneath the Stack

Quantum computing captivates with its physics: superposition, entanglement, interference. The companies that build qubits get the headlines, the funding rounds, and the breathless media coverage. But the companies that build the infrastructure beneath the qubits – the electronics that control them, the refrigerators that cool them, the decoders that correct their errors, the materials that make all of it possible – hold a disproportionate influence over which quantum computers actually work, which ones scale, and which ones remain laboratory curiosities.

The most important company in quantum computing may not be building a quantum computer at all. It may be building a decoder chip in Cambridge, a dilution refrigerator in Helsinki, a control stack in Delft, or enriching silicon isotopes in Pretoria. The infrastructure beneath the qubit is the infrastructure that will determine whether the quantum revolution actually happens – and who profits when it does.

This article is part of PostQuantum.com’s Quantum Ecosystem series, mapping the technologies, companies, and supply chains that make quantum computing possible. For modality-specific supply chain analyses, see our deep dives on superconducting, trapped-ion, photonic, neutral-atom, and silicon spin quantum computing.
