The Cryptographic Iceberg Inside a Mobile Banking Transaction

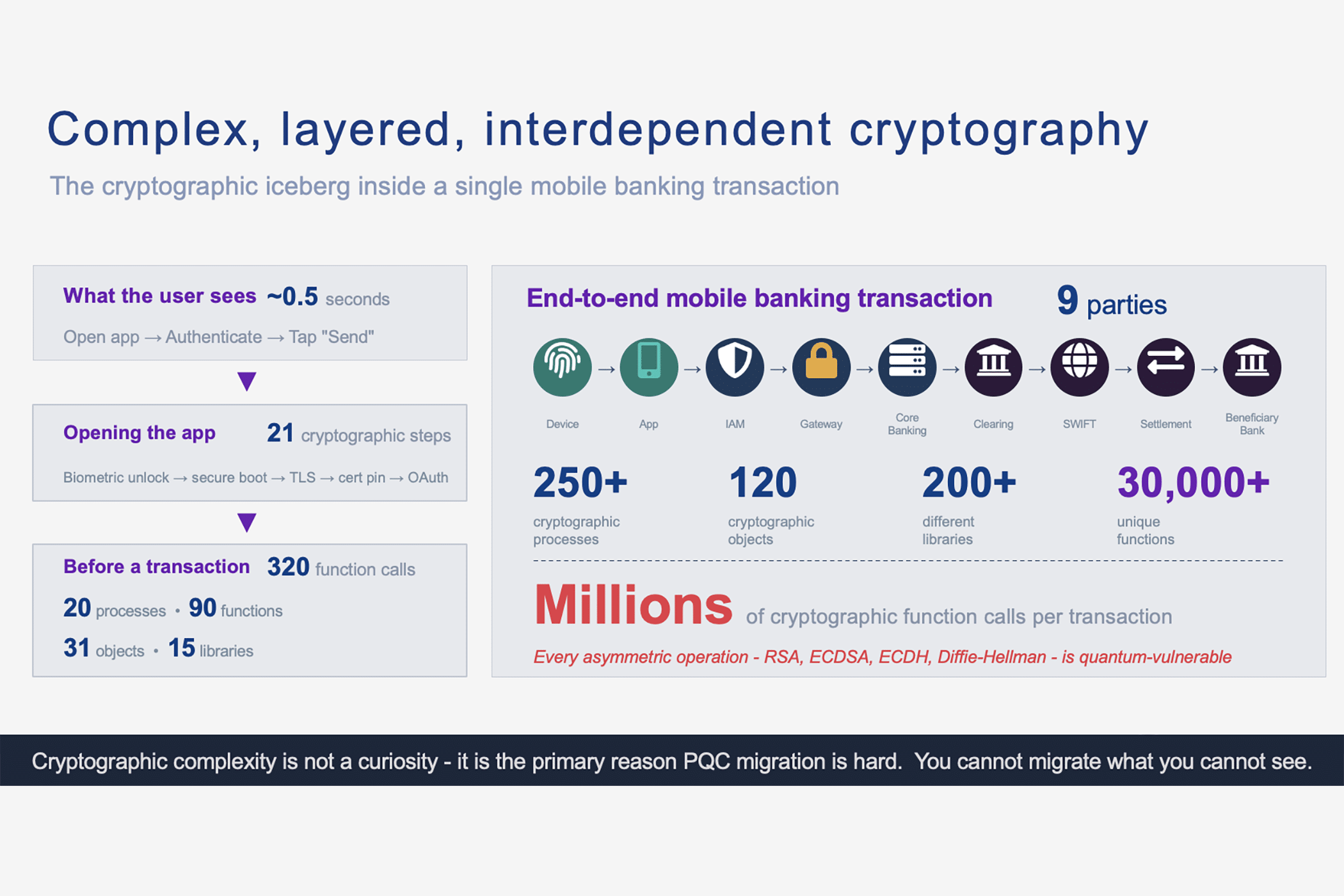

320 function calls before you even type an amount

It takes roughly half a second. You press your thumb against the sensor, your banking app opens, and a familiar interface appears – account balance, recent transactions, a “Send Money” button. The gesture feels effortless, even mundane. You might do it six or seven times a day without thinking.

But in that half-second, your phone has executed approximately 320 cryptographic function calls.

Not after you transfer money. Not when you enter an amount or confirm a payee. Before any of that. Just to get you from a locked screen to an app that’s ready to accept instructions, your device has activated 20 distinct cryptographic processes, called upon 15 different cryptographic libraries, instantiated 31 cryptographic objects, and invoked 90 unique cryptographic functions.

And that’s just what happens on the phone.

When you actually tap “send” and a payment traverses the full end-to-end chain – from your device through your bank’s API gateway, through card networks or interbank messaging, through clearing houses and central bank settlement systems – the cryptographic footprint explodes. A single mobile banking transaction can involve nine independent parties, 250 or more cryptographic processes, 120 cryptographic objects, more than 200 libraries, upward of 30,000 unique cryptographic functions, and literally millions of individual cryptographic function calls.

These figures come from an internal cryptographic discovery exercise performed by my team on a limited set of representative components at a point in time. They’re included to illustrate order-of-magnitude complexity, not as an industry benchmark, not as a statistically representative average, and not as a guarantee that every mobile banking stack will look similar. And they reveal something that most payment industry professionals intuit but rarely see quantified: mobile banking is one of the most cryptography-dense consumer experiences in modern life.

(A word of context before we go deeper. These figures represent an idealized, end-to-end view across all nine parties in a cross-border payment chain – most of which no single organization controls or can independently verify. No practitioner should read what follows as a target to replicate in full. The purpose is to make the scale of cryptographic interdependence visible, not to suggest that any one institution can or should inventory every function call across every counterparty’s infrastructure.)

This article is a companion to my earlier mapping of the Cryptographic Stack in Modern Interbank Payment Systems, which traced cryptographic operations from customer authentication through SWIFT messaging to central bank settlement. Where that analysis followed the payment between institutions, this one follows the payment from the moment a consumer reaches for their phone. It reconstructs, layer by layer, the cryptographic architecture that makes a mobile banking transaction possible – from the immutable hardware keys fused into your phone’s silicon during manufacturing, through the transport and application security stack, into the payment processing infrastructure, and out through interbank settlement.

The purpose is not to impress with complexity for its own sake. It is to make that complexity visible – because you cannot migrate what you cannot see. And with post-quantum transition timelines approaching, the clock is already running on every one of those millions of function calls.

The trust chain begins in silicon

Mobile banking security does not start with the bank. It starts with the phone – and specifically, with cryptographic keys that were burned into the processor before it ever left the fabrication facility.

Secure boot: verifying reality from the ground up

On an iPhone, the first code that executes when the device powers on is the Boot ROM – a small block of immutable, read-only code etched into the Apple silicon during manufacturing. That Boot ROM contains the Apple Root CA public key, and its sole job is to cryptographically verify the signature on iBoot, the next stage of the boot process. iBoot, in turn, verifies the iOS kernel. The Secure Enclave – Apple’s dedicated security coprocessor – performs its own independent boot chain, verifying that sepOS (the Secure Enclave’s operating system) is signed by Apple. Each stage authenticates the next. If any link in the chain fails signature verification, the device refuses to boot normally.

On Android, the mechanism differs in implementation but follows the same principle. Hardware root-of-trust keys are burned into SoC fuses during manufacturing. Android Verified Boot (AVB) cryptographically verifies boot partitions and system images. Since Android 7.0, boot state is bound to the OS version and security patch level through hardware attestation – meaning a rollback to a vulnerable OS version can be detected and rejected.

This matters for mobile banking because every higher-layer security guarantee – biometric authentication, secure key storage, device attestation – implicitly depends on the boot chain being intact. If an attacker could substitute a modified operating system, every subsequent cryptographic operation would be compromised regardless of its algorithmic strength. The secure boot chain is the foundation on which all other mobile banking security stands.

From a cryptographic counting perspective, boot alone involves multiple digital signature verifications (RSA or ECDSA depending on platform and generation), hash computations for integrity verification, and secure random number generation for anti-replay mechanisms. We are already several cryptographic operations deep, and no app has launched.

It is worth pausing on what this means for quantum vulnerability. The secure boot chain’s digital signatures are currently based on classical algorithms – RSA or ECDSA. If an adversary could forge a signature on a malicious bootloader, they could subvert the entire trust chain. Today, this requires factoring large numbers or solving the elliptic curve discrete logarithm problem – both computationally infeasible with classical computers. A cryptographically relevant quantum computer (CRQC) running Shor’s algorithm would change that equation entirely.

Apple and Google have not yet disclosed post-quantum roadmaps for their boot chain signatures, making this one of the “hidden” quantum vulnerabilities that most PQC migration plans overlook because it sits below the application layer in vendor-controlled silicon.

The Secure Enclave and TEE: where keys live and never leave

The single most important architectural decision in modern mobile security is the isolation of cryptographic key material from the application processor. On Apple devices, this isolation is physical: the Secure Enclave is a dedicated coprocessor with its own processor core (running a custom L4 microkernel called sepOS), its own AES Engine with side-channel attack countermeasures, its own Public Key Accelerator (formally verified for mathematical correctness since the A13 chip), and its own True Random Number Generator built from ring oscillators post-processed through CTR_DRBG.

The Secure Enclave holds a UID – a Unique ID fused into the silicon during manufacturing – that is never readable by any software, including Apple’s own. Hardware keys derived from this UID are used by the AES Engine but never leave the engine itself; even the Secure Enclave’s operating system cannot see them. Starting with the A11 chip, Secure Enclave memory is protected by encryption and an integrity tree rooted in dedicated SRAM, providing anti-replay protection.

For mobile banking, the Secure Enclave stores the most security-critical keys: the device binding key used to prove device identity to the bank, the transaction signing key used to authorize payments, and the biometric-gated keys that ensure only an authenticated user can trigger those operations. These are typically ECC (P-256) key pairs or AES keys today; on newer Apple OS releases, developers can also use standardized post-quantum primitives exposed via CryptoKit (ML-KEM and ML-DSA) as additional building blocks for quantum-secure workflows where supported.

On Android, the equivalent architecture uses the hardware-backed Keystore system, which ensures key material never enters the application process. Cryptographic operations are executed within either the TEE (TrustZone on ARM processors – an isolated execution environment within the main SoC) or StrongBox (introduced in Android 9, a discrete tamper-resistant secure element with its own CPU, secure storage, and TRNG). The key attestation mechanism produces certificate chains signed by Google’s Hardware Attestation Root CA, embedding metadata about the device’s boot state, security patch level, and whether the key is truly hardware-backed.

The practical consequence is profound: a mobile banking app can generate a private key that, by design, can never be exported from the device. Authentication becomes “prove possession of the private key” rather than “transmit a shared secret.” This is exactly the direction that financial-grade API security profiles like FAPI 2.0 push toward – and it is why mobile banking can achieve hardware-backed authentication without issuing every customer a physical token.

Secure storage: Keychain and Keystore as cryptographic subsystems

When a banking app “remembers” anything – device identifiers, refresh tokens, device-binding keys, risk signals, cached account summaries – it typically stores them through the platform’s secure storage rather than implementing its own encryption layer.

Apple’s Keychain data protection encrypts items using AES-256-GCM with two tiers of keys – a metadata key and a per-row key – with the Secure Enclave governing the key wrapping and unwrapping hierarchy. Apple’s broader Data Protection model extends this to per-file encryption keys mediated by class keys, which are themselves wrapped under a key derived from the user’s passcode and the device UID. Data protection classes like “Complete Protection” ensure that protected data is cryptographically inaccessible when the device is locked – a guarantee enforced by the Secure Enclave refusing to release class keys until biometric or passcode authentication succeeds.
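To make the "passcode plus device UID" idea concrete, here is a minimal sketch using PBKDF2 from Python's standard library. It is a simplification, not Apple's derivation: on real devices the entanglement runs through the hardware AES engine and the UID is never visible to software. The names `derive_kek`, `DEVICE_UID_A`, `DEVICE_UID_B`, and the iteration count are illustrative assumptions.

```python
import hashlib
import secrets

# Hypothetical stand-ins for the fused, software-unreadable device UID.
DEVICE_UID_A = secrets.token_bytes(32)
DEVICE_UID_B = secrets.token_bytes(32)


def derive_kek(passcode: str, device_uid: bytes, iterations: int = 100_000) -> bytes:
    """Derive a 256-bit key-encryption key from a passcode and a device secret.

    The device secret is used as the PBKDF2 salt, so the result cannot be
    recomputed without that particular device's secret.
    """
    return hashlib.pbkdf2_hmac(
        "sha256", passcode.encode("utf-8"), device_uid, iterations, dklen=32
    )


# Same passcode, two different devices: the derived keys do not match.
kek_a = derive_kek("123456", DEVICE_UID_A)
kek_b = derive_kek("123456", DEVICE_UID_B)
```

The point of the sketch is the binding: a brute-force attempt on the passcode has to run on the device that holds the UID, because the derived key is useless anywhere else.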

Android’s model differs in implementation but achieves similar goals: keys reside in the Keystore service, cryptographic operations execute in hardware-backed environments, and key properties can be attested to remote servers. Banking apps typically configure keys to require biometric authentication for every use – meaning even if the application process is compromised, the cryptographic keys cannot be accessed without a fresh biometric event at the hardware level.

The net effect is that even local data storage on a mobile banking app involves multiple cryptographic operations: key derivation, AES-GCM encryption, integrity tagging, and policy enforcement mediated by the secure coprocessor. These operations contribute to the “320 function calls” tally in ways that are invisible to both users and most application developers – part of the “cryptographic dependency surface” that Cryptographic Bill of Materials (CBOM) tools exist to reveal.

Biometrics: the cryptography behind the glance

When you authenticate with Face ID or Touch ID, what feels like a simple sensor check is actually a multi-stage cryptographic operation conducted entirely within the Secure Enclave.

Apple’s biometric security documentation describes the architecture: during enrollment, the Secure Enclave processes, encrypts, and stores the biometric template. During authentication, the sensor captures new biometric data and transmits it to the Secure Enclave over a channel encrypted and authenticated with a negotiated session key based on a factory-provisioned shared key unique to each individual sensor-enclave pair. The Secure Enclave performs the comparison and returns only a binary result – match or no match. Raw biometric data never leaves the enclave, is never transmitted to Apple’s servers, and is never accessible to the banking app.

On Android, biometric authentication components communicate their authentication state to the Keystore service through authenticated channels. A successful biometric match can unlock hardware-backed keys that were generated with a biometric-binding policy – meaning those keys literally cannot be used without a fresh biometric authentication event.

The cryptographic primitives involved in this single “glance at your phone” include AES encryption of the sensor-to-enclave channel, the biometric template comparison within the enclave, key derivation to unlock the protected keychain, and the release of authentication state to the operating system. One user gesture; multiple distinct cryptographic operations.

App integrity: proving “this is really our app”

Before a banking app can establish a session with the bank’s servers, it must first prove it is genuine – not a repackaged clone, not running in an emulator, not instrumented by malware.

On iOS, App Attest provides hardware-backed app attestation. The system generates a key pair inside the Secure Enclave, tied to the specific app and device. Apple issues an attestation certificate chain that the banking app’s backend can verify, confirming that the request originated from a genuine instance of the app, running on genuine Apple hardware, with an uncompromised operating system. This transforms the phone into a signing oracle for the bank’s API: instead of presenting a static API key (a fundamentally weak pattern), the device proves itself cryptographically per session using a hardware-bound key whose provenance can be verified through Apple’s attestation chain.

On Android, the Play Integrity API serves an equivalent role, allowing backend servers to verify that requests come from the genuine app binary, installed through Google Play, running on a certified device. The integrity verdict is signed by Google and interpreted server-side.

App code signing itself adds another cryptographic layer. iOS enforces mandatory code signing – every executable memory page is verified at load time. On Android, APK Signature Scheme v3 (introduced in Android 9) keeps the whole-file signing that arrived with v2 and adds proof-of-rotation structures enabling signing key rotation – a feature with direct crypto-agility implications for PQC migration.

These attestation and signing mechanisms collectively ensure that when the bank’s server receives a request, it can have cryptographic confidence that the request originated from an unmodified app, on an uncompromised device, with a user who authenticated biometrically. The 20-odd steps visible in architectural audits are not padding – they are the implementation of defense-in-depth, each layer adding a distinct cryptographic assurance.

The wire: TLS, certificate pinning, and financial-grade API security

The moment the banking app begins communicating with the bank’s servers, it enters a second cryptographic domain: transport and application-layer security.

What TLS 1.3 actually does in a banking session

For many engineers, “we use HTTPS” is the end of the security conversation. In payments, it is barely the beginning.

TLS 1.3 completes its handshake in a single round trip – down from two in TLS 1.2. The client sends a ClientHello containing supported cipher suites and, crucially, a key_share extension with preemptive ECDHE public keys (typically for the X25519 and P-256 curves). The server responds with a ServerHello carrying its own key share. From this point forward, everything is encrypted – including the server’s certificate, which in TLS 1.2 traveled in cleartext.

The key exchange uses ECDHE (Elliptic Curve Diffie-Hellman Ephemeral): each side generates a random private key per session, computes a public key on the chosen curve, exchanges public values, and derives a shared secret. Because ephemeral keys are discarded after the session, TLS 1.3 provides forward secrecy by default – compromise of long-term keys cannot decrypt past sessions. Key derivation uses HKDF (HMAC-based Key Derivation Function), replacing TLS 1.2’s legacy PRF.
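As a rough sketch of the key-derivation step, the following implements plain HKDF (extract, then expand) with HMAC-SHA256 using only the standard library. TLS 1.3 wraps this primitive in its own HKDF-Expand-Label construction and a defined key schedule; the labels and the all-zero `shared_secret` below are illustrative assumptions, not the values TLS uses.

```python
import hashlib
import hmac

HASH = hashlib.sha256
HASH_LEN = HASH().digest_size  # 32 bytes for SHA-256


def hkdf_extract(salt: bytes, ikm: bytes) -> bytes:
    """Concentrate the input keying material into a fixed-size pseudorandom key."""
    if not salt:
        salt = bytes(HASH_LEN)  # an empty salt is treated as HASH_LEN zero bytes
    return hmac.new(salt, ikm, HASH).digest()


def hkdf_expand(prk: bytes, info: bytes, length: int) -> bytes:
    """Stretch the pseudorandom key into `length` bytes bound to `info`."""
    if length > 255 * HASH_LEN:
        raise ValueError("requested output too long")
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), HASH).digest()
        okm += block
        counter += 1
    return okm[:length]


# Placeholder for the ECDHE output; a real shared secret is never all zeros.
shared_secret = bytes(32)
prk = hkdf_extract(b"", shared_secret)
client_key = hkdf_expand(prk, b"demo client write key", 32)
server_key = hkdf_expand(prk, b"demo server write key", 32)
```

Different `info` labels yield independent keys from the same secret, which is how one key agreement can feed separate keys for each direction of traffic.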

Application data flows under AES-256-GCM – an Authenticated Encryption with Associated Data (AEAD) cipher that combines encryption and integrity protection in a single operation. Each TLS record uses a per-record nonce derived from the record sequence number and a per-connection IV. Together with AEAD integrity protection and sequence-number tracking, this provides record-level integrity and order protection (for example, out-of-order or duplicated records within a connection fail to authenticate). Replay across connections (especially TLS 1.3 0-RTT) is a separate concern and must be handled at the TLS/application design level. Banking apps typically negotiate TLS_AES_256_GCM_SHA384 or TLS_CHACHA20_POLY1305_SHA256.
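The per-record nonce construction can be sketched in a few lines: the 64-bit record sequence number is left-padded with zeros to the IV length and XORed with the per-connection write IV. The function name `record_nonce` and the sample IV are assumptions for illustration.

```python
def record_nonce(write_iv: bytes, seq: int) -> bytes:
    """Combine a 12-byte write IV with a 64-bit record sequence number."""
    if len(write_iv) != 12:
        raise ValueError("this sketch assumes a 12-byte AEAD nonce")
    if not 0 <= seq < 2**64:
        raise ValueError("sequence number must fit in 64 bits")
    # Big-endian sequence number, left-padded with zeros to the IV length.
    padded_seq = seq.to_bytes(len(write_iv), "big")
    return bytes(a ^ b for a, b in zip(write_iv, padded_seq))


# Hypothetical IV; in TLS it comes out of the key schedule.
iv = bytes.fromhex("00112233445566778899aabb")
nonces = [record_nonce(iv, n) for n in range(3)]
```

Because the sequence number only ever increases within a connection, no nonce repeats under the same key, which is the property AES-GCM depends on.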

Certificate verification builds a trust chain from the server’s leaf certificate through intermediate Certificate Authorities to a root CA in the device’s trust store. Each link in the chain requires a signature verification (RSA or ECDSA) and expiration and revocation checks (via OCSP or CRL), and the requested host name must match an entry in the leaf certificate’s Subject Alternative Names, the extension defined in RFC 5280.

From a “cryptographic steps” perspective, a single app-to-bank TLS session involves: secure random number generation for ephemeral keys and nonces, ECDHE key agreement, two or more signature verifications for the certificate chain, HKDF-based key derivation for session keys, and ongoing per-record AEAD operations for every byte of application data. This is why the claim of “20+ cryptographic operations before a transaction even begins” is conservative – a TLS handshake alone can account for much of that count.

Certificate pinning: security gain, operational tightrope

Most banking apps go beyond standard TLS certificate validation by implementing certificate pinning – embedding expected certificate data at build time and rejecting any server certificate that doesn’t match, regardless of whether it chains to a trusted root.

Public key pinning (pinning the SPKI hash rather than the whole certificate) is preferred because it survives certificate renewal as long as the same key pair is retained. On Android, Network Security Configuration provides declarative XML-based pinning with built-in expiration dates. On iOS, implementations typically use NSURLSession delegates or libraries like TrustKit. The OWASP Mobile Application Security Testing Guide recommends pinning for any app handling sensitive financial data.
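A minimal sketch of the pin comparison itself, assuming the common convention of a base64-encoded SHA-256 digest over the DER-encoded SubjectPublicKeyInfo. The byte strings below are placeholders rather than real SPKI structures, and `spki_pin` and `is_pinned` are hypothetical helper names.

```python
import base64
import hashlib
import hmac


def spki_pin(spki_der: bytes) -> str:
    """Return the base64-encoded SHA-256 digest of a DER-encoded SPKI."""
    return base64.b64encode(hashlib.sha256(spki_der).digest()).decode("ascii")


def is_pinned(spki_der: bytes, pinned: set[str]) -> bool:
    """Check the presented key against the pin set shipped with the app."""
    candidate = spki_pin(spki_der)
    return any(hmac.compare_digest(candidate, pin) for pin in pinned)


# Placeholder bytes standing in for the current and backup public keys.
current_spki = b"placeholder-current-spki"
backup_spki = b"placeholder-backup-spki"
pin_set = {spki_pin(current_spki), spki_pin(backup_spki)}

ok = is_pinned(current_spki, pin_set)
rejected = is_pinned(b"placeholder-attacker-spki", pin_set)
```

Shipping a backup pin alongside the current one is a common way to reduce the outage risk when a key has to be rotated unexpectedly.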

Pinning is not “one cryptographic step.” It adds a policy enforcement gate on top of X.509 validation – a gate that depends on cryptographic hash comparisons and strict certificate lifecycle management. And it creates operational risk: unexpected certificate rotation can cause self-inflicted outages. This tension between security and operational brittleness is precisely the kind of trade-off that makes crypto-agility essential.

OAuth, PKCE, and financial-grade API security

Mobile banking apps authorize API access through OAuth 2.0, typically using the Authorization Code flow with PKCE (Proof Key for Code Exchange). PKCE binds the authorization code to a per-session secret, mitigating code interception attacks that are particularly dangerous for mobile “public clients” that cannot safely embed secrets.
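The PKCE binding can be sketched as follows, using the S256 challenge method. The helper names are assumptions for illustration; in practice the verifier stays on the device, the challenge travels with the authorization request, and the verifier is revealed only when the code is redeemed.

```python
import base64
import hashlib
import hmac
import secrets


def _b64url(data: bytes) -> str:
    """Base64url-encode without padding."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def new_code_verifier() -> str:
    """Generate a fresh, high-entropy per-session secret (43 characters)."""
    return _b64url(secrets.token_bytes(32))


def code_challenge_s256(verifier: str) -> str:
    """Derive the challenge sent with the authorization request."""
    return _b64url(hashlib.sha256(verifier.encode("ascii")).digest())


def server_accepts(verifier: str, stored_challenge: str) -> bool:
    """Token-endpoint check: does the presented verifier match the challenge?"""
    return hmac.compare_digest(code_challenge_s256(verifier), stored_challenge)


verifier = new_code_verifier()
challenge = code_challenge_s256(verifier)
```

An attacker who intercepts the authorization code sees only the challenge, and cannot produce a verifier that hashes to it.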

Access tokens are commonly JWTs – compact, signed tokens typically using RS256 (RSASSA-PKCS1-v1_5 with SHA-256), PS256 (RSASSA-PSS), or ES256 (ECDSA with P-256). Some implementations encrypt tokens as JWEs using RSA-OAEP or ECDH-ES for key encryption and AES-256-GCM for content encryption.
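One small but important check on the receiving side is refusing tokens whose header names an unexpected algorithm. The sketch below only parses the header of a compact token and enforces an allow-list; it does not verify the signature, which needs an asymmetric crypto library. The allow-list contents and helper names are assumptions, not a quotation of any profile's requirements.

```python
import base64
import json

ALLOWED_ALGS = {"PS256", "ES256"}  # hypothetical server policy


def _b64url_decode(segment: str) -> bytes:
    """Decode base64url, restoring the padding that compact tokens omit."""
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def _b64url_encode(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def token_header(token: str) -> dict:
    """Parse the header of a three-part compact signed token."""
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("not a three-part compact token")
    return json.loads(_b64url_decode(parts[0]))


def require_allowed_alg(token: str) -> str:
    """Reject the token before signature verification if alg is not allowed."""
    alg = token_header(token).get("alg")
    if alg not in ALLOWED_ALGS:
        raise ValueError(f"algorithm {alg!r} is not permitted")
    return alg


def demo_token(alg: str) -> str:
    """Build an unsigned demo token (placeholder signature) for illustration."""
    header = _b64url_encode(json.dumps({"alg": alg, "typ": "JWT"}).encode())
    payload = _b64url_encode(json.dumps({"sub": "demo-user"}).encode())
    return f"{header}.{payload}.c2lnbmF0dXJl"
```

Calling `require_allowed_alg(demo_token("none"))` raises, while a token declaring ES256 passes this gate and moves on to actual signature verification.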

What distinguishes banking from general-purpose OAuth is the recognition that standard OAuth is not strong enough for high-value transactions. That recognition produced the Financial-grade API (FAPI) 2.0 security profile, finalized in early 2025, which mandates PKCE, Pushed Authorization Requests (PAR), and sender-constrained tokens via mTLS certificate binding or DPoP (Demonstration of Proof-of-Possession). Client authentication requires private_key_jwt or mTLS – client secrets are explicitly prohibited. The security model has been formally verified.

For cryptographic counting, this layer adds token signature verification, potentially per-request signing, mTLS handshakes for sender-constrained tokens, and key management for client credentials stored in the device’s secure hardware. The banking app now maintains multiple concurrent cryptographic contexts: device-bound keys in the Secure Enclave for attestation, TLS session keys for transport security, and OAuth/JWT signing keys for API authorization.

Where cryptography becomes money: authorizing the payment

Opening the app and establishing a secure session is table stakes. Authorizing a payment adds a harder requirement: cryptographic proof that is specific to the amount, the payee, and the transactional context – plus auditability.

Strong customer authentication and dynamic linking

In the European Union – and increasingly in other jurisdictions that follow similar principles – the Delegated Regulation (EU) 2018/389 requires that when strong customer authentication (SCA) is applied to a payment, the authentication code generated must be specific to the amount and the payee agreed to by the payer. This is the “dynamic linking” requirement, and it is not merely compliance language. It is a cryptographic design constraint.

If you must bind approval to amount and payee, you need a data structure – the “transaction to be signed” – and a cryptographic mechanism that commits the user’s authorization to exactly those fields. In practice, dynamic linking means generating a cryptographic value that is a function of the transaction data, protected so that altering the amount or payee invalidates the authorization code. The implementation typically uses either HMAC-based one-time passwords computed over transaction-specific inputs, or, increasingly, digital signatures from device-bound keys.
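A minimal sketch of the HMAC-based variant shows how the binding works: the authentication code is computed over the amount and payee, so changing either produces a different code. The field layout, key handling, and function names here are assumptions for illustration; real schemes define their own encodings and usually truncate the code for display.

```python
import hashlib
import hmac
import secrets


def auth_code(key: bytes, amount_minor: int, currency: str, payee: str, nonce: bytes) -> str:
    """Compute an authentication code bound to amount, currency, and payee."""
    fields = [str(amount_minor).encode(), currency.encode(), payee.encode(), nonce]
    # Length-prefix every field so field boundaries cannot be shifted.
    message = b"".join(len(f).to_bytes(2, "big") + f for f in fields)
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def verify(key: bytes, code: str, amount_minor: int, currency: str, payee: str, nonce: bytes) -> bool:
    """Recompute the code over the received transaction and compare."""
    expected = auth_code(key, amount_minor, currency, payee, nonce)
    return hmac.compare_digest(expected, code)


# Hypothetical values: a device-provisioned key and a server-issued nonce.
key = secrets.token_bytes(32)
nonce = secrets.token_bytes(16)
code = auth_code(key, 12_500, "EUR", "DE89370400440532013000", nonce)
```

Verifying the same code against an altered amount or a different payee fails, which is exactly the property the dynamic linking requirement asks for.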

Transaction signing: the critical middle layer

Transaction signing is arguably the most security-relevant cryptographic operation in the entire mobile banking flow, because it provides integrity of the payment instruction, non-repudiation, resistance to man-in-the-browser and overlay attacks (when designed well), and compliance alignment with dynamic linking requirements.

A typical modern design works as follows. The bank provisions (or the app generates) a device-bound signing key stored in the Secure Enclave or hardware-backed Keystore. The bank registers the corresponding public key – often after verifying the device’s attestation certificate chain. When the user approves a payment, the app constructs a canonical payment authorization payload – amount, payee identifier, timestamp, nonce, transaction ID, and risk metadata – and signs it with the device-bound private key. The backend verifies the signature, often cross-referencing attestation context and token binding, before releasing the funds.

The cryptographic primitives here are classical public-key signatures (ECDSA with P-256 is typical today) plus hashing and canonicalization rules. The exact scheme varies by institution, but the architecture is consistent across high-security designs. And every one of these signature operations uses algorithms that a cryptographically relevant quantum computer would break.

Beyond the app: where “end-to-end” becomes nine parties

When the payment leaves the banking app’s immediate relationship with the bank’s API server and enters payment rails – whether card networks, domestic instant payment systems, or cross-border interbank messaging – the cryptographic stack multiplies dramatically. This is where the figures balloon from hundreds of cryptographic operations to tens of thousands of unique functions and millions of function calls.

The nine-party map

A realistic end-to-end mobile banking payment can traverse up to nine distinct parties, each with its own cryptographic trust anchors, key management systems, and HSM infrastructure. Mapping these parties – consistent with the methodology in my earlier interbank payment cryptographic analysis – reveals the full scope:

Party 1: The customer’s device and OS security subsystems. Hardware root of trust, Secure Enclave/TEE, biometric authentication, key storage, and secure boot – all the on-device cryptography we have already traced.

Party 2: The mobile banking app and its embedded security SDKs. App attestation, anti-tamper controls, certificate pinning, RASP (Runtime Application Self-Protection) modules, and the numerous cryptographic libraries these SDKs depend on. A Cryptomathic analysis identified 14 or more distinct cryptographic key types and 10 or more cryptographic libraries within a single mobile payment wallet – and that count covers only the app layer, not the operating system or backend.

Party 3: The bank’s identity and access management layer. OAuth/OIDC authorization server, multi-factor authentication services, risk engine, device registration, and step-up authentication – each with its own TLS termination, JWT signing keys, and token encryption infrastructure.

Party 4: The bank’s API gateway and service mesh. TLS termination, mTLS enforcement, request signing policies, rate limiting, WAF integration, and token validation. In microservices architectures, internal service-to-service calls use mutual TLS with certificates issued by the bank’s internal CA – creating an internal zero-trust environment where trust is established cryptographically rather than by network location.

Party 5: Core banking and payments orchestration. Business logic, fraud detection, sanctions screening, payment formatting – all underpinned by HSM-protected operations for transaction signing, MAC generation, and key management. This is where the institutional HSM infrastructure comes into play: FIPS 140-3 validated modules holding master keys, zone keys, and working keys under strict dual control and split knowledge ceremonies.

Party 6: The domestic clearing or instant payment system operator. For domestic transfers, this might be FedNow, SEPA Instant, Faster Payments, or the relevant national scheme. Each has its own participant authentication framework, message signing requirements, and network security infrastructure. FedNow, for example, uses ISO 20022 messages with PKI-based digital signatures throughout.

Party 7: Cross-border messaging networks. For international transfers, SWIFT’s PKI infrastructure adds another complete cryptographic layer. As mapped in detail in the interbank payment analysis, SWIFTNet requires each institution to hold PKI key pairs (stored on HSMs), with all messages digitally signed for non-repudiation and encrypted in transit. The SWIFT Customer Security Programme mandates controls including HSM-stored private keys, dual control for privileged operations, and annual independent assessment.

Party 8: Central bank settlement infrastructure. RTGS systems like Fedwire, TARGET2, or BOJ-NET, where final settlement occurs. These systems have their own HSM-protected signing keys, TLS/mTLS requirements, and message authentication mechanisms. BIS Project Leap Phase 2 tested post-quantum signatures precisely in this layer, replacing RSA with CRYSTALS-Dilithium (the Round 3 predecessor to the now-standardized ML-DSA) in TARGET2’s ISO 20022 message flow – and found PQC signature verification averaged 209.9 milliseconds versus 28.1 milliseconds for traditional cryptography.

Party 9: The beneficiary bank and confirmation channels. The receiving bank performs its own signature verification, account matching, sanctions screening, and posting – then generates confirmation messages back through the chain and notification to the beneficiary through its own authenticated channels.

Not every transaction touches every node. A simple intra-bank transfer might traverse only parties 1 through 5. But the figures quoted at the start of this article describe the class of payments where the transaction travels “outside the app” into payment rails – and nine parties is entirely realistic for a cross-border mobile payment.

Why the numbers multiply

At each party boundary, the cryptographic surface expands because financial systems operate on a zero-trust premise applied across institutional lines. Data is not encrypted once and forwarded to its destination. At each hop, messages are decrypted, validated, re-signed with the current institution’s keys, re-encrypted under the next hop’s parameters, and logged with integrity-protected audit trails. Each institutional boundary is a cryptographic demarcation point – a trust boundary crossing that requires its own TLS tunnels, message signing and verification, certificate validation, and HSM interactions.

This multiplicative effect is what transforms a few dozen cryptographic operations on the phone into 30,000 unique cryptographic functions across the ecosystem. The math is not mysterious: nine parties, each running dozens of cryptographic processes, each backed by multiple libraries and HSM calls, each processing messages that require per-operation key derivation, signing, verification, and encryption. The “millions of function calls” figure reflects the aggregate across all parties, all protocol layers, and all backend infrastructure for a single payment instruction.

Card rails and digital wallets: a parallel cryptographic universe

Many transactions that originate in a mobile banking app ultimately travel card payment rails – in-app purchases, wallet-based contactless payments, or card-on-file transactions. When that happens, the cryptographic stack expands into an entirely separate universe of tokenization, EMV cryptography, and 3-D Secure authentication.

EMV tokenization and digital wallets

When a card is enrolled in Apple Pay or Google Pay, the payment network’s Token Service Provider – Visa VTS or Mastercard MDES – creates a Device Primary Account Number (DPAN; Apple calls it the Device Account Number), a 16-digit surrogate that replaces the actual card number, the Funding PAN (FPAN). The TSP maintains a secure mapping in a token vault; the token itself is domain-restricted (tied to a specific device, merchant, or channel) and cannot be mathematically reversed to recover the FPAN. EMVCo’s Payment Tokenisation specification defines this framework.
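The vault model can be sketched as a lookup table: the token is random rather than derived from the card number, so nothing about it can be inverted, and the mapping is released only for the domain the token was issued to. This toy `TokenVault` class is an assumption for illustration; real token services also assign tokens from dedicated BIN ranges, apply check digits, and run inside HSM-protected infrastructure.

```python
import secrets


class TokenVault:
    """Toy token vault: random surrogates with a domain restriction."""

    def __init__(self) -> None:
        self._entries: dict[str, tuple[str, str]] = {}

    def tokenize(self, fpan: str, domain: str) -> str:
        """Issue a random 16-digit token bound to one domain (e.g. a device ID)."""
        while True:
            token = "".join(secrets.choice("0123456789") for _ in range(16))
            if token not in self._entries and token != fpan:
                break
        self._entries[token] = (fpan, domain)
        return token

    def detokenize(self, token: str, domain: str) -> str:
        """Return the funding PAN only if the token is used in its own domain."""
        entry = self._entries.get(token)
        if entry is None or entry[1] != domain:
            raise PermissionError("unknown token or wrong domain")
        return entry[0]


vault = TokenVault()
test_pan = "4111111111111111"  # well-known test card number, not a real account
dpan = vault.tokenize(test_pan, domain="device-123")
```

A token replayed from a different device or channel fails the domain check even though the token value itself is valid.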

On Apple devices, the DPAN is stored exclusively in the Secure Element – a tamper-resistant microcontroller embedded in the NFC chip, certified to Common Criteria standards and physically isolated from iOS. The actual card number is never stored on the device or on Apple’s servers. Per-transaction, the Secure Element generates a dynamic cryptogram using the DPAN, a token-specific key, transaction amount, and transaction-specific data. For EMV contactless payments, this produces an Authorization Request Cryptogram (ARQC) – a MAC computed under session keys derived from a card-specific Unique Derivation Key using the Application Transaction Counter and transaction data.

Google Pay takes a different architectural approach, using Host Card Emulation – the Android application emulates a payment card in software rather than using a hardware Secure Element. Card Master Keys are stored on the Token Service Provider’s cloud servers, and Limited Use Keys (LUKs) are derived and downloaded to the device. LUKs generate per-transaction cryptograms and are limited by both tap count and expiration time – providing security even though the key material resides in a software environment rather than a dedicated hardware element. For in-app and web payments, Google uses ECIES (Elliptic Curve Integrated Encryption Scheme): ECDH with P-256 for key agreement, HKDF-SHA256 for key derivation, AES-256-CTR for encryption, and HMAC-SHA256 for authentication.

The EMV cryptogram: where math meets money

The EMV application cryptogram – known as the ARQC (Authorization Request Cryptogram) – is where the transaction becomes mathematically bound to its context. Understanding its construction reveals why card payment cryptography is both elegant and deeply entangled with legacy constraints.

The process begins at the issuer’s HSM, which holds an Issuer Master Key (IMK). From this master key, a card-specific Unique Derivation Key (UDK) is derived using the card’s PAN and PAN Sequence Number – typically via 3DES encryption per EMV Book 2, Annex A1.4.1. When the card (or its digital wallet equivalent) processes a transaction, session keys are derived from the UDK combined with the Application Transaction Counter (ATC), ensuring that every transaction uses unique cryptographic material.

The ARQC itself is generated by concatenating transaction data elements – amount, country code, terminal verification results, currency code, transaction date, transaction type, the unpredictable number provided by the terminal, Application Interchange Profile, ATC, and Issuer Application Data – appending ISO padding (0x80 followed by zeros), and applying MAC Algorithm 3 per ISO 9797-1 (the “Retail MAC”) with the session key. The result is an 8-byte cryptogram mathematically bound to the specific transaction details and the specific card, verifiable only by the issuer holding the corresponding master key.
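The padding and chaining described above can be sketched without committing to a particular DES library. In the block below the two single-DES operations are passed in as callables (`enc_k1` and `dec_k2` are hypothetical names), so the code shows only the ISO 9797-1 padding method 2 and the MAC Algorithm 3 structure. A real implementation would run inside an HSM or secure element with genuine DES primitives.

```python
from typing import Callable

BLOCK = 8  # DES block size in bytes
BlockFn = Callable[[bytes], bytes]

def iso9797_pad_method2(data: bytes) -> bytes:
    """Append 0x80, then zero bytes up to the next 8-byte boundary."""
    padded = data + b"\x80"
    return padded + bytes(-len(padded) % BLOCK)

def xor(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))

def retail_mac(data: bytes, enc_k1: BlockFn, dec_k2: BlockFn) -> bytes:
    """ISO 9797-1 MAC Algorithm 3 structure with injected single-DES operations.

    enc_k1: encrypt one 8-byte block under the left key half (assumed supplied).
    dec_k2: decrypt one 8-byte block under the right key half (assumed supplied).
    """
    padded = iso9797_pad_method2(data)
    state = bytes(BLOCK)
    # CBC-MAC over every block using single DES with K1.
    for i in range(0, len(padded), BLOCK):
        state = enc_k1(xor(state, padded[i:i + BLOCK]))
    # Final transformation: decrypt with K2, then encrypt with K1.
    return enc_k1(dec_k2(state))
```

Only the last block receives the full decrypt-then-encrypt treatment, which is why the Retail MAC costs little more than single-DES CBC-MAC while still depending on a double-length key.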

At the issuer’s authorization system, validation works in reverse: the issuer’s HSM reconstructs the session key from its IMK and the card’s PAN/ATC, recomputes the MAC over the received transaction data, and compares. A match confirms that the transaction data was not altered in transit and that the card (or device) is genuine. The issuer then generates an ARPC (Authorization Response Cryptogram) – typically by XORing the ARQC with the Authorization Response Code and 3DES-encrypting the result under the session key – which the card validates to confirm the response is genuinely from its issuer.
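The response-side computation is small enough to write out. This sketch follows the commonly described "Method 1" construction, in which the two-byte Authorization Response Code is right-padded with six zero bytes before the XOR. The `tdes_encrypt` callable is a hypothetical stand-in for a 3DES encryption under the session key, which in practice never leaves the HSM.

```python
from typing import Callable

def arpc_method1(arqc: bytes, arc: bytes,
                 tdes_encrypt: Callable[[bytes], bytes]) -> bytes:
    """Build an ARPC as encrypt(ARQC XOR (ARC || 00 00 00 00 00 00)).

    tdes_encrypt is assumed to encrypt one 8-byte block under the session key.
    """
    if len(arqc) != 8 or len(arc) != 2:
        raise ValueError("expected an 8-byte ARQC and a 2-byte ARC")
    padded_arc = arc + bytes(6)
    mixed = bytes(a ^ b for a, b in zip(arqc, padded_arc))
    return tdes_encrypt(mixed)
```

Because the ARQC is an input, the ARPC is bound to the same transaction the card just authenticated, so a response captured from one transaction cannot be replayed against another.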

This bidirectional cryptographic exchange – card proves itself to issuer, issuer proves itself to card – occurs within the sub-500-millisecond budget of a contactless tap. The session key derivation, MAC computation, and response validation together involve multiple HSM calls at the issuer and potentially at intermediate processors.

Offline data authentication: RSA’s deepest foothold in payments

EMV contactless also supports card authentication through offline data authentication (ODA) – digital signatures that allow a terminal to verify a card’s authenticity without contacting the issuer. This is where RSA maintains its deepest and most operationally embedded presence in the payments ecosystem.

Three levels exist, all anchored in an RSA key hierarchy rooted at the payment scheme’s Certification Authority, whose public keys are loaded into terminals. SDA (Static Data Authentication) verifies that card data has not been modified since personalization – the issuer signs static card data, and the terminal verifies the signature chain from the scheme CA’s root key through the issuer’s public key certificate. DDA (Dynamic Data Authentication) adds cloning resistance: the card holds its own RSA private key and signs a terminal-provided challenge, proving it possesses the key rather than merely carrying a copy of signed data. CDA (Combined DDA with Application Cryptogram) wraps both the dynamic signature and the application cryptogram together, providing the strongest guarantees – confirmed by formal verification using the Tamarin prover by researchers at ETH Zurich.
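What "verifying the signature" means for a terminal can be illustrated with a deliberately tiny example. EMV signatures use an RSA scheme with message recovery: the terminal raises the signature to the public exponent and checks that the recovered block has the expected header, trailer, and hash. The sketch below is a simplified illustration of that idea, not the EMV data layout. The toy key is built from two small Mersenne primes and the five-byte payload is hypothetical.

```python
import hashlib

# Toy RSA key built from two Mersenne primes: far too small for real use.
P, Q, E = 2**127 - 1, 2**89 - 1, 65537
N = P * Q
D = pow(E, -1, (P - 1) * (Q - 1))   # private exponent (Python 3.8+)
K = (N.bit_length() + 7) // 8        # modulus length in bytes
PAYLOAD_LEN = K - 22                 # header + 20-byte SHA-1 + trailer

def sign_with_recovery(payload: bytes) -> int:
    """Signer side: format header | payload | hash | trailer, then apply the private key."""
    if len(payload) != PAYLOAD_LEN:
        raise ValueError(f"payload must be {PAYLOAD_LEN} bytes for this toy key")
    block = b"\x6a" + payload + hashlib.sha1(payload).digest() + b"\xbc"
    return pow(int.from_bytes(block, "big"), D, N)

def recover_and_check(signature: int) -> bytes:
    """Terminal side: apply the public key, then check header, trailer, and hash."""
    block = pow(signature, E, N).to_bytes(K, "big")
    if block[0] != 0x6A or block[-1] != 0xBC:
        raise ValueError("bad header or trailer")
    payload, digest = block[1:-21], block[-21:-1]
    if hashlib.sha1(payload).digest() != digest:
        raise ValueError("hash mismatch")
    return payload

signature = sign_with_recovery(b"CARD1")
recovered = recover_and_check(signature)
```

Everything the terminal needs is public, which is what makes offline verification possible. It is also why anyone who can recover `D` from `N` and `E` can produce signatures the terminal will accept.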

The quantum vulnerability here is stark. A CRQC capable of deriving an issuer’s RSA private key from the public key certificates that cards hand to every terminal would enable the creation of perfectly authenticated counterfeit cards that offline terminals would accept without question. In environments where offline authentication is common – mass transit turnstiles, underground rail networks, in-flight purchases, rural merchants with intermittent connectivity – this represents a systemic fraud vector with no real-time detection mechanism.

3-D Secure 2.0: authentication for card-not-present

For online or in-app card transactions, EMV 3-D Secure adds another cryptographic layer. The protocol operates across three domains: the Acquirer domain (merchant and 3DS Server), the Issuer domain (Access Control Server), and the Interoperability domain (Directory Server operated by the card scheme).

The 3DS SDK encrypts device information using the Directory Server’s public key in JWE format, preventing intermediaries from accessing device-level data used for risk assessment. During challenge flows, the SDK and ACS perform Diffie-Hellman key exchange using ephemeral key pairs to establish encrypted session keys for challenge request/response messages. Successful authentication produces a CAVV (Cardholder Authentication Verification Value) or AAV (Accountholder Authentication Value) – a cryptogram that travels with the authorization message to the issuer.

HSMs, PIN blocks, and the key hierarchy

Deep within the bank’s data centers – and at every institution along the card payment chain – Hardware Security Modules (HSMs) perform the cryptographic heavy lifting. For each transaction, an HSM may execute PIN block decryption, PIN translation between key zones, PIN verification, EMV cryptogram validation (ARQC verification), MAC generation and verification, and authorization response cryptogram (ARPC) generation. That could be up to eight HSM calls per transaction, each involving multiple cryptographic operations inside the tamper-resistant boundary.

The key hierarchy that governs these operations runs from the Local Master Key (LMK) – which never leaves the HSM and encrypts all other keys – through Key Encrypting Keys and Zone Master Keys (used to exchange working keys between institutions) down to Terminal PIN Keys, Zone PIN Keys, PIN Verification Keys, Card Verification Keys, and Card Master Keys. Key ceremonies operate under dual control and split knowledge: no single person ever possesses a complete key. Components are XORed inside the HSM from smart card inputs provided by two or three key custodians in a physically secured room, following procedures defined by PCI PIN Security and ANSI X9.24.
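The split-knowledge idea is easy to demonstrate in a few lines. The sketch below splits a key into XOR components and recombines them, under the assumption that each custodian holds exactly one component. In a real ceremony the combination happens only inside the HSM, and the function names here are illustrative rather than any vendor's API.

```python
import secrets
from functools import reduce

def xor_bytes(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))

def split_key(key: bytes, custodians: int) -> list[bytes]:
    """Split a key into XOR components, one per custodian."""
    if custodians < 2:
        raise ValueError("split knowledge needs at least two custodians")
    # All but the last component are uniformly random.
    components = [secrets.token_bytes(len(key)) for _ in range(custodians - 1)]
    # The last component is chosen so that the XOR of all components equals the key.
    components.append(reduce(xor_bytes, components, key))
    return components

def combine(components: list[bytes]) -> bytes:
    """Recombine components (performed inside the HSM in a real ceremony)."""
    return reduce(xor_bytes, components)

master = secrets.token_bytes(16)
parts = split_key(master, custodians=3)
```

Any subset short of all the components is statistically independent of the key, which is the property that lets the procedure claim no single person ever possesses it.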

PIN verification itself uses either the IBM 3624 method or the Visa PVV (PIN Verification Value) method – both of which perform their computations entirely within HSMs. The cleartext PIN is never exposed outside tamper-resistant hardware at any stage of processing. PIN blocks travel between institutions encrypted under DUKPT (Derived Unique Key Per Transaction) or similar schemes, with translation between key zones performed inside HSMs at each institutional boundary.

DUKPT and ISO 8583: the plumbing of payment message security

Two standards deserve particular attention because they illustrate how deeply cryptography is embedded in payment message infrastructure – and how that embedding complicates migration.

DUKPT (Derived Unique Key Per Transaction), defined in ANSI X9.24, provides unique encryption keys per transaction derived from a single Base Derivation Key (BDK). The BDK combined with a device’s Key Serial Number derives an Initial PIN Encryption Key (IPEK), which then generates future keys through a binary tree structure. Each transaction key derives variant keys for PIN encryption, MAC generation, and data encryption. The critical security property: compromising one session key reveals nothing about adjacent keys. The original 3DES variant supports approximately one million transactions per initial key due to its transaction counter construction; AES-DUKPT extends the usable counter space to allow on the order of billions of transactions per initial key. In AES-DUKPT deployments, migrating from legacy 3DES PIN blocks commonly involves ISO Format 4 PIN blocks to align with AES-based processing.
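The "approximately one million" figure falls out of a short calculation. It assumes the commonly described 3DES DUKPT construction: a 21-bit transaction counter in which values containing more than ten one-bits are skipped and zero is not used for a transaction.

```python
from math import comb

COUNTER_BITS = 21   # width of the 3DES DUKPT transaction counter (assumed)
MAX_ONE_BITS = 10   # counter values with more one-bits are skipped (assumed)

# Count non-zero counter values that have between 1 and 10 one-bits.
usable_keys = sum(comb(COUNTER_BITS, k) for k in range(1, MAX_ONE_BITS + 1))
print(usable_keys)  # 1048575
```

Because 21 is odd, exactly half of the 2^21 counter values have ten or fewer one-bits, so the total is 2^20 minus the unused zero value.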

ISO 8583 payment-message integrity is typically provided with symmetric MACs between endpoints (for example, the ANSI X9.19 / ISO 9797-1 Algorithm 3 “Retail MAC” in some profiles). The underlying MAC produces a full 64-bit (8-byte) value before any truncation; whether it is transmitted as the full 8 bytes or a truncated subset (often 4 bytes) is profile/network dependent. Placement also depends on the ISO 8583 variant and message bitmap usage (often fields numbered 64 or 128 in common variants).

PIN block encryption follows ISO 9564: Format 0 (the most widely deployed) XORs the PIN with the PAN before 3DES encryption. Format 4, introduced for AES, uses a two-phase AES encryption over a 128-bit block. PIN translation between key zones – the process by which an acquirer’s encrypted PIN block is re-encrypted under the issuer’s key – happens entirely within HSMs at each institutional boundary. The cleartext PIN materializes only inside tamper-resistant hardware, for a fraction of a millisecond, at each translation point.
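The Format 0 construction can be written out directly. This sketch builds only the clear block, before any 3DES encryption, and uses a made-up PIN and PAN. In production this value exists solely inside the PIN entry device or HSM.

```python
def format0_clear_pin_block(pin: str, pan: str) -> bytes:
    """Build the clear ISO 9564 Format 0 block: PIN field XOR PAN field."""
    if not (pin.isdigit() and 4 <= len(pin) <= 12):
        raise ValueError("PIN must be 4 to 12 digits")
    if not (pan.isdigit() and len(pan) >= 13):
        raise ValueError("PAN must be at least 13 digits")
    # PIN field: control nibble 0, PIN length, PIN digits, padded with F to 16 nibbles.
    pin_field = f"0{len(pin):X}{pin}".ljust(16, "F")
    # PAN field: four zero nibbles, then the rightmost 12 digits excluding the check digit.
    pan_field = "0000" + pan[:-1][-12:]
    return bytes(a ^ b for a, b in zip(bytes.fromhex(pin_field), bytes.fromhex(pan_field)))

block = format0_clear_pin_block("1234", "43219876543210987")
print(block.hex().upper())  # 0412AC89ABCDEF67
```

Mixing in the PAN means the same PIN yields a different block for every card, which blunts dictionary attacks against encrypted PIN blocks.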

These standards collectively define what “millions of cryptographic function calls” means at the infrastructure level. Every card transaction that traverses multiple institutions requires DUKPT key derivation, PIN block decryption and re-encryption at each zone boundary, MAC computation and verification on every ISO 8583 message, and ARQC validation – all performed within HSMs that are themselves governed by strict key management ceremonies. The Cryptomathic analysis of key management risks provides additional context on the operational complexity these processes entail.

Leading payment HSM vendors – Thales (payShield 10K), Utimaco (whose Atalla line pioneered banking HSM security in the 1970s), Entrust (nShield series), and Futurex (Excrypt Plus) – all certify their payment HSMs to at least FIPS 140-2/3 Level 3. Cloud HSM solutions from AWS (CloudHSM), Azure (Payment HSM), and Google provide equivalent cryptographic boundaries with shared PCI certification responsibilities. The migration of these HSMs to support PQC algorithms – particularly lattice-based signatures like ML-DSA – is one of the longest-lead-time and most capital-intensive elements of the quantum transition, because payment HSMs contain hardware accelerators specifically optimized for RSA and ECC arithmetic that cannot simply be repurposed for lattice-based mathematics through firmware alone.

The PCI and regulatory cryptographic overlay

Layered on top of all these technical cryptographic operations is a governance and compliance framework that imposes its own cryptographic requirements.

PCI DSS v4.0 mandates that Primary Account Numbers be rendered unreadable everywhere they are stored – through encryption, truncation, keyed cryptographic hashing, or tokenization. New in v4.0: hashes used to render PANs unreadable must be keyed cryptographic hashes – HMAC, CMAC, KMAC, or GMAC – with minimum key strength of 112 bits for HMAC/KMAC or 128 bits for CMAC/GMAC. The standard also introduced Requirement 4.2.1.1, mandatory since March 2025, requiring organizations to maintain an inventory of trusted keys and certificates – essentially mandating the kind of cryptographic visibility that CBOM initiatives aim to provide. (A companion requirement, 12.3.3, mandates documentation and review of cryptographic cipher suites and protocols in use, on the same compliance timeline.)
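A keyed hash of a PAN is a one-liner with Python's standard library. The sketch below uses HMAC-SHA256 with a randomly generated key and a well-known test card number. Key storage and rotation, which the standard also governs, are out of scope here, and the 16-byte minimum in the code is my own illustrative threshold.

```python
import hashlib
import hmac
import secrets

def keyed_pan_hash(pan: str, key: bytes) -> str:
    """Render a PAN unreadable with HMAC-SHA256 instead of a bare hash."""
    if len(key) < 16:
        raise ValueError("key shorter than 128 bits (illustrative threshold)")
    return hmac.new(key, pan.encode("ascii"), hashlib.sha256).hexdigest()

hash_key = secrets.token_bytes(32)  # in practice, held in an HSM or key vault
stored_value = keyed_pan_hash("4111111111111111", hash_key)
```

The key matters because PANs have little entropy: an unkeyed SHA-256 of a 16-digit number can be brute-forced, whereas the HMAC output is useless to an attacker who lacks the key.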

PCI PIN Security requires FIPS 140-2 Level 3 or higher HSMs for PIN processing, ISO 9564 PIN block formats, and governance controls like dual control and split knowledge. PCI P2PE (Point-to-Point Encryption) extends protection from the point of interaction through to the secure decryption environment inside HSMs.

The EU’s Strong Customer Authentication requirements under PSD2 (and their implementation through the RTS on SCA) add dynamic linking requirements that directly shape cryptographic implementation, as discussed above. DORA (Digital Operational Resilience Act) adds further cryptographic governance requirements for EU financial entities.

These are not separate from the technical cryptographic stack – they are forces that shape it. Every PCI requirement translates into specific algorithmic choices, key management procedures, and operational ceremonies. The “processes” counted in architectural audits include these governance-driven cryptographic activities alongside the purely technical ones.

What this means for the quantum transition

PostQuantum.com audiences care about one question above all others: what does this complexity mean for PQC migration?

The answer is direct. Cryptographic complexity is not a curiosity or an academic exercise. It is the primary reason the post-quantum transition is hard – and it is the primary reason that generic enterprise PQC guidance is insufficient for the payments industry.

What breaks and what survives

Every asymmetric algorithm in this stack – RSA in all key sizes, ECDSA, ECDH, EdDSA, Diffie-Hellman – is vulnerable to Shor’s algorithm on a cryptographically relevant quantum computer (CRQC). This means: TLS key exchange, every certificate signature in every chain, code signing, app attestation, device-bound transaction signing keys, SWIFT PKI, JWT signing, FAPI client authentication, EMV offline data authentication (SDA, DDA, CDA), 3-D Secure SDK encryption keys, and interbank message signing. The attack surface is not a few isolated endpoints. It spans every party and every layer.

Symmetric algorithms with adequate key lengths survive. AES-256, SHA-256, SHA-3, HMAC-SHA-256, and AES-GCM remain secure under Grover’s algorithm (which halves effective key length, leaving AES-256 at an effective 128-bit security level – still robust). The symmetric operations inside HSMs – PIN block encryption, MAC generation, key derivation – are quantum-resistant with current key lengths.

But here’s the catch: nearly every symmetric key in the system is distributed using asymmetric cryptography. AES-256 protecting transaction data is only as secure as the ECDHE key exchange that established the session key. HSM master keys might survive, but the protocols used to exchange zone keys and working keys between institutions depend on RSA or ECC. The quantum vulnerability is not limited to the asymmetric operations themselves – it cascades into every system that depends on asymmetric cryptography for key establishment.

The performance reality

When you know that a mobile banking transaction involves millions of cryptographic function calls – and that tens of thousands of unique functions across nine parties use quantum-vulnerable asymmetric algorithms – the scale of PQC migration becomes visceral rather than abstract.

The BIS Project Leap Phase 2 findings make the performance implications concrete. Testing CRYSTALS-Dilithium signatures (the algorithm that was subsequently standardized as ML-DSA in FIPS 204) in TARGET2’s ISO 20022 payment flow revealed average signature verification time of 209.9 milliseconds versus 28.1 milliseconds for traditional RSA – a 7.5× slowdown. ML-DSA signatures are approximately 2,420 bytes (at the ML-DSA-44 security level) versus 256 bytes for RSA-2048 – roughly a 10× increase. For ML-DSA-65, which provides stronger security, the public key is 1,952 bytes and the signature is 3,309 bytes.
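The ratios quoted above are simple to reproduce, and doing so makes it easy to substitute your own measurements. The figures in this snippet are the ones cited in the paragraph, and the 500-millisecond budget is the contactless target mentioned earlier in this article.

```python
# Figures as quoted in the text above.
verify_ms = {"ml_dsa": 209.9, "rsa": 28.1}
signature_bytes = {"ml_dsa_44": 2420, "ml_dsa_65": 3309, "rsa_2048": 256}
TAP_BUDGET_MS = 500

slowdown = verify_ms["ml_dsa"] / verify_ms["rsa"]
size_ratio_44 = signature_bytes["ml_dsa_44"] / signature_bytes["rsa_2048"]
size_ratio_65 = signature_bytes["ml_dsa_65"] / signature_bytes["rsa_2048"]
budget_share = verify_ms["ml_dsa"] / TAP_BUDGET_MS

print(f"verification slowdown: {slowdown:.1f}x")          # 7.5x
print(f"ML-DSA-44 vs RSA-2048 size: {size_ratio_44:.1f}x")  # 9.5x
print(f"ML-DSA-65 vs RSA-2048 size: {size_ratio_65:.1f}x")  # 12.9x
print(f"one verification as share of budget: {budget_share:.0%}")  # 42%
```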

Now multiply that across every signature verification in a nine-party payment chain. The performance budget for a contactless “tap-and-go” transaction is under 500 milliseconds. Adding 200+ milliseconds of cryptographic overhead at even one point in the chain threatens that user experience. Adding PQC overhead at every point – app attestation, TLS handshake, transaction signing, message signing, HSM operations, settlement – compounds into a fundamental architectural challenge.

This is why I have consistently argued that PQC is necessary but not sufficient – that building genuine quantum resilience requires not just algorithm replacement but architectural rethinking of how cryptographic operations are distributed, parallelized, and optimized across the payment chain.

The CBOM imperative

The slide data that motivates this article – 20 processes, 15 libraries, 31 objects, 90 functions, 320 function calls, just to open the app – is effectively a CBOM argument in miniature. It demonstrates that cryptography in mobile banking is not a single “TLS module” that can be upgraded in isolation. It is spread across app code, OS frameworks, security SDKs, identity stacks, API gateways, microservices, HSM infrastructure, and payment rails.

The CycloneDX CBOM standard provides a structured vocabulary for inventorying cryptographic assets – algorithms, keys, certificates, protocols, and their dependencies. NIST’s NCCoE Migration to Post-Quantum Cryptography project includes a cryptographic discovery workstream, and NIST’s draft PQC migration guidance explicitly discusses CBOMs as extending SBOMs to describe cryptographic assets. PostQuantum.com has previously analyzed the CBOM framework in depth.
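To make the vocabulary concrete, here is a minimal sketch of what a CBOM fragment and a first-pass triage query might look like. The field names reflect my reading of the CycloneDX 1.6 schema and should be validated against the published specification. The three components and the `quantum_vulnerable` helper are illustrative, not output from any real scan.

```python
import json

def algorithm(name: str, primitive: str, quantum_level: int) -> dict:
    """Build one cryptographic-asset component (field names assumed from CycloneDX 1.6)."""
    return {
        "type": "cryptographic-asset",
        "name": name,
        "cryptoProperties": {
            "assetType": "algorithm",
            "algorithmProperties": {
                "primitive": primitive,
                "nistQuantumSecurityLevel": quantum_level,
            },
        },
    }

cbom = {
    "bomFormat": "CycloneDX",
    "specVersion": "1.6",
    "components": [
        algorithm("ECDSA-P256", "signature", 0),
        algorithm("AES-256-GCM", "ae", 5),
        algorithm("ECDH-P256", "key-agree", 0),
    ],
}

def quantum_vulnerable(bom: dict) -> list[str]:
    """Return names of algorithm assets recorded with quantum security level 0."""
    names = []
    for component in bom["components"]:
        props = component.get("cryptoProperties", {}).get("algorithmProperties", {})
        if props.get("nistQuantumSecurityLevel") == 0:
            names.append(component["name"])
    return names

print(json.dumps(quantum_vulnerable(cbom)))  # ["ECDSA-P256", "ECDH-P256"]
```

Even a query this crude turns an inventory into a prioritized list, and prioritization is the practical payoff of the exercise.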

When you re-read the “20 processes / 15 libraries” through this lens, it stops being a curiosity and becomes a statement about inventory scope: even before a payment exists, the app and platform have activated cryptographic dependencies that must eventually be made quantum-safe – or at minimum, crypto-agile enough to swap primitives without a full rewrite.

What a CBOM can and cannot see

A necessary caveat on scope and feasibility. The figures in this article – 250+ processes, 120 cryptographic objects, 30,000+ functions – are derived from a cryptographic discovery exercise that, like any such exercise, operated under idealized conditions and incorporated assumptions we could not independently verify. Vendor documentation and statements were taken at face value where binary-level analysis could not reach. Some cryptographic operations occur inside hardware trust boundaries (Secure Enclaves, HSMs, Secure Elements) where no external tool can observe the internal implementation. Others are embedded in closed-source, obfuscated, or hardened binaries where static and dynamic analysis tools produce incomplete or ambiguous results.

No organization will fully map the cryptographic surface across all nine parties described here – nor should that be the goal. You do not control Apple’s Secure Enclave firmware, Visa’s token vault, or your correspondent bank’s HSM key hierarchy. A realistic CBOM program focuses on what the organization can inventory and does control: its own application code and embedded SDKs, its own backend services and API gateways, its own HSM infrastructure, and the contractual and attestation boundaries with external parties. The value of a CBOM is not in achieving a perfect, all-seeing cryptographic map – it is in making the invisible visible enough to prioritize migration, identify the highest-risk quantum-vulnerable dependencies, and hold informed conversations with the vendors and counterparties who control the layers you cannot directly inspect.

The purpose of this article is to illustrate the scale and interconnectedness of the problem – to show why migration planning that treats cryptography as a handful of TLS certificates will fail. It is not a claim that any single organization can or should attempt to inventory every cryptographic function call across every party in the chain. The complexity is the point. The response to that complexity must be realistic, risk-prioritized, and incremental.

Crypto-agility as permanent discipline

NIST defines crypto-agility as the capability to replace and adapt cryptographic algorithms across protocols, applications, libraries, hardware, firmware, and infrastructure while preserving security and ongoing operations. That definition maps directly onto the “complex, layered, interdependent” reality this article describes.

The BIS Project Leap observation reinforces this: hybridization – running classical and PQC algorithms in parallel during the transition – requires substantial system evolution if agility was not designed in originally. Payment message formats have strict size constraints (legacy ISO 8583 fields were designed for 256-byte signatures, not multi-kilobyte PQC signatures). HSM firmware must be upgraded to support lattice-based cryptography. Certificate chains with PQC signatures will be significantly larger.
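At the code level, designing agility in mostly means that callers never name an algorithm directly. The sketch below shows one minimal pattern: a registry keyed by algorithm identifier, with the identifier carried alongside the output so that verifiers can handle old and new algorithms during a transition. Two HMAC variants stand in for the classical and post-quantum signature schemes a real system would register, and all names are illustrative.

```python
import hashlib
import hmac
from typing import Callable, Dict

Authenticator = Callable[[bytes, bytes], bytes]

# Registry of available algorithms; adding one is a registration, not a code change.
REGISTRY: Dict[str, Authenticator] = {
    "hmac-sha256": lambda key, msg: hmac.new(key, msg, hashlib.sha256).digest(),
    "hmac-sha3-256": lambda key, msg: hmac.new(key, msg, hashlib.sha3_256).digest(),
}

def protect(message: bytes, key: bytes, algorithm: str) -> dict:
    """Authenticate a message and record which algorithm produced the tag."""
    tag = REGISTRY[algorithm](key, message)
    return {"alg": algorithm, "tag": tag.hex()}

def verify(message: bytes, key: bytes, envelope: dict) -> bool:
    """Verify using whichever registered algorithm the envelope names."""
    authenticator = REGISTRY.get(envelope["alg"])
    if authenticator is None:
        return False  # unknown or retired algorithm
    return hmac.compare_digest(authenticator(key, message).hex(), envelope["tag"])

# The active algorithm comes from configuration rather than application logic.
ACTIVE_ALGORITHM = "hmac-sha256"
envelope = protect(b"pay 10.00 EUR", b"k" * 32, ACTIVE_ALGORITHM)
```

Changing `ACTIVE_ALGORITHM` switches what new messages use, while previously issued envelopes continue to verify until their algorithm is removed from the registry.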

For the mobile banking stack specifically, the crypto-agility challenges are distributed across every party:

The device layer depends on silicon vendors and OS cryptographic APIs. Apple has publicly deployed post-quantum cryptography in its operating systems and added standardized PQC building blocks to CryptoKit (including ML-KEM and ML-DSA) starting in Apple platform version 26, with hardware-isolated execution benefits tied to Secure Enclave.

On Android, the hardware-backed Keystore/StrongBox model similarly depends on coordinated OS, TEE, and secure-element support. Google has publicly documented ongoing evolution of the attestation ecosystem (for example, deprecating RSA attestation roots in favor of ECDSA); any future adoption of PQC signatures inside roots-of-trust would require the same kind of platform-wide coordination and long-tail rollout across device generations.

Apple Pay’s Secure Element and its tokenization infrastructure must support PQC cryptograms – but the Secure Element’s processing and memory constraints (similar to EMV smart card chips) present the same challenges that have made PQC migration on physical EMV cards so difficult. Mastercard’s experiments with IDEMIA showed that PQC computation on typical Cortex-M3 processors consumed nearly the entire transaction time budget; similar constraints apply to Secure Elements in smartphones.

TLS libraries on both client and server must support hybrid key exchange. The industry is converging on X25519 + ML-KEM-768 as the preferred hybrid combination, but integrating this into the millions of deployed mobile banking app instances requires coordinated app updates, backend infrastructure changes, and certificate authority readiness. OAuth/JWT token signing must transition to PQC signature algorithms – but ML-DSA signatures at 3,309 bytes for ML-DSA-65 are dramatically larger than the 64-byte ECDSA-P256 signatures currently used in compact JWTs, potentially breaking payload size assumptions across API gateways and token validation middleware.

And every HSM in every institution must be upgraded or replaced – a process that, as noted above, involves not just firmware changes but potentially physical hardware swaps for HSMs whose cryptographic accelerators are hardwired for classical arithmetic.
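The JWT size concern raised above is easy to quantify, because a compact JWT carries its signature as unpadded base64url text. This snippet computes the encoded length for the two signature sizes quoted in the text. It says nothing about header or claims size, which vary by deployment.

```python
import base64

def b64url_length(raw_bytes: int) -> int:
    """Length of the unpadded base64url encoding of raw_bytes bytes."""
    return len(base64.urlsafe_b64encode(bytes(raw_bytes)).rstrip(b"="))

es256_chars = b64url_length(64)       # ECDSA P-256 signature
ml_dsa_65_chars = b64url_length(3309)  # ML-DSA-65 signature

print(es256_chars)      # 86
print(ml_dsa_65_chars)  # 4412
```

A token that grows by more than four kilobytes from the signature alone is worth testing against whatever header and payload limits your gateways and middleware enforce.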

The G7 Cyber Expert Group’s January 2026 roadmap sets a target of 2030–2032 for migrating critical financial systems and 2035 for full migration completion. The Citi Institute’s report estimated that a quantum-enabled attack on Fedwire could trigger $2–3.3 trillion in indirect economic losses. And PostQuantum.com’s analysis of the 120,000 discrete program tasks required for PQC migration at a single large enterprise gives a sense of the operational magnitude – a figure that, in light of the cryptographic density revealed in this article, may itself be conservative.

What to do now: practical recommendations

For CISOs and security architects in payment institutions, the cryptographic iceberg revealed in this analysis points to five concrete actions:

Commission a cryptographic discovery exercise focused on mobile channels. Use CBOM-capable tools to scan your mobile banking app binary, its embedded SDKs, and the backend services it communicates with. The results will likely surprise you – most organizations significantly underestimate the number of cryptographic libraries, key types, and algorithm dependencies in their mobile stack. Frame the output as a risk-driven inventory that prioritizes quantum-vulnerable asymmetric operations.

Map your institutional trust boundaries. Identify every point where your mobile banking transaction crosses a cryptographic demarcation – between device and app, between app and API gateway, between gateway and core banking, between core banking and payment rails, between payment rails and settlement. Each boundary is a PQC migration workstream. Each involves different vendors, different timelines, and different hardware constraints.

Engage your HSM vendors now. Payment HSMs are the longest-lead-time component in the PQC migration. Thales, Utimaco, Entrust, and Futurex have announced PQC firmware capabilities, but full FIPS 140-3 Level 3 certification with PQC is still in progress. Understand your vendor’s roadmap and plan for either firmware upgrades or physical replacement of legacy HSMs that cannot support lattice-based cryptography.

Design for crypto-agility in every new system. Any mobile banking system being designed or substantially refactored today should abstract cryptographic operations behind configurable service layers. Hardcoding algorithm choices into application logic – which is common in legacy systems – creates the exact kind of “cryptographic sprawl” that makes migration so painful. PCI DSS v4.0’s inventory requirements provide regulatory backing for this approach.

Pilot hybrid TLS and PQC transaction signing. Test X25519 + ML-KEM-768 hybrid key exchange on internal TLS connections between your API gateway and core banking services. Measure the latency impact with your actual traffic patterns. Separately, prototype PQC transaction signing with ML-DSA-65 to understand the signature size and verification time implications for your specific mobile banking architecture.

The half-second that protects trillions

Look at your phone. Think about the last time you opened your banking app. In the time it took for the screen to appear, your device executed a boot chain verification, authenticated your biometric data inside a physically isolated coprocessor, verified the app’s code signature, established a fresh TLS session with ephemeral key exchange and certificate chain validation, performed certificate pinning verification, exchanged OAuth tokens through a PKCE-protected flow, and initialized multiple security SDKs – each with its own cryptographic library dependencies.

That was before you typed a single digit.

When you tapped “send,” the cryptographic surface expanded across nine parties, through card networks or interbank messaging, through clearing and settlement, through HSMs operating under dual-control key ceremonies – involving thousands of unique cryptographic functions and millions of individual function calls.

Every one of the asymmetric operations in that chain – every ECDHE key exchange, every RSA certificate signature, every ECDSA transaction signature, every Diffie-Hellman key agreement in 3-D Secure – will need to be replaced or augmented before a cryptographically relevant quantum computer arrives. And because payment data subject to regulatory retention (seven to ten years or more under SOX, Basel, and PCI) is already being intercepted for future decryption, the migration timeline is not “when CRQC arrives” but “now minus the shelf life of the data.”

The cryptographic iceberg beneath a mobile banking transaction is real, it is quantifiable, and it is the reason that the payments industry’s PQC migration will be the most complex cryptographic transition any industry has ever attempted. The first step toward navigating it is making it visible.
