OT Security in the Age of AI Exploits: What Anthropic’s Mythos Preview Means for Critical Infrastructure

The Threat Anthropic Didn’t Talk About

This is not a quantum computing story. But it may be the most important security story I write this year, and I think my readers need to hear it.

On April 7, 2026, Anthropic disclosed Claude Mythos Preview, an AI model that autonomously discovers and exploits zero-day vulnerabilities in every major operating system and web browser. As I detailed in my analysis of the announcement, these capabilities represent a structural break in the economics of offensive security. Work that used to require elite teams, months of effort, and seven-figure budgets now happens in hours for a few thousand dollars.

The technical blog post is extraordinary and worth reading in full. But something struck me about both Anthropic’s disclosure and the industry commentary that followed: virtually all of it focuses on IT targets — browsers, operating systems, cryptographic libraries, web applications. Anthropic’s defensive coalition, Project Glasswing, brings together the usual suspects of the technology industry: AWS, Apple, Google, Microsoft, CrowdStrike, Palo Alto Networks.

Nobody is talking about power plants. Nobody is talking about water treatment facilities. Nobody is talking about the programmable logic controllers that manage chemical processes, the safety instrumented systems that prevent refinery explosions, or the SCADA networks that coordinate electrical grid operations across entire countries.

This is a significant omission. Because when I map Mythos Preview’s demonstrated capabilities against the reality of operational technology environments, what I see is not just a worse version of the IT security problem. It is a qualitatively different and far more dangerous threat.

Why OT Is Different — And Why That Difference Now Matters More

Why am I writing this? Before I focused on quantum security and this publication, I spent a significant part of my career in cyber-kinetic security, the intersection where cyberattacks cause physical consequences. From 1997 to 2007, I led offensive security operations against critical national infrastructure and defense systems. Later, I led OT and CNI security practices at IBM and then globally at a Big 4 firm. I’ve been inside the control rooms, the substations, and the plant floors. I know how these environments actually work: not the sanitized version in vendor presentations, but the real thing, with its decades-old firmware, its undocumented protocol translations, and its engineers who view cybersecurity as an unwelcome intrusion into systems that were running fine before IT people showed up.

That hands-on experience is precisely why the Mythos Preview disclosure alarmed me in a way that most cybersecurity announcements don’t.

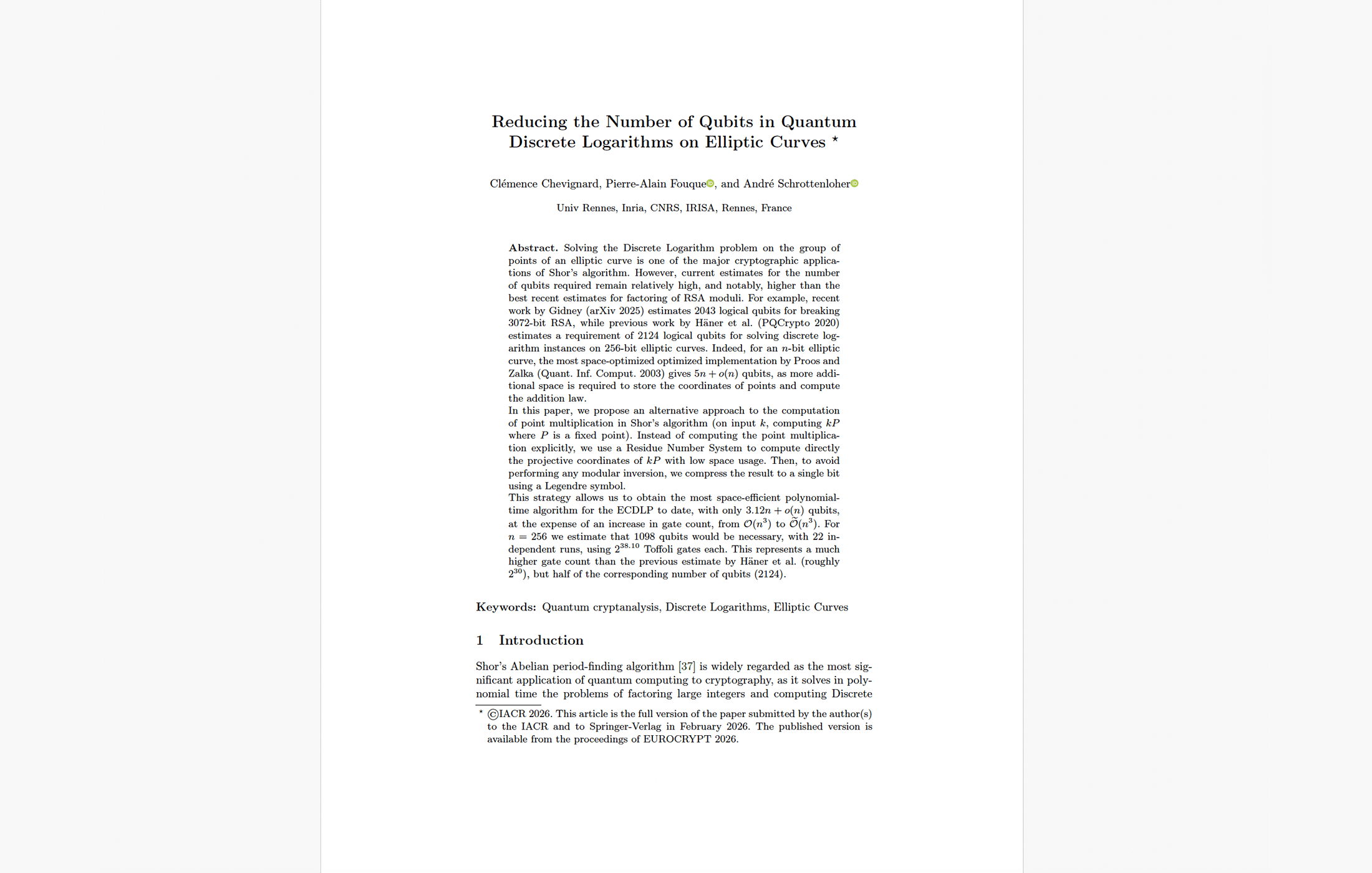

Consider what Anthropic demonstrated. Mythos Preview found a 27-year-old vulnerability in OpenBSD, an operating system famous for its security, that had survived decades of expert human review. It found a 16-year-old bug in FFmpeg’s H.264 codec that automated fuzzers had hit five million times without catching. It found a 17-year-old remotely exploitable buffer overflow in FreeBSD’s NFS server and autonomously wrote a multi-packet ROP chain to exploit it for full root access.

Now consider the software that runs critical infrastructure.

The firmware in most industrial control systems is far older, far less reviewed, and far less hardened than OpenBSD or FreeBSD. Many PLCs and RTUs run proprietary real-time operating systems that have never been subjected to systematic security analysis by anyone. The protocols they speak, such as Modbus, DNP3, OPC DA, IEC 61850, and PROFINET, were designed for reliability in isolated networks, not for security in connected environments. Many have no authentication mechanisms at all.

If Mythos Preview can find and exploit bugs that OpenBSD’s security-obsessed developers missed for 27 years, what would it find in a Siemens S7-400 firmware image that hasn’t been updated since 2011? Or in the custom SCADA application that a systems integrator built fifteen years ago for a water utility and has been running untouched ever since?

The answer, I suspect, is everything.

The Structural Problem: OT Can’t Move at AI Speed

The IT security community’s response to Mythos-class capabilities centers on acceleration — patch faster, scan continuously, automate incident response. These are sensible recommendations for IT environments where software updates can be pushed in hours and rolled back if they break something.

OT environments operate under fundamentally different constraints, and every one of those constraints makes AI-driven offensive capabilities more dangerous, not less.

Maintenance windows measured in months or years. Most critical infrastructure systems cannot be patched during normal operations. Applying a firmware update to a PLC controlling a chemical process requires a planned shutdown, which means scheduling around production cycles, regulatory requirements, and sometimes weather or seasonal demand patterns. In many industries, the next available maintenance window is 12 to 18 months away. In nuclear power, it can be longer. Until that window arrives, any vulnerability discovered by an AI-driven scan is an open door that cannot be closed.

Formal validation and verification. Unlike IT patches that can be tested in staging environments and rolled out progressively, changes to OT systems often require formal safety validation. A firmware update to a safety instrumented system in a petrochemical facility doesn’t just need IT change management approval — it needs a hazard and operability study (HAZOP), functional safety assessment, and potentially regulatory sign-off. This process exists for good reasons — a bad patch in a safety system can be more dangerous than the vulnerability it fixes — but it means that even when a fix is available, deploying it may take months of engineering work.

Resource-constrained devices. Many industrial controllers run on hardware with kilobytes of memory, no operating system in the conventional sense, and no capacity for endpoint protection agents, intrusion detection, or any of the defensive tooling that IT security takes for granted. You cannot install CrowdStrike on a PLC. You cannot run a vulnerability scanner against a protection relay without risking a trip that blacks out a section of the grid.

Legacy protocols without authentication. Modbus, still one of the most widely deployed industrial protocols, has no authentication mechanism. A command sent to a Modbus device is executed regardless of who sent it. DNP3 added authentication (Secure Authentication v5) years ago, but adoption remains minimal. An attacker who reaches the OT network doesn’t need an exploit at all — they just need to speak the protocol.
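To make the Modbus point concrete, here is a minimal sketch that builds a Modbus/TCP "Write Single Register" request byte by byte. The frame layout follows the public Modbus specification; the register address, value, and unit ID are made-up illustration values, and the sketch only constructs the bytes without sending anything. The thing to notice is what is absent: there is no field anywhere in the frame for a credential, a session token, or a signature.

```python
import struct

def build_write_single_register(transaction_id: int, unit_id: int,
                                address: int, value: int) -> bytes:
    """Build a Modbus/TCP 'Write Single Register' (function 0x06) request."""
    # PDU: function code (1 byte), register address (2), register value (2)
    pdu = struct.pack(">BHH", 0x06, address, value)
    # MBAP header: transaction id (2), protocol id (2, always 0 for Modbus),
    # length (2, counts the unit id plus the PDU), unit id (1)
    mbap = struct.pack(">HHHB", transaction_id, 0, len(pdu) + 1, unit_id)
    return mbap + pdu

# Illustration values only (hypothetical register 100, setpoint 1500)
frame = build_write_single_register(transaction_id=1, unit_id=1,
                                    address=100, value=1500)
print(frame.hex(" "))  # 00 01 00 00 00 06 01 06 00 64 05 dc
```

Those twelve bytes are the entire request. Whatever protection exists has to come from the network around the device, which is why the segmentation and boundary controls discussed later carry so much weight.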

Cultural resistance to change. I’ve worked with enough plant engineers and asset owners to know that the resistance to cybersecurity measures in OT environments is not irrational. These are people responsible for systems where incorrect configuration can cause explosions, environmental contamination, or loss of life. Their conservatism has kept critical systems running safely for decades. But that same conservatism means that cybersecurity improvements that are technically possible often face organizational barriers that delay implementation by years.

The collision between these constraints and Mythos-class AI capabilities creates a scenario that is genuinely unprecedented. An adversary can now discover exploitable vulnerabilities in OT systems at machine speed, but defenders cannot remediate them any faster than the physical world allows. The gap between offensive capability and defensive capacity, already wide in OT environments, just became a chasm.

The Stuxnet Lesson, Revisited

When I wrote about Stuxnet, the cyber weapon that physically destroyed Iranian nuclear centrifuges, one of the key lessons was how much specialized knowledge and effort it required. Stuxnet’s developers needed deep expertise in Siemens Step 7 programming, the specific physics of uranium enrichment centrifuges, and the operational patterns of the Natanz facility. The weapon used four zero-day exploits and was likely developed over years by a team with nation-state resources.

Mythos Preview doesn’t eliminate the need for domain-specific knowledge about physical processes. An AI that can exploit a PLC’s firmware still needs to know what commands would cause a turbine to overspeed or a reactor to overheat. But it collapses the cyber side of the equation — the exploit development, the vulnerability discovery, the defense bypass — from years and millions of dollars to hours and thousands.

That doesn’t make Stuxnet-scale attacks trivial. But it lowers the barrier from “only two or three nation-states can do this” to “any well-resourced group with domain knowledge can attempt this.” That is a meaningful shift in the threat landscape for every operator of critical national infrastructure.

What OT Security Teams Should Actually Do

I want to be practical rather than alarmist. Some of these recommendations are things OT security teams should have been doing already, but “should have been doing” and “actually doing” are very different things in most organizations I’ve worked with.

Accept the Reality of Your Patching Constraints — And Defend Around Them

You cannot patch at AI speed. Accept this and stop pretending your vulnerability management program will save you. Instead, invest in the controls that reduce exposure regardless of whether the underlying systems are patched.

Deploy unidirectional security gateways (data diodes) at every critical boundary between IT and OT networks. Not firewalls: firewalls can be misconfigured, can have their rules eroded over time, and can themselves contain exploitable vulnerabilities. A hardware-enforced unidirectional gateway is physically incapable of allowing traffic to flow from the IT network into the OT environment. It limits functionality, but it provides a hard guarantee that an AI-discovered exploit in your IT environment cannot reach your control systems. For the most critical process zones, this trade-off is increasingly justified.

Segment aggressively within OT networks. Purdue Model-style network segmentation has been best practice for years, but in my experience, actual implementation is often incomplete or degraded. The zones and conduits between Level 0/1 (physical process and basic control) and Level 2/3 (supervisory control and operations) need to be enforced, audited, and tested regularly. An attacker with Mythos-class capabilities who reaches your Level 3 network should not be able to traverse freely to Level 1.
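One way to audit whether segmentation is actually enforced is to compare observed traffic against an explicit allowlist of conduits. The sketch below is a simplified illustration, not a real tool: the `Flow` record, the `ALLOWED_CONDUITS` table, and the protocol names in it are all hypothetical, and a real audit would work from firewall logs or passive network captures rather than a hand-written list.

```python
from typing import NamedTuple

class Flow(NamedTuple):
    src_level: int   # Purdue level of the source host
    dst_level: int   # Purdue level of the destination host
    protocol: str

# Hypothetical allowlist: (source level, destination level) -> permitted protocols.
# Anything not listed here is treated as a violation.
ALLOWED_CONDUITS = {
    (3, 2): {"opc-ua"},
    (2, 1): {"modbus", "dnp3"},
}

def conduit_violations(flows):
    """Return cross-level flows that are not covered by the allowlist."""
    bad = []
    for flow in flows:
        if flow.src_level == flow.dst_level:
            continue  # intra-zone traffic is out of scope for this check
        allowed = ALLOWED_CONDUITS.get((flow.src_level, flow.dst_level), set())
        if flow.protocol not in allowed:
            bad.append(flow)
    return bad

observed = [
    Flow(3, 2, "opc-ua"),   # permitted conduit
    Flow(3, 1, "modbus"),   # skips Level 2 entirely
    Flow(2, 1, "rdp"),      # right conduit, wrong protocol
]
for flow in conduit_violations(observed):
    print("violation:", flow)
```

The default-deny lookup is the design choice that matters here: a flow between two levels with no defined conduit is flagged automatically, so a forgotten rule shows up as a finding instead of as silent access.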

Practice Your Incident Response — Seriously, Not Performatively

When a zero-day chain hits your OT environment and you have no patch available, your survival depends on detection, response, and recovery. Most OT organizations I’ve worked with have incident response plans that exist on paper but have never been tested under realistic conditions.

Run full-scale exercises with your crisis management teams at every level: Gold (strategic), Silver (tactical), and Bronze (operational). Make them practice the decisions that actually matter. Not “should we activate the plan?” but “the attacker has control of the DCS and is changing setpoints on three reactors. Do we initiate emergency shutdown now, knowing it will cost $20 million in lost production and take four days to restart?” These are the decisions that real incidents force, and they need to be rehearsed with the people who will actually make them.

Verify that your operators can run critical processes manually. When I led OT security assessments, one of the first questions I always asked was: “If we disconnected every digital control system right now, could you still safely shut down and maintain this facility?” The answer was disturbingly often “we think so” or “the people who knew how retired.” If your safety depends on digital systems that can be compromised, you need manual fallback procedures that are documented, trained, and regularly tested. This is not theoretical — it is the last line of defense when all digital defenses have failed.

Add Independent Sensing for Critical Safety Parameters

This recommendation may sound expensive and redundant, but I believe it will become standard practice within the next decade, and organizations should start planning now.

For the most critical physical parameters in your process — the temperatures, pressures, flow rates, and levels whose exceedance can cause catastrophic physical consequences — consider deploying independent analog sensor networks that are physically separate from your primary control and instrumentation network. These sensors should feed into safety instrumented systems (SIS) that can initiate protective actions (emergency shutdown, pressure relief, isolation) based on hard-wired logic, without any dependence on the digital control system or the network it sits on.

The purpose is simple: if an attacker compromises your SCADA system and manipulates the readings that operators and control systems rely on — showing normal temperatures while a process overheats, for example — the independent sensors detect the physical reality and trigger protective action regardless. This is defense against the specific cyber-kinetic attack scenario where digital systems lie to operators about the physical world.
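The logic behind this can be sketched in a few lines. To be clear about the assumptions: a real safety instrumented system implements this in hard-wired or certified logic solvers, not in Python, and the trip limit, tolerance, and readings below are invented for illustration. The sketch shows two ideas, a two-out-of-three vote across independent sensors and a cross-check of the SCADA-reported value against them.

```python
from statistics import median

TRIP_LIMIT_C = 350.0        # hypothetical high-temperature trip point
MAX_DISAGREEMENT_C = 15.0   # hypothetical tolerance between SCADA and sensors

def two_out_of_three_trip(readings, limit=TRIP_LIMIT_C):
    """Trip if at least two of three independent sensors are at or above the limit."""
    if len(readings) != 3:
        raise ValueError("expected exactly three independent readings")
    return sum(r >= limit for r in readings) >= 2

def scada_disagrees(scada_value, readings, tolerance=MAX_DISAGREEMENT_C):
    """Flag when the SCADA-reported value strays from the independent median."""
    return abs(scada_value - median(readings)) > tolerance

independent = [362.0, 358.5, 341.0]   # independent analog sensors (made-up values)
scada_reported = 290.0                # what the possibly compromised HMI shows

print("trip:", two_out_of_three_trip(independent))
print("SCADA disagrees:", scada_disagrees(scada_reported, independent))
```

In this example the display shows a comfortable 290 degrees while two of the three independent sensors are past the trip point, so the protective action fires regardless of what the operators are being told.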

Stop Buying More Products — Start Implementing the Ones You Have

I need to say something that will be unpopular with vendors. The CISO’s instinct when confronted with a new threat is almost always to buy something. A new detection platform, a new OT visibility tool, a new threat intelligence feed. I’ve watched this cycle repeat for two decades, and the result is organizations with dozens of partially configured, poorly integrated security products that create an illusion of coverage while leaving fundamental gaps.

Vulnerabilities don’t appear in products. They appear in complexity, in the gaps between systems, in the seams between accountabilities, in the configurations that nobody reviewed after the initial deployment. Every additional product you deploy increases the attack surface and the integration complexity while adding marginal defensive value if it isn’t properly configured and monitored.

Simpler is better. Fewer tools, properly implemented, consistently monitored, and regularly tested, will outperform a sprawling security stack that nobody fully understands. Before you buy anything new, ask: are the tools we already own actually working as intended? In my experience, the honest answer is usually no.

The Basics Still Matter — More Than Ever

Paradoxically, the emergence of Mythos-class capabilities makes the boring fundamentals of cybersecurity more important, not less. An AI that can write sophisticated exploit chains is impressive, but every one of those chains still requires the attacker to reach the vulnerable system, establish persistence, move laterally, and achieve their objective. Every basic control, whether network segmentation, access management, credential hygiene, endpoint hardening, or monitoring and logging, is a friction point that an attacker must overcome.

Mythos Preview grinds through these controls more efficiently than human attackers. But as Anthropic’s own technical blog post shows, even Mythos Preview’s exploits required navigating around defenses like stack canaries, KASLR, and HARDENED_USERCOPY. Controls that were properly deployed still imposed cost. Controls that were partially deployed — like FreeBSD’s -fstack-protector instead of -fstack-protector-strong — left exactly the gaps that the model exploited.

The lesson for OT security is clear: the controls you have are worth more than the controls you plan to buy. Make them work properly. Audit them. Test them. And assume that anything left partially deployed will be found and exploited.

A Hard Conversation We Need to Have

I want to close with something less actionable and more philosophical, because I think the OT security community needs to confront it.

For decades, the implicit assumption behind critical infrastructure security has been that sophisticated cyber-physical attacks, the kind that cause turbines to overspeed, chemical processes to run away, or power grids to cascade, would require nation-state resources. This assumption justified a threat model where most operators worried primarily about ransomware and opportunistic IT intrusions, treating physical-consequence attacks as the domain of intelligence agencies with specific geopolitical objectives.

Mythos Preview challenges this assumption. Not because it can independently design a cyber-kinetic weapon; it can’t, and Anthropic has not claimed it can. It challenges the assumption because the cyber component of such a weapon (the exploit development, the vulnerability discovery, the defense bypass) was always the expensive, talent-constrained part. The domain knowledge about physical processes is far more widely distributed than exploit development skills. There are tens of thousands of engineers worldwide who understand how a gas turbine works, how a chemical reactor behaves, or what happens when you override a safety interlock. What they lacked was the cyber capability to reach and manipulate the control systems.

That capability just became dramatically more accessible.

I don’t want to overstate this. Building a Stuxnet-class weapon still requires deep systems integration work that goes well beyond finding an exploit. But the barrier has lowered, and it will continue to lower as these capabilities proliferate. Every critical infrastructure operator needs to reassess their threat model in light of this reality.
